I/O device and computing host interoperation

Cohen , et al. Dec

U.S. patent number 10,514,864 [Application Number 15/661,079] was granted by the patent office on 2019-12-24 for I/O device and computing host interoperation. This patent grant is currently assigned to Seagate Technology LLC. The grantee listed for this patent is Seagate Technology LLC. Invention is credited to Timothy L. Canepa, Earl T. Cohen.


| United States Patent | 10,514,864 |
|---|---|
| Cohen, et al. | December 24, 2019 |

I/O device and computing host interoperation

Abstract

Methods, systems and computer-readable storage media for receiving, via an external interface of a storage device, a command from a computing host, the command including at least one non-standard command modifier, executing the command according to a particular non-standard command modifier, storing an indication of the particular non-standard command modifier in an entry of a map associated with a logical block address of the command, and storing a shadow copy of the map in a memory of the computing host.

| Inventors: | Cohen; Earl T. (Oakland, CA), Canepa; Timothy L. (Los Gatos, CA) |
|---|---|
| Applicant: | |
| Assignee: | Seagate Technology LLC (Cupertino, CA) |
| Family ID: | 47668897 |
| Appl. No.: | 15/661,079 |
| Filed: | July 27, 2017 |

Prior Publication Data

| Document Identifier | Publication Date |
|---|---|
| US 20170322751 A1 | Nov 9, 2017 |

Related U.S. Patent Documents

| Application Number | Filing Date | Patent Number | Issue Date |
|---|---|---|---|
| 13936010 | Jul 5, 2013 | | |
| PCT/US2012/049905 | Aug 8, 2012 | | |
| 61543666 | Oct 5, 2011 | | |
| 61531551 | Sep 6, 2011 | | |
| 61521739 | Aug 9, 2011 | | |

| Current U.S. Class: | 1/1 |

| Current CPC Class: | G06F 3/0611 (20130101); G06F 3/0659 (20130101); G06F 3/0607 (20130101); G06F 12/00 (20130101); G06F 3/0679 (20130101); G06F 12/1027 (20130101) |

| Current International Class: | G06F 12/1027 (20160101); G06F 3/06 (20060101); G06F 12/00 (20060101) |


Primary Examiner: Peugh; Brian R

Attorney, Agent or Firm: Taylor English Duma LLP

Parent Case Text

CROSS-REFERENCE TO RELATED APPLICATIONS

The present application is a continuation of U.S. non-provisional application Ser. No. 13/936,010, filed Jul. 5, 2013, which is a continuation of International Application Ser. No. PCT/US2012/049905, filed Aug. 8, 2012, which claims the benefit of U.S. provisional application Ser. No. 61/543,666, filed Oct. 5, 2011, U.S. provisional application Ser. No. 61/531,551, filed Sep. 6, 2011, and U.S. provisional application Ser. No. 61/521,739, filed Aug. 9, 2011, wherein the foregoing applications are incorporated by reference in their entirety herein for all purposes.

Claims

What is claimed is:

1. A method comprising: receiving, via an external interface of a storage device, a command from a computing host, the command comprising at least one non-standard command modifier; executing the command according to a particular non-standard command modifier; storing an indication of the particular non-standard command modifier in an entry of a map associated with a logical block address (LBA) of the command; and storing a shadow copy of the map in a memory of the computing host.

2. The method of claim 1, wherein the command identifies one of a plurality of data destinations, the data destinations comprising a plurality of data bands.

3. The method of claim 2, wherein the data bands are dynamically changeable in number, arrangement, size and type and correspond to at least one of a database journal data band, a hot data band, and a cold data band.

4. The method of claim 3, wherein the database journal data band is reserved for database journal type data.

5. The method of claim 3, wherein in response to the computing host writing a long sequential stream, the hot data band grows in size.

6. The method of claim 1, wherein the command comprises location information obtained at least in part by the computing host from a memory device of an I/O card coupled to the computing host.

7. The method of claim 1, subsequent to the executing the command and without having received a trim command, further comprising accessing a determination to trim, rather than to recycle, at least a portion of data.

8. A storage system comprising: a memory; a host interface coupled to a computing host; and a storage controller communicatively coupled to the host interface and the memory, the storage controller configured to receive a command from the computing host, the command comprising at least one non-standard command modifier; execute the command according to a particular non-standard command modifier; and store an indication of the particular non-standard command modifier in an entry of a map associated with a logical block address (LBA) of the command, wherein a shadow copy of the map is stored in a memory of the computing host.

9. The storage system of claim 8, wherein the storage controller is further configured to determine whether a stored portion of data has information corresponding to LBAs associated with at least one read command received via an external interface of the storage system.

10. The storage system of claim 8, wherein the storage controller is further configured to determine whether a stored portion of data comprises information corresponding to LBAs associated with a first write command; and change the stored portion of data selectively as required in view of the determining, wherein the stored portion of data comprises the information corresponding to LBAs associated with the first write command.

11. The storage system of claim 8, wherein the command identifies a particular one of a plurality of data destinations, the data destinations comprising a plurality of data bands.

12. The storage system of claim 11, wherein the data bands are dynamically changeable in number, arrangement, size and type and correspond to one or more of a database journal data band, a hot data band, and a cold data band.

13. The storage system of claim 12, wherein in response to the computing host writing a long sequential stream, the hot data band grows in size.

14. The storage system of claim 12, wherein the cold data band is used at least for data from a recycler.

15. The storage system of claim 8, wherein the command comprises location information obtained at least in part by the computing host from a memory device of an I/O card coupled to the computing host.

16. The storage system of claim 8, wherein at least a portion of the memory of the computing host is in an address space accessible by the storage controller.

17. A non-transitory computer readable storage medium having processor-executable instructions stored thereon that, when executed by a processor, cause the processor to: receive a command from a computing host, the command comprising at least one non-standard command modifier; execute the command according to a particular non-standard command modifier; and store an indication of the particular non-standard command modifier in an entry of a map associated with a logical block address (LBA) of the command, wherein a shadow copy of the map is stored in a memory of the computing host.

18. The non-transitory computer readable storage medium of claim 17, wherein the command identifies a particular one of a plurality of data destinations, the data destinations comprising a plurality of data bands.

19. The non-transitory computer readable storage medium of claim 18, wherein the data bands are dynamically changeable in number, arrangement, size and type and correspond to one or more of a database journal data band, a hot data band, and a cold data band.

20. The non-transitory computer readable storage medium of claim 17, wherein the command comprises location information obtained at least in part by the computing host from a memory device of an I/O card coupled to the computing host.

Description

BACKGROUND

Field

Advancements in computing host and I/O device technology are needed to provide improvements in performance, efficiency, and utility of use.

Related Art

Unless expressly identified as being publicly or well known, mention herein of techniques and concepts, including for context, definitions, or comparison purposes, should not be construed as an admission that such techniques and concepts are previously publicly known or otherwise part of the prior art. All references cited herein (if any), including patents, patent applications, and publications, are hereby incorporated by reference in their entireties, whether specifically incorporated or not, for all purposes.

SYNOPSIS

The invention may be implemented in numerous ways, e.g. as a process, an article of manufacture, an apparatus, a system, a composition of matter, and a computer readable medium such as a computer readable storage medium (e.g., media in an optical and/or magnetic mass storage device such as a disk, an integrated circuit having non-volatile storage such as flash storage), or a computer network wherein program instructions are sent over optical or electronic communication links. The Detailed Description provides an exposition of one or more embodiments of the invention that enable improvements in cost, profitability, performance, efficiency, and utility of use in the field identified above. The Detailed Description includes an Introduction to facilitate understanding of the remainder of the Detailed Description. The Introduction includes Example Embodiments of one or more of systems, methods, articles of manufacture, and computer readable media in accordance with concepts described herein. As is discussed in more detail in the Conclusions, the invention encompasses all possible modifications and variations within the scope of the issued claims.

BRIEF DESCRIPTION OF DRAWINGS

FIG. 1A illustrates selected details of an embodiment of a Solid-State Disk (SSD) including an SSD controller compatible with operation in an I/O device (such as an I/O storage device) enabled for interoperation with a host (such as a computing host).

FIG. 1B illustrates selected details of various embodiments of systems including one or more instances of the SSD of FIG. 1A.

FIG. 2 illustrates selected details of an embodiment of mapping a Logical Page Number (LPN) portion of a Logical Block Address (LBA).

FIG. 3 illustrates selected details of an embodiment of accessing a Non-Volatile Memory (NVM) at a read unit address to produce read data organized as various read units, collectively having a length measured in quanta of read units.

FIG. 4A illustrates selected details of an embodiment of a read unit.

FIG. 4B illustrates selected details of another embodiment of a read unit.

FIG. 5 illustrates selected details of an embodiment of a header having a number of fields.

FIG. 6 illustrates a flow diagram of selected details of an embodiment of processing commands and optional hint information at an I/O device.

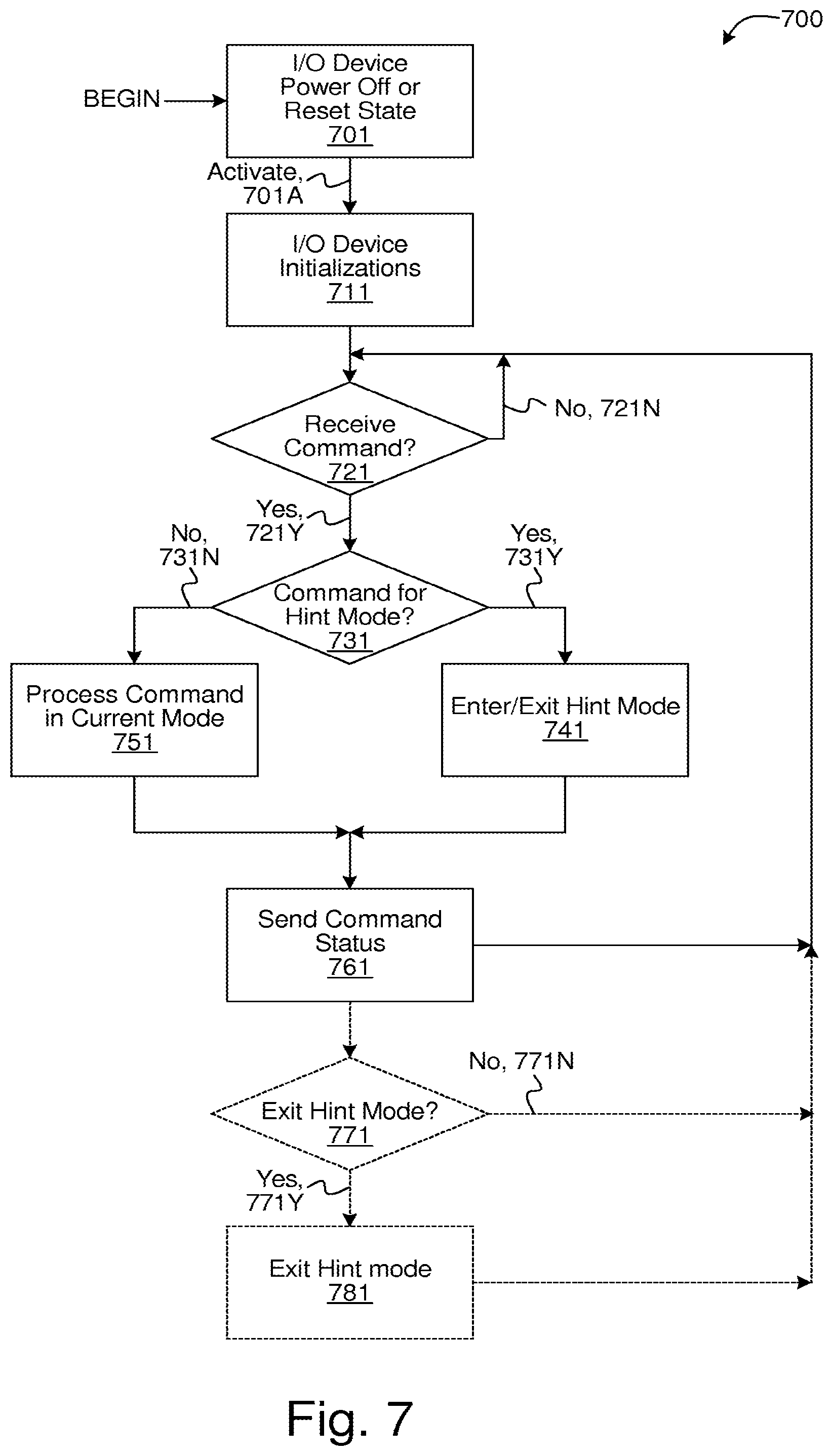

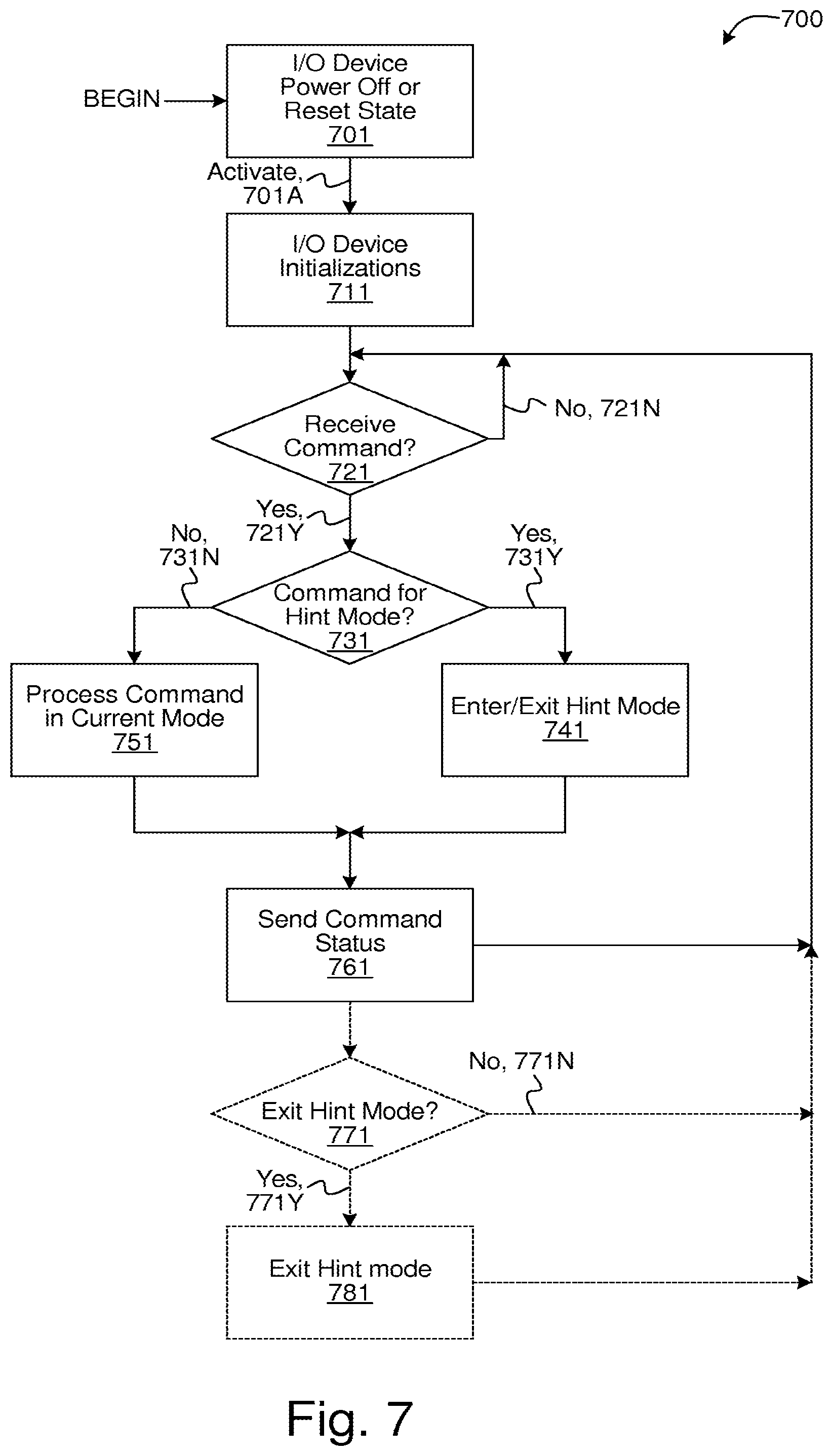

FIG. 7 illustrates a flow diagram of another embodiment of processing commands and optional hint information at an I/O device.

FIG. 8 illustrates a flow diagram of yet another embodiment of processing commands and optional hint information at an I/O device.

FIG. 9 illustrates a flow diagram of an embodiment of processing commands and optional shadow map information at an I/O device.

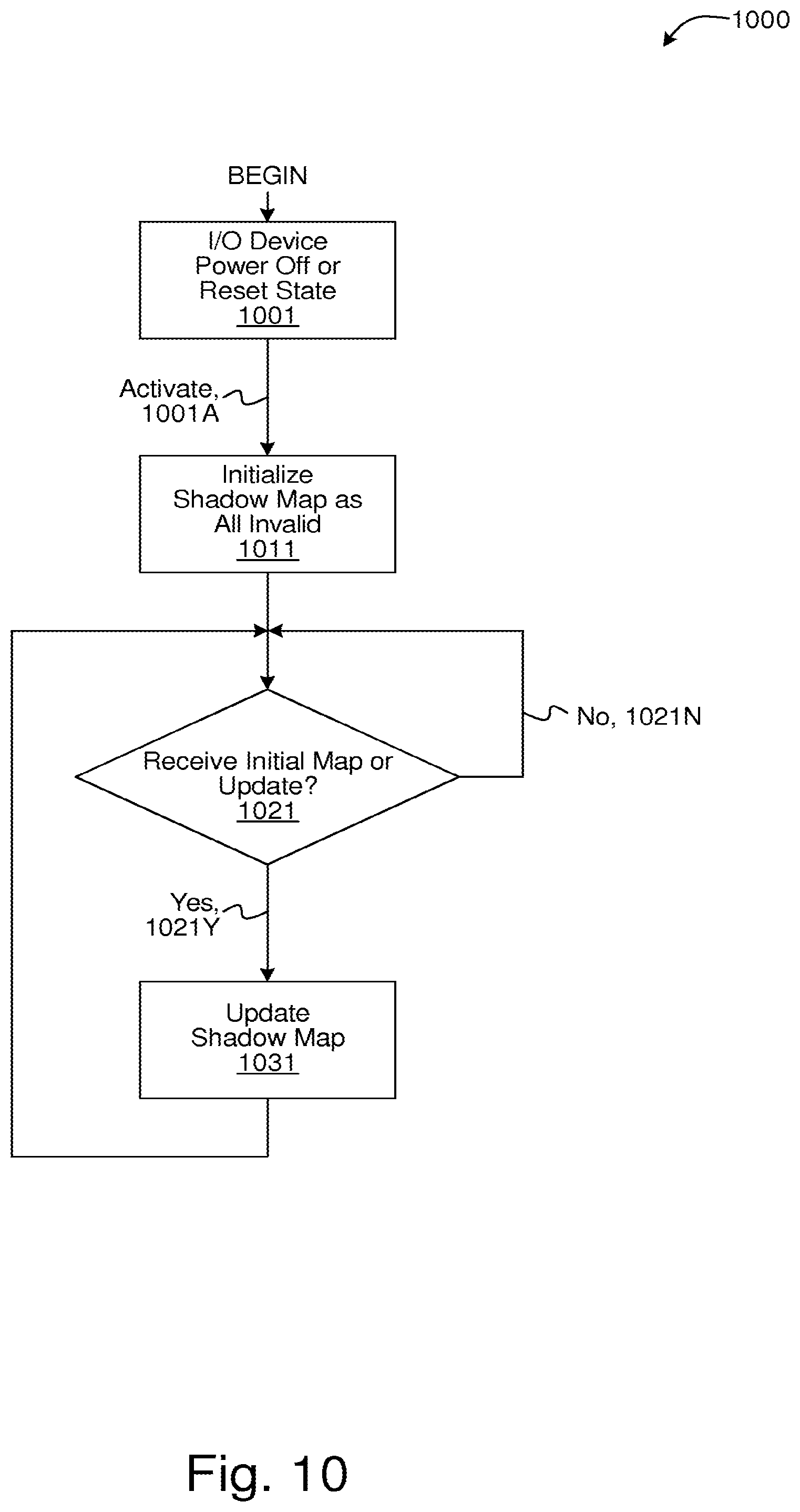

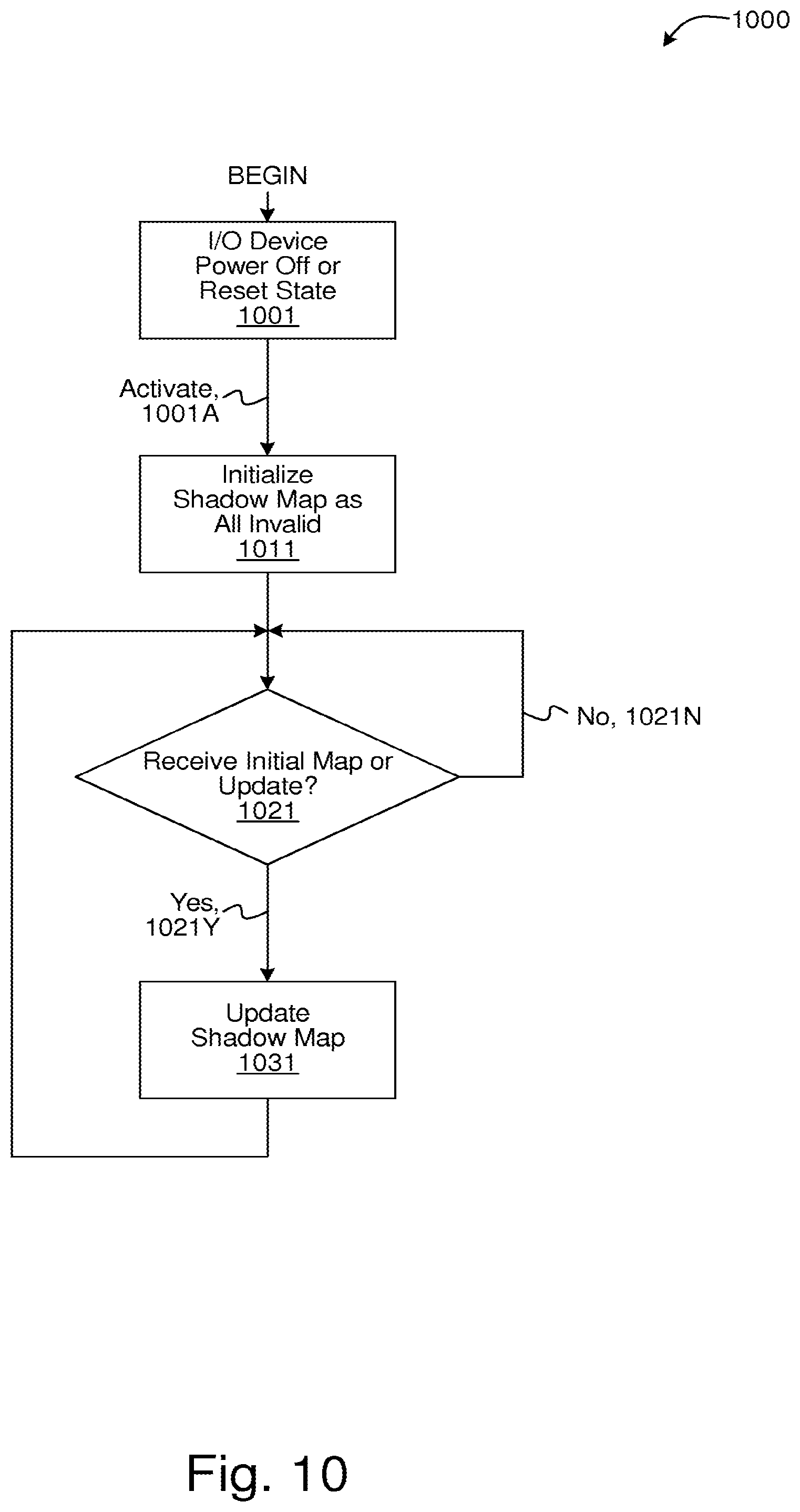

FIG. 10 illustrates a flow diagram of an embodiment of maintaining shadow map information at a computing host.

FIG. 11 illustrates a flow diagram of an embodiment of issuing commands and optional shadow map information at a computing host.

FIG. 12 illustrates a flow diagram of an embodiment of entering and exiting a sleep state of an I/O device.

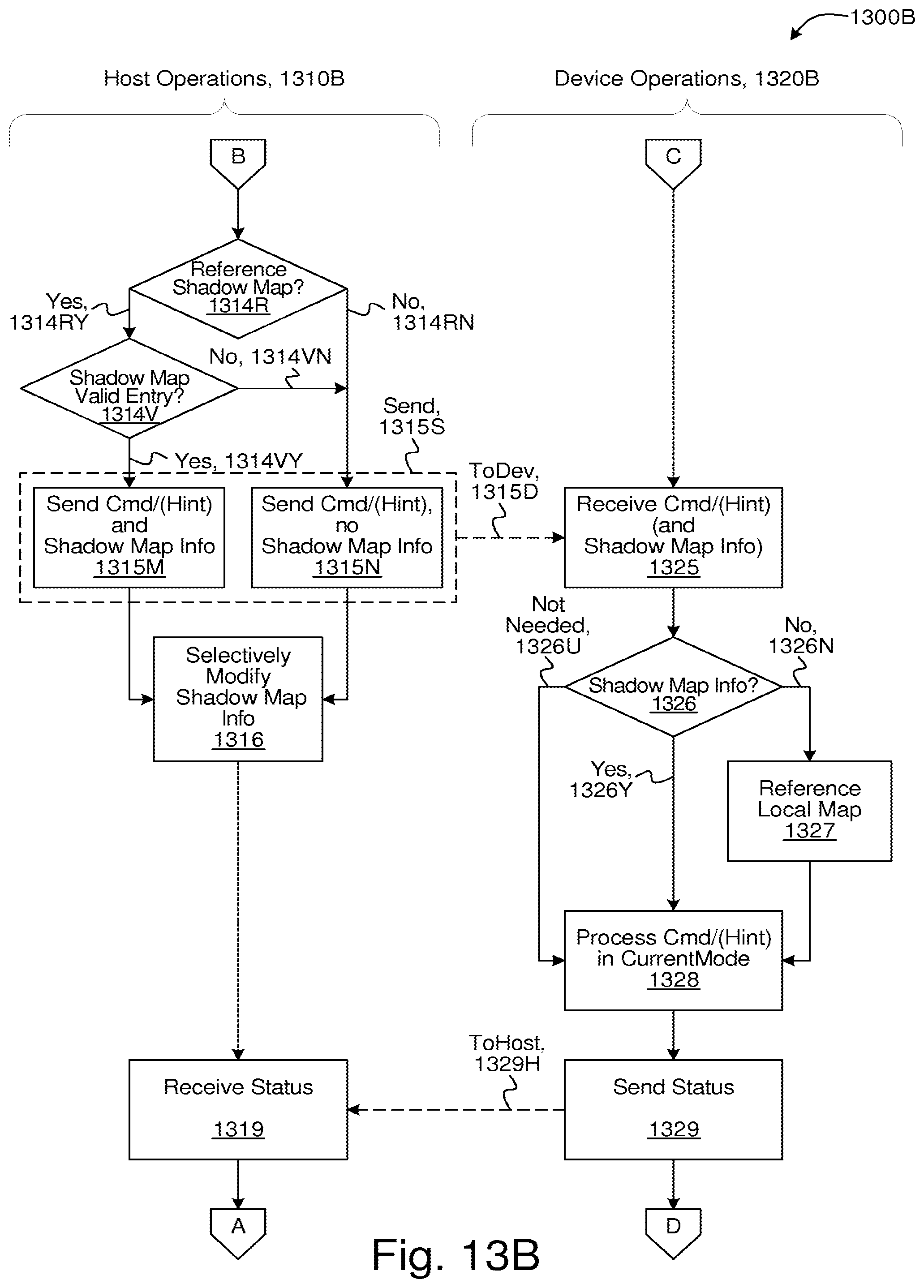

FIGS. 13A and 13B collectively illustrate a flow diagram of an embodiment of I/O device and computing host interoperation.

LIST OF REFERENCE SYMBOLS IN DRAWINGS

| Ref. Symbol | Element Name |
|---|---|
| 100 | SSD Controller |
| 101 | SSD |
| 102 | Host |
| 103 | (optional) Switch/Fabric/Intermediate Controller |
| 104 | Intermediate Interfaces |
| 105 | OS |
| 106 | FirmWare (FW) |
| 107 | Driver |
| 107D | dotted-arrow (Host Software ↔ I/O Device Communication) |
| 108 | Shadow Map |
| 109 | Application |
| 109D | dotted-arrow (Application ↔ I/O Device Communication via driver) |
| 109V | dotted-arrow (Application ↔ I/O Device Communication via VF) |
| 110 | External Interfaces |
| 111 | Host Interfaces |
| 112C | (optional) Card Memory |
| 112H | Host Memory |
| 113 | Tag Tracking |
| 114 | Multi-Device Management Software |
| 115 | Host Software |
| 116 | I/O Card |
| 117 | I/O & Storage Devices/Resources |
| 118 | Servers |
| 119 | LAN/WAN |
| 121 | Data Processing |
| 123 | Engines |
| 131 | Buffer |
| 133 | DMA |
| 135 | ECC-X |
| 137 | Memory |
| 141 | Map |
| 143 | Table |
| 151 | Recycler |
| 161 | ECC |
| 171 | CPU |
| 172 | CPU Core |
| 173 | Command Management |
| 175 | Buffer Management |
| 177 | Translation Management |
| 179 | Coherency Management |
| 180 | Memory Interface |
| 181 | Device Management |
| 182 | Identity Management |
| 190 | Device Interfaces |
| 191 | Device Interface Logic |
| 192 | Flash Device |
| 193 | Scheduling |
| 194 | Flash Die |
| 199 | NVM |
| 211 | LBA |
| 213 | LPN |
| 215 | Logical Offset |
| 221 | Map Info for LPN |
| 223 | Read Unit Address |
| 225 | Length in Read Units |
| 311 | Read Data |
| 313 | First Read Unit |
| 315 | Last Read Unit |
| 401A | Read Unit |
| 401B | Read Unit |
| 410B | Header Marker (HM) |
| 411A | Header 1 |
| 411B | Header 1 |
| 412B | Header 2 |
| 419A | Header N |
| 419B | Header N |
| 421A | Data Bytes |
| 421B | Data Bytes |
| 422B | Data Bytes |
| 429B | Data Bytes |
| 431A | Optional Padding Bytes |
| 431B | Optional Padding Bytes |
| 501 | Header |
| 511 | Type |
| 513 | Last Indicator |
| 515 | Flags |
| 517 | LPN |
| 519 | Length |
| 521 | Offset |
| 600 | I/O Device Command Processing, generally |
| 601 | I/O Device Power Off or Reset State |
| 601A | Activate |
| 611 | I/O Device Initializations |
| 621 | Receive Command? |
| 621N | No |
| 621Y | Yes |
| 631 | Command With or Uses Hint? |
| 631N | No |
| 631Y | Yes |
| 641 | Process Command Using Hint |
| 651 | Process Command (no Hint) |
| 661 | Send Command Status |
| 700 | I/O Device Command Processing, generally |
| 701 | I/O Device Power Off or Reset State |
| 701A | Activate |
| 711 | I/O Device Initializations |
| 721 | Receive Command? |
| 721N | No |
| 721Y | Yes |
| 731 | Command for Hint Mode? |
| 731N | No |
| 731Y | Yes |
| 741 | Enter/Exit Hint Mode |
| 751 | Process Command in Current Mode |
| 761 | Send Command Status |
| 771 | Exit Hint Mode? |
| 771N | No |
| 771Y | Yes |
| 781 | Exit Hint mode |
| 800 | I/O Device Command Processing, generally |
| 801 | I/O Device Power Off or Reset State |
| 801A | Activate |
| 811 | I/O Device Initializations |
| 821 | Receive Command? |
| 821N | No |
| 821Y | Yes |
| 831 | With or Uses Hint? |
| 831N | No |
| 831Y | Yes |
| 832 | Process with Hint in CurrentMode |
| 841 | Enter Hint Mode? |
| 841N | No |
| 841Y | Yes |
| 842 | Enter Particular Hint Mode (CurrentMode += ParticularMode) |
| 851 | Exit Hint Mode? |
| 851N | No |
| 851Y | Yes |
| 852 | Exit Particular Hint Mode (CurrentMode -= ParticularMode) |
| 861 | Exit All Hint Modes? |
| 861N | No |
| 861Y | Yes |
| 862 | Exit All Hint Modes (CurrentMode = DefaultMode) |
| 872 | Process in CurrentMode (no Hint) |
| 881 | Send Status |
| 882 | (optional) Exit Hint Mode(s) |
| 900 | I/O Device Command Processing, generally |
| 901 | I/O Device Power Off or Reset State |
| 901A | Activate |
| 911 | I/O Device Initializations |
| 921 | Transfer Initial Map to Host (optionally in background) |
| 931 | Receive Command? |
| 931N | No |
| 931Y | Yes |
| 941 | Location Provided? |
| 941N | No |
| 941Y | Yes |
| 951 | Determine Location (e.g. by map access) |
| 961 | Process Command |
| 971 | Send Command Status (optionally with map update for write) |
| 981 | Map Update to Send? |
| 981N | No |
| 981Y | Yes |
| 991 | Transfer Map Update(s) to Host (optionally in background) (optionally accumulating multiple updates prior to transfer) |
| 1000 | Host Shadow Map Processing, generally |
| 1001 | I/O Device Power Off or Reset State |
| 1001A | Activate |
| 1011 | Initialize Shadow Map as All Invalid |
| 1021 | Receive Initial Map or Update? |
| 1021N | No |
| 1021Y | Yes |
| 1031 | Update Shadow Map |
| 1100 | Host Command Issuing, generally |
| 1101 | I/O Device Power Off or Reset State |
| 1101A | Activate |
| 1111 | Command? |
| 1111N | No |
| 1111Y | Yes |
| 1121 | Decode Command |
| 1121O | Other |
| 1121R | Read |
| 1121T | Trim |
| 1121W | Write |
| 1131 | Mark LBA in Shadow Map as Invalid |
| 1141 | Mark LBA in Shadow Map as Trimmed |
| 1151 | LBA Valid in Shadow Map? |
| 1151N | No |
| 1151Y | Yes |
| 1161 | Issue Command |
| 1171 | Issue Command as Pre-Mapped Read (with location from shadow map) |
| 1200 | I/O Device Sleep Entry/Exit, generally |
| 1201 | I/O Device Power Off or Reset State |
| 1201A | Activate |
| 1211 | I/O Device Initializations |
| 1221 | Active Operating State |
| 1231 | Enter Sleep State? |
| 1231N | No |
| 1231Y | Yes |
| 1241 | Save Internal State in Save/Restore Memory |
| 1251 | Sleep State |
| 1261 | Exit Sleep State? |
| 1261N | No |
| 1261Y | Yes |
| 1271 | Restore Internal State from Save/Restore Memory |
| 1300A | I/O Device and Computing Host Interoperation, generally |
| 1300B | I/O Device and Computing Host Interoperation, generally |
| 1301 | I/O Device Power Off/Sleep or Reset State |
| 1301D | Activate |
| 1301H | Activate |
| 1310A | Host Operations |
| 1310B | Host Operations |
| 1311 | Initialize Shadow Map as All Invalid |
| 1312 | Initialize/Update Shadow Map |
| 1312G | Generate |
| 1313 | Generate Command (optional Hint) |
| 1314R | Reference Shadow Map? |
| 1314RN | No |
| 1314RY | Yes |
| 1314V | Shadow Map Valid Entry? |
| 1314VN | No |
| 1314VY | Yes |
| 1315D | To Device |
| 1315M | Send Command/(Hint) and Shadow Map Info |
| 1315N | Send Command/(Hint), no Shadow Map Info |
| 1315S | Send |
| 1316 | Selectively Modify Shadow Map Info |
| 1319 | Receive Status |
| 1320A | Device Operations |
| 1320B | Device Operations |
| 1321 | I/O Device Initializations and Conditional State Restore from Memory |
| 1322 | Send Local Map (full or updates) to Host |
| 1322C | Command |
| 1322H | To Host |
| 1322S | Sleep |
| 1323 | Receive Sleep Request and State Save to Memory |
| 1325 | Receive Command/(Hint) (and Shadow Map Info) |
| 1326 | Shadow Map Info? |
| 1326N | No |
| 1326U | Not Needed |
| 1326Y | Yes |
| 1327 | Reference Local Map |
| 1328 | Process Command/(Hint) in CurrentMode |
| 1329 | Send Status |
| 1329H | To Host |

DETAILED DESCRIPTION

A detailed description of one or more embodiments of the invention is provided below along with accompanying figures illustrating selected details of the invention. The invention is described in connection with the embodiments. The embodiments herein are understood to be merely exemplary, the invention is expressly not limited to or by any or all of the embodiments herein, and the invention encompasses numerous alternatives, modifications, and equivalents. To avoid monotony in the exposition, a variety of word labels (including but not limited to: first, last, certain, various, further, other, particular, select, some, and notable) may be applied to separate sets of embodiments; as used herein such labels are expressly not meant to convey quality, or any form of preference or prejudice, but merely to conveniently distinguish among the separate sets. The order of some operations of disclosed processes is alterable within the scope of the invention. Wherever multiple embodiments serve to describe variations in process, method, and/or program instruction features, other embodiments are contemplated that in accordance with a predetermined or a dynamically determined criterion perform static and/or dynamic selection of one of a plurality of modes of operation corresponding respectively to a plurality of the multiple embodiments. Numerous specific details are set forth in the following description to provide a thorough understanding of the invention. The details are provided for the purpose of example and the invention may be practiced according to the claims without some or all of the details. For the purpose of clarity, technical material that is known in the technical fields related to the invention has not been described in detail so that the invention is not unnecessarily obscured.

Introduction

This introduction is included only to facilitate the more rapid understanding of the Detailed Description; the invention is not limited to the concepts presented in the introduction (including explicit examples, if any), as the paragraphs of any introduction are necessarily an abridged view of the entire subject and are not meant to be an exhaustive or restrictive description. For example, the introduction that follows provides overview information limited by space and organization to only certain embodiments. There are many other embodiments, including those to which claims will ultimately be drawn, discussed throughout the balance of the specification.

Acronyms

At least some of the various shorthand abbreviations (e.g. acronyms) defined here refer to certain elements used herein.

| Acronym | Description |
|---|---|
| AES | Advanced Encryption Standard |
| API | Application Program Interface |
| AHCI | Advanced Host Controller Interface |
| ASCII | American Standard Code for Information Interchange |
| ATA | Advanced Technology Attachment (AT Attachment) |
| BCH | Bose Chaudhuri Hocquenghem |
| BIOS | Basic Input/Output System |
| CD | Compact Disk |
| CF | Compact Flash |
| CMOS | Complementary Metal Oxide Semiconductor |
| CPU | Central Processing Unit |
| CRC | Cyclic Redundancy Check |
| DDR | Double-Data-Rate |
| DES | Data Encryption Standard |
| DMA | Direct Memory Access |
| DNA | Direct NAND Access |
| DRAM | Dynamic Random Access Memory |
| DVD | Digital Versatile/Video Disk |
| ECC | Error-Correcting Code |
| eSATA | external Serial Advanced Technology Attachment |
| FUA | Force Unit Access |
| HBA | Host Bus Adapter |
| HDD | Hard Disk Drive |
| I/O | Input/Output |
| IC | Integrated Circuit |
| IDE | Integrated Drive Electronics |
| JPEG | Joint Photographic Experts Group |
| LAN | Local Area Network |
| LBA | Logical Block Address |
| LDPC | Low-Density Parity-Check |
| LPN | Logical Page Number |
| LZ | Lempel-Ziv |
| MLC | Multi-Level Cell |
| MMC | MultiMediaCard |
| MPEG | Moving Picture Experts Group |
| MRAM | Magnetic Random Access Memory |
| NCQ | Native Command Queuing |
| NVM | Non-Volatile Memory |
| OEM | Original Equipment Manufacturer |
| ONA | Optimized NAND Access |
| ONFI | Open NAND Flash Interface |
| OS | Operating System |
| PC | Personal Computer |
| PCIe | Peripheral Component Interconnect express (PCI express) |
| PDA | Personal Digital Assistant |
| PHY | PHYsical interface |
| RAID | Redundant Array of Inexpensive/Independent Disks |
| RS | Reed-Solomon |
| RSA | Rivest, Shamir & Adleman |
| SAS | Serial Attached Small Computer System Interface (Serial SCSI) |
| SATA | Serial Advanced Technology Attachment (Serial ATA) |
| SCSI | Small Computer System Interface |
| SD | Secure Digital |
| SLC | Single-Level Cell |
| SMART | Self-Monitoring Analysis and Reporting Technology |
| SPB | Secure Physical Boundary |
| SRAM | Static Random Access Memory |
| SSD | Solid-State Disk/Drive |
| SSP | Software Settings Preservation |
| USB | Universal Serial Bus |
| VF | Virtual Function |
| VPD | Vital Product Data |
| WAN | Wide Area Network |

In some embodiments, an I/O device such as a Solid-State Disk (SSD) is coupled via a host interface to a host computing system, also simply herein termed a host. According to various embodiments, the coupling is via one or more host interfaces including PCIe, SATA, SAS, USB, Ethernet, Fibre Channel, or any other interface suitable for coupling two electronic devices. In further embodiments, the host interface includes an electrical signaling interface and a host protocol. The host protocol defines standard commands for communicating with the I/O device, including commands that send data to and receive data from the I/O device.

The host computing system includes one or more processing elements herein termed a computing host (or sometimes simply a "host"). According to various embodiments, the computing host executes one or more of: supervisory software, such as an operating system and/or a hypervisor; a driver to communicate between the supervisory software and the I/O device; application software; and a BIOS. In further embodiments, some or all of the driver, or a copy of a portion of the driver, is incorporated in one or more of the BIOS, the operating system, the hypervisor, and the application. In still further embodiments, the application is enabled to communicate with the I/O device more directly by sending commands through the driver in a bypass mode, and/or by having direct communication with the I/O device. An example of an application having direct communication with an I/O device is provided by Virtual Functions (VFs) of a PCIe I/O device. The driver communicates with a primary function of the I/O device to globally configure the I/O device, and one or more applications directly communicate with the I/O device via respective virtual functions. The virtual functions enable each of the applications to treat at least a portion of the I/O device as a private I/O device of the application.

The standard commands of the host protocol provide a set of features and capabilities for I/O devices as of a time when the host protocol is standardized. Some I/O devices have features and/or capabilities not supported in the standard host protocol and thus not controllable by a computing host using the standard host protocol. Accordingly, in some embodiments, non-standard features and/or capabilities are added to the host protocol via techniques including one or more of: using reserved command codes; using vendor-specific commands; using reserved fields in existing commands; using bits in certain fields of commands that are unused by the particular I/O device, such as unused address bits; adding new features to capability registers, such as in SATA by a SET FEATURES command; by aggregating and/or fusing commands; and other techniques known in the art. Use of the non-standard features and/or capabilities is optionally in conjunction with use of a non-standard driver and/or an application enabled to communicate with the I/O device.

Herein, a non-standard modifier of a standard command refers to use of any of the techniques above to extend a standard command with non-standard features and/or capabilities not supported in the standard host protocol. In various embodiments, a non-standard modifier (or portion thereof) is termed a hint that is optionally used by or provided with a (standard) command. In a first example, the non-standard modifier is encoded as part of a command in the host protocol, and affects solely that command (e.g. a "one-at-a-time" hint). In a second example, the non-standard modifier is encoded as part of a command in the host protocol and stays in effect on subsequent commands (e.g. a "sticky" hint) unless it is temporarily disabled, such as by another non-standard modifier on one of the subsequent commands, or until it is disabled for all further commands, such as by another non-standard modifier on another command. In a third example, the non-standard modifier is enabled in a configuration register by a mode-setting command, such as in SATA by a SET FEATURES command, and stays in effect until it is explicitly disabled, such as by another mode-setting command. Many combinations and variations of these examples are possible.
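The difference between a one-at-a-time hint and a sticky hint can be illustrated with a minimal sketch. The class `HintState`, its method `effective_hints`, and the hint names are hypothetical and are not taken from any host protocol; the sketch models only the first two examples above, not the mode-setting register of the third.

```python
from dataclasses import dataclass, field


@dataclass
class HintState:
    """Tracks which (hypothetical) hints apply to each incoming command."""
    sticky: set = field(default_factory=set)

    def effective_hints(self, one_shot=(), set_sticky=(), clear_sticky=(), suppress=()):
        # Sticky hints stay in effect until disabled for all further commands.
        self.sticky |= set(set_sticky)
        self.sticky -= set(clear_sticky)
        # 'suppress' temporarily disables a sticky hint for this command only;
        # 'one_shot' hints affect solely this command.
        return (self.sticky - set(suppress)) | set(one_shot)
```

In this sketch, a hint passed as `one_shot` is forgotten as soon as the call returns, whereas a hint passed as `set_sticky` is returned for every later command until it is named in `clear_sticky`.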

According to various embodiments, the non-standard features and/or capabilities affect one or more of: execution of commands; power and/or performance of commands; treatment and/or processing of data associated with a command; a relationship between multiple commands; a relationship between data of multiple commands; an indication that data of a command is trimmed; an indication that data of a command is uncorrectable; a specification of a type of data of a command; a specification of a data access type for data of a command; a specification of data sequencing for data of multiple commands; a specification of a data relationship among data of multiple commands; any other data value, data type, data sequence, data relationship, data destination, or data property specification; a property of the I/O device, such as power and/or performance; and any other feature and/or capability affecting operation of the I/O device, and/or processing of commands and/or data, and/or storing, retrieval and/or recycling of data.

In some embodiments and/or usage scenarios, a command includes one of a plurality of LBAs, and a map optionally and/or selectively associates the LBA of the command with one of a plurality of entries of the map. Each of the entries of the map includes a location in an NVM of the I/O device and/or an indication that data associated with the LBA is not present in (e.g. unallocated, de-allocated, deleted, or trimmed from) the NVM. According to various embodiments, in response to receiving the command, the I/O device one or more of: accesses the entry of the map associated with the LBA; stores an indication of a non-standard modifier of the command in the entry of the map associated with the LBA; retrieves an indication of a non-standard modifier of a prior command from the entry of the map associated with the LBA; and other operations performed by an I/O device in response to receiving the command. Storing and/or retrieving a non-standard modifier from an entry of the map enables effects of the non-standard modifier to be associated with specific ones of the LBAs and/or to be persistent across multiple commands. For example, a command includes a particular non-standard modifier specifying a data destination that is a fixed one of a plurality of data bands. In addition to storing data of the command in the specified fixed data band, an indication of the particular non-standard modifier is stored in an entry of the map associated with an LBA of the command. Subsequent recycling of the data of the command is enabled to access the entry of the map associated with the LBA of the command, and according to the indication of the particular non-standard modifier, to cause the recycling to recycle the data back to the specified fixed data band.
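The data band example above can be sketched as follows. This is an illustrative model only: the names `MapEntry`, `BandedDevice`, and `DEFAULT_BAND`, and the use of a simple counter as an NVM address, are assumptions made for the sketch.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class MapEntry:
    location: int          # assumed stand-in for a location in the NVM
    band: Optional[int]    # recorded data-band modifier, or None if none was given


class BandedDevice:
    DEFAULT_BAND = 0  # assumption: band used when no modifier is supplied

    def __init__(self):
        self.map = {}            # LBA -> MapEntry
        self.band_of_addr = {}   # NVM address -> band currently holding it
        self.next_addr = 0

    def _place(self, band):
        addr = self.next_addr
        self.next_addr += 1
        self.band_of_addr[addr] = band
        return addr

    def write(self, lba, band=None):
        target = self.DEFAULT_BAND if band is None else band
        # Store the data and record the modifier in the map entry for the LBA.
        self.map[lba] = MapEntry(self._place(target), band)

    def recycle(self, lba):
        entry = self.map[lba]
        del self.band_of_addr[entry.location]
        # Recycling consults the map entry and returns the data to the same band.
        target = self.DEFAULT_BAND if entry.band is None else entry.band
        entry.location = self._place(target)
```

Because the band indication lives in the map entry rather than in the command, the recycling step needs no further input from the computing host.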

Examples of a non-standard modifier that specifies a type of data include specification of a compressibility of data, such as incompressible, or specification of a usage model of the data, such as usage as a database journal. In some instances, data identified (e.g. by the I/O device and/or the host) to be of a particular type (such as via the specification or a previously provided specification that has been recorded in the map) is optionally and/or selectively processed more efficiently by the I/O device. In some usage scenarios, for example, data identified to be of a database journal type is optionally and/or selectively stored in a database journal one of a plurality of data bands, the database journal data band reserved for the database journal type of data. In various embodiments, the database journal data band is of a fixed size, and when full, the oldest data in the database journal data band is optionally and/or selectively automatically deleted.
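A fixed-size database journal data band that discards its oldest data when full behaves like a bounded queue. The sketch below assumes, purely for illustration, that capacity is counted in records rather than bytes; `JournalBand` is a hypothetical name.

```python
from collections import deque


class JournalBand:
    """Fixed-capacity band; appending to a full band drops the oldest record."""

    def __init__(self, capacity):
        # deque(maxlen=...) discards items from the opposite end when full.
        self.records = deque(maxlen=capacity)

    def append(self, record):
        self.records.append(record)
```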

Examples of a non-standard modifier that specifies a data access type include specification of a read/write access type, specification of a read-mostly access type, specification of a write-mostly access type, specification of a write-once (also known as read-only) access type, and specification of a transient access type. In some instances, data identified (e.g. by the I/O device and/or the host) to have a particular access type (such as via the specification or a previously provided specification that has been recorded in the map) is optionally and/or selectively processed more efficiently by the I/O device. In a first example, identifying a relative frequency with which data is read or written optionally and/or selectively enables the I/O device to advantageously store the data in a manner and/or in a location to more efficiently enable writing of, access to, reading of, and/or recycling of the data. In a second example, standard write access to data that is identified by the I/O device to be write-once is optionally and/or selectively treated as an error.

In some embodiments, the specification of a transient access type enables data to be stored by the I/O device, and further optionally and/or selectively enables the I/O device to delete and/or trim (e.g. "auto-trim") the data without having received a command from the computing host to do so. For example, identifying that a particular portion of storage is transient optionally and/or selectively enables the I/O device to trim the portion of storage rather than recycling it. According to various embodiments, subsequent access by a computing host to transient data that has been deleted or trimmed by the I/O device returns one or more of: an indication that the data has been deleted or trimmed; an error indication; data containing a specific pattern and/or value; and any other indication to the computing host that the data has been deleted or trimmed by the I/O device.

In various embodiments, a specification of a transient-after-reset access type enables data to be stored by the I/O device, and further optionally and/or selectively enables the I/O device to delete and/or trim (e.g. auto-trim) the data without having received a command from the computing host to do so, but solely after a subsequent power-cycle and/or reset of the I/O device and/or of a system including the I/O device. For example, certain operating system data, such as page files, and/or certain application data, such as data of a memcached application, are invalid after a power-cycle and/or reset of the system. In some embodiments, an indication of the transient-after-reset access type includes a counter, such as a two-bit counter. A value of a global counter is used to initialize the counter of the indication of the transient-after-reset access type. The global counter is incremented on each power-cycle and/or reset of the I/O device and/or the system. A particular portion of storage, such as a portion of an NVM of the I/O device, having the indication of the transient-after-reset access type is selectively trimmed when processing the particular portion of storage for recycling according to whether the counter of the indication of the transient-after-reset access type matches the global counter. In yet further embodiments, there are multiple global counters, each of the global counters is optionally and/or selectively independently incremented, and the indication of the transient-after-reset access type further includes a specification of a respective one of the global counters. Techniques other than counters, such as bit masks or fixed values, are used in various embodiments to distinguish whether the particular portion of storage is to be selectively trimmed when recycled.
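The counter comparison described above can be sketched as follows. The sketch assumes a single global counter and assumes that a mismatch between the stored counter and the global counter means the data predates a reset and is eligible for trimming; `ResetEpoch` and its method names are hypothetical.

```python
COUNTER_BITS = 2                      # two-bit counter, as in the example above
MASK = (1 << COUNTER_BITS) - 1


class ResetEpoch:
    def __init__(self):
        self.global_counter = 0

    def on_reset(self):
        # Incremented on each power-cycle and/or reset; wraps at 2**COUNTER_BITS.
        self.global_counter = (self.global_counter + 1) & MASK

    def stamp(self):
        # Value stored with the transient-after-reset indication at write time.
        return self.global_counter

    def trim_on_recycle(self, stamp):
        # Assumption: a mismatch means the data was written before a reset.
        return stamp != self.global_counter
```

Note that a two-bit counter wraps after four resets, so in this sketch data that is never recycled across four resets would again appear current; the text does not state how, or whether, an embodiment handles that case.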

In still further embodiments, the indication of the power-cycle and/or reset is a signal provided from the computing host to indicate that a portion of storage having an indication of a transient-after-reset access type is enabled to be trimmed when the portion of storage is subsequently processed for recycling. In a first example, in an environment with virtual machines, the signal provided from the computing host is an indication of a reset and/or termination of a virtual machine. In a second example, with a memcached application, the signal provided from the computing host is an indication of a reset and/or termination of the memcached application. In a third example, the indication of the power-cycle and/or reset is a function-level reset of a virtual function of the I/O device. In some usage scenarios, only portions of an NVM of the I/O device associated with the virtual function, such as a particular range of LBAs, are affected by the function-level reset.

Examples of a non-standard modifier that specifies a data sequencing include specification of a sequential data sequencing, and specification of an atomic data sequencing. In some instances, data identified (e.g. by the I/O device and/or the host) to be of a particular data sequencing (such as via the specification or a previously provided specification that has been recorded in the map) is optionally and/or selectively processed more efficiently by the I/O device. In a first example, identifying that data belongs to a sequential data sequencing optionally and/or selectively enables the I/O device to advantageously store the data in a manner and/or in a location to more efficiently enable writing of, access to, reading of, and/or recycling of the data. In a second example, identifying that data belongs to an atomic data sequencing optionally and/or selectively enables the I/O device to advantageously treat data of the atomic data sequencing as a unit and guarantee that either all or none of the data of the atomic data sequencing are successfully written as observable by the computing host. In some embodiments, writing an atomic sequence of data includes writing meta-data, such as log information, indicating a start and/or an end of the sequence.

Examples of a non-standard modifier that specifies a data relationship include specification of a read and/or a write association between multiple items of data. In some instances, data identified (e.g. by the I/O device and/or the host) to be of a particular data relationship (such as via the specification or a previously provided specification that has been recorded in the map) is optionally and/or selectively processed more efficiently by the I/O device. For example, identifying a read data relationship between two items of data optionally and/or selectively enables the I/O device to advantageously prefetch a second one of the items of data when a first one of the items of data is read. In some usage examples and/or scenarios, the first item of data is a label of a file in a file system, and the second item of data is a corresponding extent of the file. According to various embodiments, a data relationship is one or more of: one-to-one; one-to-many; many-to-one; many-to-many; a different data relationship for different commands, such as different for a read command as compared to a write command; and any other relationship among items of data.

Examples of a non-standard modifier that specifies a data destination include a specification of a particular portion of NVM (such as a particular flash storage element or collection of flash storage elements, e.g. to provide for spreading data among elements of the NVM), a specification of a hierarchical storage tier, a specification of a type of storage, and a specification of one of a plurality of data bands. In some instances, data identified (e.g. by the I/O device and/or the host) to be of a particular data destination (such as via the specification or a previously provided specification that has been recorded in the map) is optionally and/or selectively processed more efficiently by the I/O device. In a first example, identifying that data is preferentially stored in a specified type of storage optionally and/or selectively enables the I/O device to advantageously store the data in one of a plurality of memories of different characteristics, such as SLC flash vs. MLC flash, such as flash vs. MRAM, or such as volatile memory vs. NVM. The different characteristics of the memories include one or more of: volatility; performance such as access time, latency, and/or bandwidth; read, write, or erase time; power; reliability; longevity; lower-level error correction and/or redundancy capability; higher-level error correction and/or redundancy capability; and other memory characteristics. In a second example, identifying that data is to be stored in a specified one of a plurality of data bands optionally and/or selectively enables the I/O device to advantageously store the data in the specified data band to improve one or more of: write speed; recycling speed; recycling frequency; write amplification; and other characteristics of data storage.

Examples of a non-standard modifier that specifies a command relationship include specification of a command priority imposing a preferred or required relative order among commands, and specification of a command barrier imposing a boundary between commands of at least some types. For example, a write command barrier type of command barrier is permeable to read commands but impermeable to write commands, enabling the write command barrier to ensure that all previously submitted write commands complete prior to completion of the write command barrier.
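The permeability of a write command barrier can be sketched as a completion rule over an ordered queue. The `Cmd` record and the function `may_complete` are hypothetical, and the rule that a later write waits for an earlier incomplete barrier is one reading of "impermeable to write commands".

```python
from dataclasses import dataclass


@dataclass
class Cmd:
    kind: str          # "read", "write", or "write_barrier"
    done: bool = False


def may_complete(queue, i):
    """Return True if queue[i] is allowed to complete, given submission order."""
    cmd, earlier = queue[i], queue[:i]
    if cmd.kind == "read":
        return True  # the barrier is permeable to reads
    if cmd.kind == "write_barrier":
        # All previously submitted writes must complete before the barrier does.
        return all(c.done for c in earlier if c.kind == "write")
    # A write must not pass an earlier write barrier that has not completed.
    return all(c.done for c in earlier if c.kind == "write_barrier")
```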

An example of an aggregated and/or fused command is a combination of two or more commands treated as a unit and either not executed or executed as a whole. For example, non-standard modifiers specify a start, a continuation, or an end of a fused command sequence. Commands of the fused command sequence are executed in an atomic manner such that unless all of the commands complete successfully, none of the effects of any of the commands are visible. An example of a fused command sequence is a compare-write sequence where effects of a subsequent write command are only visible if a preceding compare command succeeded, such as by the comparison being equal. According to various embodiments, commands of a fused command sequence are executed one or more of: sequentially; in parallel; in an order determined by ordering rules of the host protocol; in an order in which the commands are received by the I/O device; and in any order.
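The all-or-nothing behavior of a fused command sequence can be sketched by staging effects and committing them only if every command succeeds. This is an illustrative model over a dictionary keyed by LBA; `run_fused`, `compare`, and `write` are hypothetical helpers, and sequential execution is just one of the orders listed above.

```python
def run_fused(store, commands):
    """Apply commands to a staged copy; commit only if all of them succeed."""
    staged = dict(store)
    for command in commands:
        if not command(staged):
            return False          # nothing becomes visible in 'store'
    store.clear()
    store.update(staged)
    return True


def compare(lba, expected):
    # Succeeds only if the current data at the LBA equals the expected data.
    return lambda s: s.get(lba) == expected


def write(lba, data):
    def op(s):
        s[lba] = data
        return True
    return op
```

A compare-write sequence is then `run_fused(store, [compare(lba, old), write(lba, new)])`, which leaves `store` unchanged when the comparison fails.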

In some embodiments, an I/O device, such as an SSD, includes an SSD controller. The SSD controller acts as a bridge between the host interface and NVM of the SSD, and executes commands of a host protocol sent from a computing host via a host interface of the SSD. At least some of the commands direct the SSD to write and read the NVM with data sent from and to the computing host, respectively. In further embodiments, the SSD controller is enabled to use a map to translate between LBAs of the host protocol and physical storage addresses in the NVM. In further embodiments, at least a portion of the map is used for private storage (not visible to the computing host) of the I/O device. For example, a portion of the LBAs not accessible by the computing host is used by the I/O device to manage access to logs, statistics, or other private data.

According to various embodiments, the map is one or more of: a one-level map; a two-level map; a multi-level map; a direct map; an associative map; and any other means of associating the LBAs of the host protocol with the physical storage addresses in the NVM. For example, in some embodiments, a two-level map includes a first-level map that associates a first function of an LBA with a respective address in the NVM of one of a plurality of second-level map pages, and each of the second-level map pages associates a second function of the LBA with a respective address in the NVM of data corresponding to the LBA. In further embodiments, an example of the first function of the LBA and the second function of the LBA are a quotient and a remainder obtained when dividing by a fixed number of entries included in each of the second-level map pages. The plurality of second-level map pages is collectively termed a second-level map. Herein, references to one or more entries of a map refers to one or more entries of any type of map, including a one-level map, a first-level of a two-level map, a second-level of a two-level map, any level of a multi-level map, or any other type of map having entries.
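The quotient-and-remainder form of a two-level lookup can be sketched as follows. `ENTRIES_PER_PAGE`, the list-based first-level map, and the `read_page` callable standing in for an NVM read are assumptions made for illustration.

```python
ENTRIES_PER_PAGE = 1024  # assumed fixed number of entries per second-level map page


def lookup(first_level, read_page, lba):
    """Translate an LBA via a two-level map.

    first_level: sequence mapping page index -> NVM address of a second-level page
    read_page:   callable returning the entries of the page at a given NVM address
    """
    # First function of the LBA is the quotient; second function is the remainder.
    page_index, entry_index = divmod(lba, ENTRIES_PER_PAGE)
    page = read_page(first_level[page_index])
    return page[entry_index]
```

For example, with 1024 entries per page, LBA 1030 selects second-level page 1 and entry 6 within that page.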

According to various embodiments, each of the map pages of a second-level map (or a lower-level of a multi-level map) one or more of: includes a same number of entries as others of the map pages; includes a different number of entries than at least some others of the map pages; includes entries of a same granularity as others of the map pages; includes entries of a different granularity than others of the map pages; includes entries that are all of a same granularity; includes entries that are of multiple granularities; includes a respective header specifying a format and/or layout of contents of the map page; and has any other format, layout, or organization to represent entries of the map page. For example, a first second-level map page has a specification of a granularity of 4 KB per entry, and a second second-level map page has a specification of a granularity of 8 KB per entry and only one half as many entries as the first second-level map page.

In further embodiments, entries of a higher-level map include the format and/or layout information of the corresponding lower-level map pages, for example, each of the entries in a first-level map includes a granularity specification for entries in the associated second-level map page.

In some embodiments, the map includes a plurality of entries, each of the entries associating one or more LBAs with information selectively including a respective location in the NVM where data of the LBAs is stored. For example, LBAs specify 512 B sectors, and each entry in the map is associated with an aligned eight-sector (4 KB) region of the LBAs.
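The arithmetic of the 512 B sector example can be written out directly. The function name `entry_for_lba` is hypothetical; the constants come from the example above.

```python
SECTOR_BYTES = 512
SECTORS_PER_ENTRY = 8   # one map entry per aligned 4 KB region


def entry_for_lba(lba):
    """Return (map entry index, byte offset of the sector within the 4 KB region)."""
    entry_index, sector_in_entry = divmod(lba, SECTORS_PER_ENTRY)
    return entry_index, sector_in_entry * SECTOR_BYTES
```

For instance, LBA 19 falls in map entry 2 at byte offset 1536 within that entry's region.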

According to various embodiments, the information of the entries of the map includes one or more of: a location in the NVM; an address of a read unit in the NVM; a number of read units to read to obtain data of associated LBAs stored in the NVM; a size of the data of the associated LBAs stored in the NVM, the size having a granularity that is optionally and/or selectively larger than one byte; an indication that the data of the associated LBAs is not present in the NVM, such as due to the data of the associated LBAs being trimmed; a property of the data of the associated LBAs, including any non-standard modifiers applied to the data of the associated LBAs; and any other metadata, property, or nature of the data of the associated LBAs.

In some embodiments, addresses in the NVM are grouped into regions to reduce a number of bits required to represent one of the addresses. For example, if LBAs of the I/O device are divided into 64 regions, and the NVM is divided into 64 regions, one for each of the LBA regions, then a map entry associated with a particular LBA requires six fewer address bits since one of the regions in the NVM is able to be determined by the region of the particular LBA. According to various embodiments, an association between regions of the LBAs and regions of the NVM is by one or more of: equality; a direct association, such as a 1-to-1 numeric function; a table look-up; a dynamic mapping; and any other method for associating two sets of numbers.
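The six-bit saving follows from 64 = 2^6. A minimal sketch, assuming an association by equality, equally sized LBA regions, and a region number held in the high-order bits of the NVM address (`pack`, `unpack`, and their parameters are hypothetical):

```python
REGIONS = 64
REGION_BITS = (REGIONS - 1).bit_length()   # 6 bits identify one of 64 regions


def pack(nvm_addr, addr_bits):
    """Drop the region bits; only the within-region address is stored in the entry."""
    return nvm_addr & ((1 << (addr_bits - REGION_BITS)) - 1)


def unpack(stored, lba, lbas_per_region, addr_bits):
    """Recover the full NVM address from the stored bits and the LBA's region."""
    region = lba // lbas_per_region        # association by equality (assumed)
    return (region << (addr_bits - REGION_BITS)) | stored
```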

In various embodiments, the location in the NVM includes an address of one of a plurality of read units, and a length and/or a span in read units. The length is a size of a particular one of a plurality of data items stored in the NVM, the particular data item associated with the entry of the map including the length. According to various embodiments, the length has a granularity of one or more of: one byte; more than one byte; one read unit; a specified fraction of a read unit; a granularity according to a maximum allowed compression rate of one of the data items; and any other granularity used to track storage usage. The span is a number of read units, such as an integer number of read units, storing a respective portion of the particular data item. In further embodiments and/or usage scenarios, a first read unit in the span of read units and/or a last read unit in the span of read units optionally and/or selectively store some or all of multiple ones of the data items. In some embodiments and/or usage scenarios, the length and/or the span are stored encoded, such as by storing the length (sometimes termed size in a context with length and/or span encoded) as an offset from the span. In some embodiments and/or usage scenarios, unused encodings of the length and/or the span encode additional information, such as an indication of a non-standard modifier, or such as an indication as to whether an associated data item is present in the NVM.

Encoding the location in the NVM as an address and a length enables data stored in the NVM to vary in size. For example, a first 4 KB region is compressed to 400 B in size, is stored entirely in a single read unit, and has a length of one read unit, whereas a second 4 KB region is incompressible, spans more than one read unit, and has a length more than one read unit. In further embodiments, having a length and/or span in read units of storage associated with a region of the LBAs enables reading solely a required portion of the NVM to retrieve data of the region of the LBAs.
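How many read units a variable-size data item touches can be sketched as a ceiling division. The read unit size of 2048 bytes and the notion of a byte offset within the first read unit are assumptions for illustration only; the text does not fix either.

```python
READ_UNIT_BYTES = 2048  # assumed read unit size


def span_in_read_units(offset_in_first_unit, length_bytes):
    """Number of read units that must be read to retrieve the whole data item."""
    end = offset_in_first_unit + length_bytes
    return -(-end // READ_UNIT_BYTES)   # ceiling division
```

Under these assumptions a 400 B compressed item starting at the beginning of a read unit has a span of one, an incompressible 4 KB item has a span of two, and a 400 B item that starts near the end of a read unit also has a span of two.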

In some embodiments, each of the entries of the map includes information, sometimes termed meta-data, specifying properties of a region of the LBAs associated with the entry. In further embodiments, at least some of the meta-data is of a granularity finer than that of the region, such as by having separate meta-data specifications for each of a plurality of LBAs of the region. According to various embodiments, the meta-data includes one or more non-standard modifiers applicable to and/or to be used to modify and/or control writing of, access to, reading of, and/or recycling of data in the NVM associated with the region.

As one example of storing metadata in an entry of a map in response to a non-standard modifier of a command, an extended write command includes an LBA and a non-standard modifier specifying that data of the write command is transient. Data of the write command is stored in the NVM, and a particular entry of the map associated with the LBA is updated to include a location in the NVM of the data of the write command and an indication of the transient specification of the data associated with the LBA. A subsequent operation, such as a subsequent command or an internal operation such as recycling, accessing the particular entry of the map, is enabled to determine the indication of the transient specification of the data associated with the LBA, and to execute differently if the indication of the transient specification of the data associated with the LBA is present. For example, recycling of an LBA having the indication of the transient specification of the data associated with the LBA is, in some embodiments, enabled to trim the data associated with the LBA rather than recycling the data associated with the LBA.

In some embodiments, the I/O device includes an external memory, such as a DRAM, and the external memory is directly coupled to an element of the I/O device, such as via a DDR2 or DDR3 interface. According to various embodiments, the external memory is used one or more of: to store some or all of a map of the I/O device; to store one or more of the levels of a multi-level map of the I/O device; to buffer write data sent to the I/O device; to store internal state of the I/O device; and any other memory storage of the I/O device. For example, the external memory is used to provide access to the map, but, if the external memory is volatile, updates are selectively stored to the map in NVM. In various embodiments and/or usage scenarios, the updates are optionally, conditionally, and/or selectively stored immediately and/or delayed. In further embodiments and/or usage scenarios, all of the updates are stored. In other embodiments and/or usage scenarios, some of the updates are not stored (e.g. due to an older update being superseded by a younger update before storing the older update, or recovery techniques that enable omitting storing of one or more of the updates). According to various embodiments, the external memory one or more of: is an SRAM; is a DRAM; is an MRAM or other NVM; has a DDR interface; has a DDR2 or DDR3 interface; has any other memory interface; and is any other volatile or non-volatile external memory device.

In other embodiments, such as some embodiments having a multi-level map, a lower level of the map is stored in an NVM of the I/O device along with data from the computing host, such as data associated with LBAs of the I/O device, and the I/O device optionally and/or selectively does not utilize a directly-coupled DRAM. Access to an entry of the lower level of the map is performed, at least some of the time, using the NVM.

In some embodiments, a shadow copy of the map is stored in a memory of the computing host. In various embodiments, the I/O device stores information, such as the shadow copy of the map or such as internal state, in the memory of the computing host. According to various embodiments, the memory of the computing host is one or more of: a main memory of the computing host, such as a DRAM memory coupled to a processor; a system-accessible memory of the computing host; an I/O space memory of the computing host; a PCIe-addressable memory of the computing host; a volatile memory, such as a DRAM memory or an SRAM memory; an NVM, such as a flash memory or an MRAM memory; any memory that is accessible by the I/O device and is not directly coupled to the I/O device; and any memory that is accessible by both the I/O device and the computing host.

According to various embodiments, the shadow copy of the map includes one or more of: at least some of the entries of the map; all of the entries of the map; entries that include a subset of corresponding entries of the map; entries that include information according to corresponding entries of the map; entries that include a valid indication and/or other information, the valid indication and/or other information not present in entries of the map; only entries corresponding to entries of the second level of a two-level map; only entries corresponding to entries of the lowest level of a multi-level map; a page structure corresponding to a page structure of the map, such as a page structure corresponding to second-level pages of a two-level map; and any structure that is logically consistent with the map.

In further embodiments, the shadow copy of the map has one or more of: entries with a same format as corresponding entries of the map; entries with a similar format as corresponding entries of the map; and entries with a different format than corresponding entries of the map. In a first example, second-level pages of a two-level map are stored in a compressed format in an NVM, and a shadow copy of the second-level of the map is stored in an uncompressed format. In a second example, each of a plurality of portions of a shadow copy of a map, the portions including one or more of entries of the shadow copy of the map, has a valid indication not present in the map. The valid indication enables the portions to be independently initialized and/or updated. In a third example, each of the entries of the shadow copy of the map has information not present in the map indicating in which of one or more storage tiers data of LBAs associated with the entry are present. In a fourth example, each of the entries of the map has information not present in the shadow copy of the map indicating an archive state of data of LBAs associated with the entry. In a fifth example, the shadow copy of the map and/or the map are enabled to indicate a storage tier of data of LBAs associated with entries. In a sixth example, the shadow copy of the map and/or the map include one or more bits per entry that are readable and/or writable by the host. In a seventh example, each of the entries of the map includes a respective length and a respective span, and each of the corresponding entries of the shadow copy of the map includes the respective span and does not include the respective length.

In some embodiments, at a reset event, such as power-on or reset of the I/O device, an initial shadow copy of the map is stored in the memory of the computing host. According to various embodiments, the initial shadow copy of the map is one or more of: all invalid; a copy of the map; a copy of one or more levels of a multi-level map; a copy of at least a portion of the map, such as a portion identified as being used initially; and in any state that is consistent with the map. In further embodiments, the shadow copy of the map is updated dynamically as LBAs are referenced. In a first example, an entry in the shadow copy of the map corresponding to an LBA is updated from an initial state when the LBA is first accessed. In a second example, a portion of the shadow copy of the map corresponding to an LBA is updated from an initial state when the LBA is first accessed. Continuing the example, the portion includes a plurality of entries of the shadow copy of the map, such as the entries corresponding to a second-level map page that contains an entry associated with the LBA.

In some embodiments, each of one or more commands received by the I/O device enables the I/O device to update the map as at least a portion of execution of the command. The one or more commands are herein termed map-updating commands. According to various embodiments, the map-updating commands include one or more of: a write command; a trim command; a command to invalidate at least a portion of the map; and any other command enabled to modify the map.

In some embodiments, a map-updating command, such as a write command, includes an LBA, and invalidates an entry in the shadow copy of the map corresponding to the LBA. According to various embodiments, one or more of: the invalidation is performed by the computing host when issuing the map-updating command; the invalidation is performed by the I/O device when receiving and/or executing the map-updating command; the invalidation includes turning off a valid indication in the entry in the shadow copy of the map corresponding to the LBA; and the invalidation includes turning off a valid indication in a portion of the shadow copy of the map, the portion including a plurality of entries of the shadow copy of the map including the entry corresponding to the LBA. Invalidating the entry in the shadow copy of the map corresponding to the LBA of the map-updating command in response to the map-updating command enables subsequent accesses to the entry in the shadow copy of the map to determine that information of the entry in the shadow copy of the map is invalid.
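As a minimal sketch of the host-side variant, the following Python code turns off the valid indication of a shadow entry when a map-updating command is issued for its LBA. `ShadowEntry`, `issue_map_updating_command`, and `is_usable` are hypothetical names, and the shadow copy is assumed to be a dictionary keyed by LBA.

```python
from dataclasses import dataclass
from typing import Dict, Optional


@dataclass
class ShadowEntry:
    location: Optional[int] = None  # location in the NVM, if known
    valid: bool = False             # valid indication of this entry


def issue_map_updating_command(shadow: Dict[int, ShadowEntry], lba: int) -> None:
    """Invalidate the shadow entry for lba when the host issues, e.g., a write."""
    entry = shadow.get(lba)
    if entry is not None:
        entry.valid = False


def is_usable(shadow: Dict[int, ShadowEntry], lba: int) -> bool:
    """A later access checks the valid indication before trusting the entry."""
    entry = shadow.get(lba)
    return entry is not None and entry.valid
```

A per-portion variant would keep one valid indication for a group of entries and clear it in the same way.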

In some embodiments and/or usage scenarios, a write command received by the I/O device enables data of the write command to be written to an NVM of the I/O device. The I/O device determines a location in the NVM where the data of the write command is written and updates an entry in the map associated with an LBA of the write command to include the location in the NVM. In further embodiments, the shadow copy of the map in the memory of the computing host is also updated so that an entry in the shadow copy of the map associated with the LBA of the write command includes the location in the NVM.

In some embodiments and/or usage scenarios, in response to receiving a trim command, the I/O device updates an entry in the map associated with an LBA of the trim command to include an indication that data associated with the LBA is not present in the NVM. In further embodiments, the shadow copy of the map in the memory of the computing host is also updated so that an entry in the shadow copy of the map associated with the LBA of the trim command includes the indication that data associated with the LBA is not present in the NVM.

According to various embodiments, the updating of the shadow copy of the map is performed by one or more of: the computing host, in response to issuing certain types of commands, such as map-updating commands; the computing host, in response to receiving updated information from the I/O device; the computing host, in response to polling the I/O device for recent updates and receiving a response with updated information; the I/O device by accessing the shadow copy of the map in the memory of the computing host, such as by accessing the shadow copy of the map in a PCIe address space; and another agent of a system including the computing host and the I/O device. The updated information includes information according to at least some contents of one or more entries of the map and/or an indication of the one or more entries, such as respective LBAs associated with the one or more entries. In various embodiments, the updated information is communicated in any format or by any techniques that communicate information between one or more I/O devices and one or more computing hosts. In a first example, using a SATA host protocol, the updated information is communicated in a log page readable by the computing host. In a second example, using a PCIe-based host protocol, such as NVM Express, the updated information is communicated at least in part by the I/O device writing a region in the memory of the computing host and informing the computing host with an interrupt.

In some embodiments, the entry in the map associated with the LBA of the map-updating command further includes information specifying a length and/or a span of the data associated with the LBA and stored in the NVM, as the length and/or the span of the data associated with the LBA and stored in the NVM varies depending on compressibility of the data. In further embodiments, the entry in the shadow copy of the map associated with the LBA of the map-updating command optionally and/or selectively further includes the length and/or the span of the data associated with the LBA and stored in the NVM. According to various embodiments, the length and/or the span of the data associated with the LBA and stored in the NVM are optionally and/or selectively additionally enabled to encode one or more of: an indication that some and/or all of the data associated with the LBA is trimmed; an indication that some and/or all of the data associated with the LBA is uncorrectable; and any other property of some and/or all of the data associated with the LBA.

In some embodiments and/or usage scenarios, storing the length of the data associated with the LBA and stored in the NVM in the entry in the shadow copy of the map associated with the LBA of the map-updating command enables usage of the shadow copy of the map to provide the length of the data associated with the LBA and stored in the NVM in a subsequent map-updating command, such as when over-writing data of the LBA of the map-updating command. The length of the data associated with the LBA and stored in the NVM is used to adjust used space statistics in a region of the NVM containing the data of the LBA of the map-updating command when over-writing the data of the LBA of the map-updating command by the subsequent map-updating command. For example, a used space statistic for the region of the NVM containing the data of the LBA of the map-updating command is decremented by the length of the data associated with the LBA and stored in the NVM when over-writing the data of the LBA of the map-updating command by the subsequent map-updating command.
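The used space adjustment can be sketched in Python as follows. This is an illustration under stated assumptions: the shadow entry is modeled as a `(location, length)` pair, the command carries the old location alongside the old length so that the region can be identified, and `REGION_SIZE`, `region_of`, `build_overwrite_command`, and `device_overwrite` are hypothetical names.

```python
from typing import Dict, Tuple

REGION_SIZE = 1024  # hypothetical size of an NVM region, in location units


def region_of(location: int) -> int:
    return location // REGION_SIZE


def build_overwrite_command(
    shadow: Dict[int, Tuple[int, int]], lba: int
) -> Dict[str, int]:
    """Host side: attach the old location and length from the shadow copy."""
    command = {"lba": lba}
    if lba in shadow:
        old_location, old_length = shadow[lba]
        command["old_location"] = old_location
        command["old_length"] = old_length
    return command


def device_overwrite(
    used_space: Dict[int, int],
    command: Dict[str, int],
    new_location: int,
    new_length: int,
) -> None:
    """Device side: adjust per-region used space statistics on over-write."""
    if "old_length" in command:
        # Decrement the region that held the over-written data.
        used_space[region_of(command["old_location"])] -= command["old_length"]
    new_region = region_of(new_location)
    used_space[new_region] = used_space.get(new_region, 0) + new_length
```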

In some embodiments, a read request, such as a read command requesting data at an LBA, is enabled to access an entry in the shadow copy of the map corresponding to the LBA. According to various embodiments, one or more of: the access is performed by the computing host when issuing the read command; the access is performed by the I/O device when receiving and/or executing the read command; and the access includes reading at least a portion of the entry in the shadow copy of the map corresponding to the LBA. Accessing the entry in the shadow copy of the map corresponding to the LBA of the read command provides information according to a corresponding entry of the map without a need to access the map. In some embodiments and/or usage scenarios where the map, such as a lower level of a multi-level map, is stored in the NVM of the I/O device, access to the shadow copy of the map has a shorter latency and advantageously improves latency to access the data at the LBA.

In some embodiments, in response to a read request to read data corresponding to a particular LBA from the I/O device, the computing host reads at least a portion of an entry of the shadow copy of the map, the entry of the shadow copy of the map corresponding to the particular LBA. The computing host sends a pre-mapped read command to the I/O device, the pre-mapped read command including information of the entry of the shadow copy of the map, such as a location in the NVM of the I/O device. In various embodiments, the pre-mapped read command does not provide the particular LBA. In further embodiments and/or usage scenarios, the location in the NVM includes a respective span in the NVM.

In some embodiments, in response to a read request to read data corresponding to a particular LBA from the I/O device, the computing host is enabled to read at least a portion of an entry of the shadow copy of the map, the entry of the shadow copy of the map corresponding to the particular LBA. If the entry of the shadow copy of the map is not valid, such as indicated by a valid indication, the computing host sends a read command to the I/O device, the read command including the particular LBA. If the entry of the shadow copy of the map is valid, such as indicated by a valid indication, the computing host sends a pre-mapped read command to the I/O device, the pre-mapped read command including information of the entry of the shadow copy of the map, such as a location in the NVM of the I/O device. In various embodiments, the pre-mapped read command does not provide the particular LBA. In further embodiments and/or usage scenarios, the location in the NVM includes a respective span in the NVM.
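The host-side choice between a read command and a pre-mapped read command can be sketched as below. The command classes, the `ShadowEntry` fields, and `build_read` are hypothetical illustrations and not a defined command format.

```python
from dataclasses import dataclass
from typing import Dict, Union


@dataclass(frozen=True)
class ReadCommand:
    lba: int  # conventional read: the I/O device consults its map


@dataclass(frozen=True)
class PreMappedReadCommand:
    location: int  # location in the NVM taken from the shadow entry
    span: int      # respective span in the NVM; no LBA is provided


@dataclass
class ShadowEntry:
    valid: bool
    location: int = 0
    span: int = 0


def build_read(
    shadow: Dict[int, ShadowEntry], lba: int
) -> Union[ReadCommand, PreMappedReadCommand]:
    entry = shadow.get(lba)
    if entry is None or not entry.valid:
        # Shadow entry not valid: send the LBA to the I/O device.
        return ReadCommand(lba=lba)
    # Shadow entry valid: send the location so the device can skip its map.
    return PreMappedReadCommand(location=entry.location, span=entry.span)
```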

In some embodiments, in response to the I/O device receiving a pre-mapped read command including a location in the NVM of the I/O device, the I/O device is enabled to access the NVM at the location to obtain read data. The I/O device omits accessing the map in response to receiving the pre-mapped read command. The read data and/or a processed version thereof is returned in response to the pre-mapped read command.

In some embodiments, in response to the I/O device receiving a read command to read data from the I/O device corresponding to a particular LBA, the I/O device is enabled to read at least a portion of an entry of the shadow copy of the map, the entry of the shadow copy of the map corresponding to the particular LBA. The I/O device obtains a location in the NVM corresponding to the data of the particular LBA from the entry of the shadow copy of the map without accessing the map. The I/O device accesses the NVM at the location in the NVM to obtain read data. The read data and/or a processed version thereof is returned in response to the read command.

In some embodiments, in response to receiving a read command to read data from the I/O device corresponding to a particular LBA, the I/O device is enabled to read at least a portion of an entry of the shadow copy of the map, the entry of the shadow copy of the map corresponding to the particular LBA. If the entry of the shadow copy of the map is not valid, such as indicated by a valid indication, the I/O device accesses the map to determine a location in the NVM corresponding to the data of the particular LBA. If the entry of the shadow copy of the map is valid, such as indicated by a valid indication, the I/O device obtains the location in the NVM corresponding to the data of the particular LBA from the entry of the shadow copy of the map without accessing the map. The I/O device accesses the NVM at the location in the NVM to obtain read data. The read data and/or a processed version thereof is returned in response to the read command.
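A device-side read that prefers the shadow copy and falls back to the map can be sketched as follows. `IODevice`, its fields, and the `(valid, location)` tuple layout are hypothetical; dictionaries stand in for the NVM, the map, and the shadow copy in the memory of the computing host, and `map_accesses` exists only to make the fallback observable.

```python
from typing import Dict, Tuple


class IODevice:
    def __init__(
        self,
        nvm: Dict[int, bytes],
        device_map: Dict[int, int],
        shadow: Dict[int, Tuple[bool, int]],
    ):
        self.nvm = nvm                # location -> stored data
        self.device_map = device_map  # LBA -> location (the map)
        self.shadow = shadow          # LBA -> (valid indication, location)
        self.map_accesses = 0

    def read(self, lba: int) -> bytes:
        valid, location = self.shadow.get(lba, (False, 0))
        if not valid:
            # Shadow entry not valid: access the map to determine the location.
            self.map_accesses += 1
            location = self.device_map[lba]
        # Access the NVM at the location to obtain the read data.
        return self.nvm[location]
```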

In further embodiments and/or usage scenarios, including variants of the above embodiments, the location in the NVM of the I/O device optionally and/or selectively is enabled to encode an indication of whether data corresponding to the particular LBA is present in the NVM of the I/O device. For example, data that has been trimmed, or data that has never been written since the I/O device was formatted, is not present in the NVM of the I/O device.

In variants where the computing host determines the location in the NVM as a part of attempting to read data from the I/O device, such as by accessing the shadow copy of the map, the computing host is enabled to determine, without sending a command to the I/O device, that data corresponding to the particular LBA is not present in the NVM of the I/O device. According to various embodiments, one or more of: the computing host returns a response to the read request without sending a command to the I/O device; the computing host sends a command to the I/O device including the particular LBA; and the computing host sends a command to the I/O device including information obtained from the entry of the shadow copy of the map corresponding to the particular LBA.
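The first of these options, in which the computing host answers the read request itself, can be sketched as below. The sentinel `NOT_PRESENT`, the `SECTOR_SIZE` value, and the choice to return zero-filled data for absent LBAs are assumptions made for illustration and are not specified above.

```python
from typing import Callable, Dict

NOT_PRESENT = -1   # hypothetical location encoding: data not present in the NVM
SECTOR_SIZE = 512  # hypothetical size of the data returned for one LBA


def host_read(
    shadow: Dict[int, int],
    lba: int,
    send_to_device: Callable[[int], bytes],
) -> bytes:
    location = shadow.get(lba)
    if location == NOT_PRESENT:
        # Trimmed or never-written data: respond without sending a command.
        return bytes(SECTOR_SIZE)
    # Otherwise send a command including the particular LBA.
    return send_to_device(lba)
```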

In variants where the I/O device determines the location in the NVM as a part of executing the read command, such as by accessing the shadow copy of the map, the I/O device is enabled to return a response to the read command without accessing the NVM to obtain the read data.

In some embodiments, the I/O device is enabled to use the shadow copy of the map when recycling a region of the NVM. Recycling is enabled to determine, via entries of the shadow copy of the map, whether data read from the region of the NVM for recycling is still valid, such as by being up-to-date in one of the entries of the shadow copy of the map. In various embodiments, data that is up-to-date is optionally and/or selectively recycled by writing the data to a new location in the NVM and updating an entry of the map to specify the new location. According to various embodiments, a corresponding one of the entries of the shadow copy of the map is either invalidated or is updated with the new location. In further embodiments, data read from the NVM at a particular location in the NVM is up-to-date if a header associated with the data and read from the NVM includes at least a portion of an LBA, and an entry in the shadow copy of the map associated with the at least a portion of an LBA includes the particular location in the NVM.
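The up-to-date test used during recycling can be sketched in Python as follows. The function `recycle_region`, the `(header LBA, payload)` layout of each stored unit, and the iterator of free locations are hypothetical; this sketch takes the variant in which the shadow entry is updated with the new location rather than invalidated.

```python
from typing import Dict, Iterable, Iterator, List, Tuple


def recycle_region(
    region: Iterable[int],
    nvm: Dict[int, Tuple[int, bytes]],
    device_map: Dict[int, int],
    shadow: Dict[int, int],
    free_locations: Iterator[int],
) -> List[int]:
    """Relocate up-to-date data out of a region; return the LBAs that moved."""
    moved = []
    for location in region:
        header_lba, payload = nvm.pop(location)
        # Up-to-date only if the shadow entry for the header's LBA
        # still specifies this particular location.
        if shadow.get(header_lba) != location:
            continue  # stale copy: discarded with the region
        new_location = next(free_locations)
        nvm[new_location] = (header_lba, payload)
        device_map[header_lba] = new_location  # update the map
        shadow[header_lba] = new_location      # update the shadow entry
        moved.append(header_lba)
    return moved
```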