Gaming systems and methods using optical narrowcasting

Bortz, et al. November 12, 2019

U.S. patent number 10,473,439 [Application Number 16/163,393] was granted by the patent office on 2019-11-12 for gaming systems and methods using optical narrowcasting. This patent grant is currently assigned to ARON SUREFIRE, LLC. The grantee listed for this patent is ARON SUREFIRE, LLC. Invention is credited to John C. Bortz, Narkis E. Shatz.


| United States Patent | 10,473,439 |
|---|---|
| Bortz, et al. | November 12, 2019 |


Abstract

Gaming systems and methods using optical narrowcasting are provided. An optical shooting device may comprise an optical transmitter assembly and a first RF transceiver. The optical shooting device may determine shooting information and transmit an optical beam carrying the shooting information using the optical transmitter assembly. An optical target device may comprise an optical receiver assembly and a second RF transceiver. The optical target device may receive the optical beam using the optical receiver assembly and extract the shooting information from the optical beam. A game control device may comprise a third RF transceiver. The game control device may communicate with the optical shooting device or the optical target device using the third RF transceiver to transmit or receive information relating to a shooting game, and may activate a game event based on the information relating to the shooting game.

| Inventors: | Bortz; John C. (Spokane, WA), Shatz; Narkis E. (La Jolla, CA) |
|---|---|
| Applicant: | |
| Assignee: | ARON SUREFIRE, LLC (Fountain Valley, CA) |
| Family ID: | 67140637 |
| Appl. No.: | 16/163,393 |
| Filed: | October 17, 2018 |

Prior Publication Data

| Document Identifier | Publication Date |
|---|---|
| US 20190212106 A1 | Jul 11, 2019 |

Related U.S. Patent Documents

| Application Number | Filing Date | Patent Number | Issue Date |
|---|---|---|---|
| 62614123 | Jan 5, 2018 | | |

| Current U.S. Class: | 1/1 |
|---|---|
| Current CPC Class: | A63F 13/31 (20140902); A63F 13/219 (20140901); F41A 33/02 (20130101); F41J 5/02 (20130101); A63F 13/34 (20140902); A63F 13/213 (20140902) |
| Current International Class: | F41J 5/02 (20060101); F41A 33/02 (20060101) |


Primary Examiner: Clarke, Jr.; Robert T

Attorney, Agent or Firm: Sheppard, Mullin, Richter & Hampton LLP

Parent Case Text

CROSS-REFERENCE TO RELATED APPLICATIONS

This application claims the benefit of U.S. Provisional Patent Application No. 62/614,123, filed on Jan. 5, 2018, and entitled "GAMING SYSTEMS AND METHODS USING OPTICAL NARROWCASTING," which is incorporated herein by reference in its entirety.

Claims

What is claimed is:

1. A system, comprising: an optical shooting device, comprising: a first optical transmitter assembly configured to transmit an optical beam carrying information; a first RF transceiver configured to communicate with a third RF transceiver of a game control device; a first processor; and a first non-transitory computer-readable medium having a first set of instructions stored thereon that, when executed by the first processor, causes the optical shooting device to: determine shooting information; transmit a first optical beam carrying the shooting information using the first optical transmitter assembly; and communicate with the game control device using the first RF transceiver; and an optical target device, comprising: a first optical receiver assembly configured to receive the first optical beam, the first optical receiver assembly including an optical receiver configured to differentiate between beams incident at different angles of incidence, the optical receiver including multiple detectors in a focal plane such that the beams incident at different angles of incidence are concentrated at different locations in the focal plane; a second RF transceiver configured to communicate with the third RF transceiver of the game control device; a second processor; and a second non-transitory computer-readable medium having a second set of instructions stored thereon that, when executed by the second processor, causes the optical target device to: detect the first optical beam within a field of view of the first optical receiver assembly; extract the shooting information from the first optical beam; and communicate with the game control device using the second RF transceiver.

2. The system of claim 1, wherein the optical shooting device further comprises a second optical receiver assembly enabling a two-way optical communication between the optical shooting device and another optical shooting device or the optical target device.

3. The system of claim 2, wherein the optical target device further comprises a second optical transmitter assembly enabling a two-way optical communication between the optical target device and another optical target device or the optical shooting device.

4. The system of claim 1, wherein the shooting information includes an identifier for a user of the optical shooting device.

5. The system of claim 4, wherein the shooting information further includes information relating to a type of a virtual weapon simulated by the optical shooting device or information relating to a type of virtual ammunition used by the optical shooting device.

6. The system of claim 1, wherein the optical shooting device further comprises a display for presenting an augmented reality view, the augmented reality view determined based on locations of objects around the optical shooting device.

7. The system of claim 6, wherein: the optical target device further comprises a second optical transmitter assembly; the second set of instructions, when executed by the second processor, further causes the optical target device to: generate user identity information of a user using the optical target device; and transmit a second optical beam carrying the user identity information using the second optical transmitter assembly.

8. The system of claim 7, wherein: the optical shooting device further comprises a second optical receiver assembly; and the first set of instructions, when executed by the first processor, further causes the optical shooting device to: detect the second optical beam within a field of view of the second optical receiver assembly; extract the user identity information from the second optical beam; generate one or more augmented reality elements based on the user identity information; and present the one or more augmented reality elements on the display.

9. The system of claim 7, wherein: the first optical transmitter assembly of the optical shooting device transmits the first optical beam over a first range of angles; and the second optical transmitter assembly of the optical target device transmits the second optical beam over a second range of angles wider than the first range of angles.

10. The system of claim 1, wherein the first set of instructions, when executed by the first processor, further causes the optical shooting device to: generate message information, the message information containing one or more messages; and transmit a second optical beam carrying the message information using the first optical transmitter assembly.

11. The system of claim 1, wherein the first optical receiver assembly has a 180-degree axisymmetric field of view.

12. The system of claim 1, wherein the optical target device includes multiple optical receivers configured for placement on different parts of a user, different placements of the multiple optical receivers enabling the optical target device to determine at which of the different parts of the user the first optical beam was detected by the optical target device.

13. The system of claim 1, further comprising the game control device, the game control device comprising: a third RF transceiver configured to communicate with the first RF transceiver and the second RF transceiver; a third processor; and a third non-transitory computer-readable medium having a third set of instructions stored thereon that, when executed by the third processor, causes the game control device to: communicate with the optical shooting device or the optical target device using the third RF transceiver to transmit or receive information relating to a shooting game; and activate a game event based on the information relating to the shooting game.

14. The system of claim 13, wherein the game event includes a movement restriction for a user of the optical target device and a violation of the movement restriction is determined based on a switch or a proximity sensor of the optical target device.

15. The system of claim 13, wherein the game control device communicates with another game control device to exchange information on parameters of the shooting game.

16. A system, comprising: an optical shooting device, comprising: a first optical transmitter assembly configured to transmit an optical beam carrying information; a first optical receiver assembly; a first RF transceiver configured to communicate with a third RF transceiver of a game control device; a display for presenting an augmented reality view, the augmented reality view determined based on locations of objects around the optical shooting device; a first processor; and a first non-transitory computer-readable medium having a first set of instructions stored thereon that, when executed by the first processor, causes the optical shooting device to: determine shooting information; transmit a first optical beam carrying the shooting information using the first optical transmitter assembly; and communicate with the game control device using the first RF transceiver; and an optical target device, comprising: a second optical receiver assembly configured to receive the first optical beam; a second optical transmitter assembly; a second RF transceiver configured to communicate with the third RF transceiver of the game control device; a second processor; and a second non-transitory computer-readable medium having a second set of instructions stored thereon that, when executed by the second processor, causes the optical target device to: detect the first optical beam within a field of view of the second optical receiver assembly; extract the shooting information from the first optical beam; communicate with the game control device using the second RF transceiver; generate user identity information of a user using the optical target device; and transmit a second optical beam carrying the user identity information using the second optical transmitter assembly; wherein the first set of instructions, when executed by the first processor, further causes the optical shooting device to: detect the second optical beam within a field of view of the first optical receiver assembly; extract the user identity information from the second optical beam; generate one or more augmented reality elements based on the user identity information; and present the one or more augmented reality elements on the display, wherein presenting the one or more augmented reality elements on the display comprises placing the one or more augmented reality elements at one or more locations of the display based on an incident angle of the second optical beam on the first optical receiver assembly.

17. An optical shooting device, comprising: a trigger; an optical transmitter assembly configured to transmit an optical beam carrying shooting information; an optical receiver assembly; an RF transceiver configured to communicate with a game control device; a display for presenting an augmented reality view, the augmented reality view determined based on locations of objects around the optical shooting device; a processor; and a non-transitory computer-readable medium having a set of instructions stored thereon that, when executed by the processor, causes the optical shooting device to: determine the shooting information; in response to actuation of the trigger, transmit the optical beam carrying the shooting information using the optical transmitter assembly; communicate with the game control device using the RF transceiver to transmit or receive information relating to a shooting game; detect an optical beam within a field of view of the optical receiver assembly; extract user identity information from the optical beam; generate one or more augmented reality elements based on the user identity information; and present the one or more augmented reality elements on the display, wherein presenting the one or more augmented reality elements on the display comprises placing the one or more augmented reality elements at one or more locations of the display based on an incident angle of the optical beam on the optical receiver assembly.

Description

TECHNICAL FIELD

The present disclosure relates generally to wireless optical communications. Some embodiments relate to gaming systems and methods for using optical narrowcasting.

DESCRIPTION OF THE RELATED ART

Generally, mobile communications systems, both long- and short-range, are based on the transmission and/or receipt of radio waves (e.g., cellular networks, WiFi networks, Bluetooth® communications, Near-Field Communications (NFC), etc.). Services such as location-based services often also rely on radio-wave-based communications (e.g., Global Positioning System (GPS) positioning, WiFi triangulation, etc.).

BRIEF SUMMARY OF THE DISCLOSURE

In one aspect of the disclosure, a system may comprise an optical shooting device and an optical target device. The optical shooting device may comprise a first optical transmitter assembly configured to transmit an optical beam carrying information, a first RF transceiver configured to communicate with a third RF transceiver of a game control device, a first processor, and a first non-transitory computer-readable medium. The first non-transitory computer-readable medium may have a first set of instructions stored thereon that, when executed by the first processor, causes the optical shooting device to: determine shooting information; transmit a first optical beam carrying the shooting information using the first optical transmitter assembly; and communicate with the game control device using the first RF transceiver. The optical target device may comprise a first optical receiver assembly configured to receive the first optical beam, a second RF transceiver configured to communicate with the third RF transceiver of the game control device, a second processor, and a second non-transitory computer-readable medium. The second non-transitory computer-readable medium may have a second set of instructions stored thereon that, when executed by the second processor, causes the optical target device to: detect the first optical beam within a field of view of the first optical receiver assembly; extract the shooting information from the first optical beam; and communicate with the game control device using the second RF transceiver.

In some implementations, the optical shooting device may further comprise a second optical receiver assembly. The second optical receiver assembly may enable a two-way optical communication between the optical shooting device and another optical shooting device or the optical target device. The optical target device may further comprise a second optical transmitter assembly. The second optical transmitter assembly may enable a two-way optical communication between the optical target device and another optical target device or the optical shooting device.

In some implementations, the shooting information may include an identifier for a user of the optical shooting device. The shooting information may further include information relating to a type of a virtual weapon simulated by the optical shooting device or information relating to a type of virtual ammunition used by the optical shooting device.
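The disclosure does not specify how the shooting information is encoded before it is modulated onto the optical beam. The following is a minimal Python sketch, assuming a hypothetical 4-byte payload (a 16-bit user identifier followed by one byte each for virtual weapon type and virtual ammunition type); the field widths, byte order, and function names are illustrative assumptions only.

```python
import struct
from dataclasses import dataclass

# Hypothetical wire format (an assumption, not taken from the patent):
# big-endian unsigned 16-bit user ID, unsigned 8-bit weapon type,
# unsigned 8-bit ammunition type. Out-of-range values raise struct.error.
_SHOT_FORMAT = ">HBB"


@dataclass(frozen=True)
class ShootingInfo:
    user_id: int      # identifier for the user of the optical shooting device
    weapon_type: int  # type of virtual weapon being simulated
    ammo_type: int    # type of virtual ammunition in use


def encode_shooting_info(info: ShootingInfo) -> bytes:
    """Pack shooting information into bytes to be modulated onto the beam."""
    return struct.pack(_SHOT_FORMAT, info.user_id, info.weapon_type, info.ammo_type)


def decode_shooting_info(payload: bytes) -> ShootingInfo:
    """Recover shooting information from bytes demodulated by the receiver."""
    user_id, weapon_type, ammo_type = struct.unpack(_SHOT_FORMAT, payload)
    return ShootingInfo(user_id, weapon_type, ammo_type)
```

A fixed-size payload keeps each optical transmission short, which may matter when a beam only briefly crosses a receiver's field of view.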

In some implementations, the optical shooting device may further comprise a display for presenting an augmented reality view. The augmented reality view may be determined based on locations of objects around the optical shooting device.

In some implementations, the optical target device may further comprise a second optical transmitter assembly. The second set of instructions, when executed by the second processor, may further cause the optical target device to: generate user identity information of a user using the optical target device; and transmit a second optical beam carrying the user identity information using the second optical transmitter assembly. The optical shooting device may further comprise a second optical receiver assembly. The first set of instructions, when executed by the first processor, may further cause the optical shooting device to: detect the second optical beam within a field of view of the second optical receiver assembly; extract the user identity information from the second optical beam; generate one or more augmented reality elements based on the user identity information; and present the one or more augmented reality elements on the display. Placement of the one or more augmented reality elements at one or more locations of the display may be determined based on an incident angle of the second optical beam on the second optical receiver assembly. The first optical transmitter assembly of the optical shooting device may transmit the first optical beam over a first range of angles, and the second optical transmitter assembly of the optical target device may transmit the second optical beam over a second range of angles wider than the first range of angles.
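One way to place an augmented reality element from the incident angle is a pinhole-style projection onto the display. The sketch below assumes, hypothetically, that the receiver's optical axis coincides with the center of the displayed view and that the view spans a known horizontal field of view; the function name and parameters are illustrative, not from the disclosure.

```python
import math
from typing import Optional


def ar_label_x(incidence_deg: float, display_width_px: int,
               display_hfov_deg: float) -> Optional[float]:
    """Map a beam's horizontal incidence angle to a display column (pixels).

    Assumes (hypothetically) that the receiver's optical axis coincides with
    the center of the displayed view and that the view follows a pinhole
    projection with the given horizontal field of view. Positive angles are
    to the right of the axis. Returns None if the source is off-screen.
    """
    half_fov = math.radians(display_hfov_deg) / 2.0
    theta = math.radians(incidence_deg)
    if abs(theta) > half_fov:
        return None  # beam source lies outside the displayed view
    # Focal length in pixels for a pinhole camera with this field of view.
    focal_px = (display_width_px / 2.0) / math.tan(half_fov)
    return display_width_px / 2.0 + focal_px * math.tan(theta)
```

The same mapping can be applied to the vertical angle to obtain a row coordinate.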

In some implementations, the first set of instructions, when executed by the first processor, may further cause the optical shooting device to: generate message information, the message information containing one or more messages; and transmit a second optical beam carrying the message information using the first optical transmitter assembly.
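Because the message information may contain one or more messages, it needs some framing so the receiver can separate them and distinguish them from shooting information. The following Python sketch assumes a hypothetical frame: a one-byte type tag followed by each UTF-8 message with a one-byte length prefix. The tag value and layout are assumptions for illustration only.

```python
from typing import List

MSG_FRAME_TYPE = 0x02  # hypothetical type tag distinguishing message frames


def encode_messages(messages: List[str]) -> bytes:
    """Frame one or more text messages for transmission on the optical beam."""
    out = bytearray([MSG_FRAME_TYPE])
    for text in messages:
        data = text.encode("utf-8")
        if len(data) > 255:
            raise ValueError("message too long for 1-byte length prefix")
        out.append(len(data))  # length prefix
        out += data            # message body
    return bytes(out)


def decode_messages(frame: bytes) -> List[str]:
    """Split a received message frame back into its individual messages."""
    if not frame or frame[0] != MSG_FRAME_TYPE:
        raise ValueError("not a message frame")
    messages, i = [], 1
    while i < len(frame):
        n = frame[i]
        start, end = i + 1, i + 1 + n
        if end > len(frame):
            raise ValueError("truncated message")
        messages.append(frame[start:end].decode("utf-8"))
        i = end
    return messages
```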

In some implementations, the first optical receiver assembly may have a 180-degree axisymmetric field of view.
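An axisymmetric field of view depends only on the angle between the receiver's optical axis and the direction to the beam source, so a 180-degree full field of view corresponds to accepting sources up to 90 degrees off axis. A minimal Python sketch of that test follows; representing directions as 3-D vectors is an assumption made for illustration.

```python
import math
from typing import Sequence


def off_axis_angle_deg(axis: Sequence[float], to_source: Sequence[float]) -> float:
    """Angle in degrees between the receiver axis and the direction to the source."""
    dot = sum(a * b for a, b in zip(axis, to_source))
    norm = math.sqrt(sum(a * a for a in axis)) * math.sqrt(sum(b * b for b in to_source))
    if norm == 0:
        raise ValueError("direction vectors must be non-zero")
    cos_theta = max(-1.0, min(1.0, dot / norm))  # clamp rounding error
    return math.degrees(math.acos(cos_theta))


def in_axisymmetric_fov(axis: Sequence[float], to_source: Sequence[float],
                        full_fov_deg: float = 180.0) -> bool:
    """True if the source lies within a rotationally symmetric field of view."""
    return off_axis_angle_deg(axis, to_source) <= full_fov_deg / 2.0
```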

In some implementations, the optical target device may include multiple optical receivers configured for placement on different parts of a user. Different placements of the multiple optical receivers may enable the optical target device to determine at which of the different parts of the user the first optical beam was detected by the optical target device.
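With receivers worn on different body parts, the target device can report which part was hit by noting which receiver detected the beam. The sketch below assumes a hypothetical receiver-to-body-part table and, when several receivers detect the same shot, picks the one reporting the strongest signal; both the table and the tie-breaking rule are assumptions.

```python
from typing import Dict, Optional

# Hypothetical mapping of receiver IDs to the body part each one is worn on.
RECEIVER_PLACEMENT: Dict[int, str] = {
    0: "head",
    1: "chest",
    2: "back",
    3: "left_arm",
    4: "right_arm",
}


def resolve_hit_location(detections: Dict[int, float]) -> Optional[str]:
    """Return the body part hit by one shot.

    detections maps receiver ID -> detected signal level for that shot.
    Unknown receiver IDs are ignored; None means no known receiver fired.
    """
    known = {rid: level for rid, level in detections.items()
             if rid in RECEIVER_PLACEMENT}
    if not known:
        return None
    strongest = max(known, key=known.get)  # receiver with the highest level
    return RECEIVER_PLACEMENT[strongest]
```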

In some implementations, the optical target device may include an optical receiver configured to differentiate between beams incident at different angles of incidence. The optical receiver may include multiple detectors in a focal plane such that the beams incident at different angles of incidence are concentrated at different locations in the focal plane.
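The angle-to-position relationship can be sketched for an ideal focusing optic, where a collimated beam incident at angle θ comes to a focus at x = f·tan θ from the optical axis. The code below assumes a single row of equal-width detectors laid edge to edge and centered on the axis; `detector_index` and its parameters are illustrative assumptions.

```python
import math

def detector_index(theta_deg, focal_length_mm, pitch_mm, n_detectors):
    """Return which detector in a 1-D focal-plane row receives a collimated beam.

    Assumes an ideal lens: a beam incident at angle theta focuses at
    x = f * tan(theta) from the optical axis. Detectors of width `pitch_mm`
    are laid out edge to edge, centered on the axis. Returns None if the
    focused spot falls outside the row.
    """
    x = focal_length_mm * math.tan(math.radians(theta_deg))
    index = math.floor(x / pitch_mm + n_detectors / 2)
    return index if 0 <= index < n_detectors else None
```

With an odd number of detectors, an on-axis beam lands on the central detector, and beams at opposite angles land on detectors on opposite sides of it.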

In some implementations, the system may further comprise the game control device. The game control device may comprise a third RF transceiver configured to communicate with the first RF transceiver and the second RF transceiver, a third processor, and a third non-transitory computer-readable medium. The third non-transitory computer-readable medium may have a third set of instructions stored thereon that, when executed by the third processor, causes the game control device to: communicate with the optical shooting device or the optical target device using the third RF transceiver to transmit or receive information relating to a shooting game; and activate a game event based on the information relating to the shooting game.
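One hypothetical way the game control device could turn received information into game events is sketched below: hit reports arriving over RF reduce a target's health, and a target whose health reaches zero triggers an elimination event. The class, the fixed damage value, and the event tuples are assumptions made for illustration only.

```python
from dataclasses import dataclass, field

@dataclass
class GameController:
    """Hypothetical game-control logic driven by hit reports received over RF."""
    health: dict = field(default_factory=dict)  # player id -> remaining health
    damage_per_hit: int = 25

    def register(self, player_id, health=100):
        """Add a player to the game with a starting health value."""
        self.health[player_id] = health

    def on_hit_report(self, shooter_id, target_id):
        """Apply a reported hit and return the list of game events it triggers."""
        events = []
        if self.health.get(target_id, 0) <= 0:
            return events  # unknown or already-eliminated target: ignore the report
        self.health[target_id] = max(0, self.health[target_id] - self.damage_per_hit)
        events.append(("hit", shooter_id, target_id))
        if self.health[target_id] == 0:
            events.append(("eliminated", shooter_id, target_id))
        return events
```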

In some implementations, the game event may include a movement restriction for a user of the optical target device. A violation of the movement restriction may be determined based on a switch or a proximity sensor of the optical target device.
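A sketch of one possible violation rule, assuming the restriction requires the user to keep a switch (for example, a foot pedal) closed and to remain within a set distance of a reference point as reported by a proximity sensor. The rule, the default distance, and the `movement_violation` name are hypothetical.

```python
def movement_violation(restriction_active, switch_closed, proximity_m, max_distance_m=1.0):
    """Decide whether a user has violated an active movement restriction.

    Hypothetical rule: while the restriction is active, the user must keep a
    switch closed and stay within `max_distance_m` of a reference point.
    `proximity_m` may be None when no proximity reading is available.
    """
    if not restriction_active:
        return False
    if not switch_closed:
        return True
    return proximity_m is not None and proximity_m > max_distance_m
```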

In some implementations, the game control device may communicate with another game control device to exchange information on parameters of the shooting game.

In another aspect of the disclosure, an optical shooting device may comprise a trigger, an optical transmitter assembly configured to transmit an optical beam carrying shooting information, an RF transceiver configured to communicate with a game control device, a processor, and a non-transitory computer-readable medium. The non-transitory computer-readable medium may have a set of instructions stored thereon that, when executed by the processor, causes the optical shooting device to: determine the shooting information; in response to actuation of the trigger, transmit the optical beam carrying the shooting information using the optical transmitter assembly; and communicate with the game control device using the RF transceiver to transmit or receive information relating to a shooting game.

In another aspect of the disclosure, an optical target device may comprise an optical receiver assembly configured to receive an optical beam carrying shooting information, an RF transceiver configured to communicate with a game control device, a processor, and a non-transitory computer-readable medium. The non-transitory computer-readable medium may have a set of instructions stored thereon that, when executed by the processor, causes the optical target device to: detect the optical beam within a field of view of the optical receiver assembly; extract the shooting information from the optical beam; and communicate with the game control device using the RF transceiver to transmit or receive information relating to a shooting game.
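The disclosure does not fix a format for the shooting information. Purely as an illustration, the sketch below packs a hypothetical frame (start marker, shooter ID, weapon type, shot sequence number) followed by a CRC-32 computed with Python's `zlib.crc32`, and shows how the optical target device might validate the frame and extract its fields. All field names, sizes, and the marker value are assumptions.

```python
import struct
import zlib

PREAMBLE = 0xA5  # hypothetical frame-start marker
_FMT = ">BHBH"   # big-endian: preamble, shooter id, weapon type, shot sequence number

def encode_shot(shooter_id, weapon_type, shot_seq):
    """Pack hypothetical shooting information into bytes for optical transmission."""
    body = struct.pack(_FMT, PREAMBLE, shooter_id, weapon_type, shot_seq)
    return body + struct.pack(">I", zlib.crc32(body))

def decode_shot(frame):
    """Validate and unpack a frame; return a dict, or None if it is corrupt."""
    size = struct.calcsize(_FMT)
    if len(frame) != size + 4:
        return None
    body, (crc,) = frame[:size], struct.unpack(">I", frame[size:])
    if zlib.crc32(body) != crc:
        return None
    preamble, shooter_id, weapon_type, shot_seq = struct.unpack(_FMT, body)
    if preamble != PREAMBLE:
        return None
    return {"shooter_id": shooter_id, "weapon_type": weapon_type, "shot_seq": shot_seq}
```

The checksum lets the target device discard frames corrupted by partial occlusion or background light rather than registering a false hit.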

In some implementations, the optical receiver assembly may comprise a plurality of optical receivers configured for placement on different parts of a user. Different placements of the plurality of optical receivers may enable the optical target device to determine at which of the different parts of the user an optical beam was detected by the optical target device.

Other features and aspects of the disclosed method will become apparent from the following detailed description, taken in conjunction with the accompanying drawings, which illustrate, by way of example, the features in accordance with embodiments of the disclosure. The summary is not intended to limit the scope of the claimed disclosure, which is defined solely by the claims attached hereto.

BRIEF DESCRIPTION OF THE DRAWINGS

The present disclosure, in accordance with one or more various embodiments, is described in detail with reference to the following figures. The figures are provided for purposes of illustration only and merely depict typical or example embodiments of the disclosure.

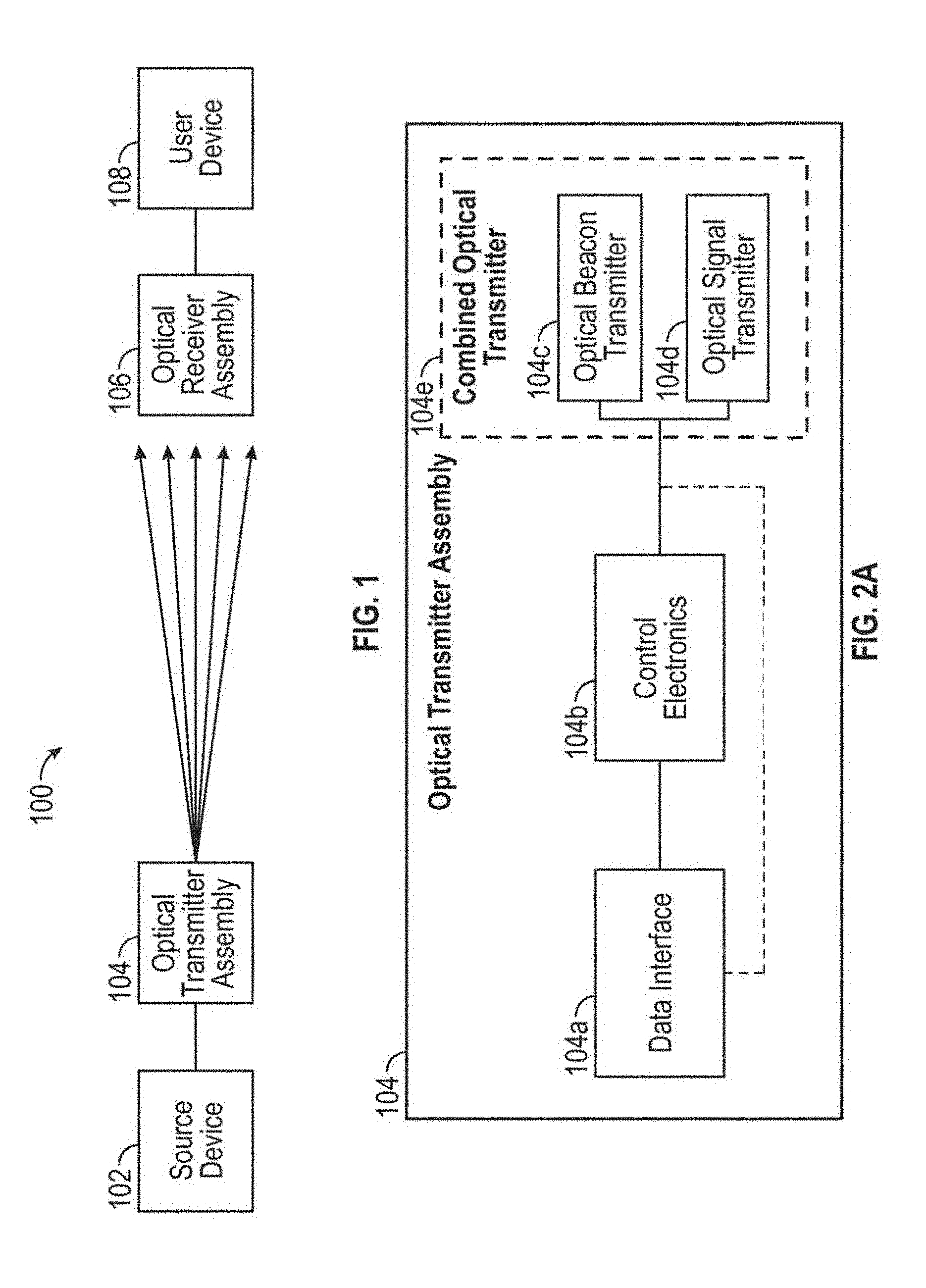

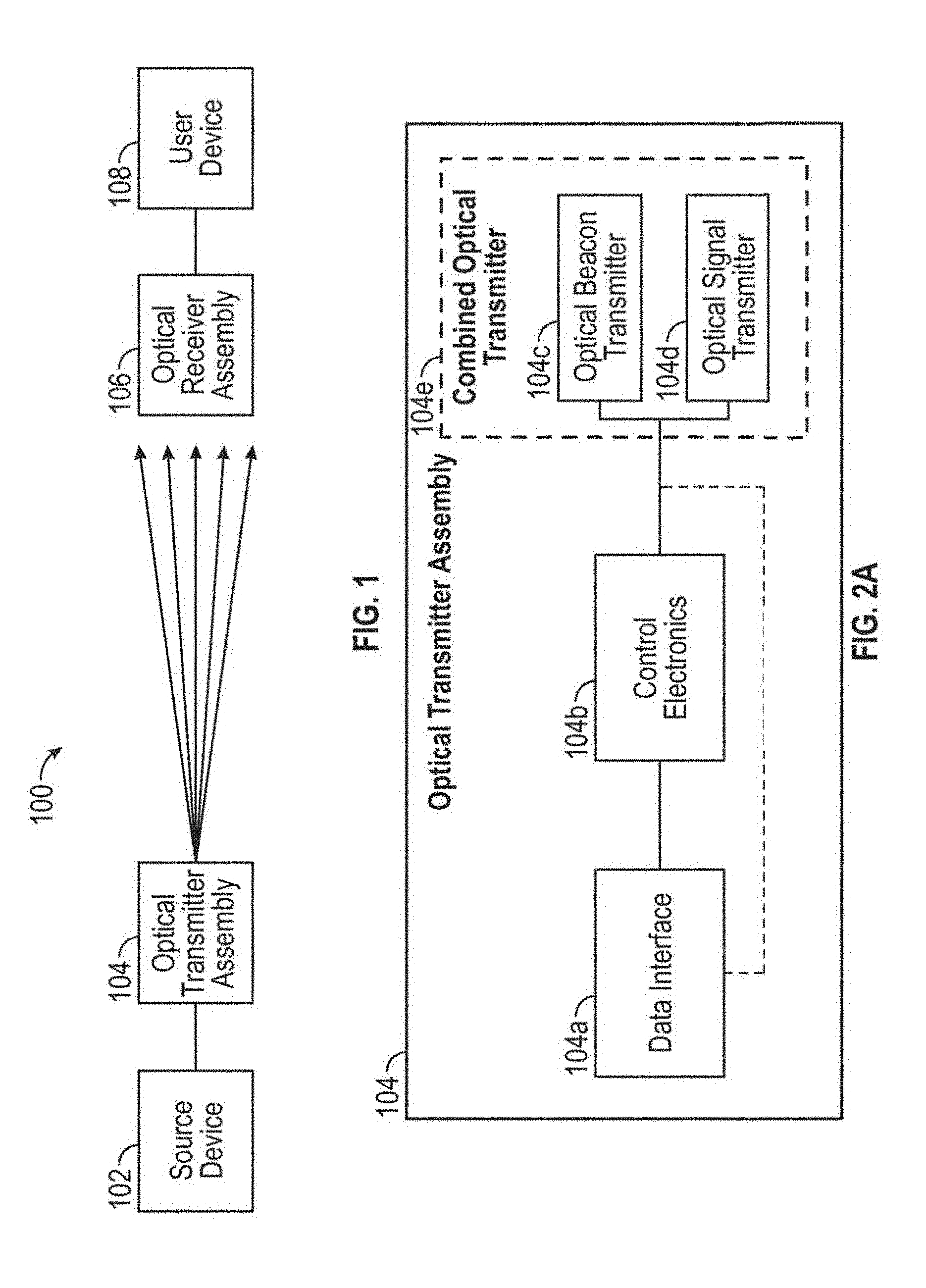

FIG. 1 illustrates an example optical narrowcasting system.

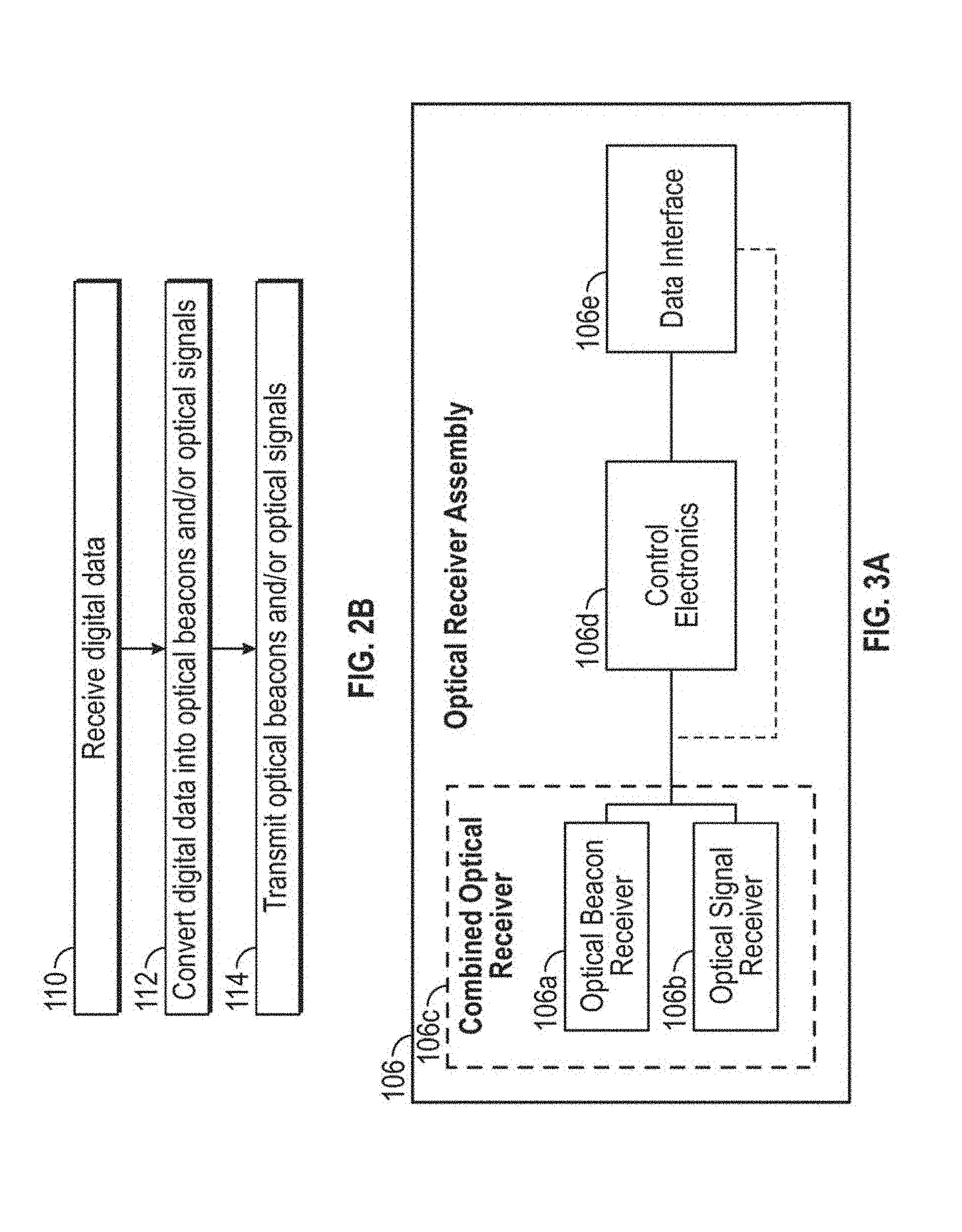

FIG. 2A illustrates example components that may make up an optical transmitter assembly.

FIG. 2B is a flow chart illustrating example operations that may be performed by the optical transmitter assembly of FIG. 2A and/or its component parts or elements.

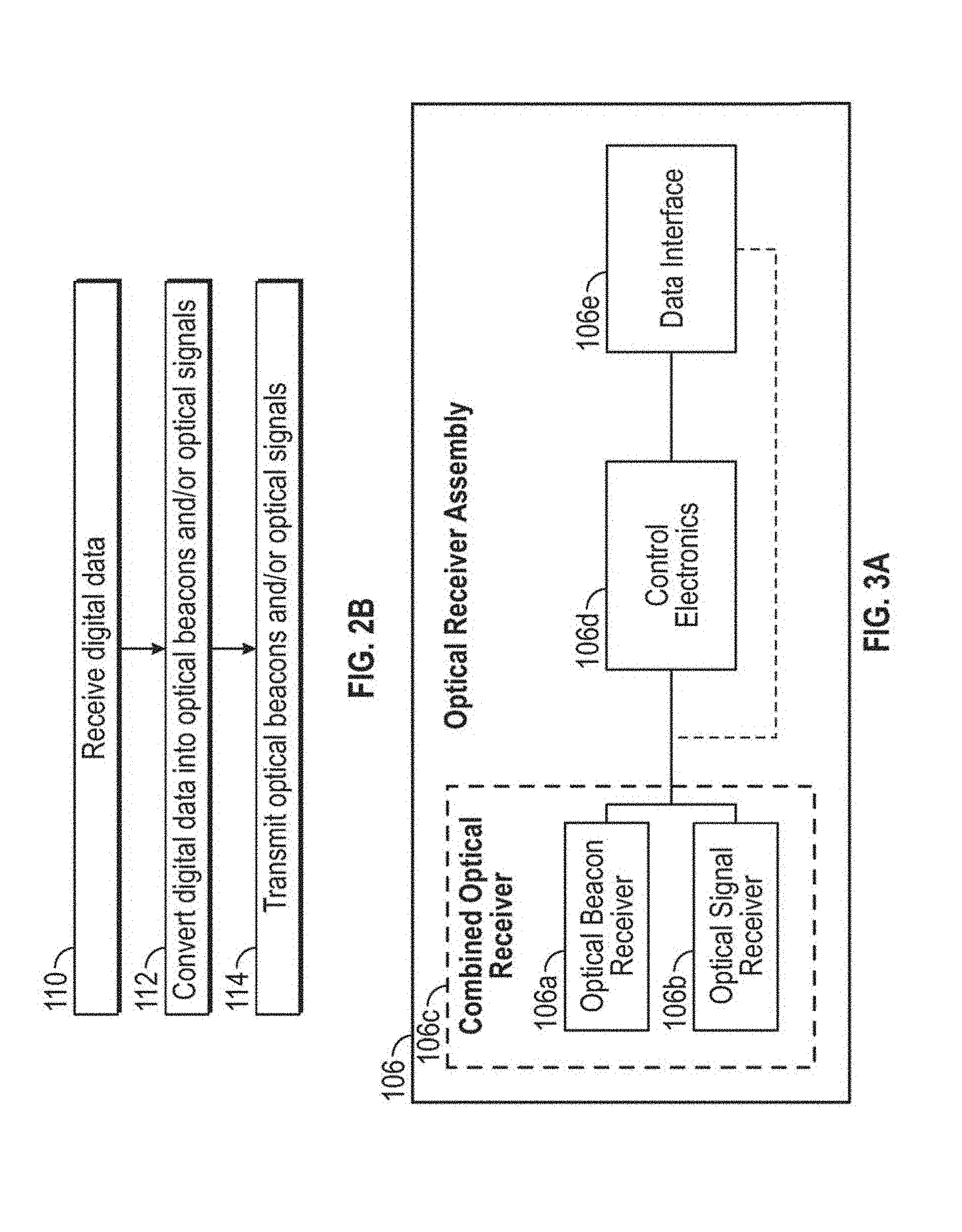

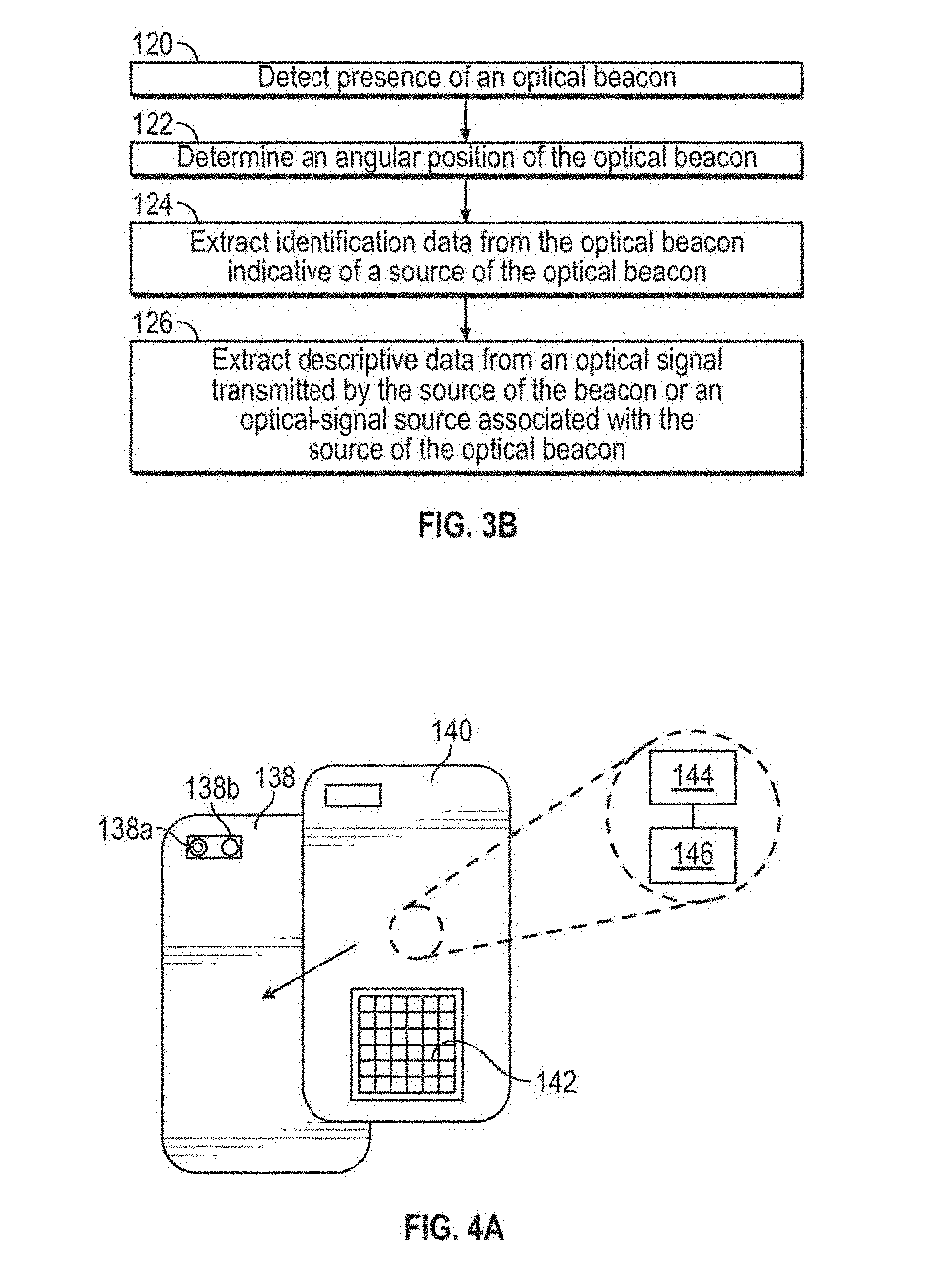

FIG. 3A illustrates an optical receiver assembly, including one or more example components that may make up the optical receiver assembly.

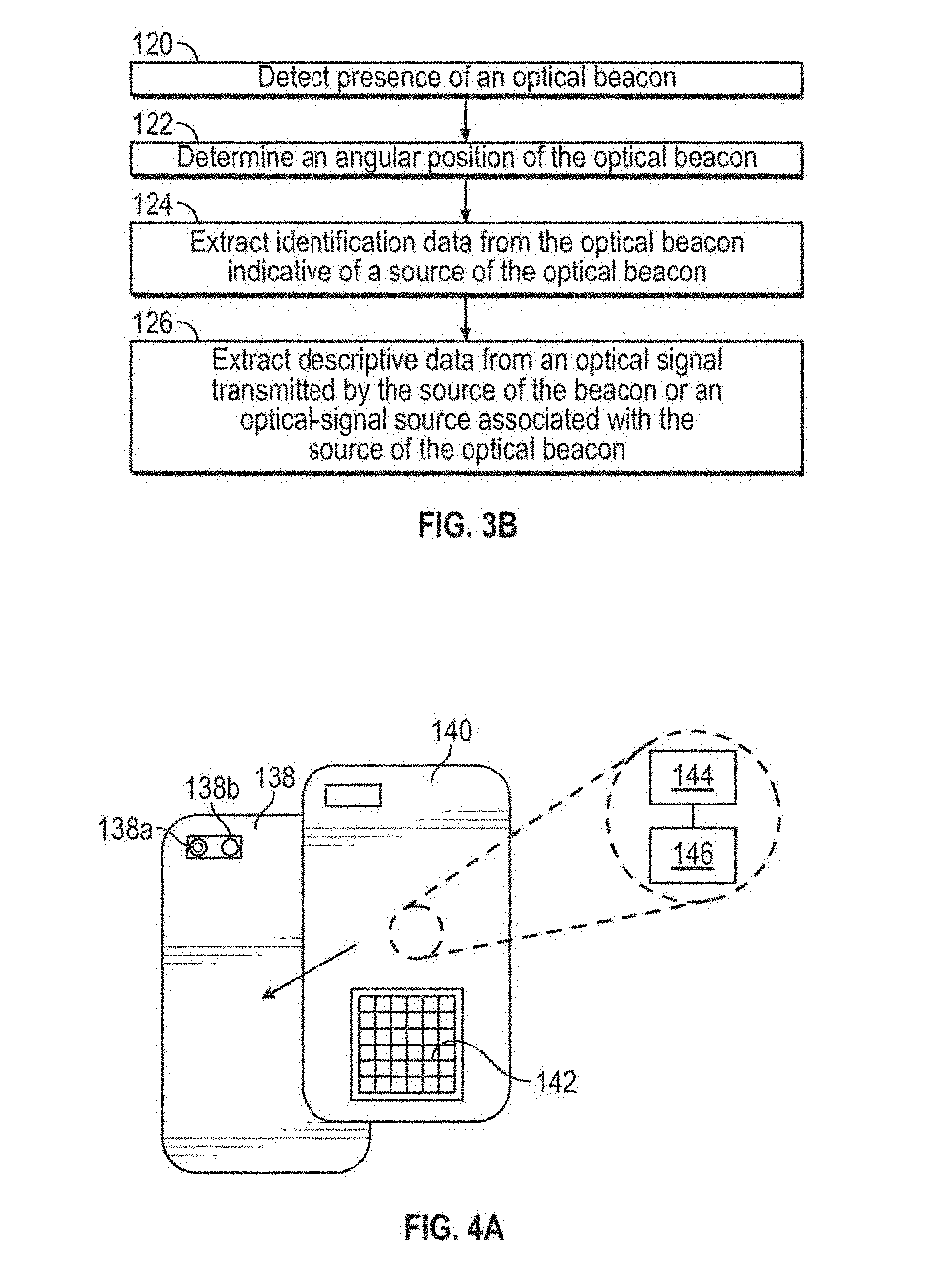

FIG. 3B is a flow chart illustrating example operations that can be performed by the optical receiver assembly of FIG. 3A and/or its component parts or elements.

FIG. 4A illustrates an example of an optical receiver assembly attachment.

FIG. 4B illustrates an example of an optical receiver assembly that is incorporated into a device.

FIG. 5A illustrates a frontal view of an automobile in which an optical receiver assembly is installed in and electronically interfaced with a vehicle.

FIG. 5B illustrates an example interior view of the automobile of FIG. 5A.

FIG. 6 illustrates a user device that is operatively and/or communicatively connected to an optical receiver assembly.

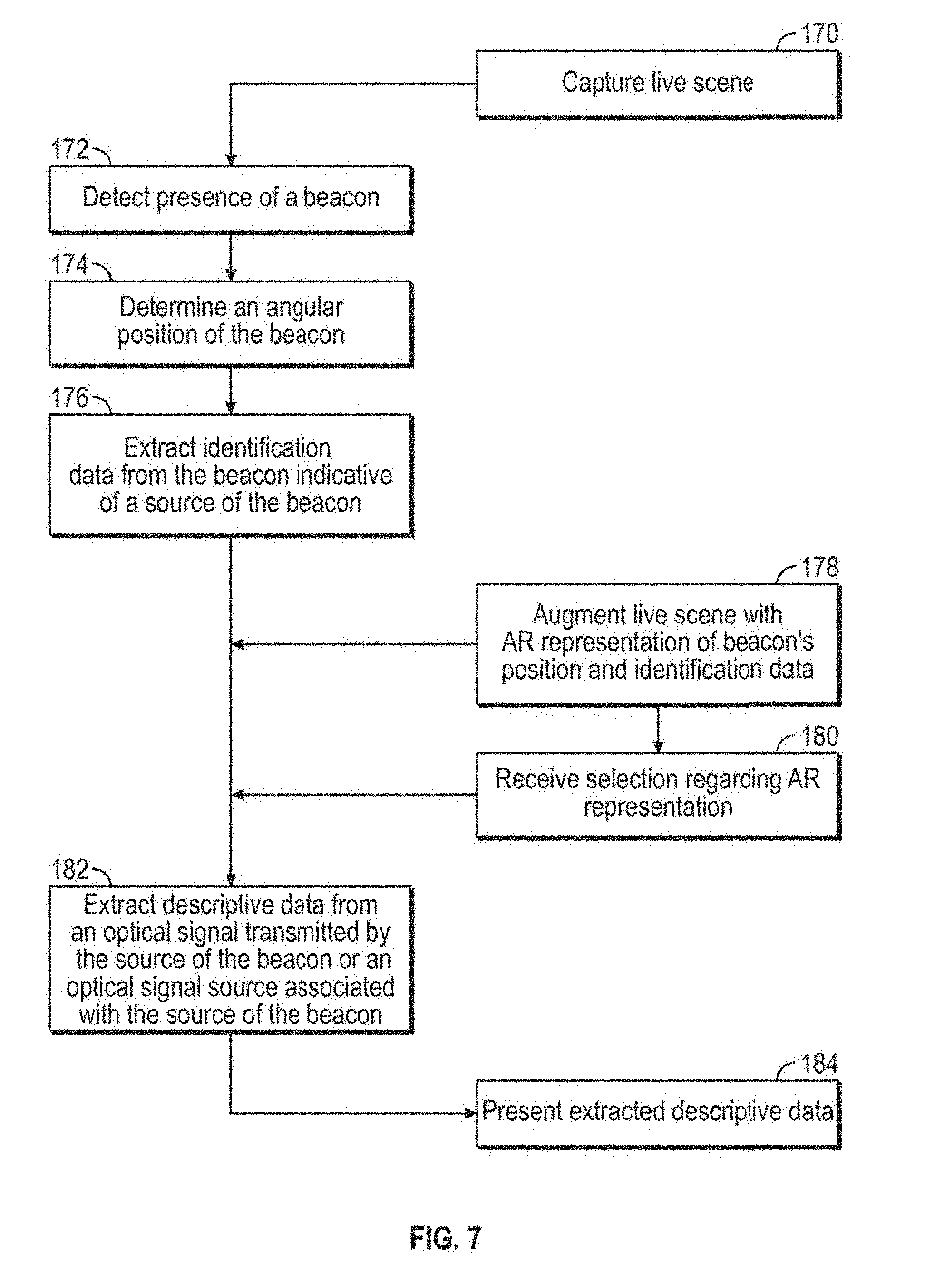

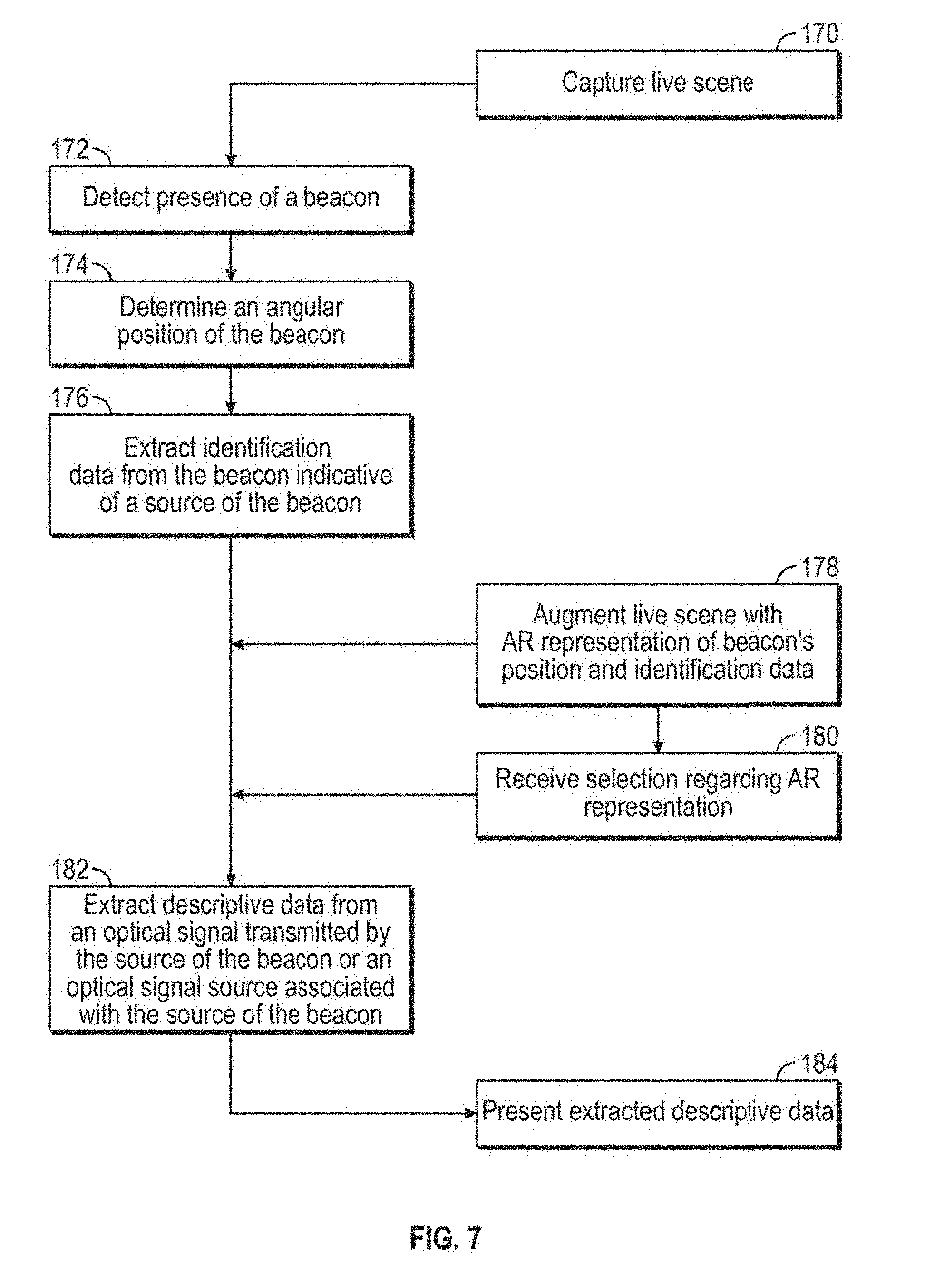

FIG. 7 is a flow chart illustrating example operations that may be performed by a user/controlling device and optical receiver assembly within an optical narrowcasting system.

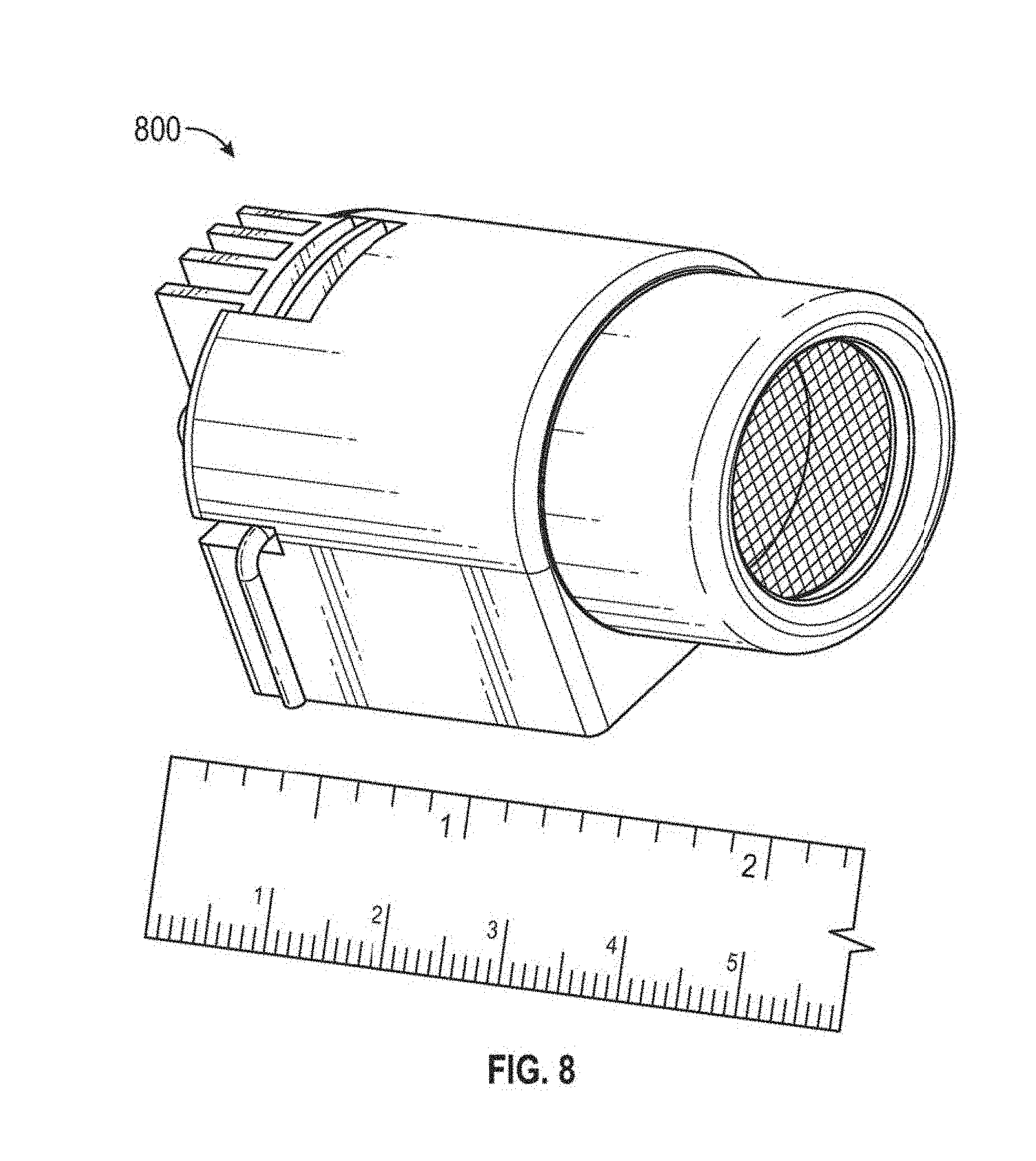

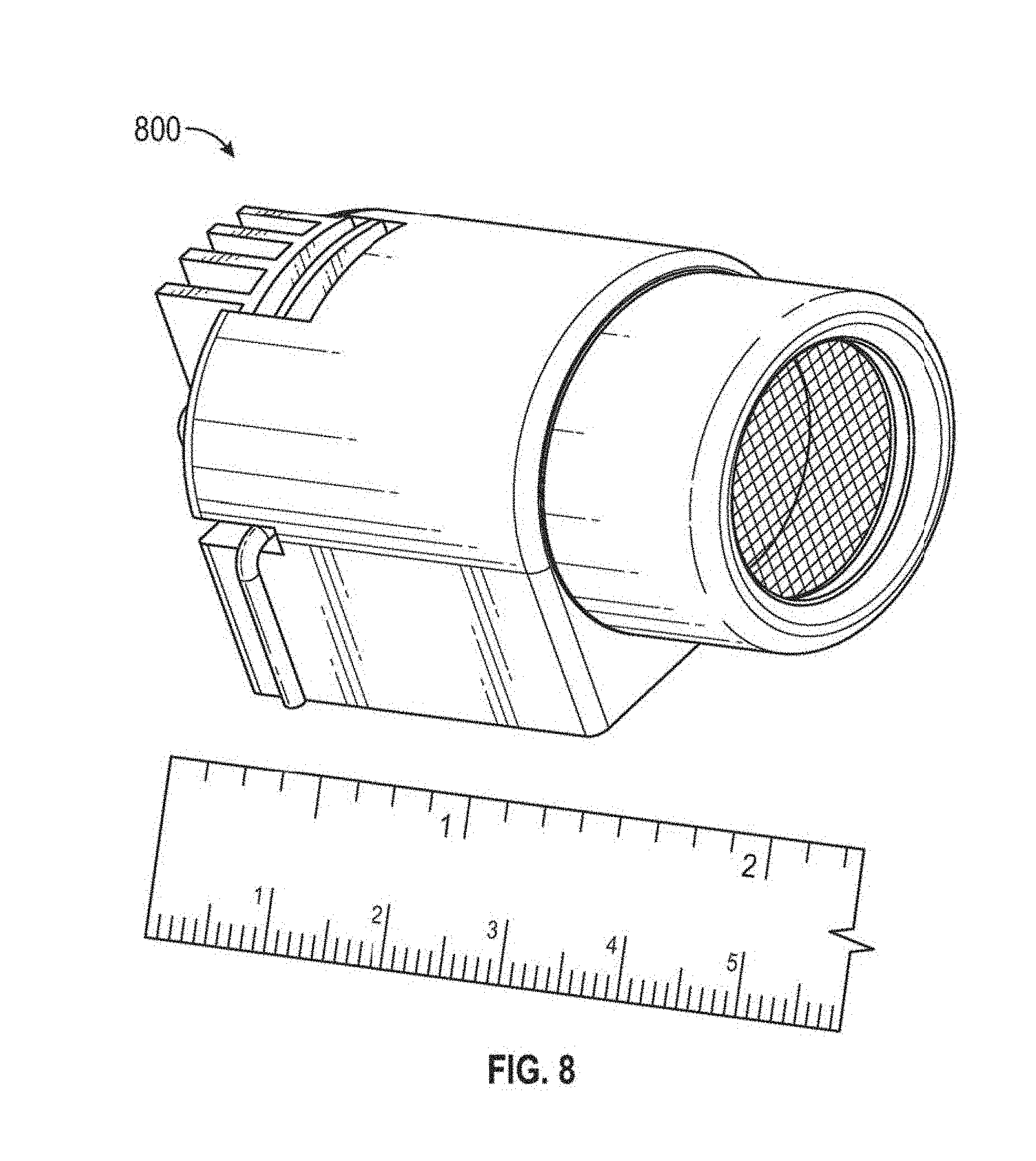

FIG. 8 is a depiction of an example optical transmitter assembly.

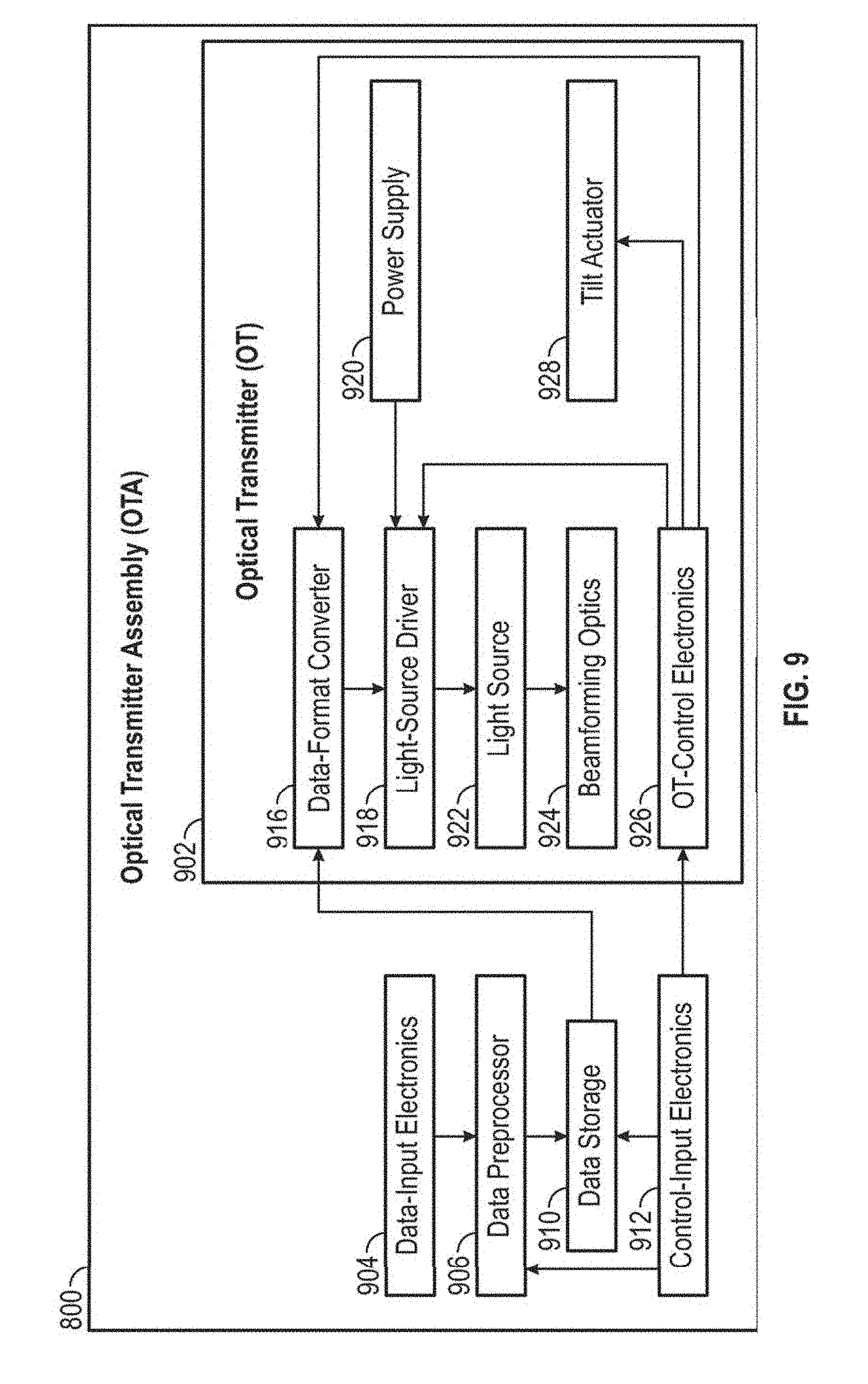

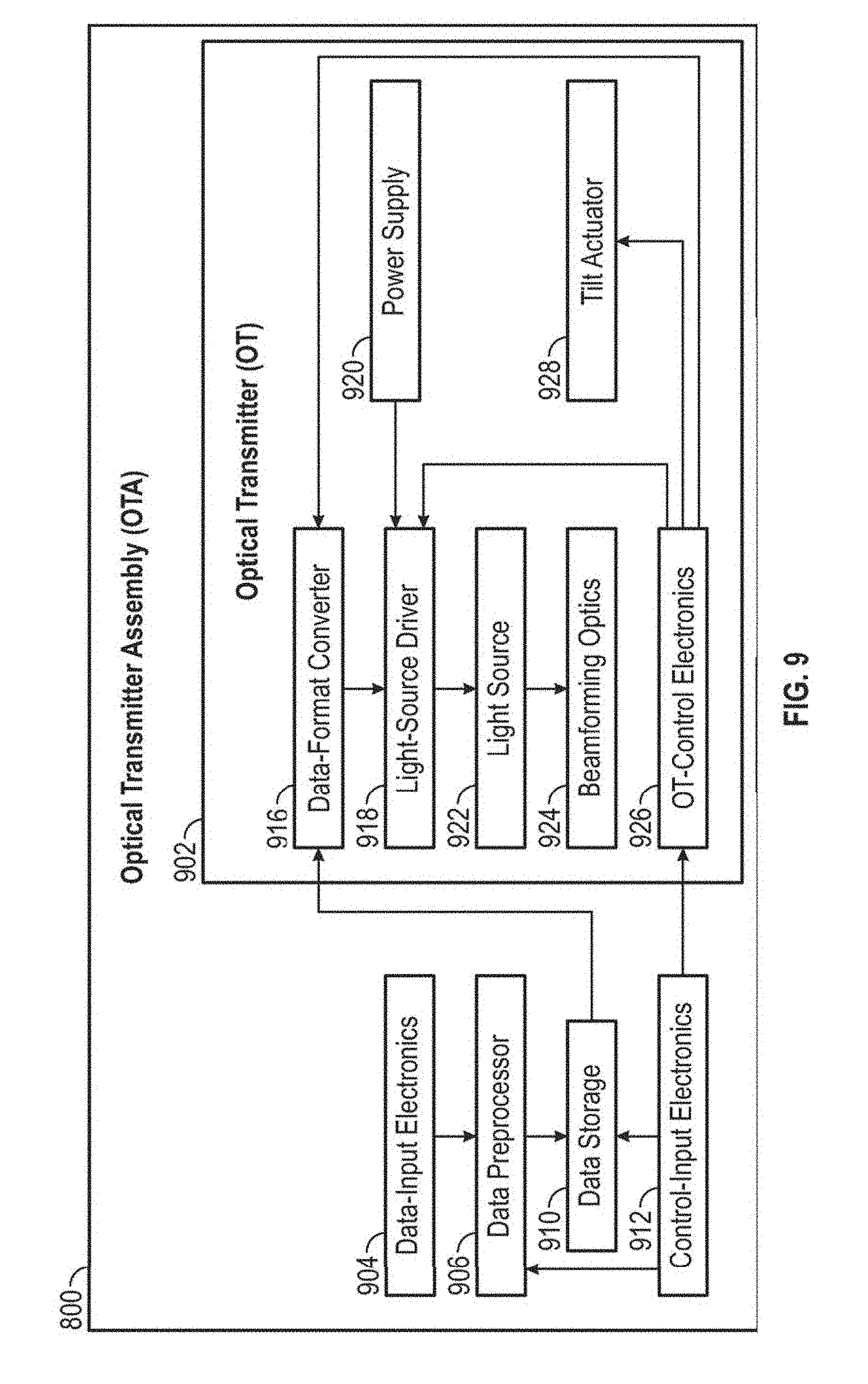

FIG. 9 depicts an example functional block diagram of an optical transmitter assembly.

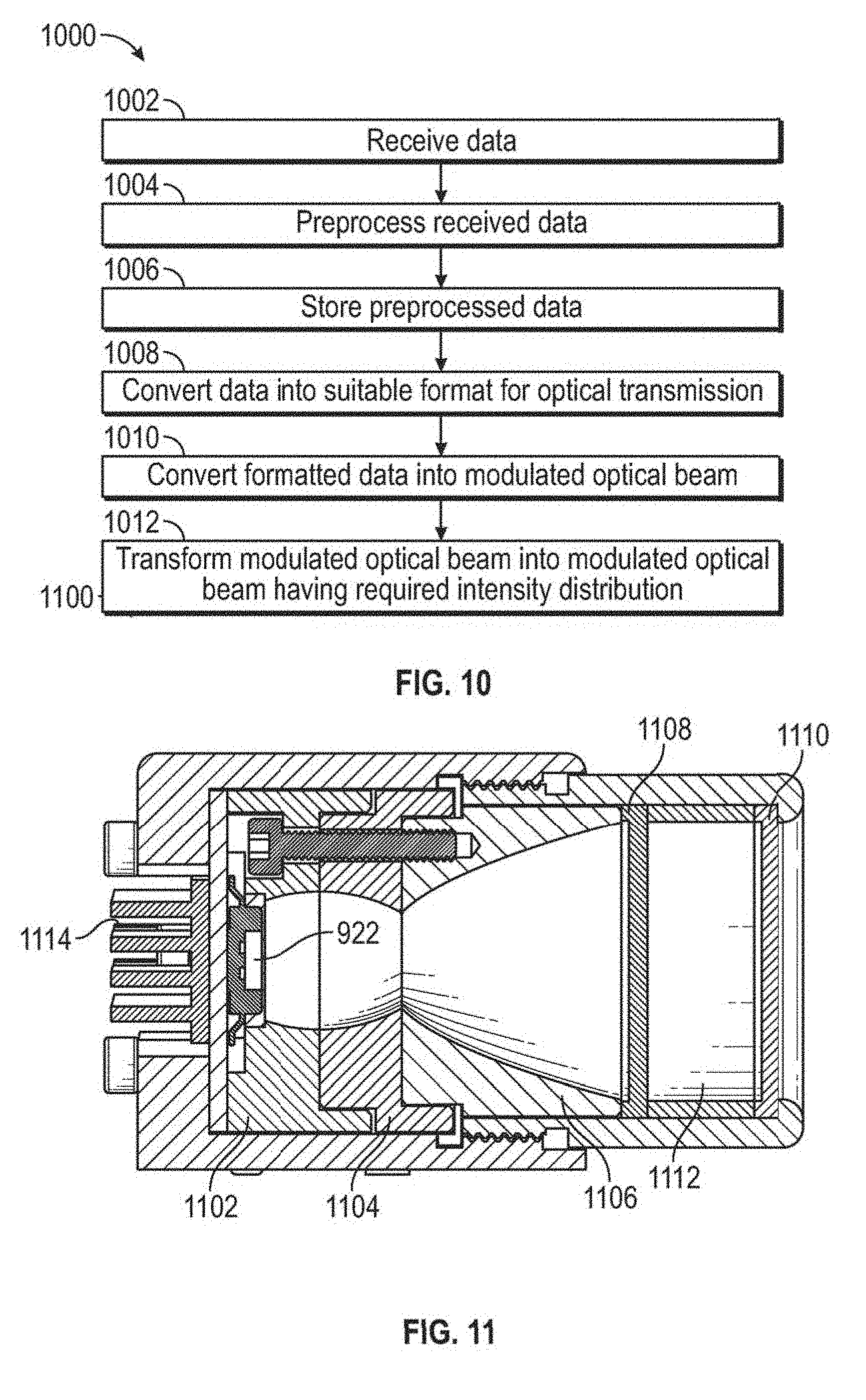

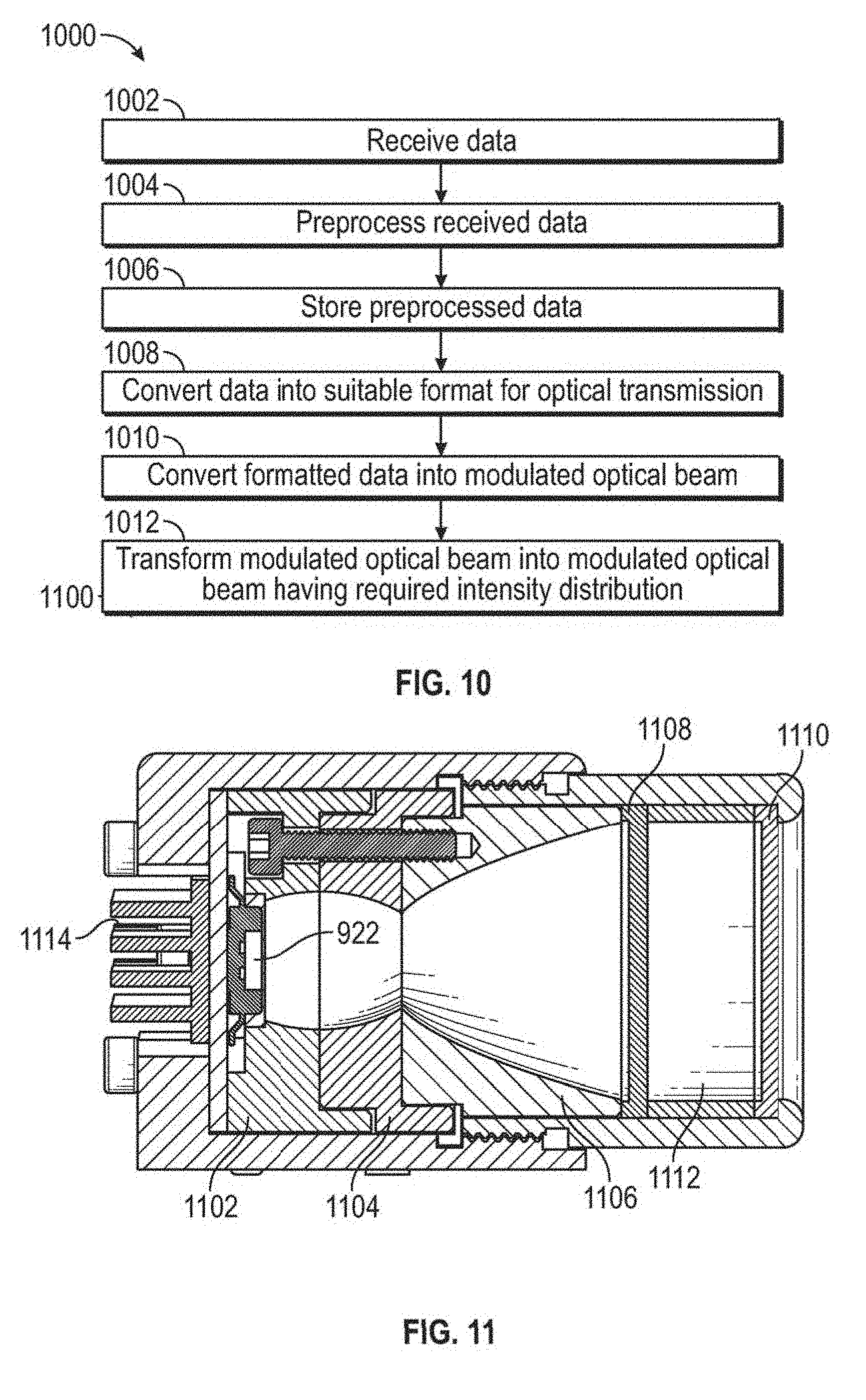

FIG. 10 is a flowchart for optical narrowcast transmission of data in some embodiments.

FIG. 11 is a depiction of an example optical transmitter assembly.

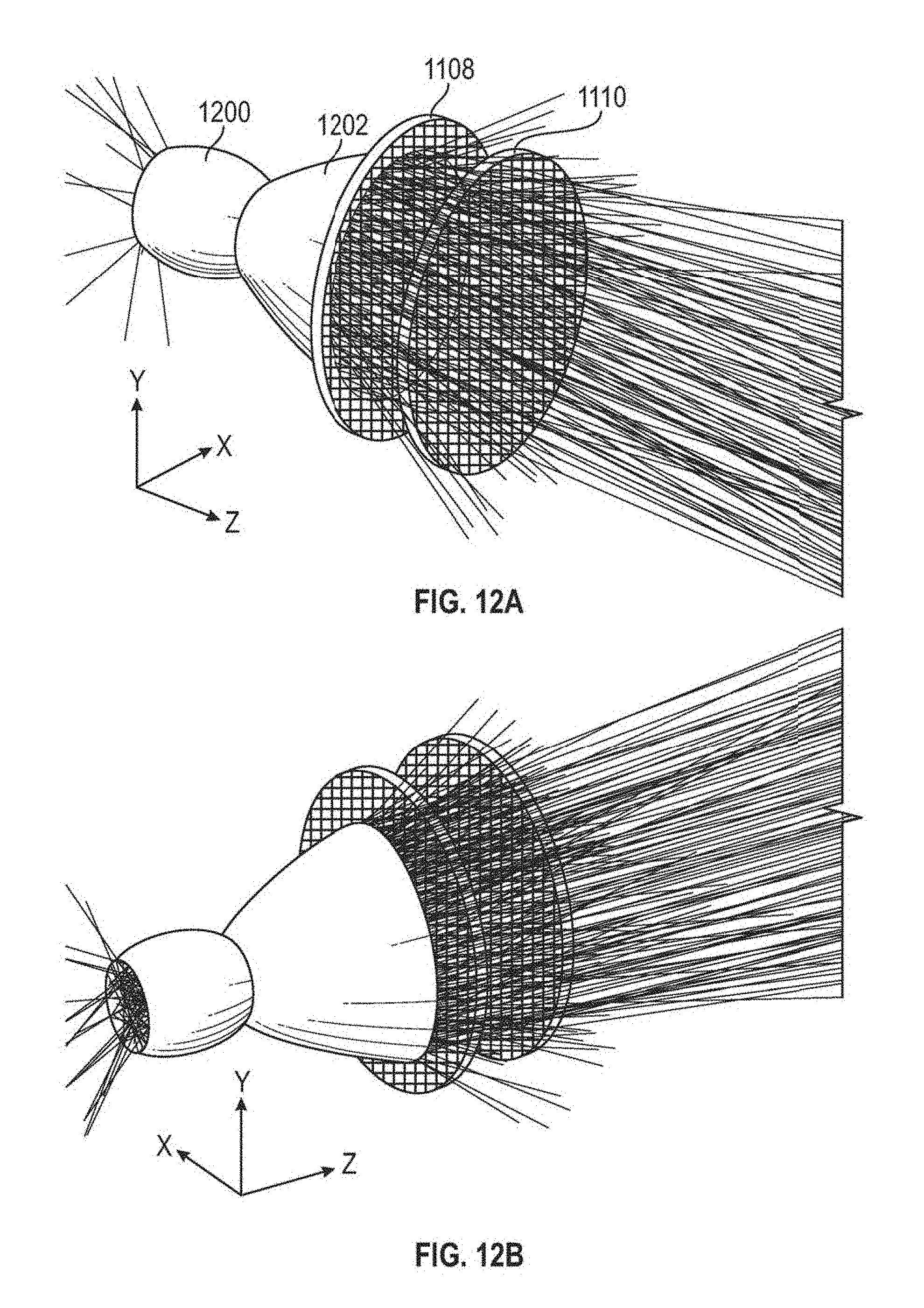

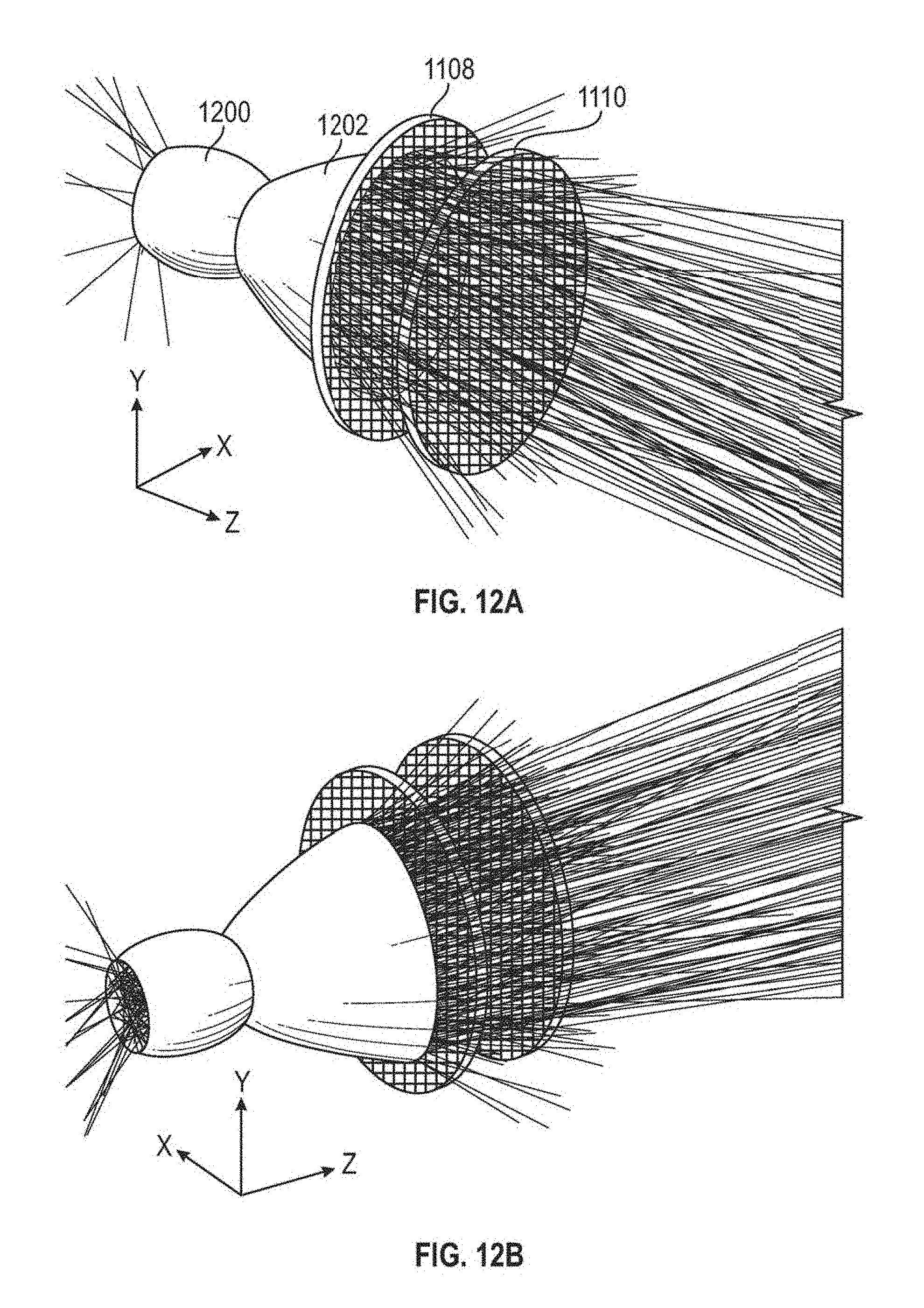

FIG. 12A depicts a three-dimensional perspective view of beamforming optics with traced rays from a light source.

FIG. 12B depicts another three-dimensional perspective view of beamforming optics with traced rays from a light source.

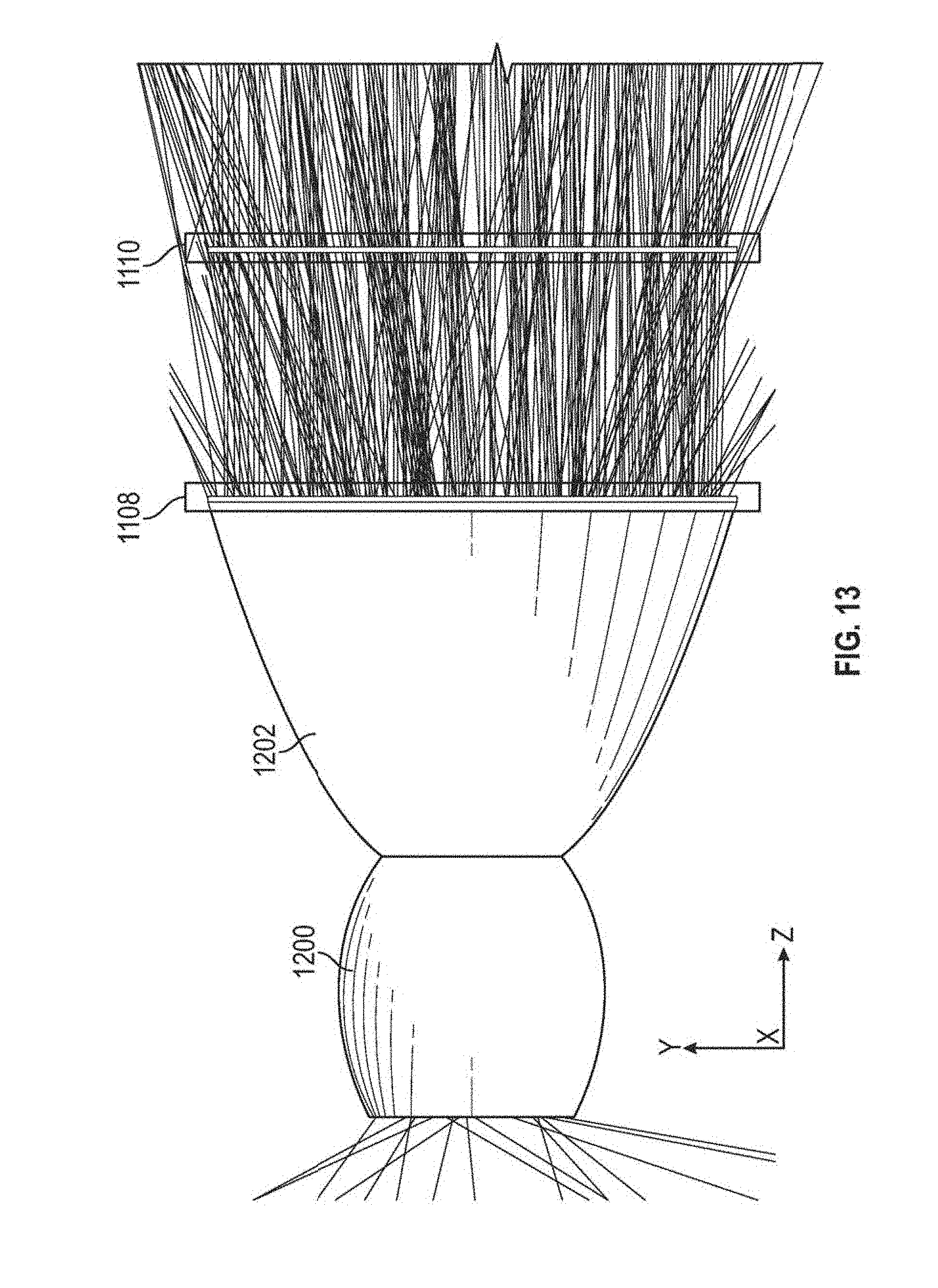

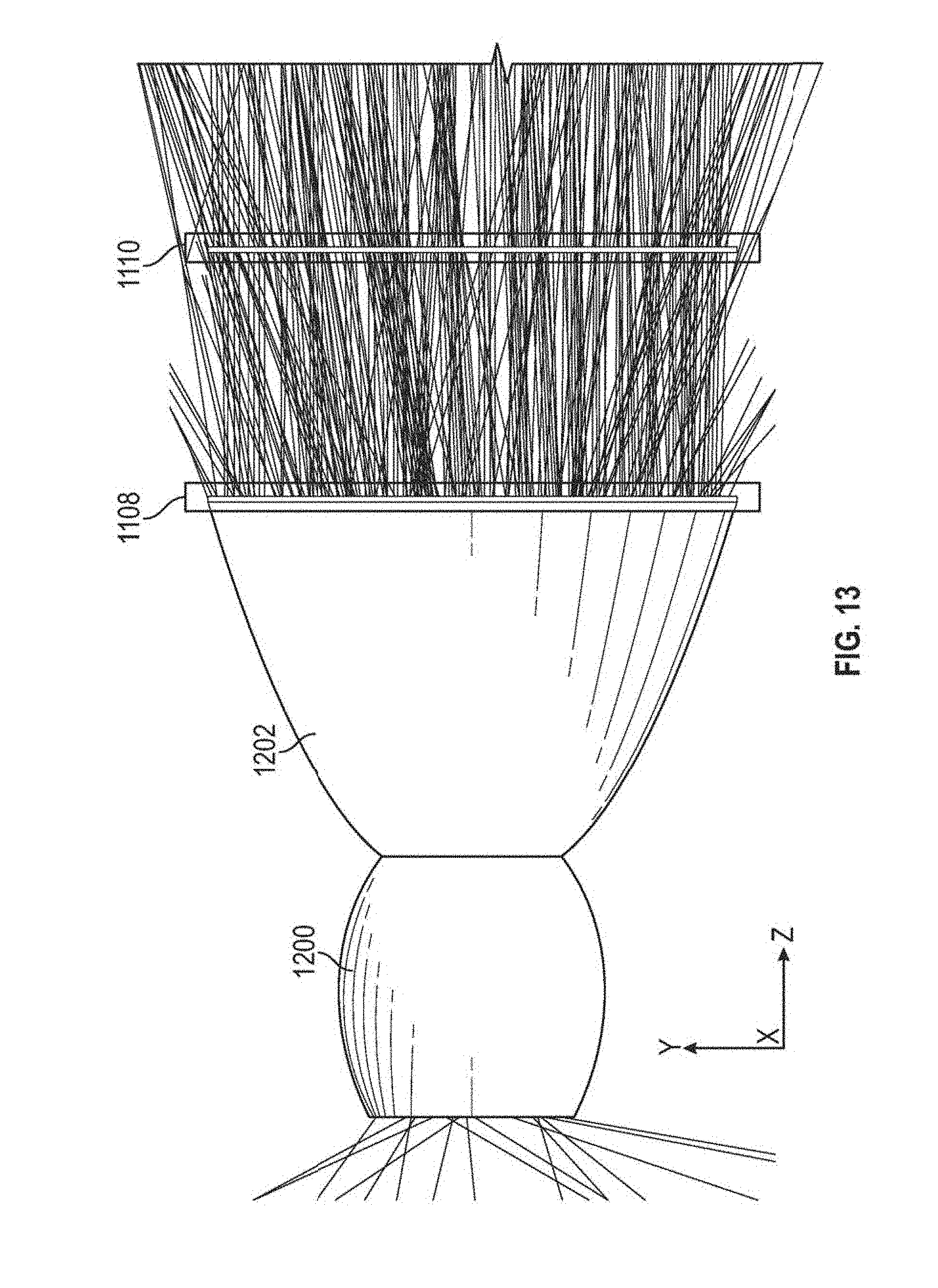

FIG. 13 depicts a side view of an example beamforming optic with traced rays from a light source.

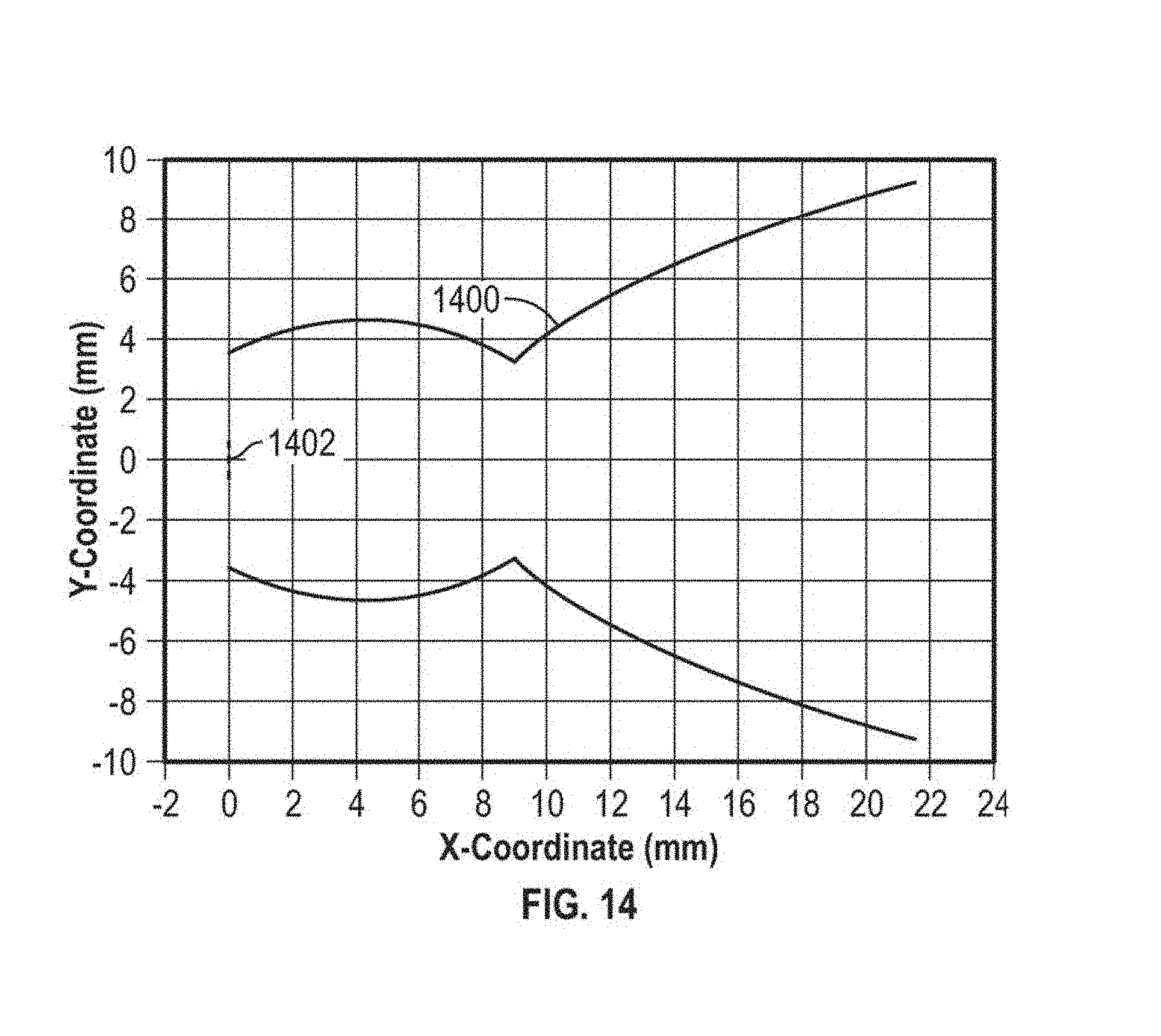

FIG. 14 is a cross-sectional view of an example axisymmetric reflective collimator.

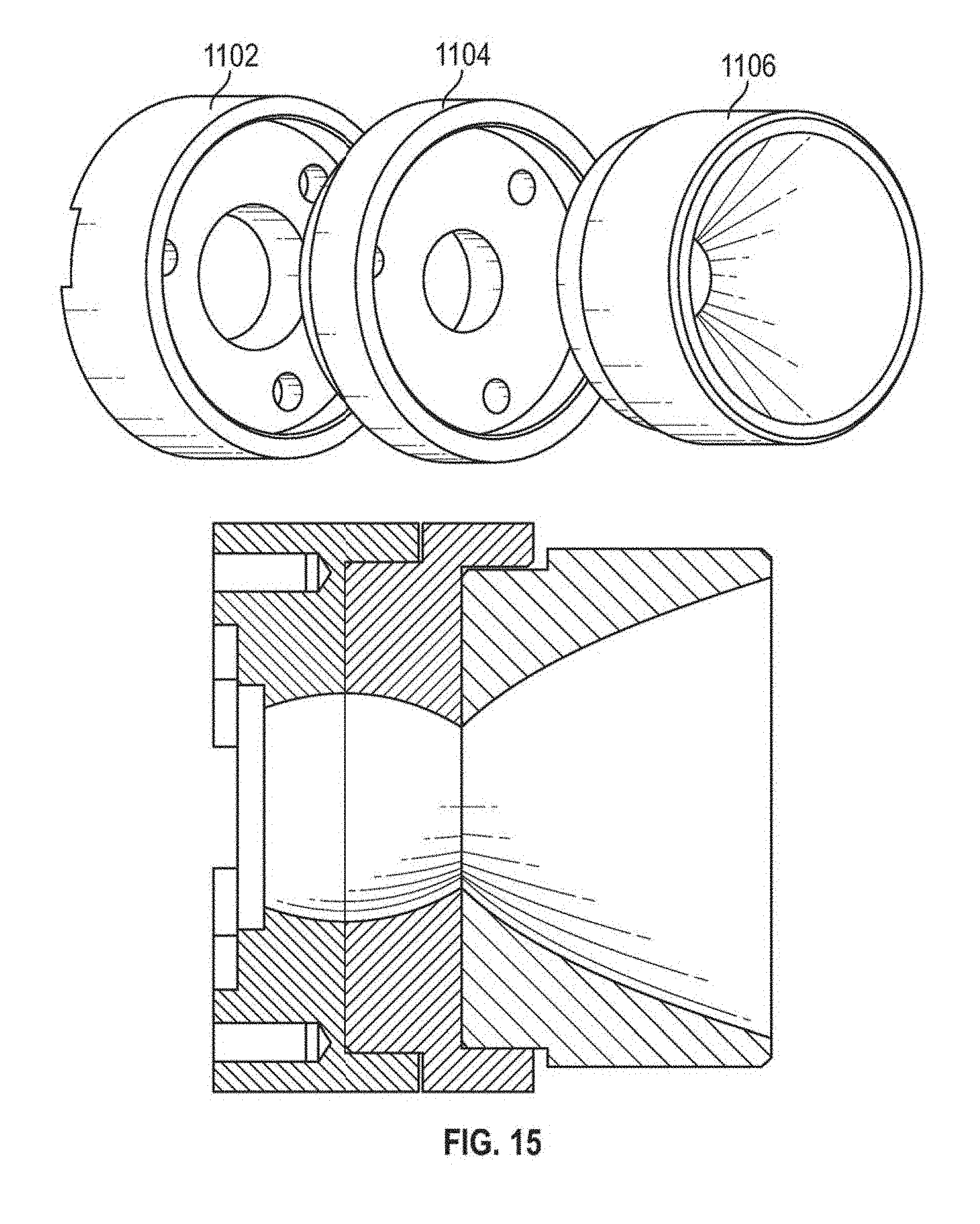

FIG. 15 depicts a three-dimensional view of an example of a wineglass collimator for use in beamforming optics.

FIG. 16 depicts an example lenslet array.

FIG. 17 depicts an example pair of lenslet arrays.

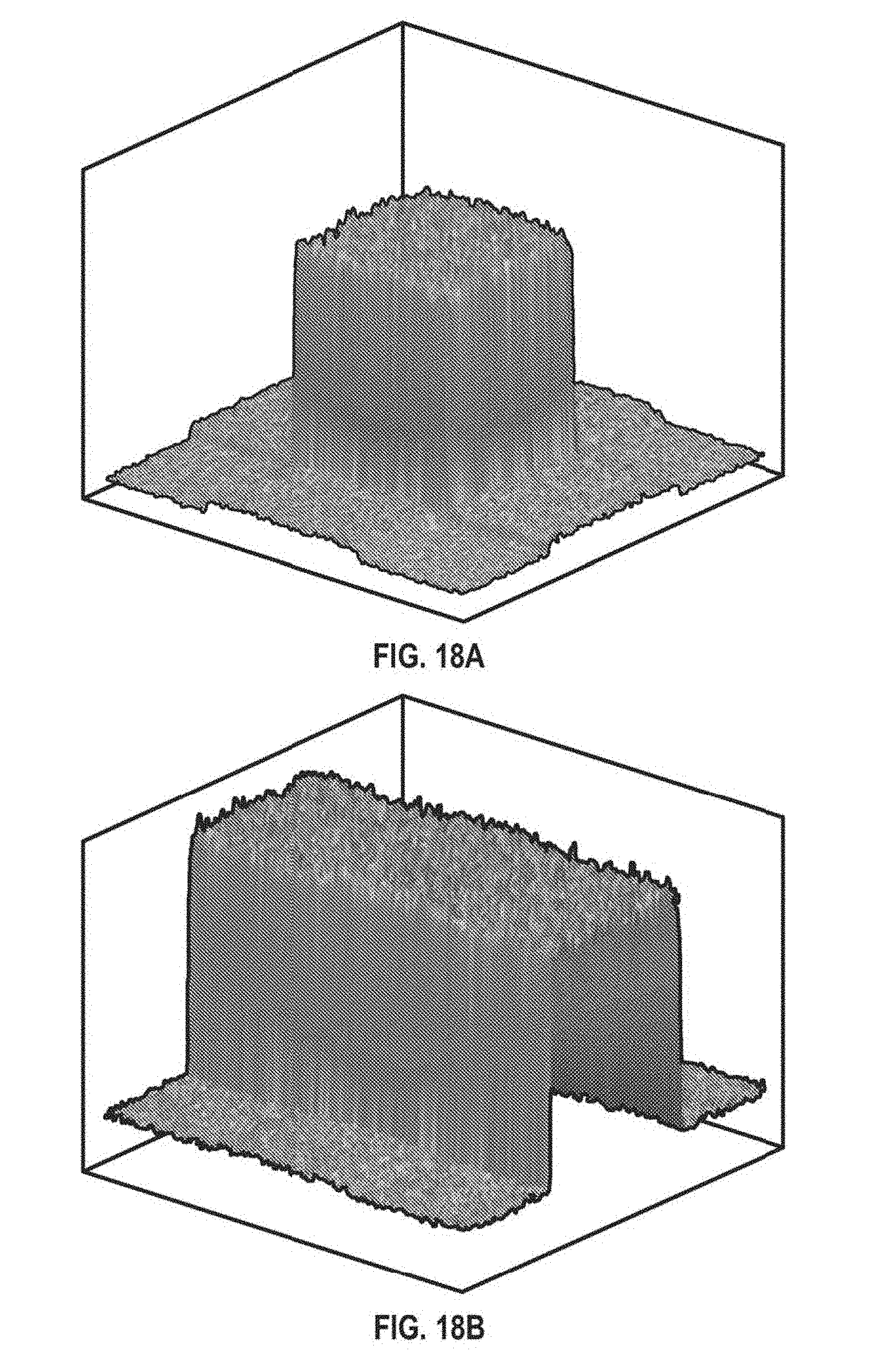

FIG. 18a is a surface plot of the output intensity distribution as a function of a horizontal angle and a vertical angle produced by a single beamforming optic consisting of a wineglass collimator and lenslet arrays in some embodiments.

FIG. 18b is a surface plot of a portion of the combined output intensity distribution as a function of angle produced by six identical beamforming optics of the same type used to generate the results of FIG. 18a in some embodiments.

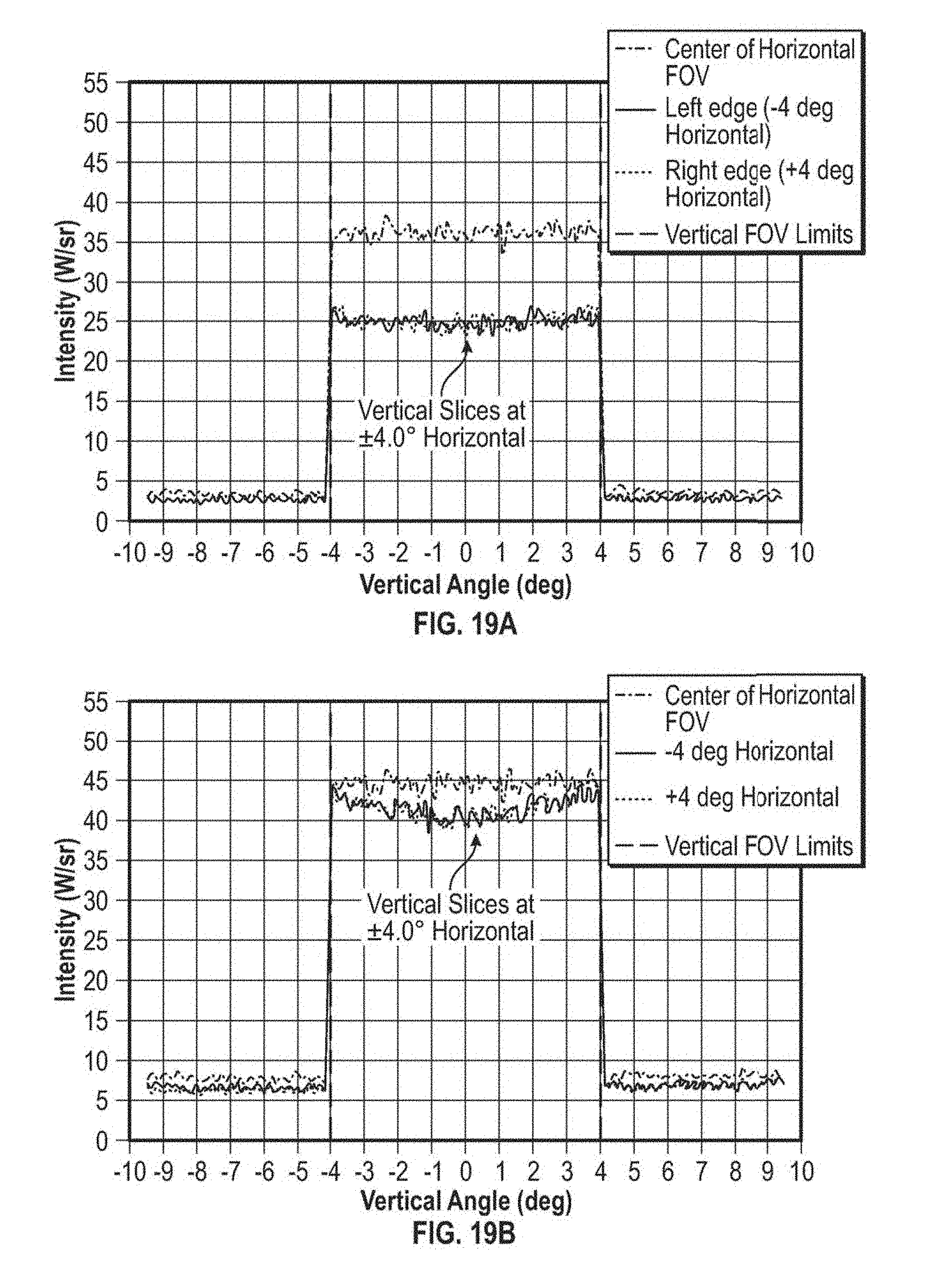

FIG. 19a is a graph of vertical slices taken through the center and at horizontal coordinates of ±4° relative to the center of the same intensity distribution produced by a single beamforming optic in some embodiments that is depicted as a surface plot in FIG. 18a.

FIG. 19b is a graph of vertical slices taken through the center of the beam and at horizontal coordinates of ±4° relative to the center of the same intensity distribution produced by the six beamforming optics in some embodiments that is depicted as a surface plot in FIG. 18b.

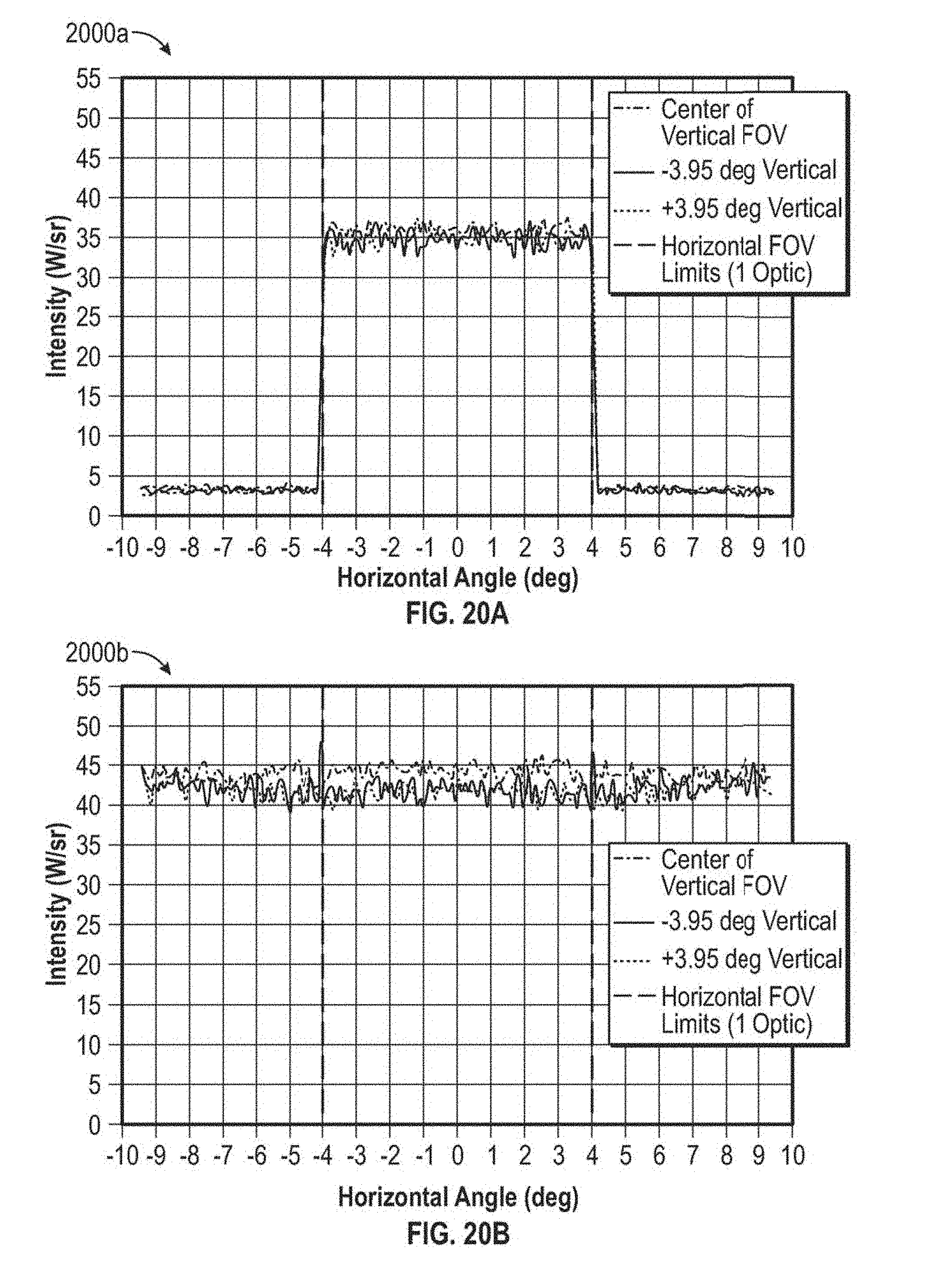

FIG. 20a is a graph of horizontal slices taken through the center of the beam and at vertical coordinates of ±3.95° relative to the center of the same intensity distribution produced by a single beamforming optic in some embodiments that is depicted as a surface plot in FIG. 18a.

FIG. 20b is a graph of horizontal slices taken through the center of the beam and at vertical coordinates of ±3.95° relative to the center of the same intensity distribution produced by the six beamforming optics in some embodiments that is depicted as a surface plot in FIG. 18b.

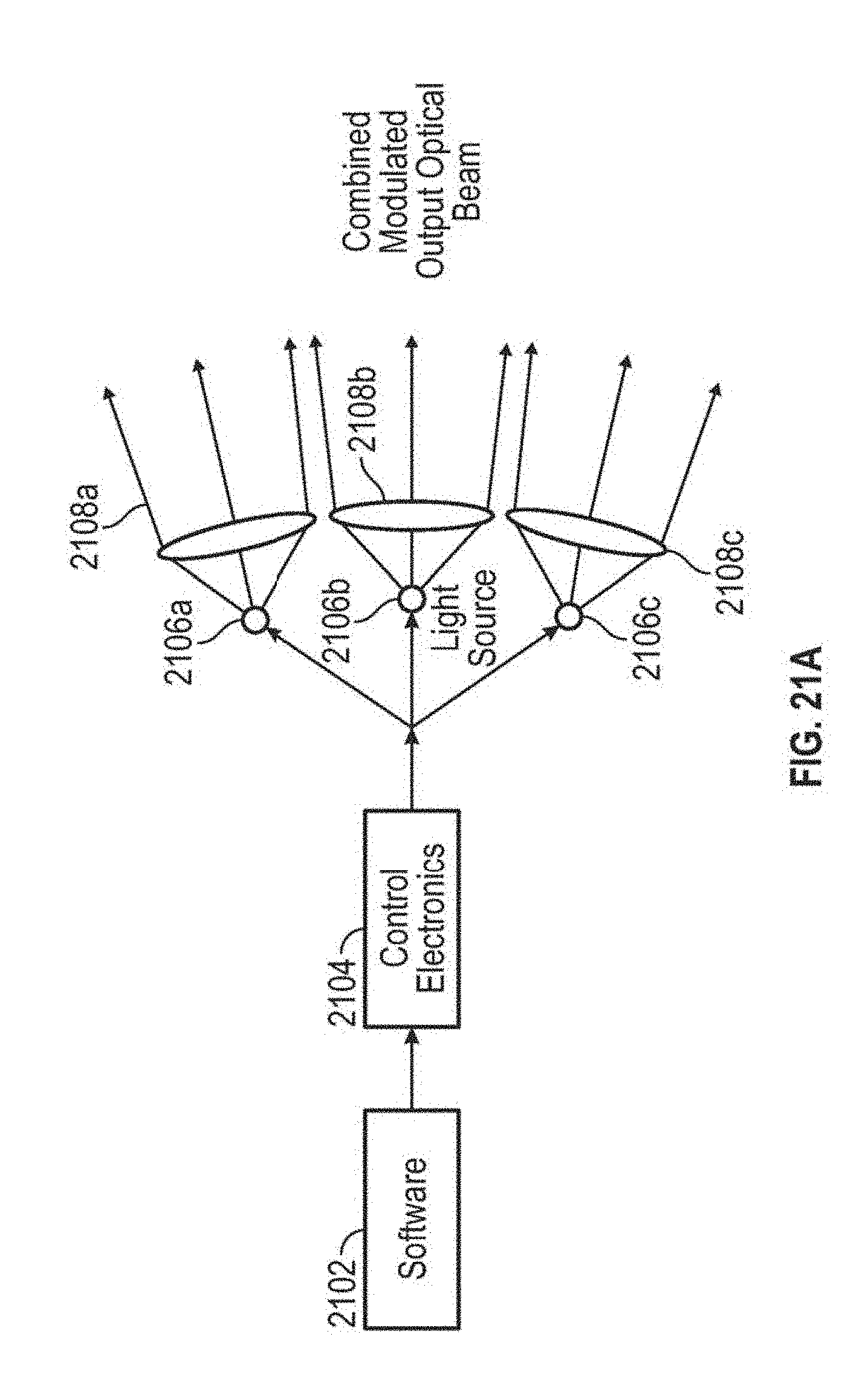

FIG. 21a depicts a simplified schematic diagram of an example OTA utilizing multiple light sources and beamforming optics.

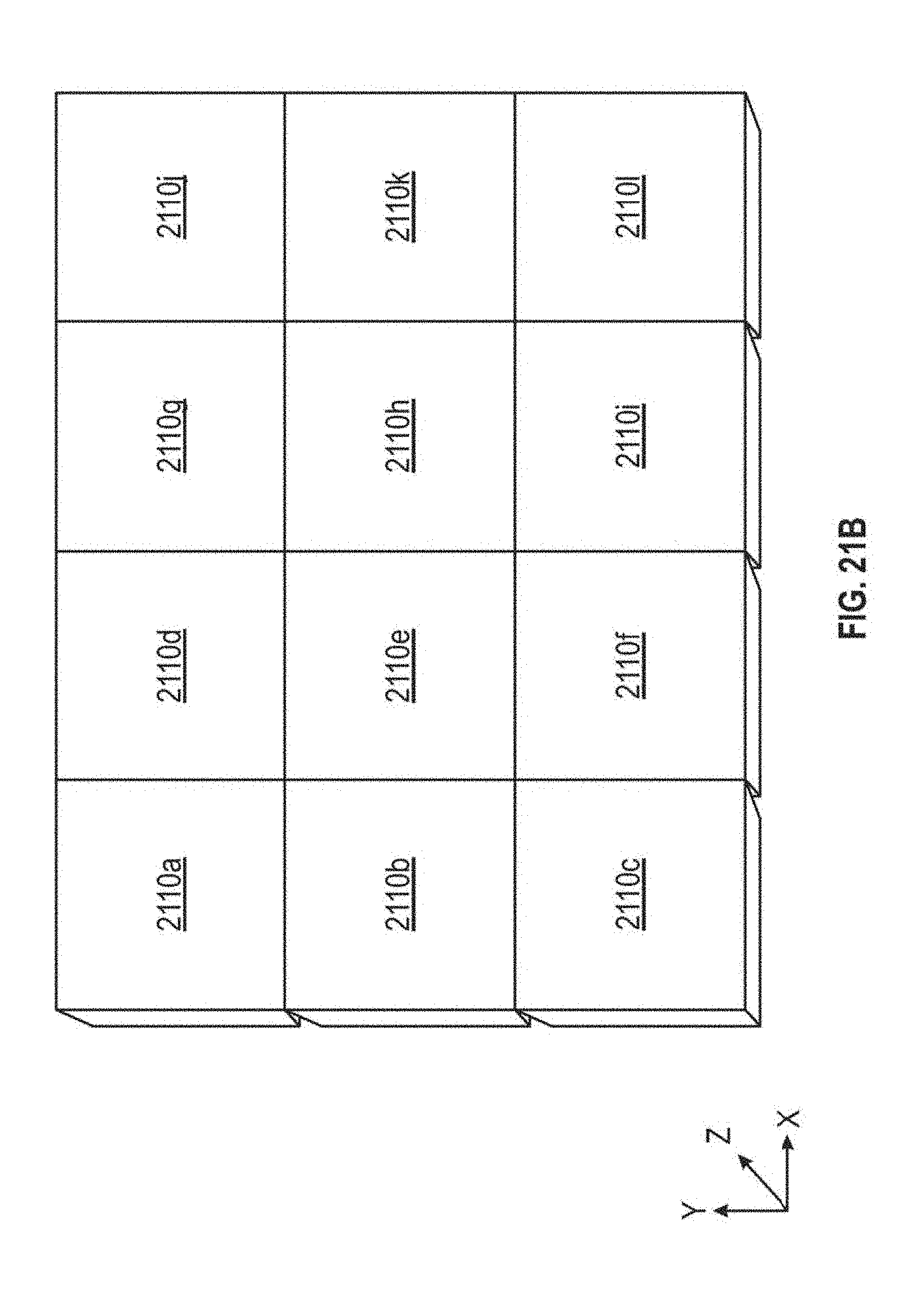

FIG. 21b depicts an example combined optical beam output from an OTA utilizing multiple light sources and beamforming optics.

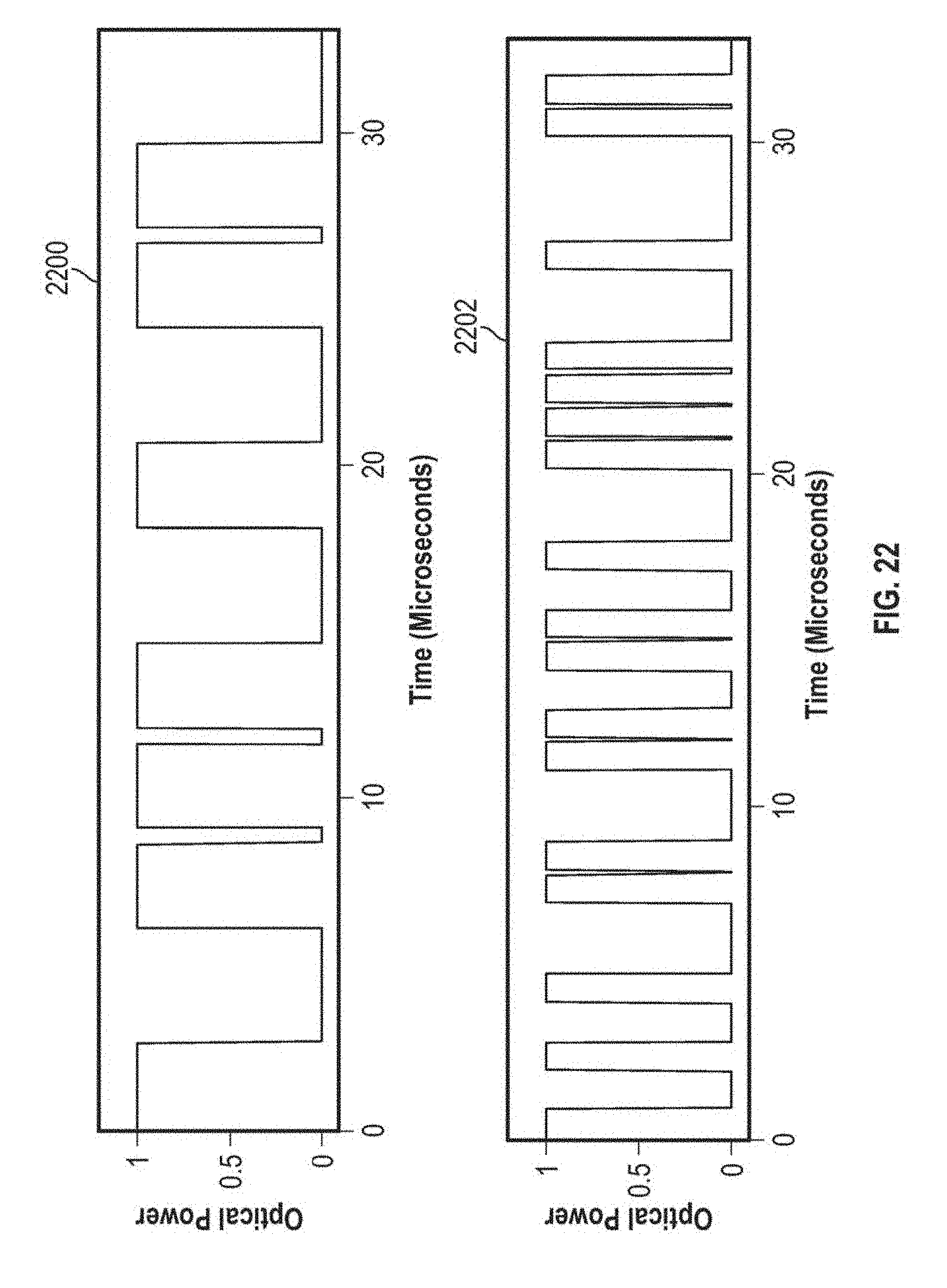

FIG. 22 depicts an example of the optical power output (in arbitrary units) as a function of time for an optical beacon operating in the 800-900 nm band, as well as for an optical signal operating in the 900-1000 nm band, where the bit rates for the optical beacon and the optical signal are 333.33 kHz and 1 MHz, respectively.

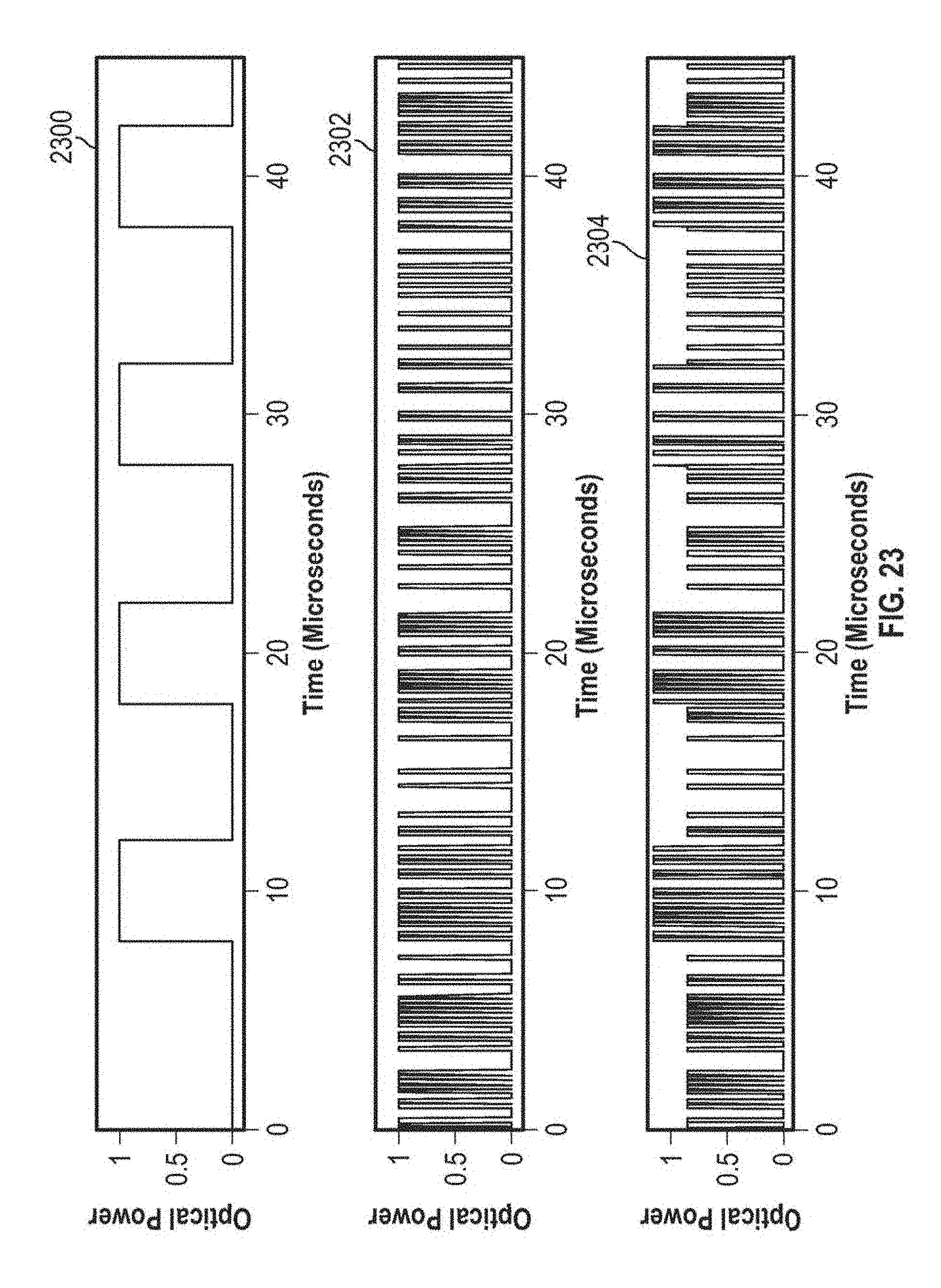

FIG. 23 depicts three plots of temporal waveforms of transmitted output beams for an example of double modulation.

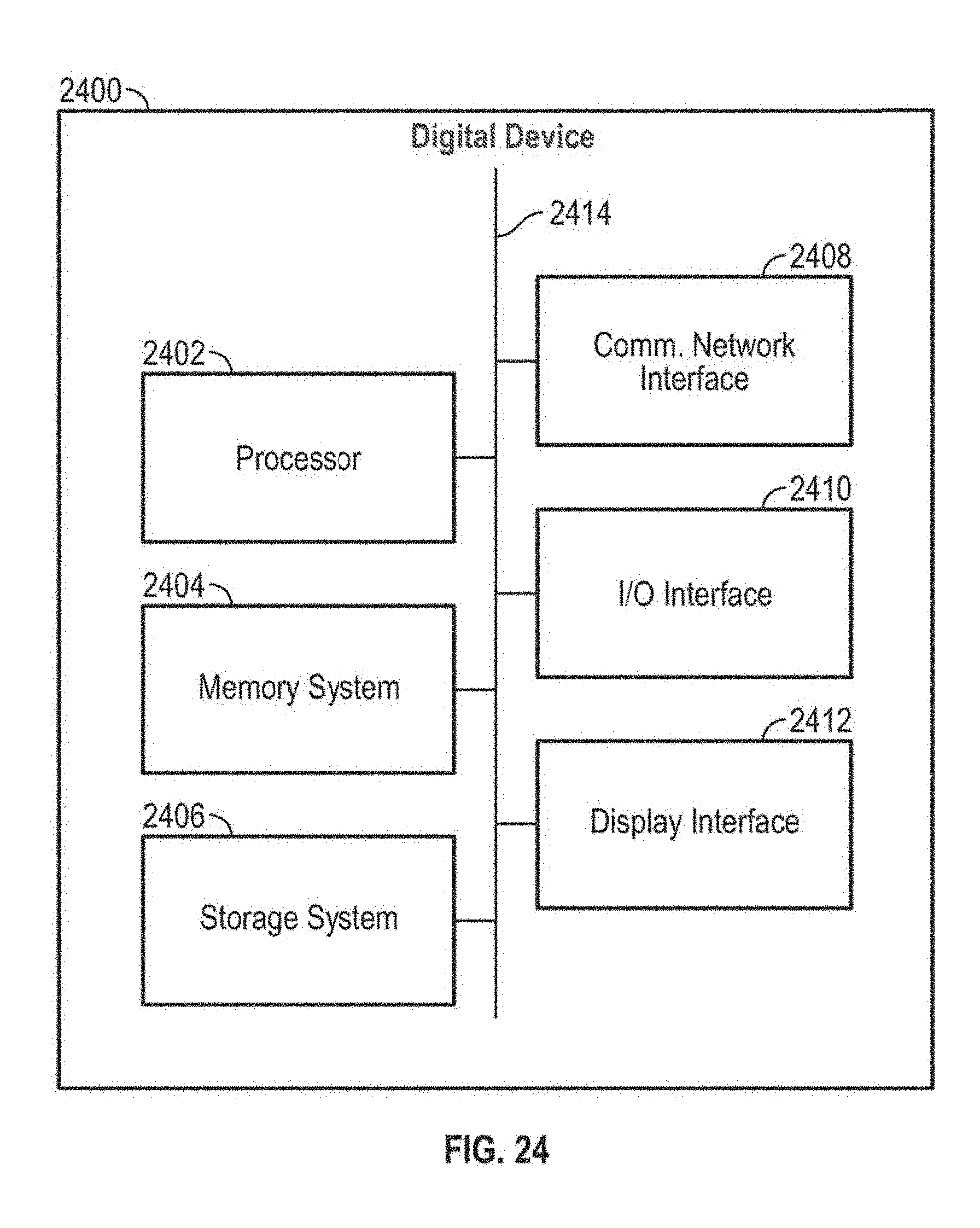

FIG. 24 is a block diagram of an example digital device.

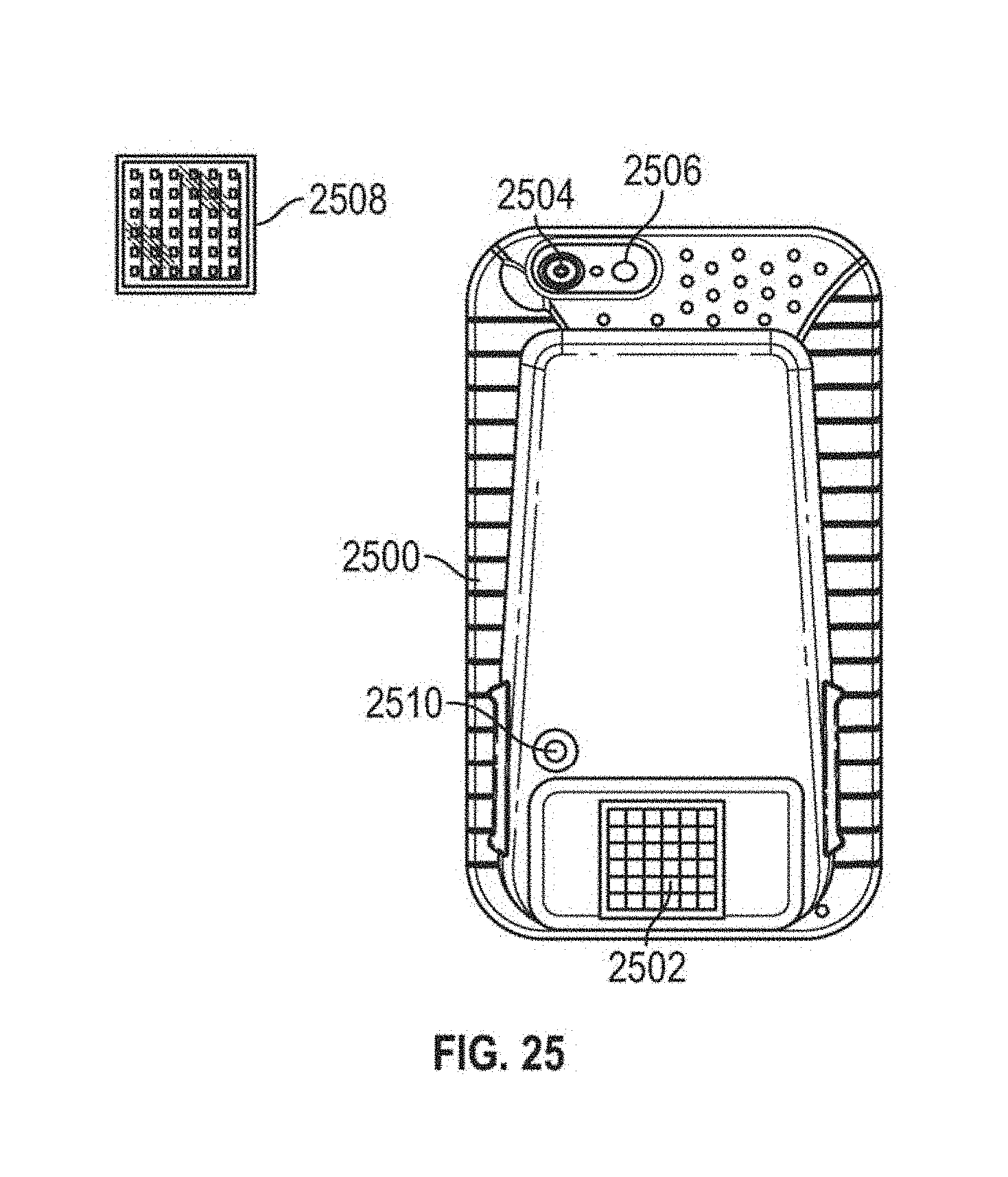

FIG. 25 is a depiction of an example optical receiver assembly.

FIG. 26a schematically depicts an ORA that utilizes a single OSR and a single OBR.

FIG. 26b schematically depicts an ORA utilizing multiple OSRs.

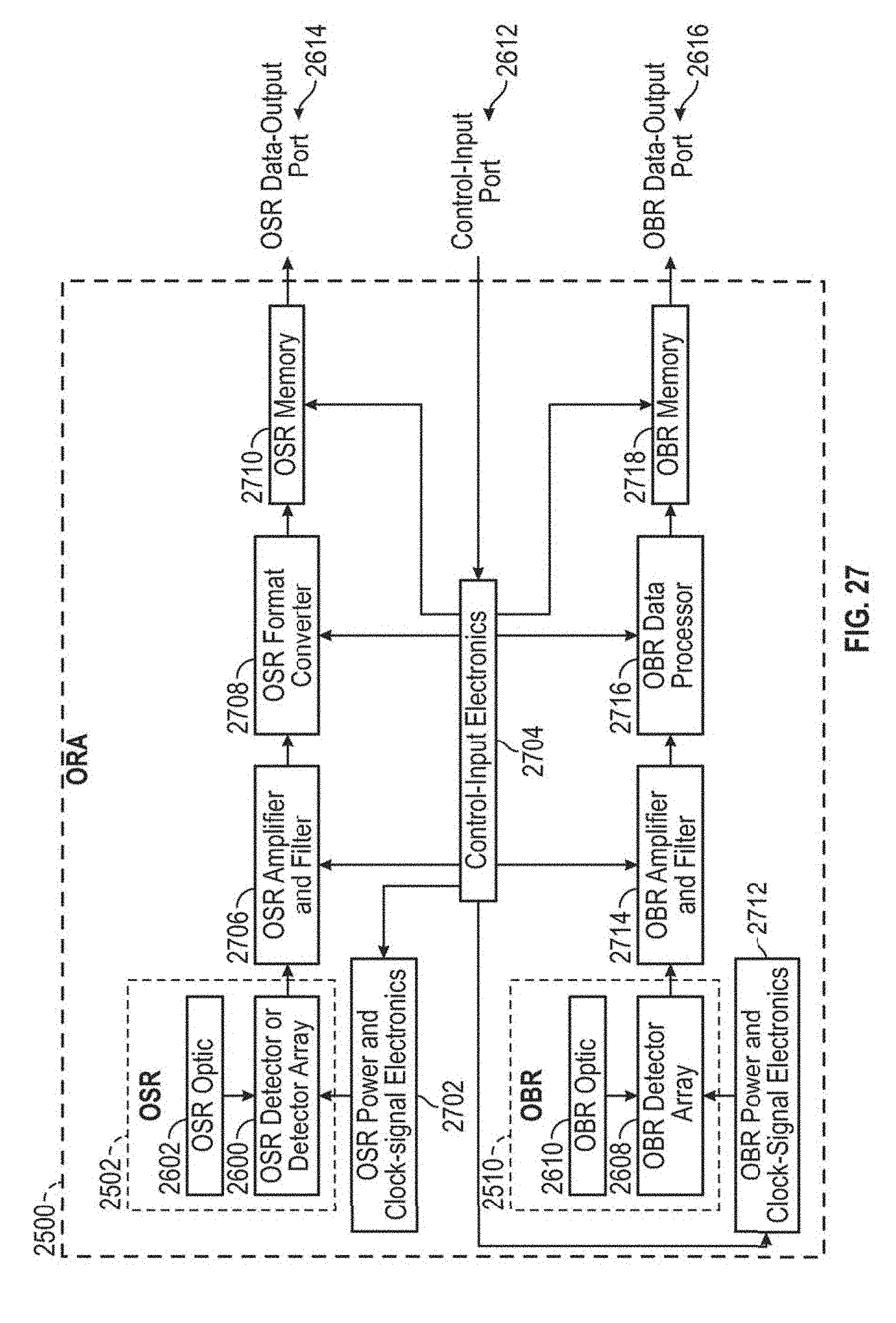

FIG. 27 depicts a functional block diagram of an optical receiver assembly.

FIG. 28a is a flow diagram depicting a process of receiving optical signals by an optical receiver assembly.

FIG. 28b is a flow diagram depicting a process of receiving optical beacons by an optical receiver assembly.

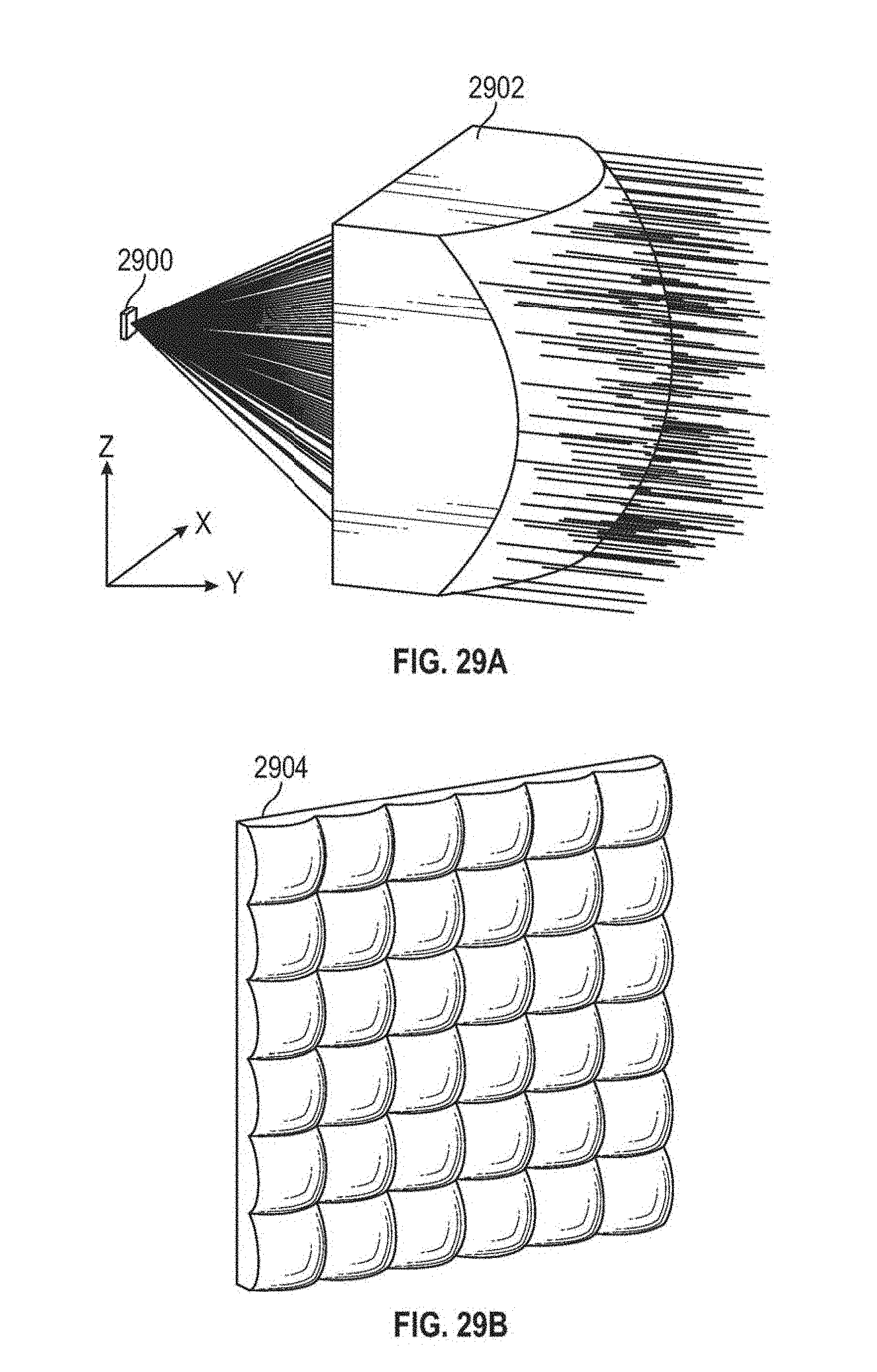

FIG. 29a is a three-dimensional depiction of a detector and a beam of collimated rays traced through a lenslet, which focuses (i.e., concentrates) the rays onto the light-sensitive surface of a detector.

FIG. 29b depicts a three-dimensional view of an array of lenslets.

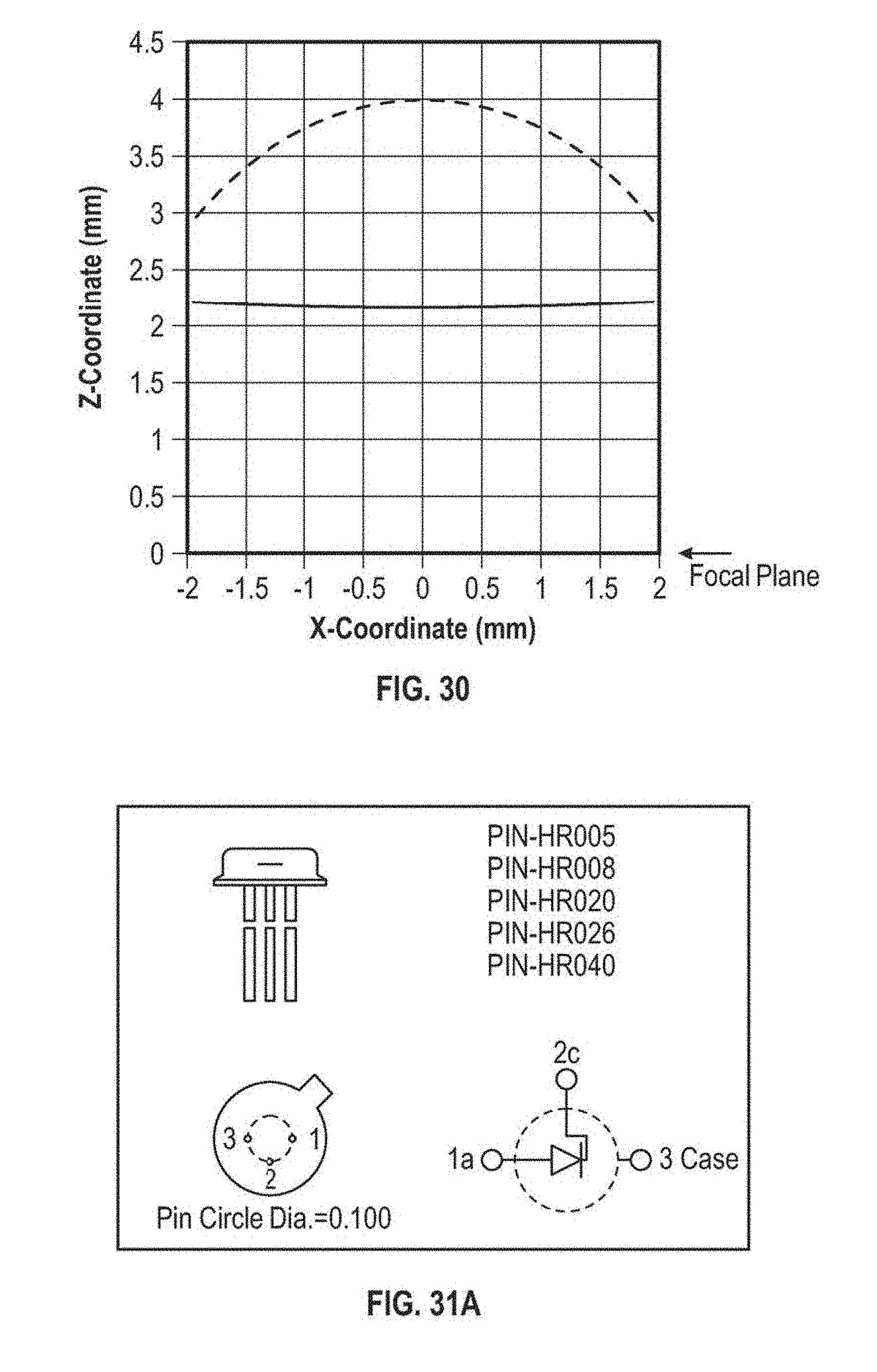

FIG. 30 depicts a diagonal cross-section (i.e., taken from one corner of the square entrance pupil to the corner on the opposite side) through an optical axis of an aspherical lenslet that may be used in an optical assembly.

FIG. 31a depicts a specification of an example detector.

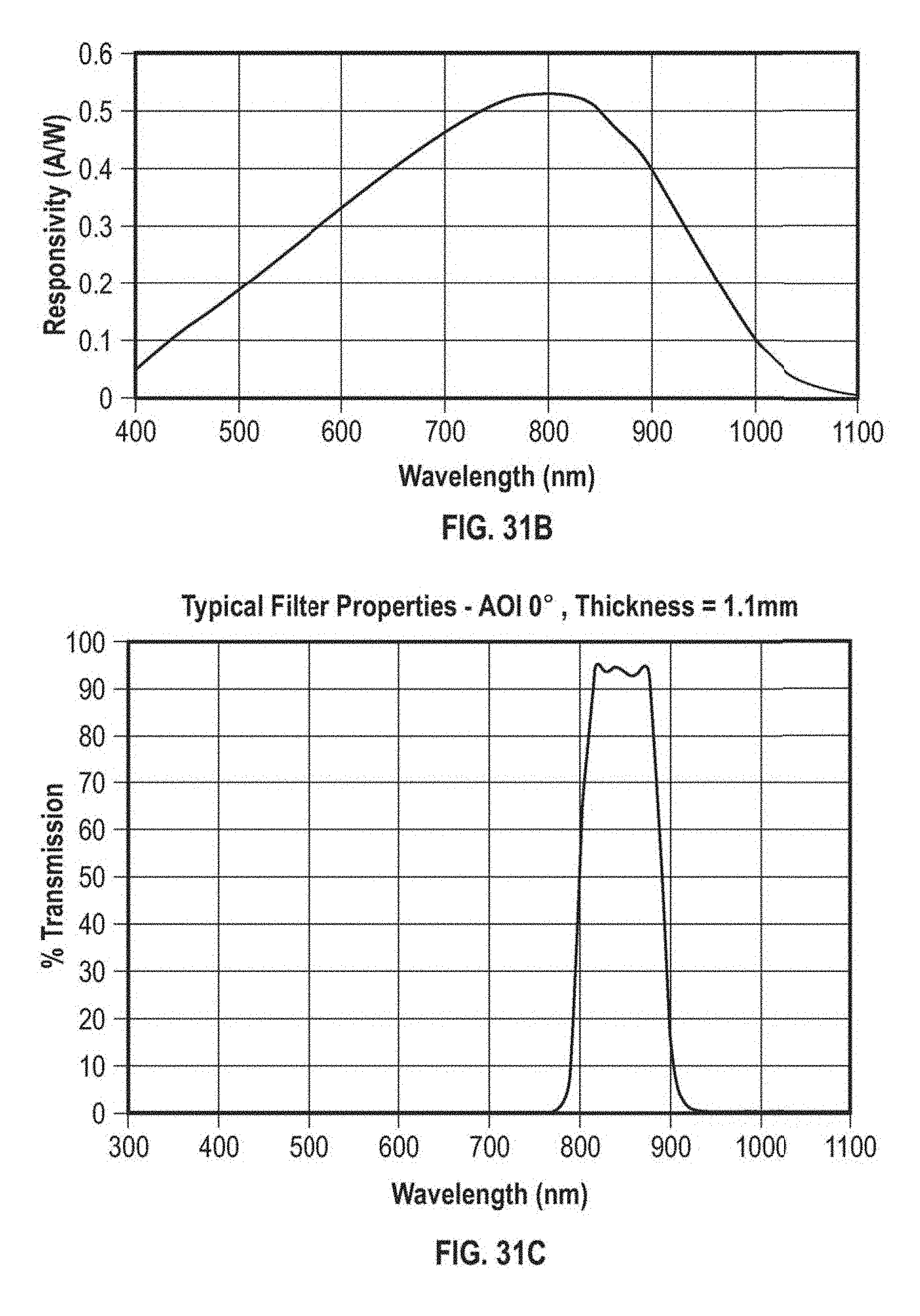

FIG. 31b depicts a plot of the PIN-HR008 detector's spectral response.

FIG. 31c is a plot of the spectral response of an example optical bandpass filter that may be used in conjunction with the PIN-HR0080 detector to reduce detector noise due to background radiation.

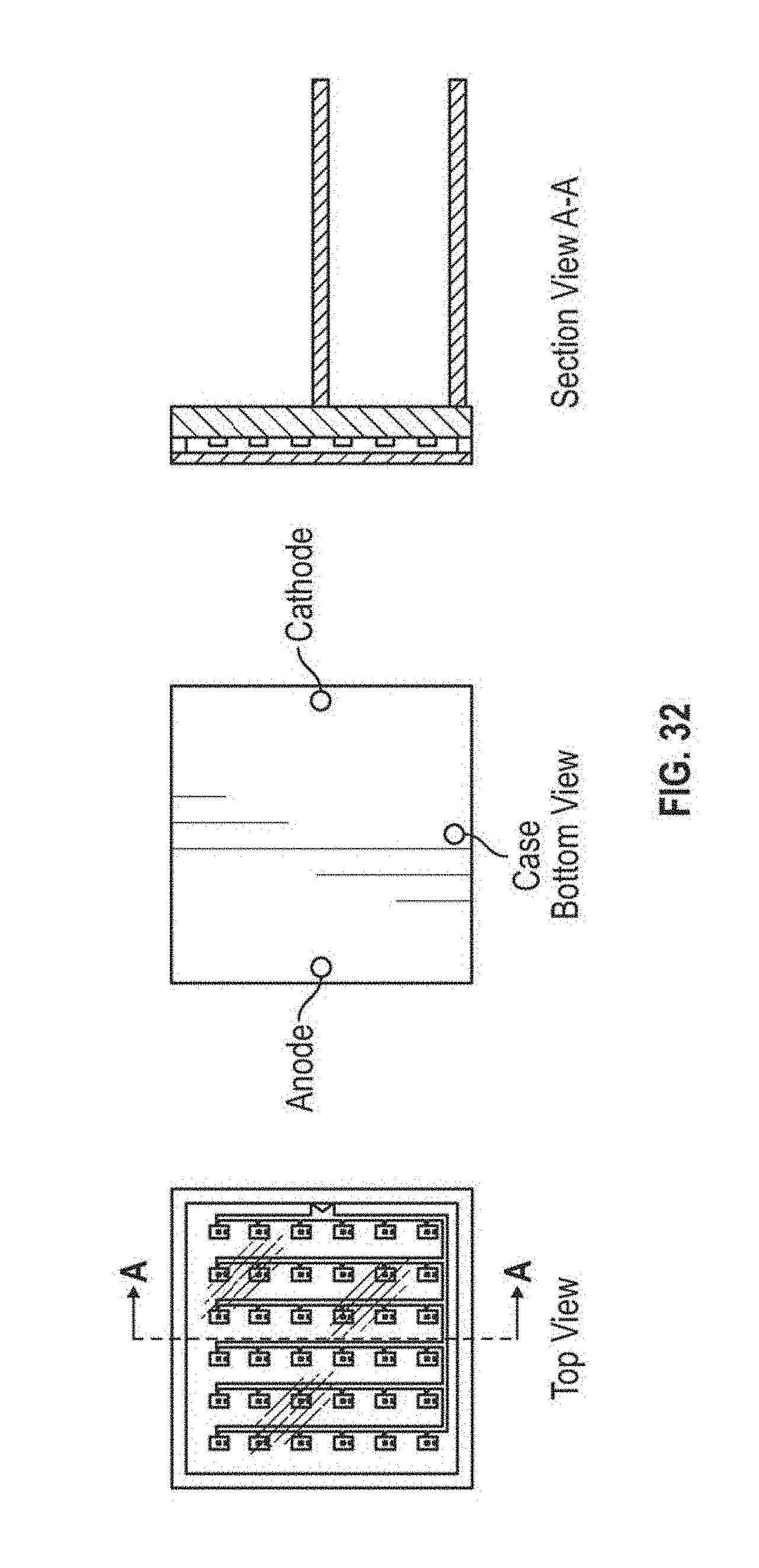

FIG. 32 is a depiction of a photodiode array using PIN-HR0080 detectors with dimensions in millimeters.

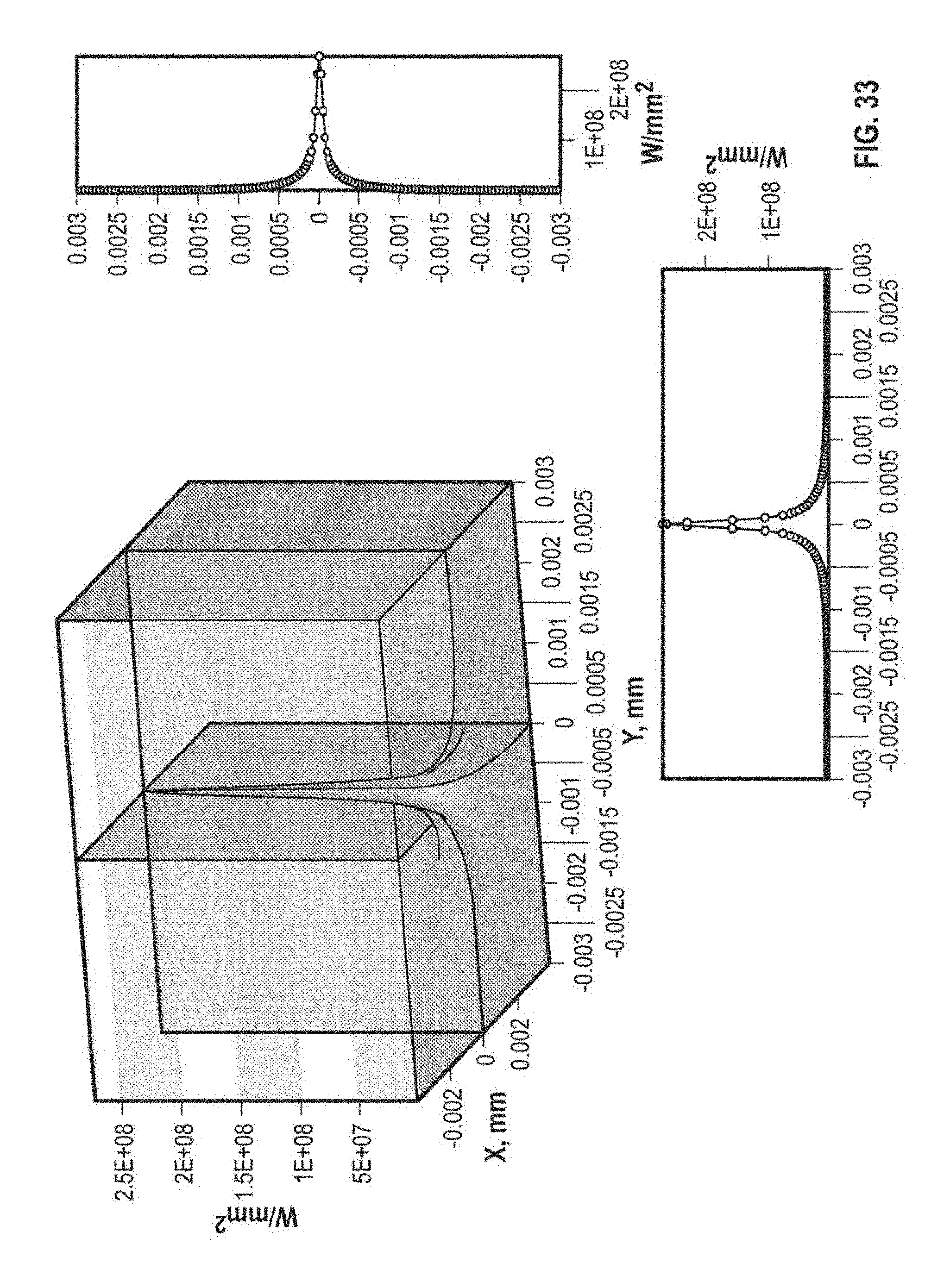

FIG. 33 depicts the irradiance distribution produced on a single detector (e.g., one of the detectors in the detector array of FIG. 32) of the OSR using the lenslet array of FIG. 29b as an OSR optic when the incident beam from an optical transmitter is centered on the FOV of the OSR.

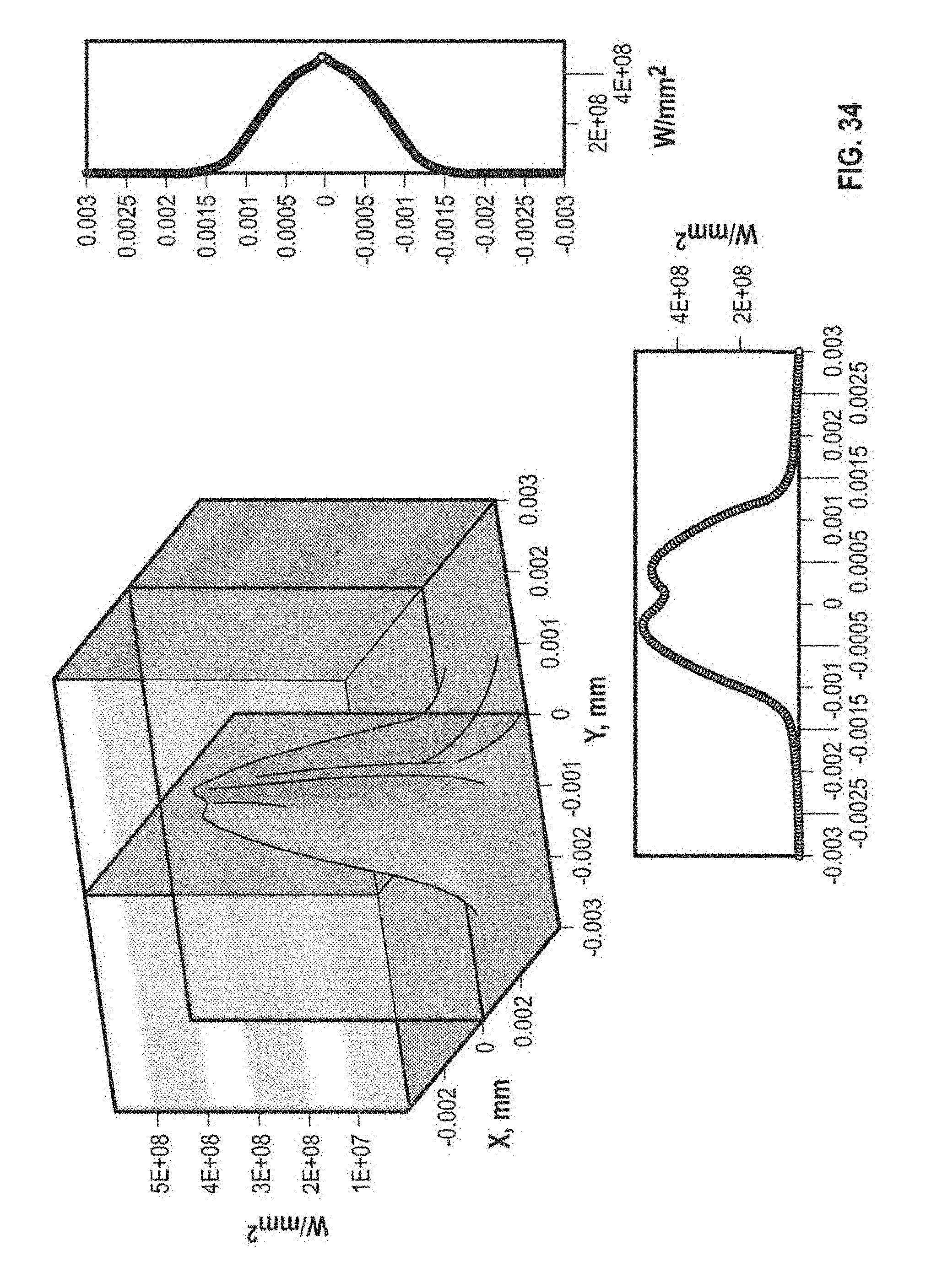

FIG. 34 depicts the irradiance distribution produced on a single detector when the transmitted beam is incident at an angle of 1.8° (i.e., half the width of the OSR's FOV) relative to the center of the FOV.

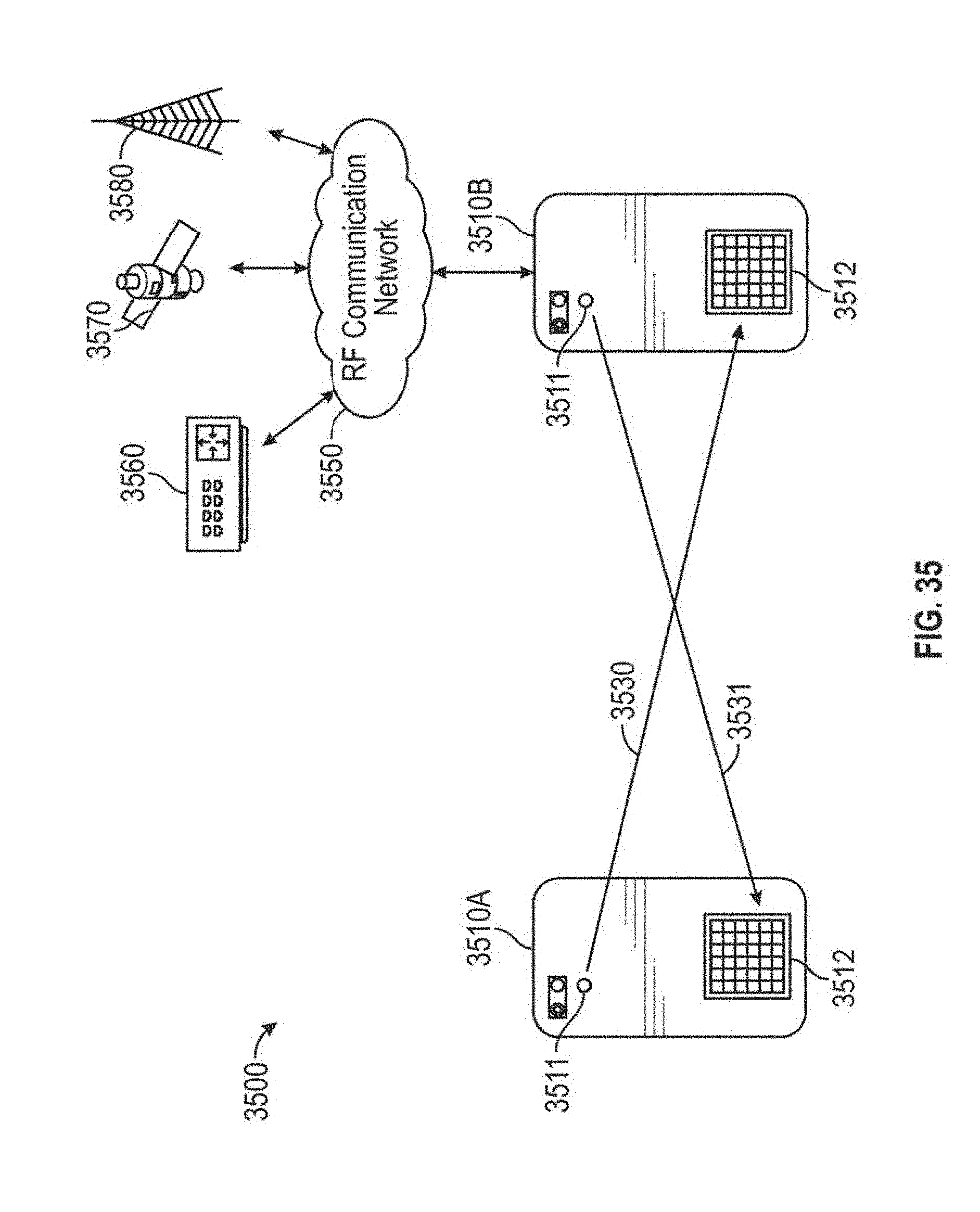

FIG. 35 illustrates an example ad-hoc optical narrowcasting network environment.

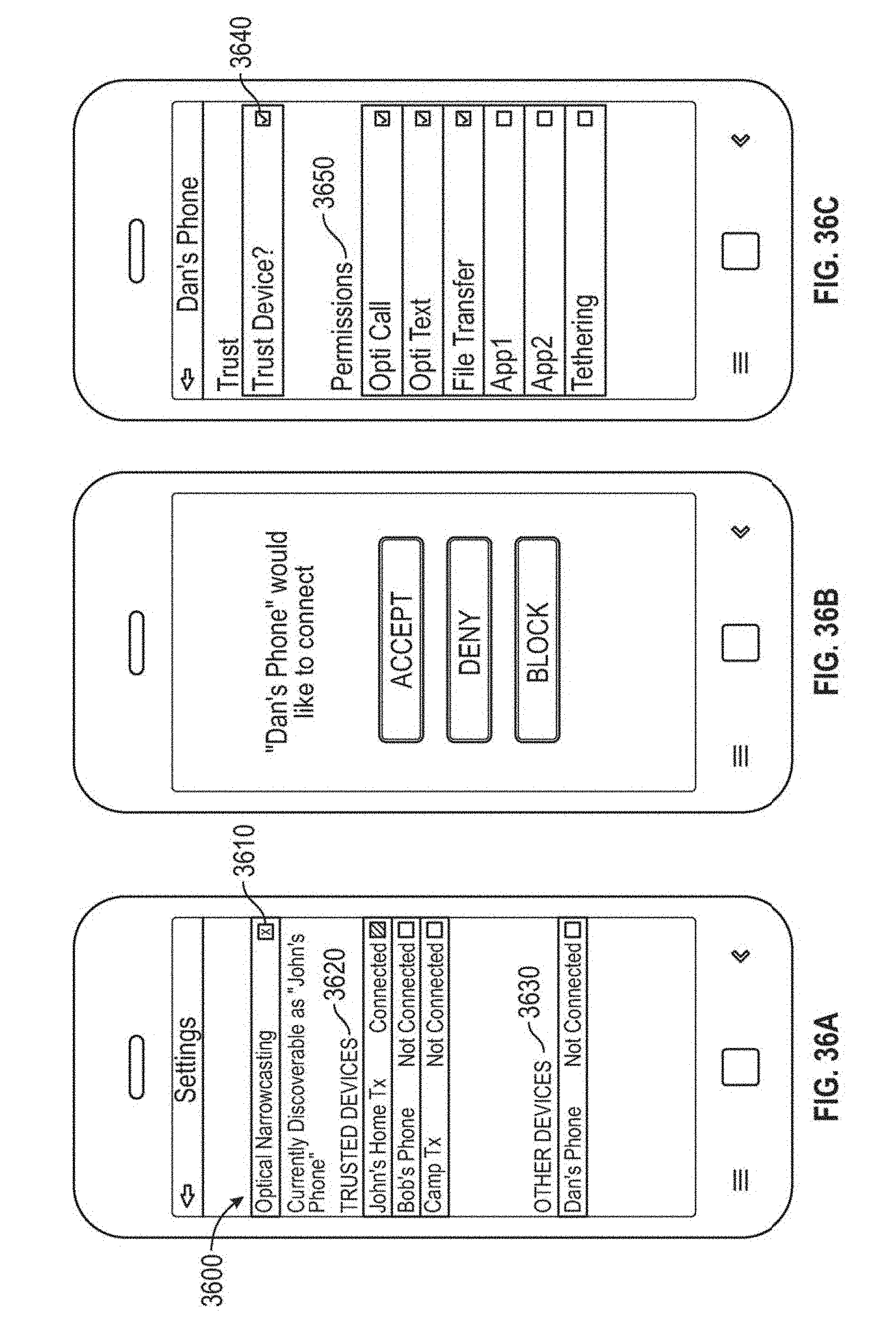

FIG. 36A illustrates an example graphical user interface for setting ad-hoc networking settings that may be implemented in embodiments.

FIG. 36B illustrates an example graphical user interface for setting ad-hoc networking settings that may be implemented in embodiments.

FIG. 36C illustrates an example graphical user interface for setting ad-hoc networking settings that may be implemented in embodiments.

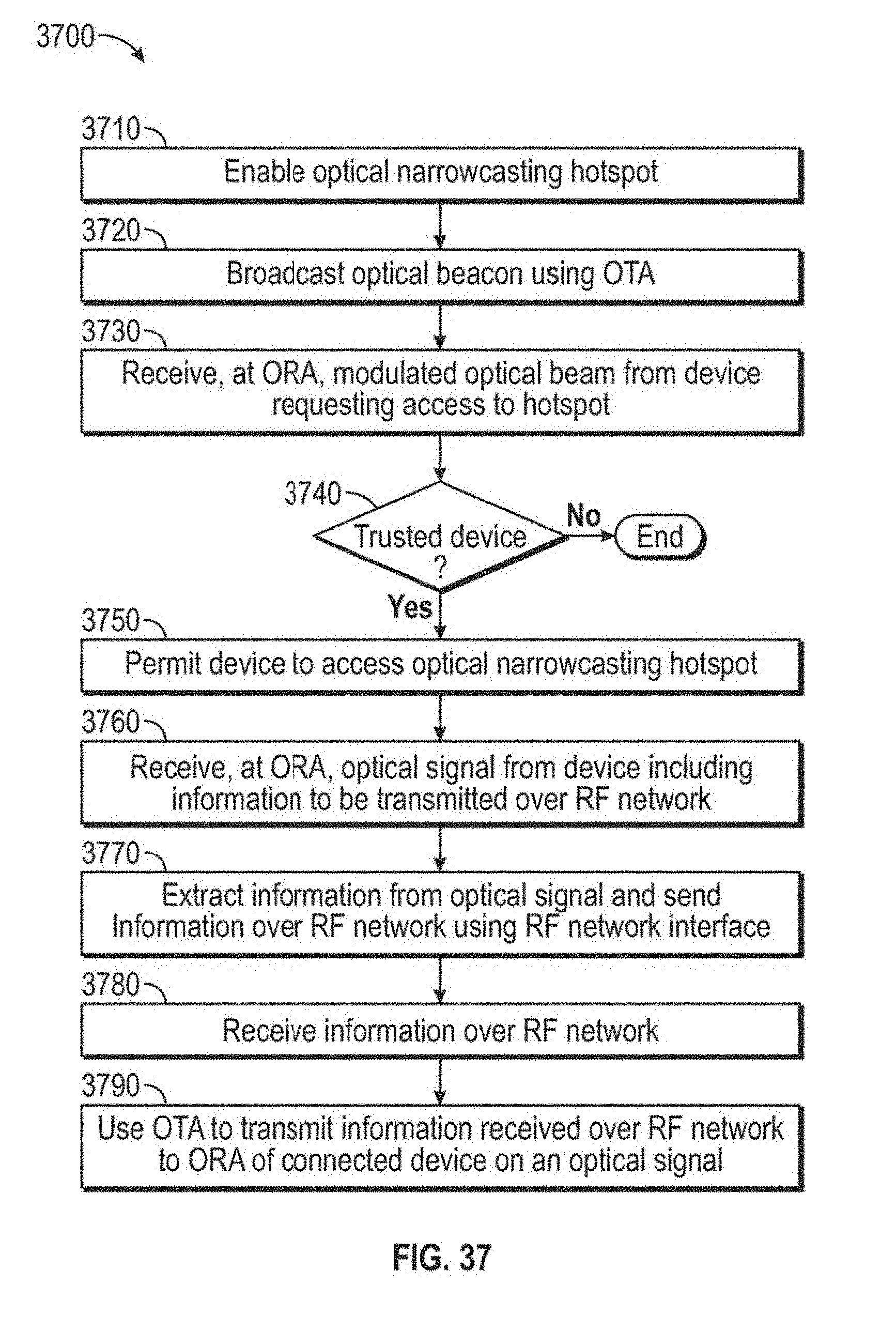

FIG. 37 is a flow diagram illustrating an example method that may be implemented by a device to create or extend an RF network using an optical narrowcasting ad hoc network.

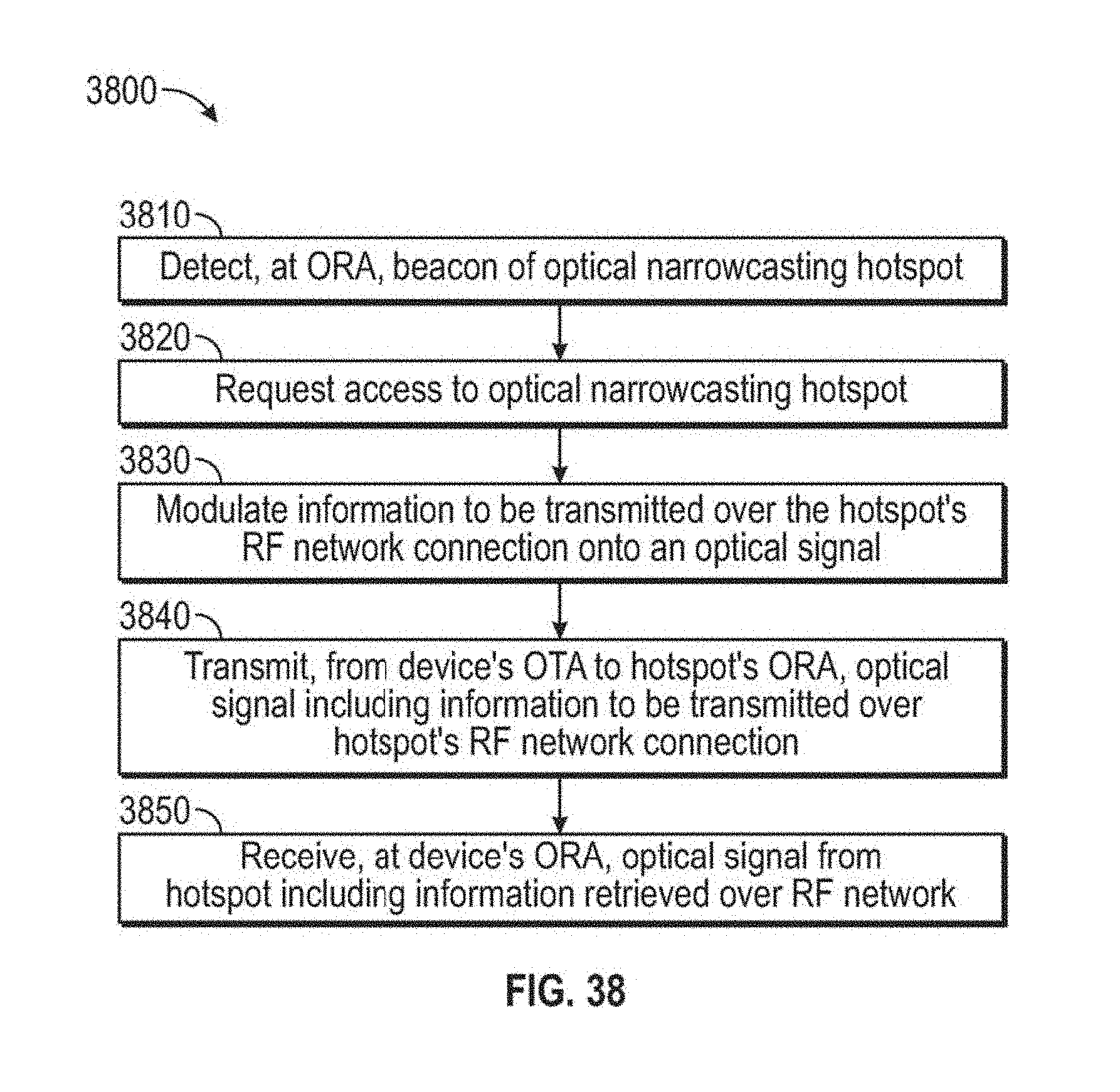

FIG. 38 is a flow diagram illustrating an example method that may be implemented by a device to access an RF network over an optical narrowcasting ad hoc network.

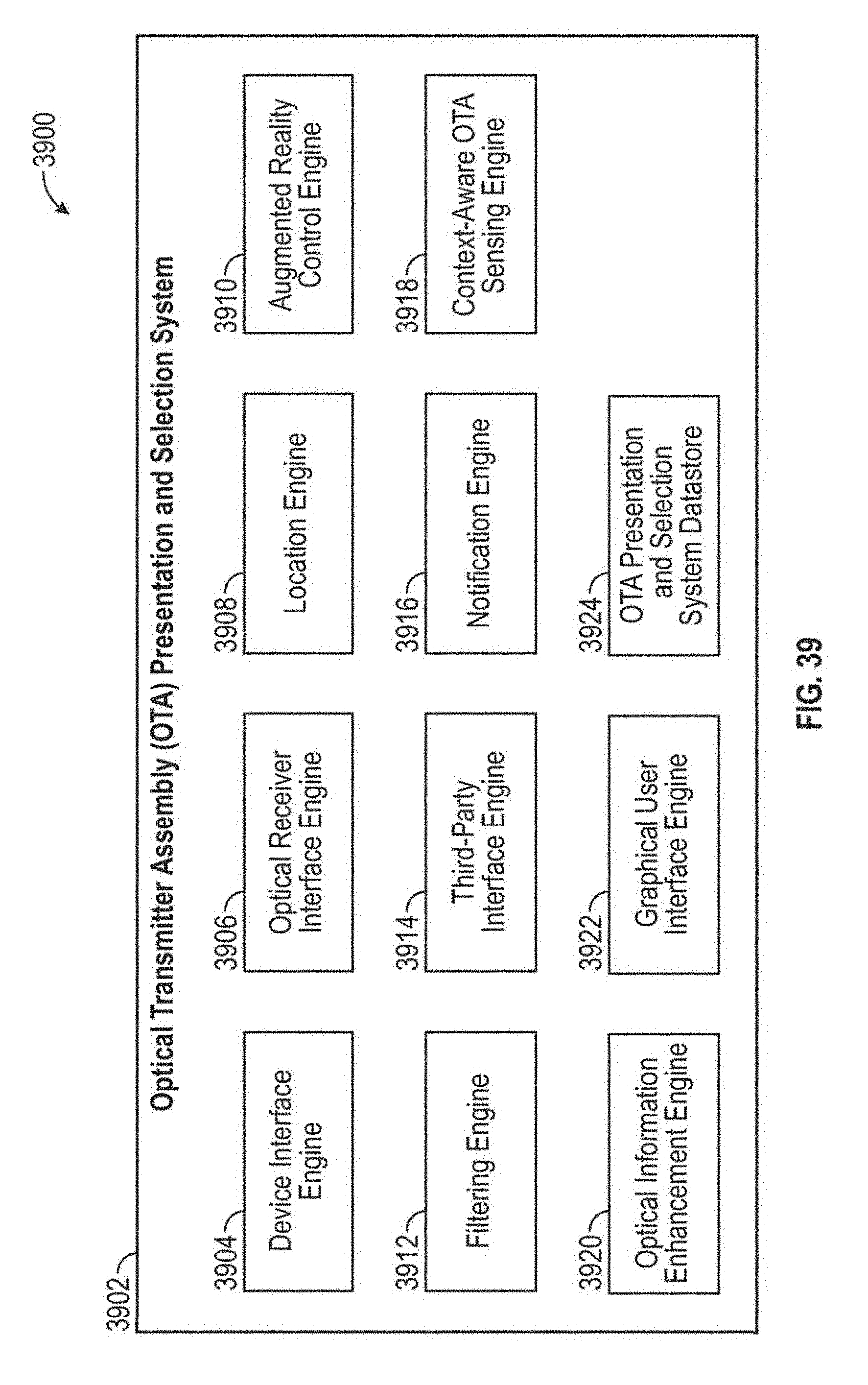

FIG. 39 depicts a block diagram of an example of an OTA presentation and selection system according to some embodiments.

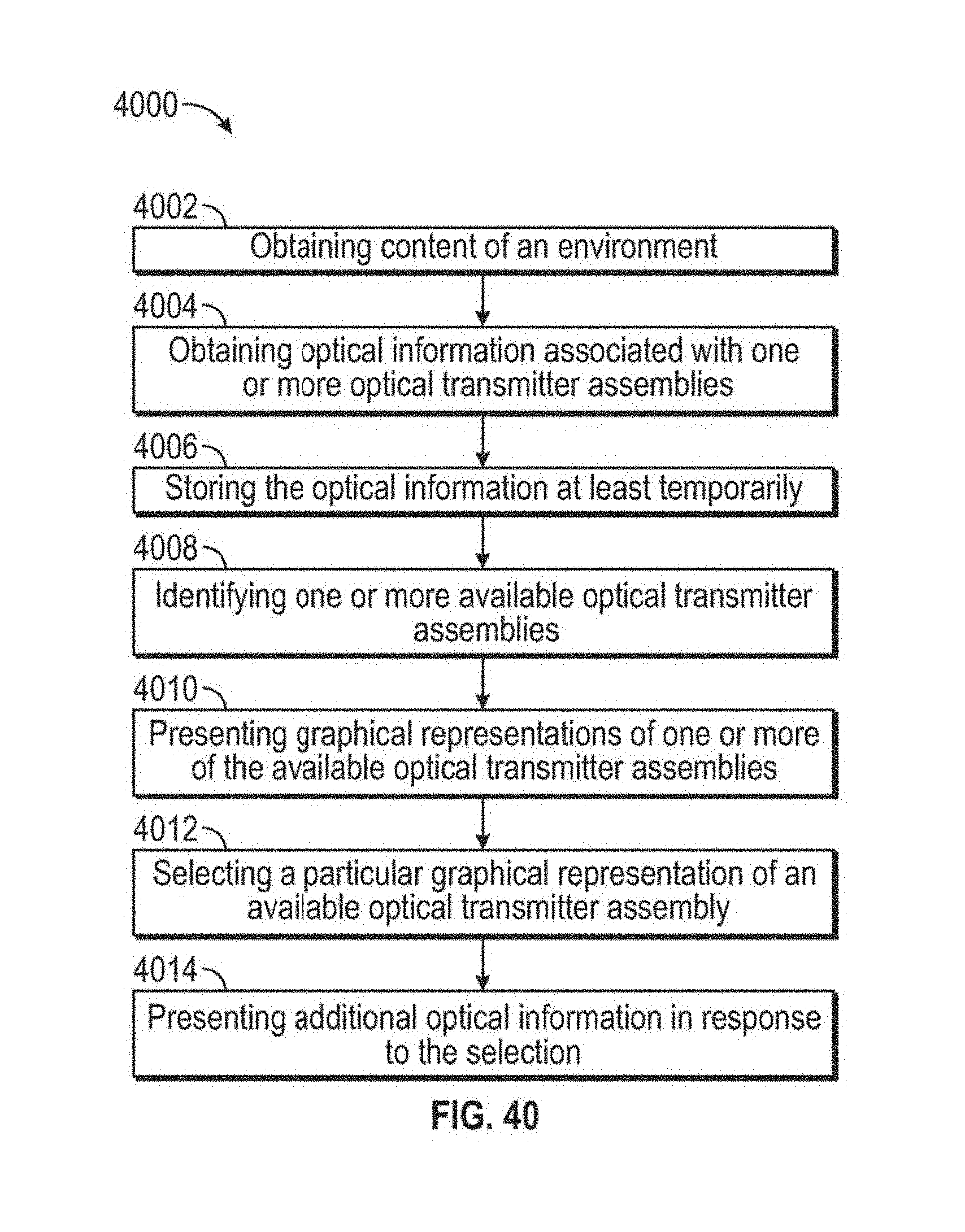

FIG. 40 depicts a flowchart of an example method for presenting graphical representations of OTAs according to some embodiments.

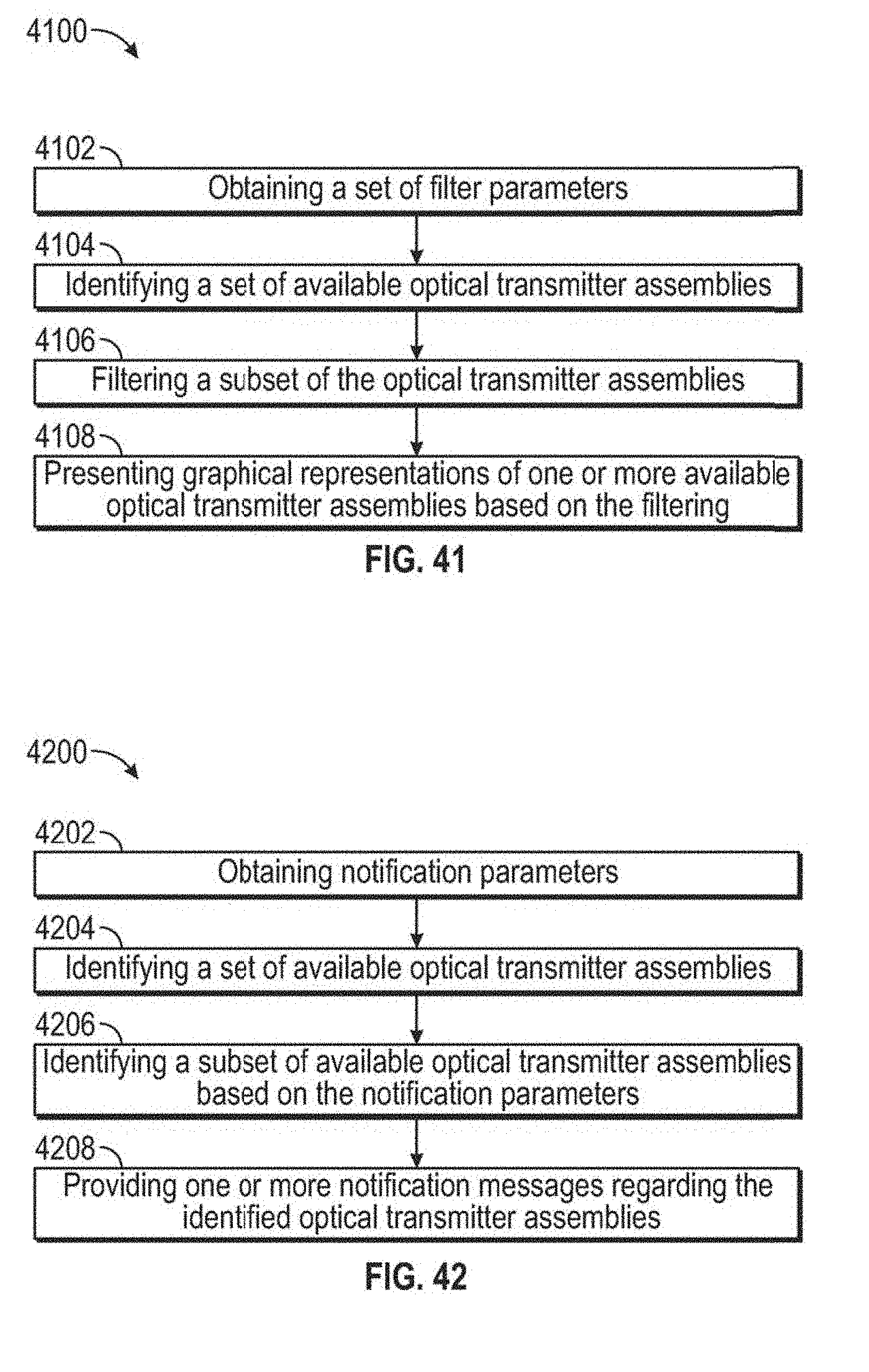

FIG. 41 depicts a flowchart of an example of a method for filtering optical transmitter assemblies or representations thereof according to some embodiments.

FIG. 42 depicts a flowchart of an example of a method for providing notifications according to some embodiments.

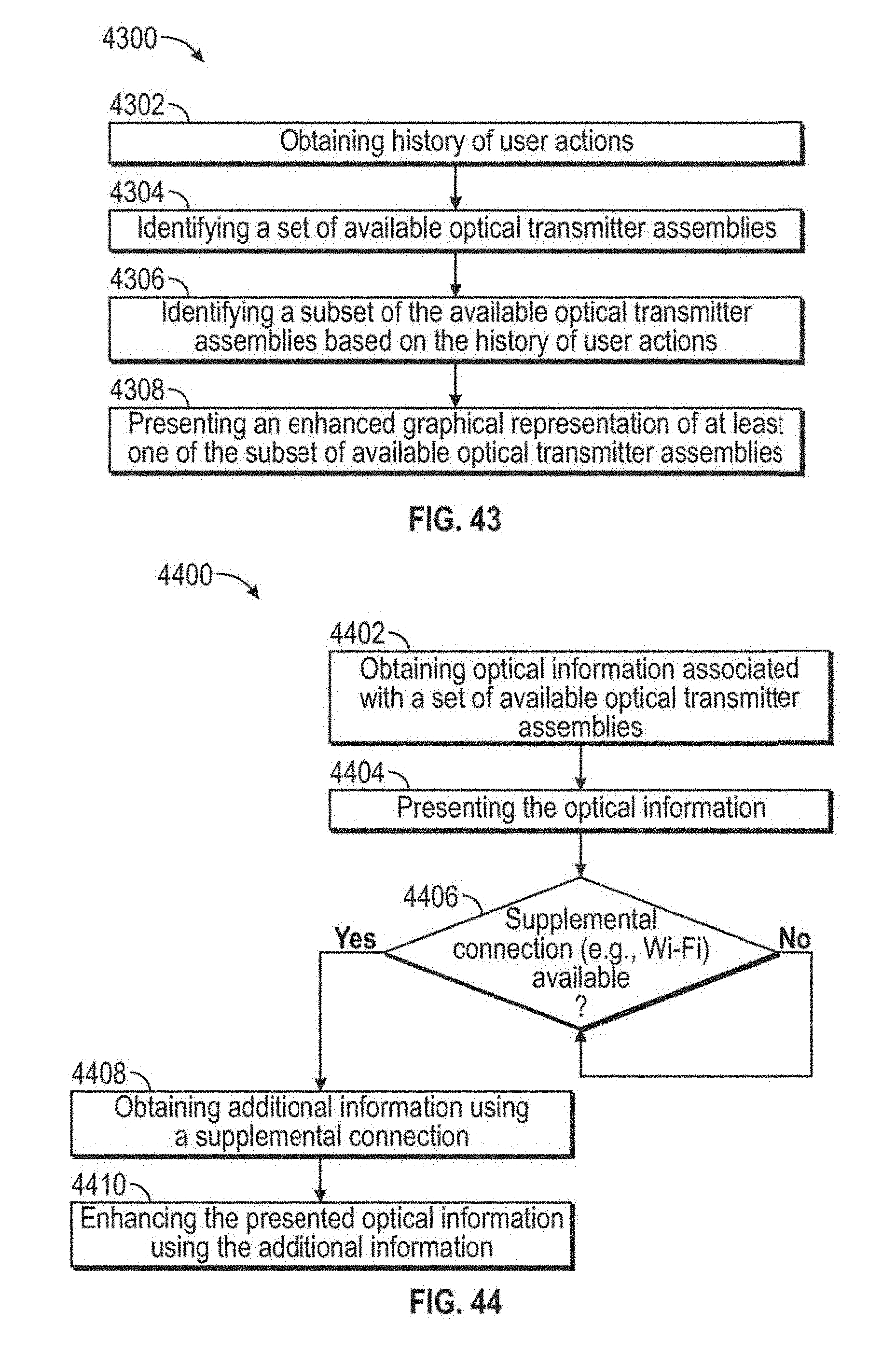

FIG. 43 depicts a flowchart of an example of a method for predicting one or more OTAs that may be of interest to a user according to some embodiments.

FIG. 44 depicts a flowchart of an example of a method for enhancing signal information using a supplemental communication connection according to some embodiments.

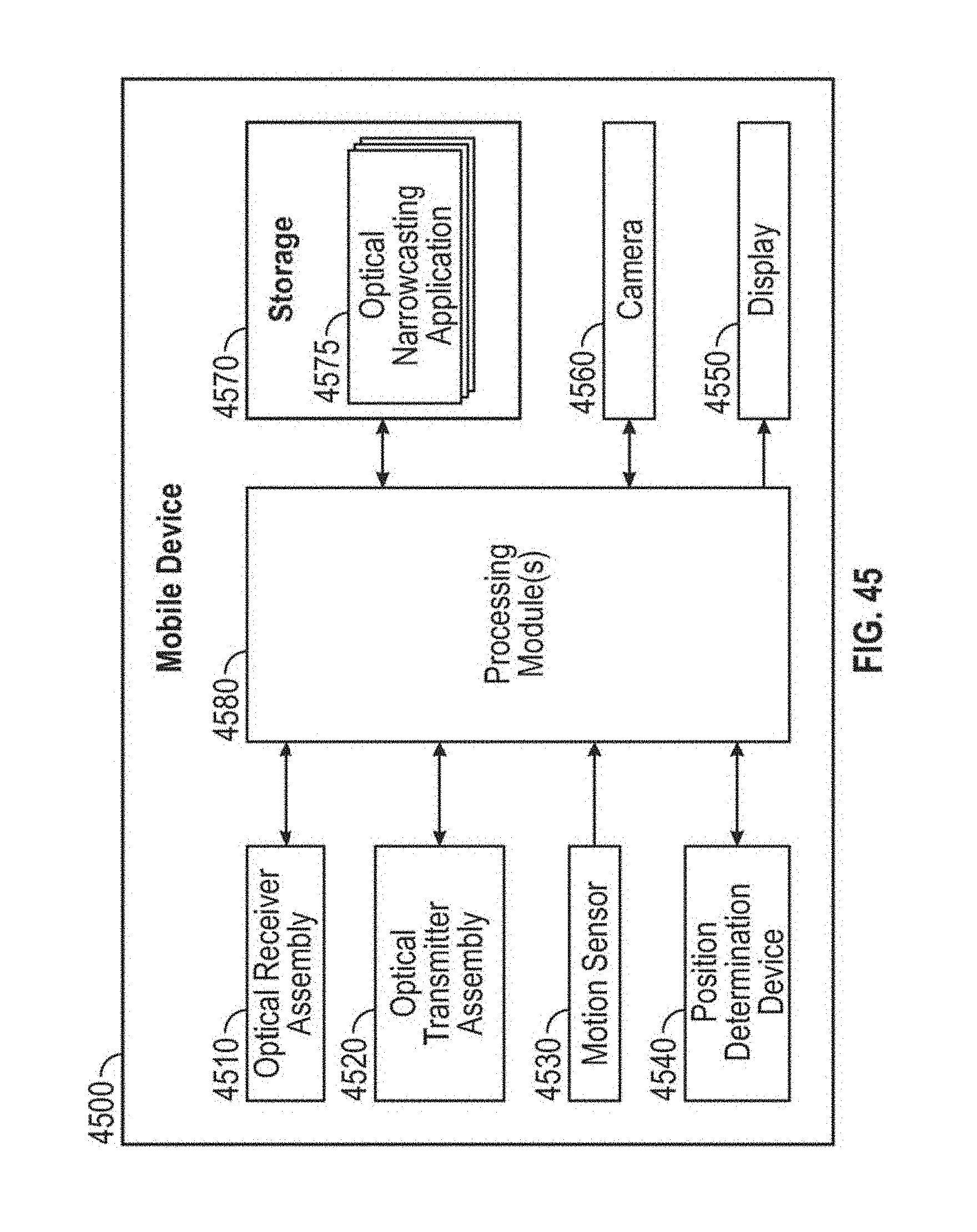

FIG. 45 depicts a block diagram of an example optical narrowcasting mobile device configured to provide GUIs for optical narrowcasting in accordance with embodiments.

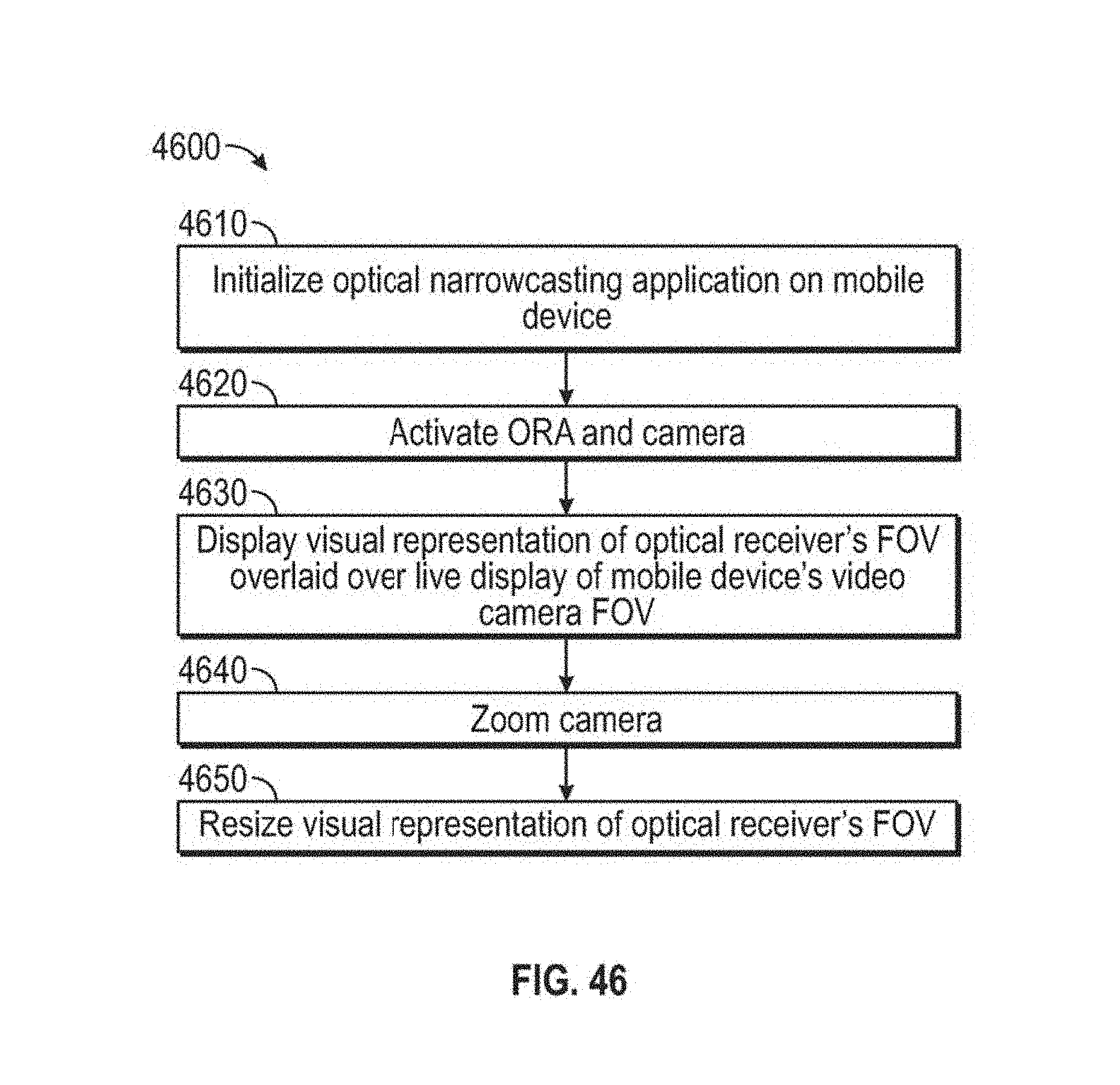

FIG. 46 is a flow diagram illustrating an example method 4600 of rendering an augmented reality display of an optical receiver's field of view in accordance with embodiments.

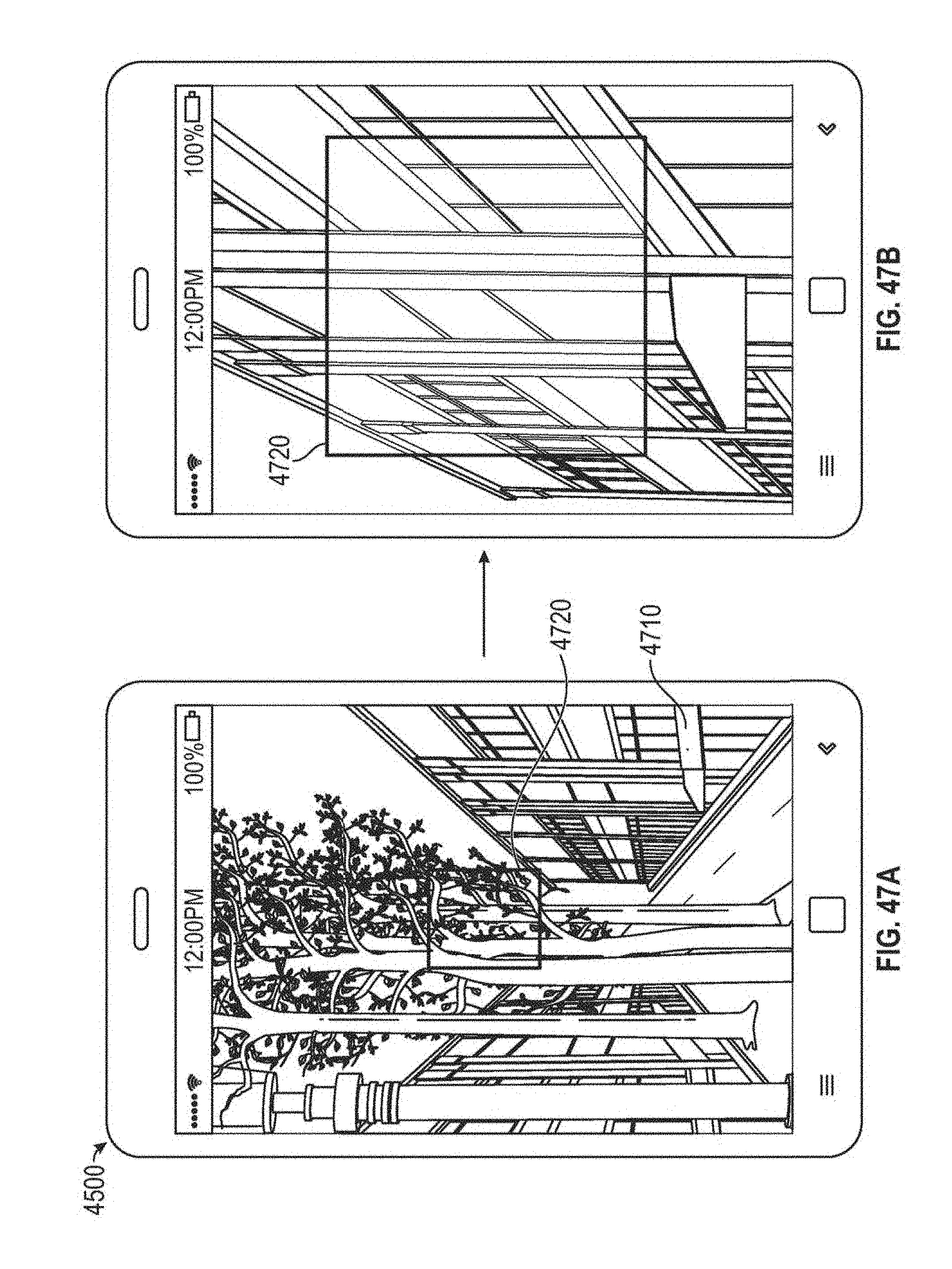

FIG. 47A illustrates an example display of an augmented reality graphical user interface showing a field of view augmented reality object.

FIG. 47B illustrates an example display of the augmented reality graphical user interface of FIG. 47A showing the field of view augmented reality object after zooming a camera.

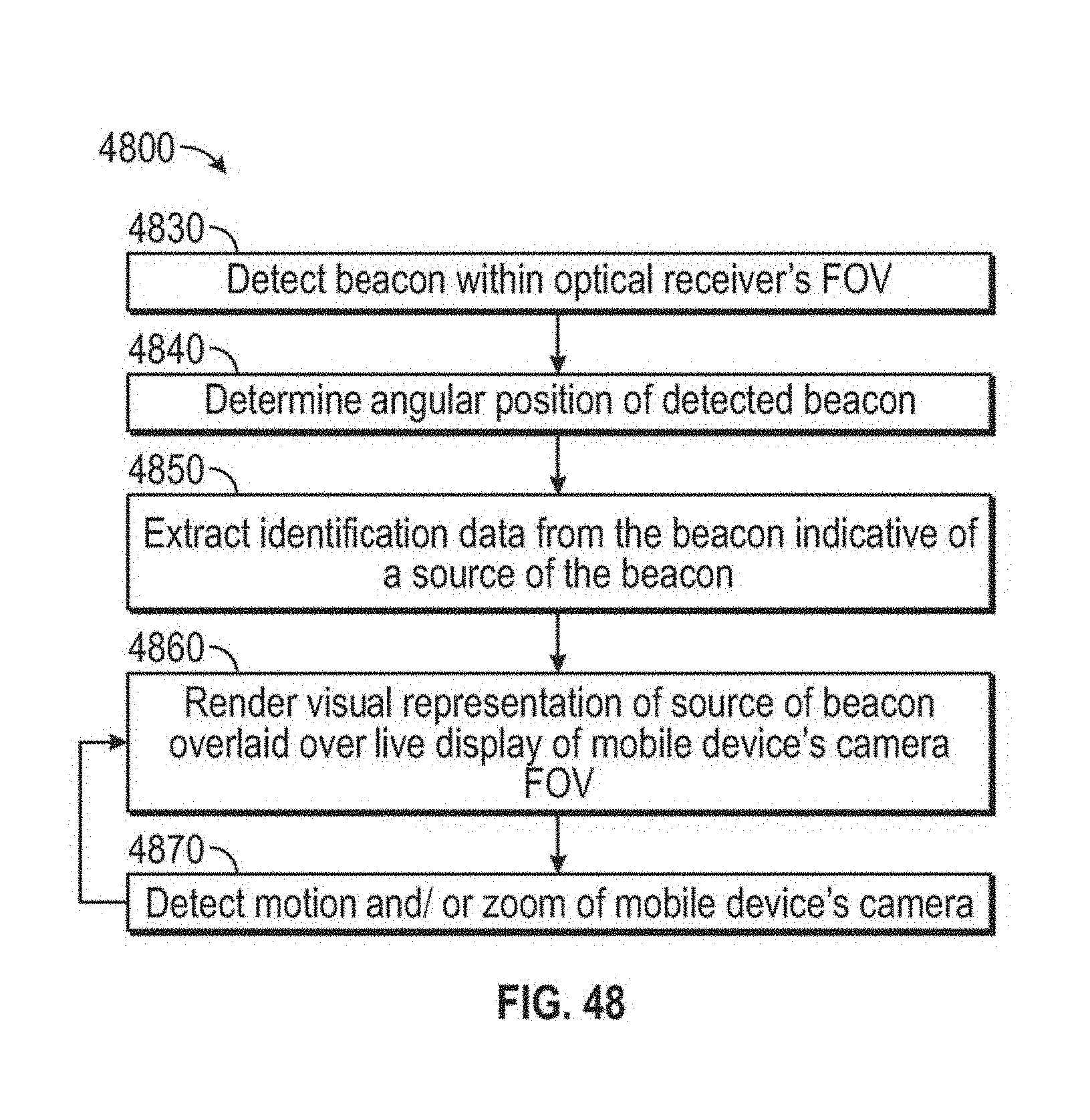

FIG. 48 is a flow diagram illustrating an example method of rendering an augmented reality display of detected optical transmitter assemblies or sources of optical transmitter assemblies in accordance with embodiments.

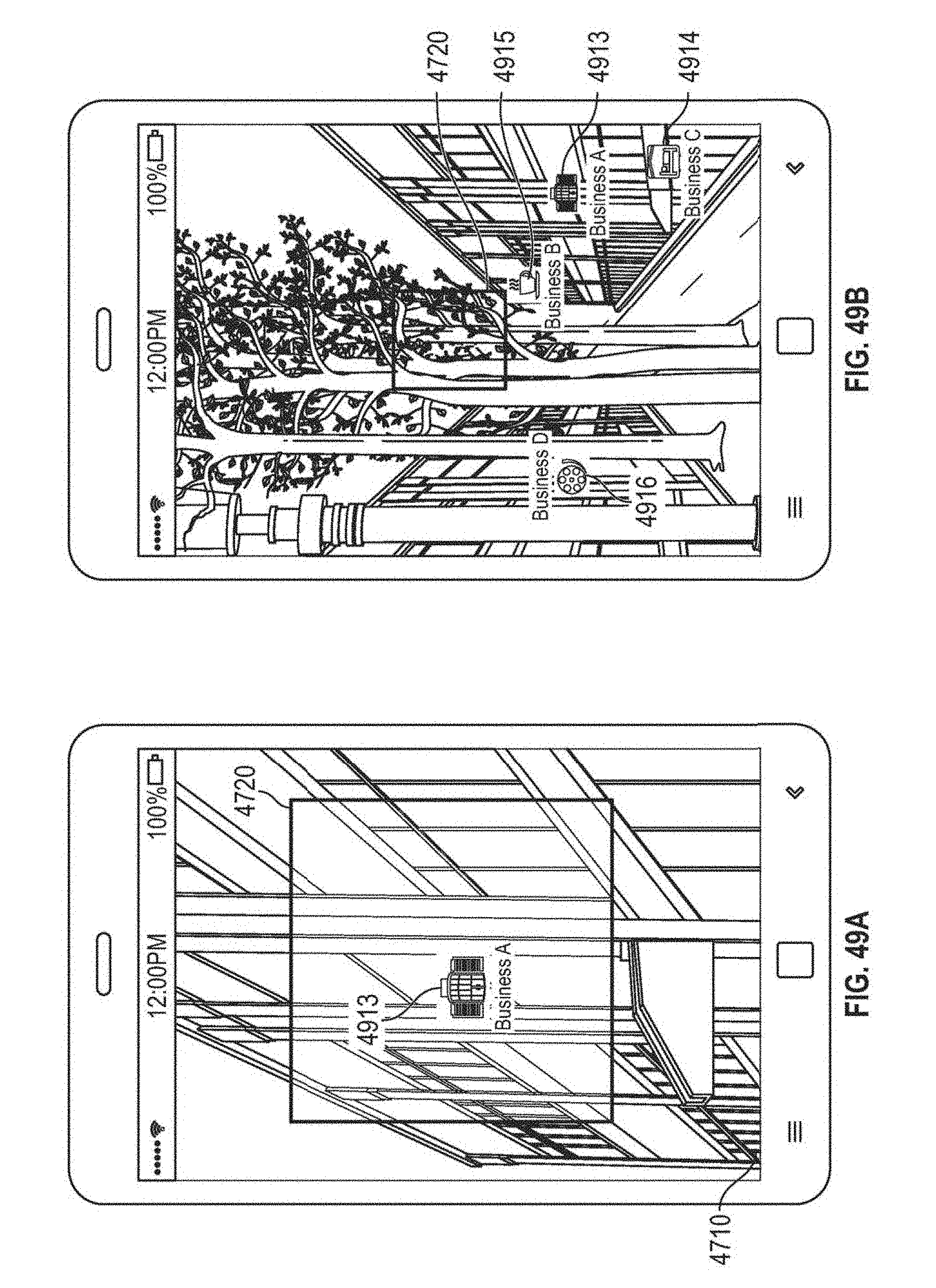

FIG. 49A illustrates an example display of an augmented reality graphical user interface displaying an icon associated with a business transmitting a beacon that was detected by an optical receiver assembly of a mobile device.

FIG. 49B illustrates an example display of an augmented reality graphical user interface displaying a plurality of icons associated with corresponding optical transmitter assemblies.

FIG. 50A is a flow diagram illustrating an example graphical user interface method that may be implemented by a mobile device to extract descriptive data from detected optical transmitter assemblies in accordance with embodiments.

FIG. 50B illustrates an example graphical user interface displaying descriptive data extracted from an optical signal received from an optical transmitter assembly.

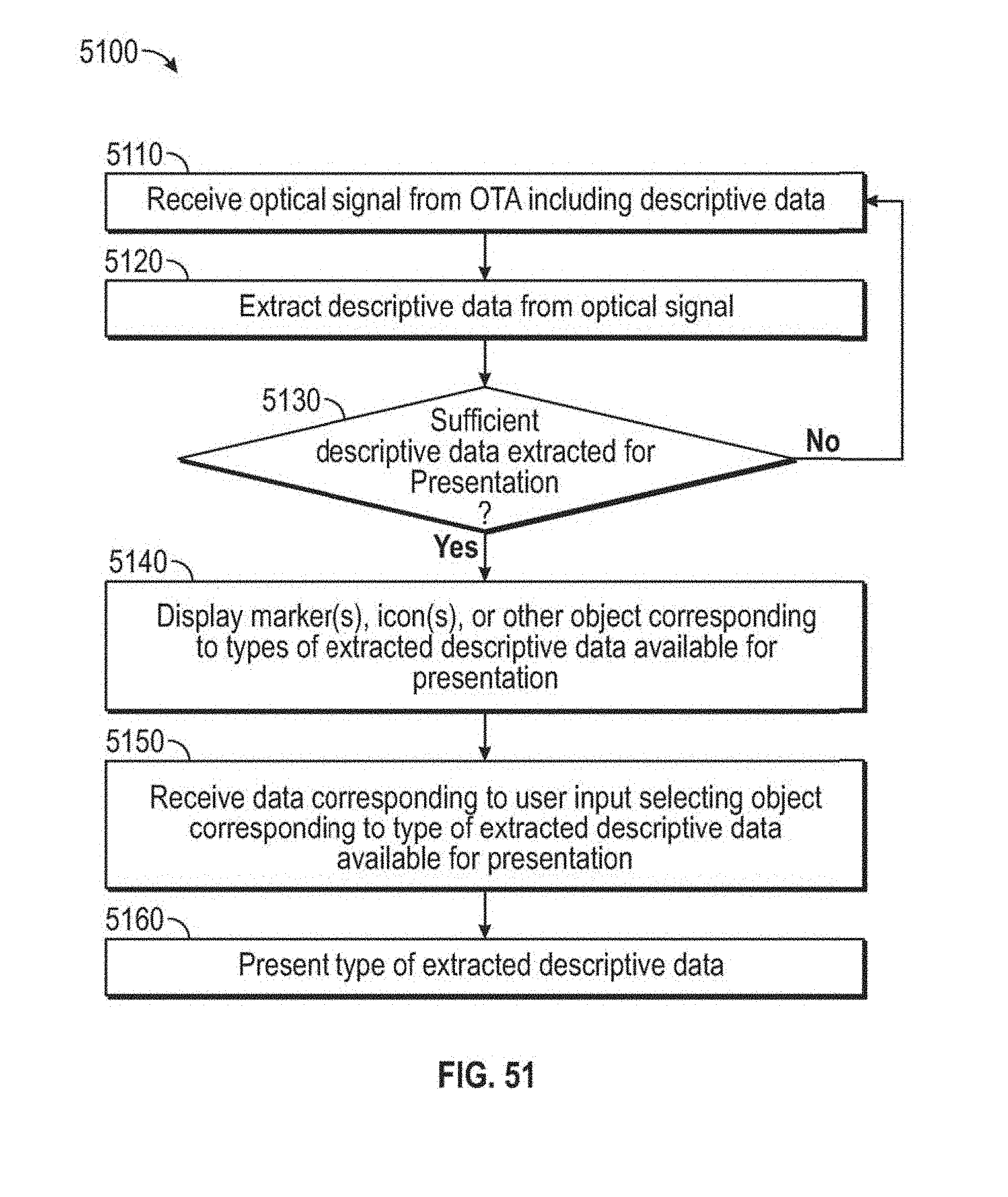

FIG. 51 is a flow diagram illustrating an example graphical user interface method of dynamically presenting descriptive data extracted from an optical signal transmitted by an optical transmitter assembly.

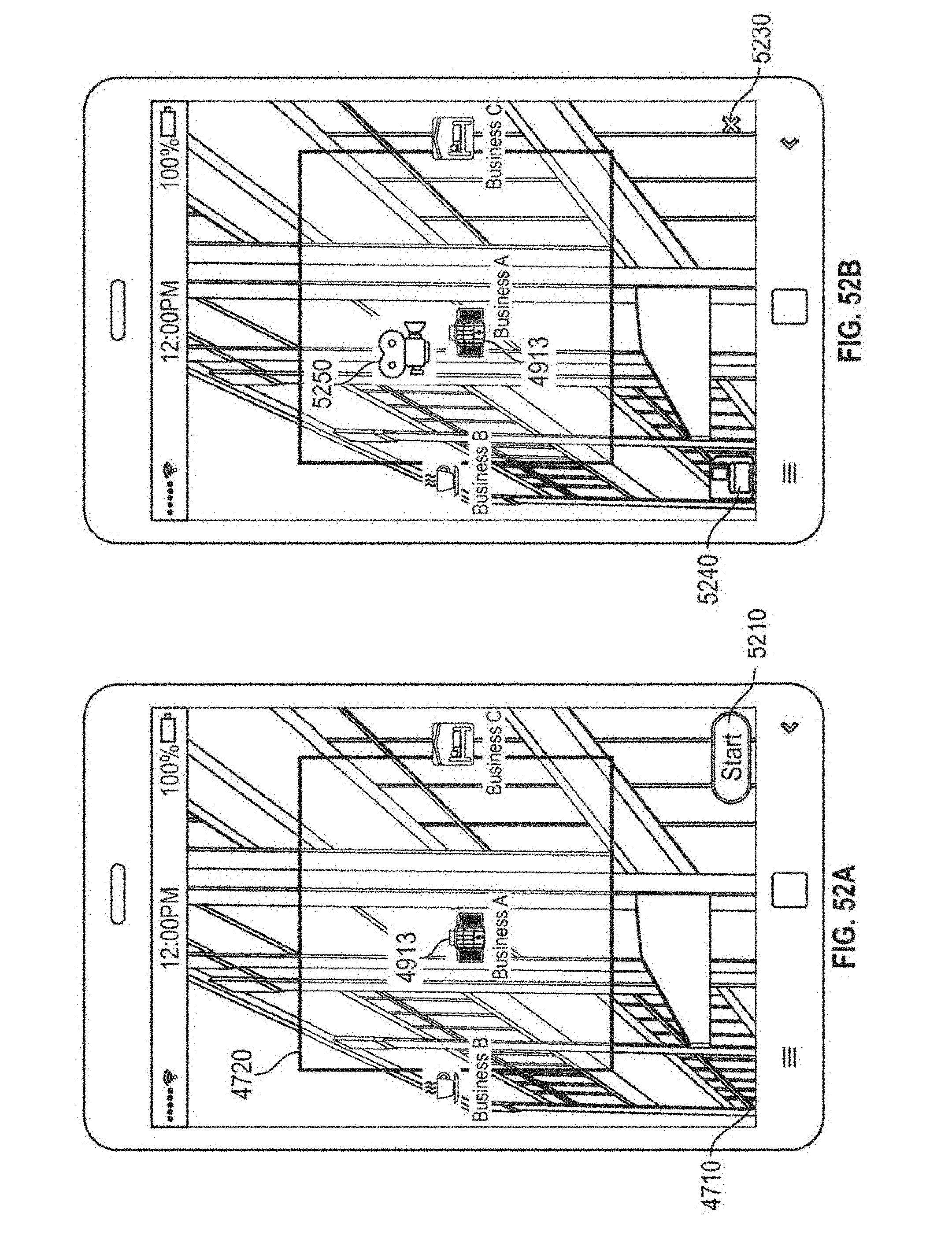

FIG. 52A illustrates an example display of a graphical user interface for retrieving optical signal information transmitted by an optical transmitter assembly.

FIG. 52B illustrates an example display of a graphical user interface for retrieving optical signal information transmitted by an optical transmitter assembly.

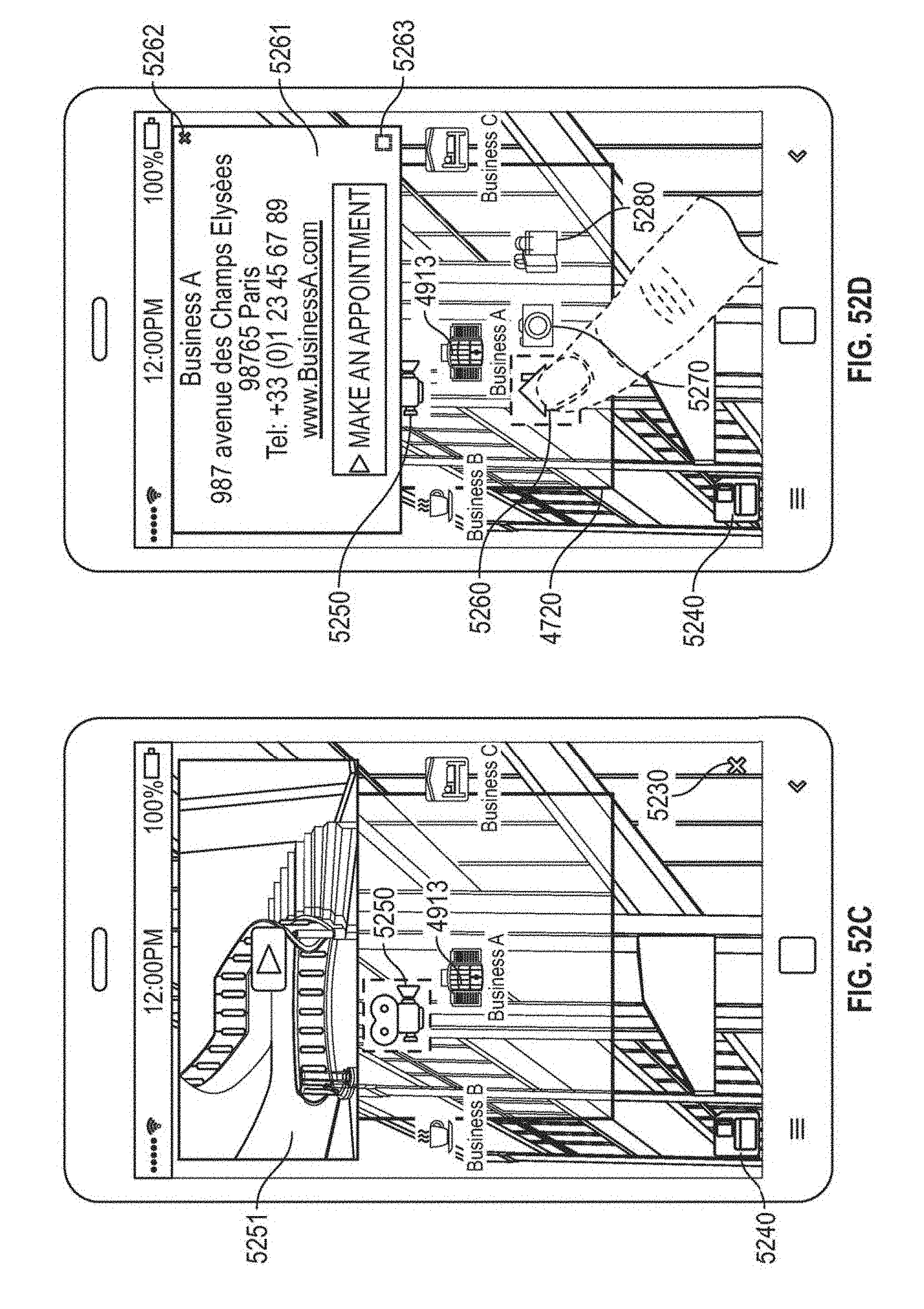

FIG. 52C illustrates an example display of a graphical user interface after retrieving optical signal information including a video.

FIG. 52D illustrates an example display of a graphical user interface after extracting all optical signal information received from an optical transmitter assembly.

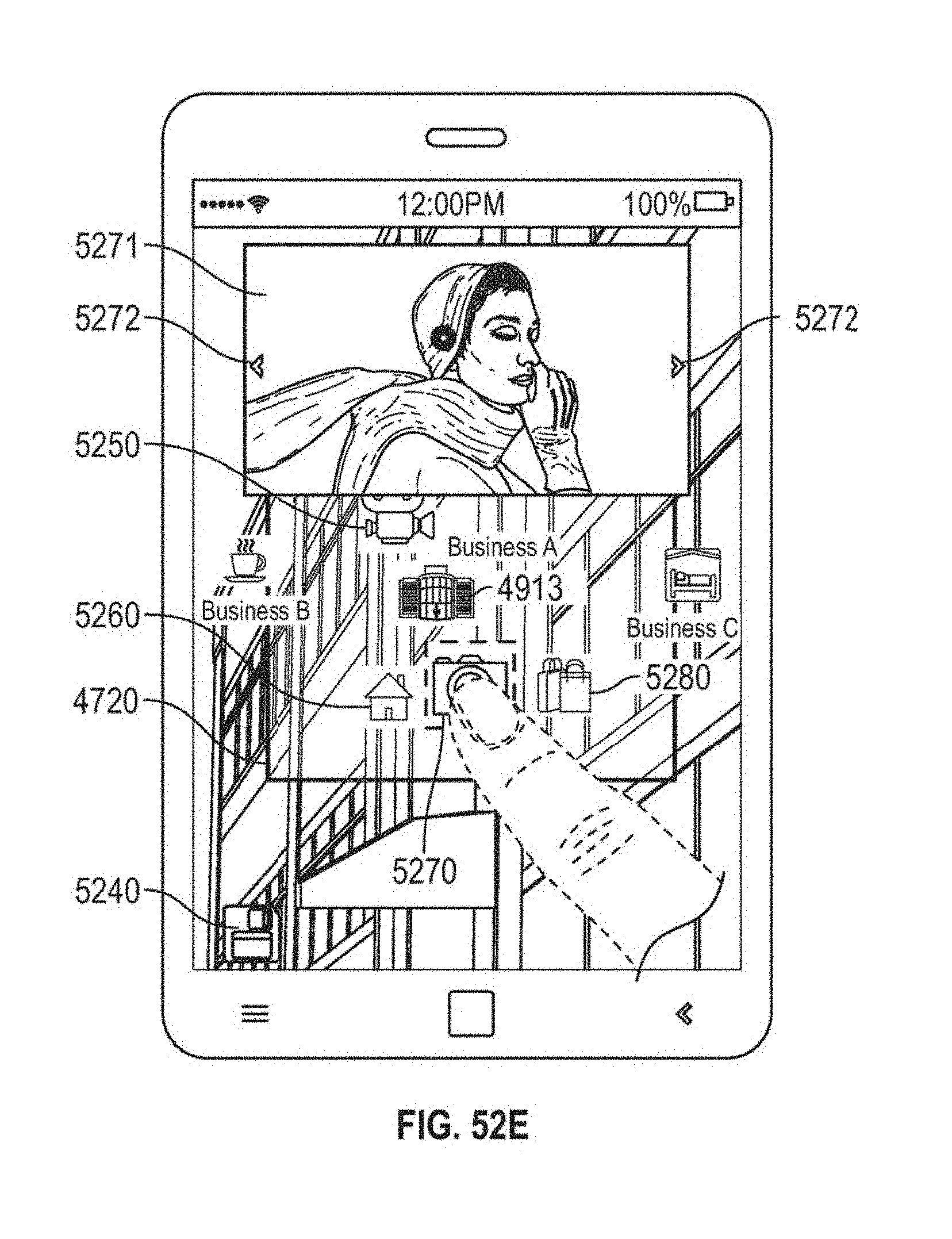

FIG. 52E illustrates an example display of a graphical user interface after user input selecting a photo-gallery icon displayed by the graphical user interface of FIG. 52D.

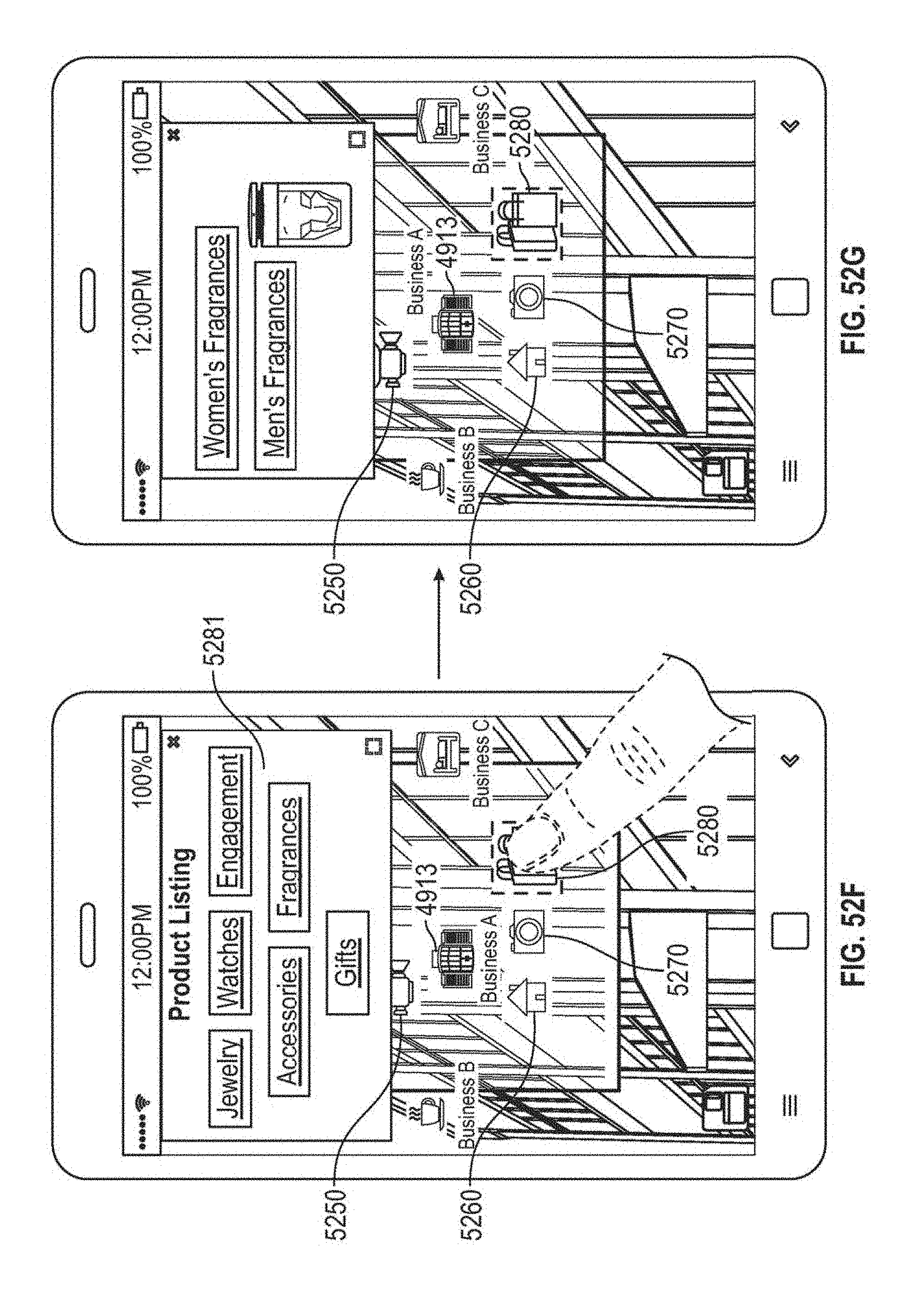

FIG. 52F illustrates an example display of a graphical user interface after user input selecting a product-listing icon displayed by the graphical user interface of FIG. 52D.

FIG. 52G illustrates an example display of a graphical user interface after user input selecting a fragrance product category shown in FIG. 52F.

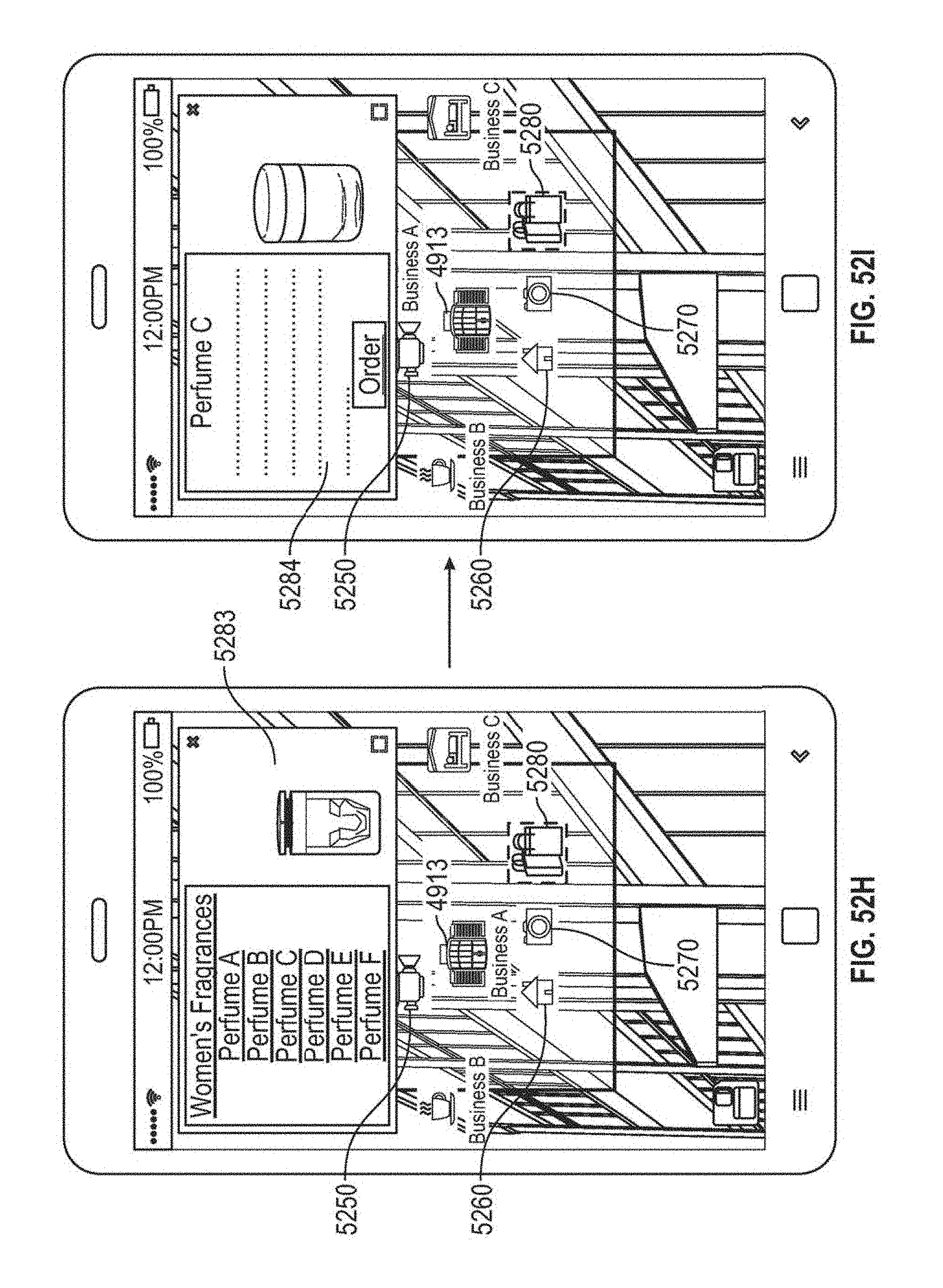

FIG. 52H illustrates an example display of a graphical user interface after user input selecting a women's fragrances product category shown in FIG. 52G.

FIG. 52I illustrates an example display of a graphical user interface after user input selecting a particular fragrance shown in FIG. 52H.

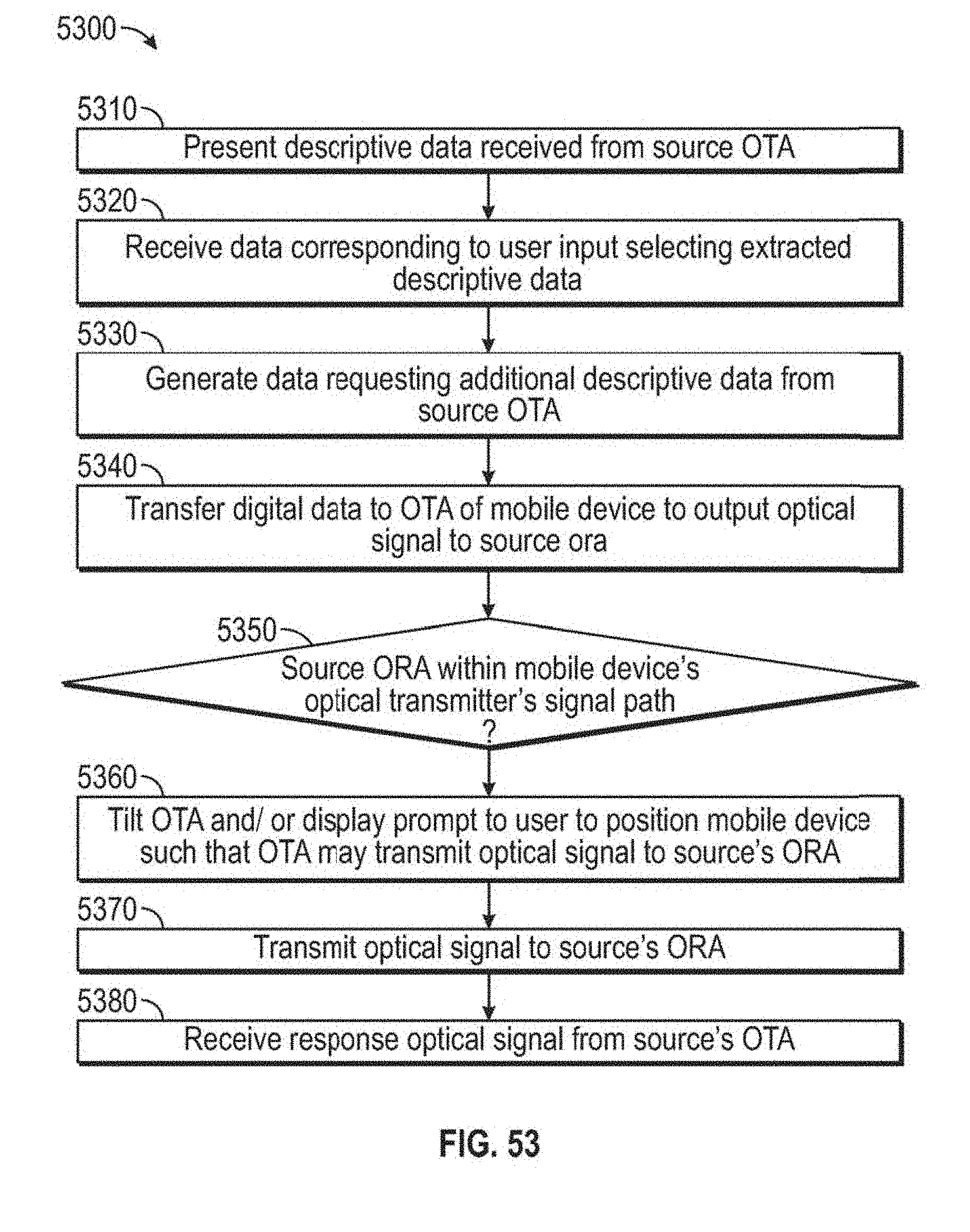

FIG. 53 is a flow diagram illustrating an example method of communicating with an entity over an optical narrowcasting network in response to user input received at a graphical user interface that presents optical signal information received from the entity.

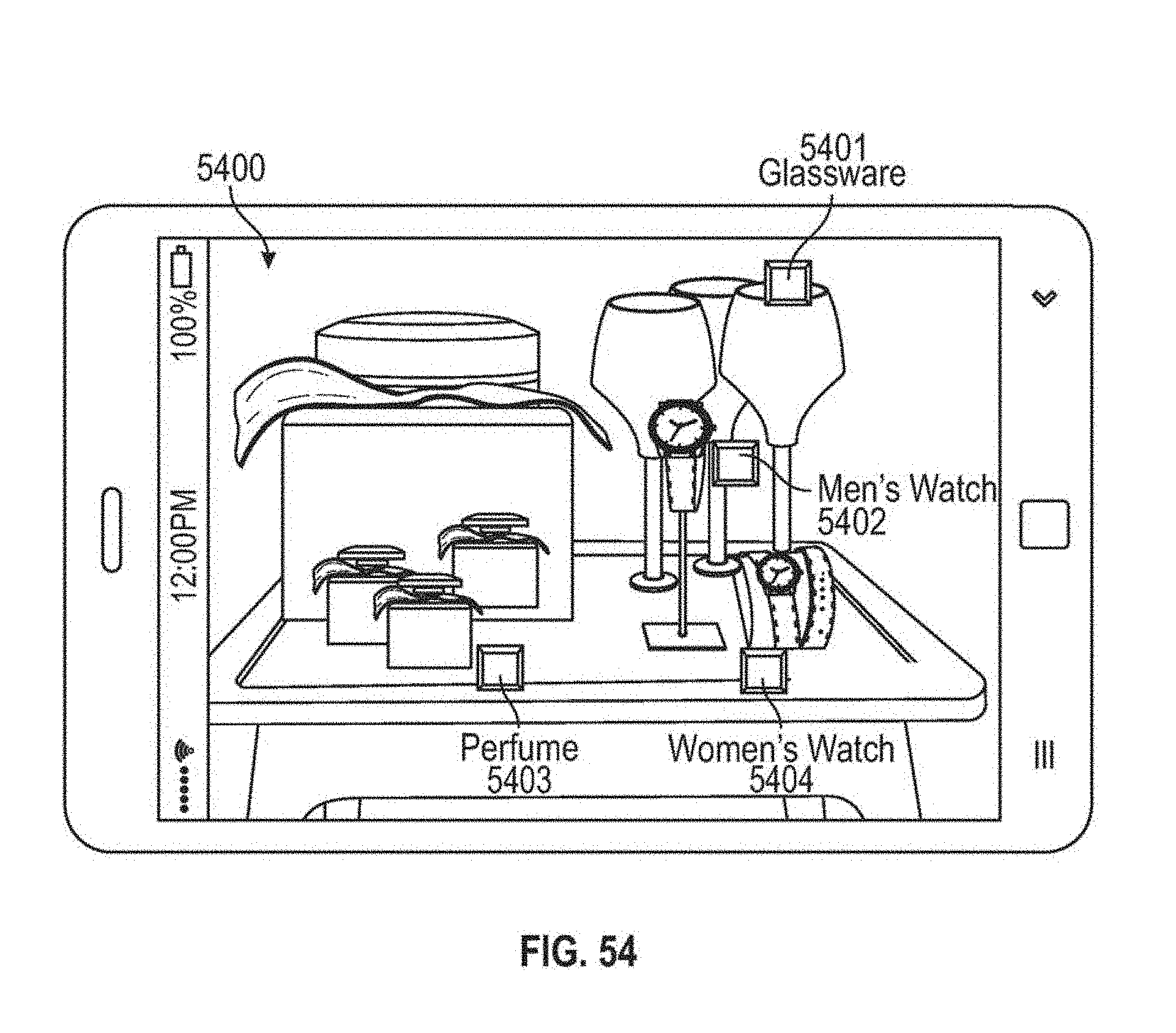

FIG. 54 illustrates an example augmented reality optical narrowcasting graphical user interface for a shop-window or in-store display that may be presented by running an optical narrowcasting application on a mobile device.

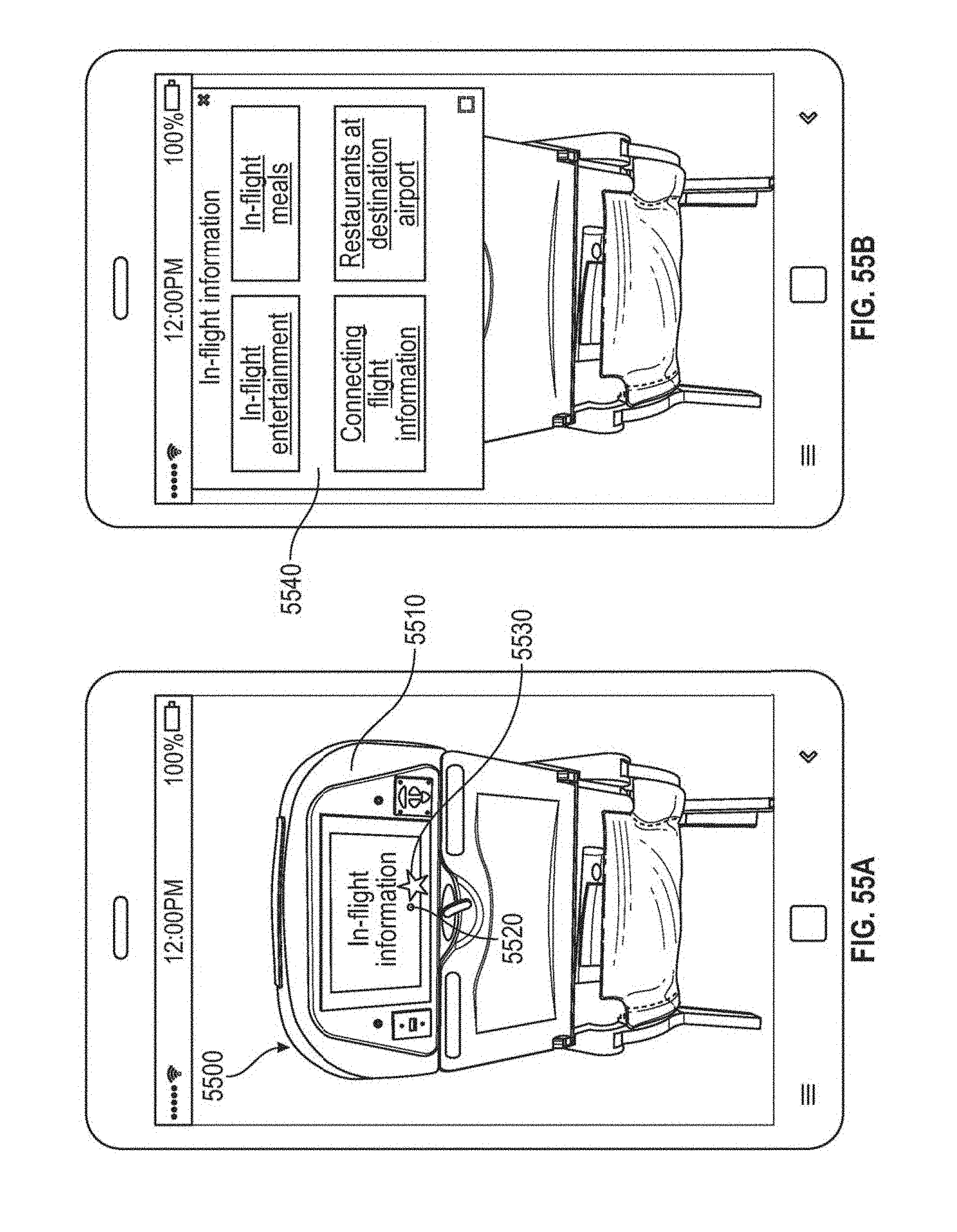

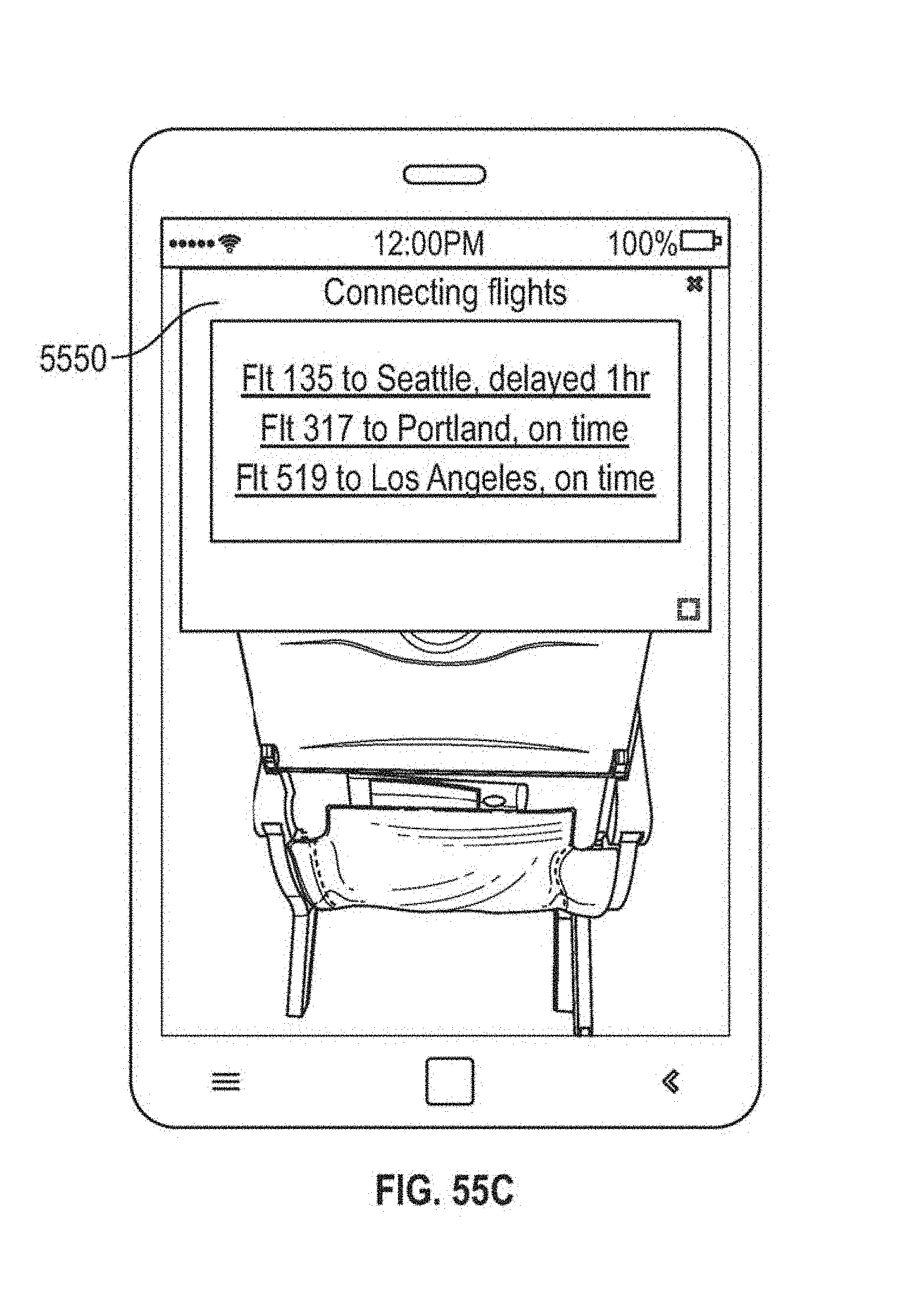

FIG. 55A illustrates an example augmented reality graphical user interface that may be presented in an airplane environment by running an optical narrowcasting application on a mobile device.

FIG. 55B illustrates an example augmented reality graphical user interface after user input selecting an augmented reality object shown in FIG. 55A.

FIG. 55C illustrates an example augmented reality graphical user interface after user input selecting a menu item shown in FIG. 55B.

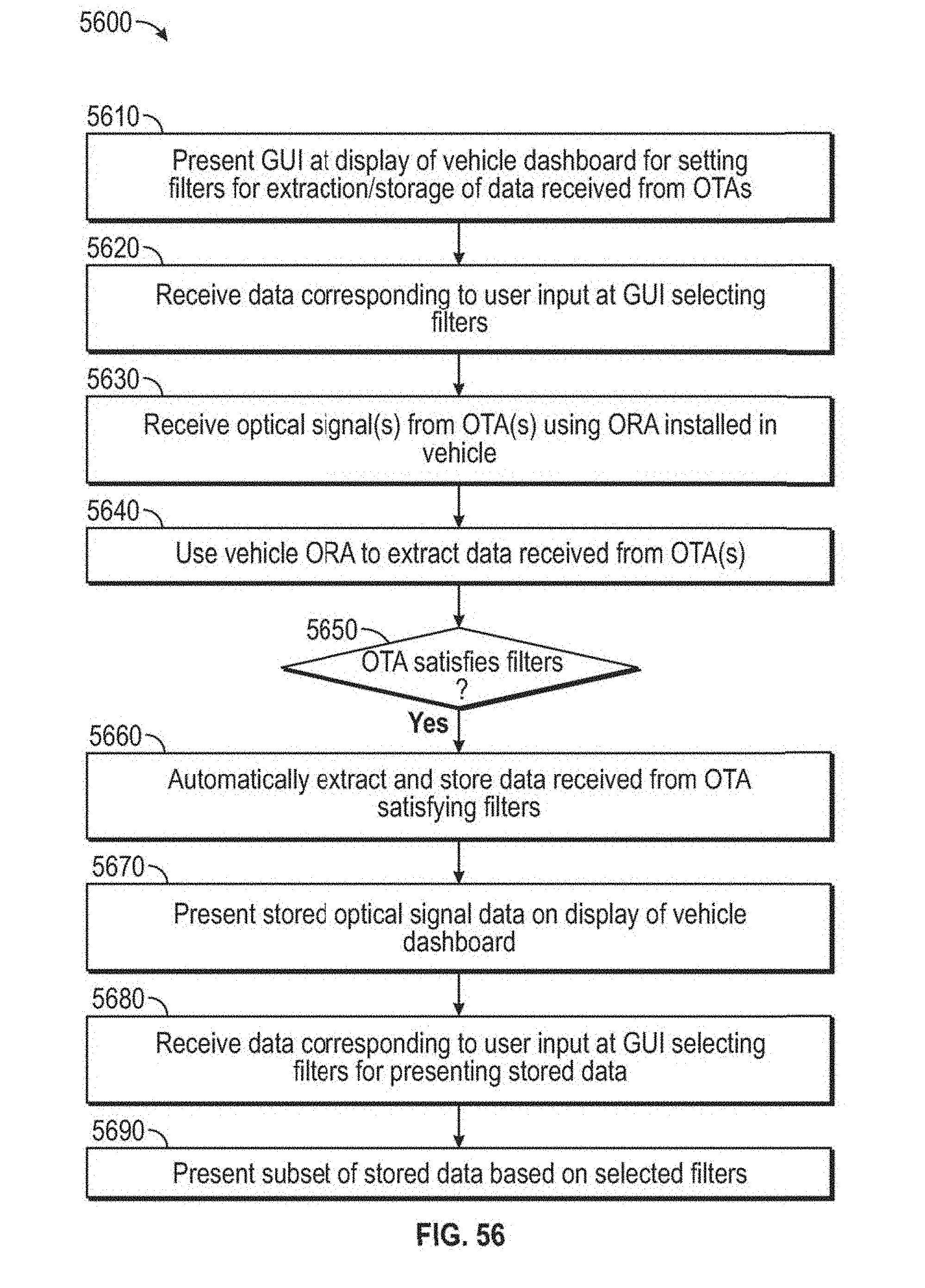

FIG. 56 is a flow diagram illustrating an example graphical user interface method of implementing optical narrowcasting in a vehicle.

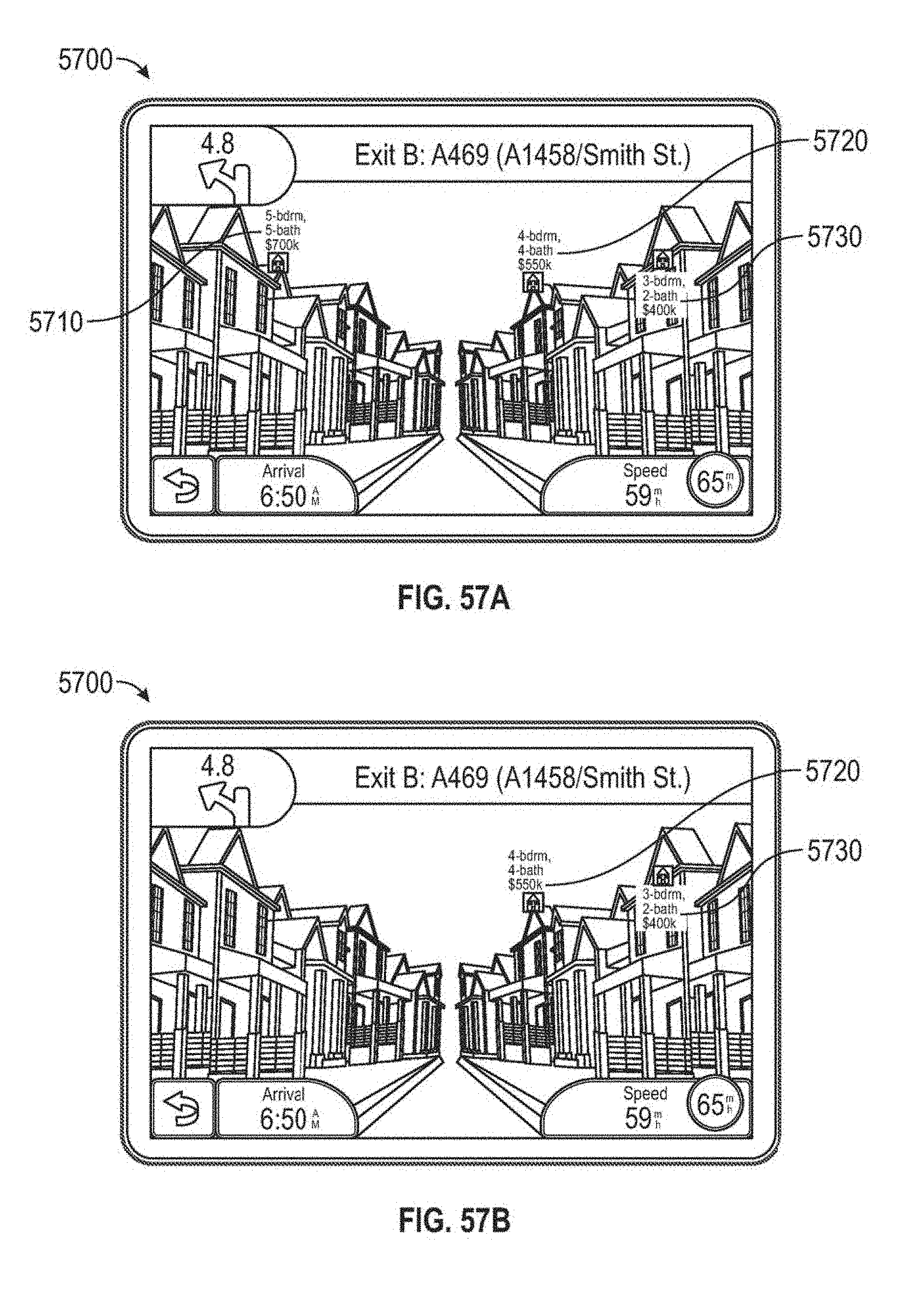

FIG. 57A illustrates an example display of an optical narrowcasting graphical user interface that may be provided by a vehicle to a driver and/or passenger interested in purchasing real estate.

FIG. 57B illustrates an example display of an optical narrowcasting graphical user interface that may be provided by a vehicle to a driver and/or passenger after filtering information displayed on the graphical user interface of FIG. 57A.

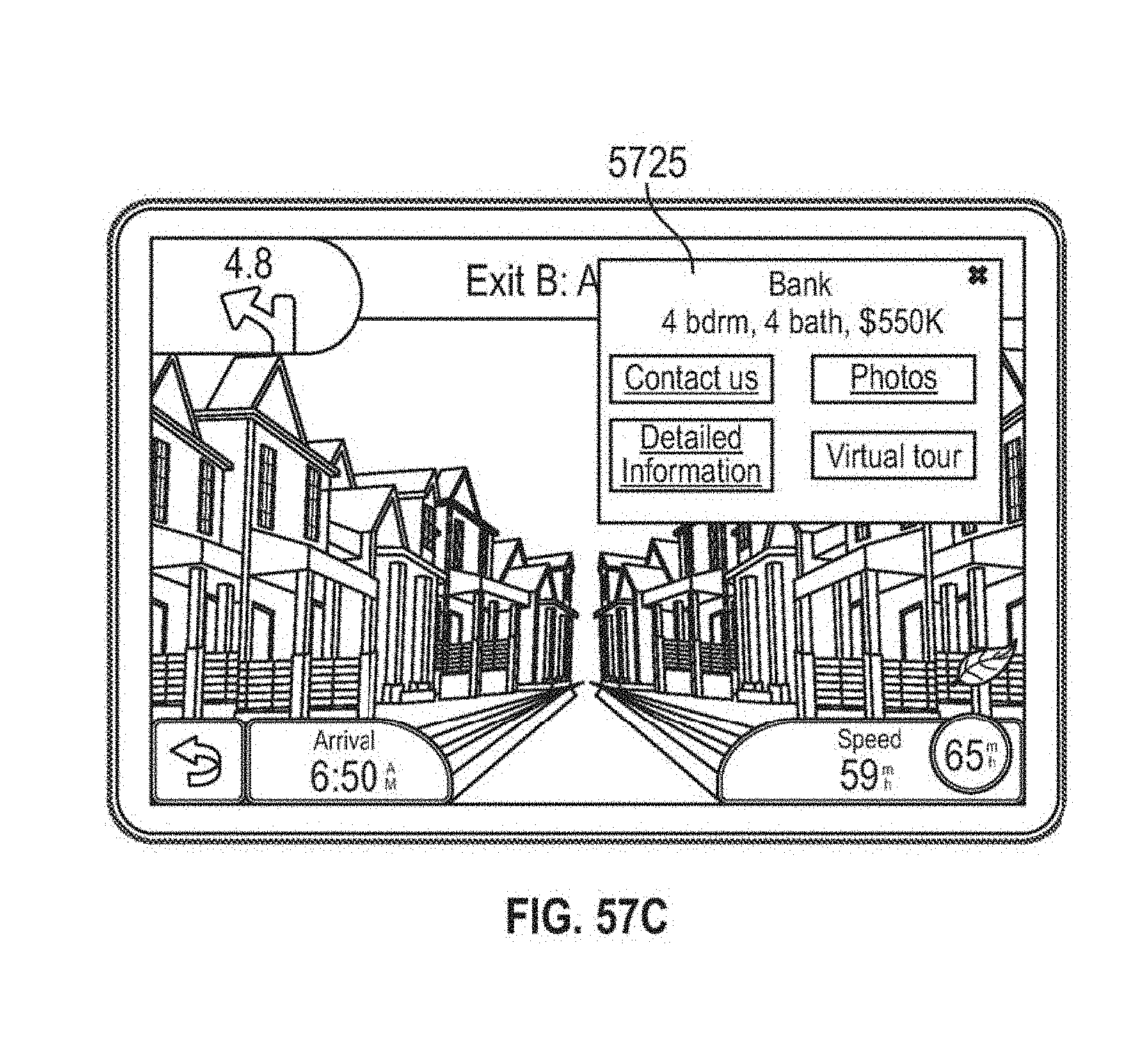

FIG. 57C illustrates an example display of an optical narrowcasting graphical user interface that may be provided by a vehicle to a driver and/or passenger after user input selecting an icon associated with a home for sale shown in FIG. 57B.

FIG. 58A is a flow chart illustrating example operations that may be performed for embedding optically narrowcast content in media content.

FIG. 58B is a flow chart illustrating example operations that may be performed to retrieve information or data embedded in a signal-enhanced media.

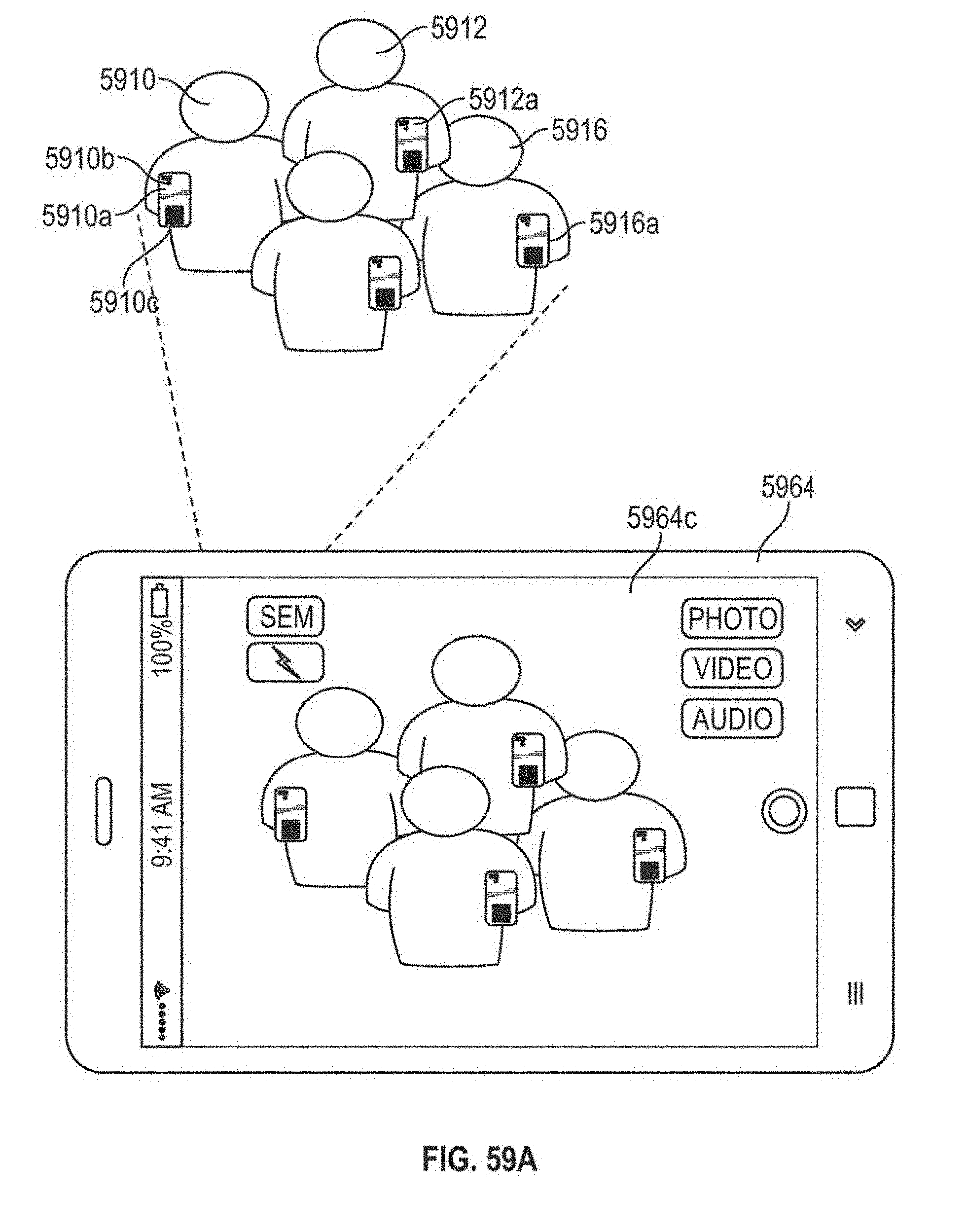

FIG. 59A illustrates a scenario in which a user may utilize a user device to capture an image or video of a group of individuals.

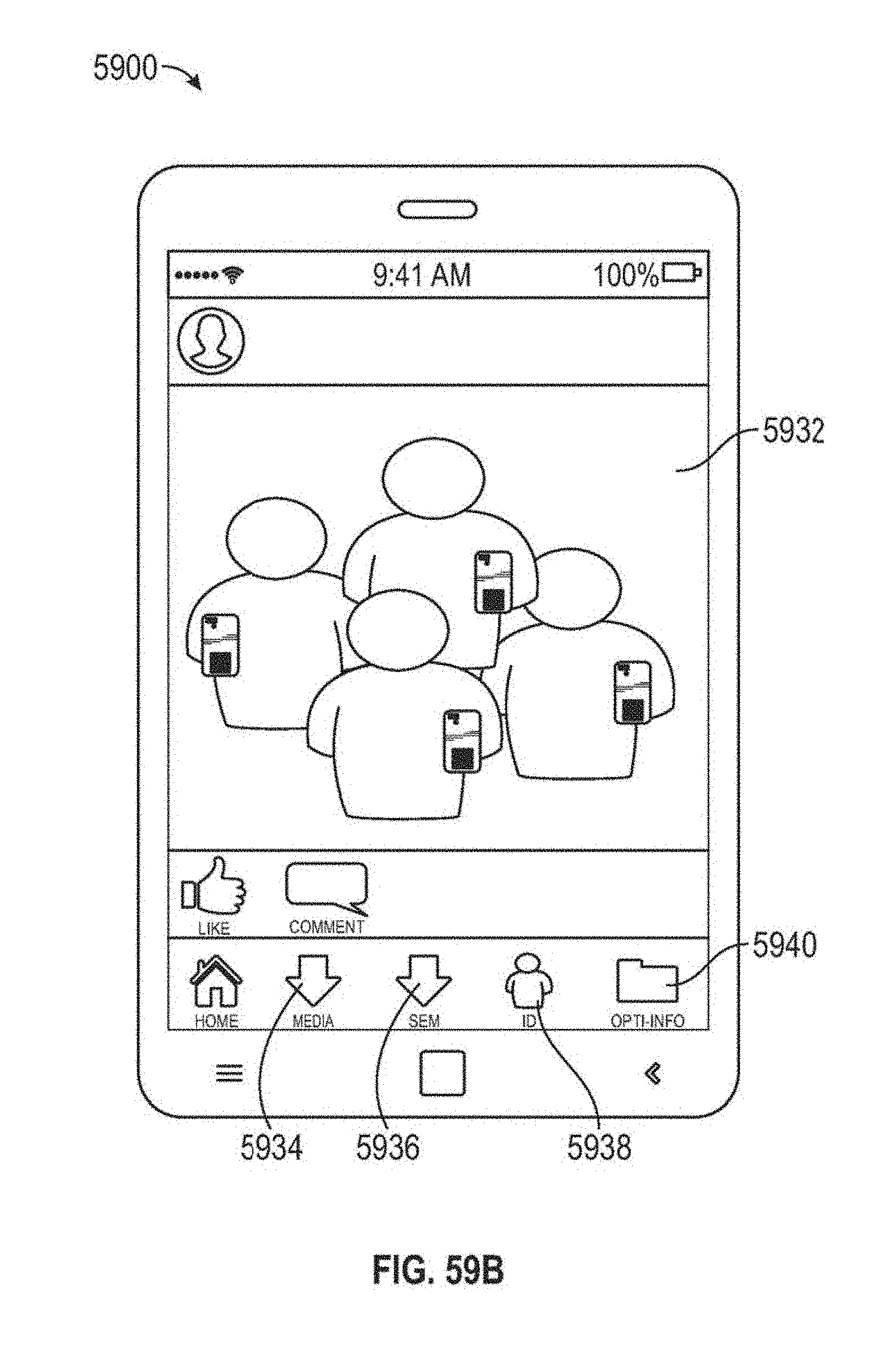

FIG. 59B illustrates an example view of a signal-enhanced photo taken in accordance with the example scenario illustrated in FIG. 59A.

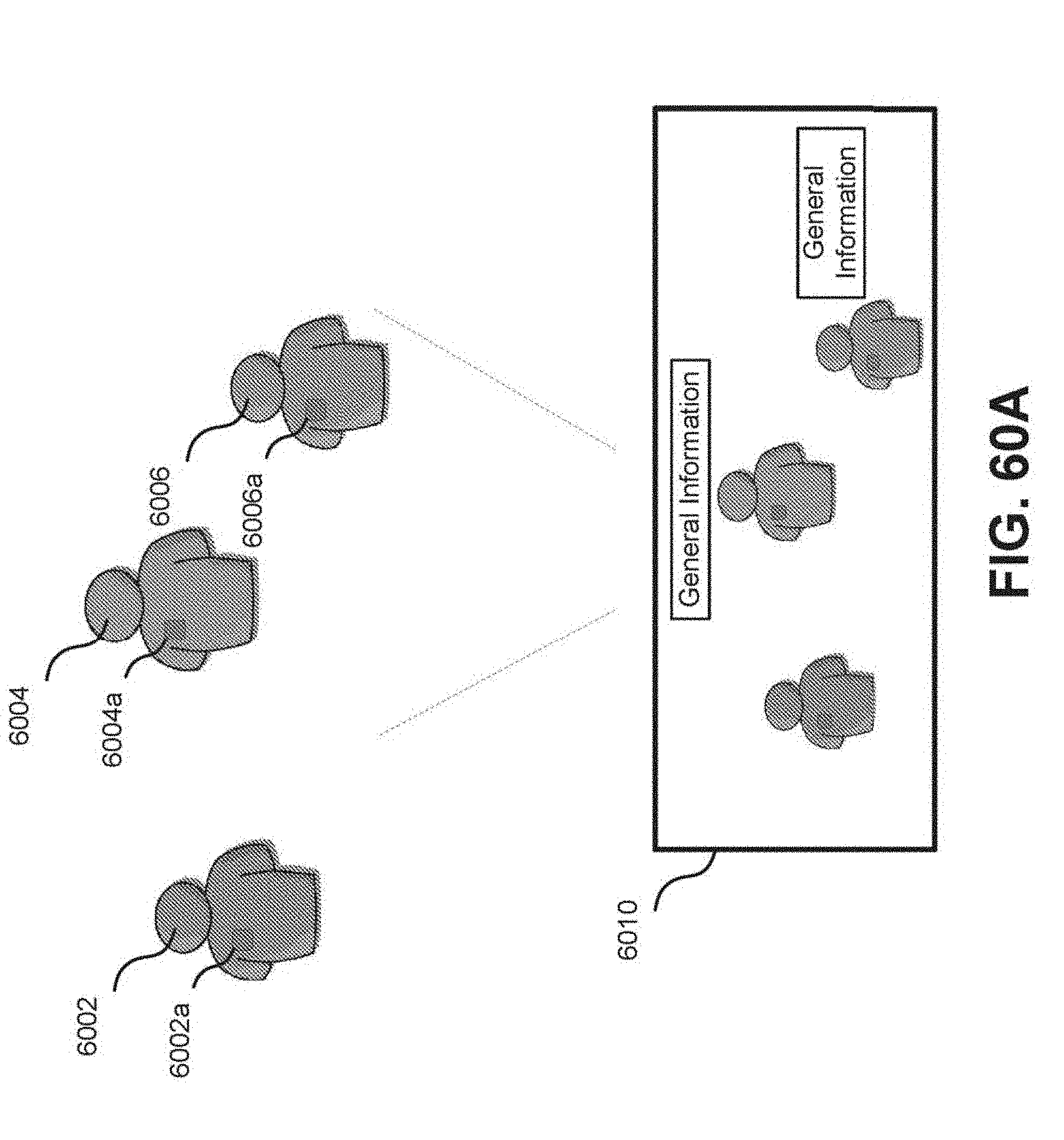

FIG. 60A illustrates a scenario in which a user may utilize a user device to capture an image or video of a group of individuals.

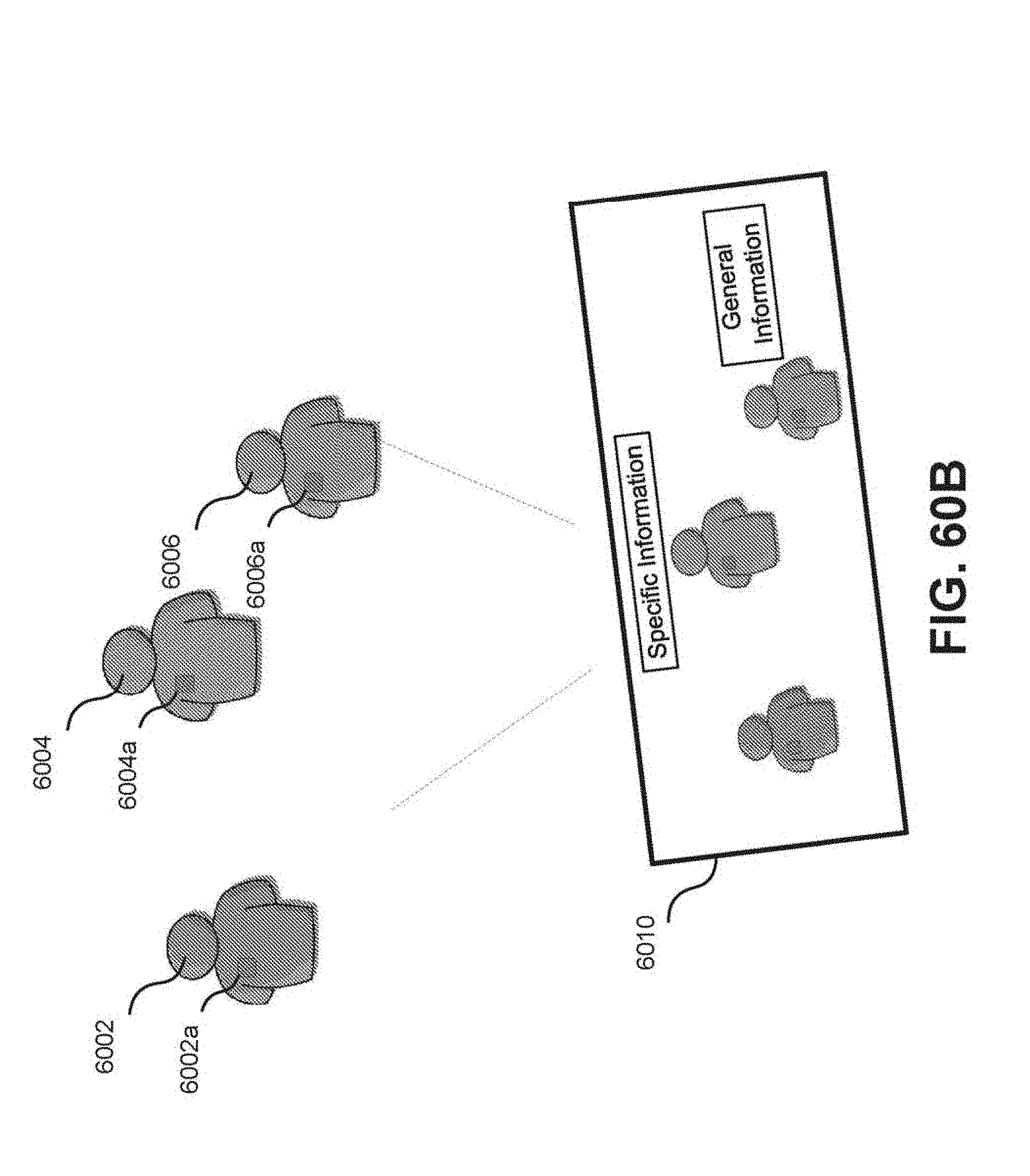

FIG. 60B illustrates a scenario in which a user may utilize a user device to capture an image or video of a group of individuals.

FIG. 60C illustrates a scenario in which a user may utilize a user device to capture an image or video of a group of individuals.

FIG. 61A illustrates a scenario in which a user may utilize a user device to capture an image or video of an environment.

FIG. 61B illustrates an effect caused by a user device.

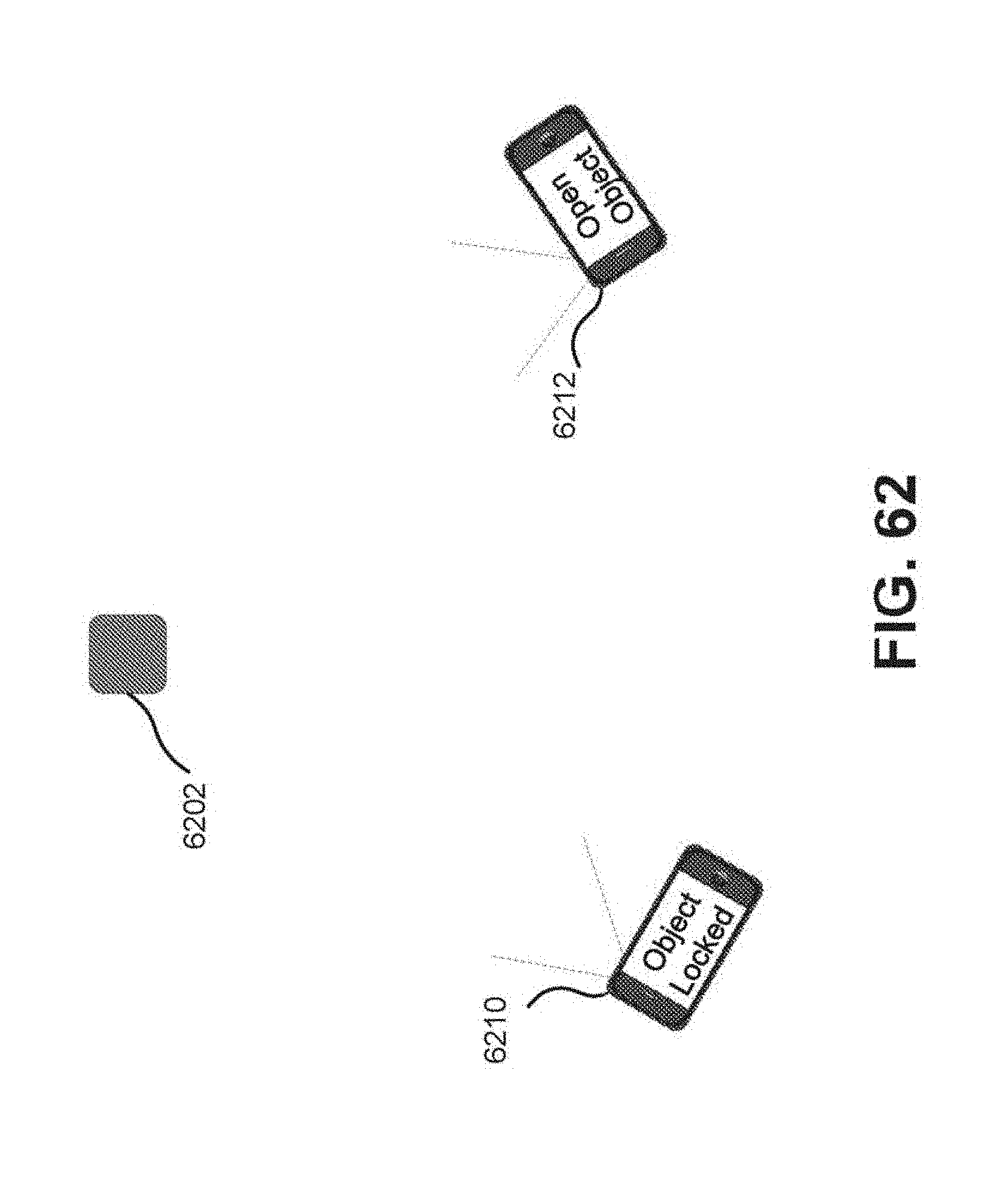

FIG. 62 illustrates a scenario in which users may collaboratively utilize user devices in an environment.

FIG. 63 illustrates a scenario in which a user may utilize a user device to interact with objects in an environment.

FIG. 64 illustrates a layout of source devices positioned to present a story.

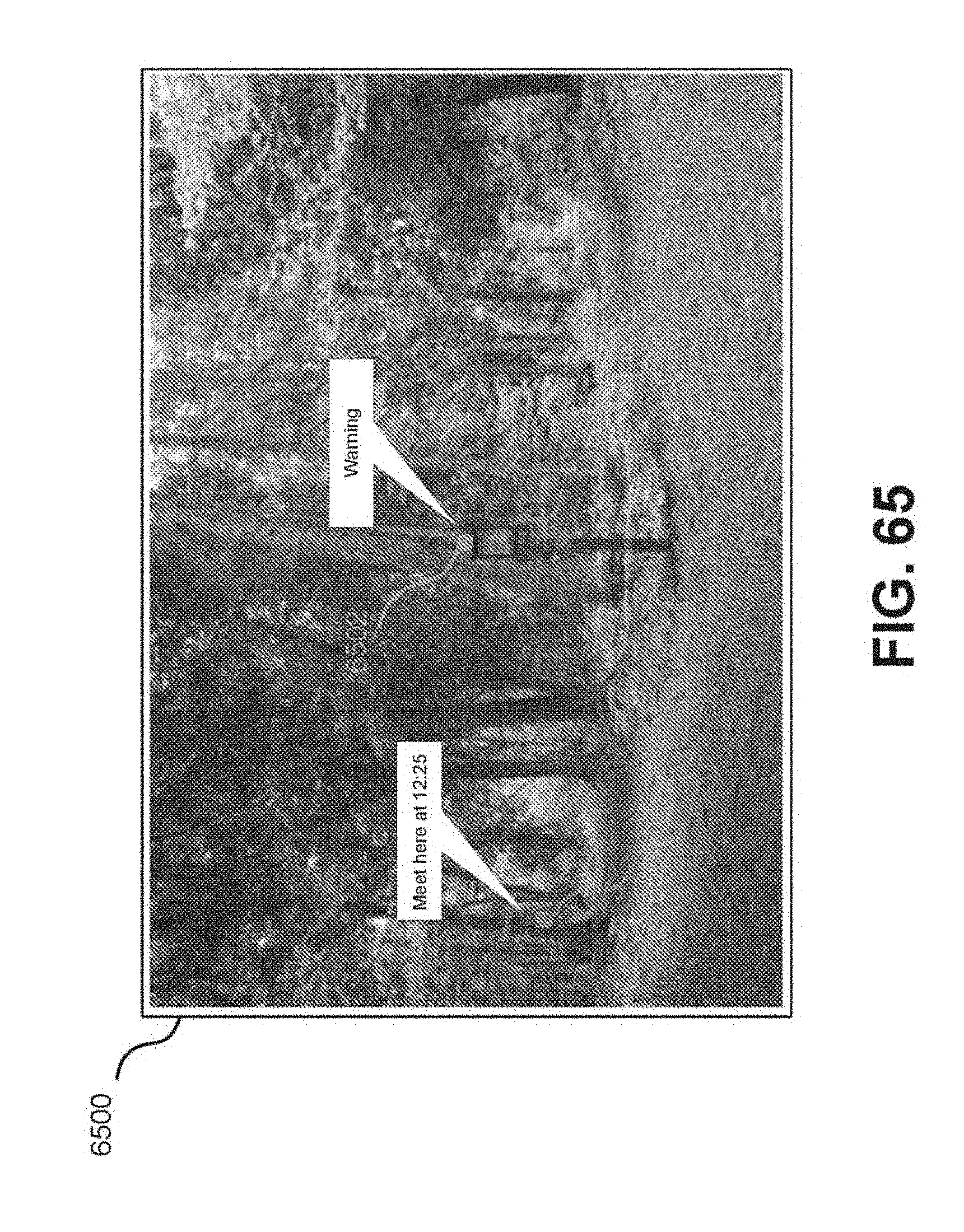

FIG. 65 illustrates an environment in which users may utilize user devices and source devices to exchange messages.

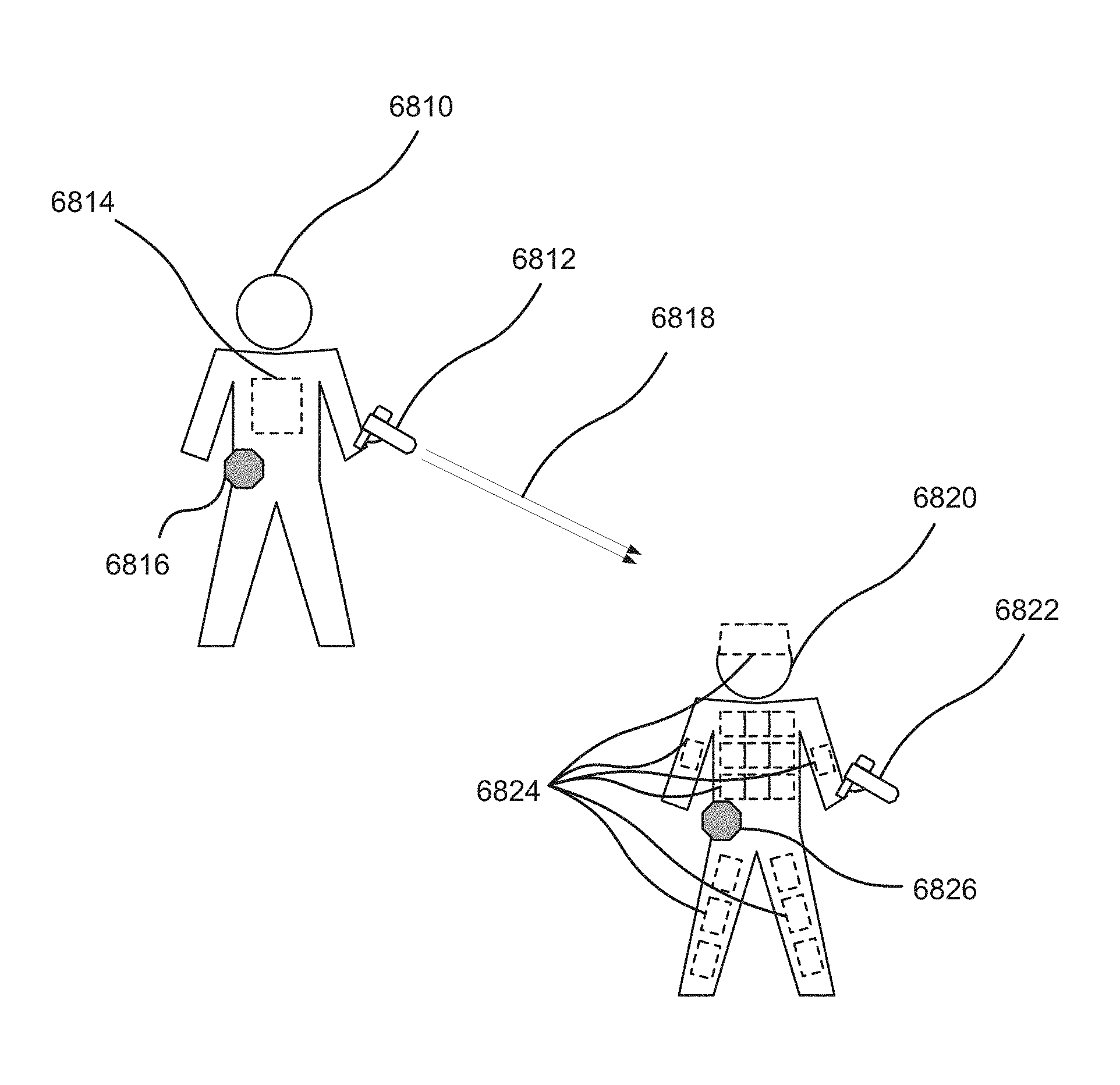

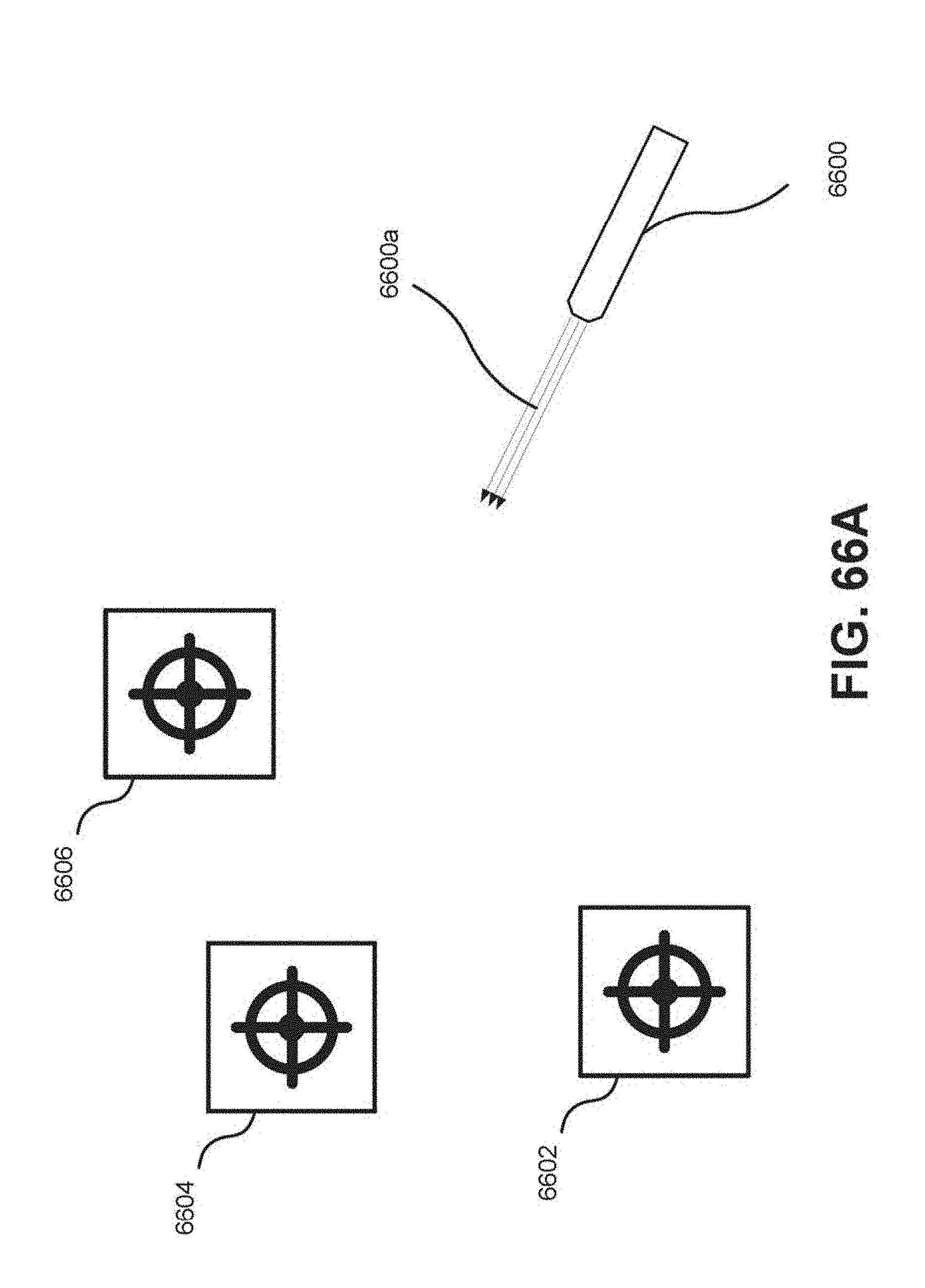

FIG. 66A illustrates a scenario in which optical beams may be used for target shooting.

FIG. 66B illustrates placement of optical signal receivers on a target.

FIG. 66C illustrates placement of optical signal receivers on a target.

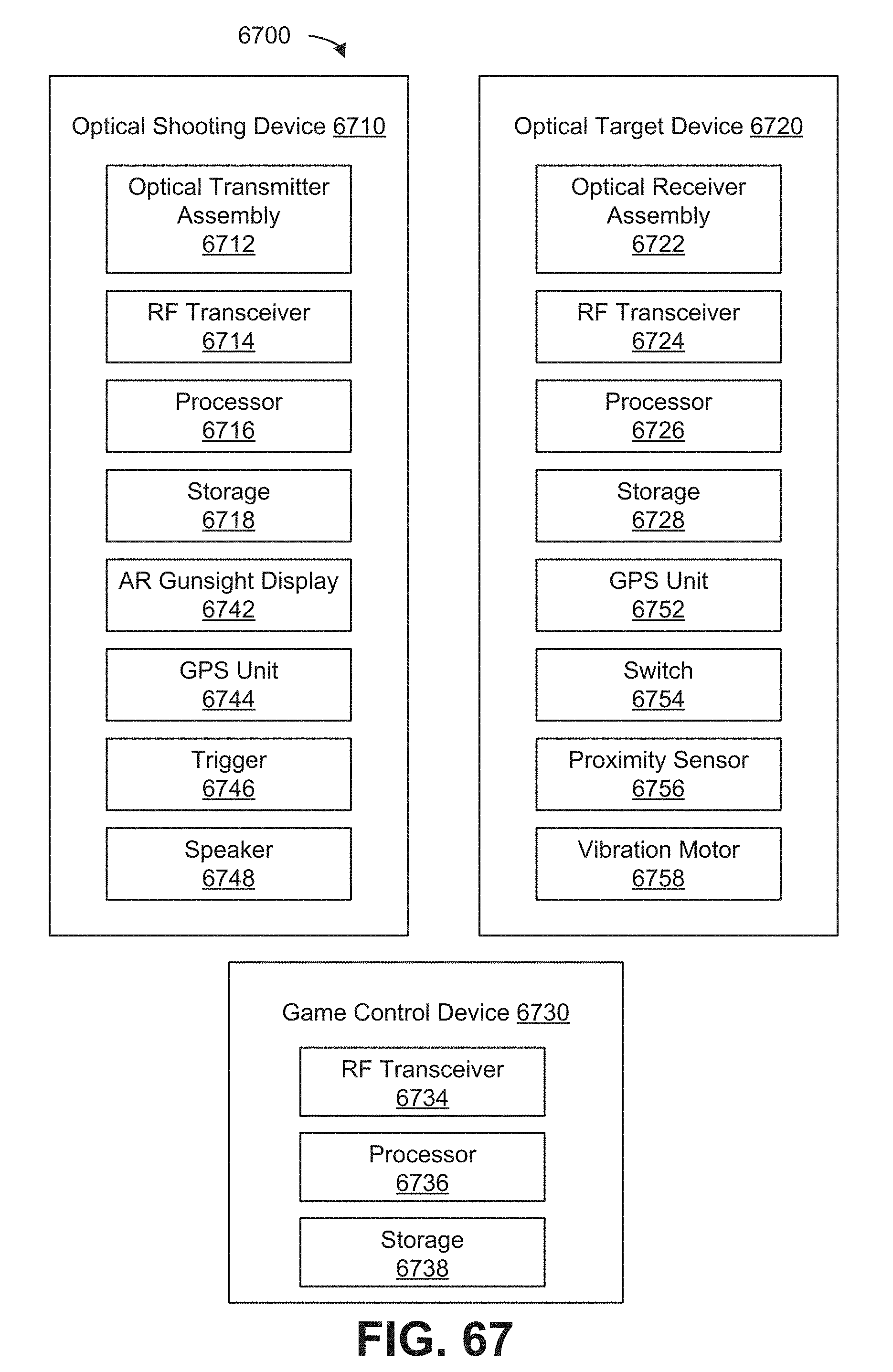

FIG. 67 illustrates an example optical narrowcasting gaming system.

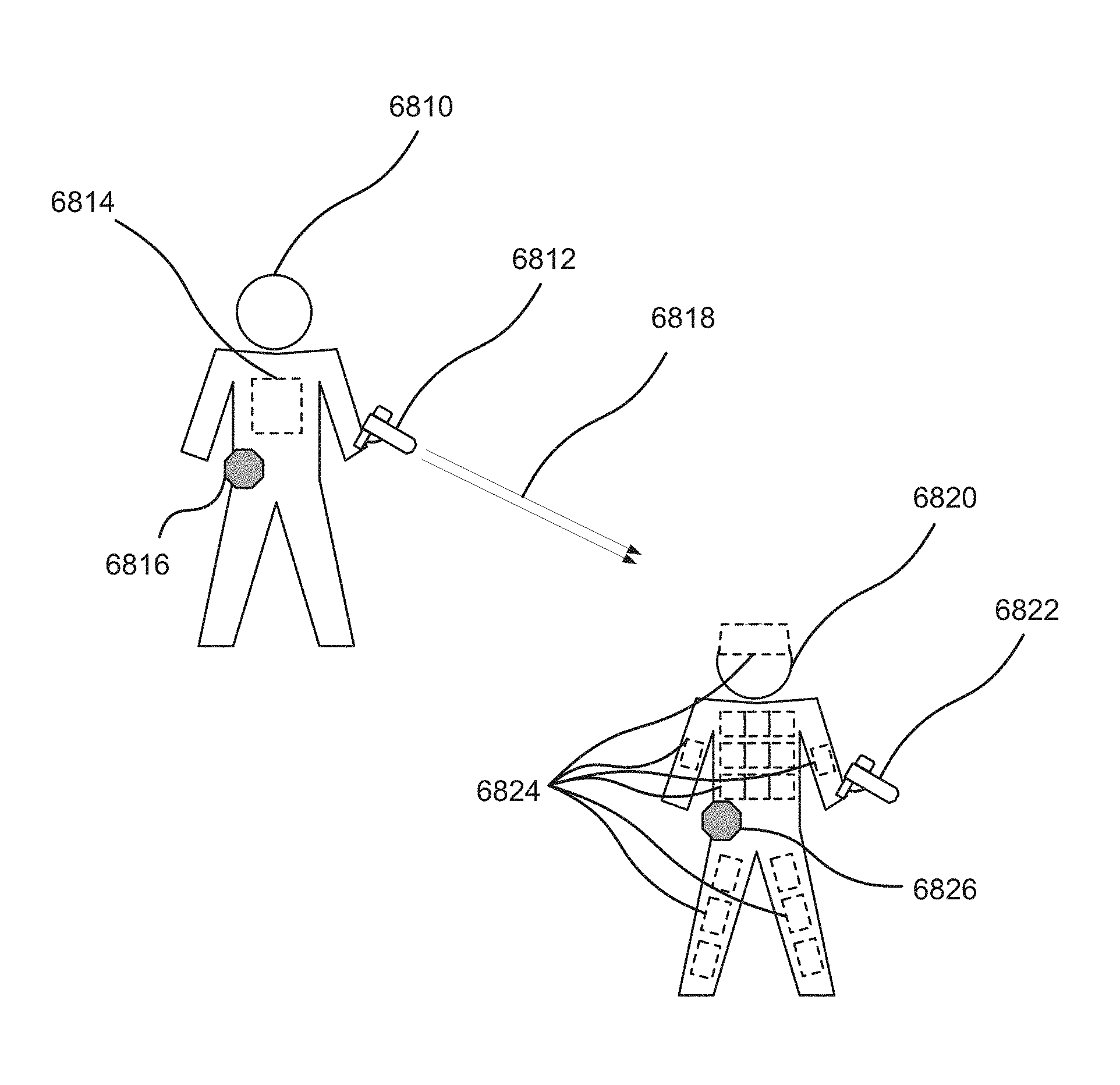

FIG. 68 illustrates a scenario in which an optical shooting device, an optical target device, and a game control device may be used.

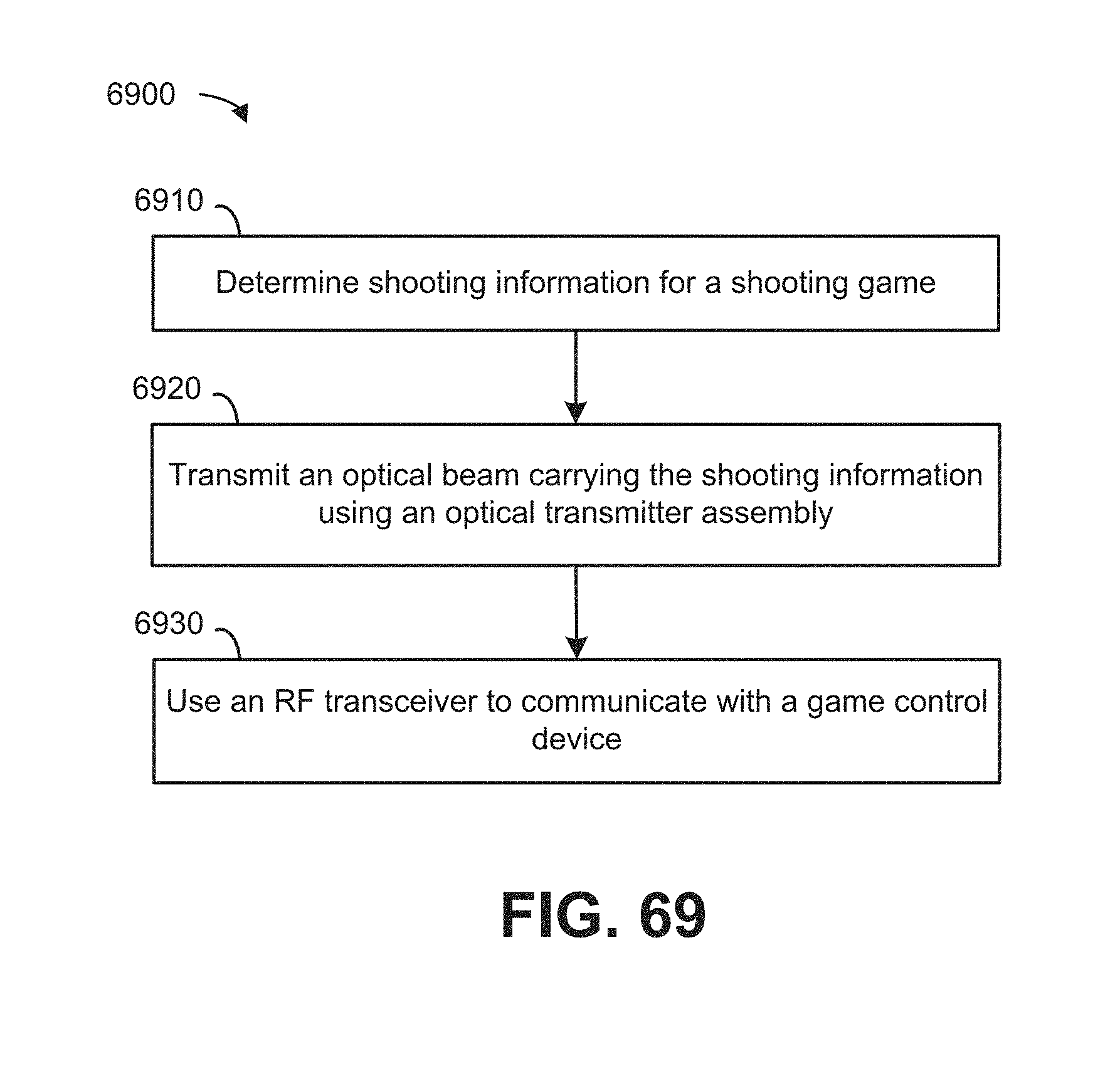

FIG. 69 illustrates a flowchart of example operations of an optical shooting device.

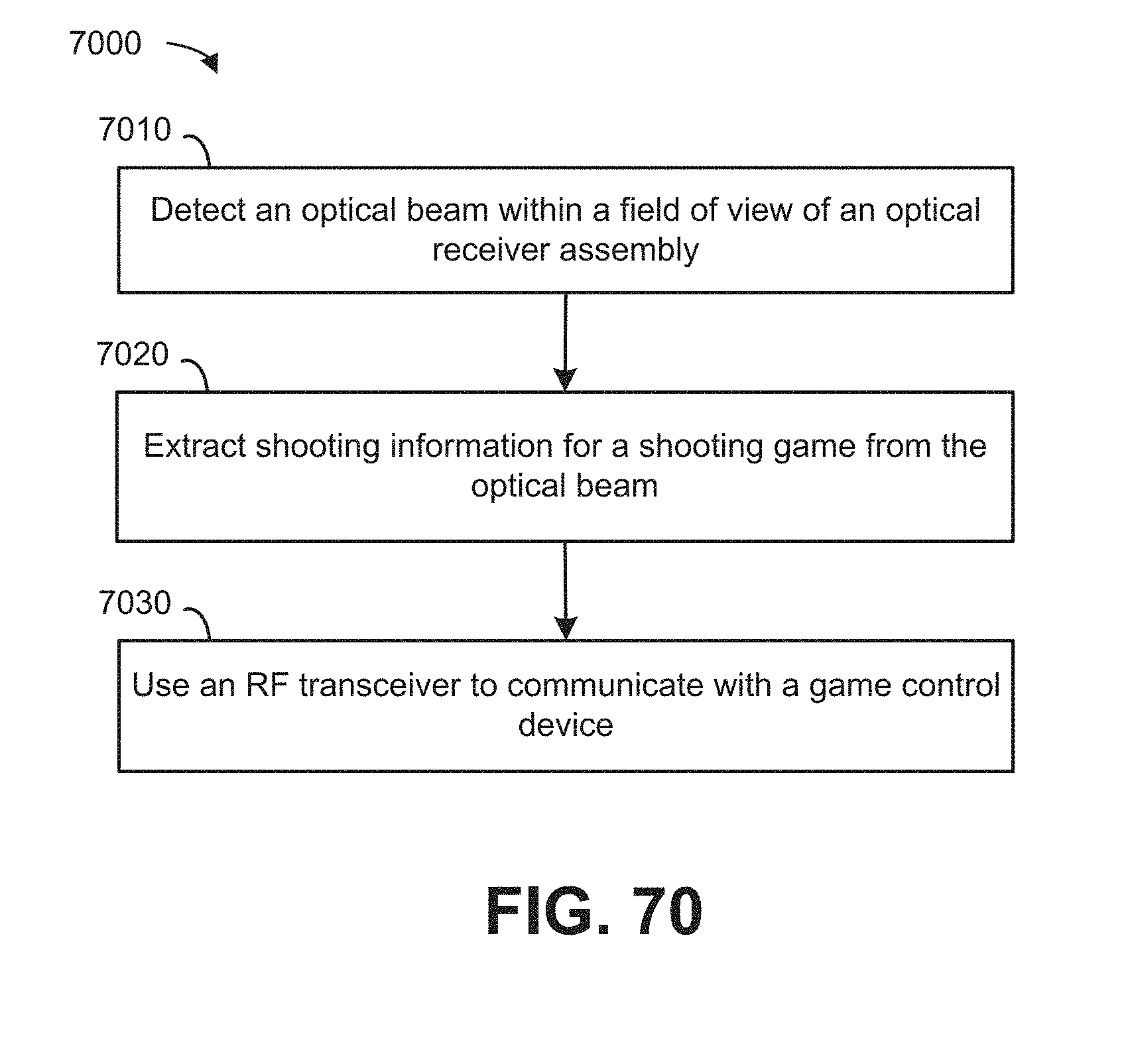

FIG. 70 illustrates a flowchart of example operations of an optical target device.

FIG. 71 illustrates a flowchart of example operations of a game control device.

FIG. 72 illustrates an example computing module that may be used to implement various features of the methods disclosed herein.

The figures are not exhaustive and do not limit the disclosure to the precise form disclosed.

DETAILED DESCRIPTION

Definitions

As used herein, an "optical narrowcasting system" or "ONS" is a system that can transmit information from one or more locations to one or more other locations using one or more digitally modulated optical beams transmitted through one or more propagation media. Contemplated propagation media may include, but are not limited to, air, water, glass windows, and the vacuum of space. An ONS may include one or more optical transmitter assemblies (OTAs) to transmit optical beams to one or more optical receiver assemblies (ORAs).

As used herein, an "optical beam" is a directed beam of electromagnetic radiation having wavelengths in a spectral region ranging from approximately 10 nm (e.g., extreme ultraviolet (UV) radiation) to approximately 10⁶ nm (e.g., far infrared (IR) radiation). As used herein to refer to an optical beam, the term "directed" refers to energy, e.g., light energy, sent in a specific range of propagation directions but not in other directions. For example, a laser may emit a narrow directed beam of light, whereas the sun may be understood to emit undirected light that propagates outward in all possible directions.

As used herein, an "optical transmitter assembly" or "OTA" is a device including electronics, software (and/or firmware), and one or more optical transmitters (OTs). An OTA may be an element of an ONS. The OT(s) within an OTA can provide the functionality of at least one optical beacon transmitter (OBT) and/or at least one optical signal transmitter (OST). In some implementations, a single OT may function as both an OBT and an OST. In other implementations, the OBT(s) and OST(s) of an OTA can be separate devices. An OTA may also contain one or more tilt actuators allowing it to control the pointing direction(s) of the optical beam(s) output by its OT(s). An OTA's electronics and associated software (and/or firmware) may perform various useful functions, such as: providing an interface between the OTA and its user(s) (or its users' devices); supplying timing pulses and electrical power to its OT(s); controlling the operation of the OT(s) (e.g., turning them on and off, setting their data-transmission rate, etc.); transferring digital data to the OT(s) for them to output as one or more digitally modulated optical beams; and controlling one or more tilt actuators to alter the pointing direction(s) of the output optical beam(s).

As used herein, an "optical transmitter" or "OT" is a device including one or more optical sources, one or more beam-forming optics, and electronics with associated software (and/or firmware) adapted to transmit optical beams. One or more OTs may form at least part of an OTA. The optical sources may be coherent (e.g., lasers) or incoherent (e.g., light-emitting diodes (LEDs)). The optical output of each optical source may be electronically modulated at a desired bit rate (or at one of a user-selectable range of bit rates) to transmit digital data in the form of a series of one-bits and zero-bits. The optical source(s) produce optical radiation in a desired optical waveband. Each beam-forming optic may collect flux emitted by one or more optical source(s) and utilize refraction, reflection, and/or diffraction to concentrate it into a transmitted beam having a desired angular intensity distribution. In some cases, the beam-forming optic may also include one or more spectral filters to minimize the amount of flux transmitted outside of the desired waveband. In some implementations, multiple OTs could be used in a single OTA to increase the solid angle of the output beam and/or to increase the output intensity in certain solid-angular regions. The electronics and associated software (and/or firmware) of an OT may perform the following functions: receive and (if necessary) modify timing pulses and electrical power sent to it by the OTA of which it is a component; receive and properly interpret various control signals sent to it from the OTA; and receive from the OTA data in digital electronic form that it will then output in digital optical form.
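As an illustration of modulating an optical source at a desired bit rate to send a series of one-bits and zero-bits, the sketch below assumes simple on-off keying (OOK): the drive level is held high for a one-bit and low for a zero-bit over one bit period. OOK is assumed here for simplicity, and the function names and sampling parameters are hypothetical.

```python
def bytes_to_bits(data):
    """Expand bytes into a list of bits, most significant bit first."""
    return [(byte >> shift) & 1 for byte in data for shift in range(7, -1, -1)]

def ook_waveform(data, bit_rate_hz, sample_rate_hz, p_on=1.0, p_off=0.0):
    """Return sampled optical drive levels for on-off keying of `data`.

    Each bit is held for sample_rate_hz / bit_rate_hz samples, which must be
    an integer; a one-bit drives the source at p_on, a zero-bit at p_off.
    """
    samples_per_bit = sample_rate_hz // bit_rate_hz
    if samples_per_bit * bit_rate_hz != sample_rate_hz:
        raise ValueError("sample rate must be an integer multiple of the bit rate")
    return [p_on if bit else p_off
            for bit in bytes_to_bits(data)
            for _ in range(samples_per_bit)]
```

For example, at a 1 MHz bit rate sampled at 4 MHz, each transmitted bit occupies four samples (1 µs).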

As used herein, an "optical beacon transmitter" or "OBT" is a type of OT that produces a beacon associated with an OTA. An "optical beacon" or "beacon" is a modulated optical beam containing information that allows an ORA to detect the presence of an OTA. An optical beacon makes a user or entity receiving optically transmitted information aware of the presence or availability of information transmitted by the OTA associated with the beacon. In addition to enabling detection of the OTA's presence, a beacon produced by an OBT may also contain information allowing an optical receiver assembly (ORA) to identify the entity (e.g., business, organization, private individual, product, landmark, etc.) and type (i.e., category) of entity (e.g., restaurant, department store, movie theater, etc.) with which the OTA is associated. A beacon may also be used by an OBR to determine the angular position of the OTA. In some embodiments, the angular position, e.g., horizontal and/or vertical angular position, of the OTA can be determined based on information optically transmitted within or as part of the optical beacon. For example, latitudinal, longitudinal, and altitudinal information indicative of the location of an OTA may be transmitted in a beacon. In some embodiments, one or more measurements made by an OBR of the propagation direction of an optical beacon can be used by the OBR to derive, calculate, or otherwise determine an angular position of the OTA within the FOV of the OBR. As mentioned previously, a single OT within an OTA may function as both an OBT and an OST, or the OBT(s) and OST(s) within an OTA may be separate devices.
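Where the OBR measures the beacon's propagation direction, converting that measurement into an angular position is straightforward geometry: the OTA lies opposite to the direction in which the beacon travels. The sketch below assumes a receiver frame with +z along the optical axis pointing toward the scene, +x to the right, and +y up; the frame convention and the `ota_angular_position` name are assumptions.

```python
import math

def ota_angular_position(direction):
    """Estimate the horizontal and vertical angles (degrees) of an OTA.

    `direction` is the measured propagation direction (dx, dy, dz) of the
    beacon in the receiver's frame (+z out along the optical axis, +x right,
    +y up). It need not be normalized. The beacon travels from the OTA toward
    the receiver, so the OTA lies in the opposite direction.
    """
    dx, dy, dz = (-c for c in direction)  # reversed vector points at the OTA
    horizontal = math.degrees(math.atan2(dx, dz))
    vertical = math.degrees(math.atan2(dy, math.hypot(dx, dz)))
    return horizontal, vertical
```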

As used herein, an "optical signal transmitter" or "OST" is a type of OT that produces an optical signal associated with an OTA. An "optical signal" is a modulated optical beam containing information, other than information contained in an optical beacon, which the operators of an OTA desire to transmit to optical receiver assemblies (ORAs). The purpose of an OST is to transmit information to ORAs that have already detected the OTA of which the OST is a component. In some instances, the ORAs may have also identified and determined the angular location of the OTA prior to receiving optical signals transmitted by the OTA. A single OT within an OTA may function as both an OBT and an OST, or the OBT(s) and OST(s) within an OTA may be separate devices.

A modulated optical beam produced by an OTA may contain both optical beacons and optical signals. Alternatively, a modulated optical beam may contain only one or more optical beacons and no optical signals, or it may contain only one or more optical signals and no optical beacons. For example, an OTA may simultaneously output two separate optical beams, one being an optical beacon and another being an optical signal, where the optical beacon has a different wavelength spectrum than the optical signal.
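When the beacon and the signal occupy different wavebands, as in the example of FIG. 22 (beacon in the 800-900 nm band, signal in the 900-1000 nm band), a receiver can route each beam by band. In hardware this separation would typically be done by spectral filters in front of separate detectors; the sketch below only models that routing decision from a beam's peak wavelength, and its band constants and function name are illustrative.

```python
# Example bands taken from the FIG. 22 example; other OTAs may use other bands.
BEACON_BAND_NM = (800.0, 900.0)
SIGNAL_BAND_NM = (900.0, 1000.0)

def classify_beam(peak_wavelength_nm):
    """Label a received beam as a beacon or a signal from its peak wavelength."""
    lo, hi = BEACON_BAND_NM
    if lo <= peak_wavelength_nm < hi:
        return "beacon"
    lo, hi = SIGNAL_BAND_NM
    if lo <= peak_wavelength_nm <= hi:
        return "signal"
    return "unknown"
```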

As used herein, the term "optical information" generally refers to information extracted from a modulated optical beam or used to modulate an optical beam. Optical information may include identification data extracted from or contained in an optical beacon (e.g., identifying a particular OTA and/or source of the OTA) and descriptive data extracted from or contained in an optical signal (e.g., an advertisement or other message). This data may comprise machine-readable and/or human-readable data, such as text, video, audio, metadata, or other types of information.

As used herein, an "optical receiver assembly" or "ORA" is a device including electronics, software (and/or firmware), and one or more optical receivers (ORs). The OR(s) within an ORA can provide the functionality of at least one optical beacon receiver (OBR) and/or at least one optical signal receiver (OSR). An ORA may be an element of an ONS. In some cases, an ORA may also contain one or more tilt actuators allowing it to control the directions from which its OBR(s) and OSR(s) can receive modulated optical beams. An ORA can perform one or more of the following functions. It may detect the presence of beacons transmitted by OTAs. It may extract information from beacons, such as the identities of the entities (e.g., businesses, organizations, private individuals, products, landmarks, etc.) with which OTAs are associated. It may determine the angular positions of OTAs by sensing the direction of incidence of beacons or extracting positioning information therefrom. It may receive and/or extract data from optical signals transmitted by OTAs. An ORA's electronics and associated software (and/or firmware) perform various useful functions, such as: providing an interface between the ORA and its user(s) (or its users' devices); supplying timing pulses and electrical power to its OBR(s) and OSR(s); controlling the operation of its OBR(s) and OSR(s) (e.g., turning them on and off, setting their data-reception rate, etc.); receiving and transferring to users (or to users' devices) information, such as identifying information and angular position, obtained by its OBR(s) regarding OTAs that have been detected; receiving and transferring to users (or to users' devices) data received from OTAs by its OSR(s); and controlling one or more tilt actuators to alter the pointing direction(s) of one or more OBRs and one or more OSRs.

As used herein, an "optical beacon receiver" or "OBR" is a device adapted to receive an optical beacon that may make up at least part of an ORA. An OBR may detect the presence of one or more OTAs. An OBR may also identify the entities (e.g., businesses, organizations, or private individuals) with which OTAs are associated through, e.g., information contained within an optical beacon, as well as determine the angular positions of OTAs. As noted previously, the angular positions of OTAs may be derived from measurement(s) of the propagation direction of a beacon and/or determined from information contained within the beacon. An OBR may include, for example: one or more optical detectors or detector arrays; one or more collection optics, each including one or more optical components (e.g., lenses, reflectors, and/or diffractive optical elements); and control electronics with associated software (and/or firmware). A spectral filter may be included in each collection optic to reduce to low levels the out-of-band flux incident on the detector(s). The optical detectors are capable of detecting optical flux in the waveband and at the bit rates of beacons which the OBR is designed to receive. In some cases an OBR could share some or all of its detectors, collection optics, electronic hardware, and software/firmware with one or more OSRs within the ORA of which it is a part. The electronics and associated software (and/or firmware) of an OBR perform at least the following functions: providing the means to receive and (if necessary) modify timing pulses and electrical power sent to it by the ORA of which it is a part; receiving and properly interpreting various control signals sent to it by the ORA; and transferring to the ORA information (e.g., identifying information and angular position) it has obtained regarding beacons it has detected and from which it has received information.

As used herein, an "optical signal receiver" or "OSR" is a device adapted to receive optical signals and to convert the data they contain into digital or electronic form. An OSR may include one or more optical detectors or detector arrays, one or more collection optics, and control electronics with associated software (and/or firmware). The optical detectors are capable of detecting optical flux in the waveband and at the bit rates of optical signals the OSR is designed to receive. Each collection optic can collect incident in-band flux over its entrance pupil and within its specified field of view (FOV), and can utilize refraction, reflection, and/or diffraction to concentrate it onto one or more of the optical detectors. A spectral filter may also be included in the optical train to reduce to low levels the out-of-band flux incident on the detectors. In some cases, an OSR may share some or all of its detectors, collection optics, electronic hardware, and software/firmware with one or more OBRs within the ORA of which it is a part. The electronics and associated software (and/or firmware) of an OSR can perform one or more of the following functions: receive and (if necessary) modify timing pulses and electrical power sent to it by the ORA of which it is a part; receive and properly interpret various control signals sent to it by the ORA; and transfer to the ORA digital data extracted from optical signals it has received.

Disclosed herein are systems and methods of communication that utilize non-radio-wave-based communications channels. That is, communications may be achieved through the transmission and/or receipt of information in the form of modulated optical beams. In this way, a user or entity, such as a business wishing to transmit information, e.g., advertising information, may do so by utilizing an OTA that can convert a digital representation of the information into one or more modulated optical beams for transmission. It should be noted that the information transmitted may include information disseminated by businesses and other organizations, including government agencies, for example, and by individuals. Personal content, such as messages, photos, and videos shared by individuals within a social media context are other examples of information that may be transmitted.

A characteristic of the optical communications methods and systems disclosed herein is that a user of an ORA designed to receive information sent by one or more OTAs may not know ahead of time what specific optical transmitters will be sending information of interest to him/her or where they will be located. For this reason, one aspect of various embodiments is that an ORA may be equipped with one or more components adapted to detect the presence of optically transmitted information prior to receiving that information.

A user wishing to receive the information transmitted in the form of one or more modulated optical beams may utilize an ORA implemented within or in conjunction with a user device, such as a smartphone, to scan for and detect the presence of available optical beacons, extract the identifying information contained in the beacons, and display the identifying information through, e.g., an augmented reality (AR) interface. Upon selecting a specific OTA using information extracted from its associated beacon and displayed on the AR interface, the user, if he/she so desires, may further obtain some or all of the information contained within or represented by the optical signal associated with said OTA through the AR interface or other information-presentation mechanism, such as a media player (e.g., advertising information in the form of digital video).
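The scan, display, select, and retrieve sequence described above can be sketched as follows. The `Beacon` record, the field-of-view test used to decide which labels to overlay on the AR display, and the helper names are illustrative assumptions rather than the interface of any particular embodiment.

```python
from dataclasses import dataclass
from typing import Dict, List, Optional


@dataclass
class Beacon:
    """Hypothetical record of what an OBR extracts from one detected beacon."""
    ota_id: str           # identifying information carried by the beacon
    label: str            # e.g., business name shown in the AR interface
    azimuth_deg: float    # angular position of the OTA relative to the camera axis
    elevation_deg: float


def ar_overlay(beacons: List[Beacon], hfov_deg: float, vfov_deg: float) -> List[Beacon]:
    """Keep only beacons whose OTAs fall inside the camera's field of view,
    so their labels can be drawn over the live image."""
    return [b for b in beacons
            if abs(b.azimuth_deg) <= hfov_deg / 2 and abs(b.elevation_deg) <= vfov_deg / 2]


def select_ota(beacons: List[Beacon], ota_id: str) -> Optional[Beacon]:
    """Return the beacon the user tapped in the AR interface, if it is displayed."""
    for b in beacons:
        if b.ota_id == ota_id:
            return b
    return None


def fetch_signal(selected: Beacon, osr_data: Dict[str, bytes]) -> Optional[bytes]:
    """Return data the OSR has received from the selected OTA, if any."""
    return osr_data.get(selected.ota_id)
```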

Advantages can be realized by using such an optical communications system, referred to herein as an optical narrowcasting system. For example, optical narrowcasting systems such as those disclosed herein may have long-range, high-bandwidth capabilities and may avoid regulatory limitations (optical transmissions are thus far unregulated by the Federal Communications Commission (FCC) or any other regulatory body). Optical narrowcasting systems can also provide users with the ability to utilize existing hardware and/or software technologies that are enhanced by extremely compact non-imaging optical components that have low power needs and are energy efficient. The operable range of an optical narrowcasting system can be approximately 400 m (e.g., during the day) to approximately 1200 m (e.g., during nighttime), compared to an effective range of approximately 50 m for WiFi. Moreover, optical narrowcasting systems are able to direct information in one or more desired directions using, e.g., beamforming. This can be accomplished through the use of the aforementioned non-imaging optics, whereas directionality using WiFi is not practical given the need (of WiFi routers) to use expensive and bulky directional antennas. Regarding efficiency, optical narrowcasting networks can be up to 300 times more energy efficient than WiFi networks. Further still, because of the directionality of the transmitted optical beams, the security that can be achieved in an optical narrowcasting network is much higher than that possible in a WiFi® network.

FIG. 1 illustrates an example optical narrowcasting system 100. Transmitting and/or receiving one or more optical beams may be accomplished using an OTA, e.g., optical transmitter assembly 104, and an ORA, e.g., optical receiver assembly 106. As noted previously, "optical transmitter assembly," or "OTA," may refer to an optical narrowcasting element adapted to transmit one or more optical beams, and can include certain electronics and/or circuitry, software and/or firmware, and one or more optical transmitters, which will be described in greater detail below with reference to FIG. 2. As illustrated in FIG. 1, optical transmitter assembly 104 may transmit one or more optical beams into a medium, such as air. As alluded to previously, an optical beam may comprise one or more of an optical beacon and an optical signal.

Optical transmitter assembly 104 may receive, modulate, convert, and/or otherwise process digital information into an optical format for transmission as an optical beam to be received by optical receiver assembly 106. The digital information may be received by optical transmitter assembly 104 from one or more sources, e.g., source device 102. Source device 102 may be a computer tablet, smartphone, data server, or other information source.
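As one illustration of converting digital information into an optical format, the sketch below uses non-return-to-zero on-off keying (OOK), in which the source is driven at full intensity for each 1 bit and turned off for each 0 bit. OOK, the most-significant-bit-first ordering, and the `samples_per_bit` and `peak_intensity` parameters are assumptions chosen for simplicity; nothing here limits optical transmitter assembly 104 to this modulation scheme.

```python
from typing import List


def bytes_to_bits(data: bytes) -> List[int]:
    """Unpack bytes into bits, most-significant bit first."""
    return [(byte >> (7 - i)) & 1 for byte in data for i in range(8)]


def ook_waveform(data: bytes, samples_per_bit: int, peak_intensity: float = 1.0) -> List[float]:
    """Non-return-to-zero on-off keying: each 1 bit drives the source at
    peak_intensity for one bit period; each 0 bit turns it off."""
    if samples_per_bit < 1:
        raise ValueError("samples_per_bit must be >= 1")
    wave: List[float] = []
    for bit in bytes_to_bits(data):
        wave.extend([peak_intensity if bit else 0.0] * samples_per_bit)
    return wave
```

Each byte therefore occupies 8 × `samples_per_bit` samples of the drive waveform.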

Optical transmitter assembly 104 may be installed on various fixed structures, such as buildings, billboards, road signs, and the like. It may also be installed on vehicles such as automobiles and buses. It should be understood that these installations are merely examples and not limiting in any way. Optical transmitter assembly 104 may also be incorporated into portable and/or handheld devices, such as smartphones, tablet computers, and head mounted displays, or it may be incorporated into devices intended to be attached to, or kept in close proximity to, portable and/or handheld devices, such as smartphone cases and cases for tablet computers. It should be understood that the devices mentioned here are merely examples and not limiting in any way. Moreover, although optical transmitter assembly 104 is illustrated as being associated with a single source device 102, optical transmitter assembly 104, in some embodiments, may be associated with and/or receive digital information from additional source devices.

Optical receiver assembly 106 may be installed on various fixed structures, such as buildings, billboards, road signs, and the like. It may also be installed on vehicles such as automobiles and buses. It should be understood that these installations are merely examples and not limiting in any way. Optical receiver assembly 106 may also be incorporated into portable and/or handheld devices, such as smartphones, tablet computers, and head mounted displays, or it may be incorporated into devices intended to be attached to, or kept in close proximity to, portable and/or handheld devices, such as smartphone cases and cases for tablet computers. It should be understood that the devices mentioned here are merely examples and not limiting in any way. Moreover, although optical receiver assembly 106 is illustrated as being associated with a single user device 108, optical receiver assembly 106, in some embodiments, may be associated with, controlled by, and/or share digital information with additional user devices.

Optical receiver assembly 106 may be an optical narrowcasting element adapted to receive one or more optical beams, and can include certain electronics and/or circuitry, software and/or firmware, and one or more optical receivers, which will be described in detail below with reference to FIG. 4. Optical receiver assembly 106 may receive an optical beam and demodulate, convert, and/or otherwise process the optical beam back into digital information. Optical receiver assembly 106 may transmit or forward the digital information to a receiving device, such as user device 108. User device 108 may be a computer tablet, smartphone, network server, or other device capable of receiving and/or utilizing the digital information or data. Optical receiver assembly 106 may be integrated with user device 108 or optical receiver assembly 106 may be operatively attached to user device 108. It should be noted that optical receiver assembly 106 need not be associated with only a single user device. In some embodiments, optical receiver assembly 106 may transmit or forward received digital information to more than one user device, e.g., via broadcasting, multicasting, etc.
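The reverse conversion performed by optical receiver assembly 106, together with forwarding the result to more than one user device, might look like the following sketch. It assumes non-return-to-zero on-off keying with most-significant-bit-first byte packing, bit recovery by sampling the middle of each bit period against a fixed threshold, and user devices represented as simple callables; all of these are illustrative assumptions.

```python
from typing import Callable, Iterable, List


def ook_demodulate(samples: List[float], samples_per_bit: int, threshold: float) -> List[int]:
    """Recover bits by sampling the middle of each bit period and comparing to threshold."""
    n_bits = len(samples) // samples_per_bit
    mid = samples_per_bit // 2
    return [1 if samples[k * samples_per_bit + mid] > threshold else 0 for k in range(n_bits)]


def bits_to_bytes(bits: List[int]) -> bytes:
    """Pack bits (MSB first) into bytes; trailing bits that do not fill a byte are dropped."""
    out = bytearray()
    for k in range(0, len(bits) - len(bits) % 8, 8):
        value = 0
        for bit in bits[k:k + 8]:
            value = (value << 1) | bit
        out.append(value)
    return bytes(out)


def forward(data: bytes, devices: Iterable[Callable[[bytes], None]]) -> int:
    """Deliver decoded data to every registered user device; returns the delivery count."""
    count = 0
    for deliver in devices:
        deliver(data)
        count += 1
    return count
```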

It should be noted that although FIG. 1 depicts one-way communications between optical transmitter assembly 104 and optical receiver assembly 106, an optical narrowcasting system may also involve two-way communications. For example, source device 102 and user device 108 may each have respective optical transmitter and optical receiver assemblies integrated therein or operatively attached thereto. Optical beams may, in some cases, be in the visible or near-IR bands. Optical beams may be produced using incoherent sources (e.g., light emitting diodes (LEDs)), lasers, or other appropriate light sources. Depending on the application, different angular beam widths can be used. Optical beams may propagate from an optical transmitter assembly directly to an optical receiver assembly along an unobstructed line of sight (LOS), or they may propagate along an indirect, non-LOS path, utilizing diffuse reflections from, for example, ceilings, walls, or other structures, or from suspensions of small particles (e.g., airborne dust) or liquid droplets (e.g., clouds or fog). As illustrated in FIG. 21, two or more identical modular transmitter-optics units may be used to produce combined beams having increased horizontal and/or vertical angular beam widths, and/or increased intensity within certain solid-angular regions.

An ad hoc network (i.e., a communications network established directly between two or more computers or other devices) need not rely on a base station or other centralized access point. Such communications networks are generally established on a temporary basis between a small number of participants in close physical proximity for a specific common purpose, such as sharing a set of documents being written by the participants or playing multi-player computer games. In some embodiments, two or more user devices (one embodiment of which can be user device 108) may each comprise optical transmitter assemblies and optical receiver assemblies (embodiments of which can be optical transmitter assembly 104 and optical receiver assembly 106 of FIG. 1). The two or more user devices may be used to transmit and receive data via optical beams, thereby creating an ad hoc optical narrowcasting network.

FIG. 2A illustrates example components that may make up optical transmitter assembly 104. Optical transmitter assembly 104 may include a data interface 104a. Data interface 104a may comprise electronics and/or circuitry, as well as associated software (and/or firmware) adapted to provide an interface between optical transmitter assembly 104 and source device 102 (and/or a user of source device 102). For example, optical transmitter assembly 104 may be controlled by source device 102 via data interface 104a. Data interface 104a may communicate with source device 102 by way of a hardwired and/or wireless (e.g., Bluetooth®) connection. One or more software applications on source device 102 may allow data files to be uploaded to a memory unit of optical transmitter assembly 104 via data interface 104a. These one or more software applications may also allow a user to send commands instructing optical transmitter assembly 104 to optically transmit the contents of one or more data files that have been uploaded to optical transmitter assembly 104. The user may also be able to specify values, such as bit rate, optical output intensity, pulse duty cycle, and other relevant operating parameters for optical transmitter assembly 104.
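The operating parameters that a user may specify through data interface 104a could be bundled into a validated settings record before being sent to optical transmitter assembly 104. The class name, field names, units, JSON encoding, and the on-time calculation (duty cycle divided by bit rate, assuming one pulse per bit period) are hypothetical choices made for illustration.

```python
import json
from dataclasses import asdict, dataclass


@dataclass(frozen=True)
class OtaSettings:
    """Hypothetical operating parameters sent from source device 102 to the OTA."""
    bit_rate_bps: float               # data-transmission rate, bits per second
    output_intensity_w_per_sr: float  # optical output (radiant) intensity, W/sr
    duty_cycle: float                 # fraction of each pulse period the source is on

    def __post_init__(self) -> None:
        # Reject settings the transmitter could not honor.
        if self.bit_rate_bps <= 0:
            raise ValueError("bit rate must be positive")
        if self.output_intensity_w_per_sr < 0:
            raise ValueError("output intensity cannot be negative")
        if not 0.0 < self.duty_cycle <= 1.0:
            raise ValueError("duty cycle must be in (0, 1]")

    def pulse_on_time_s(self) -> float:
        """On-time of one pulse, assuming one pulse per bit period."""
        return self.duty_cycle / self.bit_rate_bps

    def to_json(self) -> str:
        """Serialize for transfer over a hardwired or wireless data interface."""
        return json.dumps(asdict(self), sort_keys=True)
```

Validating in `__post_init__` means an out-of-range value is caught on the source device, before any command reaches the transmitter.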

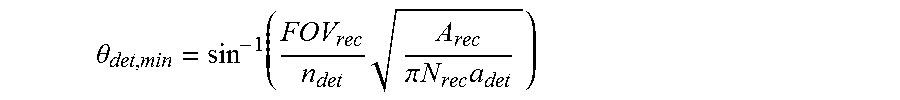

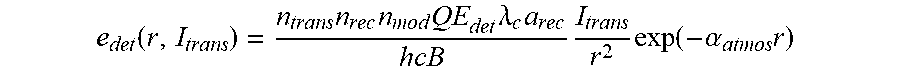

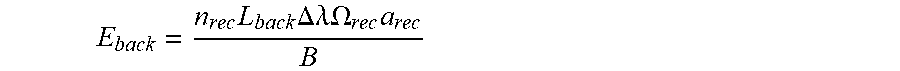

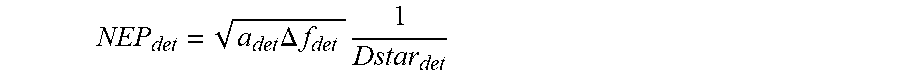

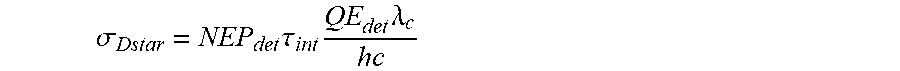

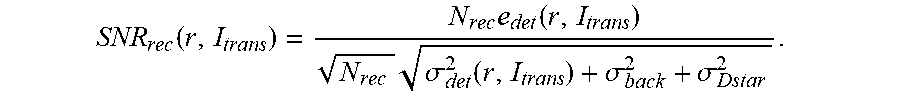

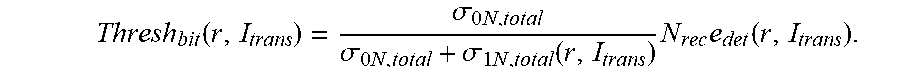

Optical transmitter assembly 104 may include control electronics 104b. Control electronics 104b may receive the above-noted values input by the user and use them to control operation of optical transmitter assembly 104. For example, control electronics 104b may supply timing pulses and electrical power to the optical transmitters and may control the operation of one or more optical transmitters, e.g., optical beacon transmitter 104c and optical signal transmitter 104d (for example, by turning them on and off, setting their data-transmission rate, etc.). Control electronics 104b may effectuate the transfer of digital data to one or more of the optical transmitters to be output as one or more digitally modulated optical beams.
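The roles attributed to control electronics 104b (switching individual transmitters on and off, setting their data-transmission rates, and routing digital data to them) can be modeled with a small sketch. The class names, the queue standing in for the modulation hardware, and the air-time estimate (which ignores any framing overhead) are assumptions for illustration only.

```python
from dataclasses import dataclass, field
from typing import Dict, List


@dataclass
class OpticalTransmitter:
    """Hypothetical stand-in for an optical transmitter such as 104c or 104d."""
    name: str
    enabled: bool = False
    bit_rate_bps: float = 0.0
    queue: List[bytes] = field(default_factory=list)  # data awaiting modulation


class ControlElectronics:
    """Hypothetical model of control electronics 104b."""

    def __init__(self) -> None:
        self.transmitters: Dict[str, OpticalTransmitter] = {
            "beacon": OpticalTransmitter("optical beacon transmitter 104c"),
            "signal": OpticalTransmitter("optical signal transmitter 104d"),
        }

    def configure(self, which: str, enabled: bool, bit_rate_bps: float) -> None:
        """Turn a transmitter on or off and set its data-transmission rate."""
        if bit_rate_bps < 0:
            raise ValueError("bit rate cannot be negative")
        tx = self.transmitters[which]
        tx.enabled = enabled
        tx.bit_rate_bps = bit_rate_bps

    def send(self, which: str, data: bytes) -> bool:
        """Hand data to a transmitter for output as a modulated beam.
        Returns False if the transmitter is off or has no rate set."""
        tx = self.transmitters[which]
        if not tx.enabled or tx.bit_rate_bps <= 0:
            return False
        tx.queue.append(data)
        return True

    def airtime_s(self, which: str, data: bytes) -> float:
        """Seconds needed to send data at the configured rate (no framing overhead)."""
        rate = self.transmitters[which].bit_rate_bps
        if rate <= 0:
            raise ValueError("transmitter has no data rate set")
        return len(data) * 8 / rate
```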