Method and apparatus for individualized three dimensional display calibration

Fateh , et al. July 30, 2

U.S. patent number 10,368,059 [Application Number 14/874,313] was granted by the patent office on 2019-07-30 for method and apparatus for individualized three dimensional display calibration. This patent grant is currently assigned to Atheer, Inc. The grantee listed for this patent is Atheer, Inc. Invention is credited to Nathan Abercrombie, Sina Fateh, Sleiman Itani.


| United States Patent | 10,368,059 |
|---|---|
| Fateh, et al. | July 30, 2019 |

Method and apparatus for individualized three dimensional display calibration

Abstract

A target is output at an ideal position in 3D space. A viewer indicates the apparent position of the target, and the indication is sensed. An offset between the ideal and apparent positions is determined, and an adjustment is determined from the offset such that the apparent position of the ideal position with the adjustment matches the ideal position without the adjustment. The adjustment is made to the first entity and/or a second entity, such that the entities appear to the viewer in the ideal position. The indication may be monocular with a separate indication for each eye, or binocular with a single viewer indication for both eyes. The indication also may serve as communication, such as a PIN input, so that calibration is transparent to the viewer. The method may be continuous, intermittent, or otherwise ongoing over time.

| Inventors: | Fateh; Sina (Mountain View, CA), Abercrombie; Nathan (Oakland, CA), Itani; Sleiman (East Palo Alto, CA) |
|---|---|
| Applicant: | |
| Assignee: | Atheer, Inc. (Mountain View, CA) |
| Family ID: | 58446983 |
| Appl. No.: | 14/874,313 |
| Filed: | October 2, 2015 |

Prior Publication Data

| Document Identifier | Publication Date |
|---|---|
| US 20170099482 A1 | Apr 6, 2017 |

| Current U.S. Class: | 1/1 |

| Current CPC Class: | H04N 13/332 (20180501); G02B 27/0172 (20130101); H04N 13/327 (20180501); H04N 13/128 (20180501); H04N 13/383 (20180501); H04N 13/344 (20180501); H04N 2213/002 (20130101); G02B 2027/0134 (20130101); G02B 2027/0178 (20130101); G02B 2027/0138 (20130101) |

| Current International Class: | H04N 13/122 (20180101); H04N 13/332 (20180101); H04N 13/128 (20180101); H04N 13/344 (20180101); H04N 13/383 (20180101); H04N 13/327 (20180101); G02B 27/01 (20060101) |


Assistant Examiner: Dang; Philip P.

Attorney, Agent or Firm: Mohr Intellectual Property Law Solutions, PC

Claims

We claim:

1. A method, comprising:

defining, by a processor, a first coordinate in three-dimensional (3D) space to display a virtual object to a viewer, wherein the three-dimensional space is an augmented reality environment or a virtual reality environment;

displaying, by a display, the virtual object at the first coordinate in the 3D space;

sensing, by a sensor, an end-effector interacting with the virtual object when the virtual object is displayed at the first coordinate;

determining, by the processor, that the end-effector is pointing to a second coordinate in the 3D space that is different than the first coordinate where the virtual object is displayed;

determining, by the processor, an offset value between the first coordinate where the virtual object is located and the second coordinate where the end-effector is pointing to, wherein the offset value indicates a difference in a first coordinate and the second coordinate;

determining, by the processor, a third coordinate in the 3D space, wherein the third coordinate is the first coordinate of the first coordinate adjusted by the offset value so that the viewer perceives the virtual object as being located at the first coordinate in the 3D space;

displaying, by the display, the virtual object at the third coordinate in the 3D space;

sensing, by the sensor, the end-effector interacting with the virtual object when the virtual object is displayed at the third coordinate;

determining, by the processor, whether the end-effector is pointing to the first coordinate in the 3D space when the virtual object is displayed at the third coordinate;

in response to the end-effector pointing to the first coordinate in the 3D space, continuing to display the virtual object at the third coordinate; and

in response to the end-effector pointing to a coordinate in the 3D space that is not the first coordinate, iteratively adjusting, by the processor, a coordinate that the virtual object is displayed at until the end-effector points to the first coordinate.

2. The method of claim 1, wherein displaying the virtual object as the first coordinate further comprises displaying the virtual object to a first display and a second display of a stereo display pair.

3. The method of claim 1, wherein displaying the virtual object in the first coordinate further comprises: displaying the virtual object at a first location at a first display of a stereo display pair; and displaying the virtual object in a second location at a second display of the stereo display pair.

4. The method of claim 2, wherein the first coordinate is a first two-dimensional coordinate and the second coordinate is a second two dimensional coordinate.

5. The method of claim 1, wherein displaying the first virtual object at the first coordinate comprises: displaying the virtual object at a first location to a first eye of the viewer but not a second eye of the viewer; and displaying the virtual object at a second location to the second eye of the viewer but not the first eye of the viewer.

6. The method of claim 5, wherein displaying the virtual object at the first coordinate further comprises displaying the virtual object substantially simultaneously to the first eye and the second eye.

7. The method of claim 5, wherein displaying the virtual object at the first coordinate further comprises displaying the virtual object sequentially to the first eye and the second eye.

8. The method of claim 7, wherein displaying the virtual object at the first coordinate further comprises: displaying substantially nothing to the second eye while displaying the virtual object to the first eye; and displaying substantially nothing to the first eye while displaying the virtual object to the second eye.

9. The method of claim 7, wherein displaying the virtual object at the first coordinate further comprises: substantially obstructing the second eye while displaying the virtual object to the first eye; and substantially obstructing the first eye while displaying the virtual object to the second eye.

10. The method of claim 1, wherein determining that the viewer perceives the virtual object as being located at the second coordinate that is different than the first coordinate in the 3D space further comprises sensing at least one of a posture or a gesture of the viewer.

11. The method of claim 1, wherein determining that the viewer perceives the virtual object as being located at the second coordinate that is different than the first coordinate in the 3D space further comprises sensing, by the sensor, the viewer substantially aligning an end-effector with the second location of the virtual object.

12. The method of claim 6, wherein determining that the viewer perceives the virtual object as being located at the second coordinate that is different than the first coordinate in the 3D space further comprises: sensing, by the sensor, the viewer substantially aligning a first end-effector with the first coordinate of the virtual object; and sensing, by the sensor, the viewer substantially aligning the first end-effector with the second coordinate of the virtual object, substantially simultaneously to the viewer substantially aligning the first end-effector with the first coordinate of the virtual object.

13. The method of claim 7, wherein determining that the viewer perceives the virtual object as being located at the second coordinate that is different than the first coordinate in the 3D space further comprises: sensing, by the sensor, the viewer substantially aligning a first end-effector with the first coordinate of the virtual object; and sensing, by the sensor, the viewer substantially aligning the first end-effector with the second coordinate of the virtual object.

14. The method of claim 7, wherein determining that the viewer perceives the virtual object as being located at the second coordinate that is different than the first coordinate in the 3D space further comprises: sensing, by the sensor, the viewer substantially aligning a first end-effector with the first coordinate of the virtual object; and sensing, by the sensor, the viewer substantially aligning a second end-effector with the second coordinate of the virtual object.

15. The method of claim 1, wherein the virtual object comprises at least one of a virtual reality object or an augmented reality object.

16. The method of claim 1, comprising outputting the virtual object to a stereo display.

17. The method of claim 1, comprising outputting the virtual object to a see-through display.

18. The method of claim 1, wherein determining that the viewer perceives the virtual object as being located at the second coordinate that is different than the first coordinate in the 3D space further comprises determining that the viewer perceives the virtual object as being located at the second coordinate that is different than the first coordinate in the 3D space through stereo imaging.

19. The method of claim 1, wherein determining that the viewer perceives the virtual object as being located at the second coordinate that is different than the first coordinate in the 3D space further comprises determining that the viewer perceives the virtual object as being located at the second coordinate that is different than the first coordinate in the 3D space through depth imaging.

20. The method of claim 1, wherein determining that the viewer perceives the virtual object as being located at the second coordinate that is different than the first coordinate in the 3D space further comprises receiving a viewer communication.

21. The method of claim 20, wherein the viewer communication comprises at least one of: activating a device in communication with the processor; activating a data entity comprising executable instructions instantiated by the processor; waking the device in communication with the processor; waking the data entity comprising executable instructions instantiated by the processor; unlocking the device in communication with the processor; unlocking the data entity comprising executable instructions instantiated by the processor; addressing the device in communication with the processor; addressing the data entity comprising executable instructions instantiated by the processor; identifying a user of the device in communication with the processor; identifying the user of the data entity comprising executable instructions instantiated by the processor; entering a security verification for the device in communication with the processor; and entering the security verification for the data entity comprising executable instructions instantiated by the processor.

22. A method, comprising:

defining, by a processor, a first coordinate in an augmented reality environment or a virtual reality environment to display a first virtual object to a viewer;

displaying, by a display, the first virtual object at the first coordinate in the augmented reality environment or the virtual reality environment;

sensing, by a sensor, an end-effector interacting with the virtual object when the virtual object is displayed at the first coordinate;

determining, by the processor, that the end-effector is pointing to a second coordinate in the 3D space that is different than the first coordinate where the virtual object is displayed;

determining, by the processor, an offset value between the first coordinate where the virtual object is located and the second coordinate where the end-effector is pointing to, wherein the offset value indicates a difference in a first coordinate and the second coordinate;

determining, by the processor, a third coordinate in the augmented reality environment or the virtual reality environment, wherein the third coordinate is the first coordinate of the first coordinate adjusted by the offset value so that the viewer perceives the virtual object as being located at the first coordinate in the augmented reality environment or the virtual reality environment;

generating a second virtual object that is different than the first virtual object;

defining, by the processor, a fourth coordinate in the augmented reality environment or the virtual reality environment to display the second virtual object to the viewer;

adjusting the fourth coordinate that the second virtual object is displayed at based on the offset value;

displaying, by the display, the first virtual object at the first coordinate in the augmented reality environment or the virtual reality environment; and

displaying the second virtual object at the adjusted fourth coordinate.

23. The method of claim 22, comprising sequentially displaying the first virtual object and the second virtual object.

24. The method of claim 22, comprising: displaying the first virtual object by a first display while outputting substantially nothing by a second display; and displaying the second virtual object by the second display while outputting substantially nothing by the first display.

25. The method of claim 22, comprising: displaying the first virtual object by a first display while substantially obstructing a second display; and displaying the second virtual object by the second display while substantially obstructing the first display.

26. The method of claim 22, further comprising sensing a viewer first indication of the first virtual object and a viewer second indication of the second virtual object.

27. The method of claim 22, wherein displaying the first virtual object and the second virtual object at the third coordinate further comprises displaying the first virtual object by a first display and substantially simultaneously displaying the second virtual object by a second display.

28. The method of claim 22, wherein determining that the viewer perceives the first virtual object as being located at the second coordinate further comprises sensing substantially simultaneous a perceived location of the first virtual object and the second virtual object.

29. The method of claim 22, wherein determining that the viewer perceives the first virtual object as being located at the second coordinate further comprises sensing at least one of a viewer posture or a viewer gesture.

30. The method of claim 22, wherein determining that the viewer perceives the first virtual object as being located at the second coordinate further comprises sensing a first end-effector aligning with the second coordinate of the first virtual object.

31. The method of claim 30, wherein determining that the viewer perceives the first virtual object as being located at the second coordinate further comprises sensing the viewer substantially aligning the first end-effector with the second coordinate of the first virtual object and substantially simultaneously sensing the viewer substantially aligning the first end-effector with the first coordinate of the first virtual object.

32. The method of claim 30, wherein determining that the viewer perceives the first virtual object as being located at the second coordinate further comprises: sensing the viewer substantially aligning the first end-effector with the first coordinate of the first virtual object; and sensing the viewer substantially aligning the first end-effector with the second coordinate of the first virtual object.

33. The method of claim 30, wherein determining that the viewer perceives the first virtual object as being located at the second coordinate further comprises: sensing the viewer substantially aligning the first end-effector with the first coordinate of the first virtual object; and sensing the viewer substantially aligning a second end-effector with the second coordinate of the first virtual object.

34. The method of claim 30, wherein determining that the viewer perceives the first virtual object as being located at the second coordinate further comprises: sensing the viewer substantially aligning the first end-effector with the first coordinate of the first virtual object; and sensing the viewer substantially aligning the first end-effector with the second coordinate of the first virtual object substantially simultaneously to the viewer substantially aligning the first end-effector with the first coordinate of the first virtual object.

35. The method of claim 22, wherein the first virtual object comprises at least one of a virtual reality object or an augmented reality object.

36. The method of claim 22, wherein the display comprises a see-through display.

37. The method of claim 22, wherein determining that the viewer perceives the first virtual object as being located at the second coordinate that is different than the first coordinate in the augmented reality environment or the virtual reality environment further comprises determining that the viewer perceives the first virtual object as being located at the second coordinate that is different than the first coordinate in the augmented reality environment or the virtual reality environment through stereo imaging.

38. The method of claim 22, wherein determining that the viewer perceives the first virtual object as being located at the second coordinate that is different than the first coordinate in the augmented reality environment or the virtual reality environment further comprises determining that the viewer perceives the first virtual object as being located at the second coordinate that is different than the first coordinate in the augmented reality environment or the virtual reality environment through depth imaging.

39. An apparatus comprising:

a first three-dimensional (3D) display operable to output a virtual object at a first coordinate in three-dimensional (3D) space to a first eye of a viewer;

a second 3D display operable to output the virtual object at a second coordinate in the 3D space to a second eye of the viewer, wherein the first coordinate is different than the first coordinate;

a first sensor configured to measure the first coordinate of an end-effector interacting with the virtual object relative to the first eye when the virtual object is displayed at the first coordinate;

a second sensor configured to measure the second coordinate of the end-effector interacting with the virtual object relative to the second eye when the virtual object is displayed at the second coordinate;

a processor coupled to the first 3D display, the second 3D display, the first sensor, and the second sensor, wherein the processor is configured to:

determine that the end-effector is pointing to a third coordinate on the first 3D display that is different than the first coordinate where the virtual object is displayed;

determine that the end-effector is pointing to a fourth coordinate on the second 3D display that is different than the second coordinate where the virtual object is displayed;

determine a first offset value between the first coordinate where the virtual object is located and the third coordinate where the end-effector is pointing to on the first display, wherein the first offset value indicates a difference in the first coordinate and the third coordinate;

determine a second offset value between the second coordinate where the virtual object is located and the fourth coordinate where the end-effector is pointing to on the second display, wherein the second offset value indicates a difference in the second coordinate and the fourth coordinate;

determine a fifth coordinate in the 3D space, wherein: the fifth coordinate is the first coordinate adjusted by the first offset value so that the viewer perceives the virtual object as being located at the first coordinate on the first 3D display; the first 3D display is to display the virtual object at the fifth coordinate on the first 3D display; and

determine a sixth coordinate in the 3D space, wherein: the sixth coordinate is the second coordinate adjusted by the second offset value so that the viewer perceives the virtual object as being located at the second coordinate on the second 3D display; and the second 3D display is to display the virtual object at the sixth coordinate on the second 3D display.

40. The apparatus of claim 39, wherein the first 3D display comprises a stereo display.

41. The apparatus of claim 39, wherein the first 3D display comprises a see-through display.

42. The apparatus of claim 39, wherein the first sensor or the second sensor comprises an imager.

43. The apparatus of claim 39, wherein the first sensor or the second sensor comprises a stereo imager pair.

44. The apparatus of claim 39, wherein the first sensor or the second sensor comprises a depth imager.

45. The apparatus of claim 39, wherein the processor, the display, and the sensor are disposed on a head-mounted display.

46. An apparatus, comprising:

a stereo display pair comprising a first display to display a virtual object to a first eye of a viewer at a first coordinate of the first display and a second display to display the virtual object to a second eye of the viewer at a second coordinate of the second display;

a sensor configured to: measure the first coordinate an end-effector interacting with the virtual object relative to the first eye of when the virtual object is displayed at the first coordinate; measure the second coordinate of the end-effector interacting with the virtual object relative to the second eye when the virtual object is displayed at the second coordinate; and

a processor coupled to the stereo display and the sensor, wherein the processor is configured to:

determine that the end-effector is pointing to a third coordinate on the first 3D display that is different than the first coordinate where the virtual object is displayed;

determine that the end-effector is pointing to a fourth coordinate on the second 3D display that is different than the second coordinate where the virtual object is displayed;

determine a first offset value between the first coordinate where the virtual object is located and the third coordinate where the end-effector is pointing to on the first display, wherein the first offset value indicates a difference in the first coordinate and the third coordinate;

determine a second offset value between the second coordinate where the virtual object is located and the fourth coordinate where the end-effector is pointing to on the second display, wherein the second offset value indicates a difference in the second coordinate and the fourth coordinate, and

simultaneously adjust the first coordinate by the first offset value and the second coordinate by the second offset value such that the first display and the second display simultaneously display the virtual object at the adjusted first coordinate and the adjusted second coordinate, respectively.

47. The apparatus of claim 46, wherein the first display and the second display comprise see-through displays.

48. The apparatus of claim 46, wherein the sensor comprises an imager.

49. The apparatus of claim 46, wherein the sensor comprises a stereo imager pair.

50. The apparatus of claim 46, wherein the sensor comprises a depth imager.

51. The apparatus of claim 46, wherein at least one of the virtual object is a calibration object.

52. The apparatus of claim 46, wherein the processor is further to combine the virtual object to form a 3D object.
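For illustration only, the display, sense, and iteratively adjust sequence recited in claim 1 might be sketched in Python as below. This is a sketch under stated assumptions, not the claimed implementation: `calibrate`, `sense_pointing`, and `simulated_viewer` are hypothetical names, the tolerance and round limit are arbitrary, and the simulated viewer (a 10% scale error plus a fixed bias) merely stands in for a real sensor reading of an end-effector.

```python
from math import dist
from typing import Callable, Tuple

Vec = Tuple[float, ...]

def calibrate(first: Vec,
              sense_pointing: Callable[[Vec], Vec],
              tolerance: float = 1e-4,
              max_rounds: int = 20) -> Vec:
    """Return a display coordinate at which the viewer points to `first`.

    `sense_pointing(shown)` is a hypothetical callback: it displays the
    object at `shown` and returns where the end-effector is pointing.
    """
    shown = first
    for _ in range(max_rounds):
        pointed = sense_pointing(shown)
        if dist(pointed, first) <= tolerance:
            return shown  # viewer points to the first coordinate: keep it
        # Offset between where the viewer points and the intended coordinate.
        offset = tuple(p - f for p, f in zip(pointed, first))
        # Move the displayed coordinate against the offset and try again.
        shown = tuple(s - o for s, o in zip(shown, offset))
    return shown  # best effort after max_rounds

def simulated_viewer(shown: Vec) -> Vec:
    """Assumed perceptual model, for demonstration only."""
    return (1.1 * shown[0] + 0.02, 1.1 * shown[1] - 0.01, 1.1 * shown[2] + 0.03)

first = (0.0, 0.0, 0.5)
third = calibrate(first, simulated_viewer)
```

With this assumed viewer model each round shrinks the pointing error by roughly a factor of ten, so the loop ends after a few rounds. A purely constant bias would be cancelled by the first correction.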

Description

FIELD OF THE INVENTION

This invention relates to output of three dimensional images. More particularly, this invention relates to approaches for individually calibrating a three dimensional display system such as a stereo display system to accommodate a viewer's particular visual parameters.

DESCRIPTION OF RELATED ART

Humans perceive depth in part through stereo vision, noting the difference in perspective between the left and right eyes when viewing the physical world. Stereo displays take advantage of this feature: by displaying different images to the left and right eyes, depth can be portrayed even if both images are two-dimensional.

However, human vision may not be uniform from one individual to another, and/or for an individual over time. For example, differences in geometry--such as the spacing between a person's eyes, the relative positions of the eyes, the physical structures of the eyes, the alignment of the eyes with one another, etc.--can affect the way a scene appears to an individual. Thus, individuals viewing a physical scene may perceive that scene differently, based on the particulars of each individual's eyes. Even if two people both perceive an object to be at a distance of (for example) 50 cm, what those two people see in making that determination may vary.

Because of this, a "one size fits all" stereo display configuration may be problematic. Displayed imagery that does not correspond with what a viewer sees when looking at a physical environment may consciously or unconsciously be perceived as unrealistic or unconvincing. In addition, variations in how a stereo display scene appears compared with how the physical world appears--from the viewer's perspective, errors (or at least apparent errors) in how the scene is displayed--may contribute (again, with or without the viewer's awareness) to issues such as disorientation, eyestrain, nausea, etc.

BRIEF SUMMARY OF THE INVENTION

The present invention contemplates a variety of systems, apparatus, methods, and paradigms for calibrating three dimensional display systems to individual viewers.

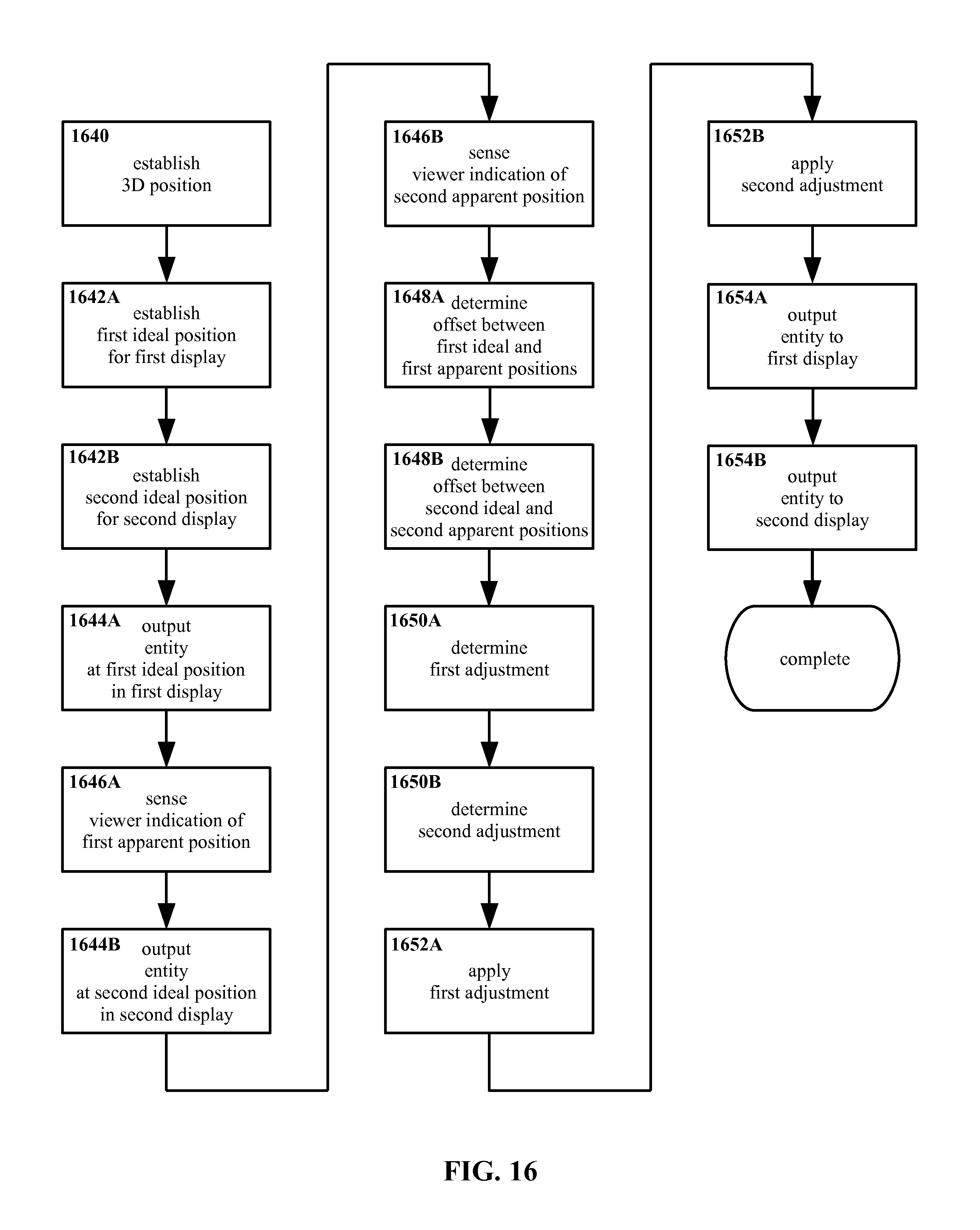

In one embodiment of the present invention, a machine-implemented method is provided that includes, in a processor, outputting a first entity in an ideal position in three-dimensional space, and sensing a viewer indication of an apparent position of the first entity. The method also includes determining an offset between the ideal position and the apparent position, and determining an adjustment from the offset, such that an apparent position of the ideal position with the adjustment is substantially similar to the ideal position absent the adjustment. Thereafter the adjustment is applied to the first entity and/or a second entity.
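As a minimal sketch of the offset and adjustment steps just described, assume positions are (x, y, z) tuples in one shared coordinate frame and that the viewer's perceptual error behaves as a constant translation. That error model is an assumption made for illustration; the text does not limit the adjustment to a translation. The function names are hypothetical.

```python
from typing import Tuple

Vec = Tuple[float, ...]

def compute_offset(ideal: Vec, apparent: Vec) -> Vec:
    """Offset of the apparent position relative to the ideal position."""
    return tuple(a - i for a, i in zip(apparent, ideal))

def adjustment_from_offset(offset: Vec) -> Vec:
    """Assumed model: a constant translation is cancelled by its negation."""
    return tuple(-o for o in offset)

def apply_adjustment(position: Vec, adjustment: Vec) -> Vec:
    """Shift an entity's output position by the adjustment."""
    return tuple(p + d for p, d in zip(position, adjustment))

ideal = (0.0, 0.0, 0.5)           # where the first entity is meant to appear
apparent = (0.02, -0.01, 0.55)    # hypothetical sensed viewer indication

offset = compute_offset(ideal, apparent)
adjustment = adjustment_from_offset(offset)

# The same adjustment can be applied to the first entity or to a second one.
adjusted_first = apply_adjustment(ideal, adjustment)
adjusted_second = apply_adjustment((0.1, 0.0, 0.6), adjustment)
```

Under the assumed translation model, an entity output at `adjusted_first` would be perceived at `adjusted_first` plus `offset`, which is the ideal position.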

Outputting the first entity in the ideal position may include outputting the first entity to first and second displays of a stereo display pair. Outputting the first entity in the ideal position may include outputting the first entity in a first ideal position to a first display of a stereo display pair, and outputting the first entity in a second ideal position to a second display of the stereo display pair. The first and second ideal positions may be substantially two-dimensional.

Outputting the first entity in the ideal position may include outputting the first entity in a first ideal position to a first eye of the viewer but not a second eye of the viewer, and outputting the first entity in a second ideal position to the second eye of the viewer but not the first eye of the viewer.

The method may include outputting the first entity substantially simultaneously to the first eye and the second eye, wherein sensing the viewer indication of the apparent position of the first entity comprises sensing a substantially simultaneous viewer first indication of a first apparent position of the first entity to the first eye and viewer second indication of a second apparent position of the first entity to the second eye.

The method may include outputting the first entity sequentially to the first eye and the second eye, wherein sensing the viewer indication of the apparent position of the first entity comprises sequentially sensing a viewer first indication of a first apparent position of the first entity to the first eye, and a viewer second indication of a second apparent position of the first entity to the second eye.

The method may include outputting substantially nothing to the second eye while outputting the first entity to the first eye, and outputting substantially nothing to the first eye while outputting the first entity to the second eye. The method may include substantially obstructing the second eye while outputting the first entity to the first eye, and substantially obstructing the first eye while outputting the first entity to the second eye.
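The sequential, one-eye-at-a-time variant could be sketched as follows. The `show` and `sense` callbacks are hypothetical stand-ins for a display driver and a sensor; here they are replaced by simple fakes so the example runs on its own, and positions are treated as 2D display coordinates by assumption.

```python
from typing import Callable, Dict, List, Optional, Tuple

Vec2 = Tuple[float, float]

def sense_offsets_sequentially(
    ideal: Dict[str, Vec2],
    show: Callable[[str, Optional[Vec2]], None],
    sense: Callable[[str], Vec2],
) -> Dict[str, Vec2]:
    """Output the entity to each eye in turn and record a per-eye offset."""
    offsets: Dict[str, Vec2] = {}
    for eye, other in (("left", "right"), ("right", "left")):
        show(other, None)       # output substantially nothing to the other eye
        show(eye, ideal[eye])   # output the entity to this eye only
        apparent = sense(eye)   # viewer indication for this eye
        offsets[eye] = (apparent[0] - ideal[eye][0],
                        apparent[1] - ideal[eye][1])
    return offsets

# Fakes for demonstration (all values are made up).
IDEAL: Dict[str, Vec2] = {"left": (0.10, 0.0), "right": (-0.10, 0.0)}
BIAS: Dict[str, Vec2] = {"left": (0.003, -0.001), "right": (-0.002, 0.001)}
frames: List[Tuple[str, Optional[Vec2]]] = []

def fake_show(eye: str, position: Optional[Vec2]) -> None:
    frames.append((eye, position))

def fake_sense(eye: str) -> Vec2:
    return (IDEAL[eye][0] + BIAS[eye][0], IDEAL[eye][1] + BIAS[eye][1])

offsets = sense_offsets_sequentially(IDEAL, fake_show, fake_sense)
```

Blanking the other display before each output keeps the two indications monocular, so each offset reflects one eye only.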

The viewer indication may include a viewer posture and/or a viewer gesture. The viewer indication may include the viewer substantially aligning an end-effector with the apparent position of the first entity.

The viewer first indication may include the viewer substantially aligning a first end-effector with the first apparent position of the first entity, and the viewer second indication may include the viewer substantially aligning the first end-effector with the second apparent position of the first entity, substantially simultaneously to the viewer substantially aligning the first end-effector with the first apparent position of the first entity.

The viewer first indication may include the viewer substantially aligning a first end-effector with the first apparent position of the first entity, and the viewer second indication may include the viewer substantially aligning the first end-effector with the second apparent position of the first entity.

The viewer first indication may include the viewer substantially aligning a first end-effector with the first apparent position of the first entity, and the viewer second indication may include the viewer substantially aligning a second end-effector with the second apparent position of the first entity.

The entity may include a virtual reality entity and/or an augmented reality entity.

The method may include outputting the first entity to a stereo display. The method may include outputting the first entity to a see-through display.

The method may include sensing the viewer indication of the apparent position through stereo imaging. The method may include sensing the viewer indication of the apparent position through depth imaging.

The viewer indication may include a viewer communication with the processor in addition to the indication of the apparent position. The viewer communication may include: activating a device in communication with the processor, activating a data entity comprising executable instructions instantiated on the processor, waking the device in communication with the processor, waking the data entity comprising executable instructions instantiated on the processor, unlocking the device in communication with the processor, unlocking the data entity comprising executable instructions instantiated on the processor, addressing the device in communication with the processor, addressing the data entity comprising executable instructions instantiated on the processor, identifying a user of the device in communication with the processor, identifying the user of the data entity comprising executable instructions instantiated on the processor, entering a security verification for the device in communication with the processor, and/or entering the security verification for the data entity comprising executable instructions instantiated on the processor.
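One way to picture an indication that doubles as a communication is a virtual keypad used for a security verification: each press identifies a digit and also yields an offset sample. The sketch below is illustrative only. The keypad layout, the nearest-key rule, and the averaging of offsets are assumptions, and `KEYPAD`, `read_press`, and `enter_pin` are hypothetical names.

```python
from math import dist
from statistics import fmean
from typing import Dict, List, Tuple

Vec3 = Tuple[float, float, float]

# Assumed ideal positions of three virtual keys (metres, viewer frame).
KEYPAD: Dict[str, Vec3] = {
    "1": (-0.05, 0.05, 0.40),
    "2": (0.00, 0.05, 0.40),
    "3": (0.05, 0.05, 0.40),
}

def read_press(fingertip: Vec3) -> Tuple[str, Tuple[float, ...]]:
    """Return the nearest key and the fingertip's offset from that key."""
    digit = min(KEYPAD, key=lambda d: dist(KEYPAD[d], fingertip))
    offset = tuple(f - k for f, k in zip(fingertip, KEYPAD[digit]))
    return digit, offset

def enter_pin(presses: List[Vec3]) -> Tuple[str, Tuple[float, ...]]:
    """Decode the entered digits and average the per-press offsets."""
    digits, offsets = zip(*(read_press(p) for p in presses))
    mean_offset = tuple(fmean(axis) for axis in zip(*offsets))
    return "".join(digits), mean_offset

# Hypothetical sensed fingertip positions for three presses.
presses = [(-0.045, 0.052, 0.42), (0.006, 0.049, 0.41), (0.054, 0.051, 0.43)]
pin, mean_offset = enter_pin(presses)
```

The viewer only enters a code, while the averaged offset is gathered as a by-product. This relies on the offsets being small compared with the key spacing, so that the nearest key is the intended one.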

In another embodiment of the present invention, a machine-implemented method is provided that includes, in a processor, establishing a substantially three-dimensional combined ideal position, determining from the combined ideal position a substantially two-dimensional first ideal position for a first display of a stereo display pair, and determining from the combined ideal position a substantially two-dimensional second ideal position for a second display of the stereo display pair. The method also includes outputting a first entity substantially to the first ideal position in the first display, and outputting the first entity substantially to the second ideal position in the second display. The method further includes sensing a viewer indication of an apparent position, determining a first position offset substantially representing a difference between the apparent position and the first ideal position, determining a second position offset substantially representing a difference between the apparent position and the second ideal position, and determining an adjustment for the combined ideal position from the first and second position offsets, such that an apparent position of the combined ideal position with the adjustment is substantially similar to the ideal position absent the adjustment. The method includes applying the adjustment to a three dimensional position of the first entity and/or a second entity, and outputting the first entity and/or second entity with the adjustment applied thereto to the stereo display pair.

The method may include sequentially outputting the first and second entities, outputting the first entity in the first display while not outputting the second target in the second display, and outputting the second entity in the second display while not outputting the first target in the first display. The method may include outputting the first target in the first display while outputting substantially nothing in the second display, and outputting the second target in the second display while outputting substantially nothing in the first display. The method may include outputting the first target in the first display while substantially obstructing the second display, and outputting the second target in the second display while substantially obstructing the first display.

Sensing the viewer indication may include sensing a viewer first indication of the first target and a viewer second indication of the second target.

The method may include outputting the first target in the first display and substantially simultaneously outputting the second target in the second display.

Sensing the viewer indication may include sensing substantially simultaneous indication of the first and second targets.

The viewer indication may include a viewer posture and/or a viewer gesture. The viewer indication may include the viewer substantially aligning an end-effector with the apparent position of the first entity.

The viewer first indication may include the viewer substantially aligning a first end-effector with the first apparent position of the first entity, and the viewer second indication may include the viewer substantially aligning the first end-effector with the second apparent position of the first entity, substantially simultaneously to the viewer substantially aligning the first end-effector with the first apparent position of the first entity.

The viewer first indication may include the viewer substantially aligning a first end-effector with the first apparent position of the first entity, and the viewer second indication may include the viewer substantially aligning the first end-effector with the second apparent position of the first entity.

The viewer first indication may include the viewer substantially aligning a first end-effector with the first apparent position of the first entity, and the viewer second indication may include the viewer substantially aligning a second end-effector with the second apparent position of the first entity.

The entity may include a virtual reality entity and/or an augmented reality entity.

The first and second displays may be see-through displays.

The method may include sensing the viewer indication of the apparent position through stereo imaging. The method may include sensing the viewer indication of the apparent position through depth imaging.

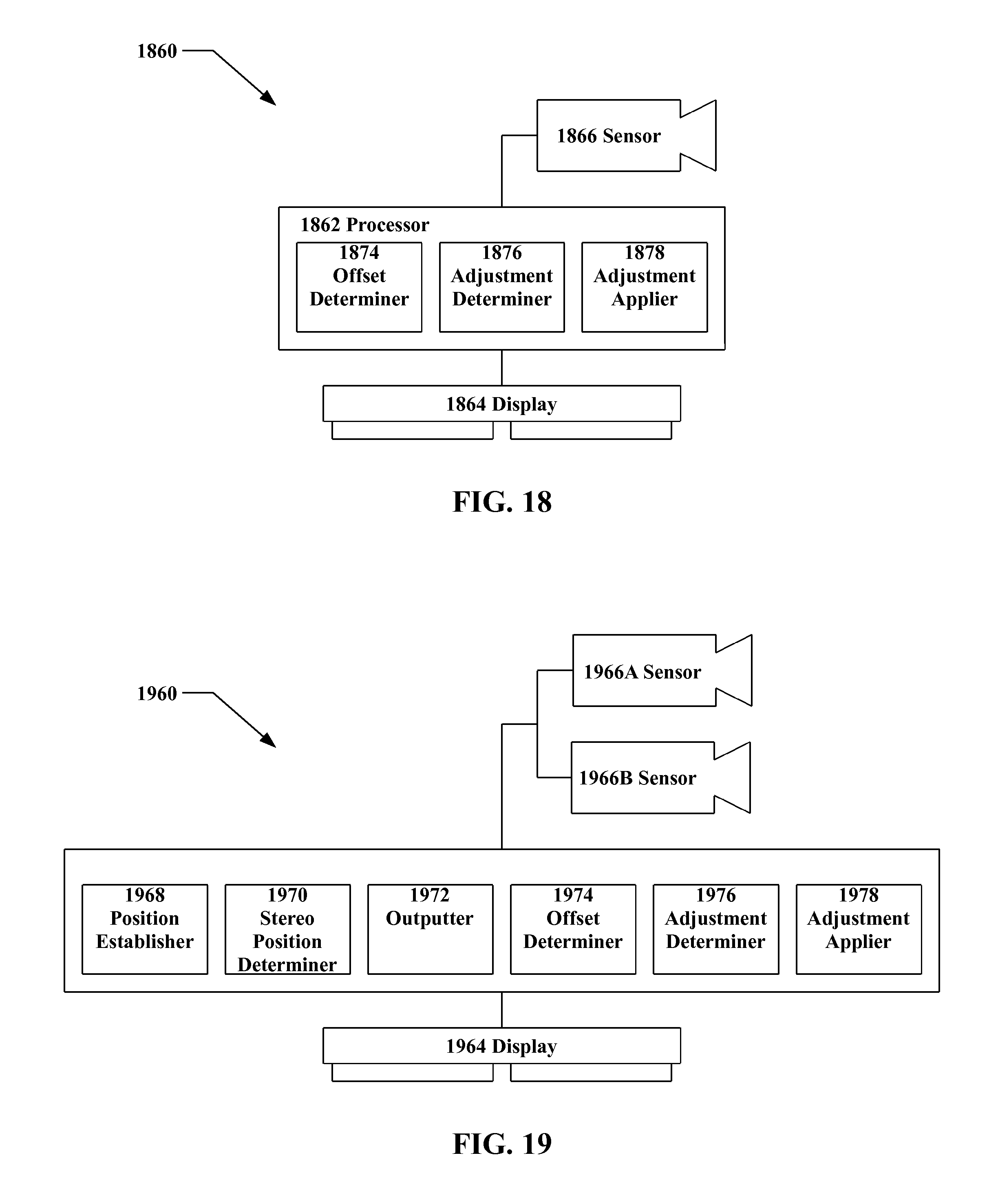

In another embodiment of the present invention, an apparatus is provided that includes a processor, a 3D display in communication with the processor, the display being adapted to output a first entity at an ideal position, and a sensor in communication with the processor, the sensor being adapted to determine a viewer indication of an apparent position of the first entity. An offset determiner is instantiated on the processor, the offset determiner including executable instructions, the offset determiner being adapted to determine an offset between the ideal position and the apparent position. An adjustment determiner is instantiated on the processor, the adjustment determiner including executable instructions, the adjustment determiner being adapted to determine an adjustment from the offset such that an apparent position of the ideal position with the adjustment is substantially similar to the ideal position absent the adjustment. An adjustment applier is instantiated on the processor, the adjustment applier including executable instructions, the adjustment applier being adapted to apply the adjustment to at least one of a group consisting of the first entity and a second entity.

The display may be a stereo display. The display may be a see-through display. The sensor may include an imager. The sensor may include a stereo imager pair. The sensor may include a depth sensor and/or depth imager. The processor, the display, and the sensor may be disposed on a head-mounted display.
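By way of illustration only, the offset determiner, adjustment determiner, and adjustment applier described above might be sketched as three small functions. The function names are hypothetical, and the sketch assumes the simplest possible error model, namely that the viewer perceives every entity displaced by the same fixed translation, so that the adjustment is merely the negated offset. Other adjustment models may be equally suitable.

```python
from typing import Tuple

Vec3 = Tuple[float, float, float]  # (x, y, z) in any consistent length unit


def determine_offset(ideal: Vec3, apparent: Vec3) -> Vec3:
    """Offset determiner: apparent position minus ideal position (assumed sign)."""
    return tuple(a - i for a, i in zip(apparent, ideal))


def determine_adjustment(offset: Vec3) -> Vec3:
    """Adjustment determiner under the assumed pure-translation error model:
    the correction is simply the negated offset."""
    return tuple(-c for c in offset)


def apply_adjustment(position: Vec3, adjustment: Vec3) -> Vec3:
    """Adjustment applier: shift the position at which an entity is outputted."""
    return tuple(p + a for p, a in zip(position, adjustment))


# Example: a target outputted at 50 cm depth is indicated by the viewer
# 1 cm to the right and 5 cm too close.
ideal = (0.0, 0.0, 50.0)
apparent = (1.0, 0.0, 45.0)
offset = determine_offset(ideal, apparent)
adjustment = determine_adjustment(offset)
adjusted_output = apply_adjustment(ideal, adjustment)
```

Under the assumed model, an entity outputted at `adjusted_output` would be perceived at `adjusted_output` plus `offset`, which is the original ideal position.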

In another embodiment of the present invention, an apparatus is provided that includes a processor, a stereo display pair in communication with the processor, the stereo display pair comprising first and second displays, the stereo display pair being adapted to output at least one entity, and a sensor in communication with the processor, the sensor being adapted to determine a viewer indication of an apparent position. A position establisher is instantiated on the processor, the position establisher including executable instructions, the position establisher being adapted to establish a substantially three-dimensional combined ideal position. A stereo position determiner is instantiated on the processor, the stereo position determiner including executable instructions, the stereo position determiner being adapted to determine a substantially two-dimensional first ideal position in the first display substantially corresponding with the combined ideal position, and to determine a substantially two-dimensional second ideal position in the second display substantially corresponding with the combined ideal position. An outputter is instantiated on the processor, the outputter including executable instructions, the outputter being adapted to output a first target substantially to the first ideal position in the first display, and to output a second target substantially to the second ideal position in the second display. An offset determiner is instantiated on the processor, the offset determiner including executable instructions, the offset determiner being adapted to determine a first position offset substantially representing a difference between the apparent position and the first ideal position, and to determine a second position offset substantially representing a difference between the apparent position and the second ideal position. An adjustment determiner is instantiated on the processor, the adjustment determiner including executable instructions, the adjustment determiner being adapted to determine an adjustment for the combined ideal position from the first and second position offsets such that an apparent position of the ideal position with the adjustment is substantially similar to the ideal position absent the adjustment. An adjustment applier is instantiated on the processor, the adjustment applier including executable instructions, the adjustment applier being adapted to apply the adjustment to the at least one entity.

The first and second displays may be see-through displays. The sensor may include an imager. The sensor may include a stereo imager pair. The sensor may include a depth sensor and/or depth imager.

In another embodiment of the present invention, an apparatus is provided that includes means for establishing a substantially three-dimensional combined ideal position, means for determining from the combined ideal position a substantially two-dimensional first ideal position for a first display of a stereo display pair, and means for determining from the combined ideal position a substantially two-dimensional second ideal position for a second display of the stereo display pair. The apparatus includes means for outputting a first target substantially to the first ideal position in the first display, and means for outputting a second target substantially to the second ideal position in the second display. The apparatus also includes means for sensing a viewer indication of an apparent position, means for determining a first position offset substantially representing a difference between the apparent position and the first ideal position, means for determining a second position offset substantially representing a difference between the apparent position and the second ideal position, and means for determining an adjustment, such that an apparent position of the ideal position with the adjustment is substantially similar to the ideal position absent the adjustment. The apparatus further includes means for applying the adjustment to a three dimensional position of at least one output entity, and means for outputting the at least one output entity to the stereo display pair.

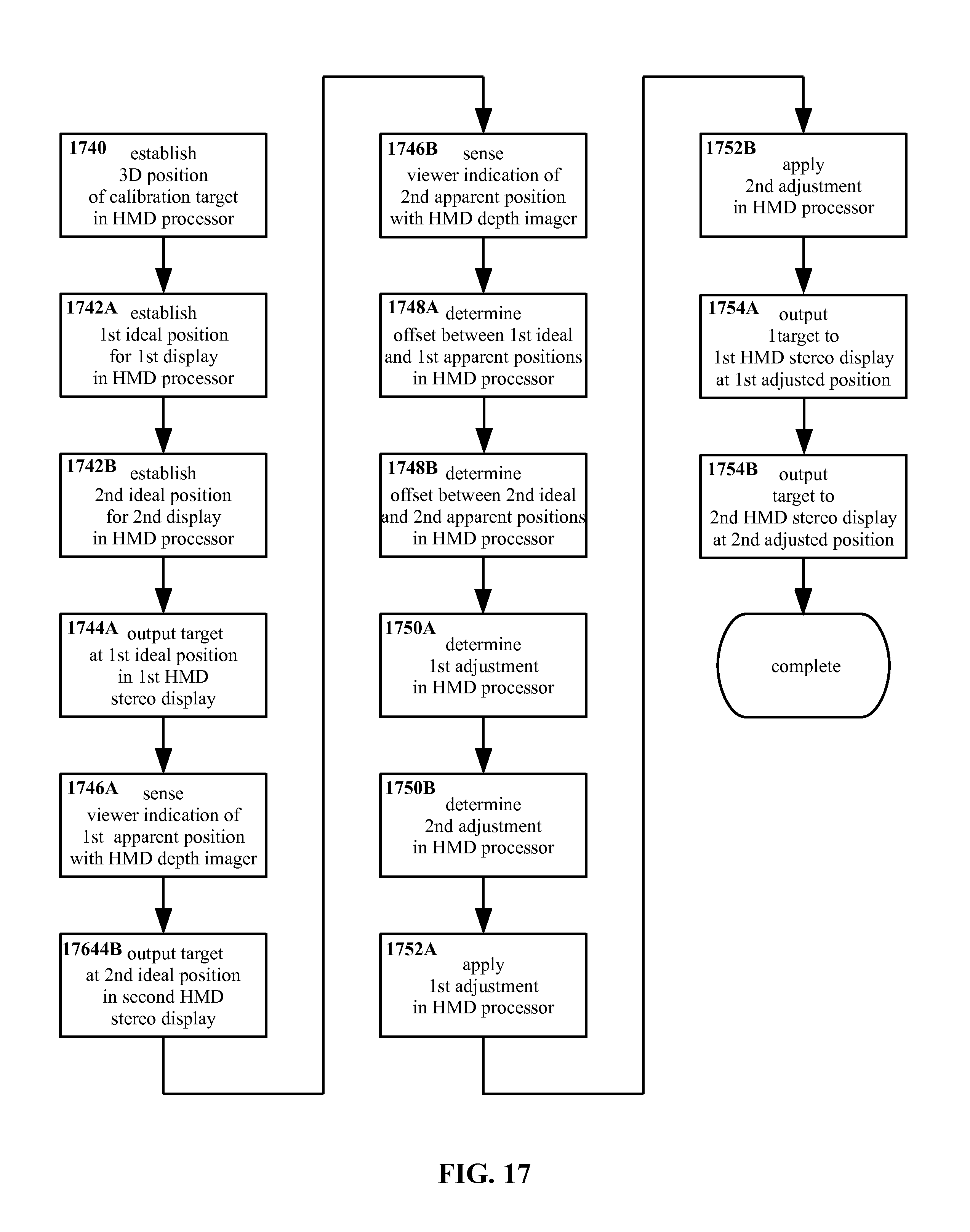

In another embodiment of the present invention, a method is provided that includes outputting to a stereo display of a head mounted display a calibration target in an ideal position in three-dimensional space. The method includes sensing with a depth imager of the head mounted display a viewer indication of the apparent position of the calibration target, determining computationally in a processor of the head mounted display an offset between the ideal position and the apparent position, and determining computationally in the processor an adjustment from the offset such that the apparent position of the ideal position with the adjustment is substantially similar to the ideal position absent the adjustment. The method also includes thereafter applying the adjustment in the processor to the calibration target and/or a data entity, and outputting the calibration target and/or data entity with the adjustment applied thereto to the stereo display.

In another embodiment of the present invention, a method is provided that includes, in a processor of a head mounted display, computationally determining a substantially three-dimensional combined ideal position for a calibration target, and in the processor computationally determining from the combined ideal position a substantially two-dimensional first ideal position for a first display of a stereo display pair of the head mounted display, and in the processor computationally determining from the combined ideal position a substantially two-dimensional second ideal position for a second display of the stereo display pair. The method includes outputting said target substantially to the first ideal position in said first display, outputting the target substantially to the second ideal position in the second display, and sensing with a sensor of the head mounted display a viewer indication of an apparent position of the target. The method also includes in the processor computationally determining a first position offset substantially representing a difference between the apparent position and the first ideal position, in the processor computationally determining a second position offset substantially representing a difference between the apparent position and the second ideal position, and in the processor computationally determining an adjustment for the combined ideal position from the first and second position offsets, such that an apparent position of said combined ideal position with said adjustment is substantially similar to said ideal position absent said adjustment. The method further includes in the processor applying the adjustment to a three dimensional position of the target, and outputting the calibration target with the adjustment applied thereto to the stereo display pair.

BRIEF DESCRIPTION OF THE SEVERAL VIEWS OF THE DRAWINGS

Like reference numbers generally indicate corresponding elements in the figures.

FIG. 1 shows a top down schematic for an example three dimensional display system according to the present invention, with an example inter-pupillary distance and apparent position.

FIG. 2 shows a top down schematic for another example three dimensional display system according to the present invention, with a different inter-pupillary distance and apparent position from FIG. 1.

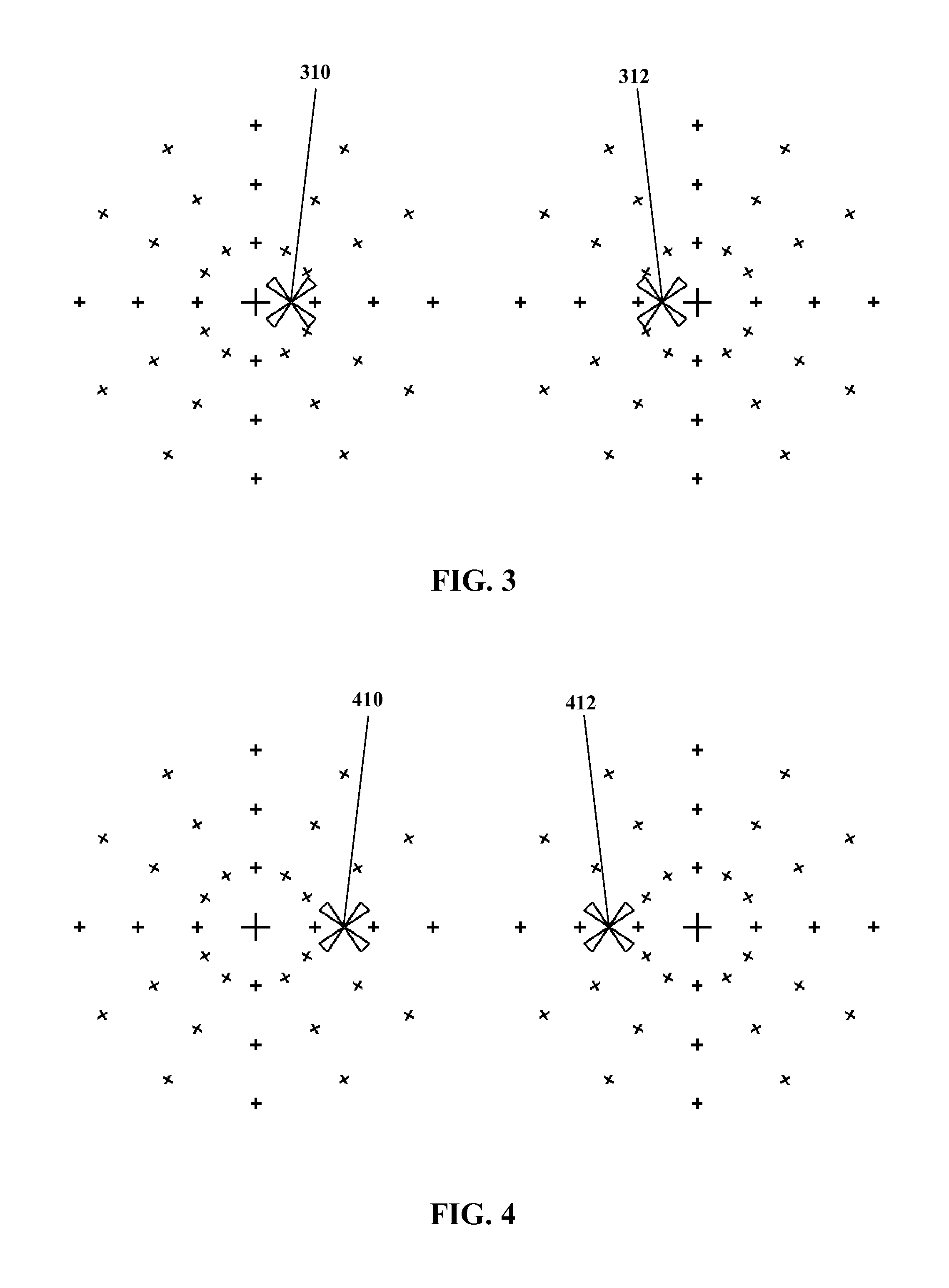

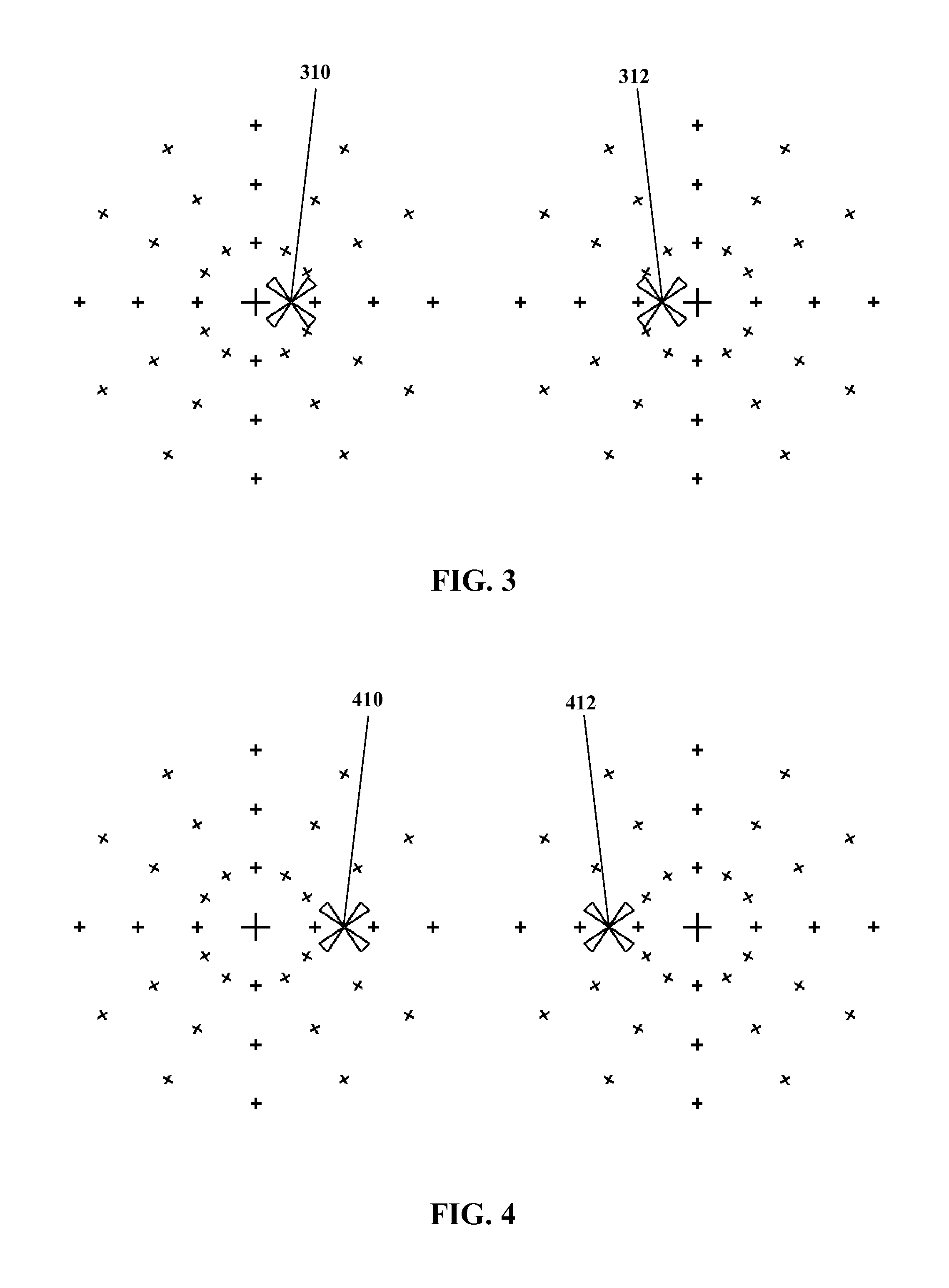

FIG. 3 shows apparent positions of images for the left and right eyes of a viewer, for an example inter-pupillary distance.

FIG. 4 shows apparent positions of images for the left and right eyes of a viewer, for a different example inter-pupillary distance from FIG. 3.

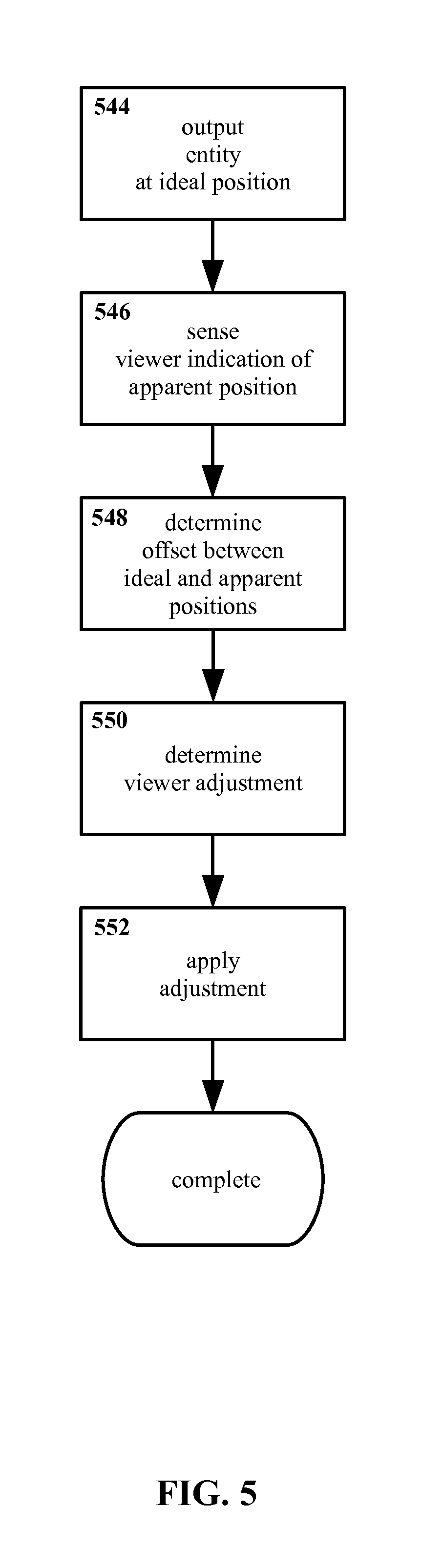

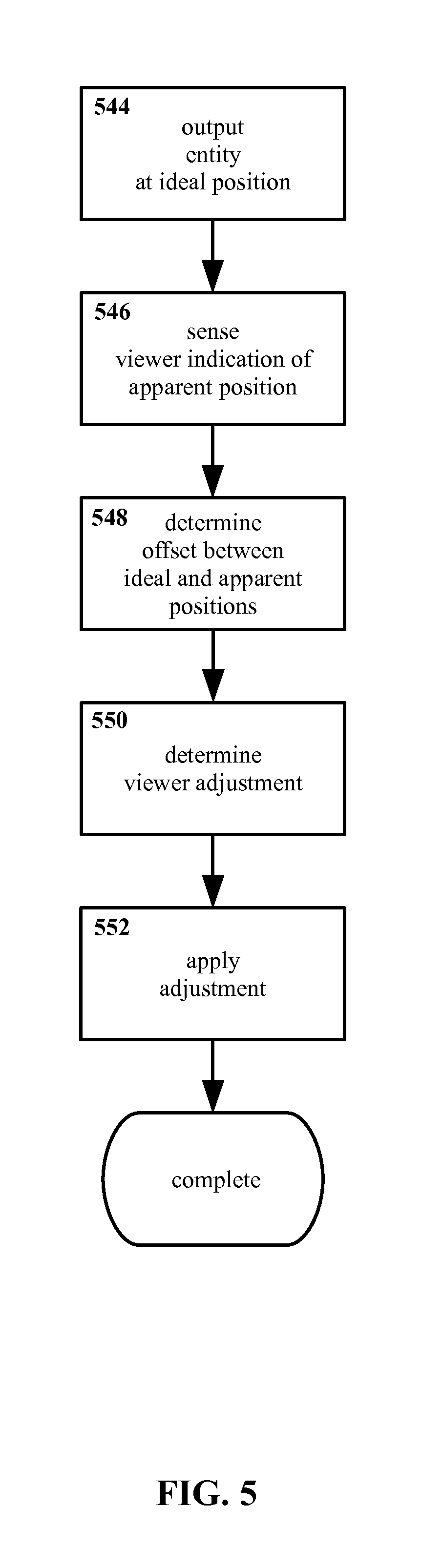

FIG. 5 shows an example method for individualized three dimensional display calibration according to the present invention, in flow-chart form.

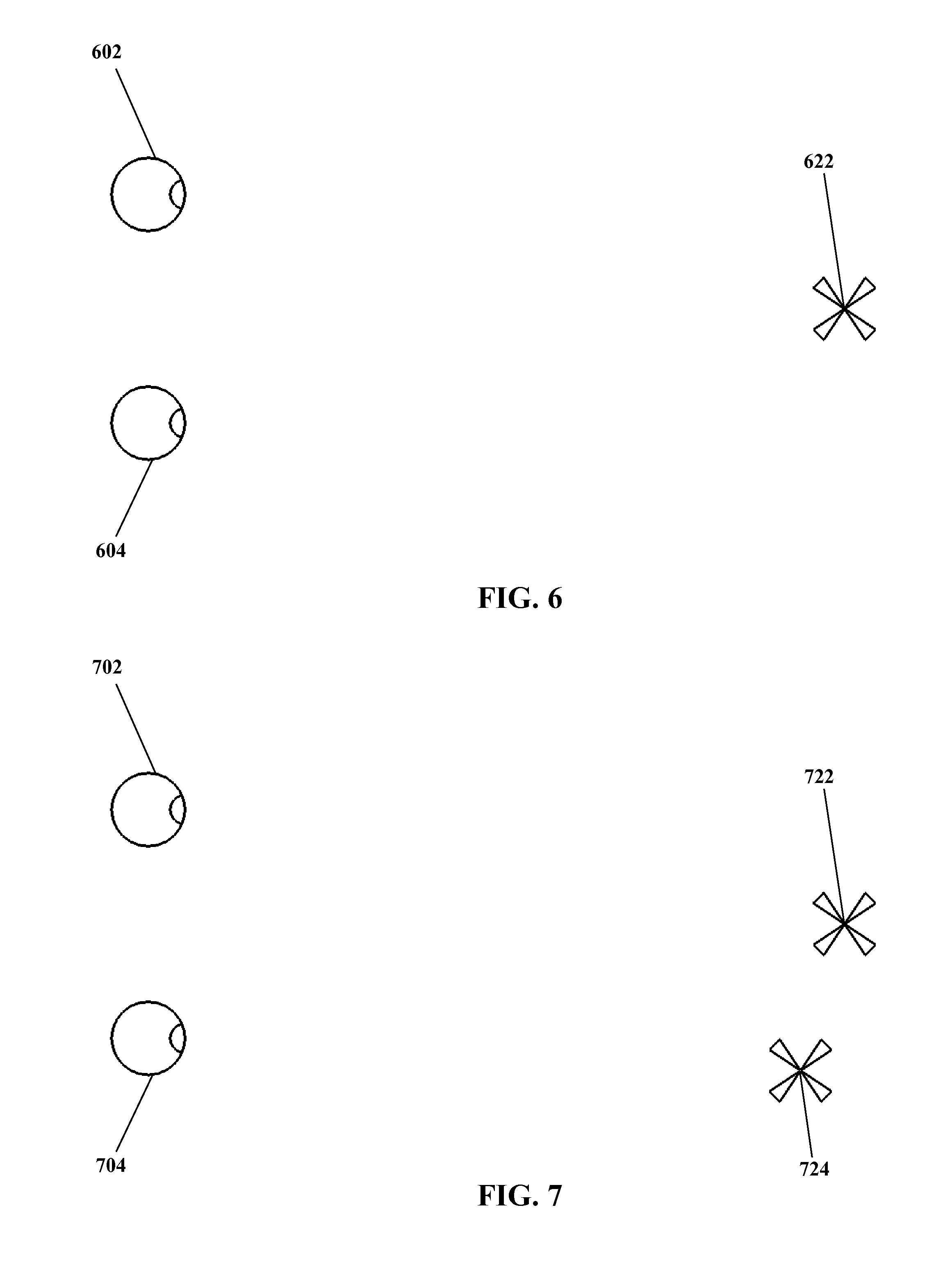

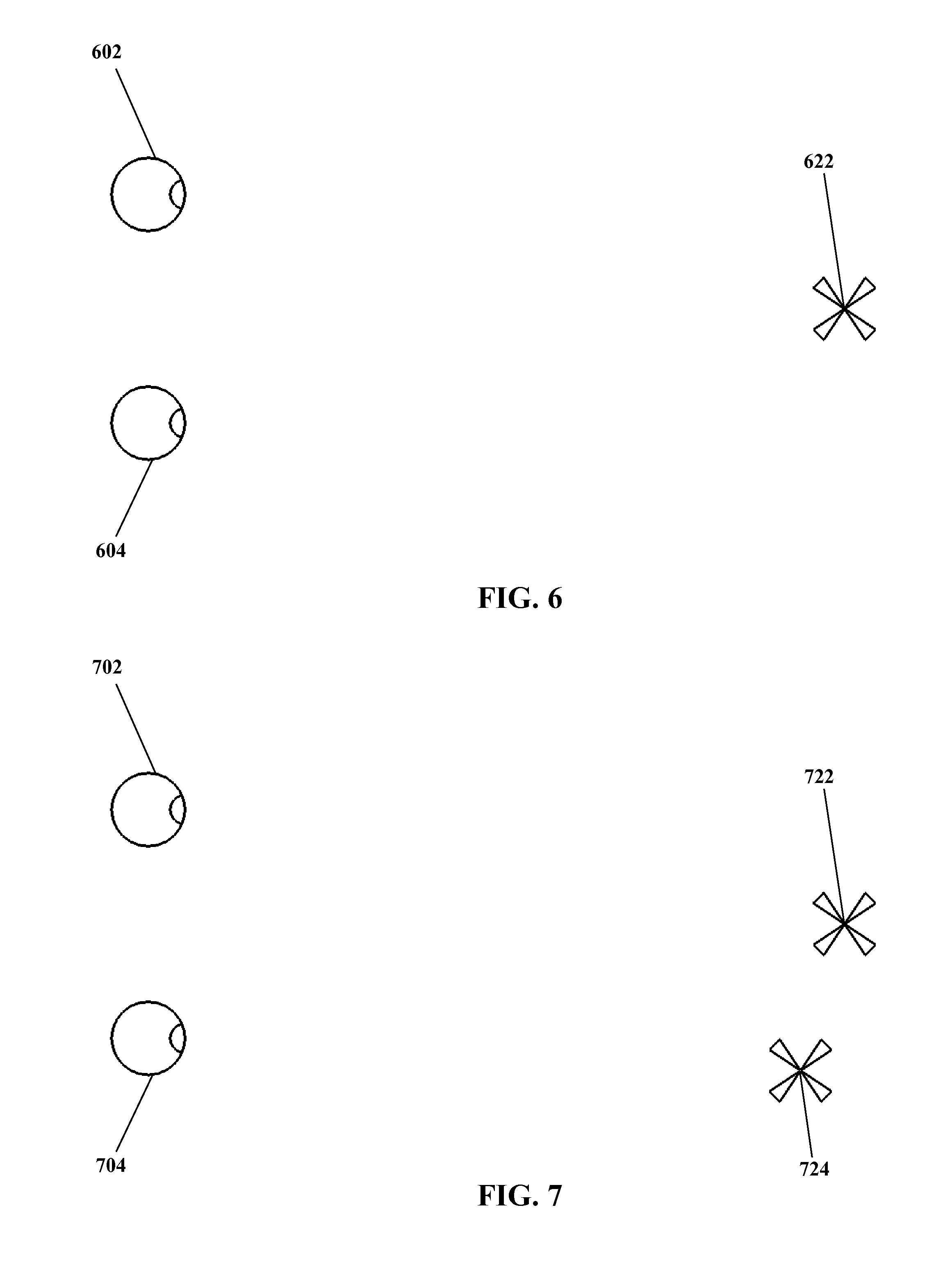

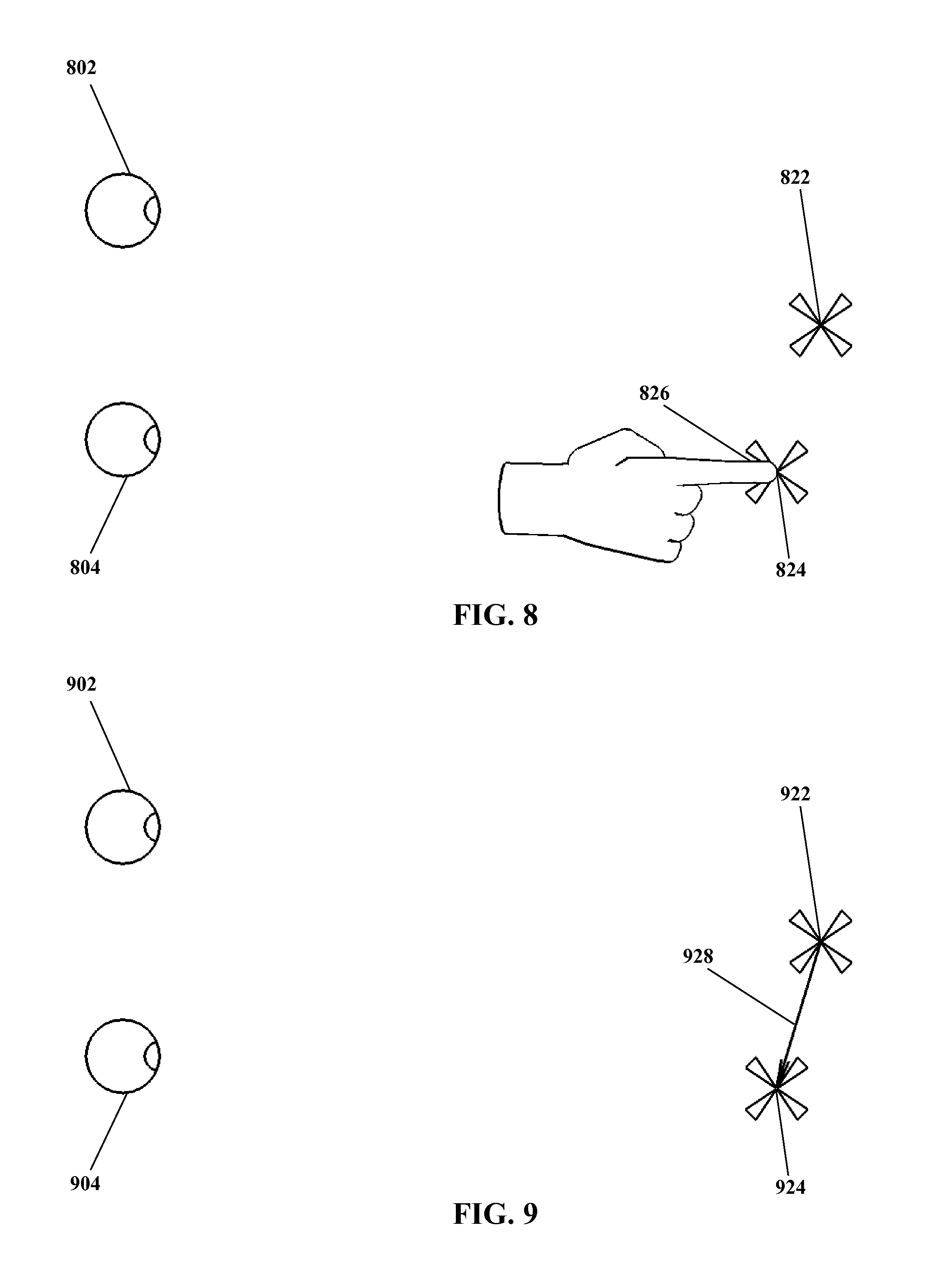

FIG. 6 through FIG. 13 show a sequence as might correspond to at least certain features of an example method for individualized three dimensional display calibration according to the present invention, illustrated as might be seen and/or carried out by a viewer.

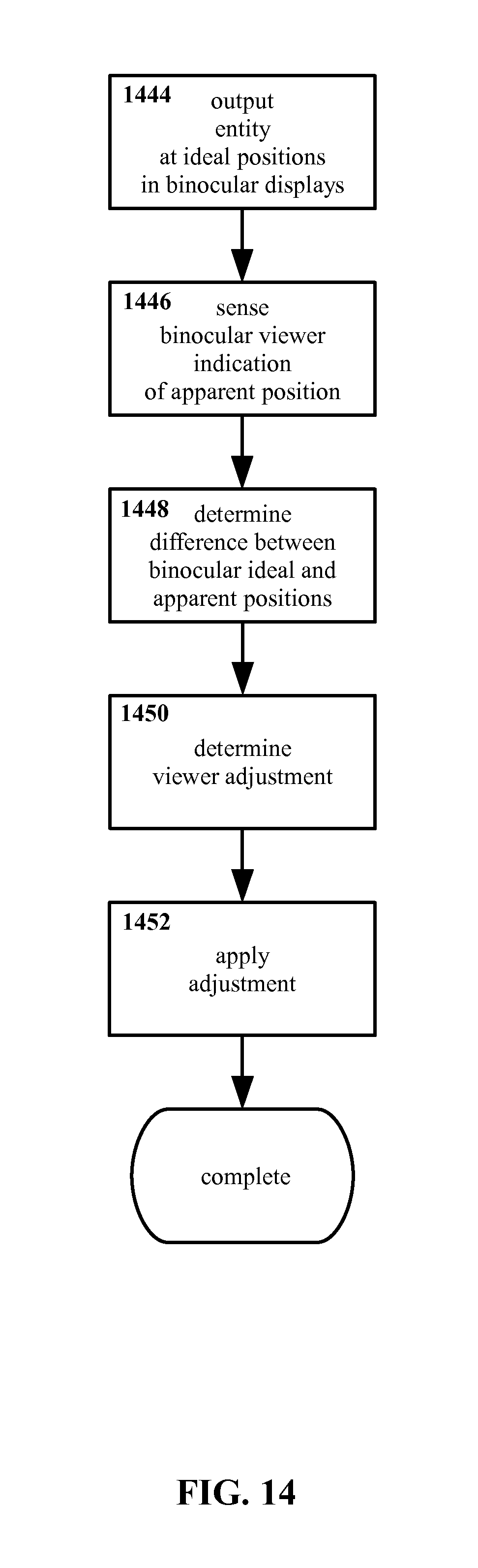

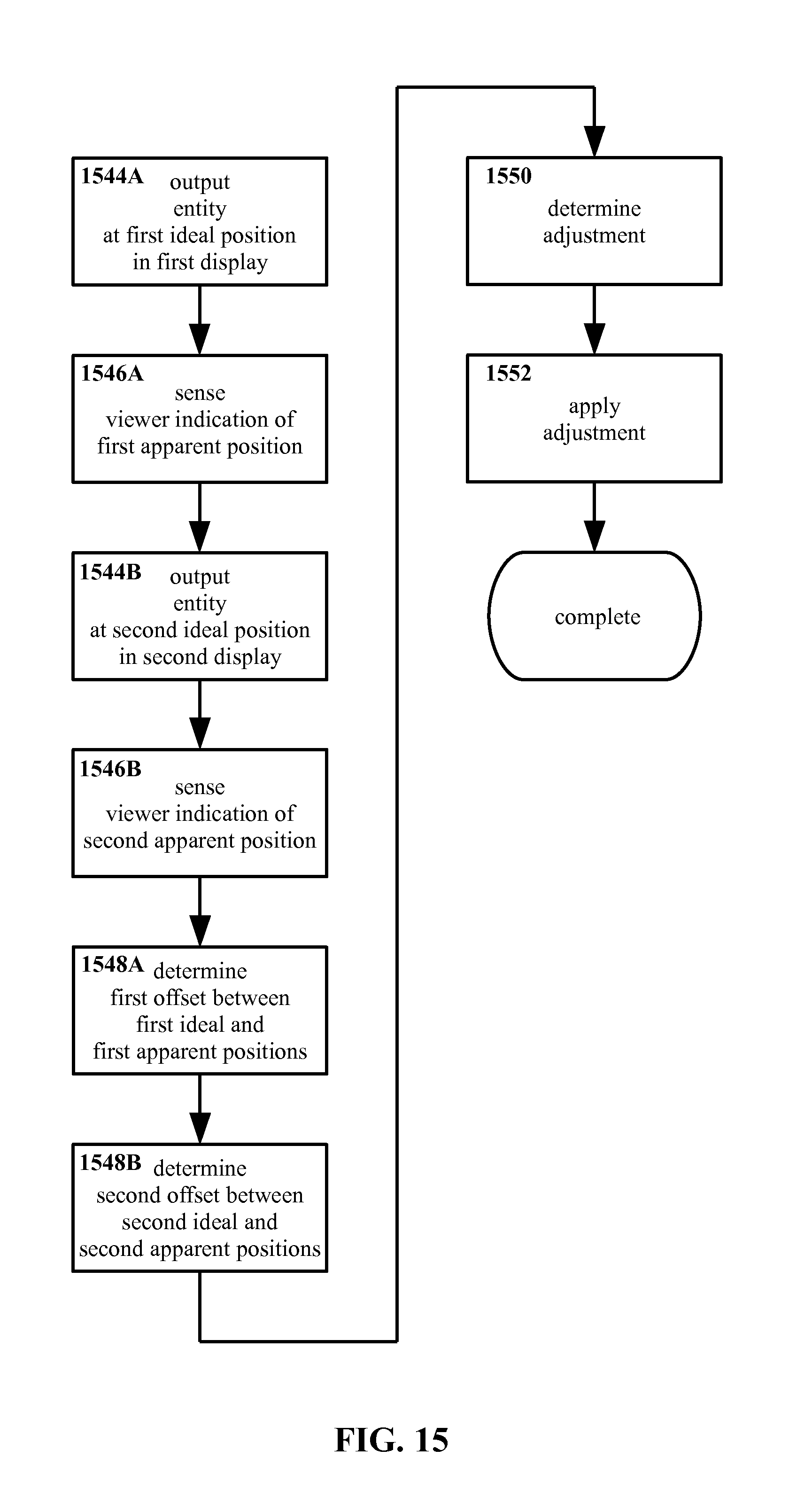

FIG. 14 shows another example method for individualized three dimensional display calibration according to the present invention, explicitly binocular in nature, in flow-chart form.

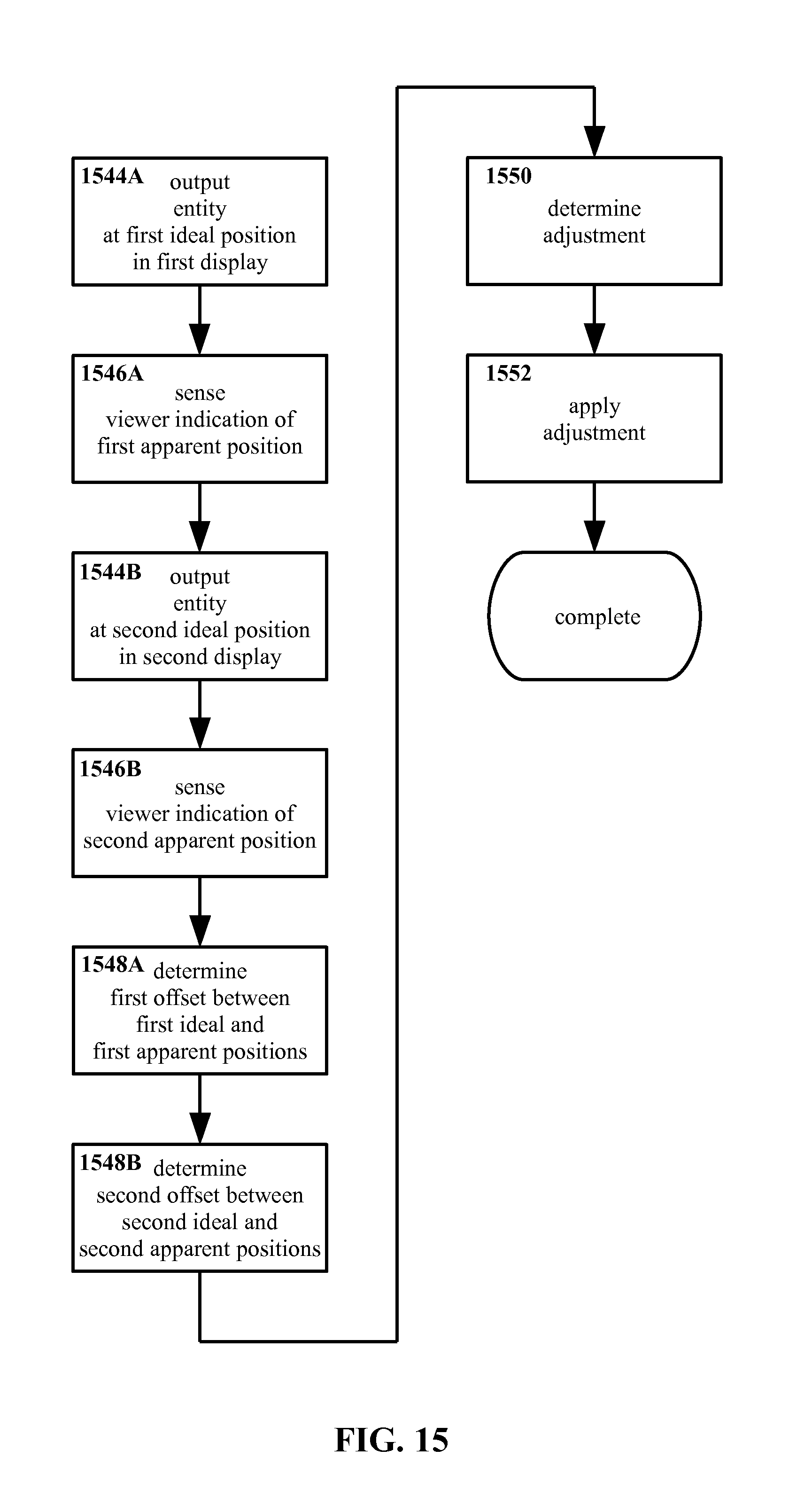

FIG. 15 shows another example method for individualized three dimensional display calibration according to the present invention, explicitly monocular in nature, in flow-chart form.

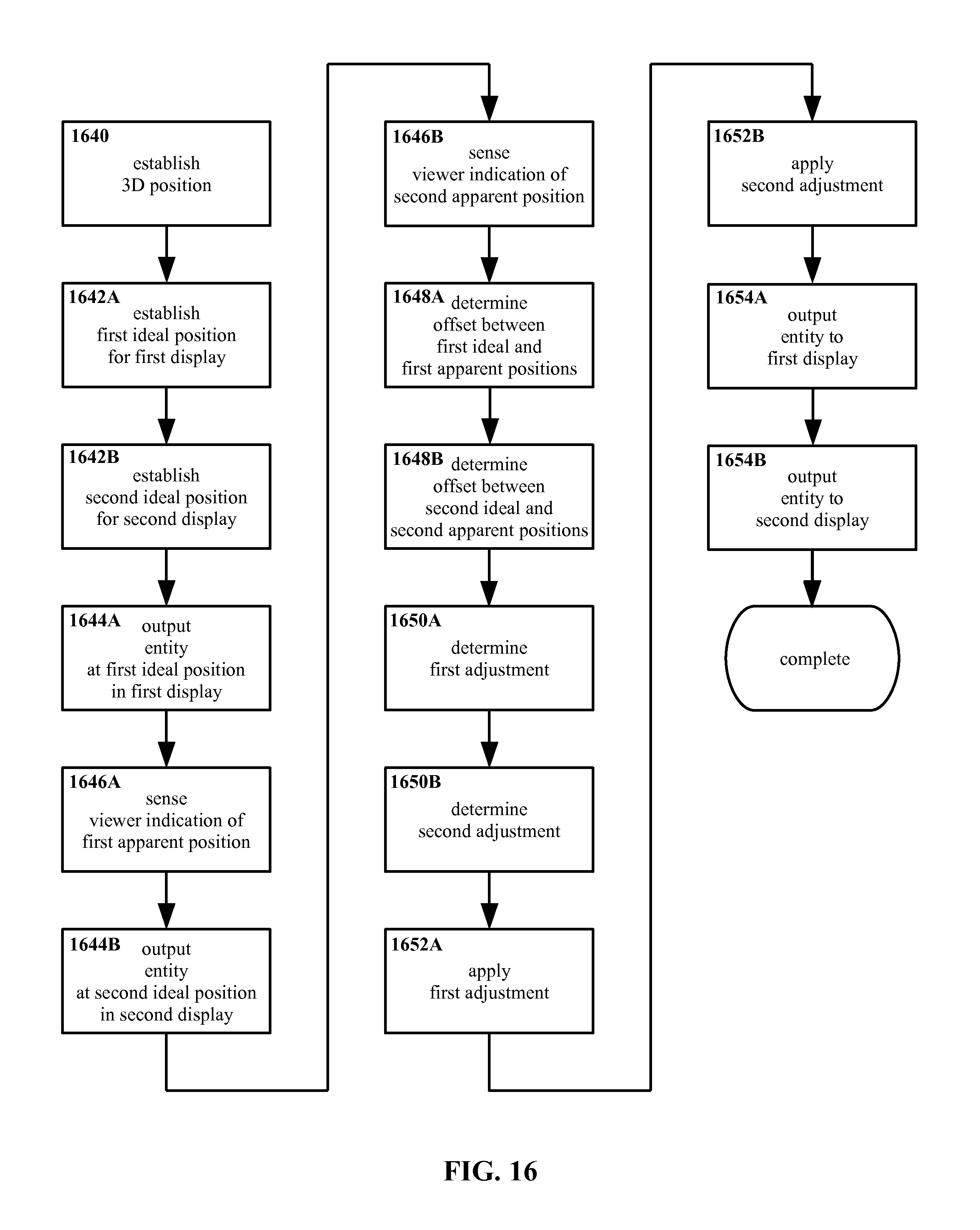

FIG. 16 shows another example method for individualized three dimensional display calibration according to the present invention, explicitly establishing positions and outputting entities, in flow-chart form.

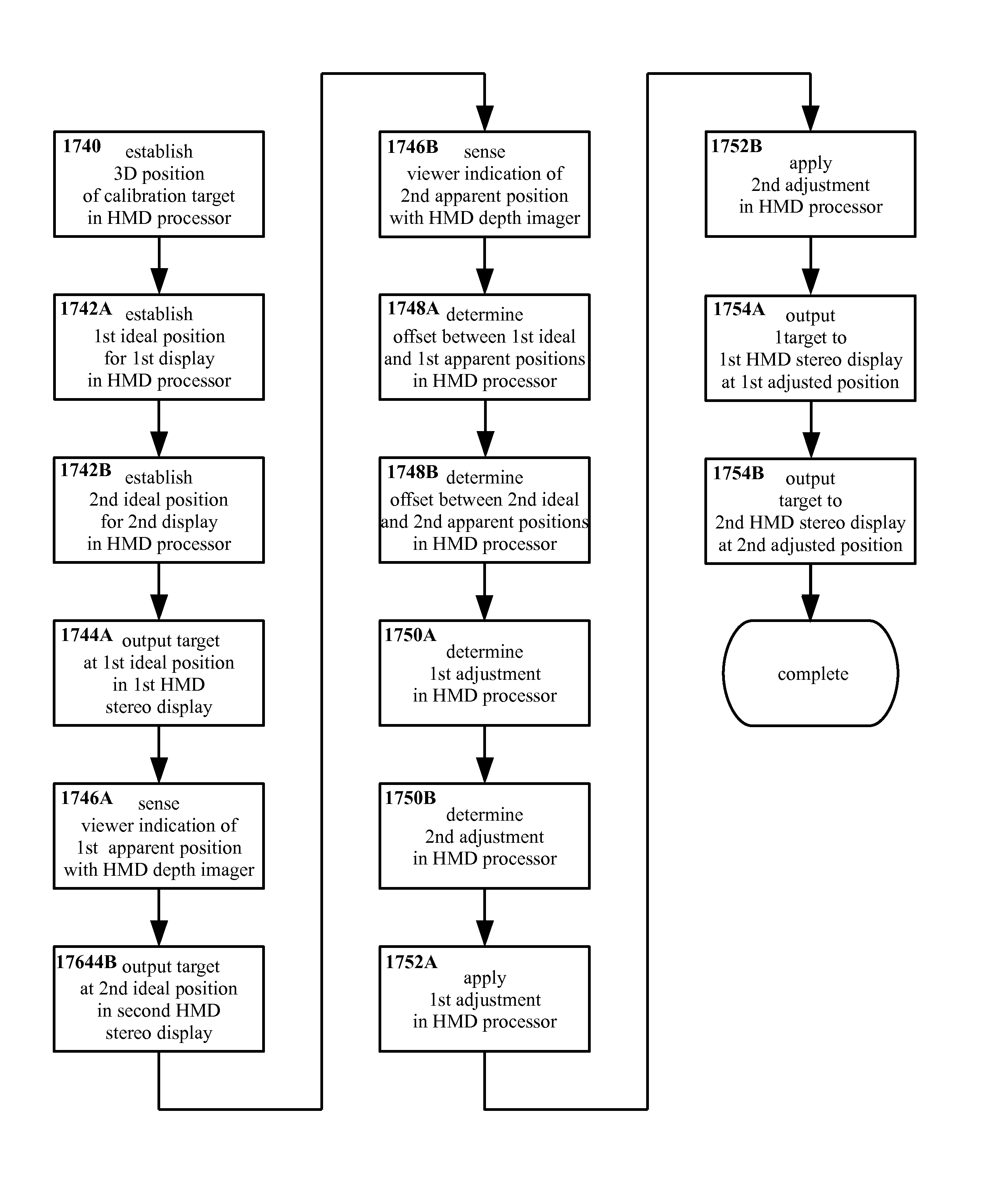

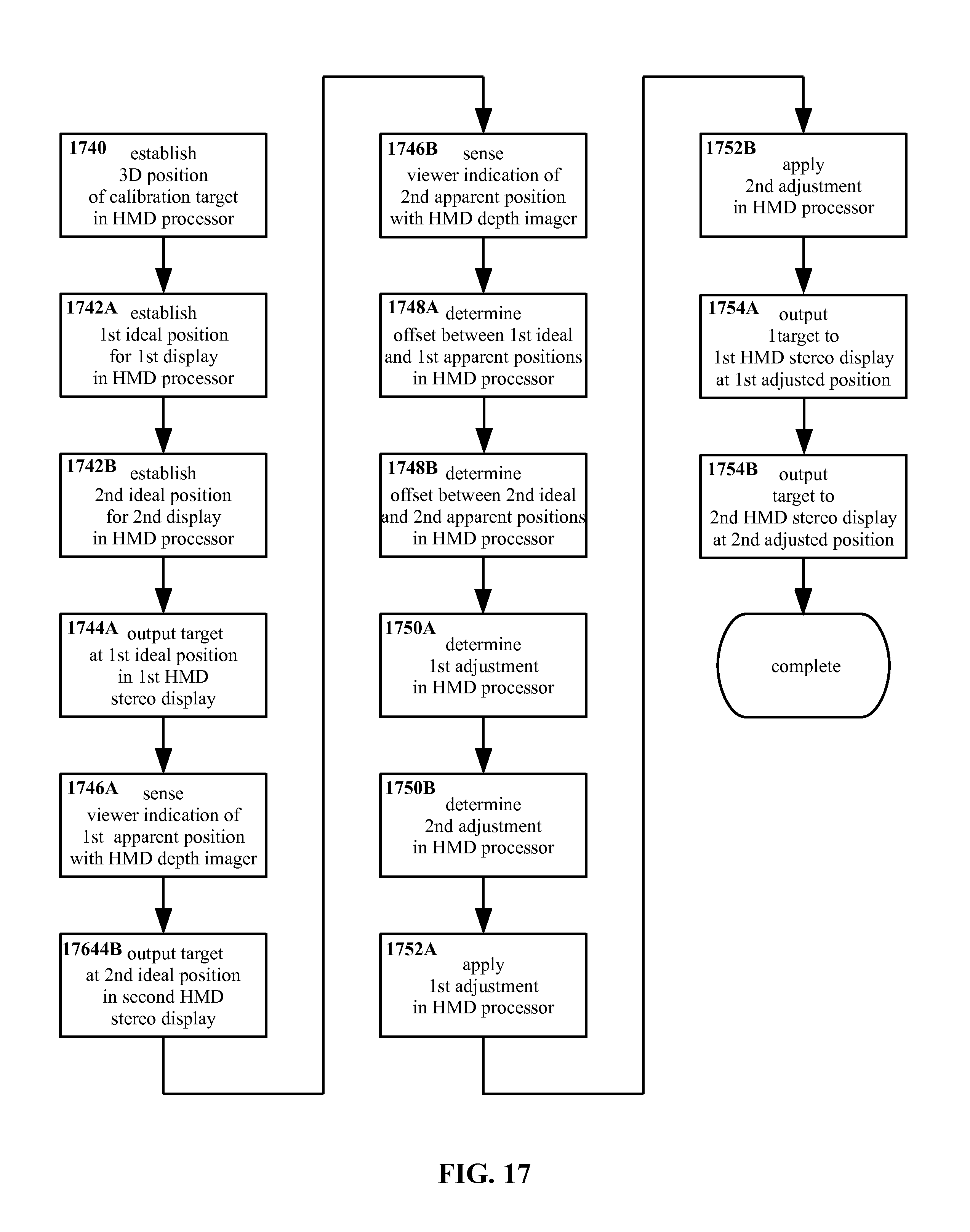

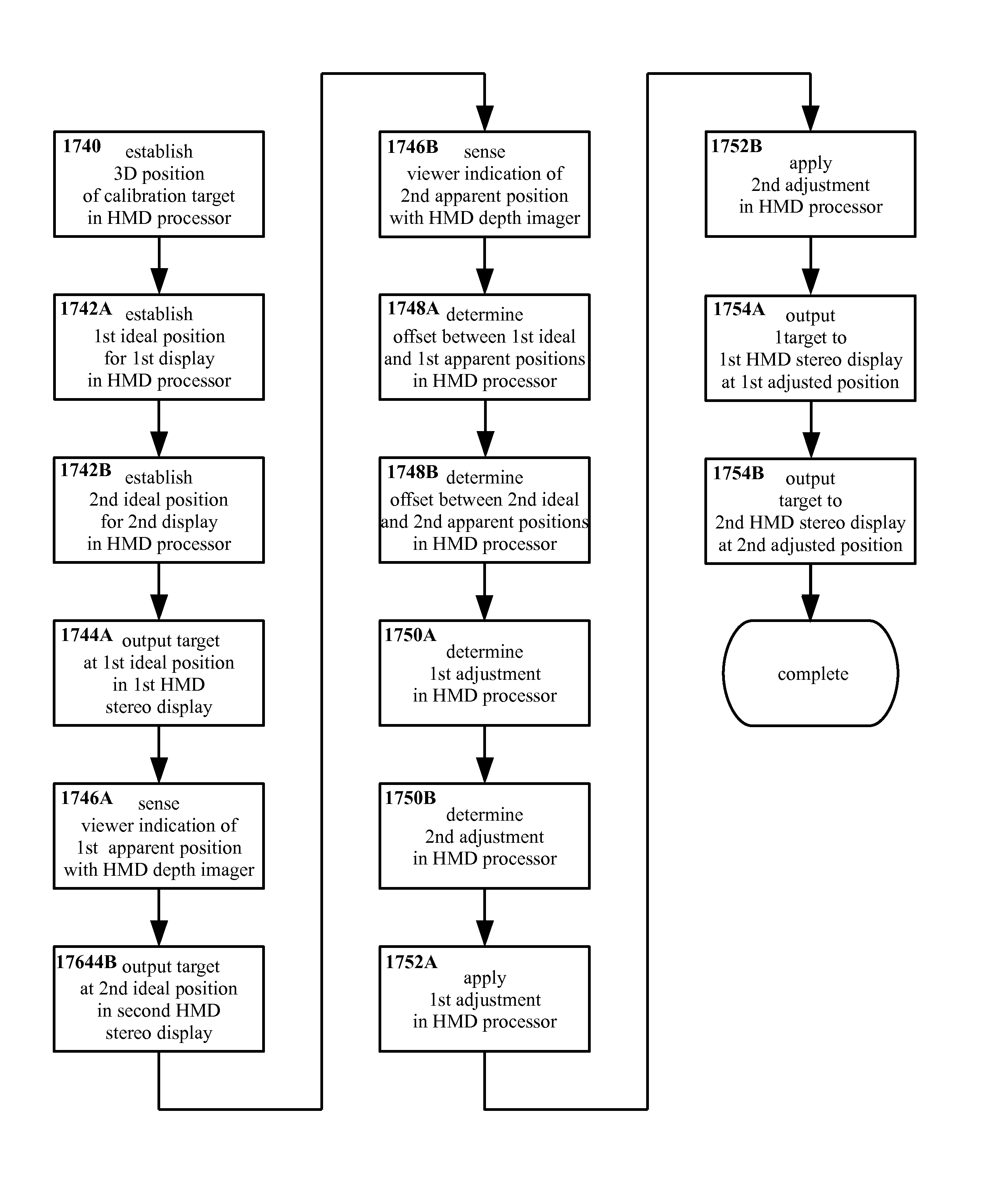

FIG. 17 shows another example method for individualized three dimensional display calibration according to the present invention, with example references to approaches for execution of steps thereof, in flow-chart form.

FIG. 18 shows an example apparatus for individualized three dimensional display calibration according to the present invention, in schematic form.

FIG. 19 shows another example apparatus for individualized three dimensional display calibration according to the present invention, in schematic form.

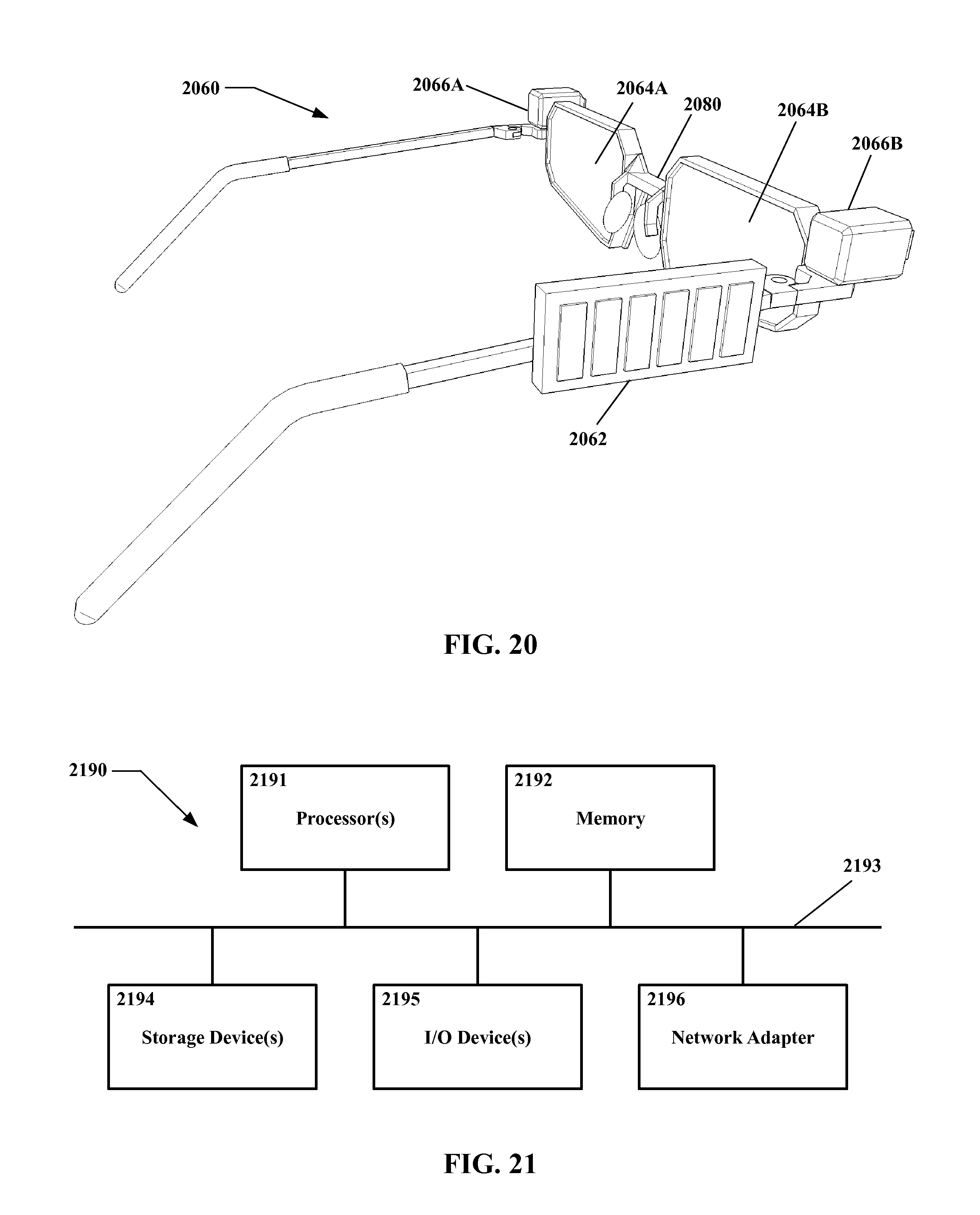

FIG. 20 shows another example apparatus for individualized three dimensional display calibration according to the present invention, in perspective view.

FIG. 21 shows a block diagram of a processing system that may implement operations of the present invention.

DETAILED DESCRIPTION OF THE INVENTION

With reference to FIG. 1, a top down schematic for a three dimensional display system is shown therein. Left and right eyes 102 and 104 are shown, with left and right displays 106 and 108 of a three dimensional display system (as shown a stereo display, though this is an example only) disposed in front of the left and right eyes 102 and 104 respectively. Left and right images 110 and 112 are outputted to the displays 106 and 108. Sight lines 114 and 116 are shown extending from the eyes 102 and 104 to the images 110 and 112.

Because the images 110 and 112 are outputted to the displays 106 and 108, sight lines 114 and 116 to the images 110 and 112 terminate at the displays 106 and 108. However, human stereo vision typically combines the two images 110 and 112, interpreting images 110 and 112 as a single target 122. The position of the target 122 is determined by extending virtual sight lines 118 and 120 from the sight lines 114 and 116 to a point of convergence. Thus even though the images 110 and 112 themselves may be substantially or entirely two-dimensional, and/or constrained to positions in two-dimensional space (e.g. displayed on two-dimensional surfaces), entities appearing to a viewer as being three-dimensional and/or as being in three-dimensional space (e.g. at some depth different from the distance to the two-dimensional displays) nevertheless may be displayed.
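As a non-limiting sketch of the convergence described above, the apparent position of a target might be computed by intersecting the two extended sight lines in a top-down view. The function name `converge` and the numeric values in the example are illustrative assumptions and do not come from the figures.

```python
from typing import Tuple

Point = Tuple[float, float]  # (x, z) in a top-down view, any consistent length unit


def converge(eye_l: Point, img_l: Point, eye_r: Point, img_r: Point) -> Point:
    """Intersect the line eye_l -> img_l with the line eye_r -> img_r."""
    # Direction vectors of the two sight lines.
    dlx, dlz = img_l[0] - eye_l[0], img_l[1] - eye_l[1]
    drx, drz = img_r[0] - eye_r[0], img_r[1] - eye_r[1]
    denom = dlx * drz - dlz * drx  # 2D cross product; zero means parallel lines
    if abs(denom) < 1e-12:
        raise ValueError("sight lines are parallel and never converge")
    # Solve eye_l + t * dl == eye_r + s * dr for t.
    ex, ez = eye_r[0] - eye_l[0], eye_r[1] - eye_l[1]
    t = (ex * drz - ez * drx) / denom
    return (eye_l[0] + t * dlx, eye_l[1] + t * dlz)


# Assumed example: eyes 65 mm apart, displays 20 mm in front of the eyes,
# images 52 mm apart on the display plane.
target = converge((-32.5, 0.0), (-26.0, 20.0), (32.5, 0.0), (26.0, 20.0))
```

With these assumed values the sight lines cross on the midline between the eyes, well beyond the display plane, even though each image lies on the display plane itself.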

Turning to FIG. 2, another top down schematic for a three dimensional display system is shown therein. As in FIG. 1, left and right eyes 202 and 204 are shown in FIG. 2. However, as may be seen the eyes 202 and 204 in FIG. 2 are farther apart from one another than the eyes 102 and 104 in FIG. 1. The spacing between eyes may be referred to as the inter-pupillary distance or IPD, i.e. the distance between the centers of the pupils. Inter-pupillary distance may vary significantly between individuals. For example, while a mean for young adult U.S. males is approximately 65 millimeters, values as low as 52 mm or as high as 78 mm are not unknown, and greater extremes may exist. Inter-pupillary distance also may vary with age (typically being smaller in children than in adults), due to certain injuries, etc. It is noted that the inter-pupillary distance in FIG. 2 is about 15% greater than that in FIG. 1, while the maximum real-world value of 78 mm cited above is 50% greater than the minimum real-world value of 52 mm cited above. Thus the variation between FIG. 1 and FIG. 2 is not necessarily an exaggeration beyond anticipated human anatomical norms in that regard.

Continuing in FIG. 2, left and right displays 206 and 208 of a three dimensional display system are disposed in front of the left and right eyes 202 and 204, with left and right images 210 and 212 outputted thereto. Sight lines 214 and 216 extend from the eyes 202 and 204 to the images 210 and 212, and virtual sight lines 218 and 220 converge such that a viewer would see a target 222 at a location in three dimensional space.

As may be seen by comparison of FIG. 2 with FIG. 1, the distance from the viewer in FIG. 2 (as represented by eyes 202 and 204) to the target 222 therein is visibly less than the distance from the viewer in FIG. 1 to the target 122 therein. As may also be seen by comparison, the size, shape, configuration, and position of the displays 206 and 208 in FIG. 2 are otherwise similar to those of the displays 106 and 108 in FIG. 1, and the positions of the images 210 and 212 in FIG. 2 are similar to the positions of the images 110 and 112 in FIG. 1. Yet although the display systems in FIG. 1 and FIG. 2 are at least extremely similar, the apparent positions of the targets 122 and 222 are visibly quite different; namely, the target 222 in FIG. 2 appears to be at a closer distance than the target 122 in FIG. 1.

Then, when an entity is outputted by a three dimensional system such as a stereo display system, where that entity appears to a viewer may vary from one viewer to the next. In other words, what a viewer perceives as the apparent position of the entity may be different from an ideal or intended position for the entity.

Such variation may occur even without three dimensional displays, due for example to factors such as the aforementioned variation in inter-pupillary distance. For example, as may be seen in FIG. 3, a viewer with an inter-pupillary distance and in an arrangement similar to what is shown in FIG. 1 may see image positions similar to 310 and 312 shown in FIG. 3 with his or her left and right eyes. In FIG. 3, the larger center mark represents a perpendicular away from the viewer (i.e. "straight ahead") while the surrounding marks indicate intervals of 15 degrees in various directions. Thus, the left image 310 appears approximately 9 degrees right of perpendicular viewed from the left eye, while the right image 312 appears approximately 9 degrees left of perpendicular viewed from the right eye.

With reference to FIG. 4, a viewer with an inter-pupillary distance and in an arrangement similar to what is shown in FIG. 2 might see image positions similar to 410 and 412. The left image 410 appears approximately 22 degrees right of perpendicular viewed from the left eye, while the right image 412 appears approximately 22 degrees left of perpendicular viewed from the right eye.

In unassisted human vision, differences such as those visible between FIG. 3 and FIG. 4 may not be of concern; a real-world target in three dimensional space "is where it is", and individuals may learn to judge distances and positions for targets based on the particulars of their own eyes and brain. The precise deflections (and/or the angles of convergence those deflections represent) seen by each eye may vary from person to person, but over time each person may learn (perhaps not consciously) what deflections/angles correspond with what positions in three dimensional space.

However, when entities are displayed without necessarily existing physically, such as may be the case for at least some virtual reality and/or augmented reality content, such individual variations may prove problematic. A display system may output images 110 and 112 as in FIG. 1 with the expectation that the viewer would perceive a target 122 at the distance shown in FIG. 1, based on an idealized assumption that the inter-pupillary distance of the viewer also is as shown in FIG. 1. However, for a viewer with an inter-pupillary distance similar to that in FIG. 2, the apparent position of the target 222 may be as shown in FIG. 2, and thus substantially closer than the ideal position of the target 122 in FIG. 1.
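The dependence of apparent distance on inter-pupillary distance may be illustrated numerically. The following is a minimal sketch assuming a symmetric arrangement in which the displayed images remain at fixed positions on a common display plane while only the inter-pupillary distance changes; the function name and all numeric values are illustrative assumptions rather than values taken from FIG. 1 or FIG. 2.

```python
def apparent_depth(ipd: float, image_sep: float, display_dist: float) -> float:
    """Depth at which the two sight lines cross, for a symmetric arrangement.

    ipd          -- distance between the centers of the pupils
    image_sep    -- distance between the left and right images on the display plane
    display_dist -- distance from the eyes to the display plane
    All three share one length unit; image_sep must be smaller than ipd.
    """
    if not 0 <= image_sep < ipd:
        raise ValueError("sight lines do not converge in front of the viewer")
    # Similar triangles: depth / display_dist == (ipd / 2) / (ipd / 2 - image_sep / 2).
    return display_dist * ipd / (ipd - image_sep)


# Same images (52 mm apart, 20 mm from the eyes), two different viewers.
depth_ideal = apparent_depth(ipd=65.0, image_sep=52.0, display_dist=20.0)
depth_wider = apparent_depth(ipd=75.0, image_sep=52.0, display_dist=20.0)
```

Under these assumptions the viewer with the wider inter-pupillary distance perceives the target as closer than the idealized viewer does, which is the direction of the discrepancy described for FIG. 2 relative to FIG. 1.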

In such instance, to a viewer with an inter-pupillary distance as in FIG. 2 the target 222 may appear much closer than is intended. More broadly, variations in inter-pupillary distance and/or other factors may contribute to a perception by viewers that objects displayed in (for example) virtual reality and/or augmented reality systems are "in the wrong place". Such discrepancies between ideal positions and apparent positions may cause outputted entities to appear differently than is expected, in addition to and/or instead of variations in perceived distance only. Scenes may appear distorted from how they are anticipated to be displayed, for example. More colloquially, the output being displayed may appear "wrong" (even though the viewer may not necessarily be able to identify precisely what is wrong with the output).

Even if relatively small, errors in apparent distance may be problematic. For example, interacting with a three dimensional environment may be more difficult if objects are not at the positions that the viewer expects from the appearance of those objects. This may be particularly significant for three dimensional environments that are relatively rich and sophisticated, e.g. with many objects and/or phenomena displayed therein, objects and/or phenomena moving, affecting one another, etc. In addition, a high level of interactivity and/or opportunity to manipulate such environments, responsiveness to fine control, etc. may contribute to position errors (or at least perceived errors) and so forth being particularly problematic. Furthermore, if an environment is to be realistic, immersive, etc. a discrepancy between where objects "should be" for a given viewer and where they are as far as the three dimensional system is concerned may interfere with the illusion of reality. As has been noted, it is not necessary for such discrepancies to be so pronounced as to be consciously observed by viewers in order to be of potential concern. Although much of human vision may be handled without conscious control or attention (and anomalies may not be immediately apparent at a conscious level), a non-specific sense that "this isn't right" may be problematic even if a viewer is unable to articulate or particularly identify what isn't right.

In addition, if a three dimensional display is intended to provide information that aligns or interacts with a physical environment--for example, augmented reality information overlaid onto the physical world, as might be viewed using a see-through display--even relatively minor differences between perceived position and ideal position may be significant. For instance, an outline intended to highlight a real-world feature such as a street sign may, to the viewer, appear to be slightly misplaced. Besides being a potential cosmetic nuisance, such errors may cause operational problems, for example a misplaced outline might obscure the very sign that the outline was intended to highlight. Likewise, differences in where a viewer perceives a cursor to be and where a controlling processor treats that cursor as being may make using the cursor more difficult.

Furthermore, when from the viewer's perspective displayed features are not where those features appear to be, problems such as disorientation, dizziness, nausea, eye strain, headaches, etc. may occur. If sufficiently severe, such problems may limit the usefulness of a display system, or even render the system effectively unusable in practice for at least some viewers.

Although variations in inter-pupillary distance are used in FIG. 1 through FIG. 4 to illustrate one specific property that may cause and/or contribute to differences between apparent and ideal positions, this is an example only, and other properties also may be significant. Individuals may exhibit variation not only in inter-pupillary distance, but also in eye alignment, in the optical architecture of the eyes themselves, in the behavior of the eyes (e.g. saccadic motion, eye tracking, etc.), in the specifics of brain activity in interpreting images, etc. Furthermore, although the examples in FIG. 1 through FIG. 4 show only variations in deflection along one dimension (the horizontal) for purposes of simplicity, and also only show deflections that are similar for both eyes, individuals may perceive a target as being offset vertically, offset to different degrees and/or in different directions with both eyes, etc.

Furthermore, features that may have no immediately apparent connection to eyesight may affect the apparent position of an entity displayed in three dimensional space. For example, consider an arrangement wherein the entity is displayed using a near-eye head mounted display, such as one resembling glasses, etc. If the head mounted display rests on the viewer's nose and ears, then factors such as the size, shape, and position of the viewer's nose and/or ears may affect where objects are outputted relative to the viewer's eyes, and thus may affect where those objects appear to be in three dimensional space.

Besides anatomical variations, variations in the position of a display relative to the eyes of a viewer also may affect the apparent position of entities in three dimensional space. The viewer's preferences in how to use, wear, etc. a display system thus may affect the apparent position of displayed information. For example, a viewer may wear a near-eye head mounted display high up on the bridge of the nose, or farther out near the tip of the nose. This difference in distance between eyes and displays may produce variations in sight lines (and thus in apparent distances) even for the same viewer looking at the same content; thus, even shifting how a viewer wears or otherwise utilizes a head mounted display from day to day (or moment to moment) may affect the apparent position of content being displayed.

Also, injury and/or illness may produce variations in anatomy, preferred viewing position, etc., that are outside the typical range of healthy individuals.

In principle, a sufficiently detailed optical model for an individual may address such variations, for example by enabling accurate prediction of where that individual will see a target displayed with a three dimensional system. For instance, direct measurement of an individual's optical parameters, for example inter-pupillary distance, might be undertaken. However, such measurements may require some degree of skill to execute properly, may require specialized equipment, may be time-consuming, etc. In addition, a comprehensive list of factors that potentially could affect depth perception may not necessarily be well-defined, and the number of relevant factors also may be very large. A significant amount of time and skilled labor might be required to gather and process such data.

By contrast, in the present invention a determination may be made as to where a viewer in fact sees a target, as opposed to where the viewer is expected to see the target in principle, without necessarily requiring or utilizing detailed measurement or optical modeling.

With reference now to FIG. 5, an example method for calibrating a display system for an individual user (and/or for an individual set of conditions for a user, etc.) is shown therein in flow-chart form.

In the method shown in FIG. 5 an entity is outputted 544 at an ideal position. The ideal position is a location in three dimensional space at which it is expected the entity will be perceived by a viewer. For example, the entity may be outputted 544 in left and right stereo display screens with a position on each such screen as would ideally correspond with an apparent depth of (for example) 50 centimeters, centered between the viewer's eyes and in a horizontal plane aligned with the viewer's eyes. However, this is an example only, and other ideal positions may be equally suitable.

Typically a single three-dimensional ideal position may be used to determine corresponding two-dimensional positions for left and right eyes. For example, for a known position in three-dimensional space, ideal parallax and ideal positions in two-dimensional space may be calculated geometrically (though perhaps making assumptions regarding optical baseline/inter-pupillary distance and other parameters, which as already noted may vary from one individual to another).
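One way such a geometric calculation might be carried out is sketched below, assuming eyes located at (-ipd/2, 0, 0) and (+ipd/2, 0, 0), a single flat display plane at a fixed distance in front of both eyes, and an assumed standardized inter-pupillary distance. The function names are hypothetical, and the resulting two-dimensional positions are expressed in the viewer's coordinate frame rather than in display pixels.

```python
from typing import Tuple

Vec3 = Tuple[float, float, float]  # (x, y, z): x to the right, y up, z away from the viewer
Vec2 = Tuple[float, float]


def project_to_display(target: Vec3, eye_x: float, display_dist: float) -> Vec2:
    """Point where the sight line from one eye to `target` crosses the display plane."""
    x, y, z = target
    if z <= 0:
        raise ValueError("target must be in front of the viewer")
    s = display_dist / z  # similar-triangles scale factor
    return (eye_x + (x - eye_x) * s, y * s)


def stereo_ideal_positions(target: Vec3, ipd: float, display_dist: float) -> Tuple[Vec2, Vec2]:
    """Two-dimensional ideal positions for the left and right eyes."""
    half = ipd / 2.0
    left = project_to_display(target, -half, display_dist)
    right = project_to_display(target, +half, display_dist)
    return left, right


# Target 500 mm (50 cm) straight ahead, assumed 65 mm IPD, display plane at 20 mm.
left_pos, right_pos = stereo_ideal_positions((0.0, 0.0, 500.0), ipd=65.0, display_dist=20.0)
```

Because the inter-pupillary distance enters the projection directly, a viewer whose actual inter-pupillary distance differs from the assumed value would not perceive the target at the intended three-dimensional position.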

The present invention is not particularly limited in the manner by which the ideal position is determined. Typically, though not necessarily, the ideal position may be determined for some standardized model representative of average optical characteristics.

Continuing in FIG. 5, a viewer indication of an apparent position is sensed 546. That is, the viewer indicates in some fashion where the entity appears, based on the specific properties of his or her eyes, the particulars of the display configuration, etc., and that indication is in some manner determined. It is emphasized that the viewer is not required to know or understand the parameters affecting the apparent position; the viewer is required only to indicate where the entity appears to him/her.

Typically though not necessarily, a viewer may indicate the apparent position of the entity by aligning an end-effector such as a fingertip with the entity. More colloquially, the viewer may point to where the entity appears to him or her.

The indication process may be binocular or monocular (and indeed the method overall may be referred to as binocular or monocular). That is, the viewer may indicate the apparent position of the entity, and that apparent position may be sensed, either for both eyes at the same time (binocular) or for one eye at a time (monocular). These options are discussed in more detail later herein.

It will be understood that whatever approach is used to sense 546 the viewer indication, that approach must either provide or allow the determination of depth information. However, the present invention is not otherwise particularly limited with regard to how the viewer indication may be sensed 546. For certain embodiments, a stereo arrangement of cameras, including but not limited to digital video cameras, may be suitable. For other embodiments a depth camera might be used. Other arrangements, including but not limited to ultrasonic range-finding, active light-pulse range-finding, millimeter radar, etc. may be equally suitable.
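As one hedged example of how a stereo arrangement of cameras might allow the determination of depth information, the standard relation for two rectified, parallel pinhole cameras gives depth as focal length times baseline divided by disparity. The sketch below assumes such a rectified pair. The function name and the numeric values are hypothetical and chosen only for illustration.

```python
def depth_from_disparity(focal_length_px: float, baseline_m: float, disparity_px: float) -> float:
    """Depth in meters of a feature seen by two rectified, parallel cameras.

    focal_length_px: focal length expressed in pixels
    baseline_m:      distance between the two camera centers, in meters
    disparity_px:    horizontal shift of the feature between the two images
    """
    if disparity_px <= 0:
        raise ValueError("disparity must be positive for a point in front of the cameras")
    return focal_length_px * baseline_m / disparity_px


# Hypothetical numbers: a fingertip imaged 84 pixels apart by cameras 6 cm apart.
fingertip_depth = depth_from_disparity(focal_length_px=700.0, baseline_m=0.06, disparity_px=84.0)
# fingertip_depth is approximately 0.5 m
```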

Moving on in FIG. 5, a difference between the ideal position and the apparent position is determined 548. As has been described, where a displayed entity is perceived to be located in three dimensional space is different from where that entity "should be" perceived to be located under ideal conditions. Since the ideal position is known (the entity having been displayed at that position) and the apparent position may be determined from the viewer's indication, the difference between those two positions also may be determined.

The present invention is not particularly limited with regard to how the difference is determined 548, or with regard to the manner in which that difference is expressed. For example, the difference might be considered in two dimensions (e.g. if convenient for systems using two dimensional displays) or in three dimensions (e.g. if convenient for a three dimensional space being portrayed). The difference might be expressed and considered as a Cartesian coordinate set (e.g. -3 cm along the x axis, +5 cm along the y axis, -11 cm along the z axis, etc.). Alternately, the difference might be expressed as a direction and magnitude, or in other terms. As yet another alternative the difference might be expressed as two or more values (or vectors, etc.). For example, for a stereo display system a first difference may be determined for the left display and a second difference determined for the right display, with the combined three-dimensional difference being a result of the two individual differences (the difference might be expressed as one difference for an arrangement wherein a three dimensional position is outputted as a combination of two dimensional positions).
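For purposes of illustration, two of the forms of expression mentioned above (a Cartesian coordinate set, and a direction with a magnitude) might be computed as in the following sketch. The function names and the example positions are assumptions for this example. Positions are taken to be in centimeters, with the apparent position chosen so that the difference reproduces the -3, +5, -11 cm example.

```python
import math


def position_difference(ideal, apparent):
    """Component-wise offset from the ideal position to the apparent position."""
    return tuple(a - i for i, a in zip(ideal, apparent))


def as_magnitude_and_direction(diff):
    """Re-express a Cartesian difference as (magnitude, unit direction vector)."""
    magnitude = math.sqrt(sum(c * c for c in diff))
    if magnitude == 0.0:
        return 0.0, tuple(0.0 for _ in diff)  # no offset, direction undefined
    return magnitude, tuple(c / magnitude for c in diff)


ideal = (0.0, 0.0, 50.0)      # where the entity was outputted (cm)
apparent = (-3.0, 5.0, 39.0)  # where the viewer indicated it (cm, hypothetical)

diff = position_difference(ideal, apparent)  # (-3.0, 5.0, -11.0)
magnitude, direction = as_magnitude_and_direction(diff)
```

The same two functions operate unchanged on two dimensional tuples, so per-display differences for a stereo system could be handled in the same manner.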

Still with reference to FIG. 5, a viewer-specific adjustment is determined 550 for entities that are and/or will be displayed. That is, based on the difference determined in step 548--where the entity appeared to be relative to where the entity was intended to be--a correction may be determined such that entities are displayed in such fashion that where those entities appear to the viewer and where those entities are intended to be seen more closely match. Stated differently, applying the adjustment to the ideal position results in an apparent (adjusted) position that is substantially similar to the (non-adjusted) ideal position.

Ideally the match between the ideal position on one hand and the apparent position combined with the adjustment on the other hand should be perfect, i.e. the ideal and adjusted apparent positions are identical. However, in practice achieving perfect matching may not be feasible, nor is an identical match necessarily required by the present invention. For certain embodiments it may be sufficient for the ideal and adjusted apparent positions to be similar, or even merely for the difference between the ideal and adjusted apparent positions to be reduced.

With regard to less-than-identical matches, the method as shown in FIG. 5 (as well as other methods shown and described herein, unless otherwise noted) may be used iteratively. That is, the method steps in FIG. 5 may be repeated two or more times, potentially refining/improving the adjustment so as to improve the match between ideal and adjusted apparent positions, compensating for changes in the optical parameters (e.g. if a head mounted display shifts on a viewer's head over time), etc.
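The iterative use of the method might be sketched as follows. Because no real viewer or sensor is available in a code example, the viewer's perception is simulated by a hypothetical function `simulated_viewer`, whose scale factors and offsets are invented for illustration. In practice the apparent position would come from sensing the viewer's indication. The update rule shown, adding the remaining residual to the running adjustment on each pass, is one possible choice and is not asserted to be the approach of any particular embodiment.

```python
def simulated_viewer(displayed):
    """Hypothetical stand-in for a sensed viewer indication.

    Maps where an entity is displayed to where this imaginary viewer
    perceives it. The numbers are arbitrary.
    """
    x, y, z = displayed
    return (1.1 * x + 2.0, y - 1.0, 0.8 * z + 4.0)


def calibrate(ideal, sense_apparent, iterations=5):
    """Repeat output / sense / difference / adjust, refining the adjustment."""
    adjustment = (0.0, 0.0, 0.0)
    for _ in range(iterations):
        # Output the entity at the ideal position combined with the adjustment.
        displayed = tuple(i + a for i, a in zip(ideal, adjustment))
        # Sense where the viewer indicates the entity appears.
        apparent = sense_apparent(displayed)
        # Remaining offset between where it should appear and where it does.
        residual = tuple(i - p for i, p in zip(ideal, apparent))
        # Fold the residual into the running adjustment.
        adjustment = tuple(a + r for a, r in zip(adjustment, residual))
    return adjustment


ideal = (0.0, 0.0, 50.0)
adjustment = calibrate(ideal, simulated_viewer, iterations=8)
adjusted = tuple(i + a for i, a in zip(ideal, adjustment))
perceived = simulated_viewer(adjusted)  # close to the ideal position after refinement
```

With this simulated viewer a single pass leaves a residual, since the perception is not a pure offset, and each further pass reduces it. Convergence of such a scheme depends on the viewer's actual perception and is not guaranteed in general.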

Continuing in FIG. 5, the adjustment is then applied 552 to one or more entities being displayed. The adjustment may be applied 552 to the entity that is used to determine the adjustment itself, that is, the already-displayed position of the entity may shift from the ("incorrect") apparent position to a new adjusted apparent position that more closely matches the ideal position. Similarly, the adjustment may be applied 552 to other entities already being displayed to the viewer. In certain embodiments the displayed positions of all or nearly all information being displayed may be modified by applying the adjustment 552, such that the entire display might be considered to recalibrate. However, this is not required, and for certain embodiments it may be equally suitable to apply the adjustment only to entities being newly displayed, or otherwise limit the adjustment only to some entities while not applying the adjustment to other entities.
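A minimal sketch of applying the adjustment to some entities while leaving others unchanged is given below. The `Entity` record, its `calibrated` flag, and the `should_adjust` selector are hypothetical constructs introduced only for this example.

```python
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class Entity:
    name: str
    position: tuple          # ideal (x, y, z) position
    calibrated: bool = True  # hypothetical flag: should the adjustment apply?


def apply_adjustment(entities, adjustment, should_adjust=lambda e: e.calibrated):
    """Return a new list with the adjustment applied to the selected entities."""
    result = []
    for entity in entities:
        if should_adjust(entity):
            new_position = tuple(p + a for p, a in zip(entity.position, adjustment))
            result.append(replace(entity, position=new_position))
        else:
            result.append(entity)  # left at its original position
    return result


scene = [
    Entity("calibration target", (0.0, 0.0, 50.0)),
    Entity("status readout", (10.0, 10.0, 100.0), calibrated=False),
]
adjusted_scene = apply_adjustment(scene, (3.0, -5.0, 11.0))
```

Passing `should_adjust=lambda e: True` would shift every entity, corresponding to the case in which the entire display is recalibrated.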

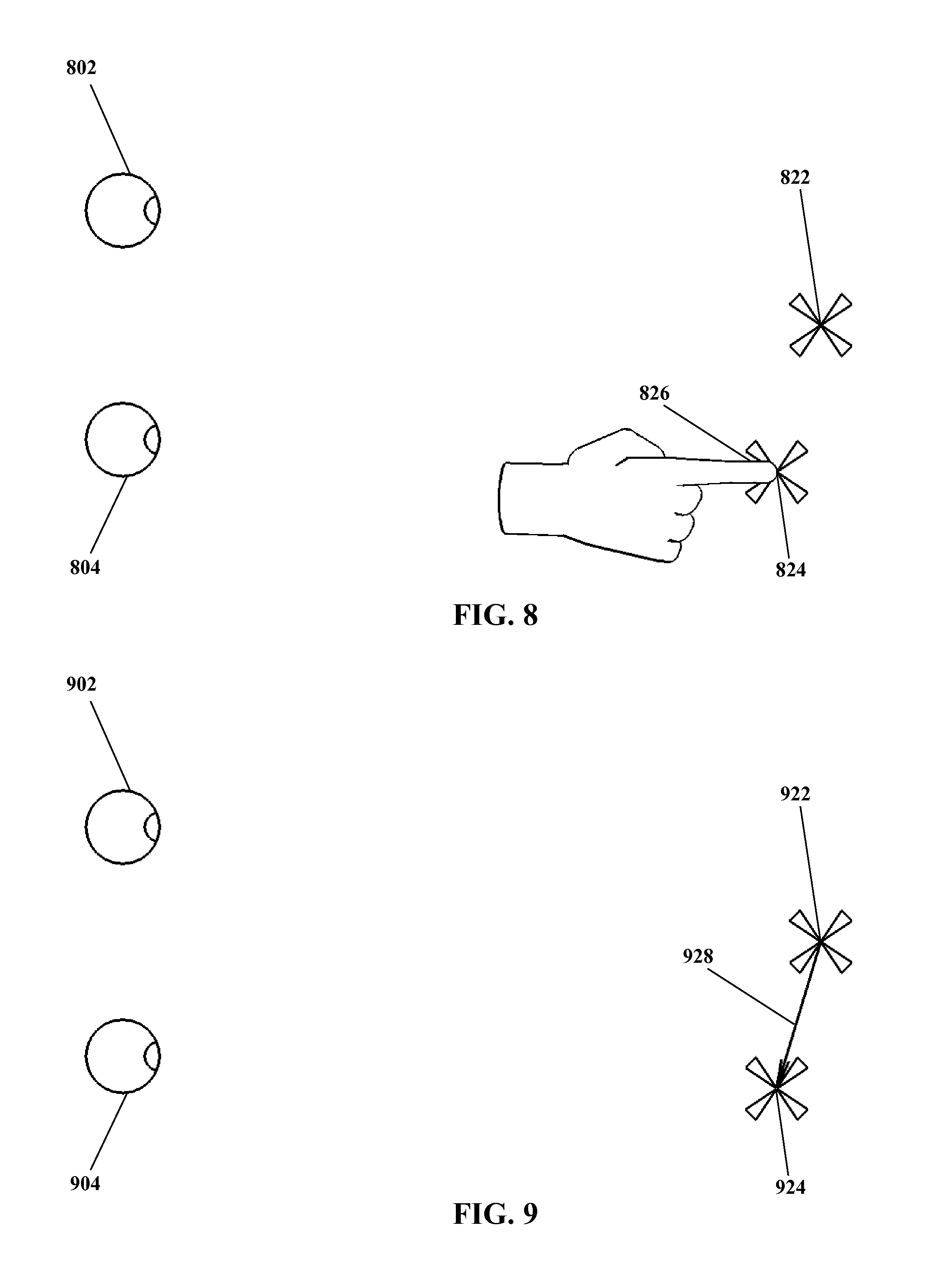

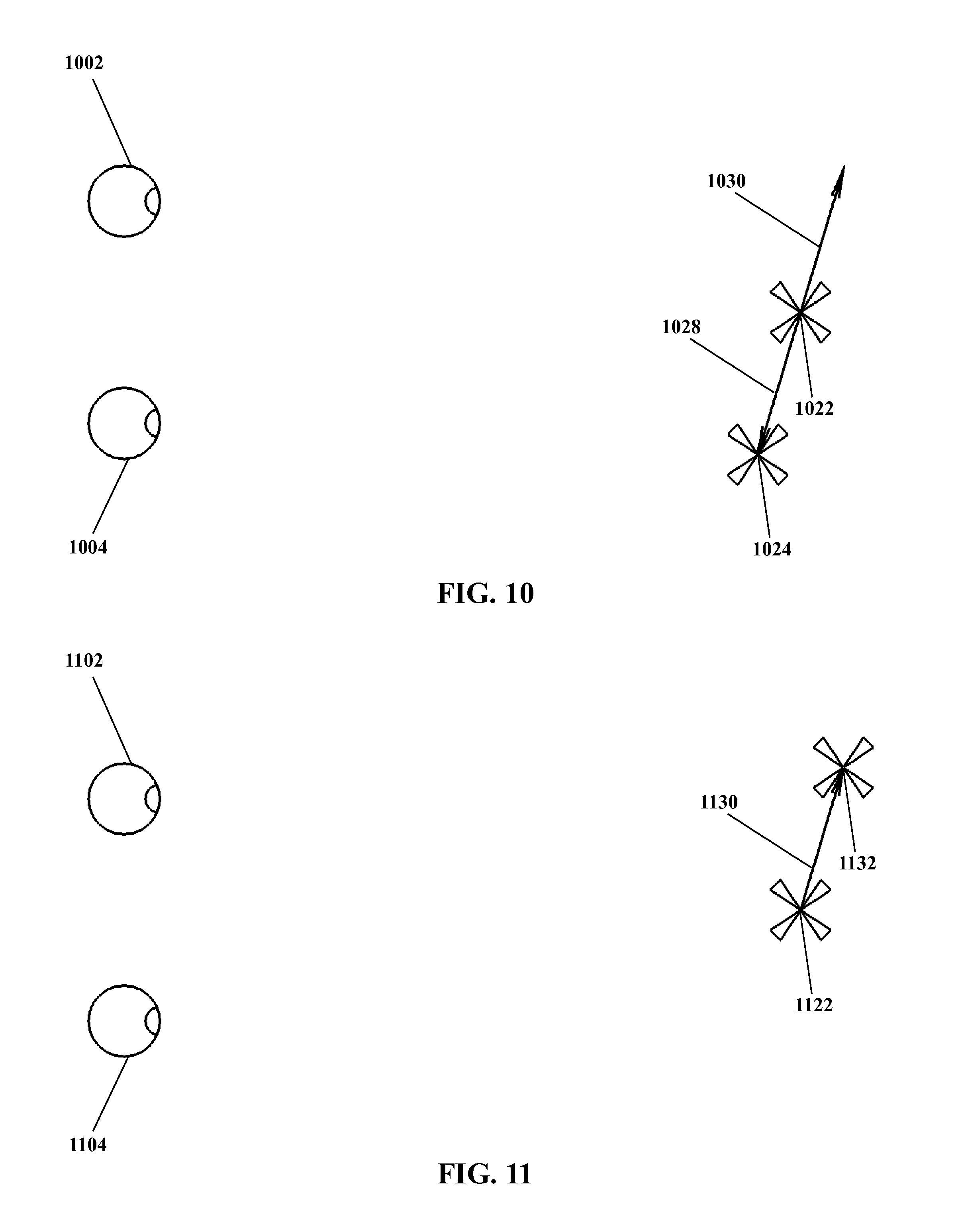

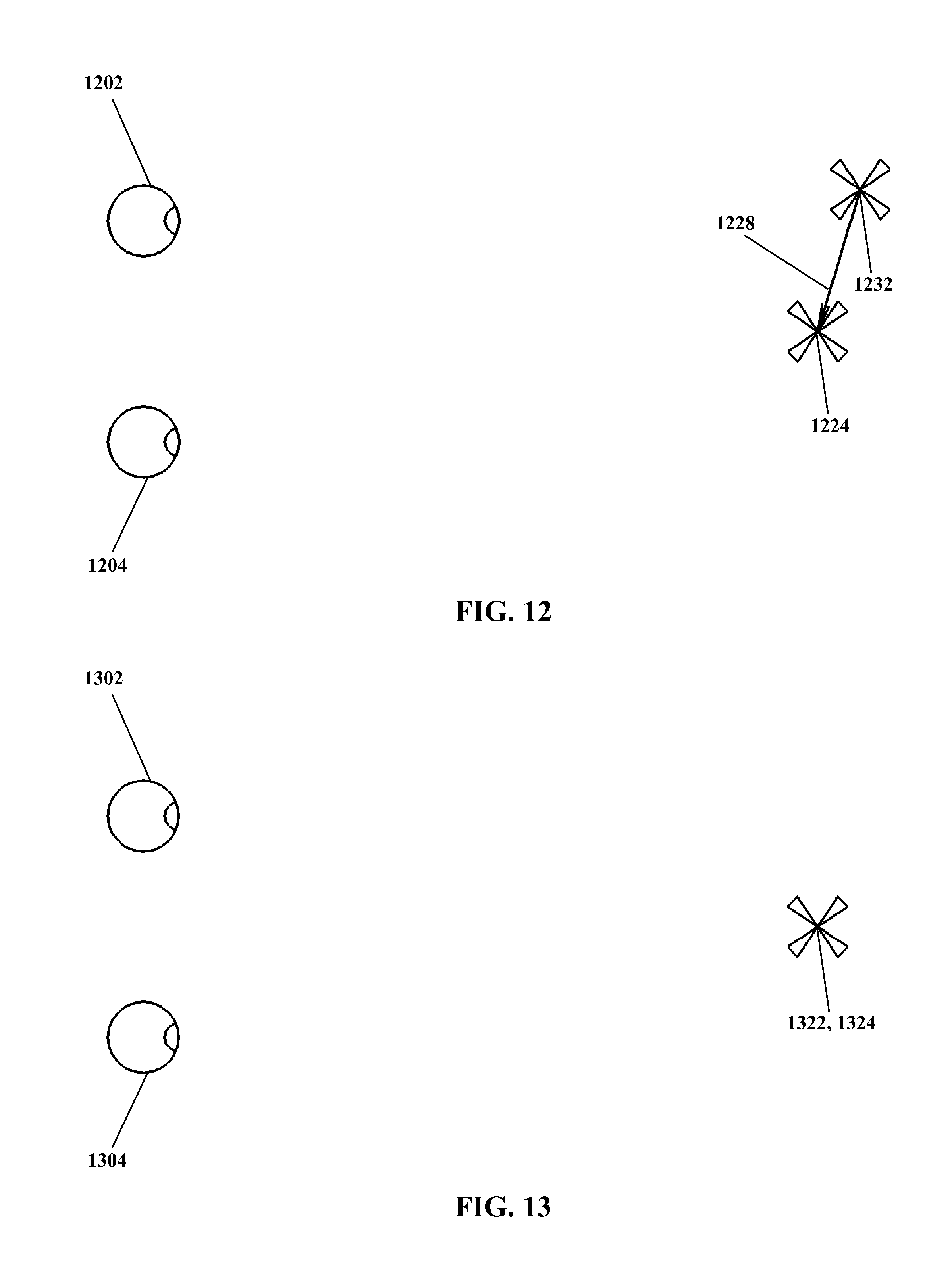

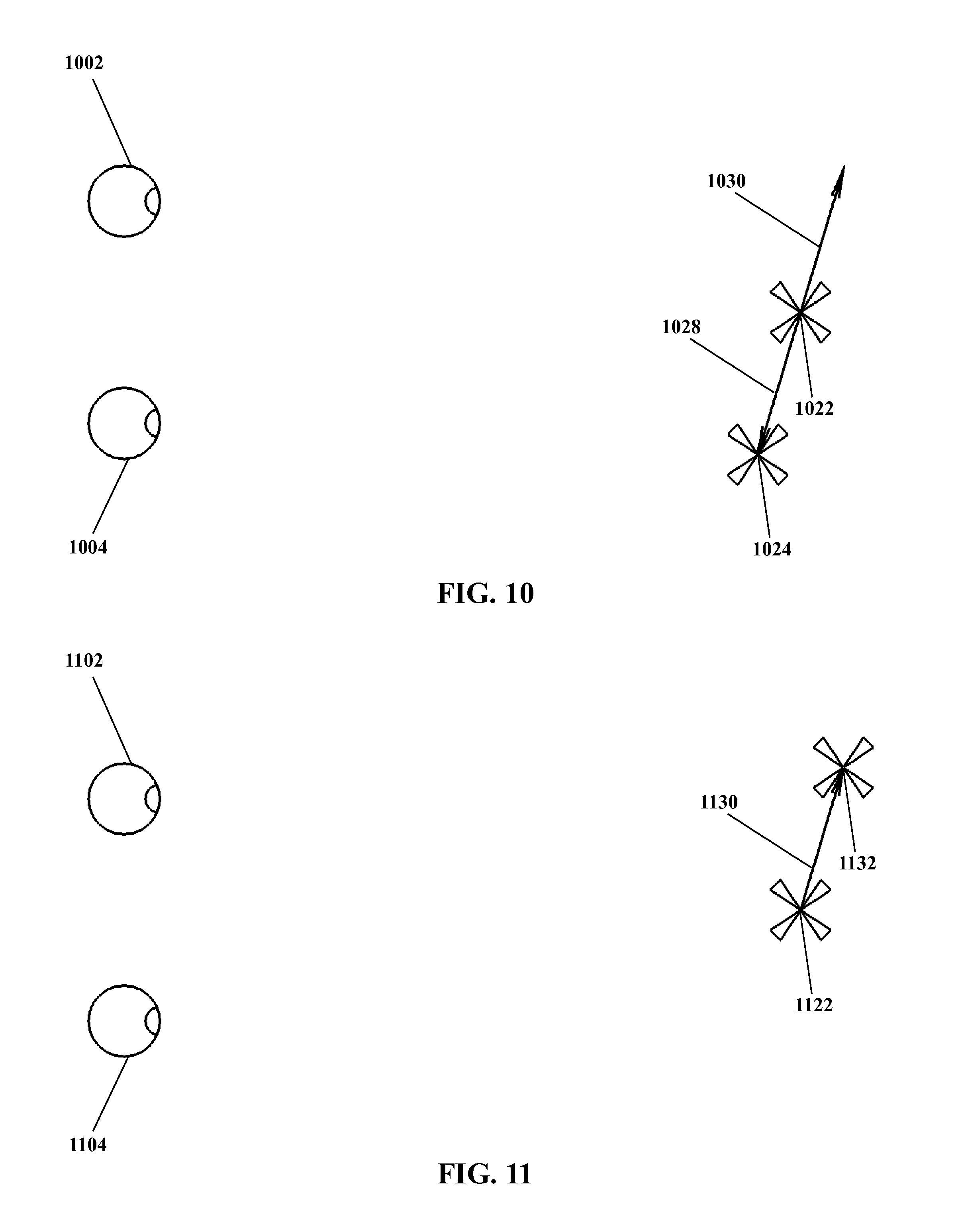

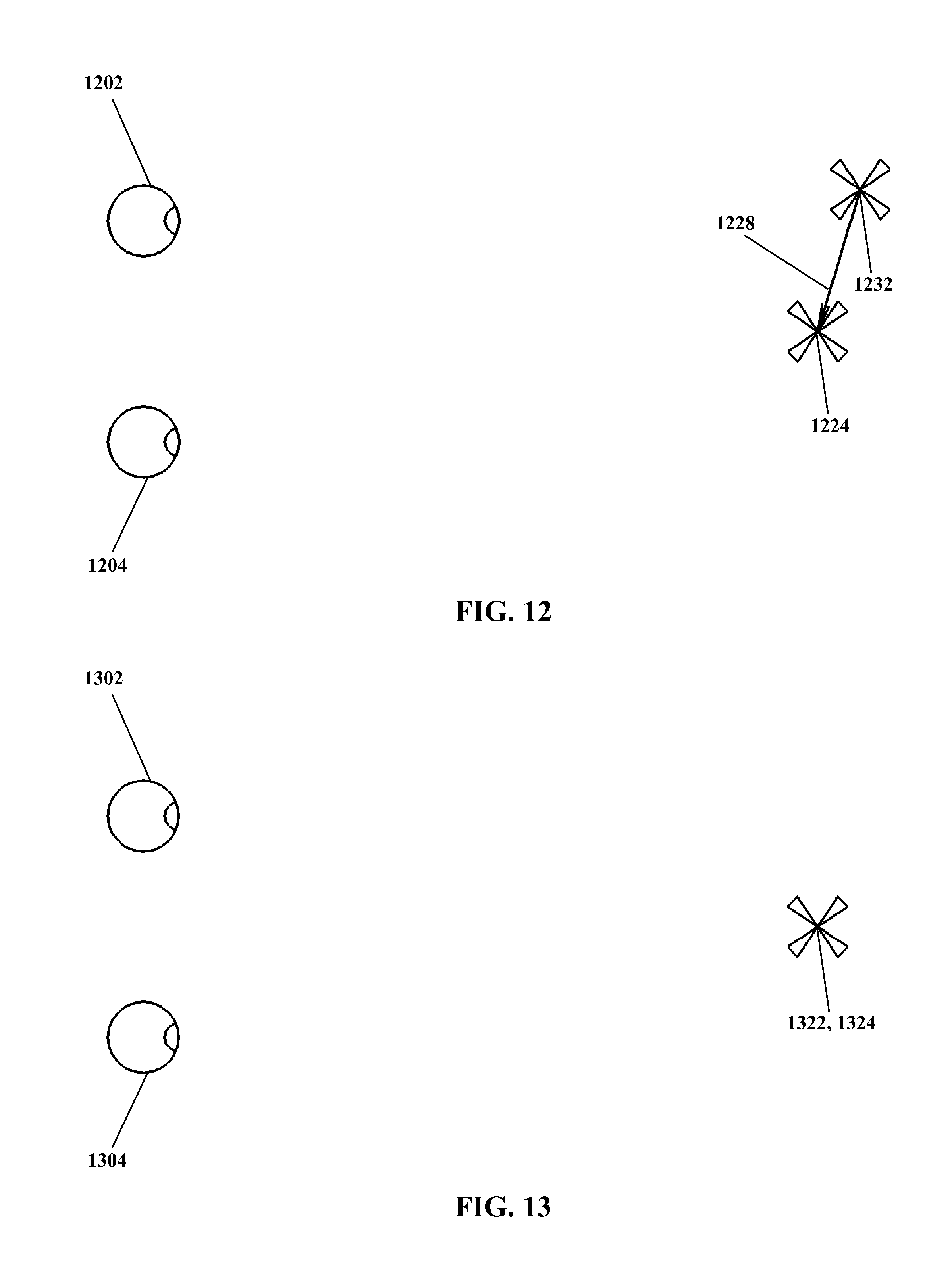

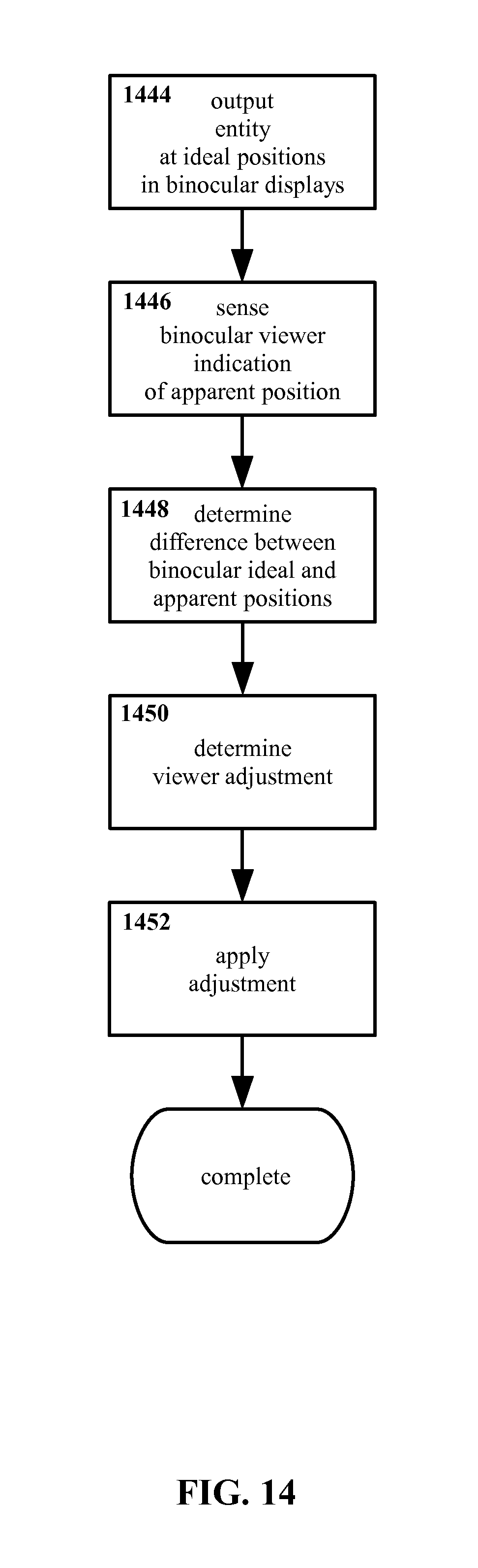

With regard collectively to FIG. 6 through FIG. 13, therein is shown a sequence as may correspond to at least certain features of an example method for individualized three dimensional display calibration according to the present invention. However, FIG. 6 through FIG. 13 illustrate such features of a method according to the present invention considering perceptions and/or actions as might be seen and/or carried out by a viewer, rather than a flow-chart of individual steps as in FIG. 5.

With regard specifically to FIG. 6, for a three dimensional display system such as a stereo display system a distinction may exist between the ideal position for a displayed entity and the apparent position of that displayed entity, as described previously herein. FIG. 6 shows an example entity outputted for viewing by a viewer at an ideal position 622 in three dimensional space, the viewer of the entity being represented by left and right eyes 602 and 604. The arrangement in FIG. 6 may be considered as at least somewhat similar to that represented by step 544 in FIG. 5. (For purposes of simplicity, display screens, sight lines, etc. are not illustrated in FIG. 6 or in subsequent FIG. 7 through FIG. 12. However, it should be understood that in at least certain embodiments of the present invention, changes in apparent three dimensional position of displayed content may be implemented in whole or in part by changing the actual position of content as displayed in two dimensions, e.g. on two dimensional display screens.)

Turning to FIG. 7, an example entity is again shown outputted for viewing by a viewer at an ideal position 722 in three dimensional space. However, because of the particular parameters of the viewer's eyes 702 and 704, the arrangement of a display outputting the example entity 722, etc., the viewer perceives the example entity as being at an apparent position 724, with the apparent position 724 being different from the ideal position 722. As may be observed, the apparent position 724 as shown in FIG. 7 is shifted to the right of the ideal position 722 from the point of view of the viewer (downward as FIG. 7 is arranged), and is closer to the viewer than the ideal position 722.

It is noted that the particular shift from the ideal position 722 of the entity to the apparent position 724 of the entity as perceived by the viewer that is shown in FIG. 7 is arbitrary, presented for purposes of illustration. As noted previously, many factors may affect apparent position as compared with ideal position, resulting in displacement of varying degree and direction. No specific arrangement of optical parameters or other properties is proposed herein as causing this precise shift, nor does the present invention require determining such cause. The present invention is not particularly limited by causes of visual variations, and indeed the reasons for any given visual variation need not necessarily even be known or considered. The present invention instead considers the viewer's perception of where things do in fact appear to be, thus a determination of where things should appear to be may be of little consequence. Such determination is not excluded from the present invention, however. The displacement shown in FIG. 7 is an example only, and the present invention is not limited only to displacements similar to that shown, or to any particular arrangement of optical parameters.

Moving on to FIG. 8, an example entity is shown outputted for viewing by a viewer at an ideal position 822 in three dimensional space, along with an apparent position 824 for the entity as perceived by the viewer with his or her eyes 802 and 804. In addition, an indication by the viewer is shown in the form of the viewer pointing with a fingertip 826 to the apparent position 824. The arrangement in FIG. 8 may be considered as at least somewhat similar to that represented by step 546 in FIG. 5.

The use of a fingertip 826 as shown in FIG. 8 to indicate the apparent position 824 is an example only. Other end effectors, such as a stylus, a pen, a hand with other postures, etc. may be equally suitable for indicating the apparent position 824. In addition, approaches other than the use of an end-effector also may be equally suitable.

Turning to FIG. 9, therein is shown an example entity outputted at an ideal position 922 and an apparent position 924 for the entity as viewed by the viewer's eyes 902 and 904. In addition, a difference 928 between the ideal position 922 and apparent position 924 is shown. As previously noted and as visible in FIG. 9, the apparent position 924 is to the right of the ideal position 922 and closer to the viewer than the ideal position 922, as perceived by the viewer. The difference 928 shown in FIG. 9 is illustrated as a vector, representing the direction and magnitude of the shift or offset between the ideal position 922 and the apparent position 924. The arrangement in FIG. 9 may be considered as at least somewhat similar to that represented by step 548 in FIG. 5.

Although as illustrated the difference 928 is in the form of a two-dimensional vector, the present invention is not limited only to two-dimensional differences, nor is the present invention particularly limited with regard to how the difference is determined, expressed, etc. For simplicity, a two dimensional geometric vector is shown in FIG. 9, but other arrangements may be equally suitable.

Moving on to FIG. 10, again a viewer's eyes 1002 and 1004 are shown along with an example entity outputted at an ideal position 1022 and an apparent position 1024 for the entity. The difference 1028 between the ideal and apparent positions 1022 and 1024 is also shown, again as a two dimensional vector.

In addition, FIG. 10 shows a viewer adjustment 1030. The viewer adjustment 1030 is a position adjustment such that if the ideal position 1022 is adjusted with the viewer adjustment 1030, the apparent position of that adjusted ideal position is substantially similar to the (un-adjusted) ideal position 1022. That is, the apparent position of an ideal position in combination with the viewer adjustment is substantially similar to the ideal position absent the viewer adjustment.

More colloquially, the viewer adjustment 1030 "undoes" the effects of the difference 1028, such that a displayed entity appears to a viewer in the ideal position 1022. The viewer adjustment 1030 as shown is an opposing vector, in effect the opposite of the difference 1028. However, the present invention is not limited only to corrections that are geometrically, mathematically, or otherwise opposites of their respective differences. So long as the adjustment produces an effect according to the present invention, e.g. reducing and/or eliminating variation between where content is and where content is viewed by a viewer to be, the difference and/or viewer adjustment may vary considerably, and are not necessarily required to be exact opposites.
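The opposing-vector case might be sketched as follows, using two dimensional vectors as in FIG. 10. The sketch assumes, purely for illustration, that the viewer's perception displaces every displayed position by the same difference. The `gain` parameter is a hypothetical way of showing an adjustment that is not an exact opposite yet still reduces the offset. All names and values are assumptions for this example.

```python
def viewer_adjustment(difference, gain=1.0):
    """Adjustment opposing the difference.

    gain=1.0 yields the exact opposite vector. Other gains (a hypothetical
    parameter) yield adjustments that are not exact opposites.
    """
    return tuple(-gain * d for d in difference)


def combine(position, vector):
    """Add a vector (adjustment or perceptual offset) to a position."""
    return tuple(p + v for p, v in zip(position, vector))


ideal = (0.0, 50.0)        # (lateral, depth), hypothetical units
difference = (3.0, -11.0)  # apparent position lies to the right and closer

# Exact opposite: the adjusted ideal position is perceived at the ideal position.
adjusted_ideal = combine(ideal, viewer_adjustment(difference))
apparent_of_adjusted = combine(adjusted_ideal, difference)  # (0.0, 50.0)

# Inexact opposite: the offset is reduced rather than eliminated.
partial = combine(ideal, viewer_adjustment(difference, gain=0.5))
apparent_of_partial = combine(partial, difference)          # (1.5, 44.5)
```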

In principle, such an adjustment may be applied to the apparent position 1024 rather than the ideal position 1022. However, typically (though not necessarily) the ideal position 1022 may be more closely defined and/or controlled than the apparent position 1024. For example, the ideal position 1022 may be a position as calculated within a processor, while the apparent position 1024 is a position as perceived by the viewer. Even when the apparent position 1024 is indicated by the viewer (as shown for example in FIG. 8) the ideal position 1022 may be known with higher precision and/or confidence than the apparent position 1024 (e.g. due to error in the viewer indication of the apparent position 1024, uncertainty in the sensing of the viewer's indication, etc.). Thus typically the viewer adjustment 1030 is determined as applicable to the ideal position 1022. However, embodiments wherein a viewer adjustment is determined that would be applied to the apparent position 1024 are not excluded from the present invention.

As noted elsewhere herein, the specific parameters determining the apparent position 1024 as compared with the ideal position 1022 may be dependent upon the individual viewer. Thus, a viewer correction 1030 likewise may be specific to an individual viewer. Furthermore, a viewer correction 1030 may be specific to an individual time, individual conditions, etc.

Turning to FIG. 11, a viewer's eyes 1102 and 1104 are shown along with an example entity outputted at an ideal position 1122. A viewer adjustment 1130 is also shown. As has been described, the viewer adjustment 1130 is adapted to be combined with the ideal position 1122, resulting in an adjusted ideal position 1132.