Distance measurement device, distance measurement method, and distance measurement program


U.S. patent number 10,353,070 [Application Number 15/921,659] was granted by the patent office on 2019-07-16 for distance measurement device, distance measurement method, and distance measurement program. This patent grant is currently assigned to FUJIFILM CORPORATION. The grantee listed for this patent is FUJIFILM CORPORATION. Invention is credited to Masahiko Sugimoto.


| United States Patent | 10,353,070 |
|---|---|
| Sugimoto | July 16, 2019 |


Abstract

A distance measurement device includes an imaging unit, a measurement unit that measures a plurality of distances to the subject by emitting a plurality of directional light rays to the subject and receiving reflection light rays, a control unit that controls the imaging unit to image the subject in an angle of view which includes irradiation positions of the directional light rays used in the measurement of the plurality of distances onto the subject, and a deriving unit that derives a dimension of a real-space region corresponding to an interval between a plurality of pixels associated with the in-image irradiation positions based on distances which are related to in-image irradiation positions derived as positions corresponding to the irradiation positions within a captured image acquired through imaging, among the plurality of measured distances, the interval for each distance within the captured image, and a focal length of the imaging unit.

| Inventors: | Sugimoto; Masahiko (Saitama, JP) |
|---|---|
| Applicant: | |
| Assignee: | FUJIFILM CORPORATION (Tokyo, JP) |
| Family ID: | 58423400 |
| Appl. No.: | 15/921,659 |
| Filed: | March 15, 2018 |

Prior Publication Data

| Document Identifier | Publication Date |
|---|---|
| US 20180246213 A1 | Aug 30, 2018 |

Related U.S. Patent Documents

| Application Number | Filing Date | Patent Number | Issue Date |
|---|---|---|---|
| PCT/JP2016/063584 | May 2, 2016 | | |

Foreign Application Priority Data

| Date | Country | Application Number |
|---|---|---|
| Sep 28, 2015 | JP | 2015-190355 |

| Current U.S. Class: | 1/1 |
|---|---|
| Current CPC Class: | H04N 5/23245 (20130101); G01S 7/4817 (20130101); G01S 17/89 (20130101); G06T 7/62 (20170101); G01S 17/42 (20130101); H04N 5/232933 (20180801); G06T 7/521 (20170101); H04N 5/23296 (20130101); G01S 17/87 (20130101); G06T 2207/10028 (20130101); G06T 2207/10152 (20130101) |
| Current International Class: | G01S 17/42 (20060101); G01S 17/89 (20060101); G06T 7/521 (20170101); G06T 7/62 (20170101); H04N 5/232 (20060101); G01S 17/87 (20060101); G01S 7/481 (20060101) |

References Cited

U.S. Patent Documents

| Number | Date | First Inventor |
|---|---|---|
| 5761549 | June 1998 | Utagawa |
| 6144443 | November 2000 | Ide |
| 2010/0061623 | March 2010 | Yokoi |
| 2011/0249117 | October 2011 | Yoshihama et al. |
| 2012/0224164 | September 2012 | Hayashi |
| 2015/0268180 | September 2015 | Hirose |

Foreign Patent Documents

| Number | Date | Country |
|---|---|---|
| 2004-205222 | July 2004 | JP |
| 2008-166482 | July 2008 | JP |
| 2011-232330 | November 2011 | JP |
| 2013-108789 | June 2013 | JP |
| 2014-232095 | December 2014 | JP |

Other References

- International Search Report issued in International Application No. PCT/JP2016/063584 dated Jul. 19, 2016 (cited by applicant).
- Written Opinion of the ISA issued in International Application No. PCT/JP2016/063584 dated Jul. 19, 2016 (cited by applicant).
- International Preliminary Report on Patentability issued in International Application No. PCT/JP2016/063584 dated Dec. 1, 2016 (cited by applicant).

Primary Examiner: Pontius; James M

Attorney, Agent or Firm: SOLARIS Intellectual Property Group, PLLC

Parent Case Text

CROSS-REFERENCE TO RELATED APPLICATION

This application is a continuation application of International Application No. PCT/JP2016/063584, filed May 2, 2016, the disclosure of which is incorporated herein by reference in its entirety. Further, this application claims priority from Japanese Patent Application No. 2015-190355 filed Sep. 28, 2015, the disclosure of which is incorporated herein by reference in its entirety.

Claims

What is claimed is:

1. A distance measurement device comprising: an imaging unit that images a subject; a measurement unit that measures a plurality of distances to the subject by emitting a plurality of directional light rays which are light rays each having directivity to the subject and receiving reflection light rays of the directional light rays by corresponding light receiving units; a control unit that controls the imaging unit to image the subject in an angle of view which includes irradiation positions of the directional light rays used in the measurement of the plurality of distances by the measurement unit onto the subject; and a deriving unit that derives a dimension of a real-space region corresponding to an interval between a plurality of pixels associated with the in-image irradiation positions based on distances which are related to in-image irradiation positions derived as positions corresponding to the irradiation positions within a captured image acquired by imaging the subject by the imaging unit, among the plurality of distances measured by the measurement unit, the interval for each distance within the captured image, and a focal length of the imaging unit, wherein the measurement unit includes a plurality of sets each having an emission unit that emits the directional light ray and a light receiving unit that receives a reflection light ray of the directional light ray emitted by the corresponding emission unit, wherein an angle at which the directional light ray is emitted is able to be changed for each set of the emission unit and the light receiving unit in a state in which a positional relation between the emission unit and the light receiving unit in each set is fixed, and wherein the deriving unit (i) acquires a first correspondence relation between in-provisional-image irradiation positions corresponding to the irradiation positions within a provisional image acquired by provisionally imaging the subject by the imaging unit whenever each of a plurality of distances is provisionally measured by the measurement unit and distances which are provisionally measured by the measurement unit by using the directional light rays corresponding to the in-provisional-image irradiation positions for each set, (ii) derives factors which influence the irradiation positions for each set based on the acquired first correspondence relation, and (iii) derives the in-image irradiation positions for each set based on the derived factors and a distance obtained at an actual measurement by the measurement unit.

2. The distance measurement device according to claim 1, further comprising: a performing unit that performs a predetermined process as a process of suppressing a decrease in accuracy of the in-image irradiation position in a case where a distance which is actually measured by the measurement unit is out of a range of a distance specified by the first correspondence relation related to the corresponding set.

3. The distance measurement device according to claim 1, further comprising: an output unit that derives derivation accuracy corresponding to an actually present factor based on a second correspondence relation between assumption factors assumed as factors influencing the in-image irradiation positions and derivation accuracy derived by the deriving unit, and outputs information based on the derived derivation accuracy.

4. The distance measurement device according to claim 3, wherein different derivation accuracy are associated with different assumption factors in the second correspondence relation, and the output unit derives the derivation accuracy associated with the assumption factor corresponding to the actually present factor.

5. The distance measurement device according to claim 4, wherein, in a case where the actually present factor is in plural, the output unit derives the derivation accuracy by integrating the derivation accuracy associated with the assumption factors corresponding to the plurality of actually present factors.

6. The distance measurement device according to claim 1, further comprising: a change unit that is capable of changing an angle at which the directional light ray is emitted, wherein, in a case where the in-image irradiation position is out of a default range within the captured image, the control unit controls the measurement unit to measure the distance until the in-image irradiation position falls in a default range, and controls the deriving unit to derive the in-image irradiation position based on the distance measured by the measurement unit and the angle changed by the change unit.

7. The distance measurement device according to claim 6, wherein, in a case where the in-image irradiation position is out of the default range, the control unit controls the measurement unit to measure the distance until the in-image irradiation position falls in the default range, controls the change unit to change an angle by driving a power source, and controls the deriving unit to derive the in-image irradiation position based on the distance measured by the measurement unit and the angle changed by the change unit.

8. The distance measurement device according to claim 1, wherein a frame including the in-image irradiation positions is designated for each in-image irradiation position within the captured image, the plurality of pixels is designated inside the frame for each frame, and the deriving unit derives the dimension of the real-space region corresponding to an interval between the plurality of designated pixels based on distances related to the corresponding in-image irradiation positions among the plurality of distances measured by the measurement unit, the interval, and the focal length for each frame.

9. The distance measurement device according to claim 1, wherein the subject includes a plurality of planar regions of which at least one of an orientation or a position is different, and the measurement unit measures distances to the plurality of planar regions by emitting the directional light rays to the plurality of planar regions and receiving reflection lights of the directional light rays emitted to the plurality of planar regions.

10. A distance measurement device comprising: an imaging unit that images a subject; a measurement unit that measures a plurality of distances to the subject by emitting directional light rays which are light rays each having directivity to the subject in a plurality of directions through scanning of the subject with the directional light rays and receiving reflection light rays of the directional light rays in the plurality of directions; a control unit that controls the imaging unit to image the subject in an angle of view which includes irradiation positions of the directional light rays used in the measurement of the plurality of distances by the measurement unit onto the subject; and a deriving unit that derives a dimension of a real-space region corresponding to an interval between a plurality of pixels associated with the in-image irradiation positions based on distances which are related to in-image irradiation positions derived as positions corresponding to the irradiation positions within a captured image acquired by imaging the subject by the imaging unit, among the plurality of distances measured by the measurement unit, the interval for each distance within the captured image, and a focal length of the imaging unit, wherein the deriving unit (i) acquires a first correspondence relation between in-provisional-image irradiation positions corresponding to the irradiation positions within a provisional image acquired by provisionally imaging the subject by the imaging unit whenever each of a plurality of distances is provisionally measured by the measurement unit and distances which are provisionally measured by the measurement unit by using the directional light rays corresponding to the in-provisional-image irradiation positions for each direction, (ii) derives factors which influence the irradiation positions for each direction based on the acquired first correspondence relation, and (iii) derives the in-image irradiation positions for each direction based on the derived factors and a distance obtained at an actual measurement by the measurement unit.

11. The distance measurement device according to claim 10, further comprising: a performing unit that performs a predetermined process as a process of suppressing a decrease in accuracy of the in-image irradiation position in a case where a distance which is actually measured by the measurement unit is out of a range of a distance specified by the first correspondence relation related to the corresponding direction.

12. A distance measurement method comprising: controlling an imaging unit which images a subject to image the subject in an angle of view which includes irradiation positions of a plurality of directional light rays which are light rays each having directivity used in measurement of a plurality of distances by a measurement unit which measures the plurality of distances to the subject by emitting the directional light rays to the subject and receiving reflection light rays of the directional light rays by corresponding light receiving units, onto the subject, the imaging unit and the measurement unit being included in a distance measurement device; and deriving a dimension of a real-space region corresponding to an interval between a plurality of pixels associated with the in-image irradiation positions based on distances which are related to in-image irradiation positions derived as positions corresponding to the irradiation positions within a captured image acquired by the imaging unit, among the plurality of distances measured by the measurement unit, the interval for each distance within the captured image, and a focal length of the imaging unit, wherein the measurement unit includes a plurality of sets each having an emission unit that emits the directional light ray and a light receiving unit that receives a reflection light ray of the directional light ray emitted by the corresponding emission unit, wherein an angle at which the directional light ray is emitted is able to be changed for each set of the emission unit and the light receiving unit in a state in which a positional relation between the emission unit and the light receiving unit in each set is fixed, and the method further comprising: (i) acquiring a first correspondence relation between in-provisional-image irradiation positions corresponding to the irradiation positions within a provisional image acquired by provisionally imaging the subject by the imaging unit whenever each of a plurality of distances is provisionally measured by the measurement unit and distances which are provisionally measured by the measurement unit by using the directional light rays corresponding to the in-provisional-image irradiation positions for each set, (ii) deriving factors which influence the irradiation positions for each set based on the acquired first correspondence relation, and (iii) deriving the in-image irradiation positions for each set based on the derived factors and a distance obtained at an actual measurement by the measurement unit.

13. A distance measurement method comprising: controlling an imaging unit which images a subject to image the subject in an angle of view which includes irradiation positions of a plurality of directional light rays which are light rays each having directivity used in measurement of a plurality of distances by a measurement unit which measures the plurality of distances to the subject by emitting the directional light rays to the subject and receiving reflection light rays of the directional light rays by corresponding light receiving units, onto the subject, the imaging unit and the measurement unit being included in a distance measurement device; and deriving a dimension of a real-space region corresponding to an interval between a plurality of pixels associated with the in-image irradiation positions based on distances which are related to in-image irradiation positions derived as positions corresponding to the irradiation positions within a captured image acquired by imaging the subject by the imaging unit, among the plurality of distances measured by the measurement unit, the interval for each distance within the captured image, and a focal length of the imaging unit, and the method further comprising: (i) acquiring a first correspondence relation between in-provisional-image irradiation positions corresponding to the irradiation positions within a provisional image acquired by provisionally imaging the subject by the imaging unit whenever each of a plurality of distances is provisionally measured by the measurement unit and distances which are provisionally measured by the measurement unit by using the directional light rays corresponding to the in-provisional-image irradiation positions for each direction, (ii) deriving factors which influence the irradiation positions for each direction based on the acquired first correspondence relation, and (iii) deriving the in-image irradiation positions for each direction based on the derived factors and a distance obtained at an actual measurement by the measurement unit.

14. A non-transitory computer readable medium storing a program that causes a computer to execute a process for a distance measurement, the process comprising: controlling an imaging unit which images a subject to image the subject in an angle of view which includes irradiation positions of a plurality of directional light rays which are light rays each having directivity used in measurement of a plurality of distances by a measurement unit which measures the plurality of distances to the subject by emitting the directional light rays to the subject and receiving reflection light rays of the directional light rays by corresponding light receiving units, onto the subject, the imaging unit and the measurement unit being included in a distance measurement device; and deriving a dimension of a real-space region corresponding to an interval between a plurality of pixels associated with the in-image irradiation positions based on distances which are related to in-image irradiation positions derived as positions corresponding to the irradiation positions within a captured image acquired by imaging the subject by the imaging unit, among the plurality of distances measured by the measurement unit, the interval for each distance within the captured image, and a focal length of the imaging unit, wherein the measurement unit includes a plurality of sets each having an emission unit that emits the directional light ray and a light receiving unit that receives a reflection light ray of the directional light ray emitted by the corresponding emission unit, wherein an angle at which the directional light ray is emitted is able to be changed for each set of the emission unit and the light receiving unit in a state in which a positional relation between the emission unit and the light receiving unit in each set is fixed, and the process further comprising: (i) acquiring a first correspondence relation between in-provisional-image irradiation positions corresponding to the irradiation positions within a provisional image acquired by provisionally imaging the subject by the imaging unit whenever each of a plurality of distances is provisionally measured by the measurement unit and distances which are provisionally measured by the measurement unit by using the directional light rays corresponding to the in-provisional-image irradiation positions for each set, (ii) deriving factors which influence the irradiation positions based on the acquired first correspondence relation for each set, and (iii) deriving the in-image irradiation positions for each set based on the derived factors and a distance obtained at an actual measurement by the measurement unit.

15. A non-transitory computer readable medium storing a program that causes a computer to execute a process for a distance measurement, the process comprising: controlling an imaging unit which images a subject to image the subject in an angle of view which includes irradiation positions of a plurality of directional light rays which are light rays each having directivity used in measurement of a plurality of distances by a measurement unit which measures the plurality of distances to the subject by emitting the directional light rays to the subject and receiving reflection light rays of the directional light rays by corresponding light receiving units, onto the subject, the imaging unit and the measurement unit being included in a distance measurement device; and deriving a dimension of a real-space region corresponding to an interval between a plurality of pixels associated with the in-image irradiation positions based on distances which are related to in-image irradiation positions derived as positions corresponding to the irradiation positions within a captured image acquired by imaging the subject by the imaging unit, among the plurality of distances measured by the measurement unit, the interval for each distance within the captured image, and a focal length of the imaging unit, and the process further comprising: (i) acquiring a first correspondence relation between in-provisional-image irradiation positions corresponding to the irradiation positions within a provisional image acquired by provisionally imaging the subject by the imaging unit whenever each of a plurality of distances is provisionally measured by the measurement unit and distances which are provisionally measured by the measurement unit by using the directional light rays corresponding to the in-provisional-image irradiation positions for each direction, (ii) deriving factors which influence the irradiation positions for each direction based on the acquired first correspondence relation, and (iii) deriving the in-image irradiation positions for each direction based on the derived factors and a distance obtained at an actual measurement by the measurement unit.

Description

BACKGROUND OF THE INVENTION

1. Field of the Invention

The technology of the present disclosure relates to a distance measurement device, a distance measurement method, and a distance measurement program.

2. Description of the Related Art

First, in the present specification, distance measurement means measuring the distance from a distance measurement device to a subject that is the measurement target. A captured image means an image acquired by imaging the subject with an imaging unit. Irradiation-position pixel coordinates mean the two-dimensional coordinates that specify the position of the pixel, among the pixels included in the captured image, that corresponds to the real-space irradiation position of the directional light emitted by the distance measurement device. This definition assumes a distance measurement device that measures distance based on the time the directional light (for example, a laser beam) takes to travel from an emission unit to the subject that is the distance measurement target and back. An in-image irradiation position means the position within the captured image that corresponds to the real-space irradiation position of the directional light. In other words, the in-image irradiation position is the position of the pixel, among the pixels included in the captured image, that is specified by the irradiation-position pixel coordinates.
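The round-trip (time-of-flight) principle assumed in these definitions can be illustrated with a minimal Python sketch. It assumes the light travels at the vacuum speed of light and ignores the small correction for the refractive index of air; the function name `distance_from_round_trip` is hypothetical and not taken from the patent.

```python
SPEED_OF_LIGHT_M_PER_S = 299_792_458.0  # speed of light in vacuum (exact SI value)


def distance_from_round_trip(round_trip_time_s: float) -> float:
    """Distance to the subject from the round-trip time of the emitted light.

    The light travels to the subject and back, so the one-way distance is
    half of the total path length c * t. Air's refractive index is ignored.
    """
    if round_trip_time_s < 0:
        raise ValueError("round-trip time must be non-negative")
    return SPEED_OF_LIGHT_M_PER_S * round_trip_time_s / 2.0


if __name__ == "__main__":
    # A 20 ns round trip corresponds to a subject roughly 3 m away.
    print(f"{distance_from_round_trip(20e-9):.3f} m")
```

Halving the path length is the key step: the measured time covers both the outbound and the reflected leg.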

In recent years, distance measurement devices provided with an imaging unit have been developed. In such a device, the subject is irradiated with a laser beam and is imaged while it is irradiated with the laser beam. The captured image is presented to the user, who can then ascertain the irradiation position of the laser beam from the captured image.

Distance measurement devices that can derive the real-space dimension of a target within an image, such as the measurement device described in JP2014-232095A, have also been developed in recent years.

The measurement device described in JP2014-232095A includes means for displaying an isosceles trapezoid shape of a structure having an isosceles trapezoid portion captured by the imaging unit and means for specifying four vertices of the displayed isosceles trapezoid shape and acquiring coordinates of the four specified vertices. The measurement device described in JP2014-232095A specifies a distance between two points on a plane including the isosceles trapezoid shape or a distance to one point on a plane from the imaging unit, acquires a shape of the structure from the coordinates of the four vertices and a focal length, and acquires a size of the structure from the specified distance.

When the real-space dimension of a target within a captured image acquired by imaging the subject with the imaging unit is derived, the user designates a plurality of pixels in the captured image that correspond to the real-space region whose dimension is to be derived. The real-space dimension of the region specified by the designated pixels is then derived based on the distance measured by the distance measurement device. Therefore, to derive that dimension accurately, it is preferable that the in-image irradiation position be derived with high accuracy and that the user be able to ascertain the derived in-image irradiation position together with the distance.

SUMMARY OF THE INVENTION

However, with the invention described in JP2014-232095A, a single round of imaging and distance measurement yields the real-space dimension of only the one target designated as the dimension deriving target. Therefore, when the user wants the dimensions of a plurality of targets in a captured image acquired by a single imaging operation, the targets must be designated one at a time, with the imaging and the distance measurement repeated for each, which makes deriving the dimensions laborious.

One embodiment of the present invention provides a distance measurement device, a distance measurement method, and a distance measurement program that can derive the dimensions of a plurality of targets more rapidly than in a case where only one dimension deriving target is designated each time the imaging and the distance measurement are performed.

A distance measurement device according to a first aspect of the present invention comprises an imaging unit that images a subject, a measurement unit that measures a plurality of distances to the subject by emitting a plurality of directional light rays which are light rays each having directivity to the subject and receiving reflection light rays of the directional light rays by corresponding light receiving units, a control unit that controls the imaging unit to image the subject in an angle of view which includes irradiation positions of the directional light rays used in the measurement of the plurality of distances by the measurement unit onto the subject, and a deriving unit that derives a dimension of a real-space region corresponding to an interval between a plurality of pixels associated with the in-image irradiation positions based on distances which are related to in-image irradiation positions derived as positions corresponding to the irradiation positions within a captured image acquired by imaging the subject by the imaging unit, among the plurality of distances measured by the measurement unit, the interval for each distance within the captured image, and a focal length of the imaging unit.
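The dimension derivation in the first aspect can be sketched under a pinhole-camera assumption: by similar triangles, a length on the image sensor scales to the subject by the ratio of the measured distance D to the focal length f, so L = D × (n × p) / f for a pixel interval n and pixel pitch p. The pixel pitch, the function name `real_space_dimension`, and the assumption that the measured region roughly faces the camera are all assumptions of this sketch rather than details stated in the text above.

```python
from __future__ import annotations

import math


def real_space_dimension(distance_m: float,
                         pixel_a: tuple[float, float],
                         pixel_b: tuple[float, float],
                         pixel_pitch_mm: float,
                         focal_length_mm: float) -> float:
    """Real-space length (m) spanned by two designated pixels, pinhole model.

    L = D * (n * p) / f, where n is the pixel interval, p the pixel pitch,
    f the focal length (p and f in the same unit) and D the measured distance.
    """
    if pixel_pitch_mm <= 0 or focal_length_mm <= 0:
        raise ValueError("pixel pitch and focal length must be positive")
    interval_px = math.dist(pixel_a, pixel_b)             # interval in pixels
    interval_on_sensor_mm = interval_px * pixel_pitch_mm  # same interval on the sensor
    return distance_m * interval_on_sensor_mm / focal_length_mm


if __name__ == "__main__":
    # 5 m distance, 500-pixel interval, 5 um pixels, 25 mm lens.
    print(real_space_dimension(5.0, (100, 200), (400, 600), 0.005, 25.0))
```

Because p and f share a unit, the result carries the unit of the distance D.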

Therefore, the distance measurement device according to the first aspect of the present invention can derive the dimensions of a plurality of targets more rapidly than in a case where only one dimension deriving target is designated each time the imaging and the distance measurement are performed.
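To illustrate how a single capture can serve several targets, the hypothetical sketch below assigns each designated pixel pair to the irradiation position nearest its midpoint and applies the pinhole relation L = D × (n × p) / f using that position's measured distance. The nearest-position association rule, the `IrradiationPoint` type, and the pixel pitch parameter are assumptions made for illustration, not the patent's stated procedure.

```python
from __future__ import annotations

import math
from dataclasses import dataclass


@dataclass
class IrradiationPoint:
    pixel: tuple[float, float]  # in-image irradiation position (x, y)
    distance_m: float           # distance measured with the corresponding light ray


def dimensions_for_targets(points: list[IrradiationPoint],
                           targets: list[tuple[tuple[float, float], tuple[float, float]]],
                           pixel_pitch_mm: float,
                           focal_length_mm: float) -> list[float]:
    """Real-space length (m) for each designated pixel pair, from one capture."""
    if not points:
        raise ValueError("at least one irradiation point is required")
    lengths = []
    for a, b in targets:
        midpoint = ((a[0] + b[0]) / 2, (a[1] + b[1]) / 2)
        # Hypothetical rule: use the distance of the nearest irradiation position.
        nearest = min(points, key=lambda p: math.dist(p.pixel, midpoint))
        interval_mm = math.dist(a, b) * pixel_pitch_mm
        lengths.append(nearest.distance_m * interval_mm / focal_length_mm)
    return lengths


if __name__ == "__main__":
    points = [IrradiationPoint((200.0, 300.0), 4.0), IrradiationPoint((900.0, 300.0), 8.0)]
    targets = [((100.0, 300.0), (300.0, 300.0)), ((800.0, 250.0), (1000.0, 250.0))]
    print(dimensions_for_targets(points, targets, 0.005, 25.0))
```

Both targets span 200 pixels, but the second lies near the farther irradiation position, so its derived length is twice as large.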

In a distance measurement device according to a second aspect of the present invention, which is the distance measurement device according to the first aspect, the measurement unit includes a plurality of sets each having an emission unit that emits the directional light ray and a light receiving unit that receives a reflection light ray of the directional light ray emitted by the corresponding emission unit, and the angle at which the directional light ray is emitted can be changed for each set of the emission unit and the light receiving unit while the positional relation between the emission unit and the light receiving unit in each set is kept fixed.

Therefore, with the distance measurement device according to the second aspect of the present invention, the irradiation positions of the plurality of directional light rays can be changed more easily than in a case where the angle at which the directional light is emitted cannot be changed for each set of the emission unit and the light receiving unit.

In a distance measurement device according to a third aspect of the present invention, which is the distance measurement device according to the second aspect, the deriving unit acquires, for each set, a first correspondence relation between in-provisional-image irradiation positions and provisionally measured distances. The in-provisional-image irradiation positions correspond to the irradiation positions within a provisional image acquired by provisionally imaging the subject with the imaging unit each time one of a plurality of distances is provisionally measured by the measurement unit, and the provisionally measured distances are those measured by the measurement unit using the directional light rays corresponding to the in-provisional-image irradiation positions. The deriving unit then derives the in-image irradiation positions for each set based on the acquired first correspondence relation.
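One way to picture the first correspondence relation is a simple parallax model, assumed here for illustration only. Take a pinhole camera with focal length f, pixel pitch p, and principal point c_x, and an emission unit offset from the lens by a baseline B that emits at an angle θ to the optical axis. At distance D the spot lies at lateral position B + D tan θ, which projects to x = c_x + (f/p) tan θ + (f B / p) / D, that is, x = a + b / D. The sketch fits a and b for each set by least squares on the provisional (distance, position) pairs and then predicts the in-image irradiation position for an actually measured distance. The fitted a and b stand in for the "factors" influencing the irradiation position; the factors and fitting method actually used are not specified in this passage, and all names are hypothetical.

```python
from __future__ import annotations


def fit_parallax_model(distances_m: list[float],
                       positions_px: list[float]) -> tuple[float, float]:
    """Least-squares fit of x = a + b / D to provisional (distance, position) pairs."""
    if len(distances_m) != len(positions_px) or len(distances_m) < 2:
        raise ValueError("need at least two (distance, position) pairs")
    u = [1.0 / d for d in distances_m]  # regress on inverse distance
    n = len(u)
    mean_u = sum(u) / n
    mean_x = sum(positions_px) / n
    s_uu = sum((ui - mean_u) ** 2 for ui in u)
    if s_uu == 0.0:
        raise ValueError("provisional distances must not all be equal")
    s_ux = sum((ui - mean_u) * (xi - mean_x) for ui, xi in zip(u, positions_px))
    b = s_ux / s_uu            # slope: f * B / p in the assumed model
    a = mean_x - b * mean_u    # intercept: c_x + (f / p) * tan(theta)
    return a, b


def predict_position(a: float, b: float, distance_m: float) -> float:
    """In-image irradiation position (x, pixels) for an actually measured distance."""
    return a + b / distance_m


if __name__ == "__main__":
    # Hypothetical provisional data for two emission/receiving sets.
    provisional = {
        "set_1": ([1.0, 2.0, 4.0], [4000.0, 2500.0, 1750.0]),
        "set_2": ([1.5, 3.0, 5.0], [-200.0, 100.0, 220.0]),
    }
    models = {name: fit_parallax_model(d, x) for name, (d, x) in provisional.items()}
    for name, (a, b) in models.items():
        print(name, round(predict_position(a, b, 3.0), 1))
```

Fitting in 1/D turns the parallax curve into a straight line, so ordinary linear least squares suffices; the y coordinate could be handled the same way.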

Therefore, with the distance measurement device according to the third aspect of the present invention, the in-image irradiation positions of the directional light rays emitted by the emission units of the respective sets can be derived with higher accuracy than in a case where the in-image irradiation positions are derived for each set without acquiring the first correspondence relation.

A distance measurement device according to a fourth aspect of the present invention is the distance measurement device according to the third aspect, further comprising a performing unit that performs a predetermined process for suppressing a decrease in the accuracy of the in-image irradiation position in a case where a distance actually measured by the measurement unit is outside the range of distances specified by the first correspondence relation for the corresponding set.
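A minimal sketch of the range check behind the fourth aspect, assuming a hypothetical per-set model x = a + b / D and taking the range specified by the first correspondence relation to be the span of the provisionally measured distances. The text does not say what the predetermined process is; here it is stood in for by a returned warning message, which is purely hypothetical, as are the `CalibratedSet` type and function name.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass
class CalibratedSet:
    """Hypothetical per-set calibration: x = a + b / D, valid for D in [min, max]."""
    a: float
    b: float
    min_distance_m: float
    max_distance_m: float


def derive_position_with_check(cal: CalibratedSet,
                               distance_m: float) -> tuple[float, str | None]:
    """Return (in-image x position, warning message or None)."""
    if distance_m <= 0:
        raise ValueError("distance must be positive")
    position = cal.a + cal.b / distance_m
    if cal.min_distance_m <= distance_m <= cal.max_distance_m:
        return position, None
    # Outside the calibrated range the model is extrapolated, so accuracy may drop.
    # Stand-in for the unspecified "predetermined process": report a warning.
    warning = (f"distance {distance_m:.2f} m is outside the calibrated range "
               f"[{cal.min_distance_m:.2f}, {cal.max_distance_m:.2f}] m; "
               "repeat the provisional measurement to cover it")
    return position, warning


if __name__ == "__main__":
    cal = CalibratedSet(a=1000.0, b=3000.0, min_distance_m=1.0, max_distance_m=4.0)
    print(derive_position_with_check(cal, 3.0))   # within range, no warning
    print(derive_position_with_check(cal, 10.0))  # extrapolated, with warning
```

The position is still returned when out of range, leaving the caller to decide whether to use it, re-measure, or recalibrate.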

Therefore, with the distance measurement device according to the fourth aspect of the present invention, the accuracy of the in-image irradiation positions of the directional light rays emitted by the respective sets can be increased compared to a case where the predetermined process for suppressing the decrease in the accuracy of the in-image irradiation position is not performed.

A distance measurement device according to a fifth aspect of the present invention comprises the following units:
- An imaging unit that images a subject.
- A measurement unit that measures a plurality of distances to the subject. It scans the subject with directional light rays, which are light rays each having directivity, so as to emit them in a plurality of directions, and it receives reflection light rays of the directional light rays emitted in those directions.
- A control unit that controls the imaging unit to image the subject at an angle of view that includes the irradiation positions, on the subject, of the directional light rays used by the measurement unit to measure the plurality of distances.
- A deriving unit that derives a dimension of a real-space region corresponding to an interval between a plurality of pixels associated with in-image irradiation positions. The in-image irradiation positions are derived as the positions, within a captured image acquired by imaging the subject with the imaging unit, that correspond to the irradiation positions. The derivation is based on the distances related to the in-image irradiation positions among the plurality of distances measured by the measurement unit, the interval within the captured image for each distance, and a focal length of the imaging unit.

Therefore, with the distance measurement device according to the fifth aspect of the present invention, dimensions of a plurality of targets can be derived more rapidly than in a case where only one dimension deriving target is designated for each round of imaging and distance measurement.

In a distance measurement device according to a sixth aspect of the present invention, which is the distance measurement device according to the fifth aspect, the deriving unit acquires, for each direction, a first correspondence relation between in-provisional-image irradiation positions and provisionally measured distances. It then derives the in-image irradiation positions for each direction based on the acquired first correspondence relation. The in-provisional-image irradiation positions are the positions corresponding to the irradiation positions within provisional images. Each provisional image is acquired by provisionally imaging the subject with the imaging unit each time one of a plurality of distances is provisionally measured by the measurement unit. The provisionally measured distances are the distances measured by the measurement unit using the directional light rays that correspond to those in-provisional-image irradiation positions.

Therefore, with the distance measurement device according to the sixth aspect of the present invention, the in-image irradiation positions based on the directional light rays emitted in the respective directions can be derived with higher accuracy than in a case where the in-image irradiation positions are derived for each direction without acquiring the first correspondence relation.

In a distance measurement device according to a seventh aspect of the present invention, the distance measurement device according to the sixth aspect further comprises a performing unit that performs a predetermined process for suppressing a decrease in the accuracy of the in-image irradiation position in a case where a distance actually measured by the measurement unit is outside the distance range specified by the first correspondence relation of the corresponding direction.

Therefore, with the distance measurement device according to the seventh aspect of the present invention, the accuracy of the in-image irradiation positions based on the directional light rays emitted in the plurality of directions can be increased compared to a case where the predetermined process for suppressing the decrease in the accuracy of the in-image irradiation position is not performed.

In a distance measurement device according to an eighth aspect of the present invention, the distance measurement device according to any one of the first to seventh aspects further comprises an output unit. The output unit derives the derivation accuracy corresponding to an actually present factor based on a second correspondence relation and outputs information based on the derived derivation accuracy. The second correspondence relation links assumption factors, which are factors assumed to influence the in-image irradiation positions, to the accuracy of the derivation performed by the deriving unit.

Therefore, with the distance measurement device according to the eighth aspect of the present invention, the user can ascertain information based on the derivation accuracy of the dimension of the real-space region more easily than in a case where such information is not output even though a factor influencing the in-image irradiation position is actually present.

In a distance measurement device according to a ninth aspect of the present invention, which is the distance measurement device according to the eighth aspect, different derivation accuracies are associated with different assumption factors in the second correspondence relation, and the output unit derives the derivation accuracy associated with the assumption factor corresponding to the actually present factor.

Therefore, with the distance measurement device according to the ninth aspect of the present invention, the derivation accuracy can be derived more accurately than in a case where the derivation accuracy is associated with only a single assumption factor.

In a distance measurement device according to a tenth aspect of the present invention, which is the distance measurement device according to the ninth aspect, in a case where a plurality of factors are actually present, the output unit derives the derivation accuracy by integrating the derivation accuracies associated with the assumption factors corresponding to the plurality of actually present factors.

Therefore, with the distance measurement device according to the tenth aspect of the present invention, the derivation accuracy is easier to handle than in a case where the derivation accuracies are derived individually. Those individual accuracies would be the ones associated with the assumption factors corresponding to the plurality of factors actually present in the distance measurement device that influence the in-image irradiation position.
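The following is a minimal sketch of the eighth to tenth aspects. It assumes that the second correspondence relation (the factor and accuracy table of FIG. 14) maps each assumption factor to a relative error of the derived dimension. The factor names and values are hypothetical. The source does not specify how several accuracies are "integrated". Root-sum-square is used here as one possible rule, on the assumption that the factors act independently.

```python
import math
from typing import Dict, Iterable

# Hypothetical second correspondence relation: assumption factor -> relative error.
FACTOR_ACCURACY: Dict[str, float] = {
    "lens_replaced": 0.02,
    "angle_of_view_changed": 0.01,
    "emission_direction_changed": 0.03,
}


def derive_accuracy(present_factors: Iterable[str],
                    table: Dict[str, float] = FACTOR_ACCURACY) -> float:
    """Look up the accuracy for each actually present factor (ninth aspect)
    and integrate them by root-sum-square (tenth aspect, assumed rule)."""
    errors = [table[factor] for factor in present_factors]
    return math.sqrt(sum(e * e for e in errors))


def error_text(length_m: float, present_factors: Iterable[str]) -> str:
    """Information output based on the derived accuracy: a +/- error in mm."""
    return f"\u00b1{length_m * derive_accuracy(present_factors) * 1000:.0f} mm"


print(error_text(1.5, ["angle_of_view_changed", "emission_direction_changed"]))  # ±47 mm
```

A simple sum of the errors would be a more conservative alternative to root-sum-square. Either rule produces one figure that can be displayed next to the derived length.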

In a distance measurement device according to an eleventh aspect of the present invention, the distance measurement device according to any one of the first to tenth aspects further comprises a change unit capable of changing the angle at which the directional light ray is emitted. In a case where the in-image irradiation position is outside a default range within the captured image, the control unit controls the measurement unit to measure the distance until the in-image irradiation position falls within the default range. It also controls the deriving unit to derive the in-image irradiation position based on the distance measured by the measurement unit and the angle changed by the change unit.

Therefore, with the distance measurement device according to the eleventh aspect of the present invention, distance measurement can be performed in a state in which the in-image irradiation position is within the default range of the captured image.

In a distance measurement device according to a twelfth aspect of the present invention, which is the distance measurement device according to the eleventh aspect, the control unit acts in a case where the in-image irradiation position is outside the default range. It controls the measurement unit to measure the distance until the in-image irradiation position falls within the default range, and controls the change unit to change the angle by driving a power source. It also controls the deriving unit to derive the in-image irradiation position based on the distance measured by the measurement unit and the angle changed by the change unit.

Therefore, with the distance measurement device according to the twelfth aspect of the present invention, less effort is required to bring the in-image irradiation position into the default range than in a case where the angle is changed by the change unit without using the power source.
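The following is a minimal sketch of the adjustment loop in the eleventh and twelfth aspects. FIG. 20 refers to parameters that influence the in-image irradiation position, but they are not given here, so the sketch assumes a simple pinhole model:
- The distance measurement unit sits a lateral baseline away from the optical axis.
- The emission angle is measured from a direction parallel to the optical axis.
- The measured distance is treated as depth.

`measure`, `baseline_m`, `focal_px`, the default range and the 0.5° step are all hypothetical.

```python
import math
from typing import Callable, Tuple


def in_image_x(distance_m: float, angle_rad: float, baseline_m: float,
               focal_px: float, cx: float) -> float:
    """Pinhole sketch: lateral offset of the laser spot divided by its depth, in pixels."""
    lateral_m = baseline_m + distance_m * math.tan(angle_rad)
    return cx + focal_px * lateral_m / distance_m


def adjust_until_in_range(measure: Callable[[float], float], angle_rad: float,
                          default_range: Tuple[float, float], baseline_m: float,
                          focal_px: float, cx: float,
                          step_rad: float = math.radians(0.5),
                          max_iter: int = 200) -> Tuple[float, float, float]:
    """Repeat measurement, changing the emission angle, until the derived
    in-image irradiation position falls within `default_range`."""
    lo, hi = default_range
    for _ in range(max_iter):
        distance_m = measure(angle_rad)
        x = in_image_x(distance_m, angle_rad, baseline_m, focal_px, cx)
        if lo <= x <= hi:
            return angle_rad, distance_m, x
        # x grows with the angle in this model, so step toward the range
        # (in the twelfth aspect this step would be driven by a motor).
        angle_rad += -step_rad if x > hi else step_rad
    raise RuntimeError("irradiation position did not reach the default range")


# Hypothetical flat wall 5 m away; the spot starts right of the default range.
angle, dist, x = adjust_until_in_range(lambda a: 5.0, 0.02, (900.0, 1000.0),
                                       baseline_m=0.05, focal_px=2000.0, cx=960.0)
print(angle, dist, x)
```

Capping the number of iterations keeps the loop from running indefinitely when the default range is narrower than one angular step. On a real device, `measure` would trigger the measurement unit at the current angle.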

In a distance measurement device according to a thirteenth aspect of the present invention, which is the distance measurement device according to any one of the first to twelfth aspects, a frame including an in-image irradiation position is designated for each in-image irradiation position within the captured image, and a plurality of pixels is designated inside each frame. For each frame, the deriving unit derives the dimension of the real-space region corresponding to the interval between the plurality of designated pixels. The derivation is based on the distance related to the corresponding in-image irradiation position among the plurality of distances measured by the measurement unit, the interval, and the focal length.

Therefore, with the distance measurement device according to the thirteenth aspect of the present invention, the dimension of the real-space region can be derived from the distance measured with whichever of the plurality of directional light rays the user wishes to use. This is not possible in a case where the frame including the in-image irradiation position is not designated.
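The following is a minimal sketch of the per-frame derivation in the thirteenth aspect. It assumes rectangular frames in pixel coordinates and exactly one in-image irradiation position per frame. It also uses the pinhole relation length = distance × pixel interval × pixel pitch / focal length, which is an assumption because the aspect only names the quantities involved. `Frame`, `pixel_pitch_mm` and `focal_mm` are hypothetical names.

```python
import math
from dataclasses import dataclass
from typing import List, Sequence, Tuple

Point = Tuple[float, float]


@dataclass
class Frame:
    left: float
    top: float
    right: float
    bottom: float
    p1: Point  # first pixel designated inside the frame
    p2: Point  # second pixel designated inside the frame

    def contains(self, pt: Point) -> bool:
        x, y = pt
        return self.left <= x <= self.right and self.top <= y <= self.bottom


def derive_per_frame(frames: Sequence[Frame],
                     spots: Sequence[Tuple[Point, float]],
                     pixel_pitch_mm: float, focal_mm: float) -> List[float]:
    """For each frame, use the distance measured at the irradiation position
    inside it to convert the designated pixel interval into metres."""
    lengths = []
    for frame in frames:
        distance_m = next((d for pos, d in spots if frame.contains(pos)), None)
        if distance_m is None:
            raise ValueError("frame contains no in-image irradiation position")
        interval_px = math.dist(frame.p1, frame.p2)
        lengths.append(distance_m * interval_px * pixel_pitch_mm / focal_mm)
    return lengths


frames = [Frame(0, 0, 960, 1080, (100, 500), (600, 500)),
          Frame(960, 0, 1920, 1080, (1000, 300), (1000, 700))]
spots = [((400, 500), 3.0), ((1400, 500), 5.0)]  # (position [px], distance [m])
print(derive_per_frame(frames, spots, pixel_pitch_mm=0.004, focal_mm=20.0))
```

Each frame draws on its own distance, so two regions at different depths are scaled independently within a single captured image.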

In a distance measurement device according to a fourteenth aspect of the present invention, which is the distance measurement device according to any one of the first to thirteenth aspects, the subject includes a plurality of planar regions that differ from one another in at least one of orientation or position. The measurement unit measures distances to the plurality of planar regions by emitting the directional light rays to the planar regions and receiving reflection light rays of the directional light rays emitted to the planar regions.

Therefore, with the distance measurement device according to the fourteenth aspect of the present invention, dimensions of different real-space regions can be derived more easily, using the plurality of planar regions as targets, than in a case where only one directional light ray is emitted to the planar regions.

A distance measurement method according to a fifteenth aspect of the present invention comprises controlling and deriving steps in a distance measurement device that includes an imaging unit and a measurement unit. The measurement unit measures a plurality of distances to the subject by emitting a plurality of directional light rays (light rays each having directivity) to the subject and receiving reflection light rays of the directional light rays with corresponding light receiving units. In the controlling step, the imaging unit, which images a subject, is controlled so as to image the subject at an angle of view that includes the irradiation positions, on the subject, of the directional light rays used to measure the plurality of distances. In the deriving step, a dimension of a real-space region is derived. The region corresponds to an interval between a plurality of pixels associated with in-image irradiation positions, which are derived as the positions, within a captured image acquired by imaging the subject with the imaging unit, that correspond to the irradiation positions. The derivation is based on the distances related to the in-image irradiation positions among the plurality of distances measured by the measurement unit, the interval within the captured image for each distance, and a focal length of the imaging unit.

Therefore, with the distance measurement method according to the fifteenth aspect of the present invention, dimensions of a plurality of targets can be derived more rapidly than in a case where only one dimension deriving target is designated for each round of imaging and distance measurement.

A distance measurement method according to a sixteenth aspect of the present invention comprises controlling and deriving steps in a distance measurement device that includes an imaging unit and a measurement unit. The measurement unit measures a plurality of distances to the subject by emitting a plurality of directional light rays (light rays each having directivity) to the subject and receiving reflection light rays of the directional light rays with corresponding light receiving units. In the controlling step, the imaging unit, which images a subject, is controlled so as to image the subject at an angle of view that includes the irradiation positions, on the subject, of the directional light rays used to measure the plurality of distances. In the deriving step, a dimension of a real-space region is derived. The region corresponds to an interval between a plurality of pixels associated with in-image irradiation positions, which are derived as the positions, within a captured image acquired by imaging the subject with the imaging unit, that correspond to the irradiation positions. The derivation is based on the distances related to the in-image irradiation positions among the plurality of distances measured by the measurement unit, the interval within the captured image for each distance, and a focal length of the imaging unit.

Therefore, with the distance measurement method according to the sixteenth aspect of the present invention, dimensions of a plurality of targets can be derived more rapidly than in a case where only one dimension deriving target is designated for each round of imaging and distance measurement.

A distance measurement program according to a seventeenth aspect of the present invention causes a computer to execute a process comprising controlling and deriving steps in a distance measurement device that includes an imaging unit and a measurement unit. The measurement unit measures a plurality of distances to the subject by emitting a plurality of directional light rays (light rays each having directivity) to the subject and receiving reflection light rays of the directional light rays with corresponding light receiving units. In the controlling step, the imaging unit, which images a subject, is controlled so as to image the subject at an angle of view that includes the irradiation positions, on the subject, of the directional light rays used to measure the plurality of distances. In the deriving step, a dimension of a real-space region is derived. The region corresponds to an interval between a plurality of pixels associated with in-image irradiation positions, which are derived as the positions, within a captured image acquired by imaging the subject with the imaging unit, that correspond to the irradiation positions. The derivation is based on the distances related to the in-image irradiation positions among the plurality of distances measured by the measurement unit, the interval within the captured image for each distance, and a focal length of the imaging unit.

Therefore, with the distance measurement program according to the seventeenth aspect of the present invention, dimensions of a plurality of targets can be derived more rapidly than in a case where only one dimension deriving target is designated for each round of imaging and distance measurement.

A distance measurement program according to an eighteenth aspect of the present invention causes a computer to execute a process comprising controlling and deriving steps in a distance measurement device that includes an imaging unit and a measurement unit. The measurement unit measures a plurality of distances to the subject by emitting a plurality of directional light rays (light rays each having directivity) to the subject and receiving reflection light rays of the directional light rays with corresponding light receiving units. In the controlling step, the imaging unit, which images a subject, is controlled so as to image the subject at an angle of view that includes the irradiation positions, on the subject, of the directional light rays used to measure the plurality of distances. In the deriving step, a dimension of a real-space region is derived. The region corresponds to an interval between a plurality of pixels associated with in-image irradiation positions, which are derived as the positions, within a captured image acquired by imaging the subject with the imaging unit, that correspond to the irradiation positions. The derivation is based on the distances related to the in-image irradiation positions among the plurality of distances measured by the measurement unit, the interval within the captured image for each distance, and a focal length of the imaging unit.

Therefore, with the distance measurement program according to the eighteenth aspect of the present invention, dimensions of a plurality of targets can be derived more rapidly than in a case where only one dimension deriving target is designated for each round of imaging and distance measurement.

According to one embodiment of the present invention, dimensions of a plurality of targets can be derived more rapidly than in a case where only one dimension deriving target is designated for each round of imaging and distance measurement.

BRIEF DESCRIPTION OF THE DRAWINGS

FIG. 1 is a front view showing an example of an external appearance of a distance measurement device according to the first to fifth embodiments.

FIG. 2 is a conceptual diagram (schematic side view) showing an example of a schematic configuration of a distance measurement unit and a longitudinal rotation mechanism according to the first to sixth embodiments.

FIG. 3 is a conceptual diagram (schematic front view) showing an example of a schematic configuration of an upper distance measurement unit, an upper horizontal rotation mechanism, a lower distance measurement unit, and a lower horizontal rotation mechanism according to the first to sixth embodiments.

FIG. 4 is a conceptual diagram (schematic plan view) showing an example of a schematic configuration of the upper distance measurement unit and the lower distance measurement unit according to the first to sixth embodiments.

FIG. 5 is a block diagram showing an example of a hardware configuration of main parts of the distance measurement device according to the first to third embodiments and the fifth embodiment.

FIG. 6 is a block diagram showing an example of a hardware configuration of main parts of the distance measurement unit according to the first to third embodiments, the fifth embodiment, and the sixth embodiment.

FIG. 7 is a time chart showing an example of a measurement sequence using the distance measurement device according to the first to seventh embodiments.

FIG. 8 is a time chart showing an example of a laser trigger, a light-emitting signal, a light-receiving signal, and a count signal required in a case where measurement using the distance measurement device according to the first to seventh embodiments is performed once.

FIG. 9 is a graph showing an example of a histogram of measurement values acquired in the measurement sequence using the distance measurement device according to the first to seventh embodiments. The horizontal axis represents the distance (measurement value) to the subject, and the vertical axis represents the number of measurements.

FIG. 10 is a block diagram showing an example of a hardware configuration of a main control unit included in the distance measurement device according to the first to fifth embodiments and the seventh embodiment.

FIG. 11 is an explanatory diagram for describing a method of measuring a dimension (length) of a designated region.

FIG. 12 is a functional block diagram showing an example of functions of main parts realized by a CPU of the distance measurement device according to the first to seventh embodiments.

FIG. 13 is a conceptual diagram showing an example of a position and distance table according to the first to seventh embodiments.

FIG. 14 is a conceptual diagram showing an example of a factor and accuracy table according to the first to seventh embodiments.

FIG. 15 is a flowchart showing an example of a flow of a factor storing process according to the first to seventh embodiments.

FIG. 16 is a flowchart showing an example of a flow of a measurement process according to the first to third embodiments, the fifth embodiment, and the sixth embodiment.

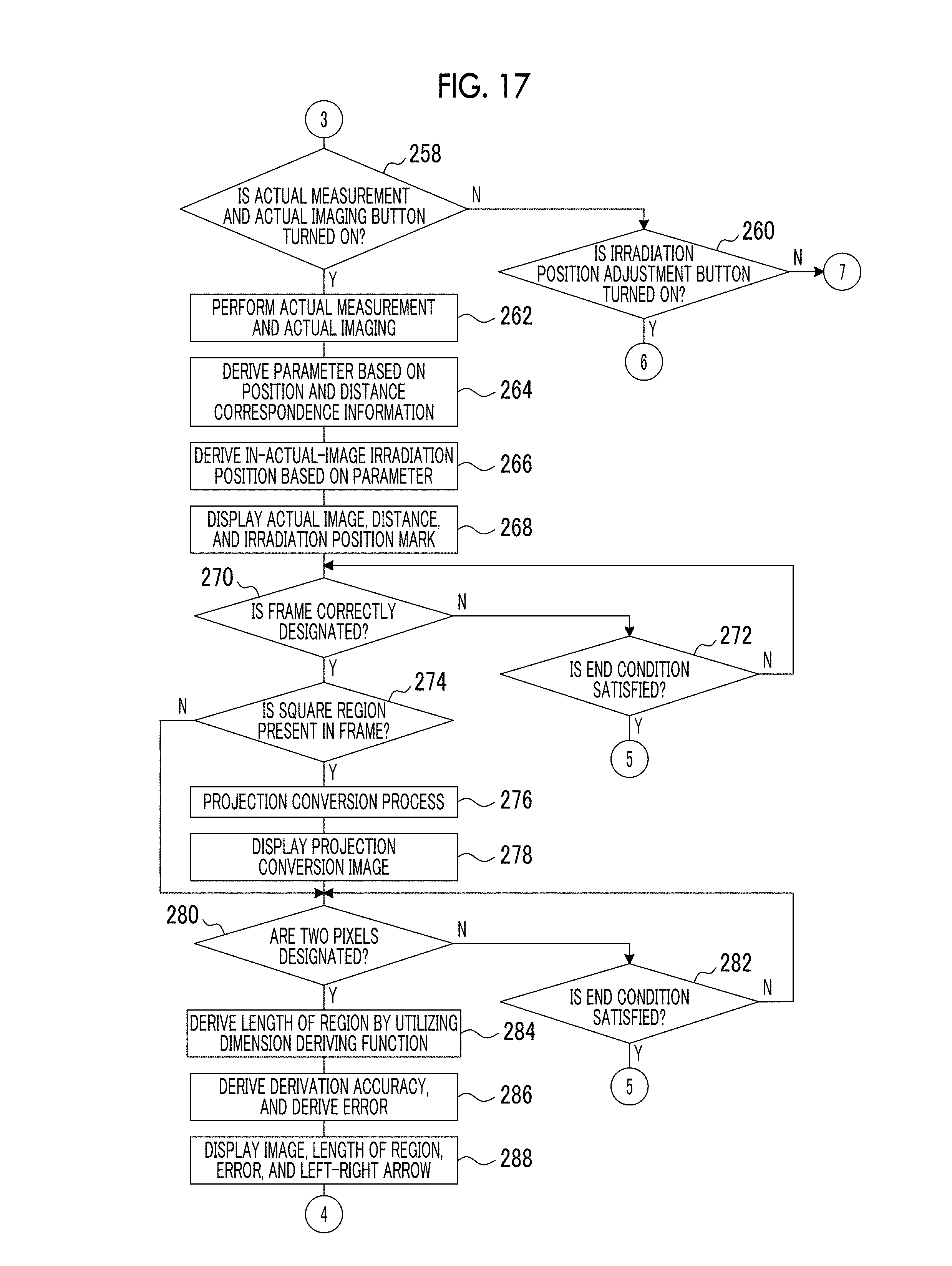

FIG. 17 is a flowchart subsequent to the flowcharts shown in FIGS. 16 and 36.

FIG. 18 is a flowchart subsequent to the flowcharts shown in FIGS. 16 and 48.

FIG. 19 is a flowchart showing an example of a flow of a data acquisition process for deriving an irradiation position according to the first to seventh embodiments.

FIG. 20 is an explanatory diagram for describing a parameter that influences an in-image irradiation position.

FIG. 21 is a screen diagram showing an example of a screen in which an actual image, a distance, an error, and an irradiation position mark are displayed on a display unit according to the first to seventh embodiments.

FIG. 22 is a screen diagram showing an example of an irradiation position adjustment recommendation screen according to the first to seventh embodiments.

FIG. 23 is a screen diagram showing an example of a provisional measurement and provisional imaging guide screen according to the first to seventh embodiments.

FIG. 24 is a screen diagram showing an example of a re-performing guide screen according to the first to seventh embodiments.

FIG. 25 is a screen diagram showing an example of a screen in which a plurality of square frames each including an irradiation position mark is designated in a display region within an actual image.

FIG. 26 is a screen diagram showing an example of a screen in which an actual image including a projection conversion image acquired by performing a projection conversion process on an image region within the square frame is displayed.

FIG. 27 is a screen diagram showing an example of a screen in which a length of a region, an error, and a left-right arrow are displayed so as to be superimposed on the projection conversion image.

FIG. 28 is a flowchart showing an example of a flow of an irradiation position adjustment process according to the first embodiment and the fifth to seventh embodiments.

FIG. 29 is a screen diagram showing an example of a live view image, a frame, and an irradiation position mark displayed on the display unit by performing the irradiation position adjustment process.

FIG. 30 is a screen diagram showing an example of a live view image, a frame, an irradiation position mark, and a message corresponding to out-of-default-range information displayed on the display unit by performing the irradiation position adjustment process.

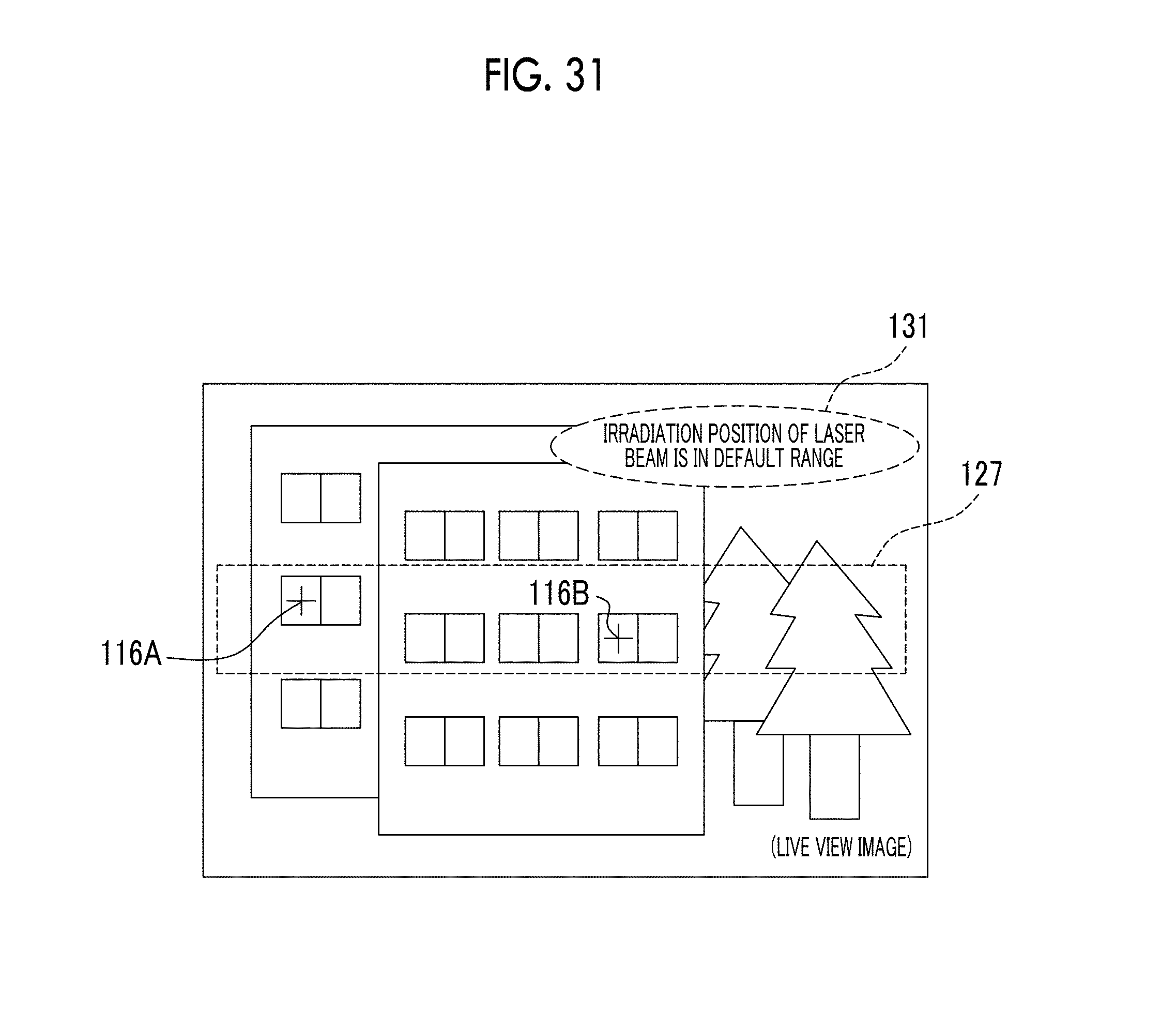

FIG. 31 is a screen diagram showing an example of a live view image, a frame, an irradiation position mark, and a message corresponding to in-default-range information displayed on the display unit by performing the irradiation position adjustment process.

FIG. 32 is a flowchart showing an example of a flow of an irradiation position adjustment process according to the second embodiment.

FIG. 33 is a flowchart showing an example of a flow of an irradiation position adjustment process according to the third embodiment.

FIG. 34 is a block diagram showing an example of a hardware configuration of main parts of the distance measurement device according to the fourth embodiment.

FIG. 35 is a block diagram showing an example of a hardware configuration of main parts of the distance measurement unit according to the fourth embodiment.

FIG. 36 is a flowchart showing an example of a flow of a measurement process according to the fourth embodiment.

FIG. 37 is a flowchart showing an example of a flow of an irradiation position adjustment process according to the fourth embodiment.

FIG. 38 is a flowchart showing an example of a flow of the measurement process according to the fifth embodiment, and is also a flowchart subsequent to the flowchart shown in FIG. 16.

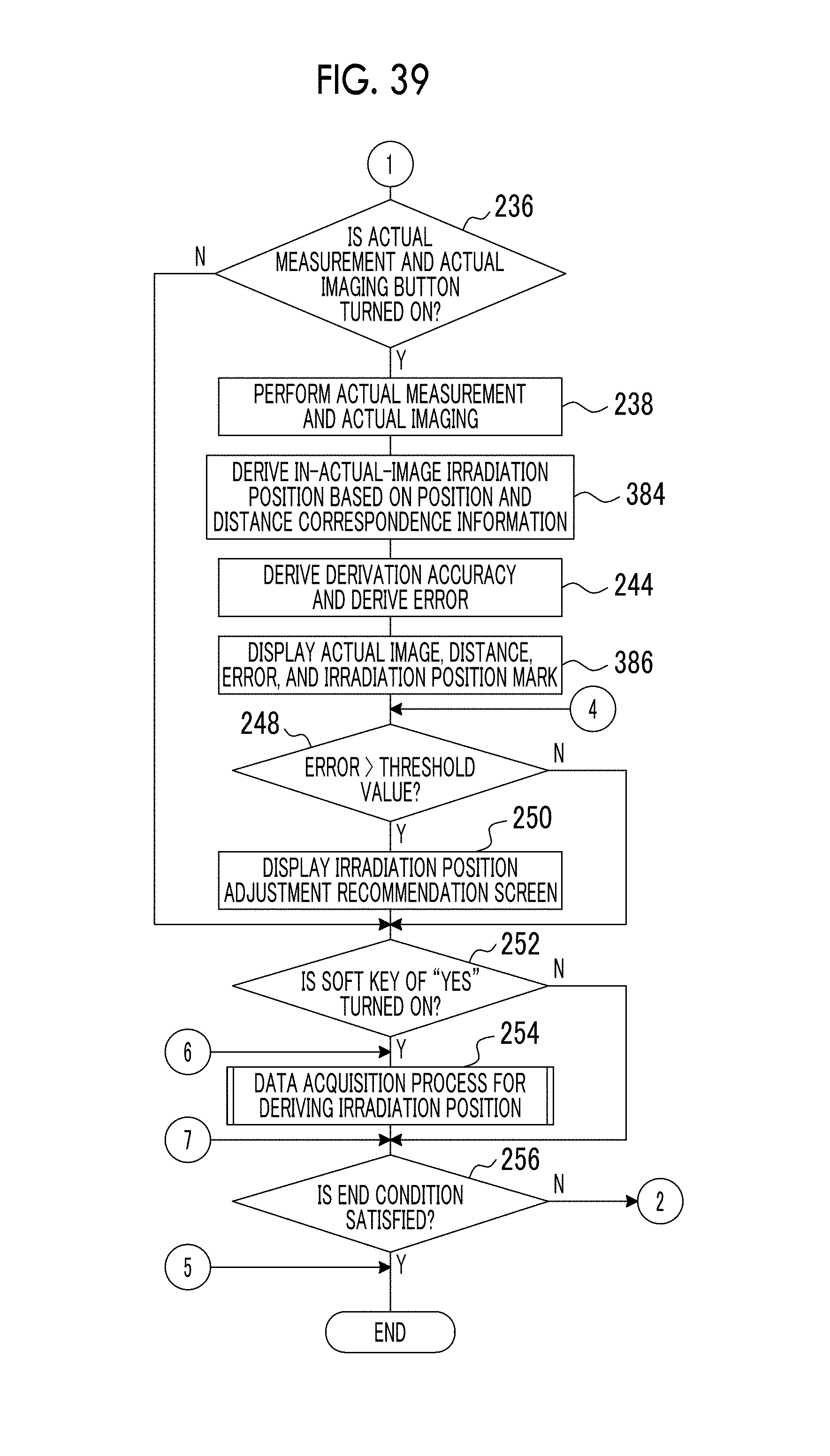

FIG. 39 is a flowchart showing an example of a flow of the measurement process according to the fifth embodiment, and is also a flowchart subsequent to the flowchart shown in FIG. 16.

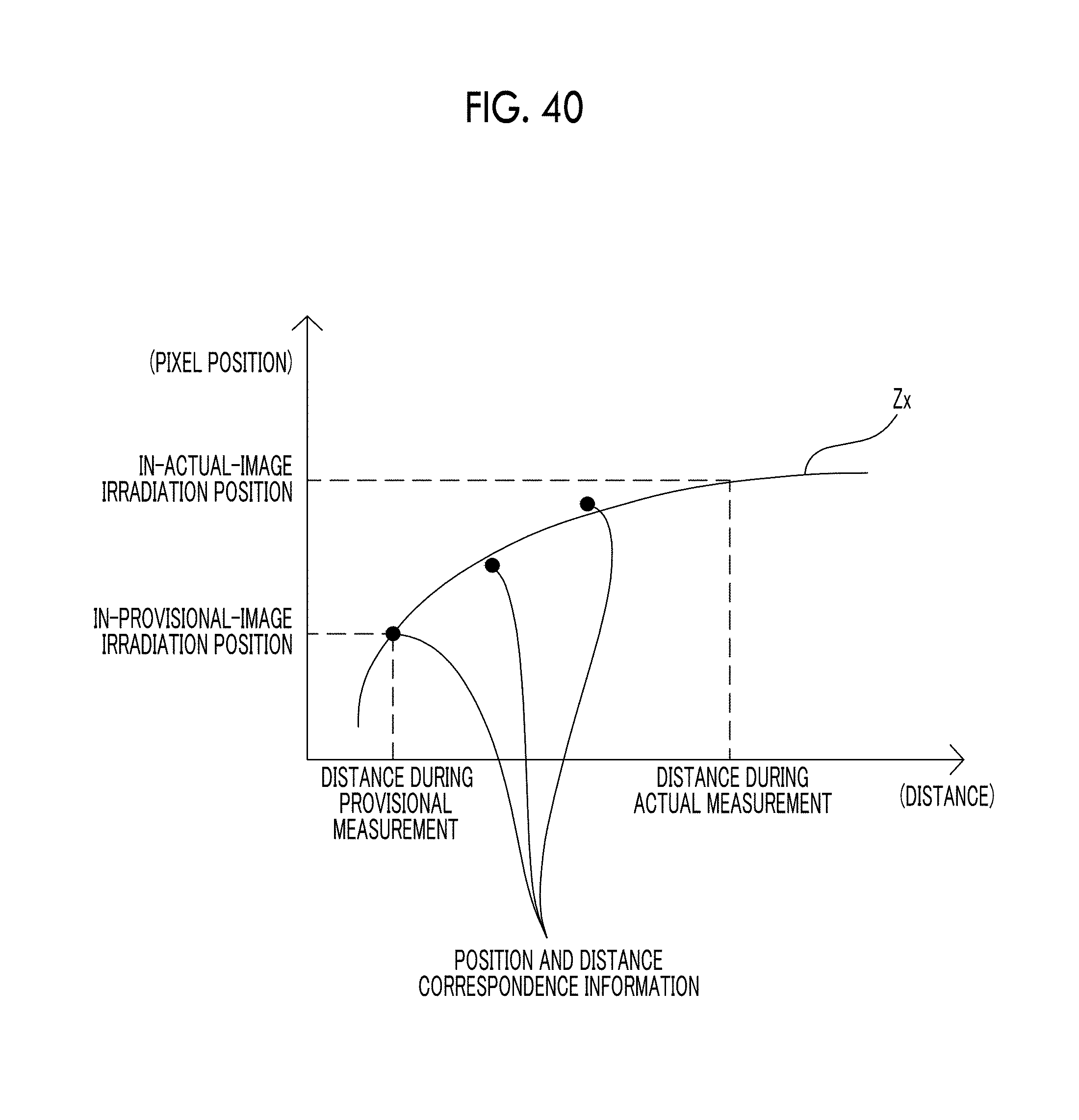

FIG. 40 is a graph showing an example of an approximate curve related to the latest position and distance correspondence information.

FIG. 41 is a block diagram showing an example of a hardware configuration of main parts of the distance measurement device according to the sixth embodiment.

FIG. 42 is a screen diagram showing an example of a screen including an actual measurement and actual imaging button, a provisional measurement and provisional imaging button, an imaging system operation mode switching button, a wide angle instruction button, a telephoto instruction button, a measurement system operation mode switching button, an irradiation position adjustment button, an upper horizontal rotation touchpad, a lower horizontal rotation touchpad, and a longitudinal rotation touchpad displayed as soft keys on a display unit of a smart device according to the sixth embodiment.

FIG. 43 is a front view showing an example of an external appearance of a distance measurement device according to the seventh embodiment.

FIG. 44 is a conceptual diagram (schematic side view) showing an example of a schematic configuration of a distance measurement unit and a longitudinal rotation mechanism according to the seventh embodiment.

FIG. 45 is a conceptual diagram (schematic front view) showing an example of a distance measurement unit and a horizontal rotation mechanism according to the seventh embodiment.

FIG. 46 is a conceptual diagram (schematic plan view) showing an example of a scanning aspect using the distance measurement unit according to the seventh embodiment.

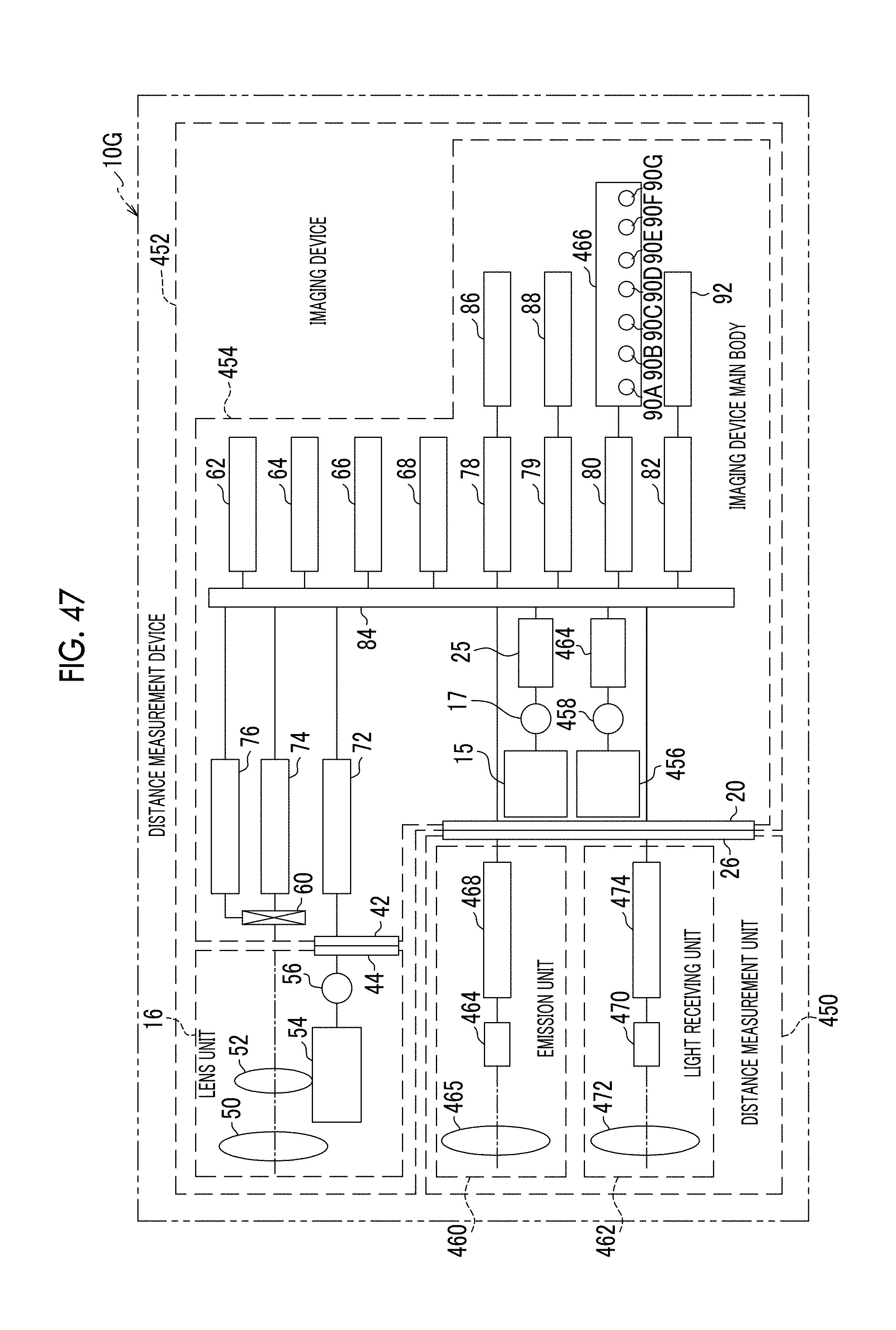

FIG. 47 is a block diagram showing an example of a hardware configuration of main parts of the distance measurement device according to the seventh embodiment.

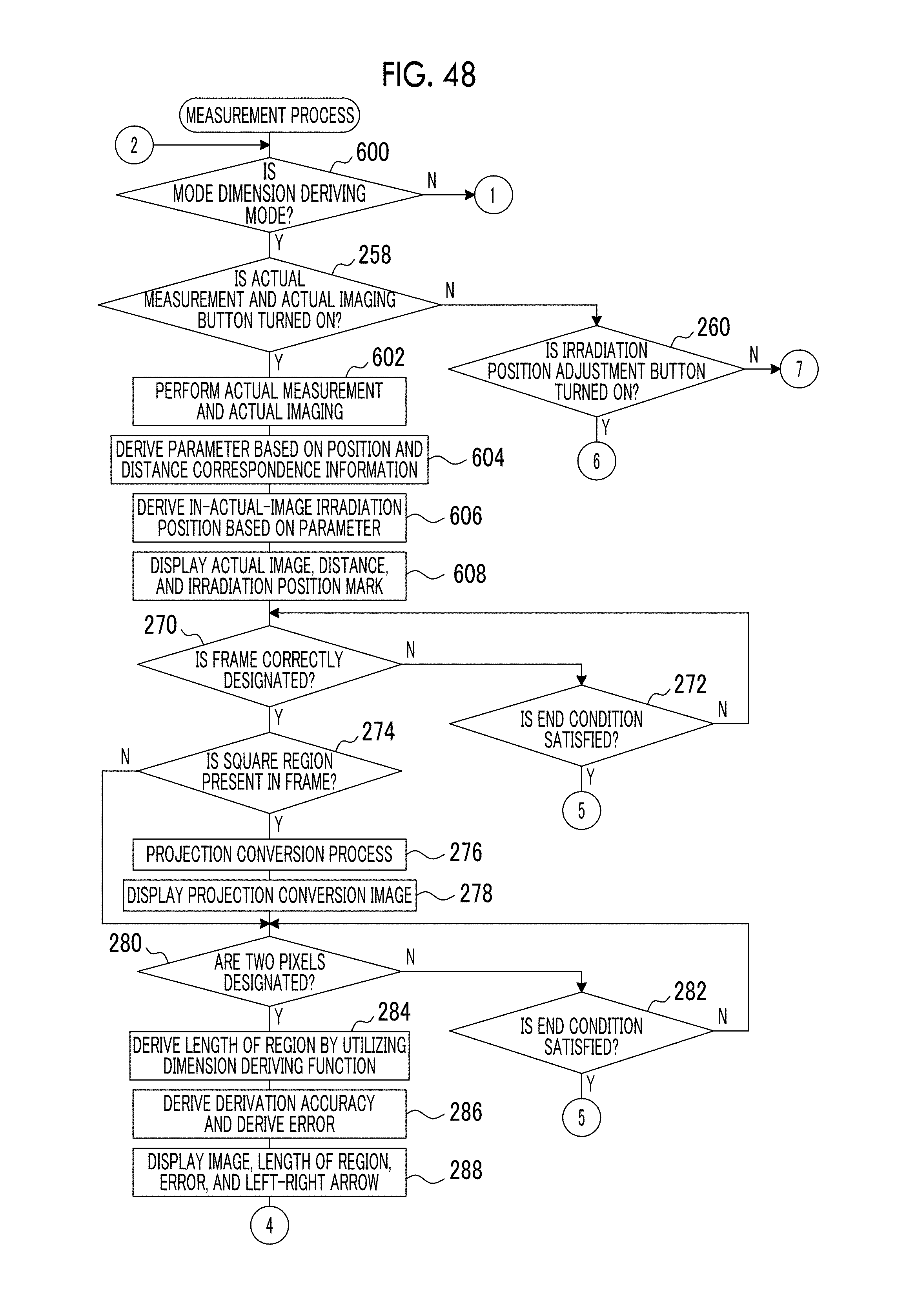

FIG. 48 is a flowchart showing an example of a flow of a measurement process according to the seventh embodiment.

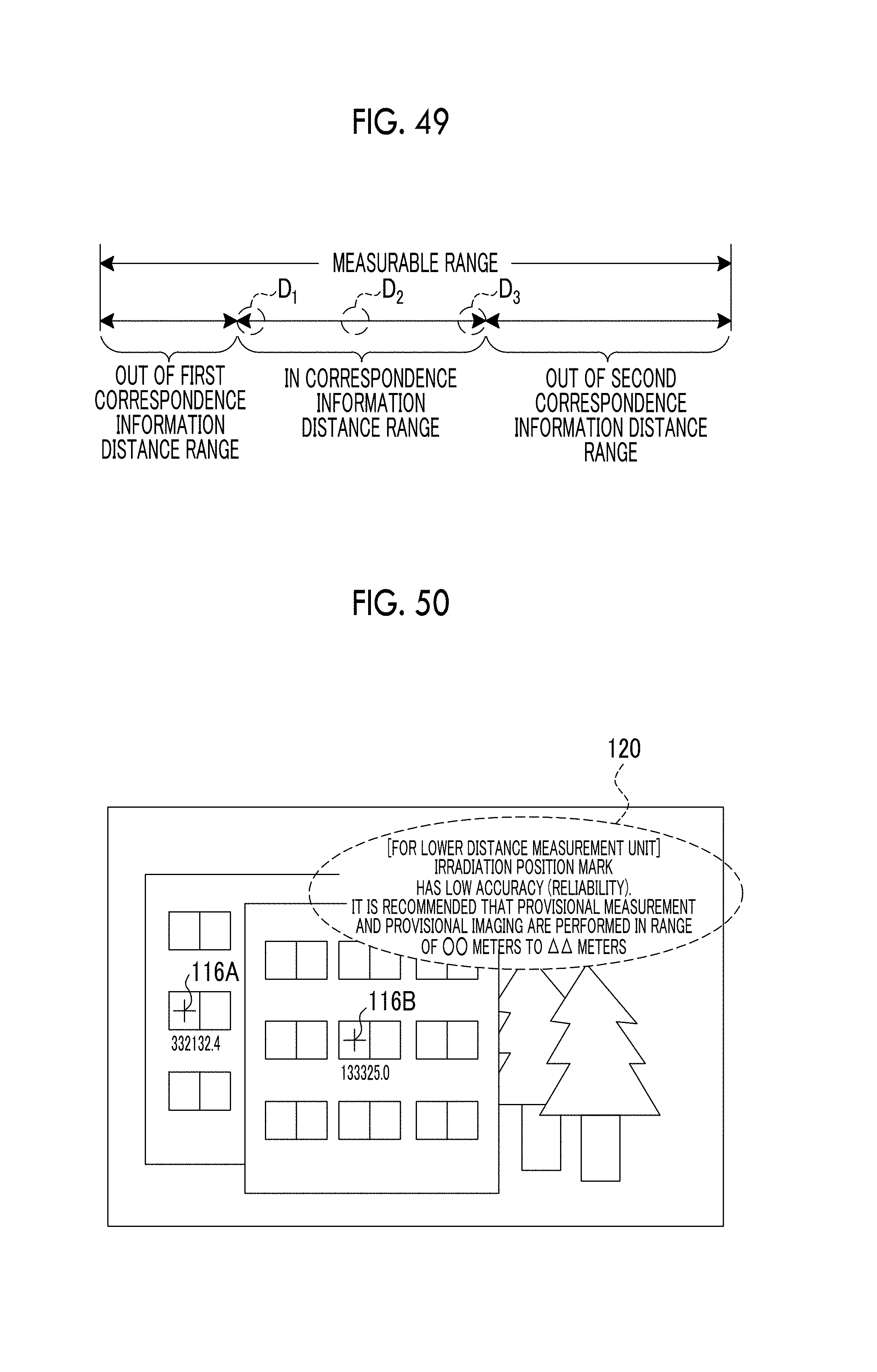

FIG. 49 is a conceptual diagram showing an example in which a distance is in a correspondence information distance range, is out of a first correspondence information distance range, and is out of a second correspondence information distance range according to the embodiments.

FIG. 50 is a screen diagram showing an example of a screen in which an actual image, a distance, an error, an irradiation position mark, and a warning and recommendation message are displayed on the display unit according to the first to sixth embodiments.

FIG. 51 is a conceptual diagram showing an example in which a distance is in a correspondence information distance range, is out of a first correspondence information distance range, and is out of a second correspondence information distance range used in a case where an in-image irradiation position is derived by using an approximate curve according to the fifth embodiment.

FIG. 52 is a conceptual diagram showing an example of an aspect in which a program is installed in the distance measurement device from a storage medium that stores a program according to the first to seventh embodiments.

DESCRIPTION OF THE PREFERRED EMBODIMENTS

Hereinafter, an example of an embodiment related to a technology of the present disclosure will be described with reference to the accompanying drawings. In the present embodiment, for the sake of convenience in description, the distance between the distance measurement device and a subject that is the measurement target is simply referred to as a "distance". Likewise, the angle of view on the subject (that is, on the subject image representing the subject) is simply referred to as the "angle of view".

First Embodiment

For example, a distance measurement device 10A according to the first embodiment includes a distance measurement unit 12 and an imaging device 14 as shown in FIG. 1. In the present embodiment, the distance measurement unit 12 and a distance measurement control unit 68 (see FIG. 5) to be described below are examples of a measurement unit according to the technology of the present disclosure, and the imaging device 14 is an example of an imaging unit according to the technology of the present disclosure.

The imaging device 14 includes a lens unit 16 and an imaging device main body 18, and the lens unit 16 is detachably attached to the imaging device main body 18.

A hot shoe 20 is provided on a top surface of the imaging device main body 18, and the distance measurement unit 12 is detachably attached to the hot shoe 20.

The distance measurement device 10A has two functions. The distance measurement system function measures a distance by causing the distance measurement unit 12 to emit a laser beam for distance measurement. The imaging system function causes the imaging device 14 to acquire a captured image by imaging the subject. Hereinafter, the captured image acquired when the imaging device 14 images the subject by using the imaging system function is simply referred to as an "image" or a "captured image".

The distance measurement unit 12 includes an upper distance measurement unit 11 and a lower distance measurement unit 13, and the upper distance measurement unit 11 is stacked on top of the lower distance measurement unit 13. The upper distance measurement unit 11 and the lower distance measurement unit 13 are examples of a "set" according to the technology of the present disclosure.

The upper distance measurement unit 11 is attached to the lower distance measurement unit 13 so as to be rotatable about an axis passing through its central portion in plan view. The lower distance measurement unit 13 is attached to the central portion of the hot shoe 20 in plan view so as to be rotatable about an axis passing through its own central portion in plan view.

Hereinafter, for the sake of convenience in description, in a case where it is not necessary to distinguish between the upper distance measurement unit 11 and the lower distance measurement unit 13, each is referred to as an "individual distance measurement unit" without a reference numeral.

By utilizing the distance measurement system function, the distance measurement device 10A performs one measurement sequence (see FIG. 7) with each of the upper distance measurement unit 11 and the lower distance measurement unit 13 in response to a single instruction. The distance measurement device 10A finally outputs one distance from the measurement sequence performed with the upper distance measurement unit 11, and one distance from the measurement sequence performed with the lower distance measurement unit 13.
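FIGS. 8 and 9 indicate that a measurement sequence consists of many individual measurements whose values are collected into a histogram. The sketch below shows how one final distance per individual distance measurement unit could be obtained from such a sequence. It rests on three assumptions:
- Each measurement yields a round-trip time of flight.
- Distance = speed of light × time / 2.
- The centre of the most frequent histogram bin is taken as the result.

The 1 cm bin width and the function names are hypothetical.

```python
from collections import Counter
from typing import Iterable

SPEED_OF_LIGHT_M_S = 299_792_458.0


def tof_to_distance(round_trip_s: float) -> float:
    """Convert a round-trip time of flight into a one-way distance in metres."""
    return SPEED_OF_LIGHT_M_S * round_trip_s / 2.0


def sequence_distance(round_trip_times_s: Iterable[float], bin_m: float = 0.01) -> float:
    """Histogram the per-shot distances and return the centre of the most frequent bin."""
    # round() assigns each distance to the bin centred on a multiple of bin_m.
    counts = Counter(round(tof_to_distance(t) / bin_m) for t in round_trip_times_s)
    if not counts:
        raise ValueError("no light-receiving events in this sequence")
    best_bin, _ = counts.most_common(1)[0]
    return best_bin * bin_m
```

Calling `sequence_distance` once on the upper unit's samples and once on the lower unit's samples would give the two distances output for a single instruction. Taking the mode of the histogram rather than the mean keeps occasional stray reflections from pulling the result away from the dominant distance.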

In the present embodiment, actual measurement and provisional measurement are selectively performed by utilizing the distance measurement system function according to an instruction of a user. Actual measurement is measurement whose distance result is actually used. Provisional measurement is measurement performed in a preparatory stage to increase the accuracy of the actual measurement.

The distance measurement device 10A has, as operation modes of the imaging system function, a still image imaging mode and a video imaging mode. The still image imaging mode is an operation mode for imaging a still image, and the video imaging mode is an operation mode for imaging a motion picture. The still image imaging mode and the video imaging mode are selectively set according to an instruction of the user.

In the present embodiment, the actual imaging and the provisional imaging are selectively performed by utilizing the imaging system function according to an instruction of the user. The actual imaging is imaging performed in synchronization with the actual measurement, and the provisional imaging is imaging performed in synchronization with the provisional measurement. Hereinafter, for the sake of convenience in description, an image acquired by performing actual imaging is also referred to as an actual captured image or an actual image, and an image acquired by performing provisional imaging is also referred to as a provisional captured image or a provisional image. Hereinafter, the actual captured image and the provisional captured image are referred to as an "image" or a "captured image" for the sake of convenience in description in a case where it is not necessary to distinguish between these images.

The distance measurement device 10A has, as an operation mode of the distance measurement system function, a distance deriving mode and a dimension deriving mode. The distance deriving mode is an operation mode in which the distance measurement device 10A measures a distance. The dimension deriving mode is an operation mode in which a dimension of a real-space region designated by the user is derived based on the distance measured by the distance measurement device 10A by utilizing a dimension deriving function.
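The relationship between a single instruction, the two individual distance measurement units, and the modes described above can be summarized as a small data model. This is a minimal Python sketch under stated assumptions: the names `ImagingMode`, `MeasurementMode`, `Stage`, `InstructionResult`, and `on_instruction` are hypothetical, and each unit's measurement sequence is stubbed as a callable because its internals (FIG. 7) are not described in this part of the text.

```python
from dataclasses import dataclass
from enum import Enum, auto
from typing import Callable, Dict


class ImagingMode(Enum):
    STILL = auto()  # still image imaging mode
    VIDEO = auto()  # video imaging mode (motion picture)


class MeasurementMode(Enum):
    DISTANCE_DERIVING = auto()   # report the measured distance
    DIMENSION_DERIVING = auto()  # derive a real-space dimension from measured distances


class Stage(Enum):
    ACTUAL = auto()       # measured distance is actually used; paired with actual imaging
    PROVISIONAL = auto()  # preparatory stage; paired with provisional imaging


@dataclass
class InstructionResult:
    stage: Stage
    distances: Dict[str, float]  # exactly one final distance per individual unit


def on_instruction(sequences: Dict[str, Callable[[], float]],
                   stage: Stage) -> InstructionResult:
    """Run one measurement sequence per individual unit for a single instruction.

    Each callable is a hypothetical stand-in for a whole measurement sequence
    and returns the one distance that the sequence finally outputs.
    """
    return InstructionResult(stage, {name: run() for name, run in sequences.items()})
```

For example, `on_instruction({"upper": lambda: 3.2, "lower": lambda: 3.4}, Stage.ACTUAL)` yields one distance for each of the two units, tagged as an actual measurement.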

Hereinafter, an example in which a length between two points in a real space is derived as the dimension of the real-space region will be described for the sake of convenience in description. Hereinafter, a region "between two points in the real space" is also referred to as a "region in the real space" or is simply referred to as a "region" for the sake of convenience in description.
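According to the abstract, the dimension of the real-space region is derived from the measured distance, the interval between pixels within the captured image, and the focal length of the imaging unit. Under an assumed ideal pinhole camera model, with the region roughly facing the camera, this reduces to similar triangles, $L = D \cdot (u \cdot p) / f$, where $D$ is the distance, $u$ the pixel interval, $p$ the pixel pitch of the image sensor, and $f$ the focal length. The Python sketch below is illustrative only; the function name and the pixel-pitch parameter are assumptions, not values given in the source.

```python
def real_space_length(distance_mm: float, pixel_interval: float,
                      pixel_pitch_mm: float, focal_length_mm: float) -> float:
    """Real-space length spanned by `pixel_interval` pixels at `distance_mm`.

    Assumes an ideal pinhole model; `pixel_pitch_mm` is a hypothetical
    sensor parameter used to convert pixels into a length on the sensor.
    """
    if focal_length_mm <= 0:
        raise ValueError("focal length must be positive")
    image_plane_length_mm = pixel_interval * pixel_pitch_mm  # length on the sensor
    return distance_mm * image_plane_length_mm / focal_length_mm  # similar triangles
```

For instance, with a distance of 5000 mm, an interval of 1000 pixels, a 0.004 mm pixel pitch, and a 20 mm focal length, the derived length is about 1000 mm.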

For example, the imaging device main body 18 includes a longitudinal rotation mechanism 15 as shown in FIG. 2. The longitudinal rotation mechanism 15 receives power generated by a motor 17 (see FIG. 5), described below, and rotates the hot shoe 20 in the front-view longitudinal direction with a front end portion of the hot shoe 20 as the rotation axis. Accordingly, the hot shoe 20 to which the distance measurement unit 12 is attached is rotated in the front-view longitudinal direction by the longitudinal rotation mechanism 15, and thus the orientation of the distance measurement unit 12 is changed in the front-view longitudinal direction (for example, the A1 direction represented in FIG. 2).

In the example shown in FIG. 2, for the sake of convenience in description, the hot shoe 20 is rotated in the front-view longitudinal direction such that a rear end portion of the hot shoe 20 is buried within the imaging device main body 18; however, the technology of the present disclosure is not limited thereto. For example, the hot shoe 20 may be rotated in the front-view longitudinal direction such that the rear end portion of the hot shoe 20 is pushed up from the imaging device main body 18. Hereinafter, for the sake of convenience in description, the front-view longitudinal direction is simply referred to as a "longitudinal direction".

For example, the upper distance measurement unit 11 includes an upper horizontal rotation mechanism 11A, as shown in FIG. 3. The upper horizontal rotation mechanism 11A receives power generated by a motor 11B (see FIG. 6), described below, and rotates the upper distance measurement unit 11 in the front-view horizontal direction with a plan-view central portion of the lower distance measurement unit 13 as the rotation axis. Accordingly, the upper distance measurement unit 11 is rotated in the front-view horizontal direction in a state in which the distance measurement unit 12 is attached to the hot shoe 20, and thus the orientation of the upper distance measurement unit 11 is changed in the front-view horizontal direction (for example, the direction of the arrow B1 represented in FIG. 3). Hereinafter, for the sake of convenience in description, the front-view horizontal direction is simply referred to as a "horizontal direction".

For example, the lower distance measurement unit 13 includes a lower horizontal rotation mechanism 13A as shown in FIG. 3. The lower horizontal rotation mechanism 13A receives power generated by a motor 13B (see FIG. 6), described below, and rotates the lower distance measurement unit 13 in the horizontal direction with the plan-view central portion of the hot shoe 20 as the rotation axis. Accordingly, the lower distance measurement unit 13 is rotated in the horizontal direction in a state in which the distance measurement unit 12 is attached to the hot shoe 20, and thus the orientation of the lower distance measurement unit 13 is changed in the horizontal direction (for example, the direction of the arrow B1 represented in FIG. 3).

As stated above, since the distance measurement unit 12 includes a plurality of individual distance measurement units (for example, the upper distance measurement unit 11 and the lower distance measurement unit 13) arranged in the longitudinal direction, it is possible to emit a plurality of laser beams to the subject according to one instruction. In a case where the orientations of the upper distance measurement unit 11 and the lower distance measurement unit 13 are changed in the horizontal direction, the upper distance measurement unit 11 and the lower distance measurement unit 13 can emit the laser beams in different directions, as shown in FIG. 4. The example shown in FIG. 4 shows a state in which two laser beams are emitted from the distance measurement unit 12.
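To illustrate why rotating the units horizontally separates the irradiation positions, consider an assumed flat subject perpendicular to the front direction at perpendicular distance $Z$. A beam rotated horizontally by an angle $\theta$ strikes the subject at a lateral offset $Z \tan\theta$ and travels a distance $Z / \cos\theta$. This Python sketch is a simplification under stated assumptions: the flat subject, the function names, and the neglect of the vertical offset between the units and their offset from the rotation axis are all hypothetical, not taken from the source.

```python
import math


def irradiation_point(perpendicular_distance: float, yaw_deg: float) -> tuple[float, float]:
    """Return (lateral offset, distance along beam) for a beam rotated by yaw_deg.

    Assumes a flat subject perpendicular to the front direction and a beam
    origin on the rotation axis (hypothetical simplification).
    """
    theta = math.radians(yaw_deg)
    lateral = perpendicular_distance * math.tan(theta)
    along_beam = perpendicular_distance / math.cos(theta)
    return lateral, along_beam


def beam_separation(perpendicular_distance: float,
                    upper_yaw_deg: float, lower_yaw_deg: float) -> float:
    """Horizontal separation between the two irradiation positions on the subject."""
    upper_x, _ = irradiation_point(perpendicular_distance, upper_yaw_deg)
    lower_x, _ = irradiation_point(perpendicular_distance, lower_yaw_deg)
    return abs(upper_x - lower_x)
```

With the subject 3 m away and the units rotated by +10 and -10 degrees, the two irradiation positions are roughly 1.06 m apart, which is what lets a single instruction sample two separate points.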

In the first to sixth embodiments, in a case where it is not necessary to distinguish between the upper horizontal rotation mechanism 11A and the lower horizontal rotation mechanism 13A, they are referred to as a "horizontal rotation mechanism" without reference numerals for the sake of convenience in description. Likewise, in the first to sixth embodiments, in a case where it is not necessary to distinguish between the longitudinal rotation mechanism 15 and the horizontal rotation mechanism, the longitudinal rotation mechanism and the horizontal rotation mechanism are referred to as a "rotation mechanism" without reference numerals.

For example, the distance measurement unit 12 includes a connector 26, as shown in FIG. 5. The upper distance measurement unit 11 includes signal lines 28A, 28B, and 28C, and the lower distance measurement unit 13 includes signal lines 28D, 28E, and 28F. The signal lines 28A, 28B, 28C, 28D, 28E, and 28F are connected to the connector 26.

The connector 26 can be connected to the hot shoe 20, and the distance measurement unit 12 operates under the control of the imaging device main body 18 in a state in which the connector 26 is connected to the hot shoe 20.

For example, the upper distance measurement unit 11 includes an emission unit 22 and a light receiving unit 24, as shown in FIG. 6. The positional relation between the emission unit 22 and the light receiving unit 24 is fixed.

The emission unit 22 includes a laser diode (LD) 22A, a condenser lens (not shown), an object lens 22B, and an LD driver 22C.

The condenser lens and the object lens 22B are provided along an optical axis of a laser beam emitted by the LD 22A, and the condenser lens and the object lens 22B are arranged in order along the optical axis from the LD 22A.

The LD 22A emits a laser beam for distance measurement, which is an example of directional light according to the technology of the present disclosure. The laser beam emitted by the LD 22A is a colored laser beam. For example, as long as the subject is within about several meters of the emission unit 22, the irradiation position of the laser beam can be visually recognized both in real space and in the captured image acquired by imaging the subject with the imaging device 14.

The condenser lens concentrates the laser beam emitted by the LD 22A, and causes the concentrated laser beam to pass. The object lens 22B faces the subject, and emits the laser beam that passes through the condenser lens to the subject.

The LD driver 22C is connected to the LD 22A, and is connected to the connector 26 through the signal line 28A. The LD driver 22C emits the laser beam by driving the LD 22A according to an instruction of the imaging device main body 18.

The light receiving unit 24 includes a photodiode (PD) 24A, an object lens 24B, and a light-receiving signal processing circuit 24C. The object lens 24B is disposed on a light receiving surface of the PD 24A. After the laser beam emitted by the emission unit 22 reaches the subject, a reflection laser beam, which is the laser beam reflected from the subject, is incident on the object lens 24B. The object lens 24B causes the reflection laser beam to pass, and guides the reflection laser beam to the light receiving surface of the PD 24A. The PD 24A receives the reflection laser beam that passes through the object lens 24B, and outputs an analog signal corresponding to the amount of received light, as a light-receiving signal.
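This part of the text does not spell out how a distance is computed once the reflection laser beam is received. For a laser range finder of this kind, a common principle, assumed here for illustration, is time of flight: the distance is half the round-trip travel time multiplied by the speed of light. The Python sketch below uses hypothetical names and the vacuum speed of light.

```python
SPEED_OF_LIGHT_M_PER_S = 299_792_458.0  # in vacuum; in air light is about 0.03% slower


def distance_from_round_trip(emit_time_s: float, receive_time_s: float) -> float:
    """Distance to the subject from emission and reception times (time of flight).

    Assumes the timestamps mark emission of the laser beam and reception of
    its reflection; both the names and the timing scheme are hypothetical.
    """
    round_trip_s = receive_time_s - emit_time_s
    if round_trip_s < 0:
        raise ValueError("reception cannot precede emission")
    return SPEED_OF_LIGHT_M_PER_S * round_trip_s / 2.0  # halve: out and back
```

A round trip of 20 ns, for example, corresponds to a distance of about 3 m, which shows why the reception timing must be resolved very finely.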

The light-receiving signal processing circuit 24C is connected to the PD 24A, and is connected to the connector 26 through the signal line 28B. The light-receiving signal processing circuit 24C amplifies the light-receiving signal input from the PD 24A by an amplifier (not shown), and performs analog-to-digital (A/D) conversion on the amplified light-receiving signal. The light-receiving signal processing circuit 24C outputs the light-receiving signal digitized through the A/D conversion to the imaging device main body 18.
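The processing in the light-receiving signal processing circuit can be pictured as two stages: amplification followed by analog-to-digital conversion. The Python sketch below assumes a fixed amplifier gain, an ADC input range starting at 0 V, and uniform quantization; the gain, the full-scale voltage, and the bit depth are hypothetical defaults, since the source does not give them.

```python
def digitize(signal_v: list[float], gain: float = 10.0,
             full_scale_v: float = 3.3, bits: int = 12) -> list[int]:
    """Amplify analog light-receiving samples and quantize them to `bits` bits.

    All parameter defaults are hypothetical illustration values.
    """
    max_code = (1 << bits) - 1
    codes = []
    for v in signal_v:
        amplified = v * gain                               # amplifier stage
        clamped = min(max(amplified, 0.0), full_scale_v)   # limit to ADC input range
        codes.append(round(clamped / full_scale_v * max_code))  # uniform quantization
    return codes
```

Samples that the amplifier drives past the full-scale voltage saturate at the maximum code, so in practice the gain would be chosen with the expected reflection strength in mind.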

The upper distance measurement unit 11 includes a motor 11B. The motor 11B is connected to the upper horizontal rotation mechanism 11A so as to transfer the power, and is connected to the connector 26 through the signal line 28C. Accordingly, the upper horizontal rotation mechanism 11A is activated by receiving the power generated by the motor 11B under the control of the imaging device main body 18.

For example, the lower distance measurement unit 13 includes an emission unit 30 and a light receiving unit 32, as shown in FIG. 6. The positional relation between the emission unit 30 and the light receiving unit 32 is fixed.

The emission unit 30 includes an LD 30A, a condenser lens (not shown), an object lens 30B, and an LD driver 30C.

The condenser lens and the object lens 30B are provided along an optical axis of a laser beam emitted by the LD 30A, and the condenser lens and the object lens 30B are arranged in order along the optical axis from the LD 30A.

The LD 30A emits a laser beam for distance measurement which is an example of directional light according to the technology of the present disclosure. The laser beam emitted by the LD 30A is the same laser beam as that of the LD 22A of the emission unit 22 of the upper distance measurement unit 11.

The condenser lens concentrates the laser beam emitted by the LD 30A, and causes the concentrated laser beam to pass. The object lens 30B faces the subject, and emits the laser beam that passes through the condenser lens to the subject.

The LD driver 30C is connected to the LD 30A, and is connected to the connector 26 through the signal line 28D. The LD driver 30C emits the laser beam according to an instruction of the imaging device main body 18 by driving the LD 30A.

The light receiving unit 32 includes a PD 32A, an object lens 32B, and a light-receiving signal processing circuit 32C. The object lens 32B is disposed on a light receiving surface of the PD 32A. After the laser beam emitted by the emission unit 30 reaches the subject, a reflection laser beam, which is the laser beam reflected from the subject, is incident on the object lens 32B. The object lens 32B causes the reflection laser beam to pass, and guides the reflection laser beam to the light receiving surface of the PD 32A. The PD 32A receives the reflection laser beam that passes through the object lens 32B, and outputs an analog signal corresponding to the amount of received light, as a light-receiving signal.

Hereinafter, the object lenses are referred to as the "object lens" without being assigned the references for the sake of convenience in description in a case where it is not necessary to distinguish between the object lenses 22B, 24B, 30B, and 32B.

The light-receiving signal processing circuit 32C is connected to the PD 32A, and is connected to the connector 26 through the signal line 28E. The light-receiving signal processing circuit 32C amplifies the light-receiving signal input from the PD 32A by an amplifier (not shown), and performs A/D conversion on the amplified light-receiving signal. The light-receiving signal processing circuit 32C outputs the light-receiving signal digitized through the A/D conversion to the imaging device main body 18.

The lower distance measurement unit 13 includes a motor 13B. The motor 13B is connected to the lower horizontal rotation mechanism 13A so as to transfer the power, and is connected to the connector 26 through the signal line 28F. Accordingly, the lower horizontal rotation mechanism 13A is activated by receiving the power generated by the motor 13B under the control of the imaging device main body 18.
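The wiring described for the two individual distance measurement units can be summarized as a lookup table from each signal line to the component it connects to the connector 26. The entries below are taken from the text; representing them as a Python dictionary, and the helper `lines_for`, are illustrative choices only.

```python
# Signal lines connected to the connector 26, as described for the two units.
SIGNAL_LINES = {
    "28A": ("upper distance measurement unit 11", "LD driver 22C"),
    "28B": ("upper distance measurement unit 11", "light-receiving signal processing circuit 24C"),
    "28C": ("upper distance measurement unit 11", "motor 11B"),
    "28D": ("lower distance measurement unit 13", "LD driver 30C"),
    "28E": ("lower distance measurement unit 13", "light-receiving signal processing circuit 32C"),
    "28F": ("lower distance measurement unit 13", "motor 13B"),
}


def lines_for(unit: str) -> list[str]:
    """Signal lines that belong to one individual distance measurement unit."""
    return sorted(line for line, (owner, _) in SIGNAL_LINES.items() if owner == unit)
```

The table makes the symmetry visible: each unit uses one line for emission, one for the received signal, and one for its rotation motor.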

For example, the imaging device 14 includes mounts 42 and 44, as shown in FIG. 5. The mount 42 is provided at the imaging device main body 18, and the mount 44 is provided at the lens unit 16. The lens unit 16 is attached to the imaging device main body 18 so as to be replaceable by coupling the mount 42 to the mount 44.

The lens unit 16 includes an imaging lens 50, a zoom lens 52, a zoom lens moving mechanism 54, and a motor 56.

Subject light, which is light reflected from the subject, is incident on the imaging lens 50. The imaging lens 50 causes the subject light to pass, and guides the subject light to the zoom lens 52.

The zoom lens 52 is attached to the zoom lens moving mechanism 54 so as to slide along the optical axis. The motor 56 is connected to the zoom lens moving mechanism 54. The zoom lens moving mechanism 54 receives power from the motor 56, and causes the zoom lens 52 to slide along the optical axis direction.

The motor 56 is connected to the imaging device main body 18 through the mounts 42 and 44, and the driving of the motor 56 is controlled according to a command from the imaging device main body 18. In the present embodiment, a stepping motor is used as an example of the motor 56. Accordingly, the motor 56 is driven in synchronization with pulsed power according to a command from the imaging device main body 18.
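Because a stepping motor advances by a fixed angle per pulse, the position of the zoom lens 52 can be commanded by counting drive pulses. The Python sketch below assumes full-step drive with a 1.8 degree step angle and a zoom lens moving mechanism that converts one motor revolution into 0.5 mm of travel. Both values and the function name are hypothetical; the source gives neither.

```python
def pulses_for_travel(travel_mm: float, step_angle_deg: float = 1.8,
                      lead_mm_per_rev: float = 0.5) -> int:
    """Number of drive pulses needed to slide the zoom lens by `travel_mm`.

    The sign of the result gives the direction. Step angle and lead are
    hypothetical values for a full-step drive.
    """
    steps_per_rev = 360.0 / step_angle_deg
    travel_per_step_mm = lead_mm_per_rev / steps_per_rev
    return round(travel_mm / travel_per_step_mm)
```

With these assumed values, each pulse moves the lens 0.0025 mm, so a 1 mm move takes 400 pulses; open-loop pulse counting like this is the usual reason for choosing a stepping motor for such a mechanism.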