Network management layer--configuration management

Pugaczewski

U.S. patent number 10,341,200 [Application Number 15/792,466] was granted by the patent office on 2019-07-02 for network management layer--configuration management. This patent grant is currently assigned to CenturyLink Intellectual Property LLC. The grantee listed for this patent is CenturyLink Intellectual Property LLC. Invention is credited to John T. Pugaczewski.


| United States Patent | 10,341,200 |
|---|---|
| Pugaczewski | July 2, 2019 |


Abstract

Novel tools and techniques are provided for implementing network management layer configuration management. In some embodiments, a system might determine one or more network devices in a network for implementing a service arising from a service request that originates from a client device over the network. The system might further determine network technology utilized by each of the one or more network devices, and might generate flow domain information (in some cases, in the form of a flow domain network ("FDN") object), using flow domain analysis, based at least in part on the determined network devices and/or the determined network technology. The system might automatically configure at least one of the network devices to enable performance of the service (which might include, without limitation, service activation, service modification, fault isolation, and/or performance monitoring), based at least in part on the generated flow domain information.

| Inventors: | Pugaczewski; John T. (Hugo, MN) |
|---|---|
| Assignee: | CenturyLink Intellectual Property LLC (Broomfield, CO) |
| Family ID: | 52466774 |
| Appl. No.: | 15/792,466 |
| Filed: | October 24, 2017 |

Prior Publication Data

| Document Identifier | Publication Date |
|---|---|
| US 20180048539 A1 | Feb 15, 2018 |

Related U.S. Patent Documents

| Application Number | Filing Date | Patent Number | Issue Date |
|---|---|---|---|
| 15141274 | Apr 28, 2016 | 9806966 | |
| 14462778 | Aug 19, 2014 | 9363159 | Jun 7, 2016 |
| 61867461 | Aug 19, 2013 | | |

| Current U.S. Class: | 1/1 |

| Current CPC Class: | H04L 41/0206 (20130101); H04L 41/022 (20130101); H04L 45/02 (20130101); H04L 12/4641 (20130101); H04L 12/42 (20130101); H04L 41/5054 (20130101); H04L 2012/421 (20130101) |

| Current International Class: | H04L 12/28 (20060101); H04L 12/46 (20060101); H04L 12/42 (20060101); H04L 12/751 (20130101); H04L 12/24 (20060101) |

References Cited

U.S. Patent Documents

| Patent/Publication No. | Date | Inventor |
|---|---|---|
| 8675488 | March 2014 | Sidebottom et al. |
| 8693323 | April 2014 | McDysan |
| 8948207 | February 2015 | DelRegno et al. |
| 9363159 | June 2016 | Pugaczewski |
| 9806966 | October 2017 | Pugaczewski |
| 2005/0251584 | November 2005 | Monga et al. |
| 2005/0259655 | November 2005 | Cuervo et al. |
| 2005/0259674 | November 2005 | Cuervo et al. |
| 2005/0262232 | November 2005 | Cuervo et al. |
| 2006/0109802 | May 2006 | Zelig et al. |
| 2006/0133405 | June 2006 | Fee |
| 2006/0153070 | July 2006 | DelRegno et al. |
| 2008/0003998 | January 2008 | Small |
| 2008/0046597 | February 2008 | Stademann et al. |
| 2008/0049621 | February 2008 | McGuire et al. |
| 2009/0310610 | December 2009 | Sandstrom |
| 2010/0002722 | January 2010 | Porat et al. |
| 2012/0263186 | October 2012 | Ueno |
| 2015/0049641 | February 2015 | Pugaczewski |
| 2016/0241451 | August 2016 | Pugaczewski |

Foreign Patent Documents

| Document No. | Date | Office |
|---|---|---|
| 2418802 | Feb 2012 | EP |
| WO-2015/026809 | Feb 2015 | WO |

Other References

International Search Report and Written Opinion prepared by the Korean Intellectual Property Office as International Searching Authority for PCT Intl Patent App. No. PCT/US2014/051667 dated Nov. 24, 2014; 9 pages. cited by applicant.

Publication Notice of PCT International Patent Application No. PCT/US2014/051667; dated Feb. 26, 2015; 1 page. cited by applicant.

International Preliminary Report on Patentability for PCT International Patent Application No. PCT/US2014/051667; dated Mar. 3, 2016; 6 pages. cited by applicant.

Primary Examiner: Yao; Kwang B

Assistant Examiner: Loo; Juvena W

Parent Case Text

CROSS-REFERENCES TO RELATED APPLICATIONS

This application is a continuation application of U.S. patent application Ser. No. 15/141,274 (the "'274 application"), filed Apr. 28, 2016 by John T. Pugaczewski, entitled "Network Management Layer--Configuration Management," which is a continuation application of U.S. patent application Ser. No. 14/462,778 (the "'778 application"), filed Aug. 19, 2014 by John T. Pugaczewski, entitled "Network Management Layer--Configuration Management," which claims priority to U.S. Patent Application Ser. No. 61/867,461 (the "'461 application"), filed Aug. 19, 2013 by John T. Pugaczewski, entitled "Network Management Layer--Configuration Management," the entire disclosure of which is incorporated herein by reference in its entirety for all purposes.

Claims

What is claimed is:

1. A method, comprising: receiving, with a first network device in a network, a service request, the service request originating from a first client device over the network; determining, with the first network device, one or more second network devices for implementing a service arising from the service request; determining, with the first network device, network technology utilized by each of the one or more second network devices; generating, with the first network device, flow domain information, using flow domain analysis, based at least in part on the determined one or more second network devices and based at least in part on the determined network technology utilized by each of the one or more second network devices; and automatically configuring, with a third network device in the network, at least one of the one or more second network devices to enable performance of the service arising from the service request, based at least in part on the generated flow domain information.

2. The method of claim 1, wherein generating, with the first network device, flow domain information, using flow domain analysis, based at least in part on the determined one or more second network devices and based at least in part on the determined network technology utilized by each of the one or more second network devices comprises generating, with the first network device, a flow domain network ("FDN") object, using flow domain analysis, based at least in part on the determined one or more second network devices and based at least in part on the determined network technology utilized by each of the one or more second network devices.

3. The method of claim 2, wherein generating, with the first network device, the FDN object, using flow domain analysis comprises generating, with the first network device, a computed graph of flow domains indicating one or more network paths in the network through the one or more second network devices and indicating relevant connectivity associations of the one or more second network devices.

4. The method of claim 2, wherein generating, with the first network device, the FDN object, using flow domain analysis comprises utilizing an outside-in analysis that first analyzes relevant user network interfaces.

5. The method of claim 1, wherein the service request comprises a Metro Ethernet Forum ("MEF") service request.

6. The method of claim 5, wherein determining, with the first network device, network technology utilized by each of the one or more second network devices comprises mapping, with the first network device, MEF services associated with the service request with the network technology utilized by each of the one or more second network devices.

7. The method of claim 6, wherein mapping, with the first network device, MEF services associated with the service request with the network technology utilized by each of the one or more second network devices comprises implementing, with the first network device, an intermediate abstraction between the MEF services and the network technology utilized by each of the one or more second network devices, using a layer network domain.

8. The method of claim 5, wherein the MEF service request comprises vectors of at least two user network interfaces ("UNIs") and at least one Ethernet virtual circuit ("EVC").

9. The method of claim 8, wherein generating, with the first network device, the flow domain information comprises: determining, with the first network device, a network path connecting each of the at least two UNIs with the at least one EVC; and generating, with the first network device, the flow domain information, wherein the flow domain information indicates the determined network path connecting each of the at least two UNIs with the at least one EVC and indicates connectivity associations for each of the at least two UNIs with the at least one EVC.

10. The method of claim 8, wherein generating, with the first network device, the flow domain information, using flow domain analysis, comprises: performing, with the first network device, an edge flow domain analysis; determining, with the first network device, whether at least one of the one or more second network devices is included in a flow domain comprising an intra-provider edge router system for multi-dwelling units ("intra-MTU-s"), based on the edge flow domain analysis; based on a determination that at least one of the one or more second network devices is included in a flow domain comprising an intra-MTU-s, determining, with the first network device, one or more edge flow domains; based on a determination that at least one of the one or more second network devices does not include a flow domain comprising an intra-MTU-s, performing, with the first network device, a ring flow domain analysis; determining, with the first network device, whether at least one of the one or more second network devices is included in one or more G.8032 ring flow domains, based on the ring flow domain analysis; based on a determination that at least one of the one or more second network devices is included in one or more G.8032 ring flow domains, determining, with the first network device, the one or more G.8032 flow domains; based on a determination that at least one of the one or more second network devices is not included in one or more G.8032 ring flow domains, performing, with the first network device, an aggregate flow domain analysis; determining, with the first network device, whether at least one of the one or more second network devices is included in one or more aggregate flow domains, based on the aggregate flow domain analysis; based on a determination that at least one of the one or more second network devices is included in one or more aggregate flow domains, determining, with the first network device, the one or more aggregate flow domains; based on a determination that at least one of the one or more second network devices is not included in one or more aggregate flow domains, performing, with the first network device, a hierarchical virtual private local area network service ("H-VPLS") flow domain analysis; determining, with the first network device, whether at least one of the one or more second network devices is included in one or more H-VPLS flow domains, based on the H-VPLS flow domain analysis; and based on a determination that at least one of the one or more second network devices is included in one or more H-VPLS flow domains, determining, with the first network device, the one or more H-VPLS flow domains.

11. The method of claim 10, wherein generating, with the first network device, the flow domain information, using flow domain analysis, further comprises: based on a determination that at least one of the one or more second network devices is not included in one or more H-VPLS flow domains, performing, with the first network device, a virtual private local area network service ("VPLS") flow domain analysis; determining, with the first network device, whether at least one of the one or more second network devices is included in one or more VPLS flow domains, based on the VPLS flow domain analysis; based on a determination that at least one of the one or more second network devices is included in one or more VPLS flow domains, determining, with the first network device, the one or more VPLS flow domains; based on a determination that at least one of the one or more second network devices is not included in one or more VPLS flow domains, performing, with the first network device, a flow domain analysis for a provider edge router having routing and switching functionality ("PE_rs flow domain analysis"); determining, with the first network device, whether at least one of the one or more second network devices is included in a flow domain comprising one or more PE_rs, based on the PE_rs flow domain analysis; based on a determination that at least one of the one or more second network devices is included in a flow domain comprising one or more PE_rs, determining, with the first network device, one or more PE_rs flow domains; based on a determination that at least one of the one or more second network devices is not included in a flow domain comprising one or more PE_rs, performing, with the first network device, an interconnect flow domain analysis; determining, with the first network device, whether at least one of the one or more second network devices is included in a flow domain comprising an external network-to-network interface ("E-NNI"), based on the interconnect flow domain analysis; and based on a determination that at least one of the one or more second network devices is included in a flow domain comprising an E-NNI, determining, with the first network device, one or more service provider or operator flow domains.

12. The method of claim 11, wherein generating, with the first network device, the flow domain information, using flow domain analysis, further comprises: stitching, with the first network device, at least one of the determined one or more edge flow domains, the determined one or more G.8032 flow domains, the determined one or more aggregate flow domains, the determined one or more H-VPLS flow domains, the determined one or more VPLS flow domains, the determined one or more PE_rs flow domains, or the determined one or more service provider or operator flow domains to generate the flow domain information indicating a flow domain network.

13. The method of claim 1, wherein the service arising from the service request comprises at least one of service activation, service modification, service assurance, fault isolation, or performance monitoring.

14. The method of claim 1, wherein the first network device comprises one of a network management layer-configuration management ("NML-CM") controller or a layer 3/layer 2 flow domain ("L3/L2 FD") controller.

15. The method of claim 1, wherein the third network device comprises one of a layer 3/layer 2 element management layer-configuration management ("L3/L2 EML-CM") controller, a NML-CM activation engine, a NML-CM modification engine, a service assurance engine, a fault isolation engine, or a performance monitoring engine.

16. The method of claim 1, wherein the first network device and the third network device are the same network device.

17. The method of claim 1, wherein each of the one or more second network devices comprise one of a user-side provider edge ("U-PE") router, a network-side provider edge ("N-PE") router, a provider ("P") router, or an internal network-to-network interface ("I-NNI") device.

18. The method of claim 1, wherein the network technology comprises one or more of G.8032 Ethernet ring protection switching ("G.8032 ERPS") technology, aggregation technology, hierarchical virtual private local area network service ("H-VPLS") technology, packet on a blade ("POB") technology, provider edge having routing and switching functionality ("PE_rs")-served user network interface ("UNI") technology, multi-operator technology, or virtual private local area network service ("VPLS") technology.

19. A network device, comprising: at least one processor; and a non-transitory computer readable medium communicatively coupled to the at least one processor, the computer readable medium having stored thereon computer software comprising a set of instructions that, when executed by the at least one processor, causes the network device to perform one or more functions, the set of instructions comprising: instructions for receiving a service request, the service request originating from a first client device over the network; instructions for determining one or more second network devices for implementing a service arising from the service request; instructions for determining, with the first network device, network technology utilized by each of the one or more second network devices; and instructions for generating, with the first network device, flow domain information, using flow domain analysis, based at least in part on the determined one or more second network devices and based at least in part on the determined network technology utilized by each of the one or more second network devices, wherein the flow domain information is used to automatically configure the at least one of the one or more second network devices to enable performance of the service arising from the service request.

20. A system, comprising: a first network device; one or more second network devices; and a third network device; wherein the first network device comprises: at least one first processor; and a first non-transitory computer readable medium communicatively coupled to the at least one first processor, the first non-transitory computer readable medium having stored thereon computer software comprising a first set of instructions that, when executed by the at least one first processor, causes the first network device to perform one or more functions, the first set of instructions comprising: instructions for receiving a service request, the service request originating from a first client device over the network; instructions for determining one or more second network devices for implementing a service arising from the service request; instructions for determining, with the first network device, network technology utilized by each of the one or more second network devices; and instructions for generating, with the first network device, flow domain information, using flow domain analysis, based at least in part on the determined one or more second network devices and based at least in part on the determined network technology utilized by each of the one or more second network devices; wherein the third network device comprises: at least one third processor; and a third non-transitory computer readable medium communicatively coupled to the at least one third processor, the third non-transitory computer readable medium having stored thereon computer software comprising a third set of instructions that, when executed by the at least one third processor, causes the third network device to perform one or more functions, the third set of instructions comprising: instructions for receiving the flow domain information from the first network device; and instructions for automatically configuring the at least one of the one or more second network devices to enable performance of the service arising from the service request, by sending configuration instructions to the at least one of the one or more second network devices; wherein each of the one or more second network devices comprises: at least one second processor; and a second non-transitory computer readable medium communicatively coupled to the at least one second processor, the second non-transitory computer readable medium having stored thereon computer software comprising a second set of instructions that, when executed by the at least one second processor, causes the second network device to perform one or more functions, the second set of instructions comprising: instructions for receiving the configuration instructions from the third network device; and instructions for changing network configuration settings, based on the configuration instructions.

Description

COPYRIGHT STATEMENT

A portion of the disclosure of this patent document contains material that is subject to copyright protection. The copyright owner has no objection to the facsimile reproduction by anyone of the patent document or the patent disclosure as it appears in the Patent and Trademark Office patent file or records, but otherwise reserves all copyright rights whatsoever.

FIELD

The present disclosure relates, in general, to network communications, and more particularly to methods, systems, and computer software for implementing communications network methodologies for determining a network connectivity path given a service request. In some cases, a resultant path might be used to automatically configure network devices, directly or indirectly, to activate a bearer plane for user traffic flow.

BACKGROUND

Existing network architectures in support of Metro Ethernet Forum ("MEF") services include multiple technologies and multiple vendors. This typically results in complicated logic for breaking down the service request and determining how to map the service attributes to the corresponding network device configurations.

For example, a MEF service request with two user network interfaces ("UNIs") and an Ethernet virtual connection or circuit ("EVC") is sent from the client to the support system(s) responsible for communicating with the network. The support system must determine the underlying technology (including, but not limited to, virtual private local area network ("LAN") service ("VPLS"), IEEE 802.1ad (otherwise referred to as "Q-in-Q," "QinQ," or "Q in Q")) and corresponding vendors. The determination of this connectivity can be persistently stored in part or whole. If the technology and/or vendors change, a significant development effort is typically required to maintain the automation from the service request through the underlying network activation and the corresponding bearer plane flow.

Hence, there is a need for more robust and scalable solutions for implementing communications network methodologies for determining a network connectivity path. In particular, there is a need for a system that overcomes the complexity of multi-technology and multi-vendor networks in support of service activation. Further, there is a need for a solution that can be used across multiple services, products, and underlying networks for automated service activation.

BRIEF SUMMARY

Various embodiments provide techniques for implementing network management layer configuration management. In particular, various embodiments provide techniques for implementing communications network methodologies for determining a network connectivity path given a service request. In some embodiments, a resultant path might be used to automatically configure network devices, directly or indirectly, to activate a bearer plane for user traffic flow.

In some embodiments, a system might determine one or more network devices in a network for implementing a service arising from a service request that originates from a client device over the network. The system might further determine network technology utilized by each of the one or more network devices, and might generate flow domain information (in some cases, in the form of a flow domain network ("FDN") object), using flow domain analysis, based at least in part on the determined network devices and/or the determined network technology. The system might automatically configure at least one of the network devices to enable performance of the service (which might include, without limitation, service activation, service modification, fault isolation, and/or performance monitoring), based at least in part on the generated flow domain information.

According to some embodiments, given a Metro Ethernet Forum ("MEF") service of two or more UNIs and an Ethernet virtual circuit or connection ("EVC") as input, a detailed network management layer-configuration management ("NML-CM") flow domain logic algorithm may be used to return or output a computed graph or path (in some cases, in the form of an FDN object). Using the algorithm and the methodology described above, the underlying set of network components and connectivity associations required to provide bearer plane service for a MEF-defined service may be determined. The returned or outputted graph (in some instances, the FDN object) can then be used to perform the necessary service activation, service modification, fault isolation, and/or performance monitoring, or the like, across multiple technologies and multiple vendors.

The tools provided by various embodiments include, without limitation, methods, systems, and/or software products. Merely by way of example, a method might comprise one or more procedures, any or all of which are executed by a computer system. Correspondingly, an embodiment might provide a computer system configured with instructions to perform one or more procedures in accordance with methods provided by various other embodiments. Similarly, a computer program might comprise a set of instructions that are executable by a computer system (and/or a processor therein) to perform such operations. In many cases, such software programs are encoded on physical, tangible, and/or non-transitory computer readable media (such as, to name but a few examples, optical media, magnetic media, and/or the like).

In an aspect, a method might comprise receiving, with a first network device in a network, a service request, the service request originating from a first client device over the network, determining, with the first network device, one or more second network devices for implementing a service arising from the service request, and determining, with the first network device, network technology utilized by each of the one or more second network devices. The method might further comprise generating, with the first network device, flow domain information, using flow domain analysis, based at least in part on the determined one or more second network devices and based at least in part on the determined network technology utilized by each of the one or more second network devices. The method might also comprise automatically configuring, with a third network device in the network, at least one of the one or more second network devices to enable performance of the service arising from the service request, based at least in part on the generated flow domain information.
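The method above can be pictured as a short pipeline split between the first network device (determination and flow domain generation) and the third network device (configuration). The sketch below is a minimal, hypothetical illustration: the `ServiceRequest` shape, the in-memory inventory tables, and all function names are assumptions not taken from the patent, and grouping devices by technology stands in for the far richer flow domain analysis described in the following paragraphs.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass
class ServiceRequest:
    """Hypothetical service request received from a client device."""
    client: str
    unis: list[str]   # UNI identifiers named in the request
    evc: str          # EVC identifier


# Hypothetical inventory data; a real system would query its network inventory.
UNI_TO_DEVICE = {"uni-a": "upe-1", "uni-z": "upe-2"}
DEVICE_TECH = {"upe-1": "G.8032", "upe-2": "VPLS"}


def determine_devices(req: ServiceRequest) -> list[str]:
    """First network device: find the second network devices serving the request."""
    return [UNI_TO_DEVICE[uni] for uni in req.unis]


def determine_technology(devices: list[str]) -> dict[str, str]:
    """First network device: look up the technology each second device uses."""
    return {device: DEVICE_TECH[device] for device in devices}


def generate_flow_domain_info(req: ServiceRequest, tech_by_device: dict[str, str]) -> dict:
    """First network device: group devices into flow domains (simplified: by technology)."""
    domains: dict[str, list[str]] = {}
    for device, tech in tech_by_device.items():
        domains.setdefault(tech, []).append(device)
    return {"evc": req.evc, "flow_domains": domains}


def configure(flow_domain_info: dict) -> list[tuple[str, dict]]:
    """Third network device: emit one configuration instruction per second device."""
    instructions = []
    for tech, devices in flow_domain_info["flow_domains"].items():
        for device in devices:
            instructions.append((device, {"evc": flow_domain_info["evc"], "technology": tech}))
    return instructions


req = ServiceRequest(client="client-1", unis=["uni-a", "uni-z"], evc="evc-100")
fdi = generate_flow_domain_info(req, determine_technology(determine_devices(req)))
print(configure(fdi))
```

Keeping generation and configuration in separate functions mirrors the claim language, in which the first and third network devices may be different devices or the same one.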

In some embodiments, generating, with the first network device, flow domain information, using flow domain analysis, based at least in part on the determined one or more second network devices and based at least in part on the determined network technology utilized by each of the one or more second network devices might comprise generating, with the first network device, a flow domain network ("FDN") object, using flow domain analysis, based at least in part on the determined one or more second network devices and based at least in part on the determined network technology utilized by each of the one or more second network devices. In some cases, generating, with the first network device, the FDN object, using flow domain analysis might comprise generating, with the first network device, a computed graph of flow domains indicating one or more network paths in the network through the one or more second network devices and indicating relevant connectivity associations of the one or more second network devices. In some instances, generating, with the first network device, the FDN object, using flow domain analysis might comprise utilizing an outside-in analysis that first analyzes relevant user network interfaces.
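A computed graph of flow domains can be illustrated by first finding a device-level path, starting from one UNI-facing device (an outside-in starting point), and then collapsing consecutive devices that share a flow domain into a single node. This is a sketch under stated assumptions: the `LINKS` topology, the `DOMAIN_OF` membership table, and the use of breadth-first search are hypothetical choices, not the patent's algorithm.

```python
from collections import deque

# Hypothetical topology: device adjacency and each device's flow domain membership.
LINKS = {
    "upe-1": ["agg-1"],
    "agg-1": ["upe-1", "pe-1"],
    "pe-1": ["agg-1", "pe-2"],
    "pe-2": ["pe-1", "upe-2"],
    "upe-2": ["pe-2"],
}
DOMAIN_OF = {
    "upe-1": "ring-A", "agg-1": "ring-A",
    "pe-1": "vpls-core", "pe-2": "vpls-core",
    "upe-2": "edge-Z",
}


def device_path(src, dst):
    """Breadth-first search starting at a UNI-facing device (outside-in)."""
    prev = {src: None}
    queue = deque([src])
    while queue:
        node = queue.popleft()
        if node == dst:
            break
        for nbr in LINKS.get(node, []):
            if nbr not in prev:
                prev[nbr] = node
                queue.append(nbr)
    if dst not in prev:
        return None  # no path between the two UNI-facing devices
    path, node = [], dst
    while node is not None:
        path.append(node)
        node = prev[node]
    return path[::-1]


def fdn_object(path):
    """Collapse a device path into an ordered graph of flow domains."""
    domains = []
    for device in path:
        if not domains or domains[-1] != DOMAIN_OF[device]:
            domains.append(DOMAIN_OF[device])
    # Connectivity associations: the device-level links that cross domain boundaries.
    associations = [
        (DOMAIN_OF[a], DOMAIN_OF[b], (a, b))
        for a, b in zip(path, path[1:])
        if DOMAIN_OF[a] != DOMAIN_OF[b]
    ]
    return {"path": path, "domains": domains, "associations": associations}


fdn = fdn_object(device_path("upe-1", "upe-2"))
print(fdn["domains"])  # ['ring-A', 'vpls-core', 'edge-Z']
```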

According to some embodiments, the service request might comprise a Metro Ethernet Forum ("MEF") service request. In some cases, determining, with the first network device, network technology utilized by each of the one or more second network devices might comprise mapping, with the first network device, MEF services associated with the service request with the network technology utilized by each of the one or more second network devices. In some embodiments, mapping, with the first network device, MEF services associated with the service request with the network technology utilized by each of the one or more second network devices might comprise implementing, with the first network device, an intermediate abstraction between the MEF services and the network technology utilized by each of the one or more second network devices, using a layer network domain.
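The intermediate abstraction can be sketched as two small mappings: MEF service types map onto an abstract connectivity type in a layer network domain, and per-technology adapters see only that abstract type. The MEF service types E-Line, E-LAN, and E-Tree and their point-to-point, multipoint-to-multipoint, and rooted-multipoint connectivity are standard MEF terms; the `ADAPTERS` registry, the adapter functions, and their output shape are hypothetical.

```python
from __future__ import annotations

from typing import Callable

# MEF service type -> abstract connectivity in a (hypothetical) layer network domain.
MEF_TO_LND = {
    "E-Line": "point-to-point",
    "E-LAN": "multipoint-to-multipoint",
    "E-Tree": "rooted-multipoint",
}

# Technology adapters understand only the abstract connectivity, never MEF terms.
ADAPTERS: dict[str, Callable[[str], dict]] = {}


def adapter(technology: str):
    """Register a hypothetical per-technology adapter under its technology name."""
    def register(fn: Callable[[str], dict]) -> Callable[[str], dict]:
        ADAPTERS[technology] = fn
        return fn
    return register


@adapter("VPLS")
def vpls_adapter(connectivity: str) -> dict:
    return {"technology": "VPLS", "connectivity": connectivity}


@adapter("Q-in-Q")
def qinq_adapter(connectivity: str) -> dict:
    return {"technology": "Q-in-Q", "connectivity": connectivity}


def map_service(mef_type: str, tech_by_device: dict[str, str]) -> dict[str, dict]:
    """Map a MEF service onto each device's technology through the abstraction."""
    connectivity = MEF_TO_LND[mef_type]
    return {device: ADAPTERS[tech](connectivity) for device, tech in tech_by_device.items()}


print(map_service("E-LAN", {"pe-1": "VPLS", "agg-1": "Q-in-Q"}))
```

With this split, supporting an additional technology means registering one more adapter while the MEF-facing side stays unchanged, which is the kind of decoupling the background section identifies as missing when technologies or vendors change.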

In some instances, the MEF service request might comprise vectors of at least two user network interfaces ("UNIs") and at least one Ethernet virtual circuit ("EVC"). According to some embodiments, generating, with the first network device, the flow domain information might comprise determining, with the first network device, a network path connecting each of the at least two UNIs with the at least one EVC and generating, with the first network device, the flow domain information, wherein the flow domain information indicates the determined network path connecting each of the at least two UNIs with the at least one EVC and indicates connectivity associations for each of the at least two UNIs with the at least one EVC.
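A MEF service request carrying vectors of UNIs and EVCs can be modeled with small data classes, and the flow domain information can record, per EVC, a path from one UNI to every other UNI plus each UNI-to-EVC association. This is a hypothetical sketch: the class names, the `device` field on each UNI, and the injected `path_between` callable (for example, a graph search over the provider topology) are assumptions, not structures defined in the patent.

```python
from __future__ import annotations

from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class Uni:
    uni_id: str
    device: str  # hypothetical: device on which the UNI terminates


@dataclass(frozen=True)
class Evc:
    evc_id: str


@dataclass
class MefServiceRequest:
    unis: list[Uni]
    evcs: list[Evc]

    def validate(self) -> None:
        if len(self.unis) < 2 or not self.evcs:
            raise ValueError("a MEF service request needs at least two UNIs and one EVC")


def flow_domain_info(request: MefServiceRequest,
                     path_between: Callable[[str, str], list[str]]) -> list[dict]:
    """For each EVC, connect every other UNI to the first UNI and record associations."""
    request.validate()
    anchor = request.unis[0]
    info = []
    for evc in request.evcs:
        paths = {uni.uni_id: path_between(anchor.device, uni.device)
                 for uni in request.unis[1:]}
        associations = [(uni.uni_id, evc.evc_id) for uni in request.unis]
        info.append({"evc": evc.evc_id, "paths": paths, "associations": associations})
    return info


request = MefServiceRequest(unis=[Uni("uni-a", "upe-1"), Uni("uni-z", "upe-2")],
                            evcs=[Evc("evc-100")])
# A stand-in path function; a real one would search the network topology.
info = flow_domain_info(request, lambda a, b: [a, "pe-1", b])
print(info)
```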

Merely by way of example, in some embodiments, generating, with the first network device, the flow domain information, using flow domain analysis, might comprise performing, with the first network device, an edge flow domain analysis, determining, with the first network device, whether at least one of the one or more second network devices is included in a flow domain comprising an intra-provider edge router system for multi-dwelling units ("intra-MTU-s"), based on the edge flow domain analysis, and based on a determination that at least one of the one or more second network devices is included in a flow domain comprising an intra-MTU-s, determining, with the first network device, one or more edge flow domains. Generating the flow domain information might comprise, based on a determination that at least one of the one or more second network devices is not included in a flow domain comprising an intra-MTU-s, performing, with the first network device, a ring flow domain analysis, determining, with the first network device, whether at least one of the one or more second network devices is included in one or more G.8032 ring flow domains, based on the ring flow domain analysis, and based on a determination that at least one of the one or more second network devices is included in one or more G.8032 ring flow domains, determining, with the first network device, the one or more G.8032 flow domains.

In some cases, generating the flow domain information might comprise, based on a determination that at least one of the one or more second network devices is not included in one or more G.8032 ring flow domains, performing, with the first network device, an aggregate flow domain analysis, determining, with the first network device, whether at least one of the one or more second network devices is included in one or more aggregate flow domains, based on the aggregate flow domain analysis, and based on a determination that at least one of the one or more second network devices is included in one or more aggregate flow domains, determining, with the first network device, the one or more aggregate flow domains.
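One way to read the first three stages is as a first-match cascade applied to each device: the edge analysis runs first, the G.8032 ring analysis runs only if the edge analysis finds nothing, and the aggregate analysis runs only if both earlier ones find nothing. The sketch below assumes that reading and assumes hypothetical inventory attributes (`role`, `intra_provider`, `g8032_ring`, `aggregation`); the patent does not say how membership in each flow domain is detected.

```python
# Hypothetical per-device attributes, as they might come back from inventory.
DEVICES = {
    "mtu-1": {"role": "MTU-s", "intra_provider": True},
    "ring-1": {"g8032_ring": "ring-7"},
    "agg-1": {"aggregation": True},
    "pe-1": {"role": "PE-rs"},
}


def edge_analysis(attrs):
    """Edge flow domain: the device is an intra-provider MTU-s."""
    if attrs.get("role") == "MTU-s" and attrs.get("intra_provider"):
        return "edge"
    return None


def ring_analysis(attrs):
    """G.8032 ring flow domain: the device is a node on a G.8032 ring."""
    ring = attrs.get("g8032_ring")
    return f"G.8032:{ring}" if ring else None


def aggregate_analysis(attrs):
    """Aggregate flow domain: the device performs aggregation."""
    return "aggregate" if attrs.get("aggregation") else None


# Order matters: an analysis runs only if every earlier one found nothing.
CASCADE = [edge_analysis, ring_analysis, aggregate_analysis]


def classify(attrs, cascade=CASCADE):
    for analysis in cascade:
        result = analysis(attrs)
        if result is not None:
            return result
    return None  # unresolved: hand off to the H-VPLS, VPLS, PE_rs and interconnect stages


print({name: classify(attrs) for name, attrs in DEVICES.items()})
# {'mtu-1': 'edge', 'ring-1': 'G.8032:ring-7', 'agg-1': 'aggregate', 'pe-1': None}
```

Holding the analyses in an ordered list lets later stages be appended without rewriting the earlier ones.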

According to some embodiments, generating the flow domain information might comprise, based on a determination that at least one of the one or more second network devices is not included in one or more aggregate flow domains, performing, with the first network device, a hierarchical virtual private local area network service ("H-VPLS") flow domain analysis, determining, with the first network device, whether at least one of the one or more second network devices is included in one or more H-VPLS flow domains, based on the H-VPLS flow domain analysis, and based on a determination that at least one of the one or more second network devices is included in one or more H-VPLS flow domains, determining, with the first network device, the one or more H-VPLS flow domains. In some cases, generating the flow domain information might comprise, based on a determination that at least one of the one or more second network devices is not included in one or more H-VPLS flow domains, performing, with the first network device, a virtual private local area network service ("VPLS") flow domain analysis, determining, with the first network device, whether at least one of the one or more second network devices is included in one or more VPLS flow domains, based on the VPLS flow domain analysis, and based on a determination that at least one of the one or more second network devices is included in one or more VPLS flow domains, determining, with the first network device, the one or more VPLS flow domains.
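The H-VPLS analysis runs before the VPLS analysis, and that ordering plausibly matters: in the hierarchical VPLS model of RFC 4762, a PE-rs keeps the full mesh of pseudowires used by flat VPLS and adds spoke pseudowires toward MTU-s devices, so a check for mesh pseudowires alone would also match an H-VPLS node. The predicate below is a hypothetical sketch that assumes inventory reports each pseudowire of the service instance as `"spoke"` or `"mesh"`; the patent does not specify how either flow domain is detected.

```python
def vpls_family_analysis(pseudowire_types):
    """Classify a node by the pseudowire types it carries for one service instance.

    pseudowire_types: iterable of "spoke" / "mesh" strings (hypothetical inventory field).
    """
    kinds = set(pseudowire_types)
    if "spoke" in kinds:
        return "H-VPLS"  # spoke pseudowires indicate the hierarchical model
    if "mesh" in kinds:
        return "VPLS"    # mesh pseudowires only: flat VPLS
    return None          # neither: continue to the PE_rs analysis


print(vpls_family_analysis(["mesh", "mesh", "spoke"]))  # H-VPLS
print(vpls_family_analysis(["mesh", "mesh"]))           # VPLS
print(vpls_family_analysis([]))                         # None
```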

In some embodiments, generating the flow domain information might comprise, based on a determination that at least one of the one or more second network devices is not included in one or more VPLS flow domains, performing, with the first network device, a flow domain analysis for a provider edge router having routing and switching functionality ("PE_rs flow domain analysis"), determining, with the first network device, whether at least one of the one or more second network devices is included in a flow domain comprising one or more PE_rs, based on the PE_rs flow domain analysis, and based on a determination that at least one of the one or more second network devices is included in a flow domain comprising one or more PE_rs, determining, with the first network device, one or more PE_rs flow domains. In some instances, generating the flow domain information might comprise, based on a determination that at least one of the one or more second network devices is not included in a flow domain comprising one or more PE_rs, performing, with the first network device, an interconnect flow domain analysis, determining, with the first network device, whether at least one of the one or more second network devices is included in a flow domain comprising an external network-to-network interface ("E-NNI"), based on the interconnect flow domain analysis, and based on a determination that at least one of the one or more second network devices is included in a flow domain comprising an E-NNI, determining, with the first network device, one or more service provider or operator flow domains.
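The fall-through order described in the preceding paragraphs (G.8032 ring, aggregate, H-VPLS, VPLS, PE_rs, then interconnect) can be sketched as a cascade in which only the devices not yet placed in a flow domain are passed on to the next technology-specific analysis. This is a minimal sketch under stated assumptions: each analysis is reduced to a hypothetical membership table that maps a device to a flow domain identifier, and the "E-NNI" entry stands in for the interconnect analysis that yields service provider or operator flow domains.

```python
from typing import Dict, List, Sequence, Tuple

# Order of the technology-specific analyses, following the text above.
# "E-NNI" stands in for the interconnect flow domain analysis.
ANALYSIS_ORDER = ["G.8032", "AGG", "H-VPLS", "VPLS", "PE_rs", "E-NNI"]


def cascade_flow_domain_analysis(
    devices: Sequence[str],
    membership: Dict[str, Dict[str, str]],
) -> Tuple[Dict[str, List[str]], List[str]]:
    """Run the analyses in order; devices not covered fall through to the next one.

    membership[technology][device] -> flow domain id (hypothetical input).
    Returns (flow domains found per technology, devices left unplaced).
    """
    remaining = list(devices)
    found: Dict[str, List[str]] = {}
    for tech in ANALYSIS_ORDER:
        if not remaining:
            break
        table = membership.get(tech, {})
        matched = [d for d in remaining if d in table]
        if matched:
            # Record each distinct flow domain of this technology, in first-seen order.
            domains: List[str] = []
            for d in matched:
                if table[d] not in domains:
                    domains.append(table[d])
            found[tech] = domains
        # Only devices not included in this technology's flow domains continue.
        remaining = [d for d in remaining if d not in table]
    return found, remaining
```

For example, a U-PE on a G.8032 ring and two devices in a VPLS instance would yield one ring flow domain and one VPLS flow domain, with no unplaced devices left over.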

According to some embodiments, generating, with the first network device, the flow domain information, using flow domain analysis, might further comprise stitching, with the first network device, at least one of the determined one or more edge flow domains, the determined one or more G.8032 flow domains, the determined one or more aggregate flow domains, the determined one or more H-VPLS flow domains, the determined one or more VPLS flow domains, the determined one or more PE_rs flow domains, or the determined one or more service provider or operator flow domains to generate the flow domain information indicating a flow domain network.
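The stitching step can be pictured as joining individually determined flow domains into one graph. The text does not say how two flow domains are joined, so this minimal sketch assumes that two flow domains are adjacent when they share at least one boundary device; the domain names and member sets are hypothetical.

```python
from itertools import combinations
from typing import Dict, Set


def stitch_flow_domains(domain_members: Dict[str, Set[str]]) -> Dict[str, Set[str]]:
    """Build an undirected flow domain network as an adjacency map.

    Assumption of this sketch: two flow domains are stitched together
    when they share at least one device (e.g. an N-PE at their boundary).
    """
    fdn: Dict[str, Set[str]] = {name: set() for name in domain_members}
    for a, b in combinations(domain_members, 2):
        if domain_members[a] & domain_members[b]:
            fdn[a].add(b)
            fdn[b].add(a)
    return fdn
```

Two edge flow domains that each share an N-PE with a VPLS flow domain would thus be connected through that VPLS flow domain, but not directly to each other.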

In some cases, the service arising from the service request might comprise at least one of service activation, service modification, service assurance, fault isolation, or performance monitoring. In some embodiments, the first network device might comprise one of a network management layer--configuration management ("NML-CM") controller or a layer 3/layer 2 flow domain ("L3/L2 FD") controller. In some instances, the third network device might comprise one of a layer 3/layer 2 element management layer--configuration management ("L3/L2 EML-CM") controller, a NML-CM activation engine, a NML-CM modification engine, a service assurance engine, a fault isolation engine, or a performance monitoring engine. According to some embodiments, the first network device and the third network device might be the same network device. In some cases, each of the one or more second network devices might comprise one of a user-side provider edge ("U-PE") router, a network-side provider edge ("N-PE") router, a provider ("P") router, and/or an internal network-to-network interface ("I-NNI") device. In some instances, the network technology might comprise one or more of G.8032 Ethernet ring protection switching ("G.8032 ERPS") technology, aggregation technology, hierarchical virtual private local area network service ("H-VPLS") technology, packet on a blade ("POB") technology, provider edge having routing and switching functionality ("PE_rs")-served user network interface ("UNI") technology, multi-operator technology, and/or virtual private local area network service ("VPLS") technology.

In another aspect, a network device might comprise at least one processor and a non-transitory computer readable medium communicatively coupled to the at least one processor. The computer readable medium might have stored thereon computer software comprising a set of instructions that, when executed by the at least one processor, causes the network device to perform one or more functions. The set of instructions might comprise instructions for receiving a service request, the service request originating from a first client device over the network, instructions for determining one or more second network devices for implementing a service arising from the service request, and instructions for determining, with the first network device, network technology utilized by each of the one or more second network devices. The set of instructions might further comprise instructions for generating, with the first network device, flow domain information, using flow domain analysis, based at least in part on the determined one or more second network devices and based at least in part on the determined network technology utilized by each of the one or more second network devices. The flow domain information might be used to automatically configure the at least one of the one or more second network devices to enable performance of the service arising from the service request.

In yet another aspect, a system might comprise a first network device, one or more second network devices, and a third network device. The first network device might comprise at least one first processor and a first non-transitory computer readable medium communicatively coupled to the at least one first processor. The first non-transitory computer readable medium might have stored thereon computer software comprising a first set of instructions that, when executed by the at least one first processor, causes the first network device to perform one or more functions. The first set of instructions might comprise instructions for receiving a service request, the service request originating from a first client device over the network, instructions for determining one or more second network devices for implementing a service arising from the service request, and instructions for determining, with the first network device, network technology utilized by each of the one or more second network devices. The first set of instructions might further comprise instructions for generating, with the first network device, flow domain information, using flow domain analysis, based at least in part on the determined one or more second network devices and based at least in part on the determined network technology utilized by each of the one or more second network devices.

The third network device might comprise at least one third processor and a third non-transitory computer readable medium communicatively coupled to the at least one third processor. The third non-transitory computer readable medium might have stored thereon computer software comprising a third set of instructions that, when executed by the at least one third processor, causes the third network device to perform one or more functions. The third set of instructions might comprise instructions for receiving the flow domain information from the first network device and instructions for automatically configuring the at least one of the one or more second network devices to enable performance of the service arising from the service request, by sending configuration instructions to the at least one of the one or more second network devices.

Each of the one or more second network devices might comprise at least one second processor and a second non-transitory computer readable medium communicatively coupled to the at least one second processor. The second non-transitory computer readable medium might have stored thereon computer software comprising a second set of instructions that, when executed by the at least one second processor, causes the second network device to perform one or more functions. The second set of instructions might comprise instructions for receiving the configuration instructions from the third network device and instructions for changing network configuration settings, based on the configuration instructions.
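The division of labor among the three kinds of devices (the first device generates flow domain information, the third device turns it into configuration instructions, and each second device changes its settings) might be sketched as follows. The grouping function is a stand-in for the full flow domain analysis, and the instruction format (one key per EVC and flow domain pair) is a hypothetical choice for illustration.

```python
from dataclasses import dataclass, field
from typing import Dict, List


@dataclass
class SecondDevice:
    """Network element (e.g. a U-PE or N-PE router) holding configuration settings."""
    name: str
    settings: Dict[str, str] = field(default_factory=dict)

    def receive(self, instructions: Dict[str, str]) -> None:
        # Change network configuration settings based on the received instructions.
        self.settings.update(instructions)


def generate_flow_domain_info(device_domains: Dict[str, str]) -> Dict[str, List[str]]:
    """First device: group the selected devices by flow domain
    (a stand-in for the full flow domain analysis)."""
    info: Dict[str, List[str]] = {}
    for device, domain in device_domains.items():
        info.setdefault(domain, []).append(device)
    return info


@dataclass
class ThirdDevice:
    """Configuration engine (e.g. an L3/L2 EML-CM controller or activation engine)."""
    devices: Dict[str, SecondDevice]

    def configure(self, flow_domain_info: Dict[str, List[str]], evc_id: str) -> None:
        # Hypothetical instruction format: one key per (EVC, flow domain) pair.
        for domain, members in flow_domain_info.items():
            for name in members:
                self.devices[name].receive({f"{evc_id}/{domain}": "enabled"})
```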

Various modifications and additions can be made to the embodiments discussed without departing from the scope of the invention. For example, while the embodiments described above refer to particular features, the scope of this invention also includes embodiments having different combinations of features and embodiments that do not include all of the above described features.

BRIEF DESCRIPTION OF THE DRAWINGS

A further understanding of the nature and advantages of particular embodiments may be realized by reference to the remaining portions of the specification and the drawings, in which like reference numerals are used to refer to similar components. In some instances, a sub-label is associated with a reference numeral to denote one of multiple similar components. When reference is made to a reference numeral without specification to an existing sub-label, it is intended to refer to all such multiple similar components.

FIG. 1 is a block diagram illustrating a system representing network management layer--configuration management ("NML-CM") network logic, in accordance with various embodiments.

FIG. 2A is a block diagram illustrating a system depicting an exemplary NML-CM flow domain, in accordance with various embodiments.

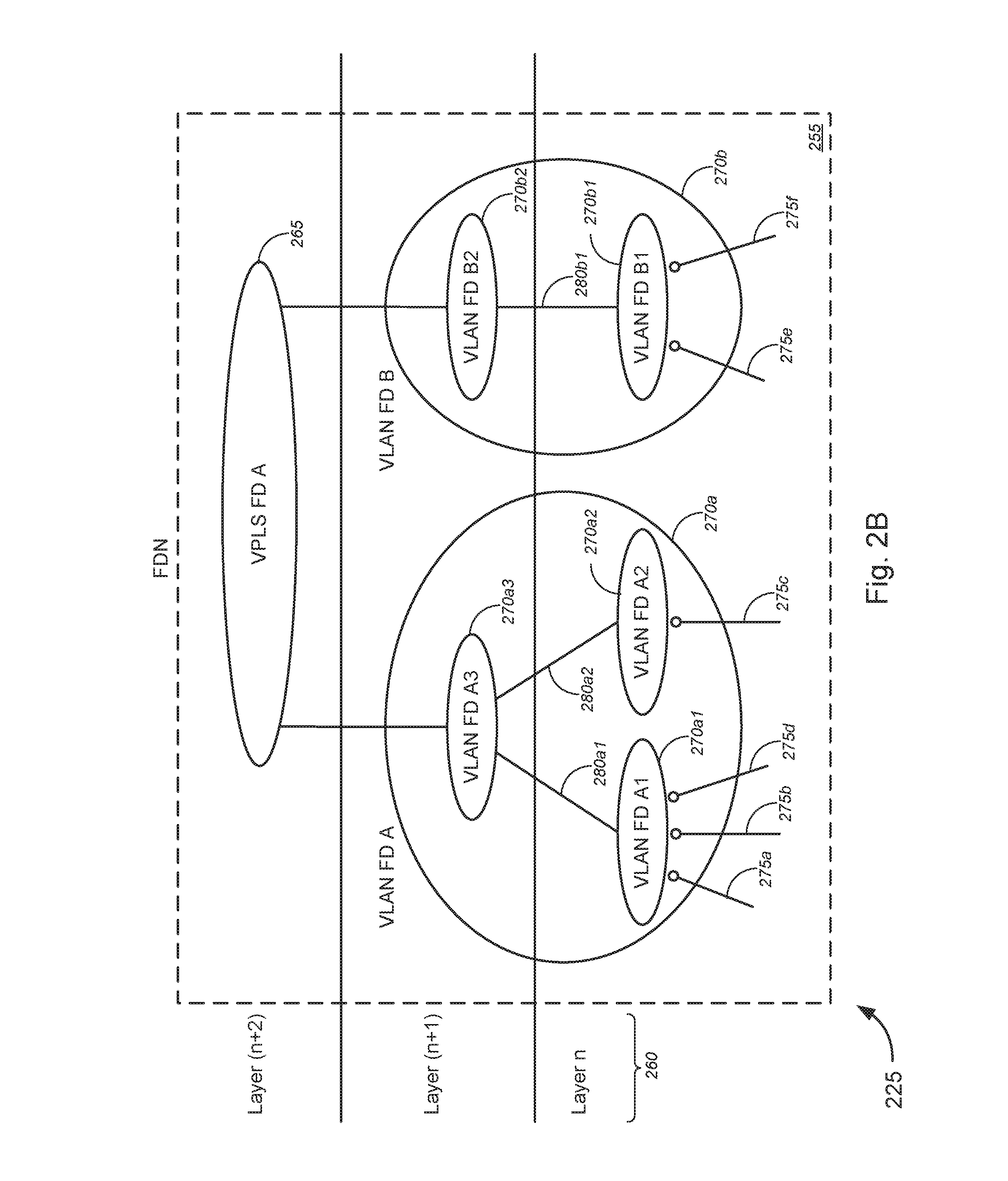

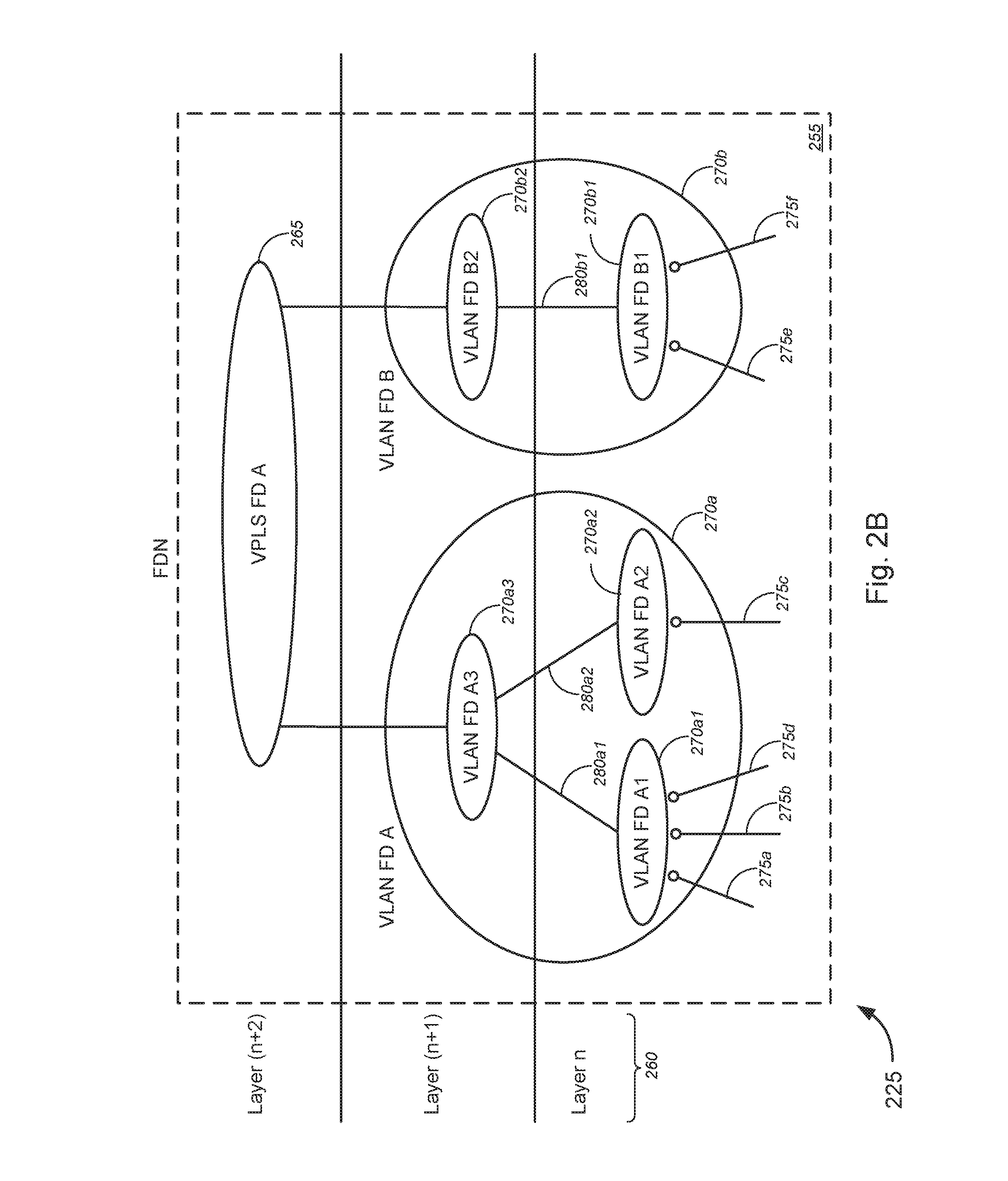

FIG. 2B is a block diagram illustrating an exemplary flow domain network ("FDN"), in accordance with various embodiments.

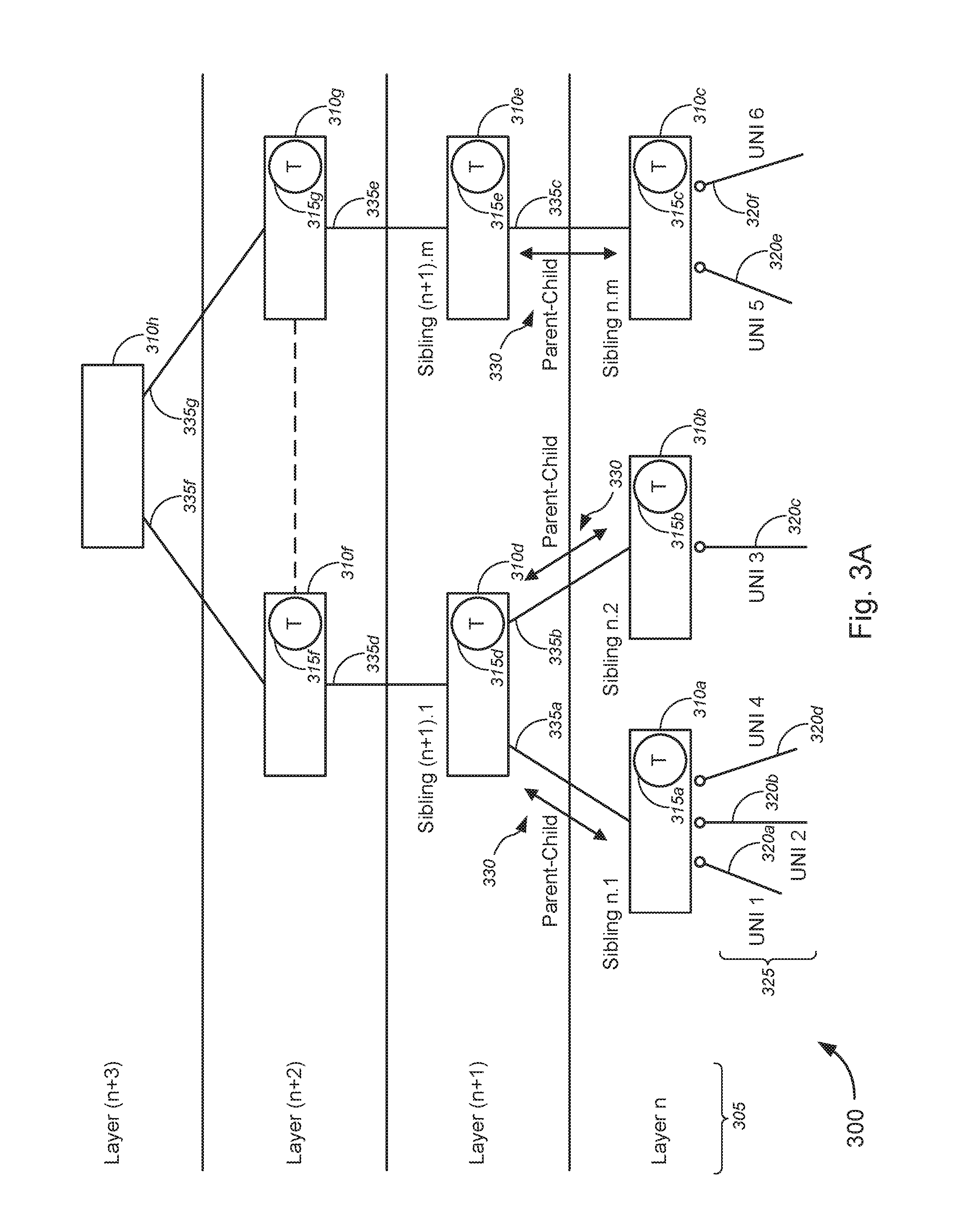

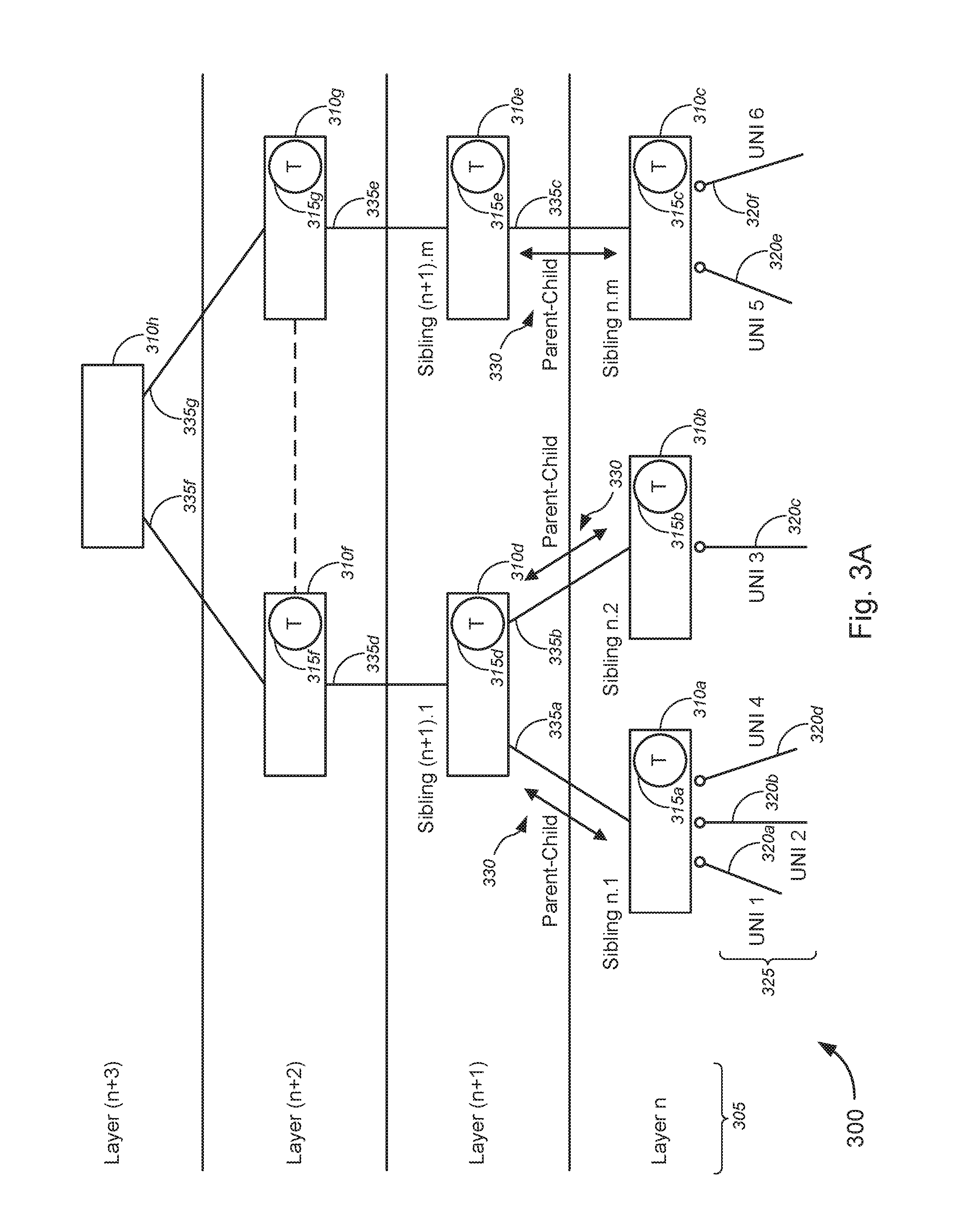

FIG. 3A is a block diagram illustrating exemplary FDN derivation, in accordance with various embodiments.

FIG. 3B is a block diagram illustrating exemplary layer n sibling check for FDN derivation, in accordance with various embodiments.

FIG. 3C is a block diagram illustrating exemplary FDN derivation utilizing a set of bins each for grouping user network interfaces ("UNIs") that share the same edge serving device, in accordance with various embodiments.

FIG. 4 is a flow diagram illustrating an exemplary method for generating a FDN object, in accordance with various embodiments.

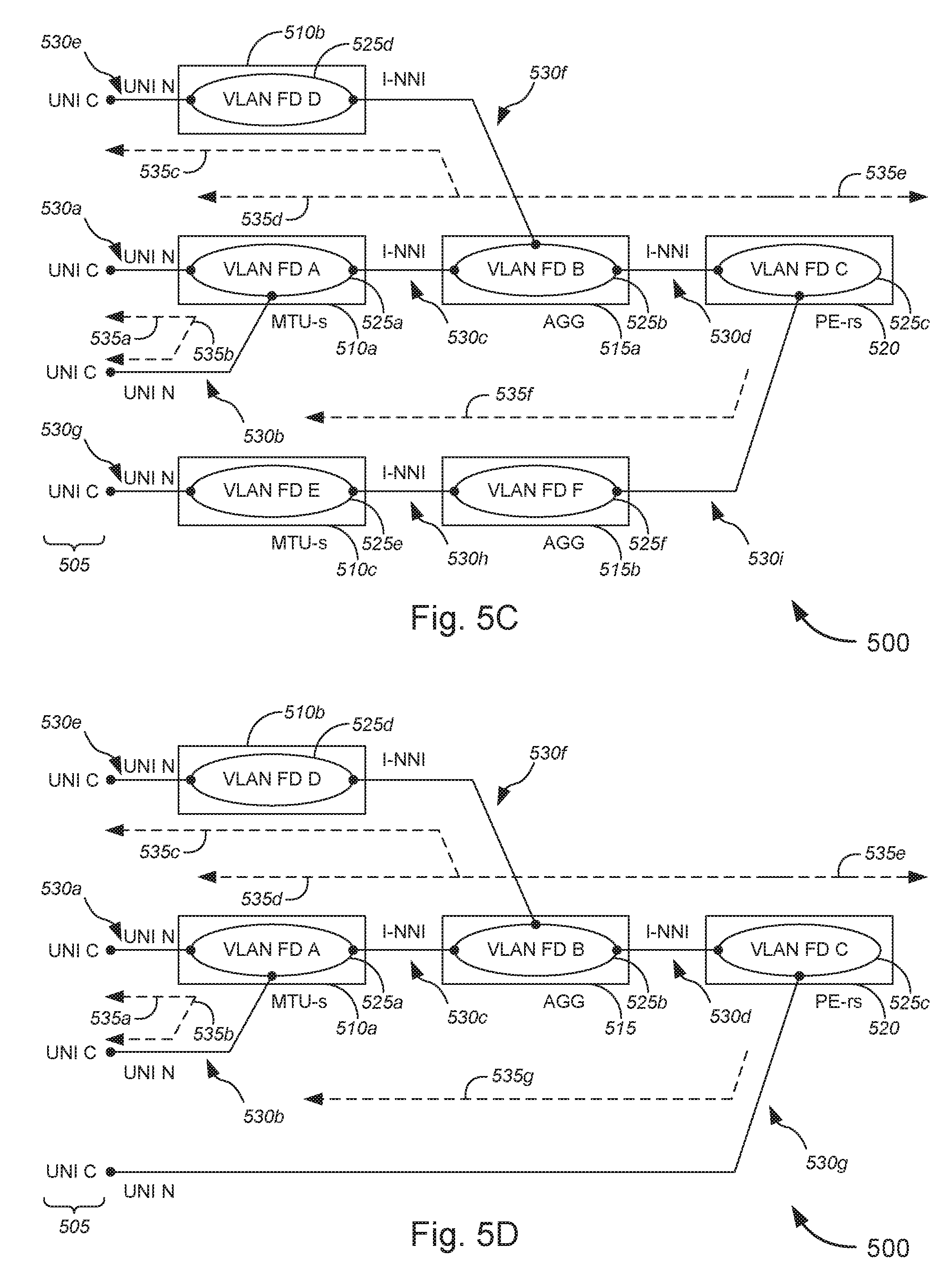

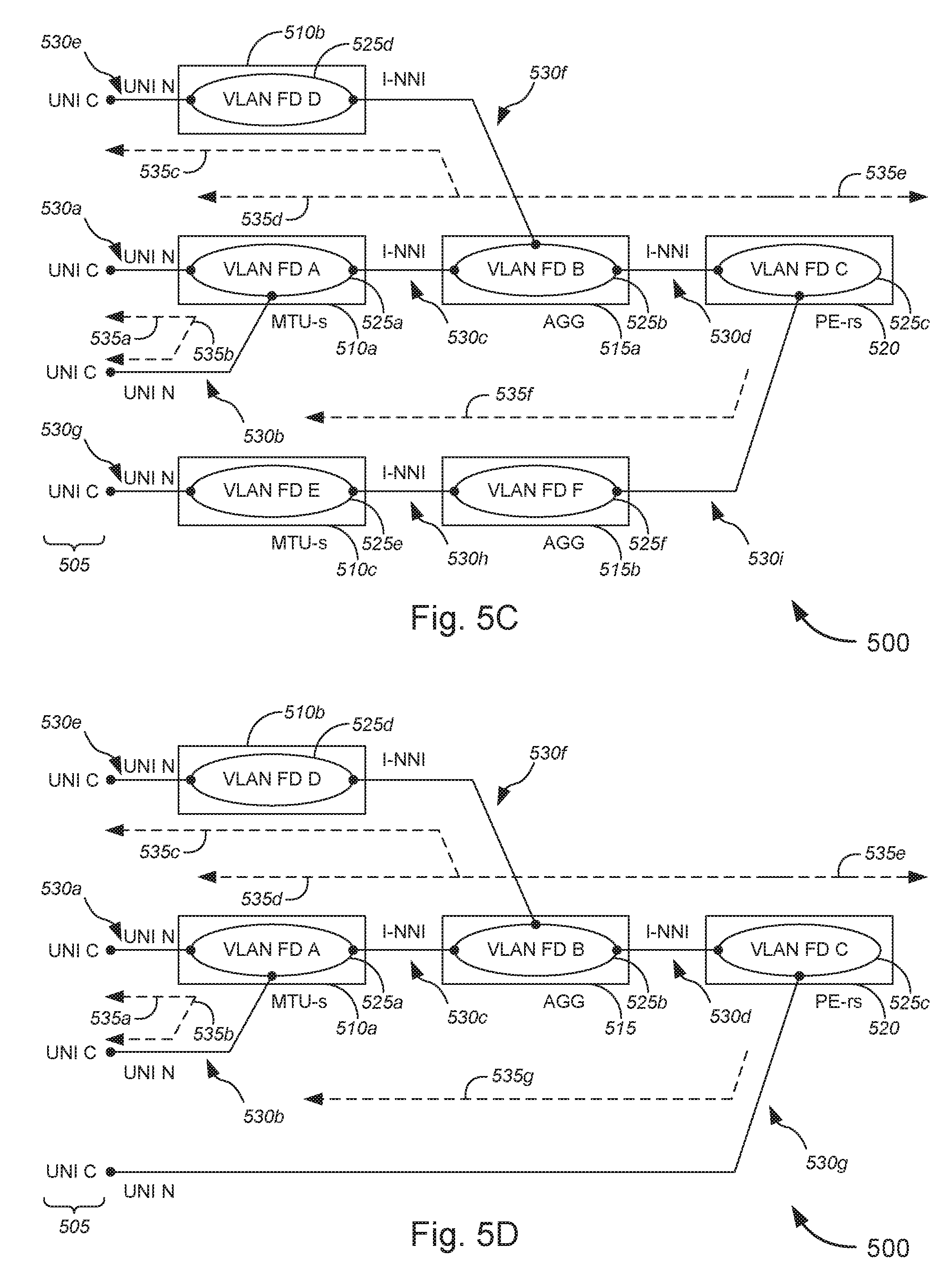

FIGS. 5A-5I are block diagrams illustrating various exemplary flow domains, in accordance with various embodiments.

FIG. 6 is a flow diagram illustrating a method for generating flow domain information and implementing automatic configuration of network devices based on the generated flow domain information, in accordance with various embodiments.

FIG. 7 is a block diagram illustrating an exemplary computer or system hardware architecture, in accordance with various embodiments.

DETAILED DESCRIPTION OF CERTAIN EMBODIMENTS

While various aspects and features of certain embodiments have been summarized above, the following detailed description illustrates a few exemplary embodiments in further detail to enable one of skill in the art to practice such embodiments. The described examples are provided for illustrative purposes and are not intended to limit the scope of the invention.

In the following description, for the purposes of explanation, numerous specific details are set forth in order to provide a thorough understanding of the described embodiments. It will be apparent to one skilled in the art, however, that other embodiments of the present invention may be practiced without some of these specific details. In other instances, certain structures and devices are shown in block diagram form. Several embodiments are described herein, and while various features are ascribed to different embodiments, it should be appreciated that the features described with respect to one embodiment may be incorporated with other embodiments as well. By the same token, however, no single feature or features of any described embodiment should be considered essential to every embodiment of the invention, as other embodiments of the invention may omit such features.

Unless otherwise indicated, all numbers used herein to express quantities, dimensions, and so forth used should be understood as being modified in all instances by the term "about." In this application, the use of the singular includes the plural unless specifically stated otherwise, and use of the terms "and" and "or" means "and/or" unless otherwise indicated. Moreover, the use of the term "including," as well as other forms, such as "includes" and "included," should be considered non-exclusive. Also, terms such as "element" or "component" encompass both elements and components comprising one unit and elements and components that comprise more than one unit, unless specifically stated otherwise.

Various embodiments provide techniques for implementing network management layer configuration management. In particular, various embodiments provide techniques for implementing communications network methodologies for determining a network connectivity path given a service request. In some embodiments, a resultant path might be used to automatically configure network devices, directly or indirectly, to activate a bearer plane for user traffic flow. Herein, "bearer plane" might refer to a user plane or data plane that might carry user or data traffic for a network, while "control plane" might refer to a plane that carries control information for signaling purposes, and "management plane" might refer to a plane that carries operations and administration traffic for network management. Herein also, data traffic or network traffic might have traffic patterns denoted as one of "north traffic," "east traffic," "south traffic," or "west traffic." North (or northbound) and south (or southbound) traffic might refer to data or network traffic between a client and a server, while east (or eastbound) and west (or westbound) traffic might refer to data or network traffic between two servers.

In some embodiments, a system might determine one or more network devices in a network for implementing a service arising from a service request that originates from a client device over the network. In some cases, the service might include, without limitation, service activation, service modification, fault isolation, and/or performance monitoring, or the like.

The system might further determine network technology utilized by each of the one or more network devices, and might generate flow domain information (in some cases, in the form of a flow domain network ("FDN") object), using flow domain analysis, based at least in part on the determined network devices and/or the determined network technology. According to some embodiments, the network technology might include, but is not limited to, one or more of G.8032 Ethernet ring protection switching ("G.8032 ERPS") technology, aggregation technology, hierarchical virtual private local area network service ("H-VPLS") technology, packet on a blade ("POB") technology, provider edge having routing and switching functionality ("PE_rs")-served user network interface ("UNI") technology, multi-operator technology, and/or virtual private local area network service ("VPLS") technology.

The system might automatically configure at least one of the network devices to enable performance of the service, based at least in part on the generated flow domain information.

In some embodiments, given as input a Metro Ethernet Forum ("MEF") service of two or more UNIs and an Ethernet virtual circuit or connection ("EVC"), a detailed NML-CM flow domain logic algorithm (or NML-CM flow domain algorithm) may be used to return or output a computed graph or path (in some cases, in the form of a FDN object). Using the algorithm and the methodology described above, the underlying set of network components and connectivity associations required to provide bearer plane service for a MEF-defined service may be determined. The returned or outputted graph (in some instances, the FDN object) can then be used to perform the necessary service activation, service modification, fault isolation, and/or performance monitoring, or the like, across multiple technologies and multiple vendors.

According to some embodiments, the NML-CM flow domain algorithm might leverage the concepts provided in the technical specifications for MEF 7.2: Carrier Ethernet Management Information Model (April 2013; hereinafter referred to simply as "MEF 7.2"), which is incorporated herein by reference in its entirety for all purposes. Similar to the recursive flow domain (sub-network) derivation described in MEF 7.2, the NML-CM flow domain algorithm recursively generates a graph (network) of flow domains. The value(s) returned from a successful invocation of the algorithm might be a FDN object.
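The recursive generation of a graph of flow domains can be illustrated with a short depth-first sketch. It assumes a hypothetical `attached` map from each flow domain to the flow domains it attaches to at the next layer; recursion starts from the edge flow domains serving the UNIs, and a visited set keeps shared inner domains from being expanded twice. This is an illustration of recursive derivation in general, not the patented algorithm itself.

```python
from typing import Dict, List, Set, Tuple


def derive_fdn(start: List[str], attached: Dict[str, List[str]]) -> Set[Tuple[str, str]]:
    """Recursively collect (lower, upper) flow domain edges, starting from
    the edge flow domains that serve the service's UNIs."""
    edges: Set[Tuple[str, str]] = set()
    visited: Set[str] = set()

    def visit(domain: str) -> None:
        if domain in visited:
            return
        visited.add(domain)
        for upper in attached.get(domain, []):
            edges.add((domain, upper))
            visit(upper)  # recurse into the next layer

    for domain in start:
        visit(domain)
    return edges
```

With a layout resembling FIG. 2B (A1 and A2 attaching to A3, B1 to B2, and A3 and B2 to a VPLS flow domain), starting from A1, A2, and B1 yields the five edges of that small network.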

The FDN object is intended to be used by clients, including, but not limited to, a NML-CM activation engine, or the like. In some cases, the service fulfillment process might use the FDN information during a new service activation process. The service fulfillment process might also use the FDN information during a service modification process. Other clients can use the FDN object for service assurance processes, including without limitation, fault isolation.

A layer network domain ("LND") with flow domains might provide an intermediate abstraction between the MEF service(s) and the underlying network technology. The software logic/algorithms might be developed to map MEF service(s) to underlying flow domain network. The flow domain network may then be mapped to the underlying network technology.

One benefit of this framework might be that future changes in the underlying technology do not require changes to MEF service-aware functionality. In a specific (non-limiting) example, a move from virtual local area network ("VLAN") or virtual private LAN service ("VPLS") flow domains to multiprotocol label switching--transport protocol ("MPLS-TP") flow domains does not require business management layer ("BML") and/or service management layer ("SML") support system changes.

The framework provides a technology and software layering and abstraction that reduces long-term development costs. A change in the vendor(s) supporting existing or new technology only requires modification of the NML-to-EML support systems.
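The layering argument can be made concrete with a registry keyed by technology and vendor: everything above the NML-to-EML boundary works with vendor-independent flow domain steps, and only the renderer lookup depends on the vendor. In this sketch the vendor names ("vendor-x", "vendor-y") and the configuration strings are invented for illustration and do not correspond to any real device CLI.

```python
from typing import Callable, Dict, Tuple

# Hypothetical renderer: turns a vendor-independent VLAN step into vendor text.
Renderer = Callable[[str, int], str]

RENDERERS: Dict[Tuple[str, str], Renderer] = {}


def register(technology: str, vendor: str) -> Callable[[Renderer], Renderer]:
    """Decorator that registers a renderer for one (technology, vendor) pair."""
    def wrap(fn: Renderer) -> Renderer:
        RENDERERS[(technology, vendor)] = fn
        return fn
    return wrap


@register("VLAN", "vendor-x")
def _vlan_vendor_x(port: str, vlan: int) -> str:
    return f"interface {port} vlan {vlan}"  # invented syntax


@register("VLAN", "vendor-y")
def _vlan_vendor_y(port: str, vlan: int) -> str:
    return f"set port {port} tag {vlan}"  # invented syntax


def render(technology: str, vendor: str, port: str, vlan: int) -> str:
    """NML-to-EML step: the only place where the vendor matters."""
    renderer = RENDERERS.get((technology, vendor))
    if renderer is None:
        raise LookupError(f"no renderer for {technology} on {vendor}")
    return renderer(port, vlan)
```

Swapping vendors then means registering a new renderer, while the flow domain network handed down from above stays unchanged.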

Key to the derivation of the graph (or FDN object) is the need to derive topology from potentially multiple sources. These sources might include, but are not limited to, the network devices, support systems (including, e.g., element management systems ("EMSs"), etc.), and databases. A major goal of the topology engine is to limit and throttle access to the network. The reason for limiting or throttling access is that each access request to a device has a corresponding cost, which might include, but is not limited to, increased consumption of device and network resources. Device and network resources might include, without limitation, CPU utilization, memory, bandwidth, and/or the like. Keeping the consumption of these resources at or below optimal levels is an important aspect of the aforementioned major goal.

The combination of the EMS and controlled devices can provide varying levels of network/device protection. For example, an EMS that asynchronously responds to events and incrementally queries the network/device is better than a solution that is constantly polling.
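One way to limit and throttle network access, as the preceding paragraphs call for, is to serve topology from a cache and cap the rate of live queries. The sketch below is an assumption rather than the described topology engine: it combines a time-to-live cache with a sliding-window query limit, `fetch` is a hypothetical callable standing in for a device or EMS query, and the default limits are arbitrary. An event-driven EMS, as noted above, would reduce live queries further by invalidating cache entries only when the network reports a change.

```python
import time
from typing import Callable, Dict, List, Tuple


class ThrottledTopologySource:
    """Cache device topology and cap how often the network itself is queried."""

    def __init__(self, fetch: Callable[[str], dict], ttl: float = 300.0,
                 max_queries: int = 10, window: float = 60.0,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self.fetch = fetch              # hypothetical device/EMS query
        self.ttl = ttl                  # seconds a cached result is reused
        self.max_queries = max_queries  # live queries allowed per window
        self.window = window            # sliding window length in seconds
        self.clock = clock
        self.cache: Dict[str, Tuple[float, dict]] = {}
        self.recent: List[float] = []   # timestamps of recent live queries

    def get(self, device: str) -> dict:
        now = self.clock()
        hit = self.cache.get(device)
        if hit is not None and now - hit[0] < self.ttl:
            return hit[1]  # served from cache: no device access
        # Forget live queries that have left the sliding window.
        self.recent = [t for t in self.recent if now - t < self.window]
        if len(self.recent) >= self.max_queries:
            if hit is not None:
                return hit[1]  # budget exhausted: fall back to stale data
            raise RuntimeError("query budget exhausted; retry later")
        self.recent.append(now)
        result = self.fetch(device)
        self.cache[device] = (now, result)
        return result
```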

We now turn to the embodiments as illustrated by the drawings. FIGS. 1-7 illustrate some of the features of the method, system, and apparatus for implementing network management layer configuration management, as referred to above. The methods, systems, and apparatuses illustrated by FIGS. 1-7 may refer to examples of different embodiments that include various components and steps, which can be considered alternatives or which can be used in conjunction with one another in the various embodiments. The description of the illustrated methods, systems, and apparatuses shown in FIGS. 1-7 is provided for purposes of illustration and should not be considered to limit the scope of the different embodiments.

With reference to the figures, FIG. 1 is a block diagram illustrating a system 100 representing network management layer--configuration management ("NML-CM") network logic, in accordance with various embodiments. In FIG. 1, system 100 might comprise a plurality of layers 105, including, but not limited to, a business management layer ("BML"), a service management layer ("SML"), a network management layer ("NML"), a flow domain layer ("FDL"), an element management layer ("EML"), an element layer ("EL"), and/or the like. System 100 might further comprise a plurality of user-side or customer-side interfaces or interface devices 110, including, without limitation, one or more graphical user interfaces ("GUIs") 110a, one or more web portals 110b, and/or one or more web services 110c.

In some embodiments, system 100 might further comprise a Metro Ethernet Forum ("MEF") business management layer--configuration management ("BML-CM") controller 115, which is located at the BML, and a MEF service management layer--configuration management ("SML-CM") controller 120, which is located at the SML. Also located at the SML might be a Metro Ethernet Network ("MEN") 125, the edges of which might be communicatively coupled to two or more user network interfaces ("UNIs") 130. In some cases, the two or more UNIs 130 might be linked by an Ethernet virtual connection or Ethernet virtual circuit ("EVC") 135. At the NML, system 100 might comprise a MEF network management layer--configuration management ("NML-CM") controller 140, while at the FDL, system 100 might comprise a plurality of virtual local area network ("VLAN") flow domains 145 and a plurality of flow domain controllers 150.

In some embodiments (such as shown in FIG. 1), the plurality of VLAN flow domains 145 might include, without limitation, a first VLAN flow domain A 145a, a second VLAN flow domain B 145b, and a third VLAN flow domain C 145c. A plurality of UNIs 130 might communicatively couple to edge VLAN flow domains, such as the first VLAN flow domain A 145a and the third VLAN flow domain C 145c (in the example of FIG. 1). The edge VLAN flow domains (i.e., the first VLAN flow domain A 145a and the third VLAN flow domain C 145c) might each communicatively couple with inner VLAN flow domains (i.e., the second VLAN flow domain B 145b) via one or more internal network-to-network interfaces ("I-NNI") 155. In some cases, each of the plurality of the flow domain controllers 150 might be part of the corresponding one of the plurality of VLAN flow domains 145. In some instances, each of the plurality of the flow domain controllers 150 might be separate from the corresponding one of the plurality of VLAN flow domains 145, although communicatively coupled therewith; in some embodiments, each separate flow domain controller 150 and each corresponding VLAN flow domain 145 might at least in part be co-located. In the example of FIG. 1, flow domain a controller 150a might be part of (or separate from, yet communicatively coupled to) the first VLAN flow domain A 145a, while flow domain b controller 150b might be part of (or separate from, yet communicatively coupled to) the second VLAN flow domain B 145b, and flow domain c controller 150c might be part of (or separate from, yet communicatively coupled to) the third VLAN flow domain C 145c. According to some embodiments, the plurality of flow domain controllers 150 might include layer 3/layer 2 ("L3/L2") flow domain controllers 150. As understood in the art, "layer 3" might refer to a network layer, while "layer 2" might refer to a data link layer.

At the EML, system 100 might further comprise a plurality of L3/L2 element management layer--configuration management ("EML-CM") controllers 160. As shown in the embodiment of FIG. 1, the plurality of L3/L2 EML-CM controllers 160 might comprise a first L3/L2 EML-CM a controller 160a, a second L3/L2 EML-CM b controller 160b, and a third L3/L2 EML-CM c controller 160c. Each of the plurality of L3/L2 EML-CM controllers 160 might communicatively couple with a corresponding one of the plurality of L3/L2 flow domain controllers 150. Each L3/L2 EML-CM controller 160 might control one or more routers at the EL. For example, as shown in FIG. 1, the first L3/L2 EML-CM a controller 160a might control a first user-side provider edge ("U-PE") router 165a, while the second L3/L2 EML-CM b controller 160b might control two network-side provider edge ("N-PE") routers 170a and 170b, and the third L3/L2 EML-CM c controller 160c might control a second U-PE 165b. I-NNIs 155 might communicatively couple U-PE routers 165 with N-PE routers 170, and communicatively couple N-PE routers 170 to other N-PE routers 170.

In operation, a service request might be received by a GUI 110a, a web portal 110b, or a web service 110c. The service request might request performance of a service including, but not limited to, service activation, service modification, service assurance, fault isolation, or performance monitoring, or the like. The MEF BML-CM controller 115 receives the service request and forwards it to the MEF SML-CM controller 120, which then sends the service request to the MEF NML-CM controller 140 (via MEN 125). The MEF NML-CM controller 140 receives the service request, which might include information regarding the UNIs 130 and the EVC(s) 135 (e.g., vectors of the UNIs 130 and the EVC(s) 135, or the like), and might utilize a flow domain algorithm to generate flow domain information. The flow domain information might be received and used by the L3/L2 flow domain controllers 150 to control the VLAN flow domains 145 and/or to send control information to the L3/L2 EML-CM controllers 160, which in turn control the U-PEs 165 and/or N-PEs 170 at the element layer. The functions of the NML and the FDL (as well as interactions between the NML/FDL and the EML or EL) are described with respect to FIGS. 2-6 below.
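The downward fan-out in FIG. 1, from flow domain controllers 150 to L3/L2 EML-CM controllers 160 to the routers at the element layer, can be sketched with two lookup tables taken from the figure's reference numerals. The table layout and the "service@flow-domain" work-item format are assumptions for illustration only.

```python
from typing import Dict, List

# FIG. 1 topology expressed as hypothetical lookup tables (reference numerals as ids).
FD_CONTROLLER_TO_EML = {"150a": "160a", "150b": "160b", "150c": "160c"}
EML_TO_ROUTERS = {"160a": ["165a"], "160b": ["170a", "170b"], "160c": ["165b"]}


def fan_out(flow_domain_info: Dict[str, str], service: str) -> Dict[str, List[str]]:
    """Push a service request from the NML down to the element layer.

    flow_domain_info maps each VLAN flow domain to its flow domain controller
    (e.g. {"145a": "150a"}); the result maps each router to its work items.
    """
    work: Dict[str, List[str]] = {}
    for flow_domain, fd_controller in flow_domain_info.items():
        eml_controller = FD_CONTROLLER_TO_EML[fd_controller]
        for router in EML_TO_ROUTERS[eml_controller]:
            work.setdefault(router, []).append(f"{service}@{flow_domain}")
    return work
```

For an activation spanning VLAN flow domains 145a-145c, each U-PE receives one work item for its edge flow domain and both N-PEs receive the item for inner flow domain 145b.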

FIG. 2A is a block diagram illustrating a system 200 depicting an exemplary NML-CM flow domain, in accordance with various embodiments. FIG. 2B is a block diagram illustrating an exemplary flow domain network ("FDN") 225, in accordance with various embodiments.

In FIG. 2A, system 200, which represents a NML-CM flow domain, comprises a NML-CM flow domain algorithm 205 that generates flow domain information that is used by an NML-CM activation engine 210 to configure network 215. The flow domain information might be generated from (or otherwise based on) a MEF service request 220, which might include information regarding two or more UNIs 230 and at least one EVC 235. In some cases, the information might comprise vectors or vector lists of each of the two or more UNIs 230 and the at least one EVC 235, and/or vector paths through some (if not all) of the two or more UNIs 230 and the at least one EVC 235, or the like.

In other words, service information (under the MEF standard) 240 is used by the algorithm 205 to generate network flow domain information 245, which is technology specific yet vendor independent (i.e., it doesn't matter what vendor is providing the network components or devices, rather it is the technology utilized in the network components or devices that is important, at this stage). The network flow domain information 245 is then used by the NML-CM activation engine 210 to configure the network devices and components 250, which may be technology specific and vendor dependent.

It should be appreciated that although a NML-CM activation engine 210 is used in this non-limiting example, any suitable client may be used (consistent with the teachings of the various embodiments) to utilize network flow domain information 245 to configure network devices and components 250 to perform a service arising from the MEF service request. In some embodiments, for example, the client (instead of the activation engine 210) might include one of a service modification engine, a service assurance engine, a fault isolation engine, a performance monitoring/optimization engine, and/or the like, to configure the network devices 250 to perform service modification, service assurance, fault isolation, performance monitoring/optimization, and/or the like, respectively.
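Because any suitable client may consume the same network flow domain information, the client side can be sketched as a small structural interface that each engine implements. The engine classes and their return strings below are hypothetical placeholders; the point is only that the caller does not need to know which kind of engine it is driving.

```python
from typing import Dict, List, Protocol

FlowDomainInfo = Dict[str, List[str]]  # flow domain -> member devices (assumed shape)


class FlowDomainClient(Protocol):
    """Anything that consumes flow domain information."""
    def handle(self, fdn: FlowDomainInfo) -> str: ...


class ActivationEngine:
    def handle(self, fdn: FlowDomainInfo) -> str:
        return f"activate {len(fdn)} flow domains"


class FaultIsolationEngine:
    def handle(self, fdn: FlowDomainInfo) -> str:
        # Hypothetical: list the flow domains to probe, in name order.
        return "check " + ", ".join(sorted(fdn))


def run(client: FlowDomainClient, fdn: FlowDomainInfo) -> str:
    """Hand the same flow domain information to whichever client is supplied."""
    return client.handle(fdn)
```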

In general, FIG. 2A illustrates a concept referred to as an "outside-in" approach or analysis, which takes the edge components of UNIs and initiates the process of determining how to connect the UNIs with the EVC in order to meet the service request. In some cases, the "outside-in" approach or analysis might include deriving a network computed graph, which, in some embodiments, might be embodied as a FDN object or flow domain information. FIG. 2B illustrates an example FDN, and a FDN object includes information describing or defining the FDN.

Turning to FIG. 2B, FDN 225 might comprise a plurality of layers 260 (denoted "Layer n," "Layer (n+1)," and "Layer (n+2)" in the non-limiting example of FIG. 2B). At Layer (n+2), FDN 225 might comprise a VPLS flow domain A 265. FDN 225 might further comprise two or more VLAN flow domains 270 that might span one or more layers 260. In the embodiment of FIG. 2B, FDN 225 includes a first VLAN flow domain A 270a and a second VLAN flow domain B 270b, each of which spans Layers n and (n+1). The first VLAN flow domain A 270a and the second VLAN flow domain B 270b might each comprise a plurality of VLAN flow domains. In the example of FIG. 2B, the first VLAN flow domain A 270a might comprise VLAN flow domain A1 270a1 and VLAN flow domain A2 270a2, both at Layer n, and might further comprise VLAN flow domain A3 270a3 at Layer (n+1). The second VLAN flow domain B 270b, as shown in FIG. 2B, might comprise VLAN flow domain B1 270b1 and VLAN flow domain B2 270b2, the former at Layer n and the latter at Layer (n+1). A plurality of connections 275 might communicatively couple a plurality of UNIs to the VLAN flow domains at Layer n. For example, as shown in FIG. 2B, each of connections 275a-275f might communicatively couple each of the UNIs (e.g., UNI 1 through UNI 6 325, as shown in FIG. 3) to VLAN flow domain A1 270a1, VLAN flow domain A2 270a2, or VLAN flow domain B1 270b1. In some embodiments, one or more of connections 275 might utilize flexible port partitioning ("FPP") or the like, which might enable virtual Ethernet controllers to control partitioning of bandwidth across multiple virtual functions and/or to provide balanced quality of service ("QoS") by giving each assigned virtual function equal (or distributed) access to network bandwidth.

FIG. 3A-3C (collectively, "FIG. 3") are generally directed to FDN derivation, in accordance with various embodiments. In particular, FIG. 3A is a block diagram illustrating exemplary FDN derivation, in accordance with various embodiments. FIG. 3B is a block diagram illustrating exemplary layer n sibling check for FDN derivation, in accordance with various embodiments. FIG. 3C is a block diagram illustrating exemplary FDN derivation utilizing a set of bins each for grouping user network interfaces ("UNIs") that share the same edge serving device, in accordance with various embodiments.

In a flow domain analysis, the end result, given a MEF service (including two or more UNIs and at least one EVC, or the like), might, in some exemplary embodiments, include a FDN object. The FDN object might be used by a client device for various purposes, including, without limitation, service activation, service modification, fault isolation, performance monitoring, and/or the like. The FDN object might provide the intermediate abstraction between the service and the underlying network technology.

The node type is a required attribute that is used to support flow domain analysis, and it may be assigned as an attribute associated with each node/device. Ideally, the assignment should be an inventoried attribute, but in some instances it can be derived. The node type might be examined during the flow domain analysis and used to construct the flow domain network.
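The preference for an inventoried node type, with derivation as a fallback, might look like the following. The derivation rules are hypothetical heuristics chosen only for illustration: a device with UNI-facing interfaces is treated as a U-PE, a device terminating VPLS as an N-PE, and any other device with known interfaces as a P router; a device with no usable data returns no type at all.

```python
from typing import Dict, Optional, Set


def node_type(device: str, inventory: Dict[str, str],
              interfaces: Dict[str, Set[str]]) -> Optional[str]:
    """Return the inventoried node type if present; otherwise derive one.

    The derivation rules are hypothetical heuristics for illustration only.
    """
    if device in inventory:
        return inventory[device]  # preferred: inventoried attribute
    kinds = interfaces.get(device)
    if not kinds:
        return None  # nothing to derive from; the node cannot be placed
    if "UNI" in kinds:
        return "U-PE"
    if "VPLS" in kinds:
        return "N-PE"
    return "P"
```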

The overall logic might include a series of technology-specific (yet vendor-independent) flow analyses. The process might begin with a Nodal (i.e., MTU-s, which might refer to a provider edge router (or system thereof) for multi-dwelling units) flow domain analysis, and might continue with analyses of various supporting technology flow domains, including, without limitation, G.8032 Ethernet ring protection switching ("G.8032 ERPS"), Aggregation ("Agg" or "AGG"), hierarchical virtual private local area network service ("H-VPLS"), packet on a blade ("POB"), PE-rs served UNIs (herein, "PE-rs" or "PE_rs" might refer to a provider edge router having routing and switching functionality), multi-operator (including, but not limited to, external network-to-network interface ("E-NNI"), border gateway protocol-logical unit ("BGP-LU"), and/or the like), and/or virtual private LAN service ("VPLS"), or the like.

Each technology flow domain analysis might begin by examining the set of UNIs that are part of the MEF service (e.g., in an Edge Flow Domain Analysis). A major function of the Edge Flow Domain Analysis is to take the MEF service request (including vector(s) of UNIs and EVCs) and determine the serving devices.
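The first job of the Edge Flow Domain Analysis, turning the UNI vector of an MEF service request into the set of serving devices, might look like the sketch below. `MefServiceRequest`, `edge_serving_devices`, and the `uni_to_device` dictionary (standing in for an inventory query) are hypothetical; the validity check mirrors the "two or more UNIs and at least one EVC" description of an MEF service, and the device names are invented for illustration.

```python
from dataclasses import dataclass
from typing import Dict, Set, Tuple


@dataclass(frozen=True)
class MefServiceRequest:
    """Hypothetical request shape: vectors of UNI and EVC identifiers."""
    unis: Tuple[str, ...]
    evcs: Tuple[str, ...]


def edge_serving_devices(
    request: MefServiceRequest, uni_to_device: Dict[str, str]
) -> Set[str]:
    """Return the edge devices that serve the request's UNIs."""
    if len(request.unis) < 2 or not request.evcs:
        raise ValueError("an MEF service needs two or more UNIs and at least one EVC")
    unknown = [uni for uni in request.unis if uni not in uni_to_device]
    if unknown:
        raise KeyError(f"no serving device inventoried for {unknown}")
    return {uni_to_device[uni] for uni in request.unis}


# Hypothetical inventory; device names are placeholders.
uni_to_device = {
    "UNI 1": "edge-A", "UNI 2": "edge-A", "UNI 4": "edge-A",
    "UNI 3": "edge-B",
    "UNI 5": "edge-C", "UNI 6": "edge-C",
}
request = MefServiceRequest(unis=("UNI 1", "UNI 2", "UNI 3"), evcs=("EVC 1",))
print(sorted(edge_serving_devices(request, uni_to_device)))  # ['edge-A', 'edge-B']
```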

The overall logic may be implemented as an outside-in analysis. Specifically, the UNI containment might be used to begin the analysis and to determine the outermost flow domains. Next, based on the devices serving the UNIs, the analysis might work from the outside inward, determining the attached flow domains. The flow domains shown in FIG. 3 include, but are not limited to, G.8032, H-VPLS, AGG, and/or the like.

With reference to FIG. 3A, system 300 might comprise a plurality of layers 305 (denoted "Layer n," "Layer (n+1)," "Layer (n+2)," and "Layer (n+3)"). At each layer, system 300 might include at least one network device 310. For example, as shown in the embodiment of FIG. 3A, at Layer n, system 300 might comprise M network devices (a first nth layer network device 310a, a second nth layer network device 310b, through an Mth nth layer network device 310c), each of which includes a G.8032 ring 315 or a PE_rs 315 (denoted 315a, 315b, and 315c, respectively). At Layer (n+1), system 300 might comprise a first (n+1)th layer network device 310d through an Mth (n+1)th layer network device 310e, each of which includes a G.8032 ring 315 or a PE_rs 315 (denoted 315d and 315e, respectively). At Layer (n+2), system 300 might comprise a first (n+2)th layer network device 310f through an Mth (n+2)th layer network device 310g, each of which includes a G.8032 ring 315 or a PE_rs 315 (denoted 315f and 315g, respectively). At Layer (n+3), system 300 might comprise an (n+3)th layer network device 310h, which may or may not include a G.8032 ring 315 or a PE_rs 315.

Network devices 310 within the same layer may be referred to as siblings of one another. For instance, in the example shown in FIG. 3A, the first nth layer network device 310a might be denoted "Sibling n.1," the second nth layer network device 310b might be denoted "Sibling n.2," and the Mth nth layer network device 310c might be denoted "Sibling n.m." At Layer (n+1), the first (n+1)th layer network device 310d might be denoted "Sibling (n+1).1," while the Mth (n+1)th layer network device 310e might be denoted "Sibling (n+1).m," and so on for the higher layers.

In some embodiments, each of the nth layer network devices 310a-310c might communicatively couple via connections 320 (including connections 320a-320f) to the plurality of UNIs 325 (including UNI 1 through UNI 6, as shown in FIG. 3A). The (n+1)th layer and nth layer network devices might have a parent-child relationship 330, and might communicatively couple to each other via connections 335 (including connections 335a, 335b, and 335c, as shown in FIG. 3A). As shown in the embodiment of FIG. 3A, Sibling n.1 might communicatively couple with Sibling (n+1).1 via connection 335a, Sibling n.2 might communicatively couple with Sibling (n+1).1 via connection 335b, and Sibling n.m might communicatively couple with Sibling (n+1).m via connection 335c. Each of the (n+1)th layer network devices might communicatively couple with the corresponding (n+2)th layer network device via connections 335d and 335e (i.e., network device 310d couples with network device 310f via connection 335d, and network device 310e couples with network device 310g via connection 335e, as shown in the example of FIG. 3A). Each of the (n+2)th layer network devices 310f-310g might communicatively couple to the (n+3)th layer network device 310h via connections 335f-335g. In some embodiments, the network devices at Layer (n+2) might communicate with each other (as denoted by the dashed line in FIG. 3A).
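The parent-child links of FIG. 3A are enough to drive an outside-in walk: start from the Layer n devices that serve the UNIs and repeatedly follow child-to-parent connections toward the core. In this sketch the topology is encoded as a hypothetical `PARENT` dictionary built from connections 335a-335g and keyed by reference numeral. The dashed peer link at Layer (n+2) is ignored, and the walk assumes the links form a tree (no cycles).

```python
from typing import Dict, List, Set

# Child -> parent links of FIG. 3A (connections 335a-335g), keyed by reference numeral.
PARENT: Dict[str, str] = {
    "310a": "310d", "310b": "310d",   # Siblings n.1 and n.2 share parent (n+1).1
    "310c": "310e",                   # Sibling n.m -> Sibling (n+1).m
    "310d": "310f", "310e": "310g",   # Layer (n+1) -> Layer (n+2)
    "310f": "310h", "310g": "310h",   # Layer (n+2) -> Layer (n+3)
}


def layers_outside_in(edge_devices: Set[str], parent: Dict[str, str]) -> List[Set[str]]:
    """Sibling sets for Layer n, n+1, ..., found by walking child -> parent links."""
    layers: List[Set[str]] = []
    current = set(edge_devices)
    while current:
        layers.append(current)
        # Devices without a parent (the innermost layer) end the walk.
        current = {parent[d] for d in current if d in parent}
    return layers


for offset, siblings in enumerate(layers_outside_in({"310a", "310b", "310c"}, PARENT)):
    print(f"Layer (n+{offset}):", sorted(siblings))
```

Because each layer is a set, two children that share a parent (310a and 310b) collapse into a single sibling at the next layer, which is what makes the sibling count shrink as the walk moves inward.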

In an FDN derivation, the process might begin with a sibling check at the edge (Layer n). In some embodiments, the sibling check might include determining whether the number of siblings is greater than 1. Based on a determination that the number of siblings is greater than 1, the process might proceed to Layer (n+1). The process might include performing a "T check," in which it is determined whether T is a G.8032 ring; if so, the ring members are added. The process might further include performing a flow domain change check, in which it is determined whether T is a PE_rs; if so, the VLAN flow domain is done. At Layer (n+1), another sibling check might be performed to determine whether the number of siblings is greater than 1; if so, the process might proceed to Layer (n+2), where the T check and the flow domain change check might again be performed. In general, the process proceeds to the next layer (n+x) until the core technology (in this case, VPLS, although the various embodiments are not so limited) is reached or until there are no more siblings at that layer.
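The layer-by-layer checks can be sketched as a loop. This is a hedged reading of the text, not the patented algorithm: it assumes T is the node type of each device at the layer just entered, that "ring members are added" means adding a G.8032 ring's member devices to the VLAN flow domain, and that the PE_rs at which the flow domain changes is kept as the domain's boundary member. `derive_vlan_domain`, the type strings, and the `ring_members` map are hypothetical.

```python
from typing import Dict, Set, Tuple


def derive_vlan_domain(
    edge_devices: Set[str],
    parent: Dict[str, str],
    node_type: Dict[str, str],
    ring_members: Dict[str, Set[str]],
    core_type: str = "VPLS",
) -> Tuple[Set[str], str]:
    """Walk outside-in from Layer n; return (VLAN flow domain members, stop reason)."""
    members = set(edge_devices)
    siblings = set(edge_devices)
    while True:
        # Sibling check: continue only while more than one sibling remains.
        if len(siblings) <= 1:
            return members, "no more siblings"
        # Move one layer inward (n -> n+1 -> n+2 ...).
        siblings = {parent[d] for d in siblings if d in parent}
        if not siblings:
            return members, "no next layer"
        layer_types = {node_type.get(d) for d in siblings}
        if core_type in layer_types:
            return members, "core reached"
        members |= siblings
        # T check: a G.8032 ring contributes all of its ring members.
        for device in siblings:
            if node_type.get(device) == "G.8032":
                members |= ring_members.get(device, set())
        # Flow domain change check: a PE_rs closes the VLAN flow domain.
        if "PE_rs" in layer_types:
            return members, "PE_rs reached"


# Hypothetical node types and ring membership on the FIG. 3A topology.
parent = {"310a": "310d", "310b": "310d", "310c": "310e",
          "310d": "310f", "310e": "310g", "310f": "310h", "310g": "310h"}
node_types = {"310d": "G.8032", "310e": "G.8032",
              "310f": "PE_rs", "310g": "PE_rs", "310h": "VPLS"}
rings = {"310d": {"ring-d-1", "ring-d-2"}}
members, reason = derive_vlan_domain({"310a", "310b", "310c"}, parent, node_types, rings)
print(reason)  # PE_rs reached
```

If the Layer (n+2) devices were AGG nodes instead of PE_rs nodes, the same loop would continue to Layer (n+3) and stop with "core reached" when it met the VPLS device.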

FIG. 3B illustrates the nth layer of the FDN of FIG. 3A. FIG. 3C illustrates the steps for determining edge connecting technology. In some embodiments, the process flow logic might first determine the set of intra-MTU-s flow domains, given a set of UNIs, using the Edge Flow Domain Analysis to determine the UNI supporting devices. Second, the results of this analysis might feed into the analysis of the neighboring flow domains. Technologies that support UNIs might include, without limitation, MTU-s, packet on a blade, PE-rs, H-VPLS, G.8032 remote terminal ("RT"), G.8032 central office terminal ("COT"), and/or the like.

The analysis described herein might examine the vector (set) of UNIs that, along with an EVC, are components of the MEF service. The analysis might be an outside-in approach, whereby the network connectivity required to support the service is determined by examining the edge of the network and determining the connecting components inward (e.g., toward a core of the network).

In some cases, the UNI list might be examined and a set of bins might be created with each bin holding a subset of UNIs that share the same edge serving device. Once the set of bins is established, the underlying technology may be determined in order to identify the VLAN flow domain type for the edge.

With reference to FIG. 3C, the two steps for FDN derivation might include determining shared edge devices (Step 1, 340a) and determining the edge connecting technology (including, but not limited to, MTU-s, packet on a blade, PE-rs, H-VPLS, G.8032 RT, G.8032 COT, and/or the like) (Step 2, 340b). At Step 1 (340a), UNIs 325 or 350 that share the same edge serving device may be grouped within the same bin of a plurality of bins 345 (including a first bin 345a, a second bin 345b, and a third bin 345c, as shown in the non-limiting example of FIG. 3C). For example, in the embodiment of FIG. 3C, UNIs 1, 2, and 4 might be grouped in the first bin 345a, while UNI 3 might be placed in the second bin 345b, and UNIs 5 and 6 might be grouped in the third bin 345c. At Step 2 (340b), the underlying technology for the grouped UNIs (in the appropriate bins) may be determined in order to identify the VLAN flow domain type for the edge. In some embodiments, because the UNIs are grouped by shared edge serving device, the underlying network technology may be determined once for each shared edge serving device (each represented by a bin of the plurality of bins) rather than once for each UNI. In such a case, the determination may be performed efficiently, with minimal duplicative determinations.
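Steps 1 and 2 of FIG. 3C amount to a group-by followed by one technology lookup per group. In the sketch below, `bin_unis_by_edge_device`, `edge_technology_per_bin`, and the `lookup_technology` callback (standing in for whatever inventory or discovery query identifies an edge device's technology) are hypothetical, as are the device names and technologies. The grouping of UNIs matches the FIG. 3C example, and the lookup counter shows that six UNIs in three bins need only three technology determinations.

```python
from collections import defaultdict
from typing import Callable, Dict, List


def bin_unis_by_edge_device(uni_to_device: Dict[str, str]) -> Dict[str, List[str]]:
    """Step 1: group UNIs that share the same edge serving device."""
    bins: Dict[str, List[str]] = defaultdict(list)
    for uni, device in uni_to_device.items():
        bins[device].append(uni)
    return dict(bins)


def edge_technology_per_bin(
    bins: Dict[str, List[str]], lookup_technology: Callable[[str], str]
) -> Dict[str, str]:
    """Step 2: determine the edge connecting technology once per bin, not per UNI."""
    return {device: lookup_technology(device) for device in bins}


# FIG. 3C grouping; device names and technologies are hypothetical.
uni_to_device = {
    "UNI 1": "edge-A", "UNI 2": "edge-A", "UNI 3": "edge-B",
    "UNI 4": "edge-A", "UNI 5": "edge-C", "UNI 6": "edge-C",
}
inventory = {"edge-A": "MTU-s", "edge-B": "G.8032 RT", "edge-C": "PE-rs"}
lookups: List[str] = []


def lookup_technology(device: str) -> str:
    lookups.append(device)  # record each (possibly expensive) query
    return inventory[device]


bins = bin_unis_by_edge_device(uni_to_device)
print(bins["edge-A"])                              # ['UNI 1', 'UNI 2', 'UNI 4']
print(edge_technology_per_bin(bins, lookup_technology))
print(len(lookups))                                # 3
```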

FIG. 4 is a flow diagram illustrating an exemplary method 400 for generating an FDN object, in accordance with various embodiments. In particular, method 400 represents an implementation of an FDN algorithm using the outside-in approach described with respect to FIGS. 2 and 3 above. While the techniques and procedures of the method 400 are depicted and/or described in a certain order for purposes of illustration, it should be appreciated that certain procedures may be reordered and/or omitted within the scope of various embodiments. Moreover, while the method illustrated by FIG. 4 can be implemented by (and, in some cases, is described below with respect to) system 100 of FIG. 1 (or components thereof), or any of systems 200, 300, and 500 of FIGS. 2, 3, and 5 (or components thereof), respectively, the method may also be implemented using any suitable hardware implementation. Similarly, while systems 200, 300, and 500 (and/or components thereof) can operate according to the method illustrated by FIG. 4 (e.g., by executing instructions embodied on a computer readable medium), systems 200, 300, and 500 can also operate according to other modes of operation and/or perform other suitable procedures.

With reference to FIG. 4, method 400 might comprise initiating MEF service provisioning (block 402). In some cases, initiating MEF service provisioning might comprise receiving a service request, which might originate from a first client device over a network (in some cases, via a UNI). At block 404, method 400 might comprise entering a vector of UNI(s). In some embodiments, entering a vector of UNI(s) might comprise determining one or more network devices for implementing a service arising from the service request. In some cases, the vector of UNI(s) might be included in, or derived from, the service request.

Method 400, at block 406, might comprise performing, with the first network device, an edge flow domain analysis and, at block 408, might comprise determining, with the first network device, based on the edge flow domain analysis, whether at least one of the one or more second network devices is included in a flow domain comprising an intra-provider edge router system for multi-dwelling units ("intra-MTU-s"). Method 400 might further comprise, based on a determination that at least one of the one or more second network devices is included in a flow domain comprising an intra-MTU-s, determining, with the first network device, one or more edge flow domains (block 410) and, based on a determination that at least one of the one or more second network devices is not included in a flow domain comprising an intra-MTU-s, performing, with the first network device, a ring flow domain analysis (block 412). At block 414, method 400 might comprise determining, with the first network device, based on the ring flow domain analysis, whether at least one of the one or more second network devices is included in one or more G.8032 ring flow domains. At block 416, method 400 might comprise, based on a determination that at least one of the one or more second network devices is included in one or more G.8032 ring flow domains, determining, with the first network device, the one or more G.8032 ring flow domains. At block 418, method 400 might comprise, based on a determination that at least one of the one or more second network devices is not included in one or more G.8032 ring flow domains, performing, with the first network device, an aggregate ("AGG") flow domain analysis. Method 400 might further comprise, at block 420, determining, with the first network device, based on the aggregate flow domain analysis, whether at least one of the one or more second network devices is included in one or more aggregate flow domains.

Method 400 might further comprise, based on a determination that at least one of the one or more second network devices is included in one or more aggregate flow domains, determining, with the first network device, the one or more aggregate flow domains (block 422) and, based on a determination that at least one of the one or more second network devices is not included in one or more aggregate flow domains, performing, with the first network device, a hierarchical virtual private local area network service ("H-VPLS") flow domain analysis (block 424).
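Blocks 406 through 424 form an ordered fallback chain: each analysis runs only when the previous one found no matching flow domain. A minimal sketch of that control flow follows. The chain ends at the H-VPLS analysis only because this excerpt of method 400 ends there; `run_flow_domain_cascade`, the membership-based stand-in analyses, and the device IDs are hypothetical placeholders for the real edge, ring, AGG, and H-VPLS analyses.

```python
from typing import Callable, List, Sequence, Set, Tuple

# An analysis takes the serving devices and returns the flow domains it found.
Analysis = Callable[[Sequence[str]], List[str]]


def run_flow_domain_cascade(
    devices: Sequence[str],
    analyses: Sequence[Tuple[str, Analysis]],
) -> Tuple[str, List[str]]:
    """Run each analysis in order, stopping at the first one that finds domains."""
    for name, analyze in analyses:
        domains = analyze(devices)
        if domains:
            return name, domains
    return "unresolved", []


def membership_analysis(label: str, members: Set[str]) -> Analysis:
    """Hypothetical stand-in: a device belongs to a domain if it is in `members`."""
    def analyze(devices: Sequence[str]) -> List[str]:
        return [f"{label} domain at {d}" for d in devices if d in members]
    return analyze


# Hypothetical inventory: only the listed devices belong to each domain type.
CASCADE: List[Tuple[str, Analysis]] = [
    ("intra-MTU-s edge", membership_analysis("edge", set())),  # blocks 406-410
    ("G.8032 ring", membership_analysis("G.8032", {"310d"})),  # blocks 412-416
    ("AGG", membership_analysis("AGG", {"310f"})),             # blocks 418-422
    ("H-VPLS", membership_analysis("H-VPLS", {"310g"})),       # block 424
]

print(run_flow_domain_cascade(["310d", "310e"], CASCADE))
```

Passing the analyses as an ordered list keeps the precedence of the technology checks explicit, so further analyses from the rest of method 400 could be appended without changing the driver.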