Systems and methods for detecting entity migration

Shaw, et al.

U.S. patent number 10,318,964 [Application Number 15/248,244] was granted by the patent office on 2019-06-11 for systems and methods for detecting entity migration. This patent grant is currently assigned to LexisNexis Risk Solutions FL Inc. The grantee listed for this patent is LexisNexis Risk Solutions FL Inc. Invention is credited to Eric Blood, Andrew John Bucholz, Johannes Philippus de Villiers Prichard, Jesse C P B Shaw.


| United States Patent | 10,318,964 |
|---|---|
| Shaw, et al. | June 11, 2019 |


Abstract

Systems and methods are disclosed herein for detecting entity migration. A method is provided for receiving container information for all known addresses in the United States. The container information can include address records, person or business entities associated with the address records, and temporal information associating the entities with the address records. The method includes determining, with one or more special-purpose computer processors in communication with a memory, migration data based on the container information. The method includes extracting metrics from the migration data. The metrics can include velocity of migration data; simultaneous movement of individuals within a predetermined time period; distance moved; and/or age of the person or business entities. The method can include determining, based at least in part on outliers associated with the metrics, one or more indicators of fraud; and outputting, for display, the one or more indicators of the fraud.

| Inventors: | Shaw; Jesse C P B (Saint Cloud, MN), Prichard; Johannes Philippus de Villiers (Boynton Beach, FL), Blood; Eric (Cumming, GA), Bucholz; Andrew John (Alexandria, VA) |
|---|---|
| Applicant: | |
| Assignee: | LexisNexis Risk Solutions FL Inc. (Boca Raton, FL) |
| Family ID: | 58096803 |
| Appl. No.: | 15/248,244 |
| Filed: | August 26, 2016 |

Prior Publication Data

| Document Identifier | Publication Date |
|---|---|
| US 20170061446 A1 | Mar 2, 2017 |

Related U.S. Patent Documents

| Application Number | Filing Date | Patent Number | Issue Date |
|---|---|---|---|
| 62210601 | Aug 27, 2015 | | |

| Current U.S. Class: | 1/1 |

| Current CPC Class: | G06F 16/2465 (20190101); G06Q 30/0185 (20130101); G06F 16/26 (20190101) |

| Current International Class: | G06Q 30/00 (20120101); G06F 16/26 (20190101); G06F 16/2458 (20190101); G06F 21/31 (20130101); H04W 12/06 (20090101); H04W 12/12 (20090101); G06Q 20/40 (20120101) |

References Cited

U.S. Patent Documents

| 8682755 | March 2014 | Bucholz |

| 2013/0333048 | December 2013 | Coggeshall |

Attorney, Agent or Firm: Troutman Sanders LLP Schutz; James E. Jones; Mark Lehi

Parent Case Text

CROSS REFERENCE TO RELATED APPLICATIONS

This application claims priority under 35 U.S.C. 119 to U.S. Provisional Patent Application No. 62/210,601, entitled "Systems and Methods for Detecting Entity Migration" filed 27 Aug. 2015, the contents of which are incorporated by reference in their entirety as if fully set forth herein.

Claims

What is claimed is:

1. A computer-implemented method, comprising: receiving, from two or more sources, container information for all known addresses in the United States, the container information comprising: address records; person or business entities associated with the address records; and temporal information associating the person or business entities with the address records; disambiguating the container information to verify or correct the container information obtained from the two or more sources; determining, with one or more special-purpose computer processors in communication with a memory, migration data based on the disambiguated container information; extracting metrics from the migration data, the metrics comprising one or more of: velocity of migration data; simultaneous movement of individuals within a predetermined time period; distance moved; and age of the person or business entities; determining, with the one or more special-purpose computer processors, and based at least in part on outliers associated with the metrics, one or more indicators of fraud, wherein determining the one or more indicators of fraud comprises determining a ratio of the number of persons or business entities associated with a single address per square footage of the address being greater than a threshold ratio; and outputting, for display, the one or more indicators of the fraud.

2. The method of claim 1, wherein the indicators of fraud are related to identity theft fraud.

3. The method of claim 1, wherein determining the one or more indicators of fraud comprises determining one or more of: a number of person or business entities associated with a single address being greater than a first threshold number; a number of person or business entities moving to a single address per time-period being greater than a second threshold number; and an association of person or business entities with an address having known previous criminal activity.

4. The method of claim 1, wherein extracting metrics from the migration data further comprises extracting activities of the person or business entities related to one or more of: governmental benefits, tax returns, Medicaid, and credit abuse.

5. The method of claim 1, wherein extracting metrics from the migration data further comprises extracting activities of the person or business entities related to one or more of: a distance between a current and previous address; and a length of time associated with a previous address.

6. The method of claim 1, wherein the outliers associated with the metrics correspond to data that differs from a statistical normal by greater than a standard deviation.

7. The method of claim 1, wherein determining the one or more indicators of fraud comprises determining one or more of: an association of person or business entities with vehicle hoarding; an association of person or business entities with nominee incorporation services, and an association of person or business entities with shelf companies.

8. The method of claim 1, further comprising scoring the metrics based on one or more of: data quality; anomalous migration; groups that evidence the greatest connectedness; events associated with an organization; and false positive association of person or business entities with fraudulent activity.

9. A system comprising: at least one memory for storing data and computer-executable instructions; and at least one special-purpose processor configured to access the at least one memory and further configured to execute the computer-executable instructions to: receive, from two or more sources, container information for all known addresses in the United States, the container information comprising: address records; person or business entities associated with the address records; and temporal information associating the person or business entities with the address records; disambiguate the container information to verify or correct the container information obtained from the two or more sources; determine migration data based on the disambiguated container information; extract metrics from the migration data, the metrics comprising one or more of: velocity of migration data; simultaneous movement of individuals within a predetermined time period; distance moved; and age of the person or business entities; determine, based at least in part on outliers associated with the metrics, one or more indicators of fraud, wherein determining the one or more indicators of fraud comprises determining a ratio of the number of persons or business entities associated with a single address per square footage of the address being greater than a threshold ratio; and output, for display, the one or more indicators of the fraud.

10. The system of claim 9, wherein the indicators of fraud are related to identity theft fraud.

11. The system of claim 9, wherein the one or more indicators of fraud comprises one or more of: a number of person or business entities associated with a single address being greater than a first threshold number; a number of person or business entities moving to a single address per time-period being greater than a second threshold number; and an association of person or business entities with an address having known previous criminal activity.

12. The system of claim 9, wherein the metrics further comprises activities of the person or business entities related to one or more of: governmental benefits, tax returns, Medicaid, and credit abuse.

13. The system of claim 9, wherein metrics from the migration data further comprises activities of the person or business entities related to one or more of: a distance between a current and previous address; and a length of time associated with a previous address.

14. The system of claim 9, wherein the outliers associated with the metrics correspond to data that differs from a statistical normal by greater than a standard deviation.

15. The system of claim 9, wherein the one or more indicators of fraud comprises one or more of: an association of person or business entities with vehicle hoarding; an association of person or business entities with nominee incorporation services, and an association of person or business entities with shelf companies.

16. The system of claim 9, wherein the at least one special-purpose processor is configured to execute the computer-executable instructions to score the metrics based on one or more of: data quality; anomalous migration; groups that evidence the greatest connectedness; events associated with an organization; and false positive association of person or business entities with fraudulent activity.

17. A non-transitory computer-readable media comprising computer-executable instructions that, when executed by one or more processors, configure the one or more processors to perform the method of: receiving, from two or more sources, container information for all known addresses in the United States, the container information comprising: address records; person or business entities associated with the address records; and temporal information associating the person or business entities with the address records; disambiguating the container information to verify or correct the container information obtained from the two or more sources; determining, with one or more special-purpose computer processors in communication with a memory, migration data based on the disambiguated container information; extracting metrics from the migration data, the metrics comprising one or more of: velocity of migration data; simultaneous movement of individuals within a predetermined time period; distance moved; and age of the person or business entities; determining, with the one or more special-purpose computer processors, and based at least in part on outliers associated with the metrics, one or more indicators of fraud, wherein determining the one or more indicators of fraud comprises determining a ratio of the number of persons or business entities associated with a single address per square footage of the address being greater than a threshold ratio; and outputting, for display, the one or more indicators of the fraud.

18. The computer-readable media of claim 17, wherein the indicators of fraud are related to identity theft fraud.

19. The computer-readable media of claim 17, wherein determining the one or more indicators of fraud comprises determining one or more of: a number of person or business entities associated with a single address being greater than a first threshold number; a number of person or business entities moving to a single address per time-period being greater than a second threshold number; and an association of person or business entities with an address having known previous criminal activity.

20. The computer-readable media of claim 17, wherein the computer-executable instructions configure the one or more processors to score the metrics based on one or more of: data quality; anomalous migration; groups that evidence the greatest connectedness; events associated with an organization; and false positive association of person or business entities with fraudulent activity.

Description

FIELD OF THE DISCLOSED TECHNOLOGY

The disclosed technology generally relates to detecting entity migration, and in particular, to systems and methods for detecting anomalous patterns relating individuals or businesses and their associated addresses over time.

BACKGROUND OF THE DISCLOSED TECHNOLOGY

Businesses and governmental agencies face a number of growing problems associated with fraudulent activities that have proven very difficult to detect and stop. Such activities can include identity-related fraud such as identity theft, account takeover, and/or synthetic identity creation. Fraudsters, for example, can apply for credit, payments, benefits, tax refunds, etc. by misrepresenting their identity as another adult, a child, or even a deceased person. The associated revenue loss to the businesses and/or government agencies can be significant, and the technical and emotional burden on the victim to rectify their public, private, and/or credit records can be onerous.

Identity theft, for example, can occur when an individual's identity is used by another person for personal gain. In certain cases, by the time such fraudulent activity is discovered, the damage has already been done and the perpetrator has moved on. Technically well-informed fraud perpetrators with sophisticated deception schemes are likely to continue developing, refining, and applying fraudulent schemes, particularly if fraud detection and prevention mechanisms are not in place.

BRIEF SUMMARY OF THE DISCLOSED TECHNOLOGY

Some or all of the above needs may be addressed by certain embodiments of the disclosed technology. Certain embodiments of the disclosed technology may include systems and methods for detecting anomalous activity related to entity migration and associated data.

According to an example embodiment of the disclosed technology, a method is provided for determining a likelihood of identity theft. The method can include receiving, from one or more sources, container information for all known addresses in the United States. The container information can include address records, person or business entities associated with the address records, and temporal information associating the entities with the address records. The method includes determining, with one or more special-purpose computer processors in communication with a memory, migration data based on the container information. The method further includes extracting metrics from the migration data. The metrics can include one or more of: velocity of migration data; simultaneous movement of individuals within a predetermined time period; distance moved; and age of the person or business entities. The method can include determining, with the one or more special-purpose computer processors, and based at least in part on outliers associated with the metrics, one or more indicators of fraud; and outputting, for display, the one or more indicators of the fraud. In certain example implementations, the fraud may relate to identity theft fraud.

According to an example embodiment of the disclosed technology, a system is provided. The system includes at least one memory for storing data and computer-executable instructions; and at least one special-purpose processor configured to access the at least one memory and further configured to execute the computer-executable instructions to: receive, from one or more sources, container information for all known addresses in the United States, the container information comprising: address records; person or business entities associated with the address records; and temporal information associating the person or business entities with the address records. The system is configured to determine migration data based on the container information; extract metrics from the migration data, the metrics comprising one or more of: velocity of migration data; simultaneous movement of individuals within a predetermined time period; distance moved; and age of the person or business entities. The system is further configured to determine, based at least in part on outliers associated with the metrics, one or more indicators of fraud; and output, for display, the one or more indicators of the fraud.

According to an example embodiment of the disclosed technology, a computer-readable media comprising computer-executable instructions is provided. When executed by one or more processors, the computer-executable instructions cause the one or more processors to perform the method of: receiving, from one or more sources, container information for all known addresses in the United States. The container information can include address records, person or business entities associated with the address records, and temporal information associating the entities with the address records. The method includes determining, with one or more special-purpose computer processors in communication with a memory, migration data based on the container information. The method further includes extracting metrics from the migration data. The metrics can include one or more of: velocity of migration data; simultaneous movement of individuals within a predetermined time period; distance moved; and age of the person or business entities. The method can include determining, with the one or more special-purpose computer processors, and based at least in part on outliers associated with the metrics, one or more indicators of fraud; and outputting, for display, the one or more indicators of the fraud. In certain example implementations, the fraud may relate to identity theft fraud.

Other embodiments, features, and aspects of the disclosed technology are described in detail herein and are considered a part of the claimed disclosed technologies. Other embodiments, features, and aspects can be understood with reference to the following detailed description, accompanying drawings, and claims.

BRIEF DESCRIPTION OF THE FIGURES

Reference will now be made to the accompanying figures and flow diagrams, which are not necessarily drawn to scale, and wherein:

FIG. 1 is a diagram 100 of an illustrative entity migration and crowding example, according to certain embodiments of the disclosed technology.

FIG. 2 is a diagram 200 depicting example metrics and scores associated with migrating entities, according to an example embodiment of the disclosed technology.

FIG. 3 is an example block diagram of a system 300 for processing and scoring migration data, according to an exemplary embodiment of the disclosed technology.

FIG. 4 is a block diagram 400 of an illustrative special-purpose computer system, according to an exemplary embodiment of the disclosed technology.

FIG. 5 is a block diagram 500 of an illustrative person entity-based search process, according to an exemplary embodiment of the disclosed technology.

FIG. 6 is a block diagram 600 of an illustrative person entity and date-based search process, according to an exemplary embodiment of the disclosed technology.

FIG. 7 is a block diagram 700 of an illustrative address entity and date-based search process, according to an exemplary embodiment of the disclosed technology.

FIG. 8 is a block diagram 800 of an illustrative process for linking information from various data sources, according to an exemplary embodiment of the disclosed technology.

FIG. 9 is a flow diagram of a method 900 according to an exemplary embodiment of the disclosed technology.

DETAILED DESCRIPTION

Embodiments of the disclosed technology will be described more fully hereinafter with reference to the accompanying drawings, in which embodiments of the disclosed technology are shown. This disclosed technology may, however, be embodied in many different forms and should not be construed as limited to the embodiments set forth herein; rather, these embodiments are provided so that this disclosure will be thorough and complete, and will fully convey the scope of the disclosed technology to those skilled in the art.

According to certain example implementations of the disclosed technology, certain anomalous or suspicious activity may be detected by monitoring, tracking, processing, and/or analysis of certain migration and/or crowding data. For example, migration data may represent individuals and their associated addresses over time. In certain example implementations, the term "crowding" as used herein may be used to indicate too many people living at a single address. In certain example implementations, the term "crowding" as used herein may be used to indicate a physically impossible number of people living at a single address. In certain example implementations, the term "crowding" as used herein may be used to indicate too low of a ratio for square footage per person at a given address. Example implementations of the disclosed technology can utilize special-purpose computing systems and custom query language(s) in the processes described herein to provide meaningful results, as may be necessitated due to the sheer amount of data that needs to be tracked and analyzed.
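As an illustration of the square-footage definition of crowding given above, the following minimal sketch computes the living area per associated person and compares it with a threshold. The function names and the default threshold of 100 square feet per person are hypothetical and chosen only for illustration; the disclosure does not specify a threshold value.

```python
def square_feet_per_person(square_footage: float, occupant_count: int) -> float:
    """Return the area available per associated person.

    An address with no associated persons cannot be crowded, so the
    ratio is treated as infinite in that case.
    """
    if occupant_count <= 0:
        return float("inf")
    return square_footage / occupant_count


def is_crowded(square_footage: float, occupant_count: int,
               min_sqft_per_person: float = 100.0) -> bool:
    """Flag an address whose area per person falls below a threshold.

    min_sqft_per_person is an illustrative assumption, not a value
    taken from the disclosure.
    """
    return square_feet_per_person(square_footage, occupant_count) < min_sqft_per_person
```

For example, 40 identities associated with a 1,200 square foot address yield 30 square feet per person, which this sketch would flag, whereas 4 identities at the same address would not be flagged.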

According to certain example implementations of the disclosed technology, crowding or the "crowding effect" may be utilized to identify and/or distinguish possible fraud-related activity from normal activity associated with an entity or identity. The crowding effect, for example, may occur when multiple identities move to (or appear at) a new location within the same time-period. In certain example implementations, the crowding effect may be used to distinguish between fraudulent and non-fraudulent activity. For example, a person may fake an identity for non-fraudulent activities, such as to get a job or to move into an apartment, and such identity faking may not elicit detection of a crowding effect because of its isolated nature and typical involvement of only one identity. However, a fraudster who creates (or steals) multiple identities to commit fraudulent activity en masse could use a same or similar address for the identities, and certain implementations of the disclosed technology may be utilized to detect such activity.

Certain example implementations of the disclosed technology may measure entity crowding over time. For example, an entity identifier (such as a universal entity identifier or similar record identifier) may be time-stamped to measure a number of months the entity remains at a geo-location or address. Certain example implementations of the disclosed technology may measure or track the entity/address association based on a given threshold of time.
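The time-stamped tenure measurement described above can be sketched as follows. This is a minimal illustration that assumes each entity/address association carries a first-seen and a last-seen date; the function names and the whole-calendar-month convention are assumptions rather than details from the disclosure.

```python
from datetime import date


def months_between(first_seen: date, last_seen: date) -> int:
    """Count whole calendar months between two dates (never negative)."""
    months = (last_seen.year - first_seen.year) * 12 + (last_seen.month - first_seen.month)
    # A partial final month does not count as a whole month.
    if last_seen.day < first_seen.day:
        months -= 1
    return max(months, 0)


def stayed_at_least(first_seen: date, last_seen: date, threshold_months: int) -> bool:
    """Check whether an entity/address association meets a time threshold."""
    return months_between(first_seen, last_seen) >= threshold_months
```

An association first seen on 15 Jan. 2015 and last seen on 14 Jul. 2015 would count as five whole months under this convention.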

Certain example implementations of the disclosed technology provide tangible improvements in computer processing speeds, memory utilization, and/or programming languages. Such improvements provide certain technical contributions that can enable the detection of anomalous activity associated with migration data. In certain example implementations, the improved computer systems disclosed herein may enable migration tracking and analysis of an entire population, such as all known persons in the United States, together with all associated addresses. The computation of such a massive amount of data, at the scale required to provide effective outlier detection and information, has been enabled by the improvements in computer processing speeds, memory utilization, and/or programming language as disclosed herein. Those with ordinary skill in the art may recognize that traditional methods such as human activity, pen-and-paper analysis, or even traditional computation using general-purpose computers and/or off-the-shelf software, are not sufficient to provide the level of data processing for effective anomaly detection at the scale envisioned herein. As disclosed herein, the special-purpose computers and special-purpose programming language can provide improved computer speed and/or memory utilization that provide an improvement in computing technology, thereby enabling the disclosed inventions.

Certain example implementations of the disclosed technology may be enabled by the use of a new programming language known as KEL (Knowledge Engineering Language), which was developed by the Applicant. Certain embodiments of the KEL programming language may be configured to operate on the specialized HPCC Systems, as developed and offered by LexisNexis Risk Solutions, Inc., the Assignee and Applicant of the disclosed technology. HPCC Systems, for example, provide data-intensive supercomputing platform(s) designed for solving big data problems. As an alternative to Hadoop, the HPCC Platform offers a consistent, single architecture for efficient processing. The KEL programming language, in conjunction with the HPCC Systems, provides technical improvements in computer processing that enable the disclosed technology and provides useful, tangible results that may have previously been unattainable. For example, certain example implementations of the disclosed technology may rely upon geo-distance calculations, which are computationally intensive, requiring special software and hardware. Example implementations of the disclosed technology can utilize special-purpose computing systems and custom query language(s) in the processes described herein to provide meaningful results, as may be necessitated due to the sheer amount of data that needs to be tracked and analyzed.

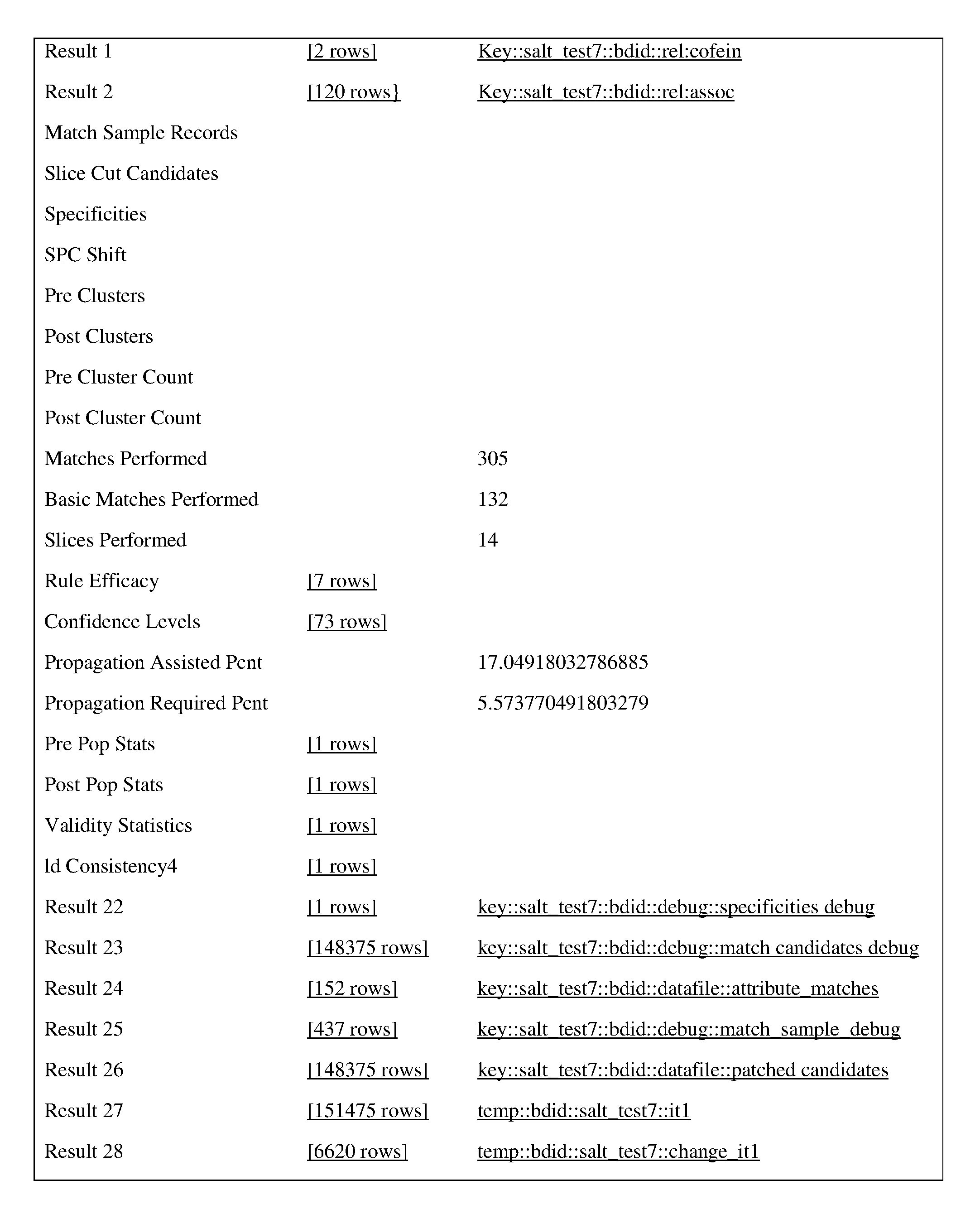

Certain example implementations of the disclosed technology may be enabled by the use of special-purpose HPCC Systems in combination with a special-purpose software linking technology called Scalable Automated Linking Technology (SALT). SALT and HPCC are developed and offered by LexisNexis Risk Solutions, Inc., the assignee of the disclosed technology. HPCC Systems, for example, provide data-intensive supercomputing platform(s) designed for solving big data problems. As an alternative to Hadoop, the HPCC Platform offers a consistent, single architecture for efficient processing. The SALT modules, in conjunction with the HPCC Systems, provide technical improvements in computer processing that enable the disclosed technology and provide useful, tangible results that may have previously been unattainable. For example, certain example implementations of the disclosed technology may process massive data sets, which are computationally intensive, requiring special software and hardware.

Certain example implementations of the disclosed technology may involve processing massive data sets and managing the required large amount of memory/disk space. One of the technical solutions provided by the technology disclosed herein concerns the enablement and efficiency improvement of computer systems and software to process relationship data, and to provide the desired data in a reasonable amount of time. Certain example implementations of the disclosed technology may be utilized to increase the efficiency of detection of migration indicators.

Determining relationships among records, for example, can follow the classical n-squared process for both time and disk space. According to an example implementation of the disclosed technology, SALT provides a process in which light-weight self-joins may be utilized, for example, in generating Enterprise Control Language (ECL) code. However, disk-space utilization might still be high. Certain example implementations of the disclosed technology may enable a core join to be split into parts, each of which is persisted. This has the advantage of breaking a potentially very long join into n parts while allowing others a time slice. This has the effect of reducing disk consumption by a factor of n, provided the eventual links are fairly sparse. In terms of performance, it should be noted that if n can be made high enough that the output of each join does not spill to disk, the relationship calculation process may have significantly faster performance.
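The idea of splitting a core join into n persisted parts can be sketched in Python as follows. This is not SALT or ECL code; it is a simplified illustration that assumes records are linked when they share a join key, that the key is hashed to assign each record to one of n parts, and that each part's links are written to its own JSON file. All names, the hashing choice, and the file layout are hypothetical.

```python
import itertools
import json
import os
import zlib
from collections import defaultdict


def partitioned_self_join(records, n_parts, out_dir):
    """Self-join (record_id, join_key) pairs in n_parts passes.

    Each pass handles only the keys that hash to that part and persists
    its links to a separate file, so no single pass has to hold the
    output of the whole join.
    """
    records = list(records)
    paths = []
    for part in range(n_parts):
        groups = defaultdict(list)
        for record_id, key in records:
            # crc32 gives a hash that is stable across runs (unlike hash()).
            if zlib.crc32(key.encode("utf-8")) % n_parts == part:
                groups[key].append(record_id)
        pairs = [list(pair)
                 for ids in groups.values()
                 for pair in itertools.combinations(sorted(ids), 2)]
        path = os.path.join(out_dir, f"links_part_{part}.json")
        with open(path, "w", encoding="utf-8") as handle:
            json.dump(pairs, handle)
        paths.append(path)
    return paths


def load_links(paths):
    """Read the persisted parts back and return all links, sorted."""
    links = []
    for path in paths:
        with open(path, encoding="utf-8") as handle:
            links.extend(tuple(pair) for pair in json.load(handle))
    return sorted(links)
```

Because every record with a given key lands in the same part, the union of the persisted parts equals the result of the unsplit join regardless of the value chosen for n.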

In accordance with certain example implementations, linking of records may be performed by certain additional special programming and analysis software. For example, record linking fits into a general class of data processing known as data integration, which can be defined as the problem of combining information from multiple heterogeneous data sources. Data integration can include data preparation steps such as profiling, cleansing, normalization, parsing, and standardization of the raw input data prior to record linkage to improve the quality of the input data and to make the data more consistent and comparable (these data preparation steps are sometimes referred to as ETL or extract, transform, load).

Some of the details for the use of SALT are included in the APPENDIX section of this application. According to an example implementation of the disclosed technology, SALT can provide data profiling and data hygiene applications to support the data preparation process. In addition, SALT provides a general data ingest application, which allows input files to be combined or merged with an existing base file. SALT may be used to generate a parsing and classification engine for unstructured data, which can be used for data preparation. The data preparation steps are usually followed by the actual record linking or clustering process. SALT provides applications for several different types of record linking including internal, external, and remote.

Data profiling, data hygiene and data source consistency checking, while key components of the record linking process, have their own value within the data integration process, and may be supported by SALT for leverage even when record linking is not a necessary part of a particular data work unit. SALT uses advanced concepts such as term specificity to determine the relevance/weight of a particular field in the scope of the linking process, and applies a mathematical model based on the input data rather than hand-coded user rules, which may be key to the overall efficiency of the method. SALT may be used to verify identities, addresses, and other factors, and to use information on relationships to determine migration indicators.
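One common way to express term specificity is as the negative base-2 logarithm of a value's relative frequency, so that rare values carry more weight than common ones. The sketch below uses that formulation as an assumption; it is not taken from the disclosure and is not presented as SALT's actual model. The function names are hypothetical.

```python
import math
from collections import Counter


def value_specificity(values):
    """Map each distinct value to -log2 of its relative frequency."""
    counts = Counter(values)
    total = sum(counts.values())
    return {value: -math.log2(count / total) for value, count in counts.items()}


def match_weight(record_a, record_b, specificity_by_field):
    """Sum the specificity of every field on which two records agree.

    specificity_by_field maps a field name to the table produced by
    value_specificity for that field.
    """
    weight = 0.0
    for field, table in specificity_by_field.items():
        a, b = record_a.get(field), record_b.get(field)
        if a is not None and a == b:
            weight += table.get(a, 0.0)
    return weight
```

Under this formulation, two records that agree on a surname held by half of the population gain one bit of evidence, while agreement on a surname held by one record in eight gains three bits, without any hand-written rule per surname.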

In accordance with an example implementation, a measure of crowding may include a count of unique entities at a given location. In certain example implementations, the entity address/location data may be analyzed to measure additional crowding-related metrics, such as (1) the velocity at which entities appear at a location; (2) the distance from the entity's previously associated location; (3) the number of entities moving simultaneously; and/or (4) the age of the entity as they emerge at a location. In certain example implementations, the entity crowding metrics may be calculated via graph analytics with the Knowledge Engineering Language (KEL) as previously discussed, which may provide certain speed, efficiency, and/or memory utilization advantages over previous computation languages.
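The disclosure computes these metrics with KEL graph analytics; the following Python sketch only illustrates what three of them might look like for a single location. The `Arrival` record, its field names, and the particular definitions of velocity (arrivals per calendar month spanned) and age (years between birth date and arrival) are assumptions made for illustration.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class Arrival:
    entity_id: str   # hypothetical entity identifier
    arrived: date    # date the entity first appears at the location
    born: date       # entity date of birth (or creation date)


def crowding_summary(arrivals):
    """Summarize unique entities, arrival velocity, and age at arrival."""
    if not arrivals:
        return {"unique_entities": 0, "arrivals_per_month": 0.0,
                "mean_age_at_arrival": None}
    first = min(a.arrived for a in arrivals)
    last = max(a.arrived for a in arrivals)
    # Calendar months spanned by the arrivals, counting both end months.
    span_months = (last.year - first.year) * 12 + (last.month - first.month) + 1
    ages = [(a.arrived - a.born).days / 365.25 for a in arrivals]
    return {
        "unique_entities": len({a.entity_id for a in arrivals}),
        "arrivals_per_month": len(arrivals) / span_months,
        "mean_age_at_arrival": sum(ages) / len(ages),
    }
```

Summaries of this kind could then be compared across locations so that unusually high counts or velocities stand out.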

In accordance with certain example implementations of the disclosed technology, the measurement and analysis of identity migration and/or crowding can be used to identify addresses that may be under the control of criminals. For example, criminals may steal and/or manufacture a large number of identities, and a portion of these identities may be associated with a same address for which the criminals may have access or control.

In certain example implementations of the disclosed technology, "containers" may be created to store each address, and each address container may be monitored or analyzed over time, for example, as people move in and out of the address. Certain example implementations of the disclosed technology can enable review of all known addresses of the entire U.S. population, measuring and tracking identities moving in and out of the addresses, and generating statistics.
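A container of this kind can be pictured as a small data structure that records move-in and move-out events per entity and answers who was associated with the address on a given day. The class below is a minimal sketch; its name, methods, and the half-open stay interval are assumptions, not details from the disclosure.

```python
from collections import defaultdict
from datetime import date


class AddressContainer:
    """Track which entities are associated with one address over time."""

    def __init__(self, address):
        self.address = address
        # entity_id -> list of [move_in, move_out]; move_out is None while open.
        self._stays = defaultdict(list)

    def move_in(self, entity_id, when):
        self._stays[entity_id].append([when, None])

    def move_out(self, entity_id, when):
        for stay in self._stays.get(entity_id, []):
            if stay[1] is None:
                stay[1] = when
                return
        raise KeyError(f"{entity_id} has no open stay at {self.address}")

    def occupants_on(self, day):
        """Return entities whose stay covers the day (move-out day excluded)."""
        return {
            entity_id
            for entity_id, stays in self._stays.items()
            for start, end in stays
            if start <= day and (end is None or day < end)
        }
```

Counting `occupants_on` for successive dates gives a simple time series of occupancy per address from which statistics can be generated.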

At a high level, the disclosed technology may generate and utilize metrics to look at identities and their associated flow to/from addresses over time. Certain example implementations may also look at how far people move to go from one address to another. In certain instances, people may not have a logical movement pattern. The disclosed technology may help gain a better understanding of the movement of the entire population, not only in the U.S. but also abroad.

Certain example implementations of the disclosed technology may be utilized to detect when a large number of people move to an address at once. Such movement data may be indicative of fraud. But there are also situations in which such data may represent non-fraudulent activity, such as a credit repair agency using its address for its clients, for example. In health care, for example, there may be a tie between moving and an increase or decrease in allergies; thus, there are situations in which people move en masse for health or seasonal reasons. However, certain example implementations may be utilized to detect indicators of large scale identity theft, for example as associated with governmental benefits, tax returns, Medicaid, credit abuse, etc., and such detection may be associated with people moving into the same address.

According to certain example implementations of the disclosed technology, a maximum distance that an individual has ever moved may be utilized, for example, to determine anomalous behavior when an individual shows up as moving a great distance, as previous movement patterns may be indicators for future movement. One metric that may be monitored in certain example implementations is the length of time that an entity has stayed at a previous address. Utilizing this information can provide an early warning sign so that the anomalous behavior may be investigated and stopped before fraud damage can be done. In certain cases, it can take months for law enforcement or credit agencies to piece together data that would indicate fraudulent activity, and it is usually too late as the damage/theft may have already occurred.
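One possible way to compare a new move against an individual's maximum prior move distance is sketched below in Python. The great-circle (haversine) distance, the `factor` multiplier, and the function names are assumptions chosen for illustration; they are not specified by the disclosed technology.

```python
import math

def haversine_miles(lat1, lon1, lat2, lon2):
    """Great-circle distance in miles between two latitude/longitude points."""
    radius = 3958.8  # approximate mean Earth radius in miles
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dphi = math.radians(lat2 - lat1)
    dlam = math.radians(lon2 - lon1)
    a = math.sin(dphi / 2) ** 2 + math.cos(phi1) * math.cos(phi2) * math.sin(dlam / 2) ** 2
    return 2 * radius * math.asin(math.sqrt(a))

def is_anomalous_move(history, new_location, factor=2.0):
    """Flag a move that exceeds `factor` times the longest prior move.

    `history` is a chronological list of (lat, lon) tuples; `factor` is a
    hypothetical tuning parameter, not a value taken from the disclosure.
    """
    if len(history) < 2:
        return False  # no prior move exists to compare against
    prior = [haversine_miles(*a, *b) for a, b in zip(history, history[1:])]
    new_distance = haversine_miles(*history[-1], *new_location)
    return new_distance > factor * max(prior)

# An individual who previously moved only a few miles.
history = [(44.98, -93.27), (45.00, -93.20)]
far_move = is_anomalous_move(history, (25.76, -80.19))
near_move = is_anomalous_move(history, (45.02, -93.25))
```

A flag raised in this way would only be an early warning sign; the cross checks described herein (for example, for military personnel) would still apply.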

Certain example implementations of the disclosed technology may provide cross checks and filtering to eliminate false positives. For example, military personnel may have a very high move-to distance, but college students may have a very low move-to distance. Certain example implementations of the disclosed technology may identify and flag certain groups of people to differentiate what may be normal for one group, but abnormal for another group. Certain example implementations can be utilized to spot the anomalies, either because of the distances moved, groups moving together, threshold settings, etc.

In accordance with an example implementation of the disclosed technology, individuals may be identified by a disambiguated entity identifier, including a year and month in which they show up in a public record at a particular address. In certain example implementations, the address may also be identified with a year and a month. In certain example implementations, the data may be analyzed together with all other address/individual data and the resulting output may be an indication of anomalous behavior. For example, if a family moves, they will typically do it all at once, but some of the people may be more prompt than others in updating records associated with the new local Department of Motor Vehicles. The fact that such information may not update all at once for an entire family may be indicative of normal behavior.

Certain example implementations of the disclosed technology may be utilized to detect large scale ID theft. For example, in one example implementation, all residential address metrics may be processed and reviewed for outliers. For example, outliers may be determined as corresponding to data that is more than two standard deviations away from the statistical norm. In other example implementations, the threshold for the outliers may be set as desired to include or exclude certain data.
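The two-standard-deviation test described above may be sketched as follows. This is a minimal illustration that assumes a single numeric metric per address and uses the population standard deviation; the function name, the parameter `k`, and the sample values are hypothetical.

```python
from statistics import mean, pstdev

def outlier_addresses(metric_by_address, k=2.0):
    """Return addresses whose metric lies more than k standard deviations from the mean."""
    values = list(metric_by_address.values())
    mu = mean(values)
    sigma = pstdev(values)  # population standard deviation (an assumption)
    if sigma == 0:
        return []  # all values identical, so nothing stands out
    return sorted(a for a, v in metric_by_address.items() if abs(v - mu) > k * sigma)

# Nine ordinary addresses with 2 entities each, and one with 50.
entity_counts = {f"addr{i}": 2 for i in range(9)}
entity_counts["addr9"] = 50
outliers = outlier_addresses(entity_counts)
```

Adjusting `k` corresponds to setting the outlier threshold to include or exclude certain data.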

Certain example implementations of the disclosed technology may be utilized as an early detection mechanism. For example, data associated with known identity theft in the past may be utilized as a model for predicting patterns that may be indicative of future identity theft. For example, a known dead person who shows up in the data as migrating may be labeled as a Zombie, and as such, will almost certainly be related to fraudulent identity theft activity. Other indicators of fraud may include a high number of individuals (>100 for example) who applied for credit on the same day from a same address. Example implementations of the disclosed technology may be utilized to identify such activity.
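The two indicators mentioned above may be sketched as follows, assuming hypothetical inputs: a list of move records, a mapping of entity identifiers to known dates of death, and a list of credit applications. The names and data shapes are illustrative assumptions only.

```python
from collections import Counter
from datetime import date

def zombie_entities(moves, death_dates):
    """Return entities that appear to move after their recorded date of death.

    `moves` is an iterable of (entity_id, address_id, move_date) tuples.
    """
    return sorted({
        entity for entity, _, moved in moves
        if entity in death_dates and moved > death_dates[entity]
    })

def high_volume_addresses(applications, threshold=100):
    """Return (address_id, day) pairs with more than `threshold` applications.

    `applications` is an iterable of (address_id, application_date) tuples.
    """
    counts = Counter(applications)
    return sorted(key for key, n in counts.items() if n > threshold)

moves = [
    ("e1", "a1", date(2016, 5, 1)),
    ("e2", "a1", date(2016, 5, 1)),
]
deaths = {"e1": date(2010, 1, 1)}
zombies = zombie_entities(moves, deaths)

apps = [("a1", date(2016, 5, 2))] * 101 + [("a2", date(2016, 5, 2))] * 3
busy = high_volume_addresses(apps)
```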

FIG. 1 is a diagram 100 of an illustrative entity migration and crowding example, according to certain embodiments of the disclosed technology. As described above, entity migration data may be processed to determine certain aspects, metrics, scoring, etc., as associated with the entity, the time-frame of the migration, other entities moving to a same location, etc. For example, a first entity 102 (as depicted by the white star in FIG. 1) may be associated with a first address in Montana at a first time T1, a second address in Wyoming at a second time T2, and a third address in Minnesota at a third time T3. Based on this information, a pattern of entity migration may be determined. Such information alone may or may not provide an indication of potential fraud.

In another example implementation, the disclosed technology may be utilized to detect a first plurality of entities 104 (as depicted by dark stars located in New Mexico) that emerge at a same or similar location within a given time-frame. For example, the first plurality of entities 104 may emerge at a certain address between times T4 and T5. In some instances, these entities 104 may not have an associated previous address. One explanation for the sudden emergence of the plurality of entities 104 at this location may be that a family of legal immigrants moved to the address within a given time-frame. However, if corroborating information is not available (for example from U.S. Immigration and Customs Enforcement or other previous entity records), then it may be likely that the plurality of entities 104 may be synthetically generated for fraudulent purposes. Yet in other example implementations, it may be possible to determine that these entities appeared to "emerge" at a given address because they started a first job in the U.S. without a prior public record. In accordance with an example implementation of the disclosed technology, age may be used to determine abnormal emergence. For example, in the case of migrant or foreign workers, the age at emergence is typically higher than that of a natural citizen.

In yet another example, and with continued reference to FIG. 1, a third entity 106 may be associated with an address in Oregon at a time T6; a fourth entity 108 may be associated with an address in Iowa at a time T7; and a fifth entity 110 may be associated with an address in Oklahoma at a time T8. Then, each of these entities 106, 108, 110 may be associated with a same address in Colorado between times T9 and T10. Certain example implementations of the disclosed technology may be utilized to determine entity migration metrics for these entities. For example, and depending on certain metrics, the address in Colorado may be a school dormitory or apartment in a college town, each of the entities may be of typical college age, and they may "show up" at the destination address in Colorado at a time-frame between T9 and T10 which may correspond to a beginning of a school year. In such an example, and depending on the analysis of the associated metrics, such migration behavior may be scored with a low probability of fraud. However, in other example situations, the metrics associated with the migrating entities may not fit such a safe scenario, such as students moving to go to college. For example, the entity ages may not fit the typical college student age, and thus, may be suspect.

FIG. 1 also depicts another way of graphically representing entity migration data that has been identified by the disclosed systems and methods as being suspicious or possibly fraudulent. For example, bubbles 112 may be utilized to represent possible fraudulent activity associated with entity migration and/or crowding. For example, a large number of entities may emerge at a given address. In certain example implementations, the diameter (or other numerical notations) associated with the bubbles 112 may represent the score of the likelihood of fraud. In certain example implementations, various metrics may be combined to provide indications or scores of the possible fraudulent activity.

FIG. 2 is a diagram 200 depicting example metrics and scores associated with migrating entities, according to an example embodiment of the disclosed technology. For example, the entity address/location data may be analyzed to determine metrics 202, such as entity identifiers; a velocity at which entities appear at a location 204; a distance from the entity's previously associated location; a number of entities moving to the same address simultaneously (or within a given time frame); and/or the age of the entity as they emerge at a location.

Certain example implementations of the disclosed technology may be utilized to detect information such as vehicle hoarding, nominee incorporation services, and/or shelf companies. A shelf company, for example, is a company that can be formed in a low-tax, low-regulation state expressly to be sold off for its pristine credit rating. Metaphorically speaking, this type of company may be formed then put on the "shelf" to age with no further activity. In one example scenario, a business person with a bad credit rating, but who needs a loan may purchase a shelf corporation for the purpose of qualifying for and taking out a loan. If the business then defaults on the loan, the creditor can go after a corporation, which may have no assets, no income and no accounts receivable. With the current environment of government bailout of the banks that make questionable loans, the net result is that the public in general may be getting scammed. Thus, detection of such activity is becoming more and more crucial.

FIG. 3 is an example block diagram of a migration and crowding analysis system 300 for processing and scoring migration data, according to an exemplary embodiment of the disclosed technology. The migration and crowding analysis system 300 may fetch and/or receive a plurality of data 302 to be analyzed. In certain example implementations, a portion of the data 302 may be stored locally. In certain example implementations, all or a portion of the data may be available via one or more local or networked databases 308. In accordance with an example embodiment, the data 302 may be processed 304, and output 306 may be generated. In one example embodiment, the data 302 may include identities, public data, and addresses associated with the identities. In certain example implementations, the data 302 may include previous address information 310 associated with some or all of the identities, including but not limited to time-frames in which particular addresses are associated with corresponding identities.

In certain example implementations, the data 302 may also include information related to one or more activities and/or locations associated with the activities. In an example embodiment, the system 300 may receive the data 302 in its various forms and may process 304 the data 302 to derive temporal relationships and related geographical locations 312. In certain example implementations, the relationships may be utilized to derive migration and/or crowding attributes 314. In an example embodiment, the relationships 312 and attributes 314 may be used to determine particular metrics 316. For example, the metrics 316 may include one or more of the following: velocity of movement, simultaneous movements, distance moved, and age of the entity (associated with the identity). In certain example implementations, the metrics 316 may include one or more of: proximity to the one or more activities, entity information including history, distances, number of overlaps, relations, employer record, license records, etc.

According to an example embodiment of the disclosed technology, the metrics 316 may be processed by a scoring and filtering process 318, which may result in an output 306 that may include one or more indicators of data quality 320, anomalous migration 322, and/or fraud scores 324.

Certain example implementations of the disclosed technology may enable identification of errors in data. For example, data provided by information vendors can include errors that, if left undetected, could produce erroneous results. Certain example implementations of the disclosed technology may be used to measure the accuracy and/or quality of the available data, for example by cross-checking, so that the data may be included, scrubbed, corrected, or rejected before utilizing such data in the full analysis. In accordance with an example embodiment of the disclosed technology, such data quality 320 may be determined and/or improved by one or more of cross checking, scrubbing to correct errors, and scoring to use or reject the data.

In accordance with an example implementation of the disclosed technology, connections and degrees of separation between entities may be utilized. For example, the connections may include a list of names of known or derived business associates, friends, relatives, etc. The degrees of separation may be an indication of the strength of the connection. For example, two people having a shared residence may result in a connection with a degree of 1. In another example implementation, two people working for the same company may have a degree of 2. In one example implementation, the degree of separation may be inversely proportional to the strength of the connection. In other example embodiments, different factors may contribute to the degree value, and other values besides integers may be utilized to represent the connection strength.

According to an example embodiment, anomalous migration 322 may be determined based on temporal geographical location information combined with other metrics 316. For example, the geographical information may include one or more of an address, GPS coordinates, latitude/longitude, physical characteristics about the area, whether the address is a single family dwelling, apartment, etc.

In an example implementation, the output 306 may include weightings that may represent information such as geographical spread of an individual's social network. In an example implementation, the weighting may also include a measure of connections that are in common. Such information may be utilized to vary the output.

According to an example implementation of the disclosed technology, scoring 324 may be applied, and one or more scores may be applied to each address. In an example implementation, a scoring unit may utilize a predetermined scoring algorithm for scoring some or all of the data. In another example implementation, the scoring unit may utilize a dynamic scoring algorithm for scoring some or all of the data. The scoring algorithm, for example, may be based on seemingly low-risk events that tend to be associated with organizations, such as fraud organizations. The algorithm may thus also be based on research into what events tend to be indicative of fraud in the industry or application to which the system 300 is directed.

In accordance with an example implementation of the disclosed technology, the migration and crowding analysis system 300 may leverage publicly available data as input data 308, which may include several hundred million records. The migration and crowding analysis system 300 may also clean and standardize data to reduce the possibility that matching entities are considered as distinct. Before creating a graph, the migration and crowding analysis system 300 may use this data to build a large-scale network map of the population in question and its associated migration.

According to an example implementation, the migration and crowding analysis system 300 may leverage a relatively large scale of supercomputing power and analytics to target organized collusion. Example implementations of the systems and methods disclosed herein may rely upon large scale, special-purpose, parallel-processing computing platforms to increase the agility and scale of solutions.

Example implementations of the systems and methods disclosed herein may measure behavior, activities, and/or relationships to actively and effectively expose syndicates and rings of collusion. Unlike many conventional systems, the systems and methods disclosed herein need not be limited to activities or rings operating in a single geographic location, and they need not be limited to short time periods. The systems and methods disclosed herein may be used to determine whether migration and/or crowding activities fall within an organized ring or certain geographical location.

In one example implementation, a filter may be utilized to reduce the data set to identify groups that evidence the greatest connectedness based on the scoring algorithm. In one example implementation, scores that match or exceed a predetermined set of criteria may be flagged for evaluation. In an example implementation of the disclosed technology, filtering may utilize one or more target scores, which may be selected based on the scoring algorithm. In one example implementation, geo-social networks having scores greater than or equal to a target score may be flagged as being potentially collusive.
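The target-score filtering described above may be illustrated with the following minimal Python sketch. The score values, the network names, and the function name are hypothetical; how the scores themselves are produced is left to the scoring algorithm.

```python
def flag_for_evaluation(scores, target_score):
    """Return identifiers whose score is greater than or equal to the target score."""
    return sorted(name for name, score in scores.items() if score >= target_score)

# Hypothetical scores for three geo-social networks.
network_scores = {"network_a": 0.91, "network_b": 0.40, "network_c": 0.75}
flagged = flag_for_evaluation(network_scores, target_score=0.75)
```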

FIG. 4 depicts a computing device or computing device system 400, according to various example implementations of the disclosed technology. It will be understood that the computing device 400 is provided for example purposes only and does not limit the scope of the various implementations of the communication systems and methods. In certain example implementations, the computing device 400 may be a specialized HPCC Systems, as developed and offered by LexisNexis Risk Solutions, Inc., the assignee of the disclosed technology. HPCC Systems, for example, provide data-intensive supercomputing platform(s) designed for solving big data problems. Various implementations and methods herein may be embodied in non-transitory computer readable media for execution by a processor.

The computing device 400 of FIG. 4 includes a central processing unit (CPU) 402, where computer instructions are processed, and a display interface 404 that acts as a communication interface and provides functions for rendering video, graphics, images, and texts on the display. In certain example implementations of the disclosed technology, the display interface 404 may be directly connected to a local display, such as a touch-screen display associated with a mobile computing device. In another example implementation, the display interface 404 may be configured for providing data, images, and other information for an external/remote display that is not necessarily physically connected to the mobile computing device. For example, a peripheral device monitor may be utilized for mirroring graphics and other information that is presented on a wearable or mobile computing device. In certain example implementations, the display interface 404 may wirelessly communicate, for example, via a Wi-Fi channel or other available network connection interface 412 to the external/remote display.

In an example implementation, the network connection interface 412 may be configured as a communication interface and may provide functions for rendering video, graphics, images, text, other information, or any combination thereof on the display. In an example, a communication interface may include a serial port, a parallel port, a general purpose input and output (GPIO) port, a game port, a universal serial bus (USB), a micro-USB port, a high definition multimedia (HDMI) port, a video port, an audio port, a Bluetooth port, a near-field communication (NFC) port, another like communication interface, or any combination thereof.

The computing device 400 may include a keyboard interface 406 that provides a communication interface to a keyboard. In an example implementation, the computing device 400 may include a pointing device interface 408, which may provide a communication interface to various devices such as a pointing device, a touch screen, a depth camera, etc.

The computing device 400 may be configured to use an input device via one or more of input/output interfaces (for example, the keyboard interface 406, the display interface 404, the pointing device interface 408, network connection interface 412, camera interface 414, sound interface 416, etc.) to allow a user to capture information into the computing device 400. The input device may include a mouse, a trackball, a directional pad, a track pad, a touch-verified track pad, a presence-sensitive track pad, a presence-sensitive display, a scroll wheel, a digital camera, a digital video camera, a web camera, a microphone, a sensor, a smartcard, and the like. Additionally, the input device may be integrated with the computing device 400 or may be a separate device. For example, the input device may be an accelerometer, a magnetometer, a digital camera, a microphone, or an optical sensor.

Example implementations of the computing device 400 may include an antenna interface 410 that provides a communication interface to an antenna, and a network connection interface 412 that provides a communication interface to a network. As mentioned above, the display interface 404 may be in communication with the network connection interface 412, for example, to provide information for display on a remote display that is not directly connected or attached to the system. In certain implementations, a camera interface 414 is provided that acts as a communication interface and provides functions for capturing digital images from a camera. In certain implementations, a sound interface 416 is provided as a communication interface for converting sound into electrical signals using a microphone and for converting electrical signals into sound using a speaker. According to example implementations, a random access memory (RAM) 418 is provided, where computer instructions and data may be stored in a volatile memory device for processing by the CPU 402.

According to an example implementation, the computing device 400 includes a read-only memory (ROM) 420 where invariant low-level system code or data for basic system functions such as basic input and output (I/O), startup, or reception of keystrokes from a keyboard are stored in a non-volatile memory device. According to an example implementation, the computing device 400 includes a storage medium 422 or other suitable type of memory (e.g., RAM, ROM, programmable read-only memory (PROM), erasable programmable read-only memory (EPROM), electrically erasable programmable read-only memory (EEPROM), magnetic disks, optical disks, floppy disks, hard disks, removable cartridges, flash drives), where files including an operating system 424, application programs 426 (including, for example, KEL (Knowledge Engineering Language), a web browser application, a widget or gadget engine, and/or other applications, as necessary), and data files 428 are stored. According to an example implementation, the computing device 400 includes a power source 430 that provides an appropriate alternating current (AC) or direct current (DC) to power components. According to an example implementation, the computing device 400 includes a telephony subsystem 432 that allows the device 400 to transmit and receive sound over a telephone network. The constituent devices and the CPU 402 communicate with each other over a bus 434.

In accordance with an example implementation, the CPU 402 has appropriate structure to be a computer processor. In an arrangement, the computer CPU 402 may include more than one processing unit. The RAM 418 interfaces with the computer bus 434 to provide quick RAM storage to the CPU 402 during the execution of software programs such as the operating system, application programs, and device drivers. More specifically, the CPU 402 loads computer-executable process steps from the storage medium 422 or other media into a field of the RAM 418 in order to execute software programs. Data may be stored in the RAM 418, where the data may be accessed by the computer CPU 402 during execution. In an example configuration, the device 400 includes at least 128 MB of RAM, and 256 MB of flash memory.

The storage medium 422 itself may include a number of physical drive units, such as a redundant array of independent disks (RAID), a floppy disk drive, a flash memory, a USB flash drive, an external hard disk drive, thumb drive, pen drive, key drive, a High-Density Digital Versatile Disc (HD-DVD) optical disc drive, an internal hard disk drive, a Blu-Ray optical disc drive, or a Holographic Digital Data Storage (HDDS) optical disc drive, an external mini-dual in-line memory module (DIMM) synchronous dynamic random access memory (SDRAM), or an external micro-DIMM SDRAM. Such computer readable storage media allow the device 400 to access computer-executable process steps, application programs and the like, stored on removable and non-removable memory media, to off-load data from the device 400 or to upload data onto the device 400. A computer program product, such as one utilizing a communication system may be tangibly embodied in storage medium 422, which may comprise a machine-readable storage medium. Certain example implementations may include instructions stored in a non-transitory storage medium in communication with a memory, wherein the instructions may be utilized to instruct one or more processors to carry out the instructions.

According to one example implementation, the term computing device, as used herein, may be a CPU, or conceptualized as a CPU (for example, the CPU 402 of FIG. 4). In this example implementation, the computing device (CPU) may be coupled, connected, and/or in communication with one or more peripheral devices, such as a display. In another example implementation, the term computing device, as used herein, may refer to a mobile computing device, such as a smartphone or tablet computer. In this example embodiment, the computing device may output content to its local display and/or speaker(s). In another example implementation, the computing device may output content to an external display device (e.g., over Wi-Fi) such as a TV or an external computing system.

In certain embodiments, the communication systems and methods disclosed herein may be embodied in non-transitory computer readable media for execution by a processor. An example implementation may be used in an application of a mobile computing device, such as a smartphone or tablet, but other computing devices may also be used, such as portable computers, tablet PCs, Internet tablets, PDAs, ultra mobile PCs (UMPCs), etc.

FIG. 5, FIG. 6, and FIG. 7 depict various illustrative processes for conducting entity-based searches according to certain example implementations of the disclosed technology. In certain example implementations, a container can include various "shells" to house or represent certain data associated with the person and/or address. As shown in FIG. 5 and FIG. 6, a search process may begin by entering certain identification information into the person shell (such as a unique ID, SSN, name, date, etc.) and the subsequent stages of the search may be utilized to populate corresponding shells, resulting in an address identification (AID) in the address shell.

According to an example implementation of the disclosed technology, and as depicted in FIG. 7, the search process, as described above, may be reversed by entering certain identification information related to an address (date, address, address ID, etc.) into the address shell, and the subsequent stages of the search may be utilized to populate corresponding shells, resulting in an entity or person identifier (DID) in the person shell.
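The forward (person to address) and reverse (address to person) searches described above may be sketched with two in-memory indexes. This is an illustrative simplification: the identifier formats, the tuple layout, and the function names are assumptions, and the intermediate shells of FIGS. 5-7 are not modeled.

```python
from collections import defaultdict

# Hypothetical association records: (DID, AID, (year, month) first seen).
associations = [
    ("DID-1", "AID-10", (2012, 3)),
    ("DID-1", "AID-20", (2015, 9)),
    ("DID-2", "AID-20", (2015, 9)),
]

def build_indexes(associations):
    """Build person-keyed and address-keyed lookups from the same records."""
    by_person = defaultdict(list)
    by_address = defaultdict(list)
    for did, aid, year_month in associations:
        by_person[did].append((aid, year_month))
        by_address[aid].append((did, year_month))
    return by_person, by_address

def person_to_addresses(by_person, did):
    """Forward search: start from a person identifier, end with AIDs."""
    return [aid for aid, _ in by_person.get(did, [])]

def address_to_persons(by_address, aid, year_month=None):
    """Reverse search: start from an address (optionally a date), end with DIDs."""
    return [
        did for did, seen in by_address.get(aid, [])
        if year_month is None or seen == year_month
    ]

by_person, by_address = build_indexes(associations)
```

Running both directions over the same records gives a simple way to cross-check the results of one search against the other.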

Certain example implementations of the disclosed technology may utilize combinations of the processes depicted in FIGS. 5-7 to further confirm, refine, and/or reject the data in the various shells.

FIG. 8 is a block diagram 800 of an illustrative relationship-linking example and system 801 for determining relationship links between/among individuals. Certain example implementations of the disclosed technology are enabled by the use of a special-purpose HPCC supercomputer 802 and SALT 818, as described above, and as provided with further examples in the APPENDIX.

According to an example implementation of the disclosed technology, the system 801 may include a special-purpose supercomputer 802 (for example HPCC) that may be in communication with one or more data sources and may be configured to process public and/or private records 826 obtained from the various data sources 820 822. According to an exemplary embodiment of the disclosed technology, the computer 802 may include a memory 804, one or more processors 806, one or more input/output interface(s) 808, and one or more network interface(s) 810. In accordance with an exemplary embodiment, the memory 804 may include an operating system 812 and data 814. In certain example implementations, one or more record linking modules, such as SALT 818, may be provided, for example, to instruct the one or more processors 806 for analyzing relationships within and among the records 826. Certain example implementations of the disclosed technology may further include one or more internal and/or external databases or sources 820 822 in communication with the computer 802. In certain example implementations, the records 826 may be provided by a source 820 822 in communication with the computer 802 directly and/or via a network 824 such as the Internet.

According to an example implementation of the disclosed technology, the various public and/or private records 826 of a population may be processed to determine relationships and/or connections with a target individual 830. In accordance with an example implementation of the disclosed technology, the analysis may yield other individuals 832 834 836 838 . . . and their associated locations 850 that are directly or indirectly associated with the target individual 830. In certain example implementations, such relationships may include one or more of: one-way relationships, two-way relationships, first degree connections, second degree connections etc., depending on the number of intervening connections.

The example block diagram 800 and system 801 shown in FIG. 8 depict a first individual 836 that is directly associated with the target individual 830 by a first-degree connection, such as may be the case for a spouse, sibling, known business associate, etc. Also shown, for example purposes, is a second individual 834 who is associated with the target individual 830 via a second-degree connection, and who also is connected directly with the first individual 836 by a first-degree connection. According to an exemplary embodiment, this type of relationship would tend to add more weight, verification, credibility, strength etc., to the connections. Put another way, such a relationship may strengthen the associated connection so that it may be considered to be a connection having a degree less than one, where the strength of the connection may be inversely related to the degree of the connection.
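Degrees of connection relative to a target individual may be computed, for illustration, with a breadth-first search over a relationship graph. The sketch below is an assumption-laden simplification: the adjacency-list graph, the use of reference numerals as node labels, and the `1 / degree` strength formula are hypothetical and are not prescribed by the disclosure.

```python
from collections import deque

def connection_degrees(graph, target):
    """Return the minimum number of links from `target` to each reachable individual."""
    degrees = {target: 0}
    queue = deque([target])
    while queue:
        person = queue.popleft()
        for neighbor in graph.get(person, ()):
            if neighbor not in degrees:
                degrees[neighbor] = degrees[person] + 1
                queue.append(neighbor)
    return degrees

# Links loosely modeled on FIG. 8: 830 is the target, 836 is directly
# linked to 830, and 834 is linked to 836 only.
graph = {
    "830": ["836"],
    "836": ["830", "834"],
    "834": ["836"],
}
degrees = connection_degrees(graph, "830")

# One possible strength measure, inversely related to the degree.
strength = {p: 1 / d for p, d in degrees.items() if d > 0}
```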

Various embodiments of the communication systems and methods herein may be embodied in non-transitory computer readable media for execution by a processor. An exemplary embodiment may be used in an application of a mobile computing device, such as a smartphone or tablet, but other computing devices may also be used.

An exemplary method 900 for determining a likelihood of identity theft will now be described with reference to the flowchart of FIG. 9. The method 900 starts in block 902, and according to an exemplary embodiment of the disclosed technology, includes receiving, from one or more sources, container information for all known addresses in the United States, the container information comprising: address records; identities associated with the address records; and temporal information associating the identities with the address records. In block 904, the method 900 includes determining, with one or more special-purpose computer processors in communication with a memory, migration data based on the container information. In block 906, the method 900 includes extracting metrics from the migration data, the metrics comprising one or more of: velocity of migration data; simultaneous movement of individuals within a predetermined time period; distance moved; and age of the identities. In block 908, the method 900 includes determining, with the one or more special-purpose computer processors, and based at least in part on outliers associated with the metrics, one or more indicators of fraud. In block 910, the method 900 includes outputting, for display, the one or more indicators of the fraud.
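By way of a non-limiting sketch, blocks 904 and 906 might be approximated as follows, assuming the container information has been reduced to tuples of (entity identifier, address identifier, date first seen at the address). The record layout, the function names `derive_moves` and `arrivals_per_month`, and the use of a calendar month as the predetermined time period are all assumptions made for illustration.

```python
from collections import Counter, defaultdict
from datetime import date

def derive_moves(records):
    """Turn (entity_id, address_id, first_seen) records into a list of moves."""
    by_entity = defaultdict(list)
    for entity_id, address_id, first_seen in records:
        by_entity[entity_id].append((first_seen, address_id))
    moves = []
    for entity_id, stays in by_entity.items():
        stays.sort()  # chronological order of address associations
        for (_, prev_addr), (moved_on, new_addr) in zip(stays, stays[1:]):
            if prev_addr != new_addr:
                moves.append((entity_id, prev_addr, new_addr, moved_on))
    return moves

def arrivals_per_month(moves):
    """Count entities arriving at each address in each calendar month."""
    return Counter(
        (new_addr, moved_on.year, moved_on.month)
        for _, _, new_addr, moved_on in moves
    )

# Hypothetical container records.
records = [
    ("E1", "A1", date(2015, 1, 1)),
    ("E1", "A2", date(2016, 3, 1)),
    ("E2", "A3", date(2014, 6, 1)),
    ("E2", "A2", date(2016, 3, 15)),
    ("E3", "A4", date(2016, 1, 1)),
]
moves = derive_moves(records)
arrivals = arrivals_per_month(moves)
```

Here two entities arrive at address A2 in the same month, which is the kind of simultaneous movement that block 906 may extract as a metric.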

In certain example implementations, the determined indicators of fraud may relate to identity theft fraud.

According to an example implementation of the disclosed technology, determining the one or more indicators of fraud can include determining one or more of: a number of person or business entities associated with a single address being greater than a first threshold number; a ratio of the number of person or business entities associated with a single address per square footage of the address being greater than a threshold ratio; a number of person or business entities moving to a single address per time-period being greater than a second threshold number; and an association of person or business entities with an address having known previous criminal activity.
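The threshold comparisons described above may be sketched as follows. The function name `address_indicators`, the flag labels, and every default threshold value are hypothetical; the disclosure does not specify particular threshold numbers.

```python
def address_indicators(entity_count, square_feet, arrivals_in_period,
                       known_criminal_activity,
                       first_threshold=10,       # assumed value
                       threshold_ratio=0.01,     # assumed entities per square foot
                       second_threshold=5):      # assumed arrivals per period
    """Return the list of fraud indicators triggered for a single address."""
    flags = []
    if entity_count > first_threshold:
        flags.append("too_many_entities")
    # Guard against a missing or zero square footage.
    if square_feet > 0 and entity_count / square_feet > threshold_ratio:
        flags.append("crowding_ratio_exceeded")
    if arrivals_in_period > second_threshold:
        flags.append("too_many_arrivals")
    if known_criminal_activity:
        flags.append("address_with_prior_criminal_activity")
    return flags

# Hypothetical address: 25 entities in 1,200 square feet, 8 arrivals this period.
flags = address_indicators(25, 1200, 8, known_criminal_activity=False)
```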

In accordance with an example implementation of the disclosed technology, extracting metrics from the migration data can further include extracting activities of the person or business entities related to one or more of: governmental benefits, tax returns, Medicaid, and credit abuse.

In certain example implementations, extracting metrics from the migration data can include extracting activities of the person or business entities related to one or more of: a distance between a current and previous address; and a length of time associated with a previous address.

According to an example implementation of the disclosed technology, the outliers associated with the metrics may correspond to data that differs from a statistical normal by greater than a standard deviation. In another example implementation, the outliers associated with the metrics may correspond to data that differs from a statistical normal by greater than two standard deviations. In another example implementation, the outliers associated with the metrics may correspond to data that differs from a statistical normal by greater than three standard deviations. In another example implementation, the outliers associated with the metrics may be defined with respect to the statistical mean or normal by any desired deviation.
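A minimal sketch of such an outlier test is shown below, assuming the "statistical normal" is taken to be the arithmetic mean of a metric and the deviation is the population standard deviation. The function name `find_outliers` and the sample values are illustrative assumptions.

```python
from statistics import mean, pstdev

def find_outliers(values, k=2.0):
    """Return the values that differ from the mean by more than k standard deviations."""
    mu = mean(values)
    sigma = pstdev(values)
    if sigma == 0:
        return []  # all values identical, so nothing can be an outlier
    return [v for v in values if abs(v - mu) > k * sigma]

# Hypothetical metric: number of entities arriving at each of eight addresses.
arrivals = [2, 3, 2, 4, 3, 2, 3, 40]
outliers_2sd = find_outliers(arrivals, k=2.0)
outliers_3sd = find_outliers(arrivals, k=3.0)
```

With these sample values, the address with 40 arrivals is flagged at two standard deviations but not at three, which illustrates how the choice of deviation changes the sensitivity of the test.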

In accordance with an example implementation of the disclosed technology, determining the one or more indicators of fraud can include determining one or more of: an association of person or business entities with vehicle hoarding; an association of person or business entities with nominee incorporation services, and an association of person or business entities with shelf companies.

In certain example implementations, scoring the metrics may be based on one or more of: data quality; anomalous migration; groups that evidence the greatest connectedness; events associated with an organization; and possible false positive associations of person or business entities with fraudulent activity.

Certain example embodiments of the disclosed technology may utilize a model to build a profile of indicators of fraud that may be based on multiple variables. In certain example implementations of the disclosed technology, the interaction of the indicators and variables may be utilized to produce one or more scores indicating the likelihood or probability of fraud associated with identity theft.

For example, in one aspect, addresses associated with an identity and their closest relatives or associates may be analyzed to determine distances between the addresses. For example, a greater distance may indicate a higher likelihood of fraud because, for example, a fraudster may conspire with a relative or associate in another city, and may assume that their distance may buffer them from detection.

Certain example embodiments of the disclosed technology may utilize profile information related to an entity's neighborhood. For example, information such as density of housing (single family homes, versus apartments and condos), the presence of businesses, and the median income of the neighborhood may correlate with a likelihood of fraud. For example, entities living in affluent neighborhoods are less likely to be involved with fraud, whereas dense communities with lower incomes and lower presence of businesses may be more likely to be associated with fraud.

Certain example embodiments of the disclosed technology may assess the validity of the input identity elements, such as the name, street address, social security number (SSN), phone number, date of birth (DOB), etc., to verify whether or not the requesting entity's input information corresponds to a real identity. Certain example implementations may utilize a correlation between the input SSN and the input address, for example, to determine how many times the input SSN has been associated with the input address via various sources. Typically, the lower the number, the higher the probability of fraud.

Certain example implementations of the disclosed technology may determine the number of unique SSNs associated with the input address. Such information may be helpful in detecting identity theft-related fraud, and may also be helpful in finding fraud rings because the fraudsters have typically created synthetic identities, but are requesting all payments be sent to one address.

Certain example implementations may determine the number of SSNs associated with the identity in one or more public or private databases. For example, if the SSN has been associated with multiple identities, then it is likely a compromised SSN and the likelihood of fraud increases.
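The two counts discussed above (unique SSNs per address, and identities per SSN) may be sketched as follows, assuming reference records reduced to (identity identifier, SSN, address) tuples. The record layout, the function name `count_links`, and the placeholder SSN values are assumptions made for illustration.

```python
from collections import defaultdict

def count_links(records):
    """Count unique SSNs seen at each address and unique identities seen with each SSN."""
    ssns_by_address = defaultdict(set)
    identities_by_ssn = defaultdict(set)
    for identity_id, ssn, address in records:
        ssns_by_address[address].add(ssn)
        identities_by_ssn[ssn].add(identity_id)
    ssn_counts = {addr: len(ssns) for addr, ssns in ssns_by_address.items()}
    identity_counts = {ssn: len(ids) for ssn, ids in identities_by_ssn.items()}
    return ssn_counts, identity_counts

# Hypothetical records using placeholder (non-issuable) SSN values.
records = [
    ("ID1", "000-00-0001", "12 ELM ST"),
    ("ID2", "000-00-0002", "12 ELM ST"),
    ("ID3", "000-00-0003", "12 ELM ST"),
    ("ID4", "000-00-0001", "9 OAK AVE"),
]
ssn_counts, identity_counts = count_links(records)
```

In this example three distinct SSNs share one address, and one SSN is associated with two identities; either count could then be compared against a threshold or tested for outliers.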

According to an example implementation, the disclosed technology may be utilized to verify the validity of the input address. For example, if the input address has never been seen in public records, then it is probably a fake address and the likelihood of fraud increases.

Certain example implementations of the disclosed technology may be utilized to determine if the container data corresponds to a deceased person, a currently incarcerated person, a person having prior incarceration (and time since their incarceration), and/or whether the person has been involved in bankruptcy. For example, someone involved in a bankruptcy may be less likely to be a fraudster.

Certain embodiments of the disclosed technology may enable the detection of possible, probable, and/or actual identity theft-related fraud, for example, as associated with a request for credit, payment, or a benefit. Certain example implementations provide for disambiguating input information and determining a likelihood of fraud. In certain example implementations, the input information may be received from a requesting entity in relation to a request for credit, payment, or benefit. In certain example implementations, the input information may be received from a requesting entity in relation to a request for an activity from a governmental agency.

In accordance with an example implementation of the disclosed technology, input information associated with a requesting entity may be processed, weighted, scored, etc., for example, to disambiguate the information. Certain implementations, for example, may utilize one or more input data fields to verify or correct other input data fields.

In an exemplary embodiment, a request for an activity may be received by the system. For example, the request may be for a tax refund. In one example embodiment, the request may include a requesting person's name, street address, and social security number (SSN), where the SSN has a typographical error (intentional or unintentional). In this example, one or more public or private databases may be searched to find reference records matching the input information. But since the input SSN is wrong, a reference record may be returned matching the name and street address, but with a different associated SSN. According to certain example implementations, the input information may be flagged, weighted, scored, and/or corrected based on one or more factors or metrics, including but not limited to: fields in the reference record(s) having field values that identically match, partially match, mismatch, etc., the corresponding field values of the input information.
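As one hedged illustration of the field comparison, each input field may be classified as an identical match, a partial match, or a mismatch against the corresponding reference field. The sketch below uses the similarity ratio of `difflib.SequenceMatcher` from the Python standard library as the partial-match measure; that measure, the 0.8 cutoff, and the names `classify_field` and `compare_records` are assumptions, not the matching logic of the disclosed system.

```python
from difflib import SequenceMatcher

def classify_field(input_value, reference_value, partial_cutoff=0.8):
    """Classify one field as 'identical', 'partial', or 'mismatch'."""
    a = input_value.strip().upper()
    b = reference_value.strip().upper()
    if a == b:
        return "identical"
    if SequenceMatcher(None, a, b).ratio() >= partial_cutoff:
        return "partial"
    return "mismatch"

def compare_records(input_record, reference_record):
    """Compare every field present in the input record against the reference record."""
    return {
        field: classify_field(value, reference_record.get(field, ""))
        for field, value in input_record.items()
    }

# Hypothetical request in which one digit of the SSN was mistyped.
input_record = {"name": "John Doe", "address": "12 Elm St", "ssn": "000-00-1284"}
reference_record = {"name": "JOHN DOE", "address": "12 ELM ST", "ssn": "000-00-1234"}
result = compare_records(input_record, reference_record)
```

The name and address match identically after normalization while the SSN matches only partially, so the record could be flagged or the SSN corrected from the reference record.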

Example embodiments of the disclosed technology may reduce false positives and increase the probability of identifying and stopping fraud based on a customized identity theft-based fraud score. According to an example implementation of the disclosed technology, a model may be utilized to process identity-related input information against reference information (for example, as obtained from one or more public or private databases) to determine whether the input identity being presented corresponds to a real identity, the correct identity, and/or a possibly fraudulent identity.

Certain example implementations of the disclosed technology may determine or estimate a probability of identity theft-based fraud based upon a set of parameters. In an example implementation, the parameters may be utilized to examine the input data, such as name, address and social security number, for example, to determine if such data corresponds to a real identity. In an example implementation, the input data may be compared with the reference data, for example, to determine field value matches, mismatches, weighting, etc. In certain example implementations of the disclosed technology, the input data (or associated entity record) may be scored to indicate the probability that it corresponds to a real identity.

In some cases, a model may be utilized to score the input identity elements, for example, to look for imperfections in the input data. For example, if the input data is scored to have a sufficiently high probability that it corresponds to a real identity, even though there may be certain imperfections in the input or reference data, once these imperfections are found, the process may disambiguate the data. For example, in one implementation, the disambiguation may be utilized to determine how many other identities are associated with the input SSN. According to an example implementation, a control for relatives may be utilized to minimize the number of similar records, for example, as may be due to Jr. and Sr. designations.

In an example implementation, the container data may be utilized to derive a date-of-birth, for example, based on matching reference records. In one example implementation, the derived date-of-birth may be compared with the issue date of the SSN. If the issue date of the SSN is before the DOB, then a flag may be appended to the record as an indication of fraud.

Another indication of fraud that may be determined, according to an example implementation, includes whether the entity has previously been associated with a different SSN. In an example implementation, a "most accurate" SSN for the entity may be checked to determine whether the entity is a prisoner, and if so the record may be flagged. In an example implementation, the input data may be checked against a deceased database to determine whether the entity has been deceased for more than one or two years, which may be another indicator of fraud.
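The date-based and status-based checks described above may be sketched as a single flagging routine. The function name `record_flags`, the flag labels, and the approximation of one year as 365 days are assumptions made for this example.

```python
from datetime import date

def record_flags(ssn_issue_date, derived_dob, date_of_death=None,
                 is_prisoner=False, as_of=None, deceased_years=1):
    """Return fraud flags for one entity record."""
    as_of = as_of or date.today()
    flags = []
    # An SSN issued before the derived date of birth is inconsistent.
    if ssn_issue_date < derived_dob:
        flags.append("ssn_issued_before_dob")
    if is_prisoner:
        flags.append("currently_incarcerated")
    # Deceased for longer than the configured number of years (365-day years assumed).
    if date_of_death is not None and (as_of - date_of_death).days > 365 * deceased_years:
        flags.append("deceased_over_threshold")
    return flags

# Hypothetical record: SSN issued in 1980, derived DOB in 1992, deceased in 2010.
flags = record_flags(
    ssn_issue_date=date(1980, 5, 1),
    derived_dob=date(1992, 7, 4),
    date_of_death=date(2010, 1, 1),
    as_of=date(2016, 1, 1),
)
```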

Queries

According to an example implementation of the disclosed technology, the container data may be subjected to various queries to determine migration and crowding metrics. For example, one or more of the following questions may be posed to assess certain metrics associated with the entity and/or address: Is this the first time we see this entity? How old was this entity when they were first seen in any available records? How far did this entity move from their previous address? How far did this entity move from any of their previous addresses? How many previous addresses are associated with this entity? How long did the entity stay at their previous address before moving to the present address? Did the entity live at their previous location for longer than 3 years, and then suddenly move to the new location? How old was the entity when they moved to the new address? Was the entity deceased prior to moving to the new address? (Zombie) Was the entity incarcerated prior to moving to the new address? How many people did the entity live with at the prior address? How many people are at the new address?
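Several of the above questions reduce to simple arithmetic on dates and coordinates. The following sketch answers three of them for a single move, assuming each address has been geocoded to a latitude and longitude. The great-circle (haversine) distance, the Earth radius of 3,958.8 miles, the 365.25-day year, and the names `haversine_miles` and `move_metrics` are assumptions made for illustration.

```python
from datetime import date
from math import asin, cos, radians, sin, sqrt

EARTH_RADIUS_MILES = 3958.8  # assumed mean Earth radius

def haversine_miles(lat1, lon1, lat2, lon2):
    """Great-circle distance in miles between two latitude/longitude points."""
    dlat = radians(lat2 - lat1)
    dlon = radians(lon2 - lon1)
    a = sin(dlat / 2) ** 2 + cos(radians(lat1)) * cos(radians(lat2)) * sin(dlon / 2) ** 2
    return 2 * EARTH_RADIUS_MILES * asin(sqrt(a))

def move_metrics(prev_coords, new_coords, moved_in_prev, moved_to_new, dob):
    """Distance moved, tenure at the previous address, and age at the time of the move."""
    years_at_previous = (moved_to_new - moved_in_prev).days / 365.25
    return {
        "distance_miles": haversine_miles(*prev_coords, *new_coords),
        "years_at_previous": years_at_previous,
        "long_tenure_then_move": years_at_previous > 3,
        "age_at_move": (moved_to_new - dob).days / 365.25,
    }

# Hypothetical move between two geocoded addresses.
metrics = move_metrics(
    prev_coords=(25.76, -80.19),
    new_coords=(33.75, -84.39),
    moved_in_prev=date(2010, 1, 1),
    moved_to_new=date(2016, 3, 1),
    dob=date(1980, 5, 1),
)
```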

In accordance with an example implementation of the disclosed technology, for each address and month reported, the following questions may be answered to further assess metrics associated with the entity and/or address: Is the address deliverable, or is it a potentially fake address? Is the secondary range (apartment number) deliverable? What is the type of address? (Single family, multi-family, commercial) How many undeliverable secondary ranges (fake apartments) are connected to this address in a month? How long have we known about the address? How is the address population changing over time?
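The per-address, per-month questions above may be sketched as a simple aggregation, assuming input rows of (address, month, secondary range, whether the secondary range is deliverable, entity identifier). The row layout and the function name `monthly_address_metrics` are hypothetical.

```python
from collections import defaultdict

def monthly_address_metrics(rows):
    """Aggregate population and undeliverable secondary ranges per (address, month)."""
    population = defaultdict(set)
    undeliverable_units = defaultdict(set)
    for address, month, unit, unit_deliverable, entity_id in rows:
        key = (address, month)
        population[key].add(entity_id)
        if not unit_deliverable:
            undeliverable_units[key].add(unit)
    return {
        key: {
            "population": len(population[key]),
            "undeliverable_units": len(undeliverable_units[key]),
        }
        for key in sorted(population)
    }

# Hypothetical rows; months are 'YYYY-MM' strings so they sort chronologically.
rows = [
    ("12 ELM ST", "2016-02", "APT 1", True, "E1"),
    ("12 ELM ST", "2016-03", "APT 1", True, "E1"),
    ("12 ELM ST", "2016-03", "APT 99", False, "E2"),
    ("12 ELM ST", "2016-03", "APT 98", False, "E3"),
]
metrics = monthly_address_metrics(rows)
```

Comparing consecutive months for the same address then shows how the address population is changing over time; in this example it grows from one entity to three, with two undeliverable secondary ranges appearing in the second month.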