Error estimation method, motion analysis method, error estimation apparatus, and program

Ding, et al.

U.S. patent number 10,288,746 [Application Number 15/128,954] was granted by the patent office on 2019-05-14 for error estimation method, motion analysis method, error estimation apparatus, and program. This patent grant is currently assigned to Seiko Epson Corporation. The grantee listed for this patent is Seiko Epson Corporation. Invention is credited to Yimei Ding, Daisuke Sugiya.


| United States Patent | 10,288,746 |
|---|---|
| Ding, et al. | May 14, 2019 |


Abstract

Disclosed is an error estimation method capable of reducing a processing load and of estimating, with high accuracy, errors of indexes indicating a state of a moving object by using a signal from a positioning satellite. In one aspect, the error estimation method includes calculating a coordinate transformation matrix between a first coordinate system with a first sensor mounted on a moving object as a reference and a second coordinate system with the moving object as a reference, at a predetermined timing in a cycle of motion causing movement of the moving object; converting one of an azimuth angle of the moving object based on a detection result in the first sensor and an azimuth angle of the moving object based on a detection result in a second sensor by using the coordinate transformation matrix, in a case where the detection result in the second sensor receiving a signal from a positioning satellite is obtained; and estimating errors of indexes indicating a state of the moving object by using a difference between the converted azimuth angle and the other azimuth angle at the predetermined timing after a timing at which the detection result in the second sensor is obtained.

| Inventors: | Ding; Yimei (Shiojiri, JP), Sugiya; Daisuke (Chino, JP) |
|---|---|
| Assignee: | Seiko Epson Corporation (Tokyo, JP) |
| Family ID: | 54194602 |
| Appl. No.: | 15/128,954 |
| Filed: | March 12, 2015 |
| PCT Filed: | March 12, 2015 |
| PCT No.: | PCT/JP2015/001388 |
| 371(c)(1),(2),(4) Date: | September 23, 2016 |
| PCT Pub. No.: | WO2015/146048 |
| PCT Pub. Date: | January 10, 2015 |

Prior Publication Data

| Document Identifier | Publication Date |
|---|---|
| US 20180172842 A1 | Jun 21, 2018 |

Foreign Application Priority Data

| Date | Country | Application No. |
|---|---|---|
| Mar 25, 2014 | JP | 2014-061549 |
| Mar 28, 2014 | JP | 2014-068226 |
| Mar 28, 2014 | JP | 2014-068249 |

| Current U.S. Class: | 1/1 |
|---|---|
| Current CPC Class: | G01P 13/00 (20130101); G08G 1/005 (20130101); G01S 19/49 (20130101); G01C 21/165 (20130101); G01S 19/19 (20130101) |
| Current International Class: | G01S 19/49 (20100101); G01P 13/00 (20060101); G01C 21/16 (20060101); G08G 1/005 (20060101); G01S 19/19 (20100101) |
| Field of Search: | 342/357.32, 357.35, 357.57, 357.65; 701/473, 518 |

References Cited

U.S. Patent Documents

| Document | Date | Inventor(s) |
|---|---|---|
| 6546336 | Apr 2003 | Matsuoka et al. |

Foreign Patent Documents

| Document | Date | Country |
|---|---|---|
| 2000-097722 | Apr 2000 | JP |
| 2001-174275 | Jun 2001 | JP |
| 2011-112500 | Jun 2011 | JP |
| 2014-185955 | Oct 2014 | JP |
| 2014-190900 | Oct 2014 | JP |

Attorney, Agent or Firm: Kilpatrick Townsend & Stockton LLP

Claims

The invention claimed is:

1. An error estimation method comprising: calculating a coordinate transformation matrix between a first coordinate system with a first sensor mounted on a moving object as a reference and a second coordinate system with a second sensor mounted on the moving object as a reference, at a predetermined timing in a cycle of motion causing movement of the moving object; converting one of an azimuth angle of the moving object based on a detection result in the first sensor and an azimuth angle of the moving object based on a detection result in the second sensor by using the coordinate transformation matrix in a case where the detection result in the second sensor receiving a signal from a positioning satellite is obtained; and estimating errors of indexes indicating a state of the moving object by using a difference between the converted azimuth angle and the other azimuth angle at the predetermined timing after a timing at which the detection result in the second sensor is obtained.

2. The error estimation method according to claim 1, wherein a velocity of the moving object in the first coordinate system is calculated by using the detection result in the first sensor, and the coordinate transformation matrix is calculated on the basis of the calculated velocity.

3. The error estimation method according to claim 1, wherein the predetermined timing is a timing at which the detection result in the first sensor satisfies a predetermined condition.

4. The error estimation method according to claim 1, further comprising: detecting the cycle by using the detection result in the first sensor, wherein the predetermined timing is a timing at which the cycle is detected.

5. The error estimation method according to claim 1, wherein the coordinate transformation matrix is calculated by using the detection result in the first sensor in a predetermined time period after the moving object starts movement.

6. The error estimation method according to claim 1, further comprising: determining whether or not the moving object is advancing straight, wherein, in a case where it is determined that the moving object is not advancing straight between the timing at which the detection result in the second sensor is obtained and the next predetermined timing, the error is not estimated at the next predetermined timing.

7. An error estimation method comprising: calculating a plurality of coordinate transformation matrices between a first coordinate system with a first sensor mounted on a moving object as a reference and a second coordinate system with a second sensor mounted on the moving object as a reference, at a plurality of timings in periodical motion causing movement of the moving object; selecting the coordinate transformation matrix corresponding to a timing at which a detection result in the second sensor is obtained from among the plurality of coordinate transformation matrices in a case where a detection result in the second sensor receiving a signal from a positioning satellite is obtained, and converting one of an azimuth angle of the moving object based on a detection result in the first sensor and an azimuth angle of the moving object based on the detection result in the second sensor by using the selected coordinate transformation matrix; and estimating errors of indexes indicating a state of the moving object by using a difference between the converted azimuth angle and the other azimuth angle.

8. The error estimation method according to claim 7, wherein, at each of the plurality of timings, a velocity of the moving object in the first coordinate system is calculated by using the detection result in the first sensor, and the plurality of coordinate transformation matrices are calculated by calculating a coordinate transformation matrix on the basis of the calculated velocity.

9. The error estimation method according to claim 7, further comprising: detecting the cycle of the motion by using the detection result in the first sensor, wherein the coordinate transformation matrix is selected on the basis of a timing at which the detection result in the second sensor is obtained, and a timing which is closest to the timing at which the detection result in the second sensor is obtained and at which the cycle is detected.

10. The error estimation method according to claim 7, wherein the plurality of coordinate transformation matrices are calculated by using the detection result in the first sensor in a predetermined time period after the moving object starts movement.

11. The error estimation method according to claim 1, wherein the first sensor includes at least one of an acceleration sensor and an angular velocity sensor.

12. The error estimation method according to claim 1, wherein the first sensor is a geomagnetic sensor.

13. A motion analysis method comprising: estimating the errors by using the error estimation method according to claim 1; correcting the indexes by using the estimated errors; and analyzing motion of the moving object by using the corrected indexes.

14. An error estimation method using a first sensor and a second sensor, the method comprising: selecting one of a first mode and a second mode on the basis of a detection result in the second sensor; and estimating errors of indexes indicating a state of a moving object, wherein the estimating of the errors includes predicting the errors; correcting the predicted errors by using a first reference value based on the detection result in the second sensor and a first error among the errors without using a second reference value based on a detection result in the first sensor in a case where the first mode is selected; and correcting the predicted errors by using the second reference value and the first error without using the first reference value in a case where the second mode is selected.

15. The error estimation method according to claim 14, wherein the first sensor includes at least one of an acceleration sensor and an angular velocity sensor.

16. The error estimation method according to claim 14, wherein the second sensor is a sensor receiving a signal from a positioning satellite, or a geomagnetic sensor.

17. The error estimation method according to claim 14, wherein, in a case where the detection result in the second sensor is obtained, the first mode is selected, and, in a case where the detection result in the second sensor is not obtained, the second mode is selected.

18. The error estimation method according to claim 14, wherein, in a case where accuracy of the detection result in the second sensor is equal to or more than a standard value, the first mode is selected, and, in a case where the accuracy of the detection result in the second sensor is less than the standard value, the second mode is selected.

19. The error estimation method according to claim 14, further comprising: correcting the predicted errors by using a third reference value based on the detection result in the first sensor, and a second error which is different from the first error among the errors, in a case where the first mode is selected.

20. A motion analysis method comprising: estimating the errors by using the error estimation method according to claim 1; correcting the indexes by using the estimated errors; and analyzing motion of the moving object by using the corrected indexes.

Description

CROSS-REFERENCES TO RELATED APPLICATIONS

This application is a National Phase of International Application No. PCT/JP2015/001388, filed Mar. 12, 2015, which claims priority to Japanese Patent Application Nos. 2014-061549, filed Mar. 25, 2014, 2014-068226, filed Mar. 28, 2014 and 2014-068249, filed Mar. 28, 2014, the entireties of which are hereby incorporated by reference.

BACKGROUND

Technical Field

The present invention relates to an error estimation method, a motion analysis method, an error estimation apparatus, and a program.

Background Art

In one known technique, a sensor module incorporating a plurality of sensors is mounted on a walker, and the position or azimuth of the walker is estimated by using detection results from the sensor module. When a position or an azimuth is estimated with such sensors, the calculation result is frequently corrected by using the global positioning system (GPS). For example, PTL 1 proposes a method in which, during walking, the movement direction is determined and the position is calculated by using either the highest point reached by the human body or its landing point as a reference, and the position is then corrected by GPS.

CITATION LIST

Patent Literature

PTL 1: JP-A-2000-97722

SUMMARY OF INVENTION

Technical Problem

However, when the sensor module is mounted on the waist, the waist normally swings right and left during walking or running, so the measured azimuth angles are distributed symmetrically to the left and right of the advancing direction as the waist swings. In contrast, an azimuth angle calculated by using the GPS nearly matches the advancing direction of the walker, so the difference between the two azimuth angles changes from moment to moment. For this reason, even if the GPS-based azimuth angle is used as a reference for the measured azimuth angle, the error of the measured azimuth angle cannot be estimated accurately. To estimate the error accurately, it is necessary to calculate information on the deviation between the azimuth angle of the waist and the advancing direction, that is, a coordinate transformation matrix between a coordinate system with the sensor as a reference and a coordinate system with the walker as a reference. However, GPS positioning information is obtained at irregular timings, so the coordinate transformation matrix would have to be recalculated every time positioning information is obtained, on the basis of the most recent velocity information at that timing. This not only increases the processing load, but the velocity error also grows over time because of integration error, so the coordinate transformation matrix may not be calculated accurately. As a result, the estimation accuracy of the azimuth angle error decreases.
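The effect described above can be illustrated with a minimal Python sketch. It assumes, purely for illustration, that the heading of the waist-mounted sensor oscillates sinusoidally about the advancing direction once per gait cycle with a 10-degree amplitude, and that the GPS azimuth equals the advancing direction; the function names and parameters are hypothetical and angles are in radians. Within one cycle the difference between the two azimuths varies, whereas at the same phase of successive cycles it repeats.

```python
import math

def simulate_azimuths(advancing_dir, swing_amp, cycle_s, t):
    """Hypothetical model: the sensor heading swings sinusoidally around the
    advancing direction once per gait cycle; GPS reports the advancing direction."""
    sensor_az = advancing_dir + swing_amp * math.sin(2 * math.pi * t / cycle_s)
    gps_az = advancing_dir
    return sensor_az, gps_az

def azimuth_difference(t, advancing_dir=0.5, swing_amp=math.radians(10), cycle_s=1.0):
    """Difference between the sensor-based and GPS-based azimuths at time t."""
    sensor_az, gps_az = simulate_azimuths(advancing_dir, swing_amp, cycle_s, t)
    return sensor_az - gps_az

# Within one cycle, the difference keeps changing (about 0, +10, 0, -10 degrees)...
within_cycle = [azimuth_difference(t) for t in (0.0, 0.25, 0.5, 0.75)]
# ...but at the same phase of successive cycles, it is the same value.
same_phase = [azimuth_difference(0.25 + k * 1.0) for k in range(4)]
```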

The present invention has been made in consideration of the above-described problems. According to some aspects of the present invention, it is possible to provide an error estimation method, an error estimation apparatus, and a program capable of reducing a processing load and of estimating, with high accuracy, errors of indexes indicating a state of a moving object by using a signal from a positioning satellite, and a motion analysis method capable of analyzing a user's motion with high accuracy.

Solution to Problem

The present invention has been made in order to solve at least some of the above-described problems, and can be realized in the following aspects or application examples.

Application Example 1

An error estimation method according to this application example includes calculating a coordinate transformation matrix between a first coordinate system with a first sensor mounted on a moving object as a reference and a second coordinate system with the moving object as a reference, at a predetermined timing in a cycle of motion causing movement of the moving object; converting one of an azimuth angle of the moving object based on a detection result in the first sensor and an azimuth angle of the moving object based on a detection result in the second sensor by using the coordinate transformation matrix in a case where the detection result in the second sensor receiving a signal from a positioning satellite is obtained; and estimating errors of indexes indicating a state of the moving object by using a difference between the converted azimuth angle and the other azimuth angle at the predetermined timing after a timing at which the detection result in the second sensor is obtained.

The error estimation method according to the application example uses the fact that the difference between an azimuth angle based on the detection result in the first sensor and an azimuth angle based on the detection result in the second sensor is nearly constant at the predetermined timing in each cycle of motion causing movement of the moving object. The errors of the indexes indicating a state of the moving object are estimated by using the difference between the two azimuth angles at the predetermined timing that follows the timing at which the detection result in the second sensor is obtained, one of the two angles having been converted with the coordinate transformation matrix calculated at the predetermined timing. Therefore, according to the error estimation method of the application example, it is possible to estimate an error with high accuracy even if the detection result in the second sensor is obtained at irregular timings, and also to reduce a processing load, since the coordinate transformation matrix does not need to be calculated every time the detection result in the second sensor is obtained.
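The flow of Application Example 1 can be sketched in Python as follows. This is a minimal sketch under stated assumptions, not the patented implementation: the sensor is assumed level so the coordinate transformation matrix reduces to a single yaw offset `alpha`, angles are in radians measured counterclockwise, and the error estimator is a scalar Kalman-style update swapped in for illustration. The class, its methods, and the prior and measurement standard deviations are all hypothetical. GPS azimuths are only buffered when they arrive; the comparison happens at the next predetermined timing, using a matrix that was not recomputed for the GPS fix.

```python
import math

def wrap_angle(a):
    """Wrap an angle in radians to the interval [-pi, pi)."""
    return (a + math.pi) % (2 * math.pi) - math.pi

class AzimuthErrorEstimator:
    """Hypothetical sketch of Application Example 1 in the horizontal plane."""

    def __init__(self, prior_std=math.radians(10), meas_std=math.radians(2)):
        self.alpha = None           # yaw offset of the advancing direction in the sensor frame
        self.pending_gps_az = None  # most recent GPS azimuth not yet used
        self.err = 0.0              # estimated error of the sensor-based azimuth
        self.err_var = prior_std ** 2
        self.meas_var = meas_std ** 2

    def set_matrix(self, vx, vy):
        """Compute the yaw-only transformation from the sensor-frame velocity
        at a predetermined timing (e.g. a detected gait cycle)."""
        self.alpha = math.atan2(vy, vx)

    def on_gps(self, gps_azimuth):
        """GPS results arrive at irregular times; they are only buffered here."""
        self.pending_gps_az = gps_azimuth

    def on_predetermined_timing(self, sensor_azimuth):
        """At the next predetermined timing, compare the two azimuths and update
        the error estimate. Returns None when there is nothing to compare."""
        if self.alpha is None or self.pending_gps_az is None:
            return None
        # Convert the GPS azimuth (advancing direction) into a sensor azimuth.
        predicted = self.pending_gps_az - self.alpha
        innovation = wrap_angle(sensor_azimuth - predicted - self.err)
        # Scalar Kalman-style correction (an assumed estimator, for illustration).
        gain = self.err_var / (self.err_var + self.meas_var)
        self.err += gain * innovation
        self.err_var *= 1.0 - gain
        self.pending_gps_az = None  # each GPS result is used once
        return self.err
```

In use, `set_matrix` would be called at a predetermined timing, `on_gps` whenever a fix arrives, and `on_predetermined_timing` at each later predetermined timing; repeated fixes make `err` converge toward the constant offset between the sensor azimuth and the converted GPS azimuth.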

Application Example 2

In the error estimation method according to the application example, a velocity of the moving object in the first coordinate system may be calculated by using the detection result in the first sensor, and the coordinate transformation matrix may be calculated on the basis of the calculated velocity.

According to the error estimation method of the application example, it is possible to calculate a coordinate transformation matrix at a predetermined timing in a cycle of motion regardless of the presence or absence of a detection result in the second sensor.
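As a hedged illustration of Application Example 2, the sketch below builds a 3x3 matrix from the sensor-frame velocity so that the velocity maps onto the x-axis of the moving-object frame. It assumes that the sensor z-axis is already vertical, so only the yaw offset is resolved; the helper names are hypothetical, and a full implementation would also need to handle tilt.

```python
import math

def transformation_matrix_from_velocity(vx, vy):
    """Hypothetical helper: 3x3 matrix taking sensor-frame vectors to a
    moving-object frame whose x-axis is the horizontal advancing direction.
    Assumes the sensor z-axis is vertical, so only yaw is resolved."""
    alpha = math.atan2(vy, vx)  # advancing direction seen from the sensor x-axis
    c, s = math.cos(alpha), math.sin(alpha)
    return [[c, s, 0.0],
            [-s, c, 0.0],
            [0.0, 0.0, 1.0]]

def mat_vec(matrix, vec):
    """Multiply a 3x3 matrix (list of rows) by a 3-vector."""
    return [sum(m * v for m, v in zip(row, vec)) for row in matrix]

# Velocity integrated from the first sensor, expressed in the sensor frame.
v_sensor = [1.2, 0.5, 0.0]
C = transformation_matrix_from_velocity(v_sensor[0], v_sensor[1])
v_object = mat_vec(C, v_sensor)  # about [1.3, 0.0, 0.0]: all speed on the advancing axis
```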

Application Example 3

In the error estimation method according to the application example, the predetermined timing may be a timing at which the detection result in the first sensor satisfies a predetermined condition.

According to the error estimation method of the application example, a timing at which the detection result in the first sensor satisfies a predetermined condition is used as a reference, and thus it is possible to estimate an error with high accuracy at a timing corresponding to a timing at which the coordinate transformation matrix is calculated.
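One possible "predetermined condition" can be sketched as follows. The condition used here, the vertical acceleration rising through a threshold, is an assumption chosen for illustration; the source does not specify the condition, and the function name and sample values are hypothetical.

```python
def condition_timings(samples, threshold):
    """Return sample indices where the (hypothetical) condition becomes true:
    the vertical acceleration rises through `threshold`."""
    hits = []
    for i in range(1, len(samples)):
        if samples[i - 1] < threshold <= samples[i]:
            hits.append(i)
    return hits

acc_z = [0.0, 0.8, 1.6, 0.9, -0.4, 0.7, 1.9, 1.0, -0.2]
print(condition_timings(acc_z, threshold=1.5))  # [2, 6]
```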

Application Example 4

The error estimation method according to the application example may further include detecting the cycle by using the detection result in the first sensor, and the predetermined timing may be a timing at which the cycle is detected.

According to the error estimation method of the application example, the coordinate transformation matrix is calculated at a timing at which the cycle of the motion is detected, and an error can then be estimated with high accuracy at a later timing at which the cycle of the motion is detected.
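A cycle detector for Application Example 4 could take many forms. The minimal sketch below assumes, for illustration only, that a cycle is flagged at a local maximum of the vertical acceleration above a threshold, with a minimum interval between detections to reject double peaks; the function name and parameters are hypothetical.

```python
def detect_cycles(acc_z, dt, threshold, min_interval):
    """Hypothetical cycle detector: flag a cycle at a local maximum of the
    vertical acceleration that exceeds `threshold`, provided at least
    `min_interval` seconds have passed since the previous detection.
    Returns detection times in seconds (sample index * dt)."""
    times = []
    last = None
    for i in range(1, len(acc_z) - 1):
        is_peak = acc_z[i - 1] < acc_z[i] >= acc_z[i + 1]
        t = i * dt
        if is_peak and acc_z[i] > threshold and (last is None or t - last >= min_interval):
            times.append(t)
            last = t
    return times

# The peak at index 3 is rejected because it follows the first one too closely.
cycles = detect_cycles([0, 2, 0, 2.5, 0, 0, 0, 0, 3, 0], dt=0.1, threshold=1.0, min_interval=0.3)
```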

Application Example 5

In the error estimation method according to the application example, the coordinate transformation matrix may be calculated by using the detection result in the first sensor in a predetermined time period after the moving object starts movement.

According to the error estimation method of the application example, since the coordinate transformation matrix is calculated soon after the moving object starts movement, it is possible to reduce an error of the coordinate transformation matrix caused by integration error or the like, and thus to improve error estimation accuracy.
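A hedged sketch of Application Example 5: only the cycles detected within an initial window after the start of movement contribute to the yaw offset, while integrated velocity has had little time to drift. Averaging those offsets with a circular mean is an assumption added for illustration, as are the function name and parameters.

```python
import math

def initial_yaw_offset(cycle_velocities, cycle_times, start_time, window_s):
    """Hypothetical: circular mean of alpha = atan2(vy, vx) over the cycles
    detected within `window_s` seconds after the start of movement."""
    sin_sum = cos_sum = 0.0
    for (vx, vy), t in zip(cycle_velocities, cycle_times):
        if t - start_time <= window_s:
            a = math.atan2(vy, vx)
            sin_sum += math.sin(a)
            cos_sum += math.cos(a)
    if sin_sum == 0.0 and cos_sum == 0.0:
        return None  # no cycle inside the window
    return math.atan2(sin_sum, cos_sum)  # circular mean avoids wrap-around issues

# The late cycle at 20 s (outside a 10 s window) is ignored.
alpha0 = initial_yaw_offset([(1, 0.1), (1, 0.1), (1, 0.1), (1, 5)],
                            [0.5, 1.0, 1.5, 20.0], start_time=0.0, window_s=10.0)
```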

Application Example 6

The error estimation method according to the application example may further include determining whether or not the moving object is advancing straight, and, in a case where it is determined that the moving object is not advancing straight between the timing at which the detection result in the second sensor is obtained and the next predetermined timing, the error may not be estimated at the next predetermined timing.

According to the error estimation method of the application example, if the moving object changes its advancing direction between the time when a detection result in the second sensor is obtained and the next predetermined timing, the difference between an azimuth angle based on a detection result in the first sensor and an azimuth angle based on a detection result in the second sensor differs from the difference at the time when the coordinate transformation matrix was calculated. Taking this into account, the error is not estimated in this case, which suppresses a reduction in error estimation accuracy.
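The straight-advance determination of Application Example 6 might be sketched as below. As an assumption for illustration, it compares the sensor-based azimuths at the predetermined timing before the GPS fix and at the next predetermined timing; because both lie at the same phase of the gait cycle, the waist swing largely cancels. The threshold of 5 degrees and the names are hypothetical.

```python
import math

def wrap_angle(a):
    """Wrap an angle in radians to the interval [-pi, pi)."""
    return (a + math.pi) % (2 * math.pi) - math.pi

def should_estimate(prev_timing_az, next_timing_az, max_turn=math.radians(5)):
    """Hypothetical straight-line check: estimate the error at the next
    predetermined timing only if the same-phase azimuth change is small."""
    turn = wrap_angle(next_timing_az - prev_timing_az)
    return abs(turn) <= max_turn
```

Wrapping the change keeps a small turn across the +/-pi boundary from being mistaken for a full reversal.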

Application Example 7

An error estimation method according to this application example includes calculating a plurality of coordinate transformation matrices between a first coordinate system with a first sensor mounted on a moving object as a reference and a second coordinate system with the moving object as a reference, at a plurality of timings in periodical motion causing movement of the moving object; selecting the coordinate transformation matrix corresponding to a timing at which a detection result in the second sensor is obtained from among the plurality of coordinate transformation matrices in a case where a detection result in the second sensor receiving a signal from a positioning satellite is obtained, and converting one of an azimuth angle of the moving object based on a detection result in the first sensor and an azimuth angle of the moving object based on the detection result in the second sensor by using the selected coordinate transformation matrix; and estimating errors of indexes indicating a state of the moving object by using a difference between the converted azimuth angle and the other azimuth angle.

According to the error estimation method of the application example, a coordinate transformation matrix corresponding to a timing at which a detection result in the second sensor is obtained is selected from among a plurality of coordinate transformation matrices calculated at a plurality of timings in a cycle of motion causing movement of the moving object, and errors of indexes indicating a state of the moving object are estimated by using a difference between an azimuth angle based on the detection result in the first sensor and an azimuth angle based on the detection result in the second sensor, calculated by using the selected coordinate transformation matrix. Therefore, according to the error estimation method of the application example, it is possible to estimate an error with high accuracy by using a coordinate transformation matrix corresponding to a timing at which the detection result in the second sensor is obtained, and also to reduce a processing load since the coordinate transformation matrix is not required to be calculated whenever the detection result in the second sensor is obtained.
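The conversion step of Application Example 7 can be sketched with the matrices stored as yaw angles, one per timing in the cycle. This assumes a level sensor and counterclockwise angles in radians; `alpha_table` and `azimuth_residual` are hypothetical names. The returned wrapped difference is the quantity an error estimator would consume.

```python
import math

def wrap_angle(a):
    """Wrap an angle in radians to the interval [-pi, pi)."""
    return (a + math.pi) % (2 * math.pi) - math.pi

def azimuth_residual(alpha_table, index, gps_azimuth, sensor_azimuth):
    """Hypothetical: `alpha_table[index]` is the yaw-only transformation
    precomputed for the index-th timing in the cycle. The GPS azimuth is
    converted into the sensor frame and compared with the sensor azimuth."""
    alpha = alpha_table[index]
    converted = gps_azimuth - alpha
    return wrap_angle(sensor_azimuth - converted)

residual = azimuth_residual([0.0, 0.1, 0.2], 2, gps_azimuth=1.0, sensor_azimuth=0.83)
```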

Application Example 8

In the error estimation method according to the application example, at each of the plurality of timings, a velocity of the moving object in the first coordinate system may be calculated by using the detection result in the first sensor, and the plurality of coordinate transformation matrices may be calculated by calculating a coordinate transformation matrix on the basis of the calculated velocity.

According to the error estimation method of the application example, it is possible to calculate a coordinate transformation matrix at a plurality of timings in a cycle of motion regardless of the presence or absence of a detection result in the second sensor.
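For Application Example 8, a table of transformations can be built from velocities sampled at successive timings within one cycle. In this minimal sketch, each transformation is reduced to a yaw angle under the same level-sensor assumption as above; the input layout and the function name are hypothetical.

```python
import math

def build_alpha_table(velocity_samples):
    """Hypothetical: `velocity_samples[k]` is the sensor-frame horizontal
    velocity (vx, vy) at the k-th timing after a detected cycle start.
    Each entry becomes one yaw-only transformation, stored as an angle."""
    return [math.atan2(vy, vx) for vx, vy in velocity_samples]

table = build_alpha_table([(1.0, 0.0), (1.0, 1.0), (0.0, 1.0)])  # about [0, pi/4, pi/2]
```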

Application Example 9

The error estimation method according to the application example may further include detecting the cycle of the motion by using the detection result in the first sensor, and the coordinate transformation matrix may be selected on the basis of a timing at which the detection result in the second sensor is obtained, and a timing which is closest to the timing at which the detection result in the second sensor is obtained and at which the cycle is detected.

According to the error estimation method of the application example, it is possible to select an appropriate coordinate transformation matrix on the basis of a timing at which a detection result in the second sensor is obtained and a timing at which a cycle of the motion has been detected most recently.
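The selection rule of Application Example 9 could be implemented as below, assuming the table entries are spaced `sample_dt` seconds apart starting at the most recent cycle detection. Rounding to the nearest entry and clamping to the table bounds are illustrative choices; the names are hypothetical.

```python
def select_index(t_gps, t_last_cycle, sample_dt, table_len):
    """Hypothetical: pick the table entry whose phase best matches the time
    elapsed since the most recent cycle detection, clamped to the table."""
    k = round((t_gps - t_last_cycle) / sample_dt)
    return max(0, min(table_len - 1, k))
```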

Application Example 10

In the error estimation method according to the application example, the plurality of coordinate transformation matrices may be calculated by using the detection result in the first sensor in a predetermined time period after the moving object starts movement.

According to the error estimation method of the application example, since the plurality of coordinate transformation matrices are calculated soon after the moving object starts movement, it is possible to reduce errors of the coordinate transformation matrices caused by integration error or the like, and thus to improve error estimation accuracy.

Application Example 11

In the error estimation method according to the application example, the first sensor may include at least one of an acceleration sensor and an angular velocity sensor.

According to the error estimation method of the application example, it is possible to estimate errors of indexes indicating a state of the moving object by using a difference between an azimuth angle based on a detection result in the acceleration sensor or the angular velocity sensor and an azimuth angle based on a detection result in the second sensor.
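When the first sensor is an angular velocity sensor, its azimuth is typically obtained by integrating the yaw rate, so a constant bias turns into a steadily growing azimuth error; this is the kind of error the comparison with the second sensor is meant to expose. The sketch assumes a vertical gyro z-axis and fixed-step Euler integration; the name is hypothetical.

```python
def integrate_yaw(yaw0, yaw_rates, dt):
    """Hypothetical: azimuth from an angular velocity sensor by integrating
    the yaw rate (rad/s) with a fixed time step `dt` (s)."""
    yaw = yaw0
    for w in yaw_rates:
        yaw += w * dt
    return yaw

# A 0.01 rad/s bias over 100 s accumulates about 1 rad of azimuth error.
drift = integrate_yaw(0.0, [0.01] * 1000, 0.1)
```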

Application Example 12

In the error estimation method according to the application example, the first sensor may be a geomagnetic sensor.

According to the error estimation method of the application example, it is possible to estimate errors of indexes indicating a state of the moving object by using a difference between an azimuth angle based on a detection result in the geomagnetic sensor and an azimuth angle based on a detection result in the second sensor.
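A geomagnetic azimuth can be sketched as follows, assuming a level sensor with x forward and y to the right, azimuth measured clockwise from magnetic north, and no tilt compensation or declination correction. The function name is hypothetical.

```python
import math

def magnetic_azimuth(mx, my):
    """Hypothetical: azimuth of the sensor x-axis in [0, 2*pi), clockwise from
    magnetic north, from the horizontal field components (mx, my)."""
    return math.atan2(-my, mx) % (2 * math.pi)
```

With x pointing east, north lies toward -y, so a reading of (0, -1) yields pi/2 (east).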

Application Example 13

A motion analysis method according to this application example includes estimating the errors by using any one of the error estimation methods; correcting the indexes by using the estimated errors; and analyzing motion of the moving object by using the corrected indexes.

According to the motion analysis method of the application example, it is possible to analyze motion of the moving object with high accuracy by using indexes that have been corrected with high accuracy using the errors of the indexes indicating the state of the moving object, those errors being estimated by the error estimation methods according to the application examples.
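The correct-then-analyze sequence of Application Example 13 is sketched below. The index names, the subtraction model of correction, and the toy analysis step (ground speed) are assumptions for illustration only.

```python
import math

def correct_indexes(indexes, errors):
    """Hypothetical: subtract each estimated error from the index of the same
    name; indexes without an estimate are passed through unchanged."""
    return {name: value - errors.get(name, 0.0) for name, value in indexes.items()}

def ground_speed(idx):
    """Toy analysis step: horizontal speed from corrected north/east velocity."""
    return math.hypot(idx["vel_n"], idx["vel_e"])

measured = {"azimuth": 1.05, "vel_n": 2.1, "vel_e": 0.3}
estimated_errors = {"azimuth": 0.05, "vel_n": 0.1}
corrected = correct_indexes(measured, estimated_errors)
speed = ground_speed(corrected)
```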

Application Example 14

An error estimation apparatus according to this application example includes a coordinate transformation matrix calculation portion that calculates a coordinate transformation matrix between a first coordinate system with a first sensor mounted on a moving object as a reference and a second coordinate system with the moving object as a reference, at a predetermined timing in a cycle of motion causing movement of the moving object; an azimuth angle transformation portion that converts one of an azimuth angle of the moving object based on a detection result in the first sensor and an azimuth angle of the moving object based on a detection result in the second sensor by using the coordinate transformation matrix in a case where the detection result in the second sensor receiving a signal from a positioning satellite is obtained; and an error estimation portion that estimates errors of indexes indicating a state of the moving object by using a difference between the converted azimuth angle and the other azimuth angle at the predetermined timing after a timing at which the detection result in the second sensor is obtained.

The error estimation apparatus according to the application example uses the fact that the difference between an azimuth angle based on the detection result in the first sensor and an azimuth angle based on the detection result in the second sensor is nearly constant at the predetermined timing in each cycle of motion causing movement of the moving object. The errors of the indexes indicating a state of the moving object are estimated by using the difference between the two azimuth angles at the predetermined timing that follows the timing at which the detection result in the second sensor is obtained, one of the two angles having been converted with the coordinate transformation matrix calculated at the predetermined timing. Therefore, according to the error estimation apparatus of the application example, it is possible to estimate an error with high accuracy even if the detection result in the second sensor is obtained at irregular timings, and also to reduce a processing load, since the coordinate transformation matrix does not need to be calculated every time the detection result in the second sensor is obtained.

Application Example 15

An error estimation apparatus according to this application example includes a coordinate transformation matrix calculation portion that calculates a plurality of coordinate transformation matrices between a first coordinate system with a first sensor mounted on a moving object as a reference and a second coordinate system with the moving object as a reference, at a plurality of timings in periodical motion causing movement of the moving object; an azimuth angle transformation portion that selects the coordinate transformation matrix corresponding to a timing at which a detection result in the second sensor is obtained from among the plurality of coordinate transformation matrices in a case where a detection result in the second sensor receiving a signal from a positioning satellite is obtained, and converts one of an azimuth angle of the moving object based on a detection result in the first sensor and an azimuth angle of the moving object based on the detection result in the second sensor by using the selected coordinate transformation matrix; and an error estimation portion that estimates errors of indexes indicating a state of the moving object by using a difference between the converted azimuth angle and the other azimuth angle.

According to the error estimation apparatus of the application example, a coordinate transformation matrix corresponding to a timing at which a detection result in the second sensor is obtained is selected from among a plurality of coordinate transformation matrices calculated at a plurality of timings in a cycle of motion causing movement of the moving object, and errors of indexes indicating a state of the moving object are estimated by using a difference between an azimuth angle based on the detection result in the first sensor and an azimuth angle based on the detection result in the second sensor, calculated by using the selected coordinate transformation matrix. Therefore, according to the error estimation apparatus of the application example, it is possible to estimate an error with high accuracy by using a coordinate transformation matrix corresponding to a timing at which the detection result in the second sensor is obtained, and also to reduce a processing load since the coordinate transformation matrix is not required to be calculated whenever the detection result in the second sensor is obtained.

Application Example 16

A program according to this application example causes a computer to execute calculating a coordinate transformation matrix between a first coordinate system with a first sensor mounted on a moving object as a reference and a second coordinate system with the moving object as a reference, at a predetermined timing in a cycle of motion causing movement of the moving object; converting one of an azimuth angle of the moving object based on a detection result in the first sensor and an azimuth angle of the moving object based on a detection result in the second sensor by using the coordinate transformation matrix in a case where the detection result in the second sensor receiving a signal from a positioning satellite is obtained; and estimating errors of indexes indicating a state of the moving object by using a difference between the converted azimuth angle and the other azimuth angle at the predetermined timing after a timing at which the detection result in the second sensor is obtained.

The program according to the application example uses the fact that the difference between an azimuth angle based on the detection result in the first sensor and an azimuth angle based on the detection result in the second sensor is nearly constant at the predetermined timing in each cycle of motion causing movement of the moving object. The errors of the indexes indicating a state of the moving object are estimated by using the difference between the two azimuth angles at the predetermined timing that follows the timing at which the detection result in the second sensor is obtained, one of the two angles having been converted with the coordinate transformation matrix calculated at the predetermined timing. Therefore, according to the program of the application example, it is possible to estimate an error with high accuracy even if the detection result in the second sensor is obtained at irregular timings, and also to reduce a processing load, since the coordinate transformation matrix does not need to be calculated every time the detection result in the second sensor is obtained.

Application Example 17

A program according to this application example causes a computer to execute calculating a plurality of coordinate transformation matrices between a first coordinate system with a first sensor mounted on a moving object as a reference and a second coordinate system with the moving object as a reference, at a plurality of timings in periodical motion causing movement of the moving object; selecting the coordinate transformation matrix corresponding to a timing at which a detection result in the second sensor is obtained from among the plurality of coordinate transformation matrices in a case where a detection result in the second sensor receiving a signal from a positioning satellite is obtained, and converting one of an azimuth angle of the moving object based on a detection result in the first sensor and an azimuth angle of the moving object based on the detection result in the second sensor by using the selected coordinate transformation matrix; and estimating errors of indexes indicating a state of the moving object by using a difference between the converted azimuth angle and the other azimuth angle.

According to the program of the application example, a coordinate transformation matrix corresponding to a timing at which a detection result in the second sensor is obtained is selected from among a plurality of coordinate transformation matrices calculated at a plurality of timings in a cycle of motion causing movement of the moving object, and errors of indexes indicating a state of the moving object are estimated by using a difference between an azimuth angle based on the detection result in the first sensor and an azimuth angle based on the detection result in the second sensor, calculated by using the selected coordinate transformation matrix. Therefore, according to the program of the application example, it is possible to estimate an error with high accuracy by using a coordinate transformation matrix corresponding to a timing at which the detection result in the second sensor is obtained, and also to reduce a processing load since the coordinate transformation matrix is not required to be calculated whenever the detection result in the second sensor is obtained.
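The selection step can be sketched as a table lookup. This minimal Python sketch makes two assumptions. First, the matrices are stored in a list sorted by their time offset from the start of the gait cycle. Second, "corresponding" is taken to mean the entry whose time offset is nearest to that of the GPS result. The function name and the nearest-neighbour rule are illustrative, not taken from the source.

```python
import bisect
from typing import Any, List, Tuple


def select_matrix(table: List[Tuple[float, Any]], t_rel: float) -> Any:
    """Return the matrix whose cycle-relative time is nearest to t_rel.

    table: (time offset from the start of the gait cycle in seconds, matrix)
           pairs sorted by time offset; the matrix can be any object.
    t_rel: time offset of the GPS result within the current gait cycle.
    """
    if not table:
        raise ValueError("matrix table is empty")
    times = [t for t, _ in table]
    i = bisect.bisect_left(times, t_rel)
    if i == 0:
        return table[0][1]
    if i == len(times):
        return table[-1][1]
    (t_before, m_before), (t_after, m_after) = table[i - 1], table[i]
    # Prefer the earlier entry on an exact tie.
    return m_before if t_rel - t_before <= t_after - t_rel else m_after
```

Looking up a precomputed matrix replaces computing a new matrix each time a GPS result arrives.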

Application Example 18

An error estimation method according to this application example is an error estimation method using a first sensor and a second sensor, the method including selecting one of a first mode and a second mode on the basis of a detection result in the second sensor; and estimating errors of indexes indicating a state of a moving object, in which the estimating of the errors includes predicting the errors; correcting the predicted errors by using a first reference value based on the detection result in the second sensor and a first error among the errors without using a second reference value based on a detection result in the first sensor in a case where the first mode is selected; and correcting the predicted errors by using the second reference value and the first error without using the first reference value in a case where the second mode is selected.

According to the error estimation method of the application example, one of two different modes is selected in order to correct errors of indexes indicating a state of the moving object by using different kinds of reference values and the same kind of error. Correction in the first mode and correction in the second mode are not duplicated, and thus it is possible to estimate the errors with high accuracy.
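The mutually exclusive correction can be illustrated with a one-dimensional Kalman measurement update. In this Python sketch, the error state is a single scalar, for example one component of a velocity error. The observation is the difference between the computed index and whichever reference value the selected mode allows. The noise parameters `r_first` and `r_second` and the function names are hypothetical, and the actual filter is multi-dimensional.

```python
def kalman_update(x: float, p: float, z: float, r: float, h: float = 1.0):
    """Scalar Kalman measurement update; returns (state, variance)."""
    s = h * p * h + r          # innovation variance
    k = p * h / s              # Kalman gain
    x_new = x + k * (z - h * x)
    p_new = (1.0 - k * h) * p
    return x_new, p_new


def correct_error(x, p, mode, computed, first_ref, second_ref,
                  r_first, r_second):
    """Correct the predicted error x with exactly one reference value.

    mode "first": use only the second-sensor-based reference (first_ref).
    mode "second": use only the first-sensor-based reference (second_ref).
    The same error state is observed in both modes, but never twice in one step.
    """
    if mode == "first":
        z, r = computed - first_ref, r_first
    elif mode == "second":
        z, r = computed - second_ref, r_second
    else:
        raise ValueError(f"unknown mode: {mode!r}")
    return kalman_update(x, p, z, r)
```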

Application Example 19

In the error estimation method according to the application example, the first sensor may include at least one of an acceleration sensor and an angular velocity sensor.

According to the error estimation method of the application example, in the second mode, it is possible to estimate errors by using a reference value based on a detection result in the acceleration sensor or the angular velocity sensor.

Application Example 20

In the error estimation method according to the application example, the second sensor may be a sensor receiving a signal from a positioning satellite, or a geomagnetic sensor.

According to the error estimation method of the application example, in the first mode, it is possible to estimate errors by using a reference value based on a detection result in the sensor receiving a signal from a positioning satellite, or the geomagnetic sensor.

Application Example 21

In the error estimation method according to the application example, in a case where the detection result in the second sensor is obtained, the first mode may be selected, and, in a case where the detection result in the second sensor is not obtained, the second mode may be selected.

According to the error estimation method of the application example, in a case where the detection result in the second sensor is obtained, the first mode can be selected.

Application Example 22

In the error estimation method according to the application example, in a case where the accuracy of the detection result in the second sensor is equal to or more than a standard value, the first mode may be selected, and, in a case where the accuracy of the detection result in the second sensor is less than the standard value, the second mode may be selected.

According to the error estimation method of the application example, in a case where the accuracy of the reference value based on the detection result in the second sensor is less than the standard value, the second mode is selected, and thus it is possible to suppress a reduction in error estimation accuracy.
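Application Examples 21 and 22 reduce to a small decision rule. This Python sketch assumes that "accuracy" is a score for which larger means better. A dilution of precision (DOP) value grows as accuracy worsens, so it would have to be inverted or compared in the opposite direction. The function name and mode strings are hypothetical.

```python
from typing import Optional

FIRST_MODE = "first"    # correct using the second-sensor-based reference
SECOND_MODE = "second"  # correct using the first-sensor-based reference


def select_mode(accuracy: Optional[float], standard_value: float) -> str:
    """Choose the correction mode from the second sensor's result.

    accuracy: accuracy score of the second-sensor result (larger is better),
              or None if no result was obtained.
    """
    if accuracy is None:
        return SECOND_MODE          # no detection result (Application Example 21)
    if accuracy >= standard_value:
        return FIRST_MODE           # accurate enough (Application Example 22)
    return SECOND_MODE
```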

Application Example 23

The error estimation method according to the application example may further include correcting the predicted errors by using a third reference value based on the detection result in the first sensor, and a second error which is different from the first error among the errors, in a case where the first mode is selected.

According to the error estimation method of the application example, in the first mode, it is possible to perform error estimation using the first reference value based on a detection result in the second sensor and the third reference value based on a detection result in the first sensor, and thus to further improve error estimation accuracy in the first mode.

Application Example 24

A motion analysis method according to this application example includes estimating the errors by using any one of the error estimation methods; correcting the indexes by using the estimated errors; and analyzing motion of the moving object by using the corrected indexes.

According to the motion analysis method of the application example, it is possible to analyze the motion of the moving object with high accuracy. This is because the indexes indicating a state of the moving object are corrected with high accuracy by using the errors of those indexes, as estimated by the error estimation methods according to the application examples.

Application Example 25

An error estimation apparatus according to this application example includes a mode selection portion that selects one of a first mode and a second mode on the basis of a detection result in a second sensor; and an error estimation portion that estimates errors of indexes indicating a state of a moving object, in which the error estimation portion predicts the errors, corrects the predicted errors by using a first reference value based on the detection result in the second sensor and a first error among the errors without using a second reference value based on a detection result in the first sensor in a case where the first mode is selected, and corrects the predicted errors by using the second reference value and the first error without using the first reference value in a case where the second mode is selected.

According to the error estimation apparatus of the application example, one of two different modes is selected in order to correct errors of indexes indicating a state of the moving object by using different kinds of reference values and the same kind of error. Correction in the first mode and correction in the second mode are not duplicated, and thus it is possible to estimate the errors with high accuracy.

Application Example 26

A program according to this application example causes a computer to execute selecting one of a first mode and a second mode on the basis of a detection result in a second sensor; and estimating errors of indexes indicating a state of a moving object, in which the estimating of the errors includes predicting the errors; correcting the predicted errors by using a first reference value based on the detection result in the second sensor and a first error among the errors without using a second reference value based on a detection result in the first sensor in a case where the first mode is selected; and correcting the predicted errors by using the second reference value and the first error without using the first reference value in a case where the second mode is selected.

According to the program of the application example, one of two different modes is selected in order to correct errors of indexes indicating a state of the moving object by using different kinds of reference values and the same kind of error. Correction in the first mode and correction in the second mode are not duplicated, and thus it is possible to estimate the errors with high accuracy.

BRIEF DESCRIPTION OF DRAWINGS

FIG. 1 is a diagram illustrating an outline of a motion analysis system according to a first embodiment.

FIG. 2 is a functional block diagram illustrating configuration examples of a motion analysis apparatus and a display apparatus.

FIG. 3 is a diagram illustrating a configuration example of a sensing data table.

FIG. 4 is a diagram illustrating a configuration example of a GPS data table.

FIG. 5 is a diagram illustrating a configuration example of a calculated data table.

FIG. 6 is a functional block diagram illustrating a configuration example of a processing unit of the motion analysis apparatus.

FIG. 7 is a diagram illustrating an attitude during a user's walking.

FIG. 8 is a diagram illustrating examples of an azimuth angle of an inertial measurement unit and an azimuth angle of an advancing direction in a case where the user performs a walking action (advances straight).

FIG. 9 is a diagram illustrating an example of a combined acceleration of three-axis accelerations detected by the inertial measurement unit during the user's walking.

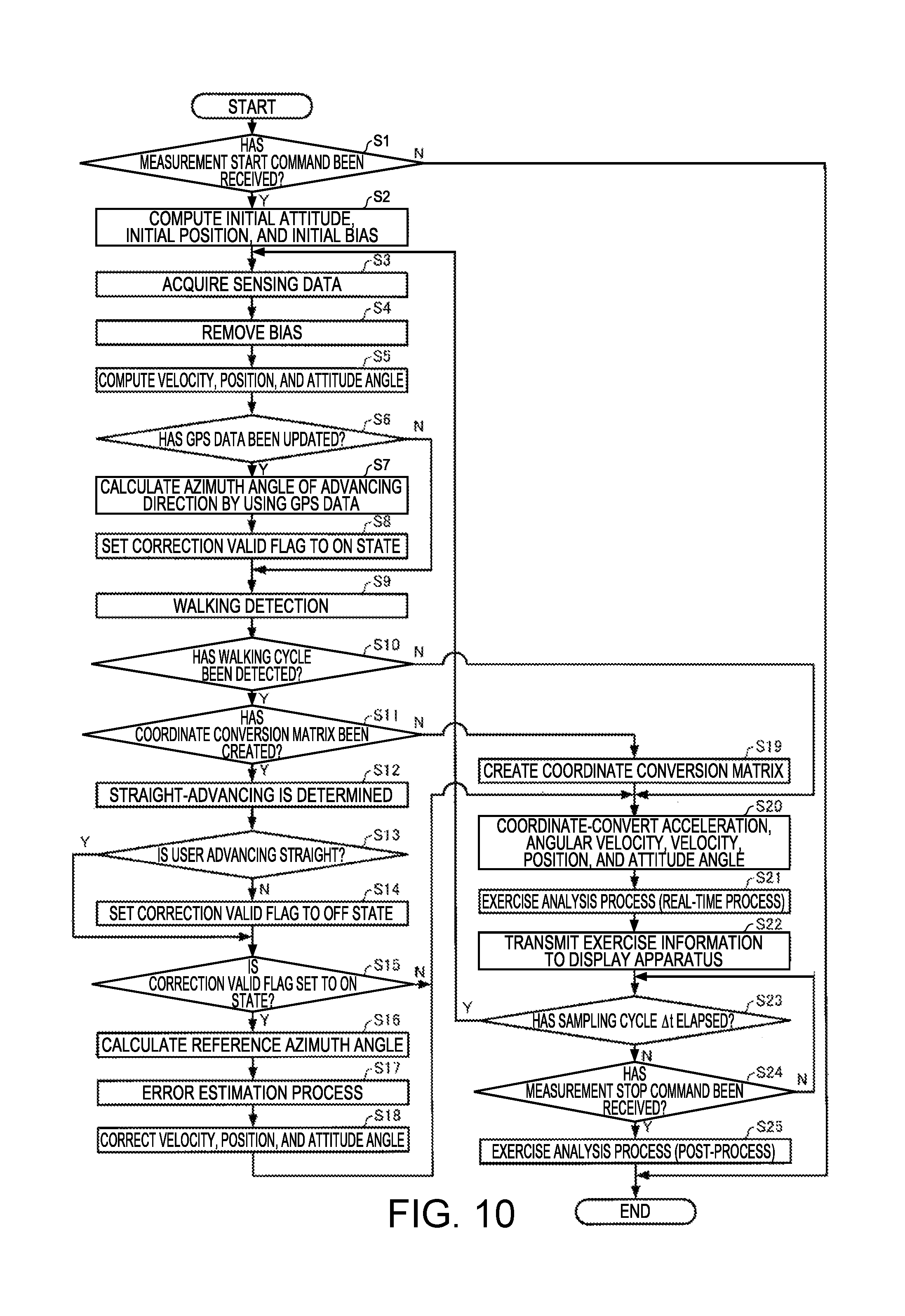

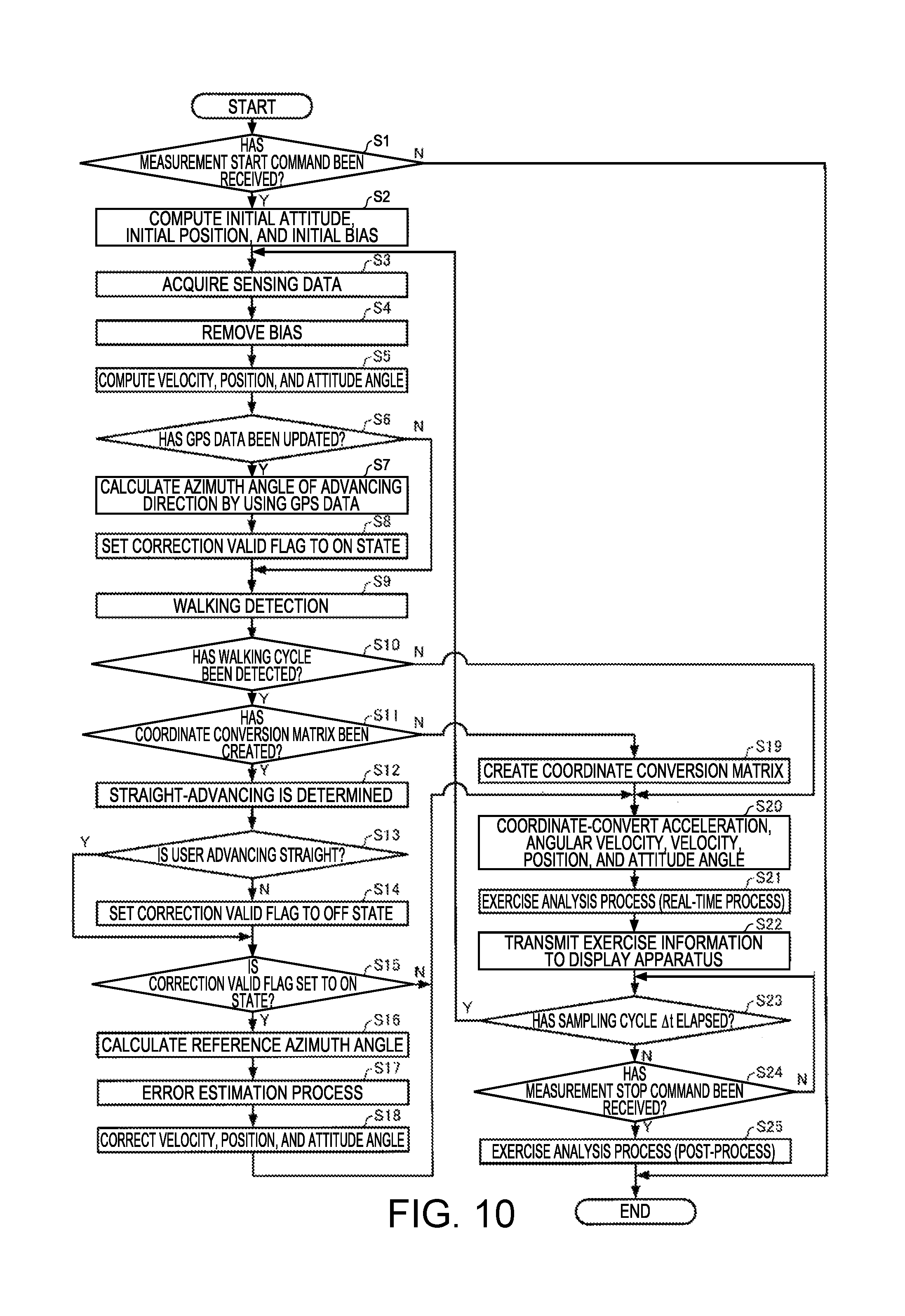

FIG. 10 is a flowchart illustrating examples of procedures of a motion analysis process.

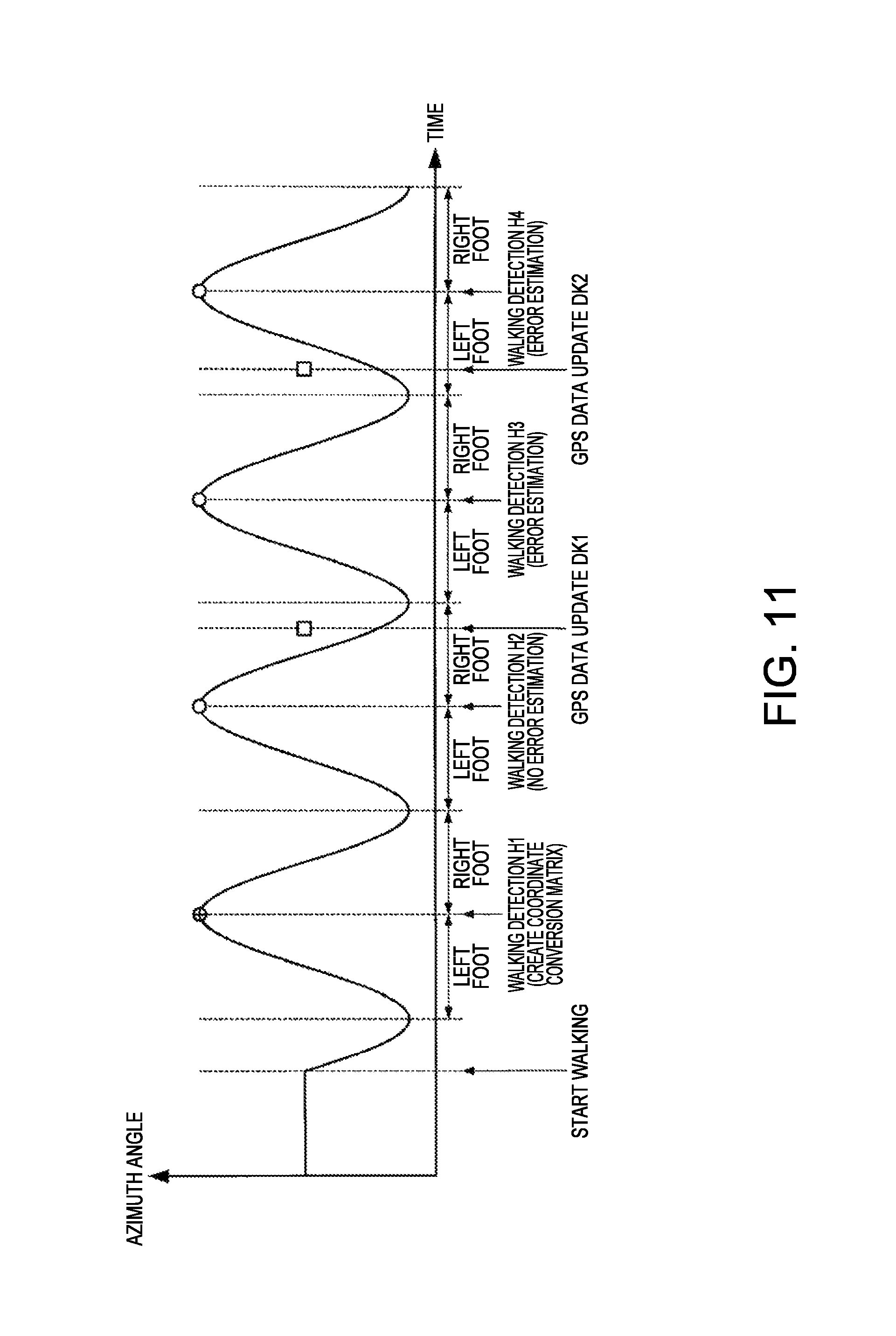

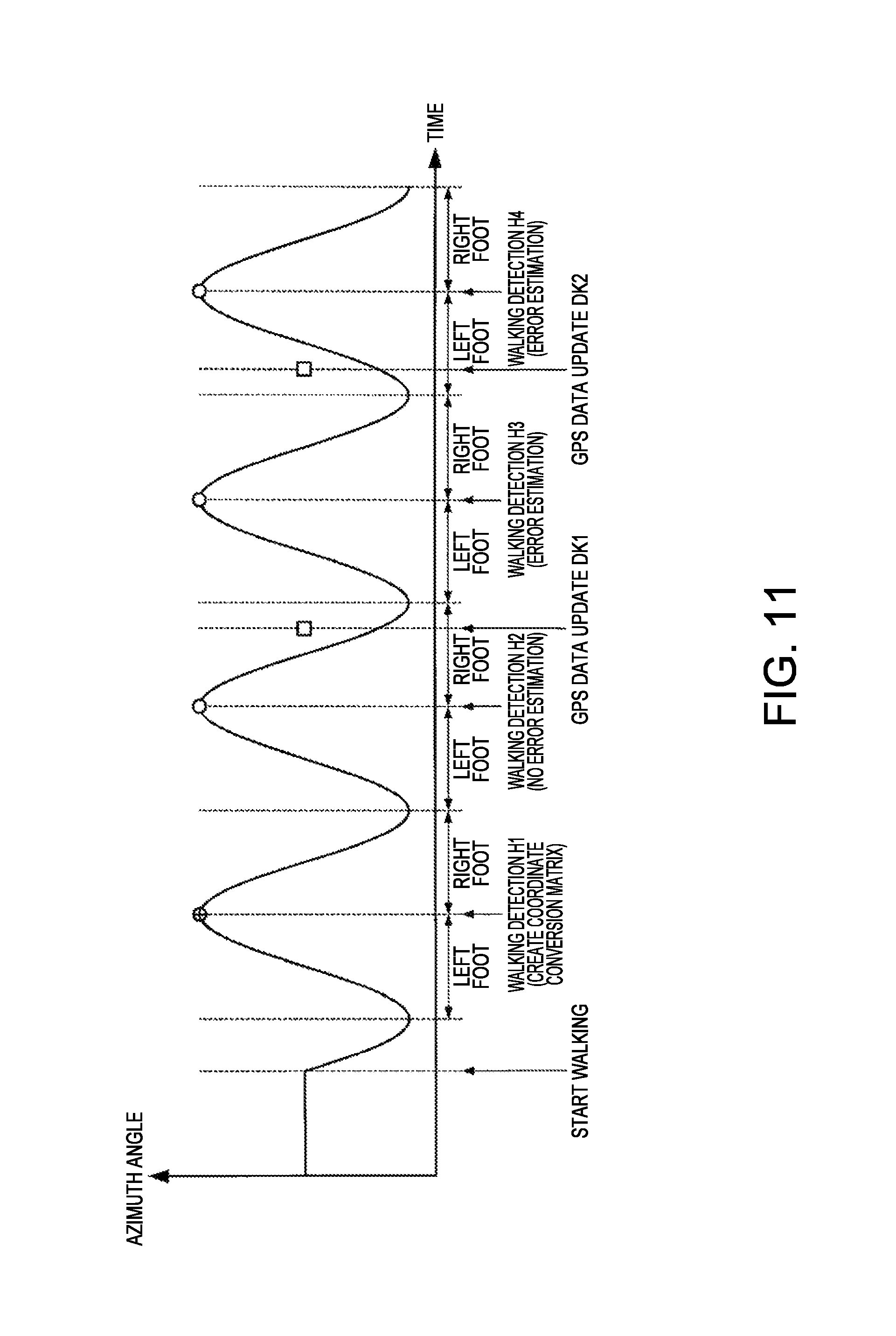

FIG. 11 is a diagram illustrating an example of a relationship between an azimuth angle of the inertial measurement unit and a timing of processing performed by the processing unit in a case where the user is walking straight.

FIG. 12 is a diagram illustrating an outline of a motion analysis system according to a second embodiment.

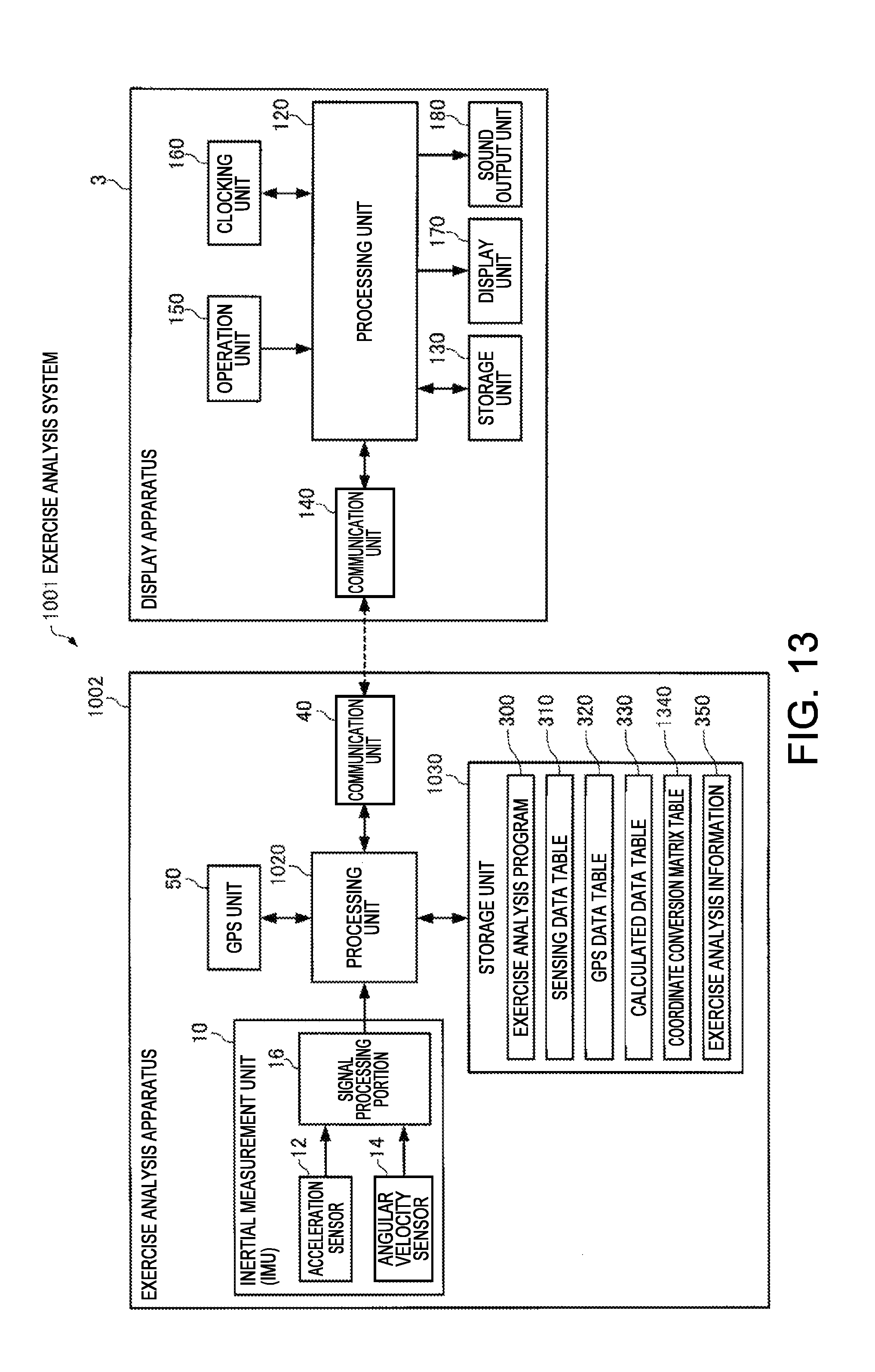

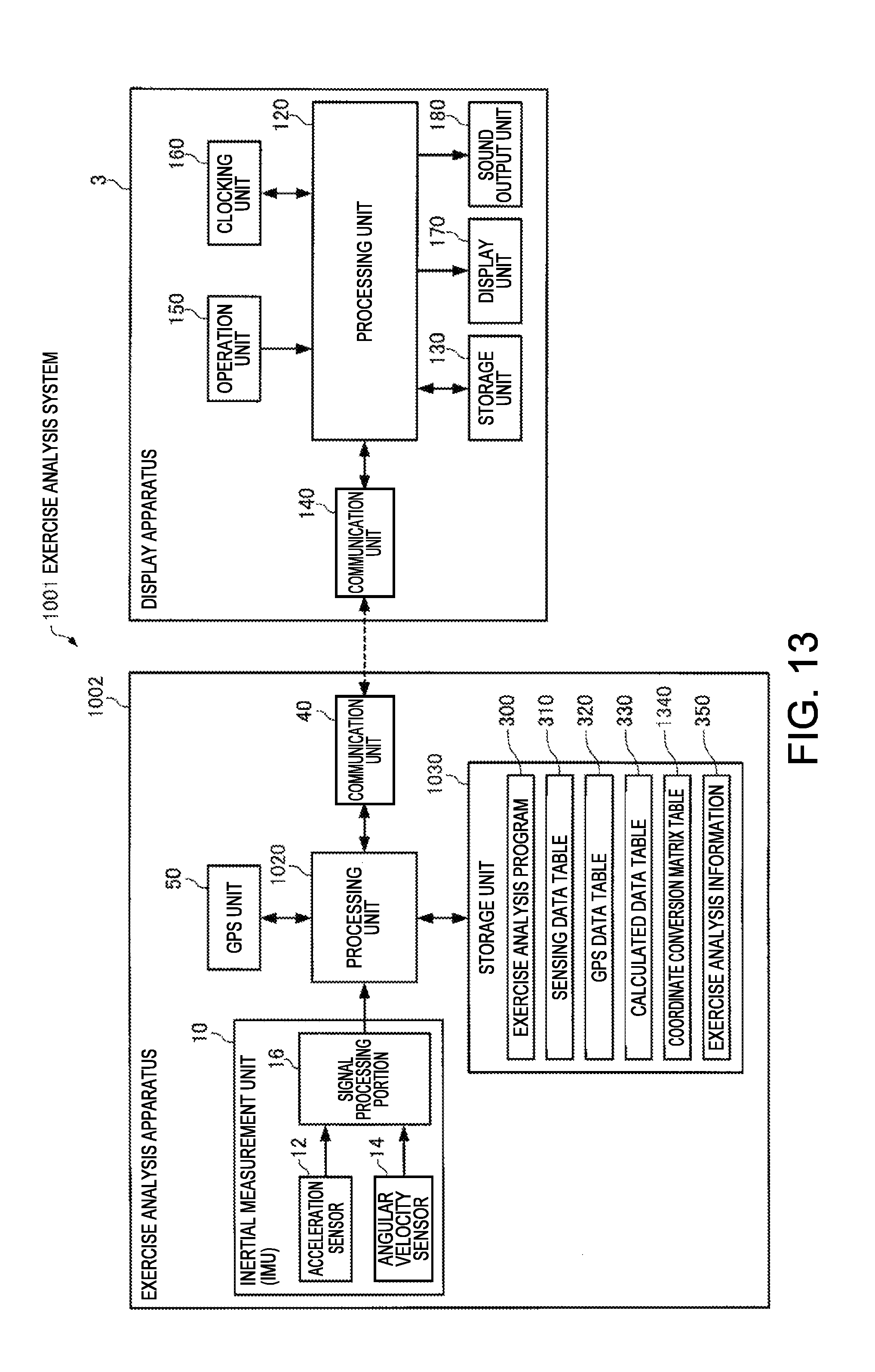

FIG. 13 is a functional block diagram illustrating configuration examples of a motion analysis apparatus and a display apparatus.

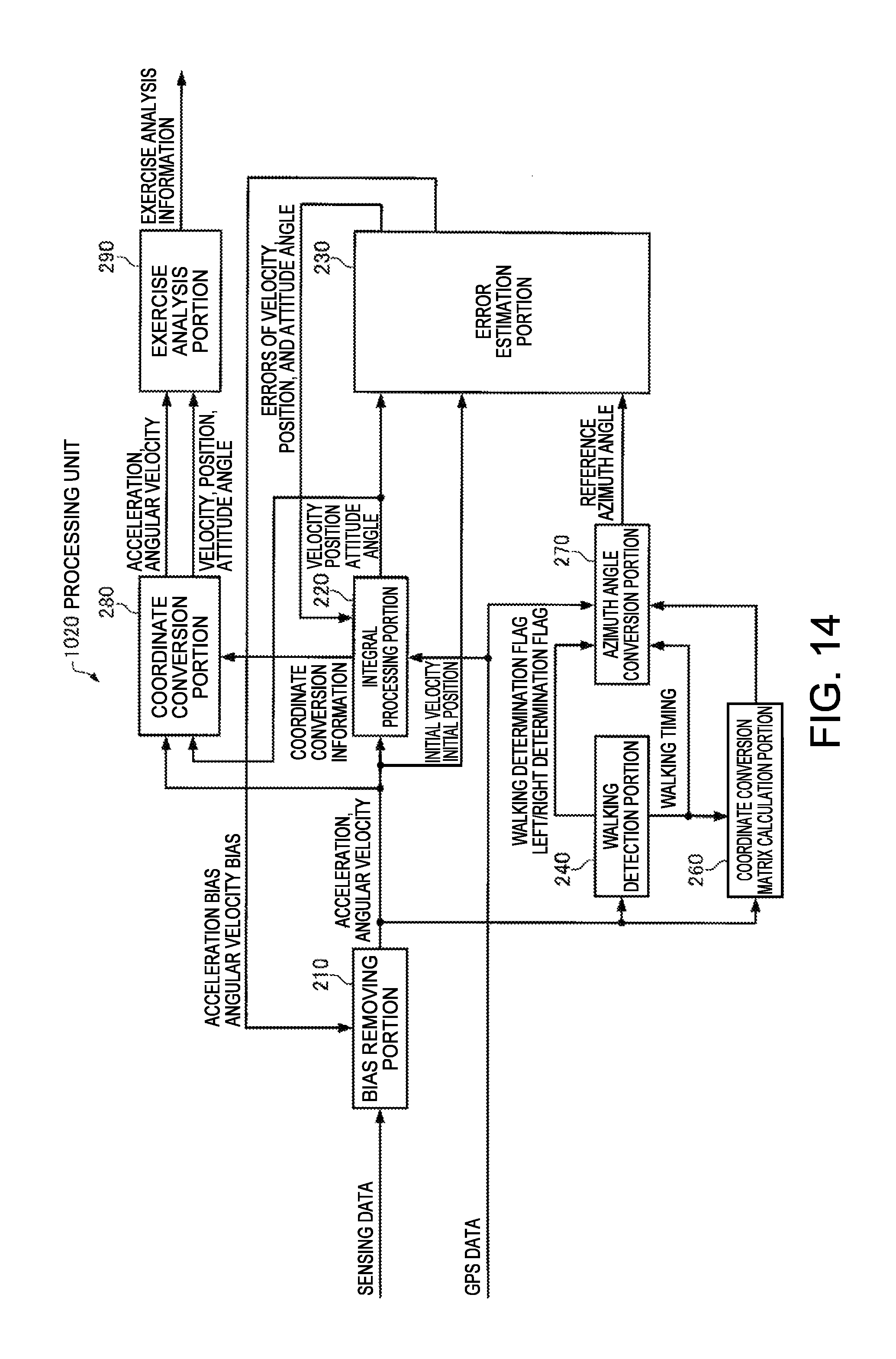

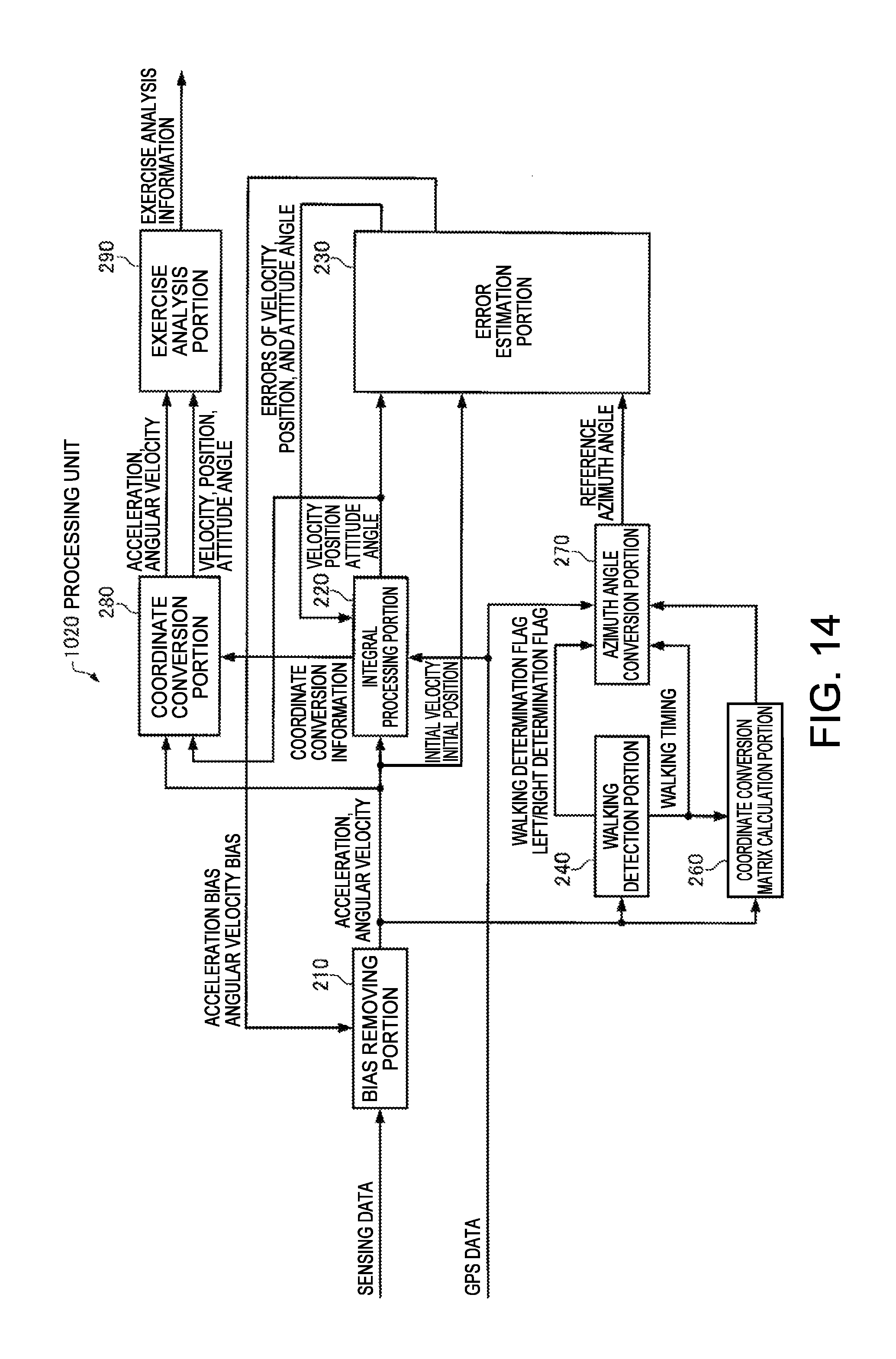

FIG. 14 is a functional block diagram illustrating a configuration example of a processing unit of the motion analysis apparatus.

FIG. 15 is a diagram illustrating a configuration example of a coordinate transformation matrix table.

FIG. 16 is a flowchart illustrating examples of procedures of a motion analysis process.

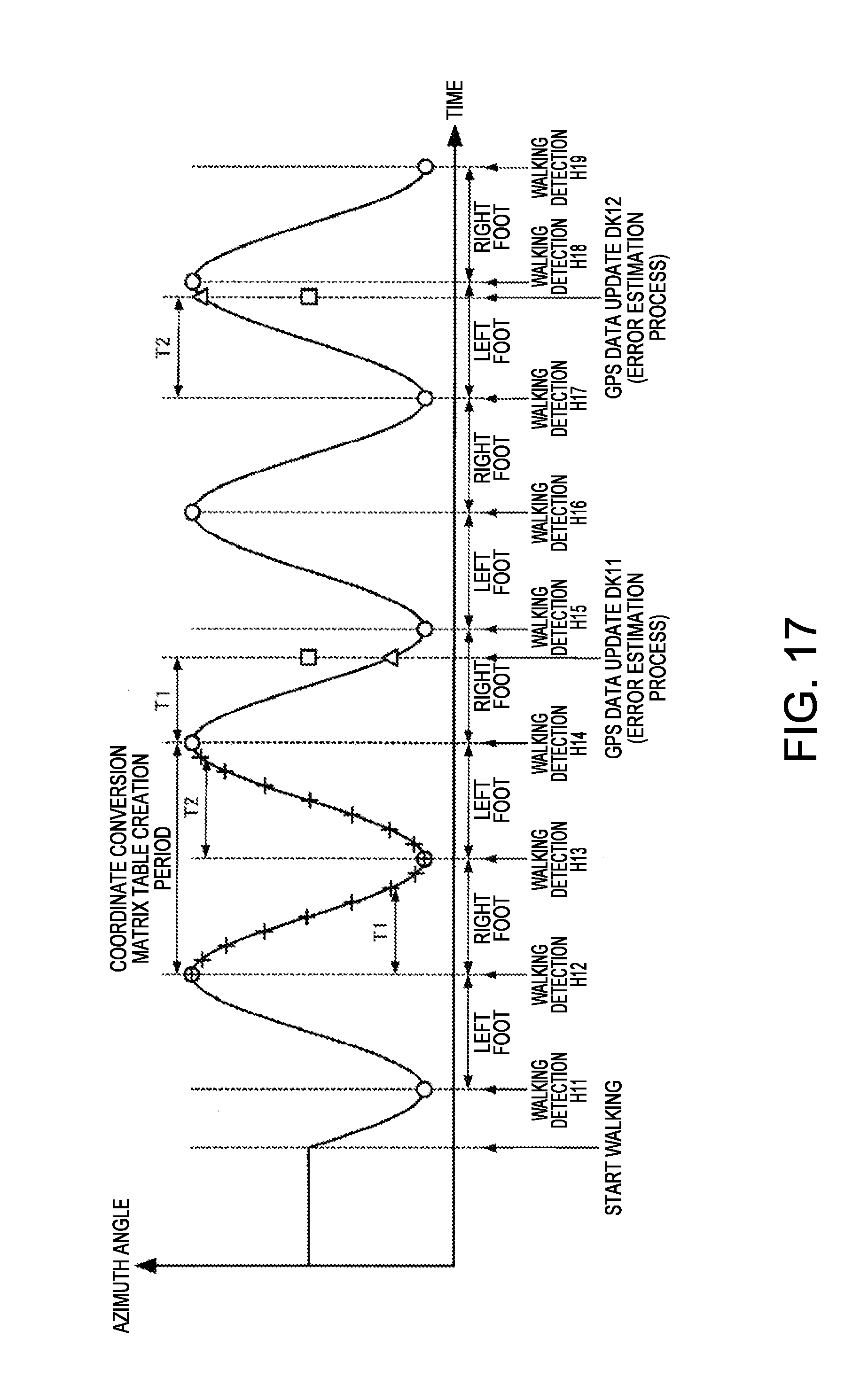

FIG. 17 is a diagram illustrating an example of a relationship between an azimuth angle of the inertial measurement unit and a timing of processing performed by the processing unit in a case where the user is walking straight.

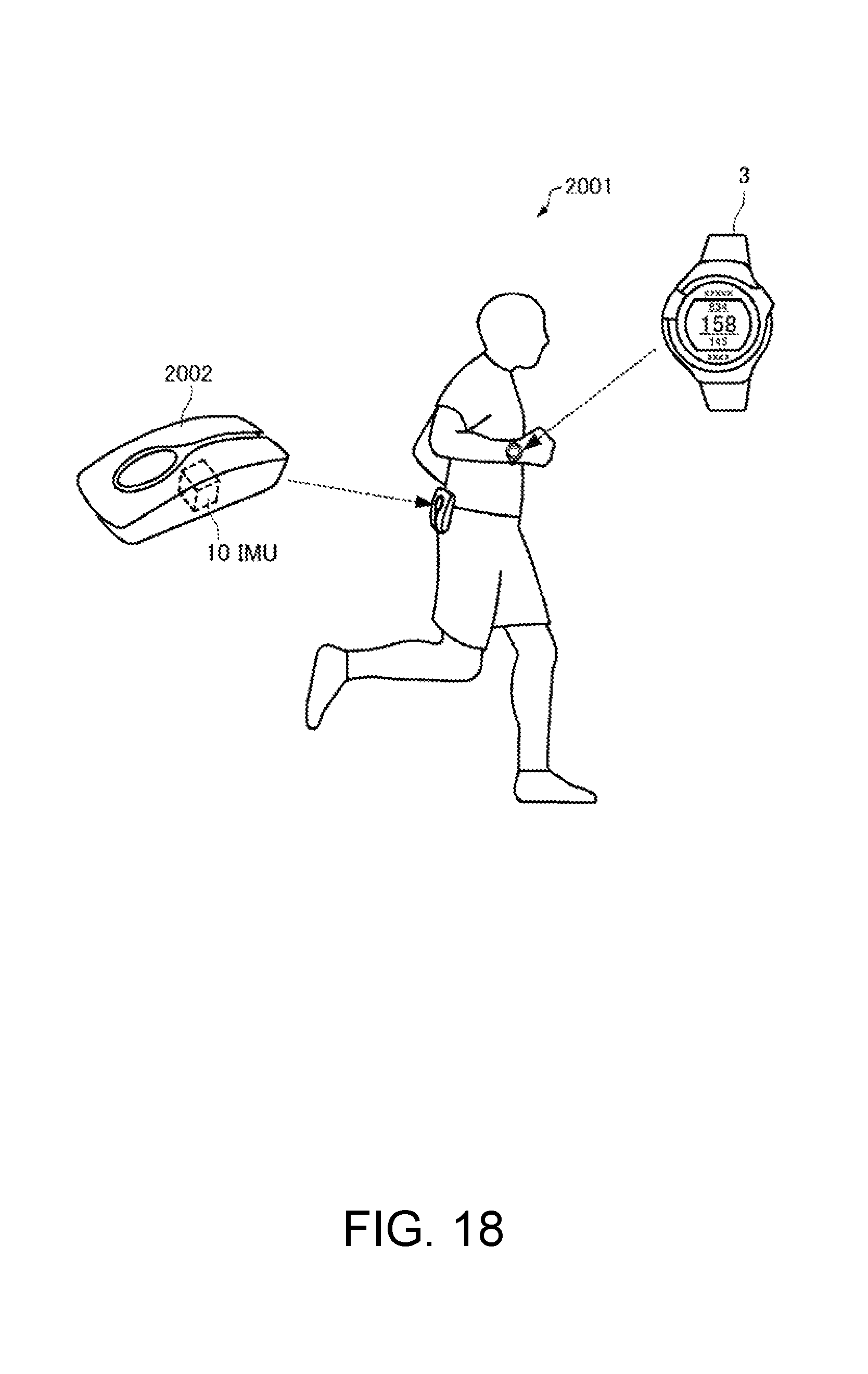

FIG. 18 is a diagram illustrating an outline of a motion analysis system according to a third embodiment.

FIG. 19 is a functional block diagram illustrating configuration examples of a motion analysis apparatus and a display apparatus.

FIG. 20 is a functional block diagram illustrating a configuration example of a processing unit of the motion analysis apparatus.

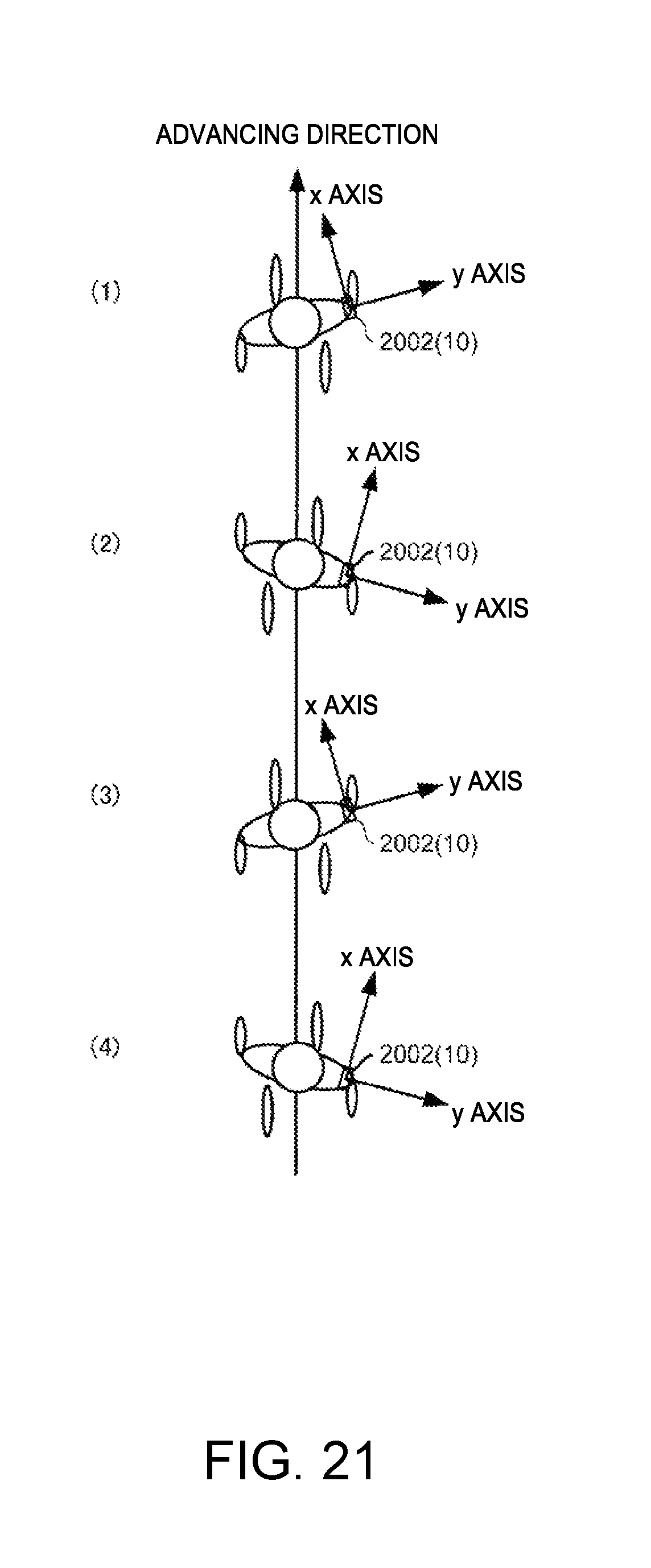

FIG. 21 is a diagram illustrating an attitude during a user's walking.

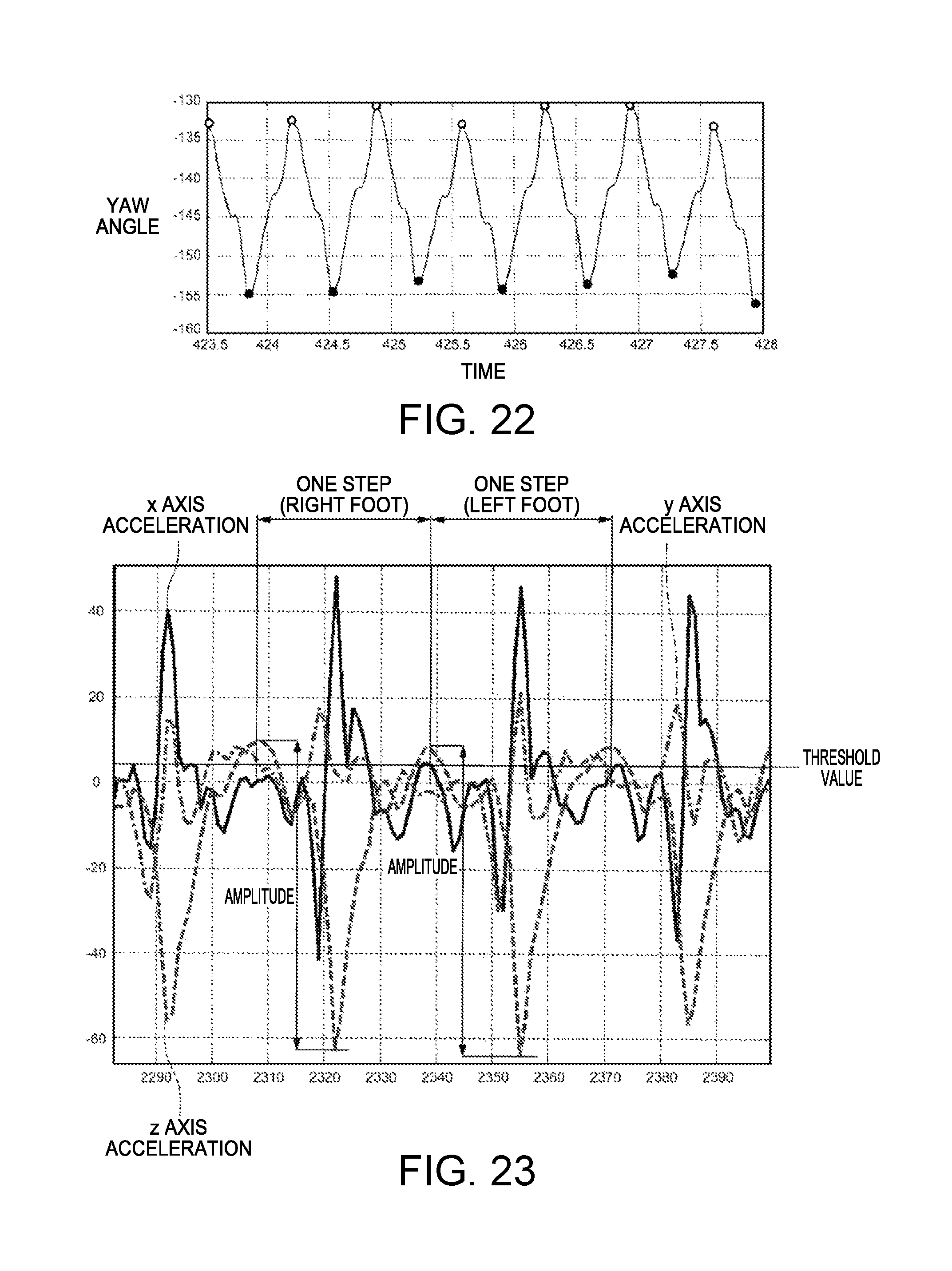

FIG. 22 is a diagram illustrating a yaw angle during the user's walking.

FIG. 23 is a diagram illustrating examples of three-axis accelerations during the user's walking.

FIG. 24 is a flowchart illustrating examples of procedures of a motion analysis process.

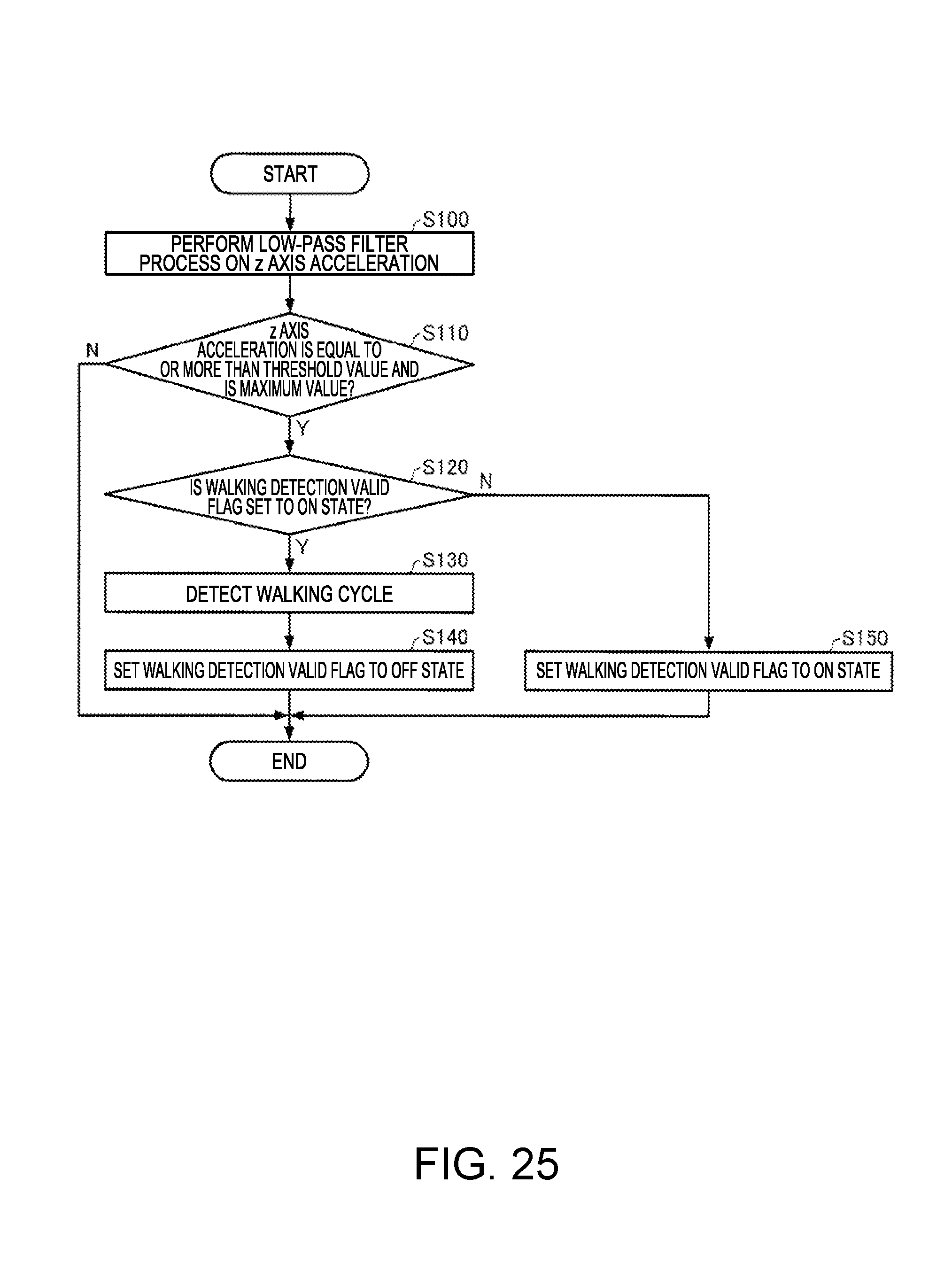

FIG. 25 is a flowchart illustrating examples of procedures of a walking detection process.

DESCRIPTION OF EMBODIMENTS

First Embodiment

1. Outline of Motion Analysis System

A first embodiment of the present invention will be described in detail with reference to the drawings.

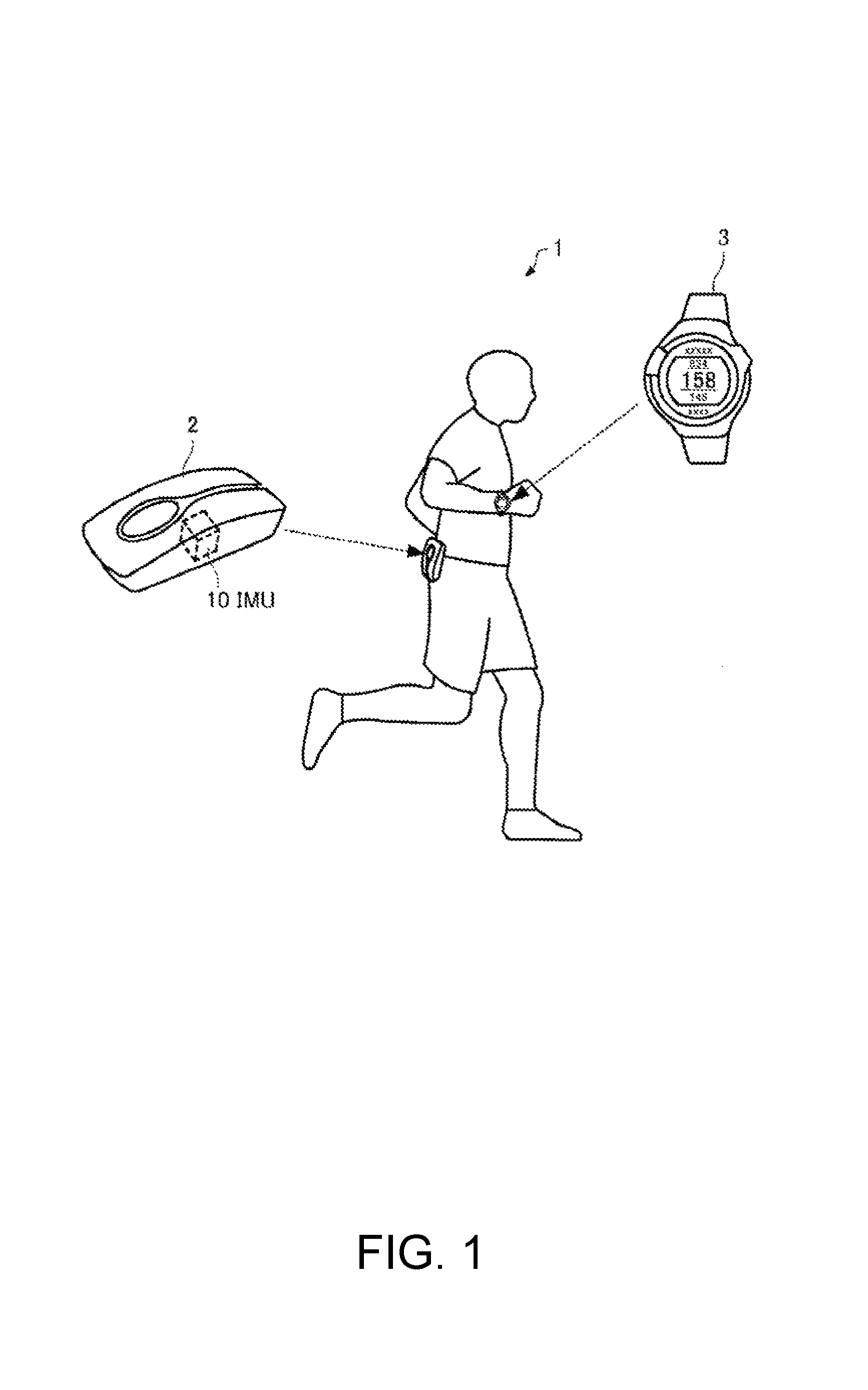

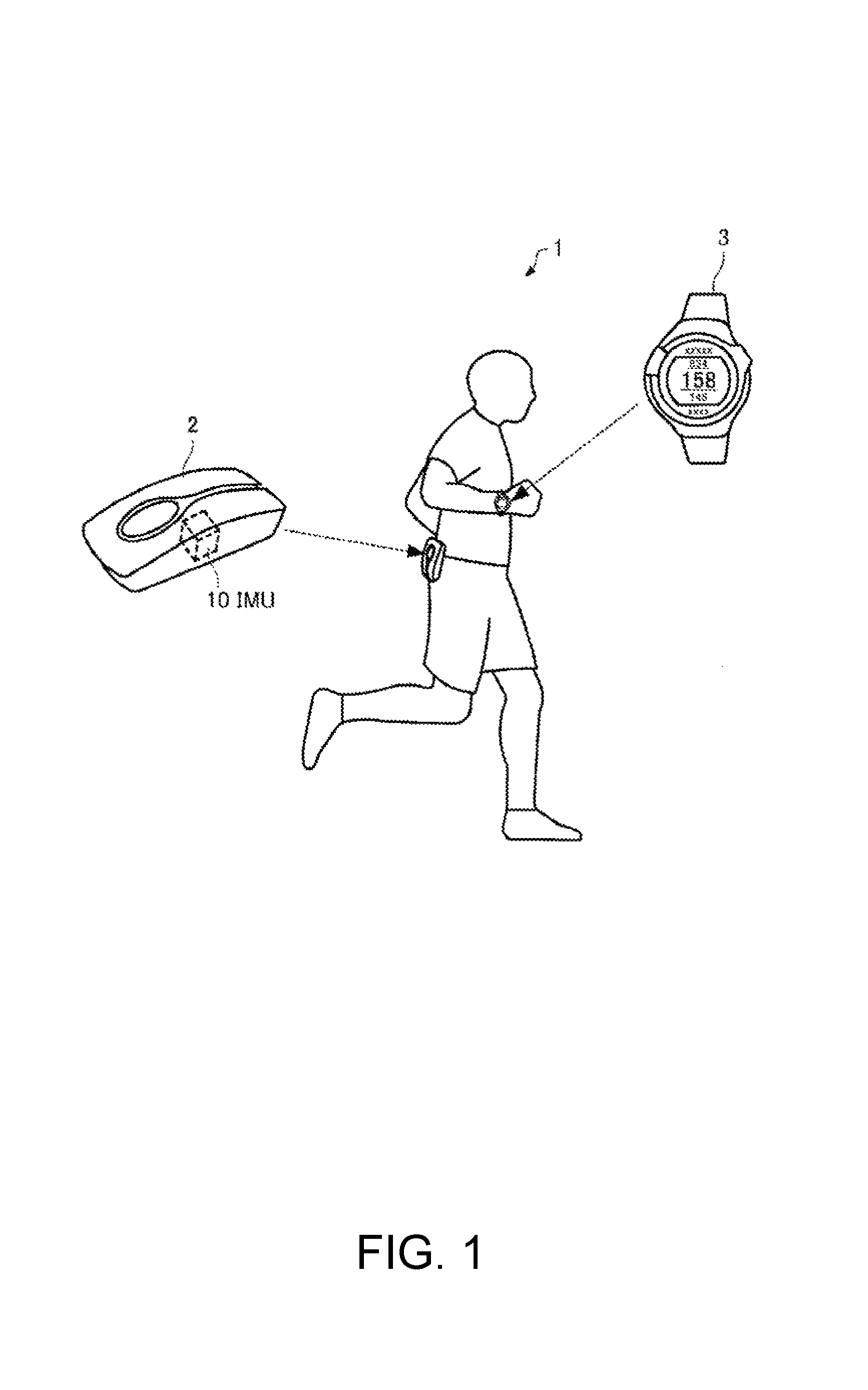

FIG. 1 is a diagram for explaining an outline of a motion analysis system 1 according to a first embodiment. As illustrated in FIG. 1, the motion analysis system 1 of the first embodiment includes a motion analysis apparatus 2 and a display apparatus 3.

The motion analysis apparatus 2 is mounted on a body part (for example, a right-side waist or a left-side waist) of a user (an example of a moving object). The motion analysis apparatus 2 has an inertial measurement unit (IMU) 10 built into it. It recognizes the motion of the user in walking (including running), computes a velocity, a position, attitude angles (a roll angle, a pitch angle, and a yaw angle), and the like, and analyzes the user's motion so as to generate motion analysis information. In the present embodiment, the motion analysis apparatus 2 is mounted on the user so that one detection axis (hereinafter referred to as the z axis) of the inertial measurement unit (IMU) 10 substantially matches the gravitational acceleration direction (vertically downward direction) when the user stands still. The motion analysis apparatus 2 transmits the generated motion analysis information to the display apparatus 3.

The display apparatus 3 is a wrist type (wristwatch type) portable information apparatus and is mounted on a user's wrist or the like. However, the display apparatus 3 may be a portable information apparatus such as a head mounted display (HMD) or a smart phone. The user operates the display apparatus 3 so as to instruct the motion analysis apparatus 2 to start or finish measurement. The display apparatus 3 transmits a command for instructing measurement to be started or finished, to the motion analysis apparatus 2. If a command for starting measurement has been received, the motion analysis apparatus 2 causes the inertial measurement unit (IMU) 10 to start measurement, and analyzes the user's motion on the basis of a measurement result so as to generate motion analysis information. The motion analysis apparatus 2 transmits the generated motion analysis information to the display apparatus 3. The display apparatus 3 receives the motion analysis information, and presents the received motion analysis information to the user in various forms such as text, graphics, and sound. The user can recognize the motion analysis information via the display apparatus 3.

Data communication between the motion analysis apparatus 2 and the display apparatus 3 may be wireless communication or wired communication.

In the present embodiment, hereinafter, as an example, a detailed description will be made of a case where the motion analysis apparatus 2 generates motion analysis information including a movement path, a movement time period, or the like by estimating a walking speed of the user, but the motion analysis system 1 of the present embodiment is also applicable to a case where motion analysis information is generated in motion causing movement other than walking.

2. Coordinate System

The coordinate systems used in the following description are defined as follows.

Earth centered earth fixed frame (e frame): a right-handed three-dimensional orthogonal coordinate system in which the center of the earth is set as the origin, and the z axis is taken so as to be parallel to the axis of the earth.

Navigation frame (n frame): a three-dimensional orthogonal coordinate system in which the moving object (user) is set as the origin, the x axis is set to the north, the y axis is set to the east, and the z axis is set to the gravitational direction.

Body frame (b frame): a three-dimensional orthogonal coordinate system using the sensor (the inertial measurement unit (IMU) 10) as a reference.

Moving frame (m frame): a right-handed three-dimensional orthogonal coordinate system in which the moving object (user) is set as the origin, and the advancing direction of the moving object (user) is set as the x axis.

3. Configuration of Motion Analysis System

FIG. 2 is a functional block diagram illustrating configuration examples of the motion analysis apparatus 2 and the display apparatus 3. As illustrated in FIG. 2, the motion analysis apparatus 2 (an example of an error estimation apparatus) includes the inertial measurement unit (IMU) 10, a processing unit 20, a storage unit 30, a communication unit 40, and a GPS unit 50. However, the motion analysis apparatus 2 of the present embodiment may have a configuration in which some of the constituent elements are deleted or changed, or other constituent elements may be added thereto.

The inertial measurement unit 10 (an example of a first sensor) includes an acceleration sensor 12, an angular velocity sensor 14, and a signal processing portion 16.

The acceleration sensor 12 detects respective accelerations in the three-axis directions which intersect each other (ideally, orthogonal to each other), and outputs a digital signal (acceleration data) corresponding to magnitudes and directions of the detected three-axis accelerations.

The angular velocity sensor 14 detects respective angular velocities in the three-axis directions which intersect each other (ideally, orthogonal to each other), and outputs a digital signal (angular velocity data) corresponding to magnitudes and directions of the detected three-axis angular velocities.

The signal processing portion 16 receives the acceleration data and the angular velocity data from the acceleration sensor 12 and the angular velocity sensor 14, respectively, adds time information thereto, stores the data items and the time information in a storage unit (not illustrated), generates sensing data in which the stored acceleration data, angular velocity data and time information conform to a predetermined format, and outputs the sensing data to the processing unit 20.

The acceleration sensor 12 and the angular velocity sensor 14 are ideally installed so that three axes thereof match three axes of a sensor coordinate system (b frame) with the inertial measurement unit 10 as a reference, but, in practice, an error occurs in an installation angle. Therefore, the signal processing portion 16 performs a process of converting the acceleration data and the angular velocity data into data of the sensor coordinate system (b frame) by using a correction parameter which is calculated in advance according to the installation angle error. Instead of the signal processing portion 16, the processing unit 20 which will be described later may perform the process.
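The text does not specify the form of the correction parameter. Assuming it is a 3x3 rotation matrix determined beforehand from the installation-angle error, the conversion is a matrix-vector product applied to each acceleration and angular velocity sample. The Python sketch below uses hypothetical names and plain lists so that it has no dependencies.

```python
import math
from typing import List, Sequence

Matrix = List[List[float]]


def rot_z(angle: float) -> Matrix:
    """Rotation matrix about the z axis by `angle` radians."""
    c, s = math.cos(angle), math.sin(angle)
    return [[c, -s, 0.0], [s, c, 0.0], [0.0, 0.0, 1.0]]


def to_sensor_frame(correction: Matrix, sample: Sequence[float]) -> List[float]:
    """Map a raw 3-axis sample onto the b-frame axes with a correction matrix."""
    return [sum(correction[i][j] * sample[j] for j in range(3)) for i in range(3)]
```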

The signal processing portion 16 may perform a temperature correction process on the outputs of the acceleration sensor 12 and the angular velocity sensor 14. Alternatively, the processing unit 20, which will be described later, may perform the temperature correction process instead of the signal processing portion 16, or a temperature correction function may be incorporated into the acceleration sensor 12 and the angular velocity sensor 14.

The acceleration sensor 12 and the angular velocity sensor 14 may output analog signals, and, in this case, the signal processing portion 16 may A/D convert an output signal from the acceleration sensor 12 and an output signal from the angular velocity sensor 14 so as to generate sensing data.

The GPS unit 50 (an example of a second sensor) receives a GPS satellite signal transmitted from a GPS satellite, which is one type of positioning satellite. It performs positioning computation by using the GPS satellite signal so as to calculate the position and velocity (a vector including a magnitude and a direction) of the user in the n frame, and outputs to the processing unit 20 GPS data in which time information and positioning accuracy information are added to the calculated results. Methods of calculating a position or velocity and of generating time information by using GPS are well known, and thus detailed description thereof will be omitted.

The processing unit 20 is constituted of, for example, a central processing unit (CPU), a digital signal processor (DSP), or an application specific integrated circuit (ASIC), and performs various calculation processes or control processes according to various programs stored in the storage unit 30. Particularly, the processing unit 20 receives sensing data from the inertial measurement unit 10, and receives GPS data from the GPS unit 50, so as to calculate a velocity, a position, an attitude angle, and the like of the user by using the sensing data and the GPS data. The processing unit 20 performs various calculation processes by using the calculated information so as to analyze motion of the user and to generate motion analysis information (image data, text data, sound data, and the like) including a movement path or a movement time period. The processing unit 20 transmits the generated motion analysis information to the display apparatus 3 via the communication unit 40.

The storage unit 30 is constituted of, for example, recording media including various IC memories such as a read only memory (ROM), a flash ROM, and a random access memory (RAM), a hard disk, and a memory card.

The storage unit 30 stores a motion analysis program 300 which is read by the processing unit 20 and is used to perform a motion analysis process (refer to FIG. 10).

The storage unit 30 stores a sensing data table 310, a GPS data table 320, a calculated data table 330, a coordinate transformation matrix 340, motion analysis information 350, and the like.

The sensing data table 310 is a data table which stores, in a time series, sensing data (detection results in the inertial measurement unit 10) received by the processing unit 20 from the inertial measurement unit 10. FIG. 3 is a diagram illustrating a configuration example of the sensing data table 310. As illustrated in FIG. 3, the sensing data table 310 consists of sensing data items arranged in a time series. Each item correlates the detection time point 311 in the inertial measurement unit 10, an acceleration 312 detected by the acceleration sensor 12, and an angular velocity 313 detected by the angular velocity sensor 14. When measurement is started, the processing unit 20 adds new sensing data to the sensing data table 310 whenever a sampling cycle $\Delta t$ (for example, 20 ms) elapses. The processing unit 20 corrects the acceleration and the angular velocity by using an acceleration bias and an angular velocity bias estimated through error estimation using the extended Kalman filter (which will be described later). It then updates the sensing data table 310 by overwriting it with the corrected acceleration and angular velocity.

The GPS data table 320 is a data table which stores GPS data (a detection result in the GPS unit (GPS sensor) 50) received by the processing unit 20 from the GPS unit 50 in a time series. FIG. 4 is a diagram illustrating a configuration example of the GPS data table 320. As illustrated in FIG. 4, the GPS data table 320 is configured so that GPS data items in which the positioning time point 321 at which the GPS unit 50 performs positioning computation, a position 322 calculated through the positioning computation, a velocity 323 calculated through the positioning computation, positioning accuracy (dilution of precision (DOP)) 324, a signal intensity 325 of a received GPS satellite signal, and the like are correlated with each other are arranged in a time series. When measurement is started, the processing unit 20 adds new GPS data whenever the GPS data is acquired (for example, in an asynchronous manner with acquisition timing of sensing data) so as to update the GPS data table 320.

The calculated data table 330 is a data table which stores, in a time series, the velocity, position, and attitude angle calculated by the processing unit 20 by using the sensing data. FIG. 5 is a diagram illustrating a configuration example of the calculated data table 330. As illustrated in FIG. 5, the calculated data table 330 consists of calculated data items arranged in a time series. Each item correlates the time 331 at which the processing unit 20 performs computation, a velocity 332, a position 333, and an attitude angle 334. When measurement is started, the processing unit 20 calculates a velocity, a position, and an attitude angle whenever new sensing data is acquired, that is, whenever the sampling cycle $\Delta t$ elapses, and adds new calculated data to the calculated data table 330. The processing unit 20 corrects the velocity, the position, and the attitude angle by using a velocity error, a position error, and an attitude angle error estimated through error estimation using the extended Kalman filter. It then updates the calculated data table 330 by overwriting it with the corrected velocity, position, and attitude angle.

The coordinate transformation matrix 340 is a matrix for performing coordinate transformation between the b frame and the m frame, and, as will be described later, the processing unit 20 calculates the matrix at a predetermined timing in a cycle of the user's walking motion, and preserves (stores) the matrix in the storage unit 30.

The motion analysis information 350 is various information pieces regarding the motion of the user, and, in the present embodiment, includes information regarding movement due to walking, information regarding an evaluation index of walking motion, and information regarding advice, an instruction, and a warning for walking, calculated by the processing unit 20.

The communication unit 40 performs data communication with a communication unit 140 of the display apparatus 3, and performs a process of receiving motion analysis information generated by the processing unit 20 and transmitting the motion analysis information to the display apparatus 3, a process of receiving a command (a command for starting or finishing measurement, or the like) transmitted from the display apparatus 3 and sending the command to the processing unit 20, and the like.

The display apparatus 3 includes a processing unit 120, a storage unit 130, the communication unit 140, an operation unit 150, a clocking unit 160, a display unit 170, and a sound output unit 180. However, the display apparatus 3 of the present embodiment may have a configuration in which some of the constituent elements are deleted or changed, or other constituent elements may be added thereto.

The processing unit 120 performs various calculation processes or control processes according to a program stored in the storage unit 130. For example, the processing unit 120 performs various processes (a process of sending a command for starting or finishing measurement to the communication unit 140, a process of performing display or outputting sound corresponding to the operation data, and the like) corresponding to operation data received from the operation unit 150; a process of receiving motion analysis information from the communication unit 140 and sending the motion analysis information to the display unit 170 or the sound output unit 180; a process of generating time image data corresponding to time information received from the clocking unit 160 and sending the time image data to the display unit 170; and the like.

The storage unit 130 is constituted of various IC memories such as a ROM which stores a program or data required for the processing unit 120 to perform various processes, and a RAM serving as a work area of the processing unit 120.

The communication unit 140 performs data communication with the communication unit 40 of the motion analysis apparatus 2, and performs a process of receiving a command (a command for starting or finishing measurement, or the like) corresponding to operation data from the processing unit 120 and transmitting the command to the motion analysis apparatus 2, a process of receiving motion analysis information (image data, text data, sound data, and the like) transmitted from the motion analysis apparatus 2 and sending the information to the processing unit 120, and the like.

The operation unit 150 performs a process of acquiring operation data (operation data such as starting or finishing of measurement or selection of display content) from the user and sending the operation data to the processing unit 120. The operation unit 150 may be, for example, a touch panel type display, a button, a key, or a microphone.

The clocking unit 160 performs a process of generating time information such as year, month, day, hour, minute, and second. The clocking unit 160 is implemented by, for example, a real time clock (RTC) IC.

The display unit 170 displays image data or text data sent from the processing unit 120 as text, a graph, a table, animation, or other images. The display unit 170 is implemented by, for example, a display such as a liquid crystal display (LCD), an organic electroluminescence (EL) display, or an electrophoretic display (EPD), and may be a touch panel type display. A single touch panel type display may realize functions of the operation unit 150 and the display unit 170.

The sound output unit 180 outputs sound data sent from the processing unit 120 as sound such as voice or a buzzer sound. The sound output unit 180 is implemented by, for example, a speaker or a buzzer.

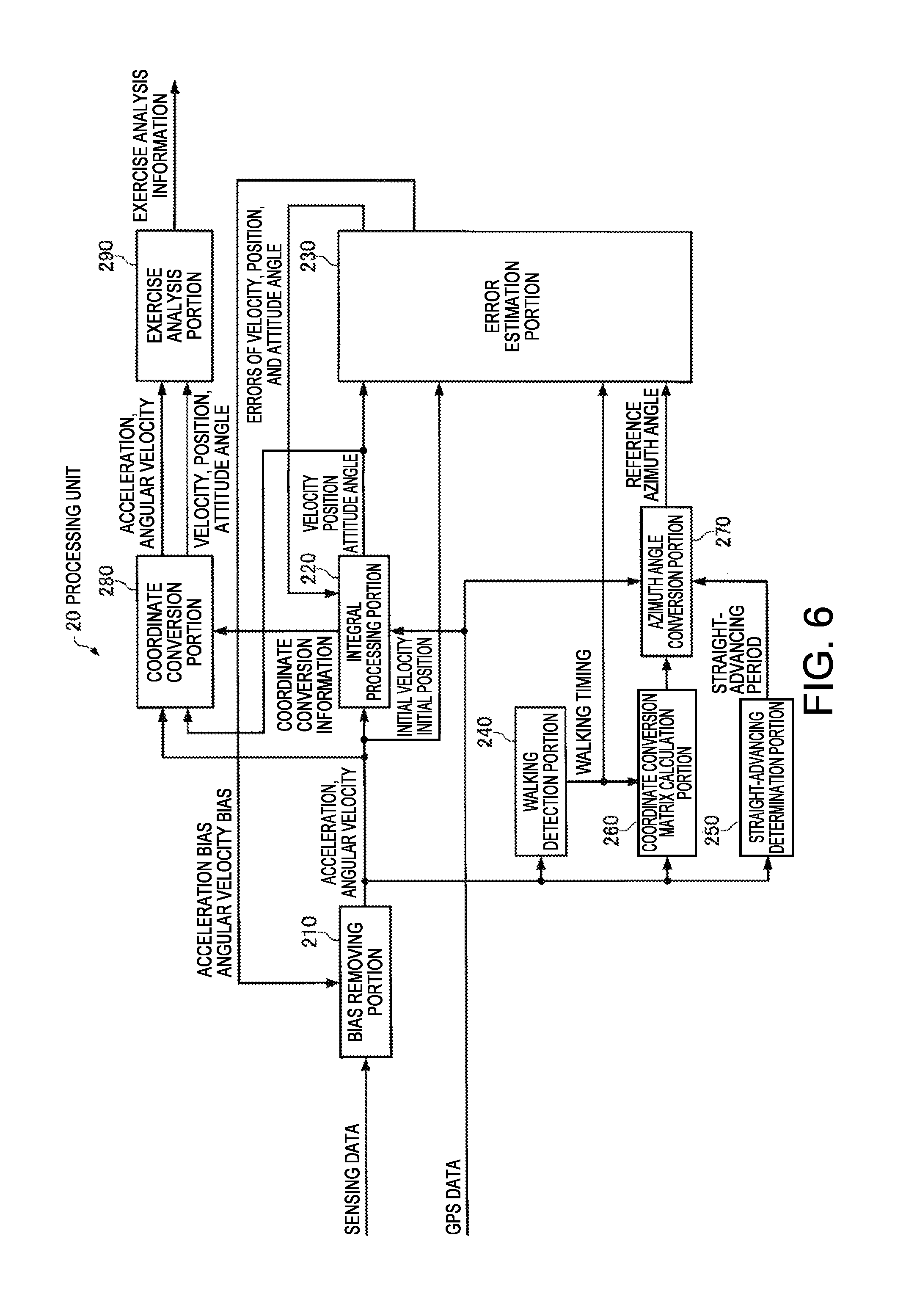

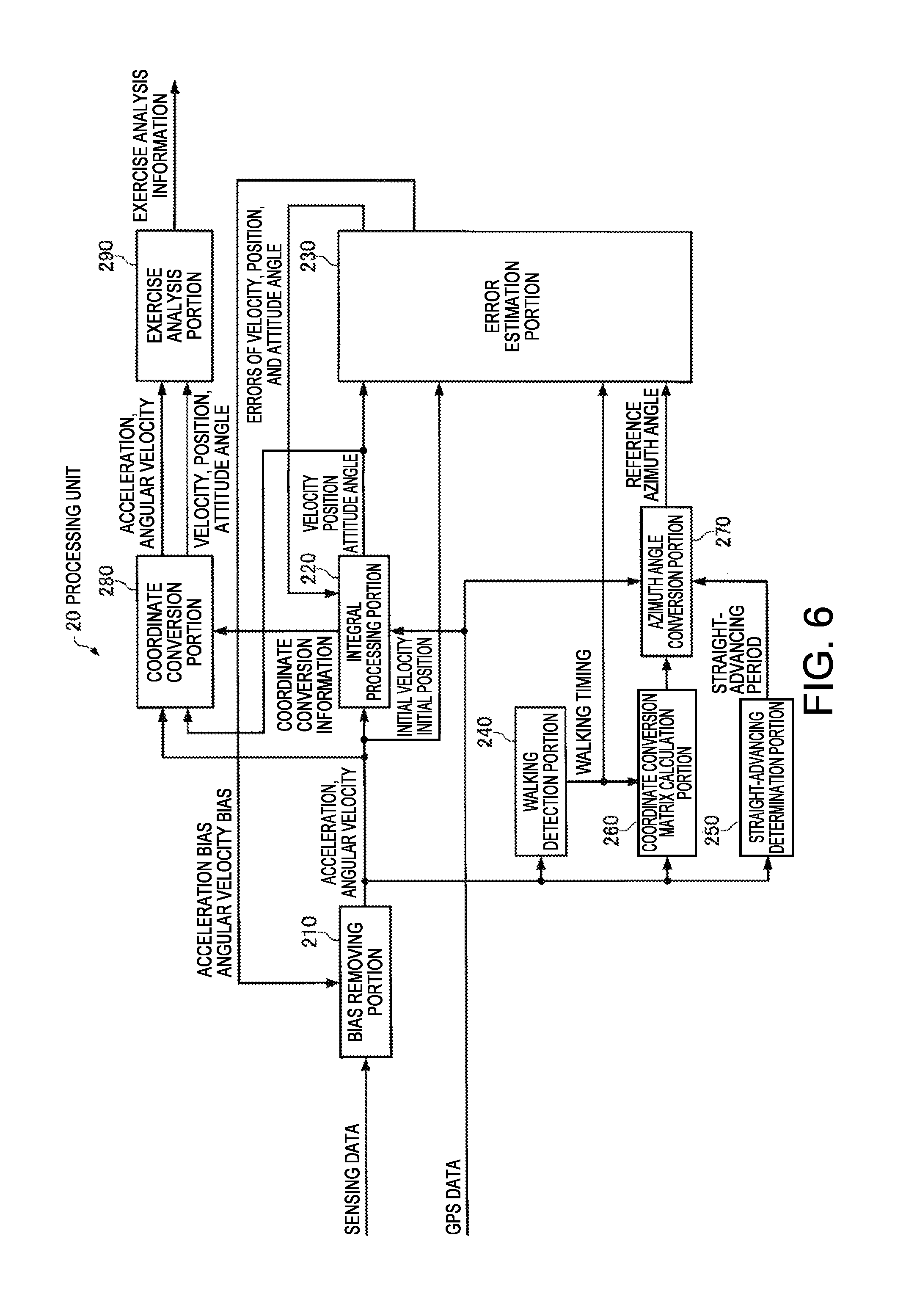

FIG. 6 is a functional block diagram illustrating a configuration example of the processing unit 20 of the motion analysis apparatus 2. In the present embodiment, the processing unit 20 functions as a bias removing portion 210, an integral processing portion 220, an error estimation portion 230, a walking detection portion 240, a straight-advancing determination portion 250, a coordinate transformation matrix calculation portion 260, an azimuth angle transformation portion 270, a coordinate transformation portion 280, and a motion analysis portion 290, by executing the motion analysis program 300 stored in the storage unit 30.

The bias removing portion 210 subtracts an acceleration bias $b_a$ and an angular velocity bias $b_\omega$ estimated by the error estimation portion 230 from the accelerations (three-axis accelerations) and the angular velocities included in newly acquired sensing data, so as to correct the accelerations and the angular velocities. In the initial state right after measurement is started, the acceleration bias $b_a$ and the angular velocity bias $b_\omega$ have not yet been estimated. The bias removing portion 210 therefore computes initial biases from the sensing data of the inertial measurement unit 10, assuming that the user is initially in a stationary state.
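The stationary-start assumption can be sketched in Python as averaging over a short window recorded while the user stands still. This sketch assumes that the b-frame z axis points straight down, as in the mounting described for this embodiment, so that an ideal accelerometer at rest reads $(0, 0, -g)$ and an ideal gyroscope reads zero. Tilt during the window is ignored. The function names are hypothetical.

```python
from typing import List, Sequence, Tuple

GRAVITY = 9.80665  # standard gravity in m/s^2


def mean3(samples: Sequence[Sequence[float]]) -> List[float]:
    """Per-axis mean of a list of 3-axis samples."""
    n = len(samples)
    return [sum(s[i] for s in samples) / n for i in range(3)]


def initial_biases(acc_samples, gyro_samples) -> Tuple[List[float], List[float]]:
    """Estimate initial (b_a, b_omega) from data recorded while stationary.

    Assumes a level, z-down sensor: at rest the accelerometer should read
    (0, 0, -GRAVITY) and the gyroscope should read (0, 0, 0).
    """
    expected = [0.0, 0.0, -GRAVITY]
    b_a = [m - e for m, e in zip(mean3(acc_samples), expected)]
    b_w = mean3(gyro_samples)
    return b_a, b_w


def remove_bias(sample: Sequence[float], bias: Sequence[float]) -> List[float]:
    """Subtract a bias vector from a 3-axis sample."""
    return [v - b for v, b in zip(sample, bias)]
```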

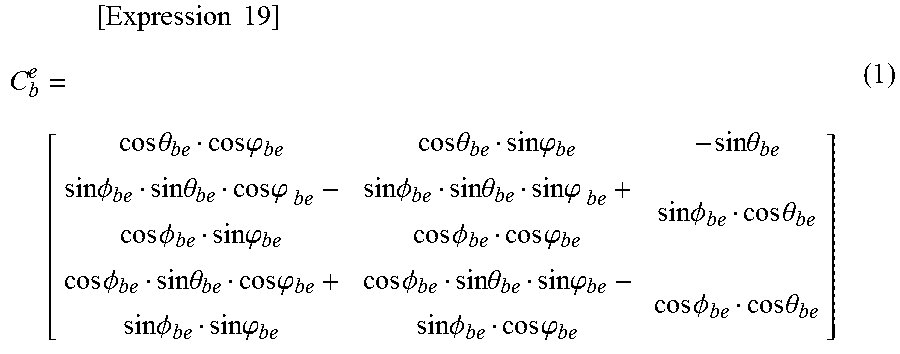

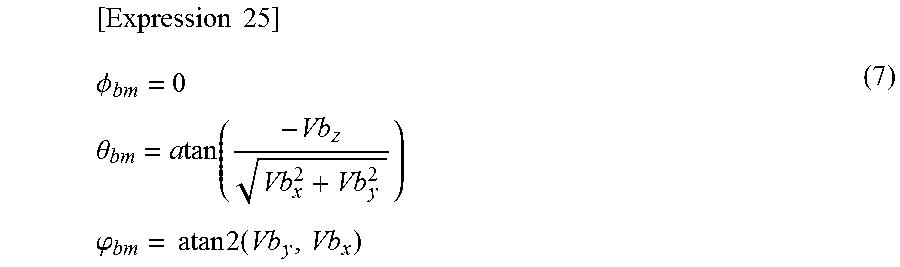

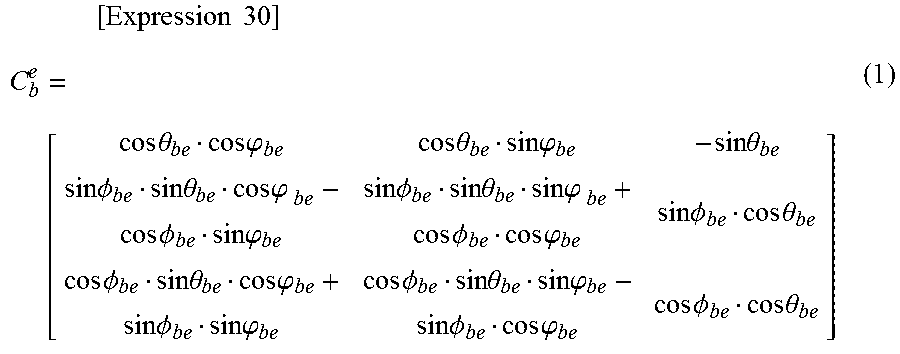

The integral processing portion 220 performs a process of calculating a velocity $v^e$, a position $p^e$, and attitude angles (a roll angle $\phi_{be}$, a pitch angle $\theta_{be}$, and a yaw angle $\psi_{be}$) in the e frame on the basis of the accelerations and the angular velocities corrected by the bias removing portion 210. Specifically, the integral processing portion 220 first either sets the initial velocity to zero, assuming that the user is initially in a stationary state, or calculates the initial velocity by using the velocity included in the GPS data. It also calculates the initial position by using the position included in the GPS data. The integral processing portion 220 specifies the gravitational acceleration direction on the basis of the three-axis accelerations in the b frame corrected by the bias removing portion 210 so as to calculate initial values of the roll angle $\phi_{be}$ and the pitch angle $\theta_{be}$. It also calculates an initial value of the yaw angle $\psi_{be}$ on the basis of the velocity included in the GPS data, and sets the calculated initial values as the initial attitude angles in the e frame. In a case where the GPS data cannot be obtained, the initial value of the yaw angle $\psi_{be}$ is set to, for example, zero. On the basis of the calculated initial attitude angles, the integral processing portion 220 calculates an initial value of the coordinate transformation matrix (rotation matrix) $C_b^e$ from the b frame into the e frame, expressed by Equation (1).
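One common way to realize this initialization is sketched below in Python. It is not necessarily the exact computation used here. Roll and pitch come from the direction of the gravity reading, and yaw comes from the heading of the GPS velocity, measured from north toward east. The sketch assumes a z-down b frame, Z-Y-X (yaw, pitch, roll) Euler angles, and an accelerometer that reads $(0, 0, -g)$ when level and at rest. The function name is hypothetical.

```python
import math
from typing import Optional, Sequence, Tuple


def initial_attitude(acc_b: Sequence[float],
                     gps_vel_ne: Optional[Tuple[float, float]] = None
                     ) -> Tuple[float, float, float]:
    """Return initial (roll, pitch, yaw) in radians.

    acc_b: bias-corrected accelerometer output in the b frame while stationary
           (assumed z-down: a level sensor reads (0, 0, -g)).
    gps_vel_ne: (north, east) GPS velocity, or None if no GPS data is available.
    """
    fx, fy, fz = acc_b
    roll = math.atan2(-fy, -fz)
    pitch = math.atan2(fx, math.hypot(fy, fz))
    if gps_vel_ne is None:
        yaw = 0.0  # initial yaw set to zero when GPS data cannot be obtained
    else:
        v_north, v_east = gps_vel_ne
        yaw = math.atan2(v_east, v_north)  # azimuth from north toward east
    return roll, pitch, yaw
```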

$$
C_b^e =
\begin{bmatrix}
\cos\theta_{be}\cos\psi_{be} & \cos\theta_{be}\sin\psi_{be} & -\sin\theta_{be} \\
\sin\Phi_{be}\sin\theta_{be}\cos\psi_{be} - \cos\Phi_{be}\sin\psi_{be} & \sin\Phi_{be}\sin\theta_{be}\sin\psi_{be} + \cos\Phi_{be}\cos\psi_{be} & \sin\Phi_{be}\cos\theta_{be} \\
\cos\Phi_{be}\sin\theta_{be}\cos\psi_{be} + \sin\Phi_{be}\sin\psi_{be} & \cos\Phi_{be}\sin\theta_{be}\sin\psi_{be} - \sin\Phi_{be}\cos\psi_{be} & \cos\Phi_{be}\cos\theta_{be}
\end{bmatrix}
\tag{1}
$$
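As a sketch, Equation (1) can be evaluated directly with NumPy. The function name is hypothetical, and the element layout follows the matrix as reconstructed above; the result is orthonormal with determinant 1, which is a useful sanity check.

```python
import numpy as np

def c_b_e_from_angles(roll, pitch, yaw):
    """Build the matrix of Equation (1) from roll, pitch and yaw in radians."""
    sr, cr = np.sin(roll), np.cos(roll)
    sp, cp = np.sin(pitch), np.cos(pitch)
    sy, cy = np.sin(yaw), np.cos(yaw)
    return np.array([
        [cp * cy,                cp * sy,                -sp],
        [sr * sp * cy - cr * sy, sr * sp * sy + cr * cy, sr * cp],
        [cr * sp * cy + sr * sy, cr * sp * sy - sr * cy, cr * cp],
    ])
```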

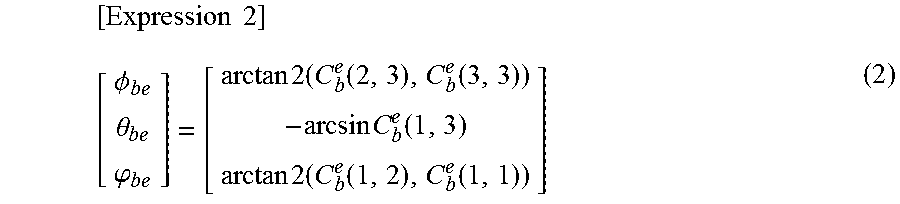

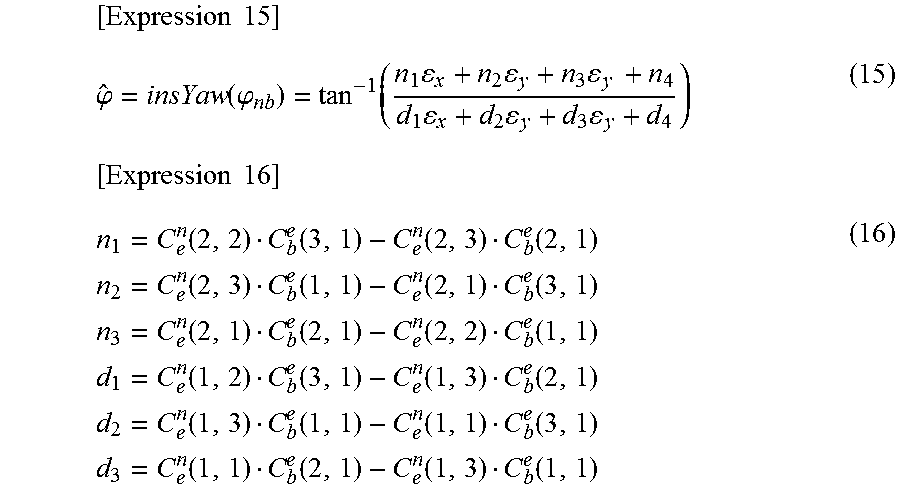

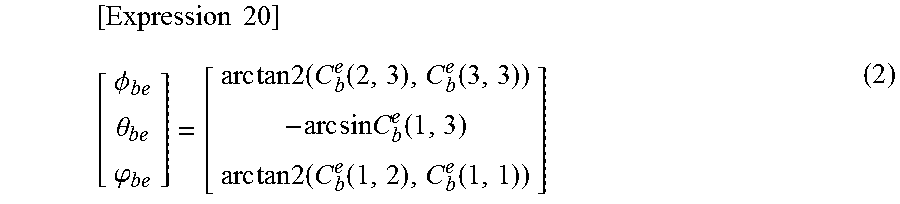

Then, the integral processing portion 220 integrates (performs rotation calculation on) the three-axis angular velocities corrected by the bias removing portion 210 to update the coordinate transformation matrix C_b^e, and calculates the attitude angles from it by using Equation (2).

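$$
\begin{bmatrix} \Phi_{be} \\ \theta_{be} \\ \psi_{be} \end{bmatrix}
=
\begin{bmatrix}
\operatorname{atan2}\left(C_b^e(2,3),\ C_b^e(3,3)\right) \\
-\arcsin C_b^e(1,3) \\
\operatorname{atan2}\left(C_b^e(1,2),\ C_b^e(1,1)\right)
\end{bmatrix}
\tag{2}
$$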
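A minimal Python sketch of Equation (2) follows; the function name is hypothetical. The 1-based matrix indices in the equation are mapped to 0-based NumPy indices, and the arcsin argument is clipped so that rounding noise slightly beyond ±1 cannot produce NaN.

```python
import numpy as np

def angles_from_c_b_e(c):
    """Recover (roll, pitch, yaw) in radians from a matrix laid out as in Equation (1)."""
    c = np.asarray(c, dtype=float)
    roll = np.arctan2(c[1, 2], c[2, 2])              # atan2(C(2,3), C(3,3))
    pitch = -np.arcsin(np.clip(c[0, 2], -1.0, 1.0))  # -arcsin(C(1,3))
    yaw = np.arctan2(c[0, 1], c[0, 0])               # atan2(C(1,2), C(1,1))
    return roll, pitch, yaw
```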

The integral processing portion 220 converts the three-axis accelerations of the b frame corrected by the bias removing portion 210 into three-axis accelerations of the e frame by using the coordinate transformation matrix C_b^e, removes the gravitational acceleration component, and integrates the result to calculate the velocity v^e in the e frame. It then integrates the velocity v^e to calculate the position p^e in the e frame.
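One integration step can be sketched in Python as below. This is an illustrative sketch, not the embodiment's implementation. It assumes a hypothetical matrix `c_b_to_nav` that maps body-frame vectors into the navigation frame, an accelerometer that measures specific force (so acceleration = specific force + gravity), and a trapezoidal rule for position.

```python
import numpy as np

def integrate_step(v, p, f_body, c_b_to_nav, g_nav, dt):
    """One strapdown velocity/position update (trapezoidal sketch).

    f_body:     bias-corrected specific force in the body frame.
    c_b_to_nav: 3x3 matrix mapping body-frame vectors into the navigation frame.
    g_nav:      gravity vector in the navigation frame, e.g. (0, 0, +g) for z down.
    """
    f_nav = c_b_to_nav @ np.asarray(f_body, dtype=float)
    a_nav = f_nav + np.asarray(g_nav, dtype=float)  # remove the gravity component
    v_new = v + a_nav * dt
    p_new = p + 0.5 * (v + v_new) * dt               # integrate velocity into position
    return v_new, p_new
```

With a constant 1 m/s² forward acceleration from rest, ten steps of 0.1 s give a velocity of 1 m/s and a position of 0.5 m.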

The integral processing portion 220 also corrects the velocity v^e, the position p^e, and the attitude angles by using the velocity error δv^e, the position error δp^e, and the attitude angle errors ε^e estimated by the error estimation portion 230.
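The correction step can be sketched as error feedback. The text does not specify sign conventions, so this sketch assumes that each error is defined as computed minus true, and that the attitude error ε is a small-angle rotation error with C_computed ≈ (I + [ε×]) C_true. The function names are hypothetical.

```python
import numpy as np

def skew(e):
    """Cross-product matrix [e x] of a 3-vector."""
    x, y, z = e
    return np.array([[0.0, -z, y], [z, 0.0, -x], [-y, x, 0.0]])

def apply_error_feedback(v, p, c_b_to_nav, dv, dp, eps):
    """Remove estimated errors from velocity, position and attitude (sketch).

    Assumes error = computed - true and C_computed ~= (I + [eps x]) C_true.
    """
    v_corr = np.asarray(v, dtype=float) - dv
    p_corr = np.asarray(p, dtype=float) - dp
    c_corr = (np.eye(3) - skew(eps)) @ c_b_to_nav
    # The first-order correction is only approximately orthonormal;
    # project back onto the nearest rotation via SVD.
    u, _, vt = np.linalg.svd(c_corr)
    return v_corr, p_corr, u @ vt
```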

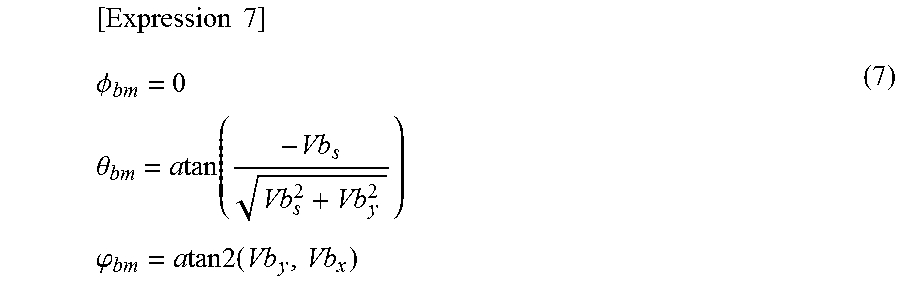

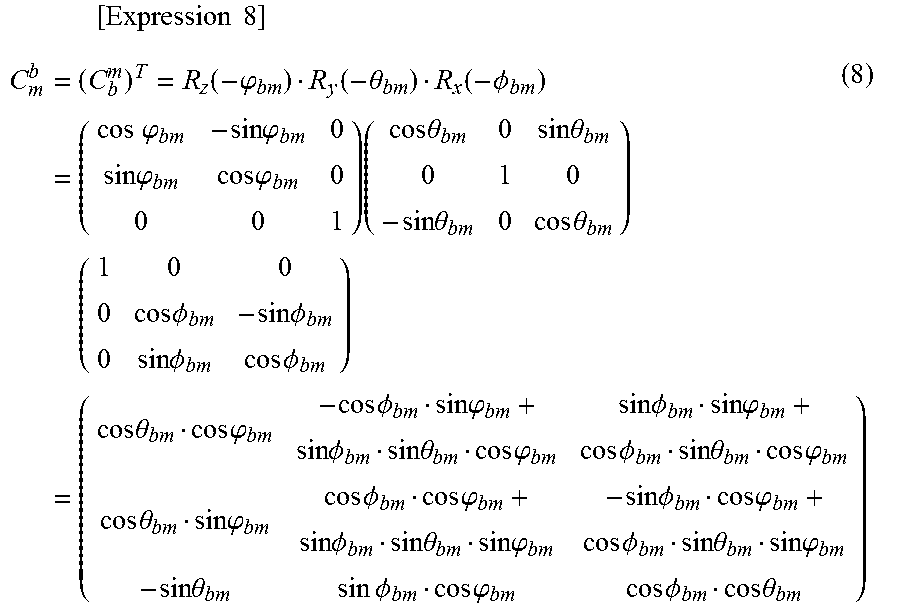

The integral processing portion 220 also calculates a coordinate transformation matrix C_b^m from the b frame into the m frame and a coordinate transformation matrix C_e^m from the e frame into the m frame. These matrices are used as coordinate transformation information in the coordinate transformation process of the coordinate transformation portion 280, described later.

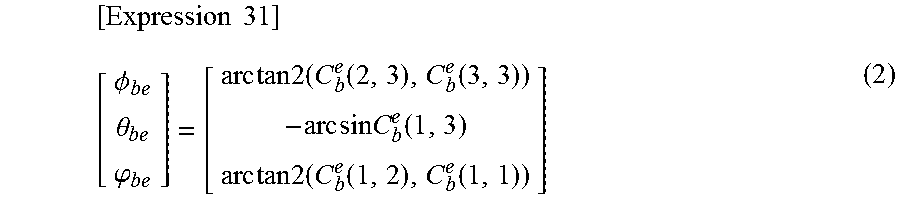

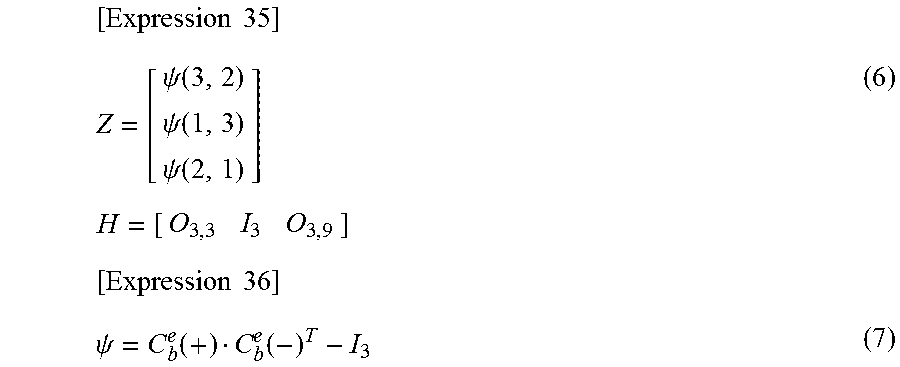

The error estimation portion 230 estimates errors of indexes indicating the state of the user by using the velocity and/or the position and the attitude angles calculated by the integral processing portion 220, the acceleration or the angular velocity corrected by the bias removing portion 210, and a reference azimuth angle calculated by the azimuth angle transformation portion 270, described later. In the present embodiment, the error estimation portion 230 uses the velocity, the attitude angles, the acceleration, the angular velocity, and the position as the indexes indicating the state of the user, and estimates their errors with an extended Kalman filter. That is, the state variables of the extended Kalman filter are the error δv^e of the velocity v^e calculated by the integral processing portion 220 (velocity error), the errors ε^e of the attitude angles calculated by the integral processing portion 220 (attitude angle errors), the acceleration bias b_a, the angular velocity bias b_ω, and the error δp^e of the position p^e calculated by the integral processing portion 220 (position error). The state vector X is defined as in Equation (3).

$$
X = \begin{bmatrix} \delta v^e \\ \varepsilon^e \\ b_a \\ b_\omega \\ \delta p^e \end{bmatrix}
\tag{3}
$$

The error estimation portion 230 predicts the state variables included in the state vector X (the errors of the indexes indicating the state of the user) by using the prediction formulae of the extended Kalman filter, expressed as Equation (4). In Equation (4), the matrix Φ relates the previous state vector X to the present state vector X; some of its elements change from moment to moment to reflect the attitude angles, the position, and the like. Q is a matrix representing process noise, each element of which is set to an appropriate value. P is the error covariance matrix of the state variables.

$$
X = \Phi X, \qquad P = \Phi P \Phi^{T} + Q
\tag{4}
$$
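The prediction formulae translate directly into NumPy. In the sketch below, the 15-element index layout follows the order of Equation (3) (three components each for δv^e, ε^e, b_a, b_ω, and δp^e), but the slice names are hypothetical, and building the time-varying Φ and Q is left to the caller.

```python
import numpy as np

# Hypothetical index layout for the state vector of Equation (3).
DV, EPS, BA, BW, DP = slice(0, 3), slice(3, 6), slice(6, 9), slice(9, 12), slice(12, 15)

def ekf_predict(x, P, Phi, Q):
    """Prediction step of Equation (4): X <- Phi X, P <- Phi P Phi^T + Q."""
    x_pred = Phi @ x
    P_pred = Phi @ P @ Phi.T + Q
    return x_pred, P_pred
```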

The error estimation portion 230 updates (corrects) the predicted state variables (the errors of the indexes indicating the state of the user) by using the update formulae of the extended Kalman filter, expressed as Equation (5). Z and H are the observation vector and the observation matrix, respectively. The update formulae (5) correct the state vector X by using the difference between the actual observation vector Z and the vector HX predicted from the state vector X. R is the covariance matrix of the observation errors and may be constant or may be changed dynamically. K is the Kalman gain, and K increases as R decreases. From Equation (5), as K increases (R decreases), the correction applied to the state vector X increases, and P decreases accordingly.

$$
K = P H^{T}\left(H P H^{T} + R\right)^{-1}, \qquad X = X + K\left(Z - H X\right), \qquad P = \left(I - K H\right) P
\tag{5}
$$
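A minimal NumPy sketch of the update formulae of Equation (5) follows; the function name is hypothetical. The explicit inverse mirrors the equation. It is acceptable here because the innovation covariance is small (a single azimuth observation in this embodiment), although `np.linalg.solve` is the usual choice for larger systems.

```python
import numpy as np

def ekf_update(x, P, z, H, R):
    """Update step of Equation (5); returns the corrected state, covariance and gain."""
    S = H @ P @ H.T + R                  # innovation covariance
    K = P @ H.T @ np.linalg.inv(S)       # Kalman gain
    x_new = x + K @ (z - H @ x)          # correct with the observation residual
    P_new = (np.eye(len(x)) - K @ H) @ P
    return x_new, P_new, K
```

With a scalar state where P = H = 1, the gain is 1/(1 + R): it is 0.5 for R = 1 and rises to 0.8 for R = 0.25, which matches the statement that K increases as R decreases.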

In the present embodiment, the error estimation portion 230 works on two premises: the azimuth angle calculated from the detection result of the inertial measurement unit 10 is the same as the azimuth angle of the inertial measurement unit 10 calculated from GPS data, and the latter is the true azimuth angle (reference azimuth angle). Under these premises, the error estimation portion 230 uses the difference between the two azimuth angles as the observation vector Z and applies the extended Kalman filter to estimate the state vector X.
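Forming the azimuth observation Z can be sketched as follows. The function names are hypothetical, and azimuths are assumed to be in radians. The wrapping step is an assumption the text does not spell out: it keeps the difference inside [-π, π), so that, for example, 350° and 10° differ by -20° rather than by 340°.

```python
import math

def wrap_angle(a):
    """Wrap an angle in radians into [-pi, pi)."""
    return (a + math.pi) % (2.0 * math.pi) - math.pi

def azimuth_observation(psi_imu, psi_ref):
    """Observation Z: IMU-derived azimuth minus the reference azimuth, wrapped."""
    return wrap_angle(psi_imu - psi_ref)
```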

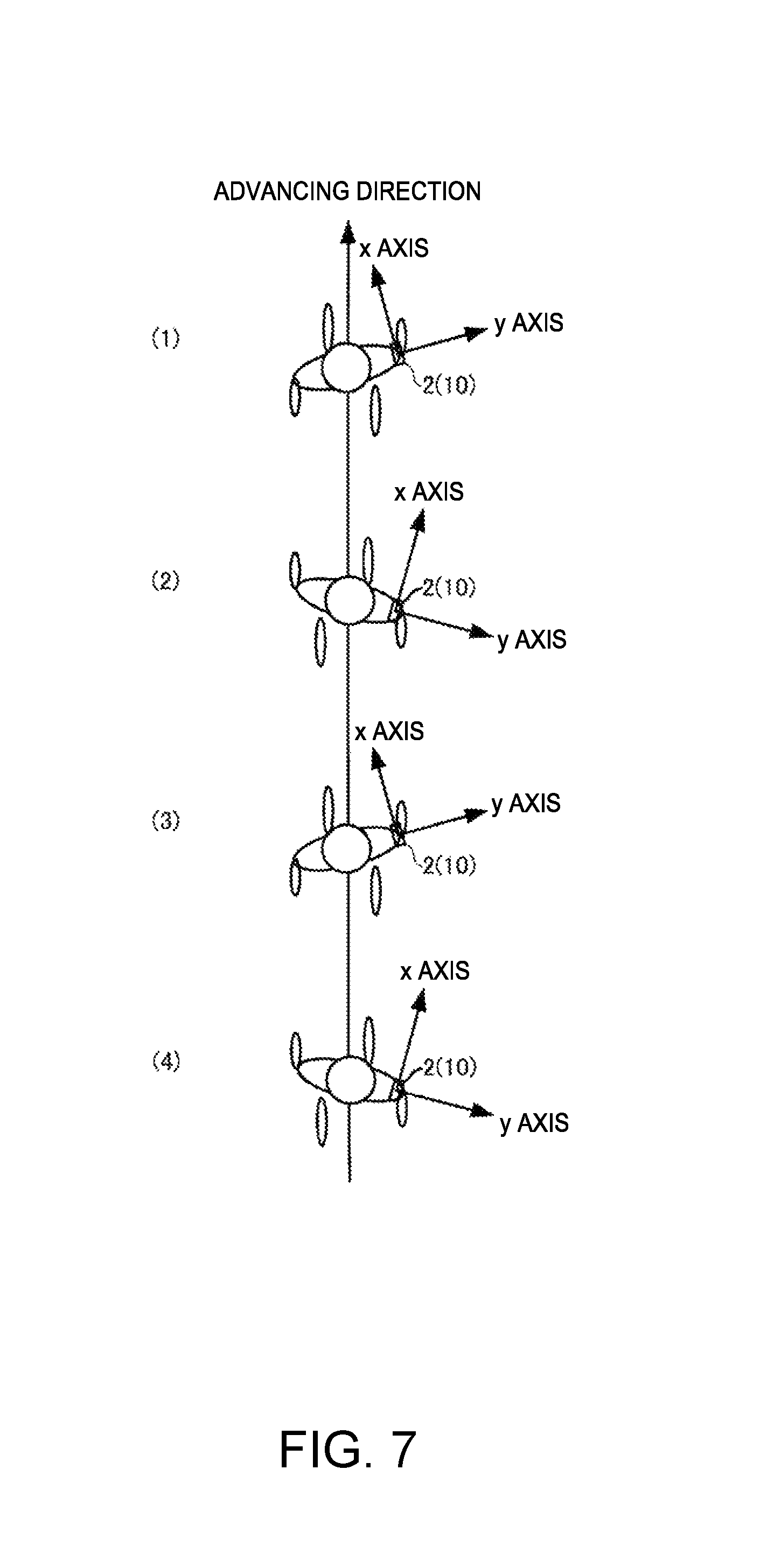

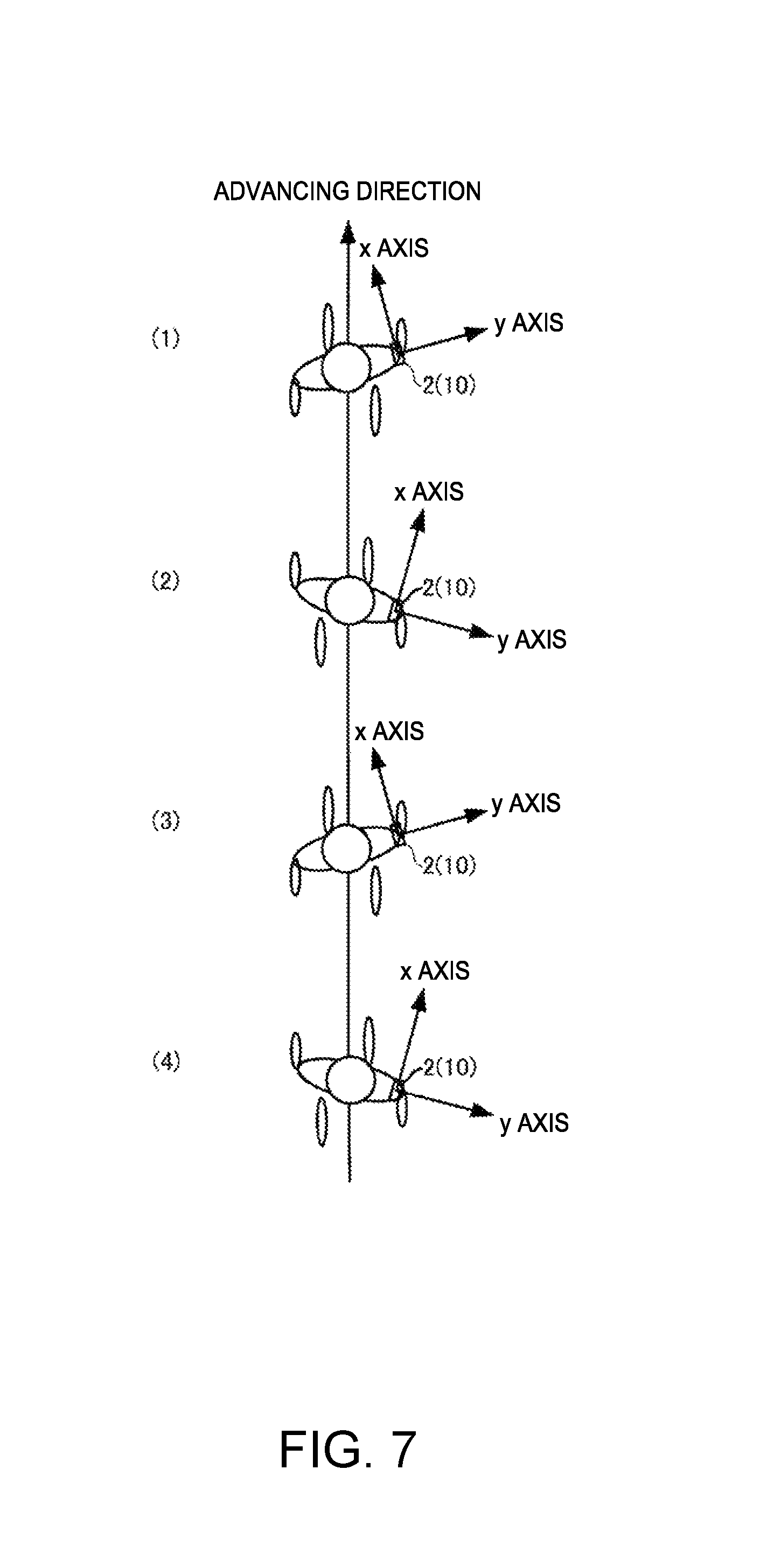

Meanwhile, when the user walks straight ahead, the advancing direction is nearly constant, but the attitude of the inertial measurement unit 10 changes periodically with the user's motion. FIG. 7 is an overhead view of the movement of a user who wears the motion analysis apparatus 2 (IMU 10) on the right side of the waist and walks straight ahead. As illustrated in FIG. 7, the attitude of the inertial measurement unit 10 relative to the user changes continuously during walking. When the user steps forward with the right foot, as illustrated in (2) or (4) of FIG. 7, the inertial measurement unit 10 is tilted to the right with respect to the advancing direction (the x axis of the m frame). Conversely, when the user steps forward with the left foot, as illustrated in (1) or (3) of FIG. 7, the inertial measurement unit 10 is tilted to the left with respect to the advancing direction (the x axis of the m frame).

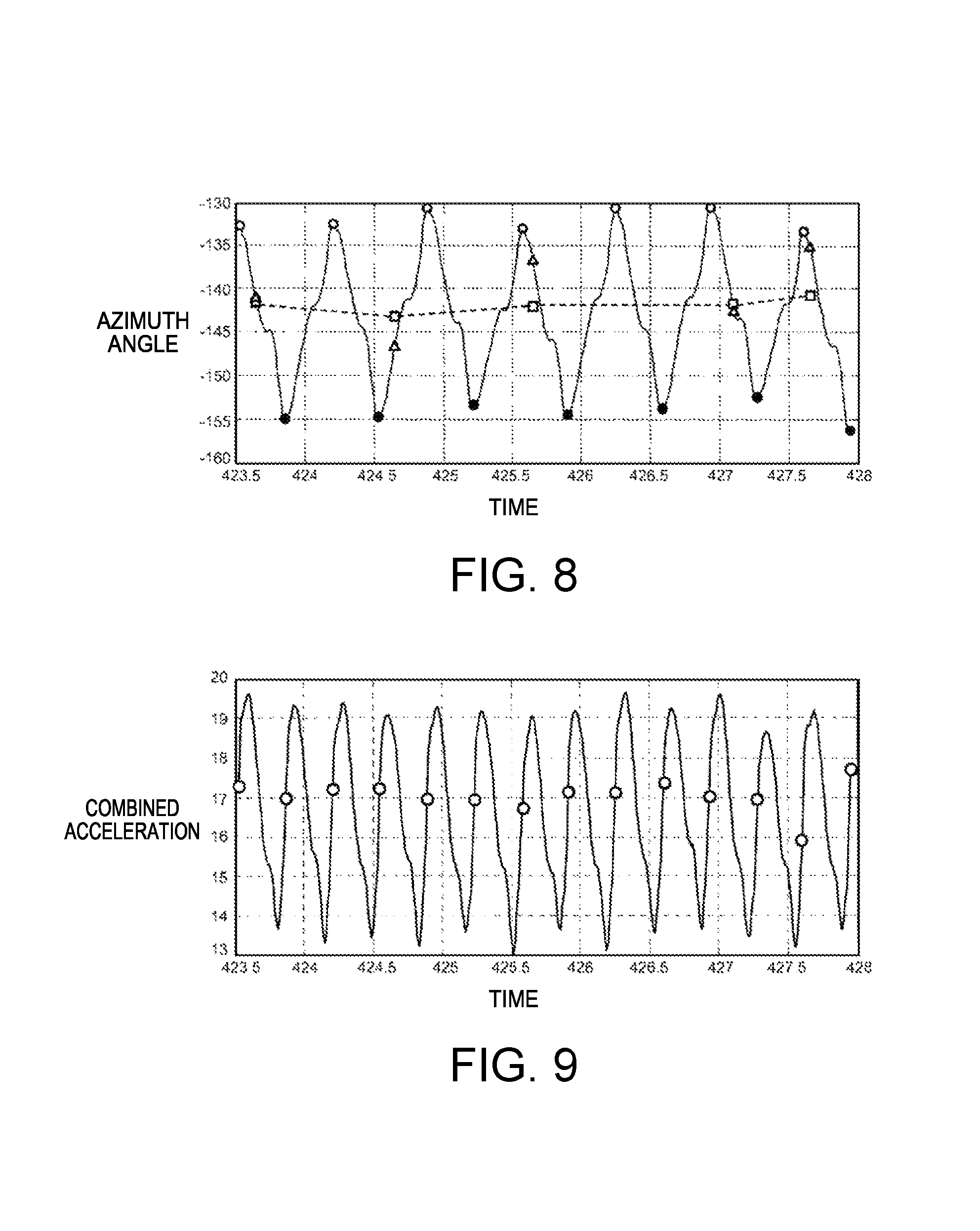

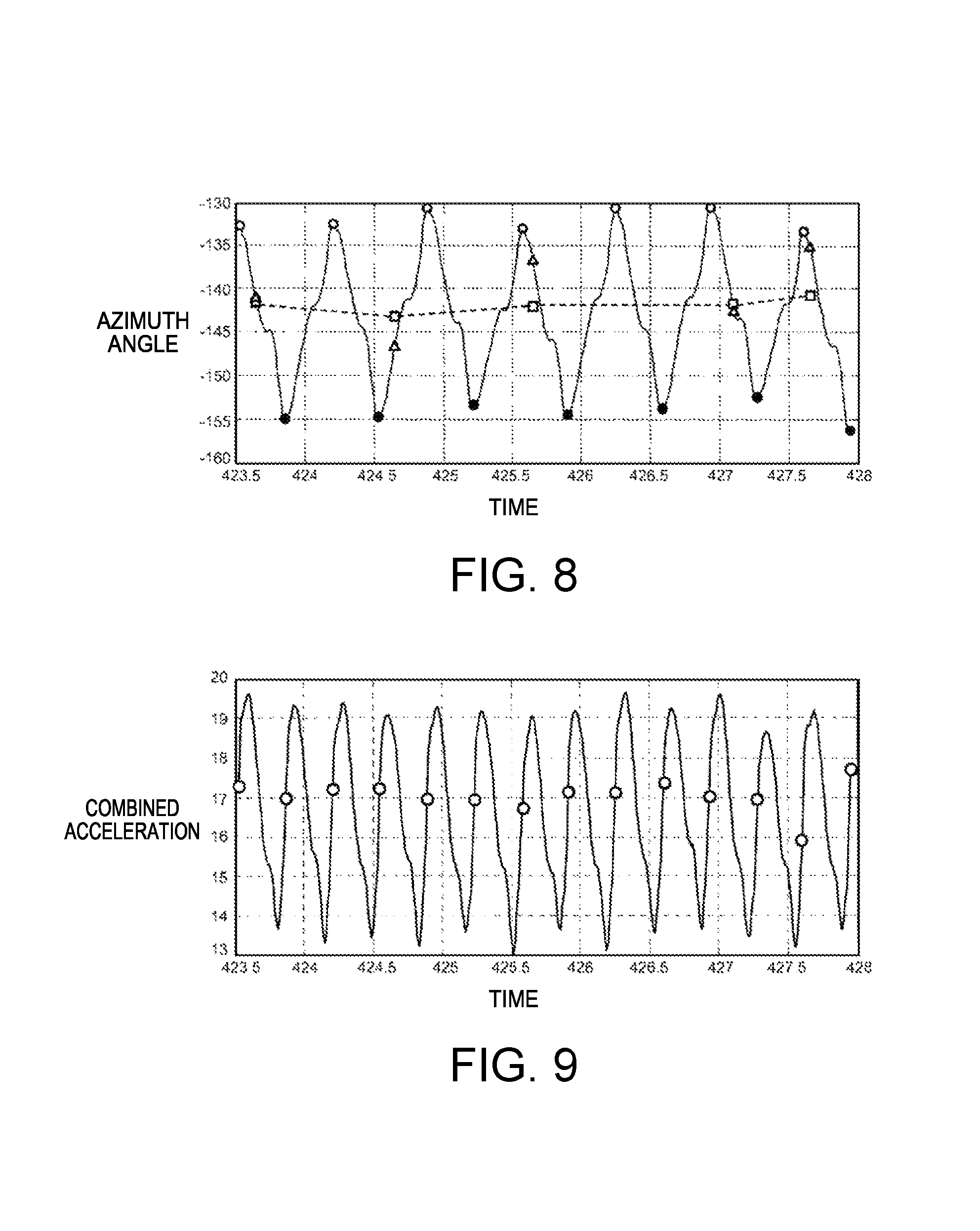

In other words, as the user walks, the azimuth angle of the inertial measurement unit 10 changes periodically with a period of two steps (one left and one right step), so the azimuth angle calculated from the detection result of the inertial measurement unit 10 also changes periodically. In contrast, the user's advancing direction is substantially constant, so the azimuth angle calculated from GPS data is also substantially constant. FIG. 8 illustrates examples of the azimuth angle of the inertial measurement unit 10 and the azimuth angle of the advancing direction while the user walks straight ahead; the horizontal axis represents time and the vertical axis represents the azimuth angle. In FIG. 8, the solid line indicates the azimuth angle of the inertial measurement unit 10, and the dashed line indicates the azimuth angle of the advancing direction. As illustrated in FIG. 8, the azimuth angle of the inertial measurement unit 10 changes periodically every two steps. For example, it reaches a maximum (indicated by O) when the user steps forward with the right foot and a minimum (indicated by ) when the user steps forward with the left foot, whereas the azimuth angle of the advancing direction remains substantially constant.

Therefore, to satisfy the above premises for estimating the state vector X, the error estimation needs information on the difference between the azimuth angle of the inertial measurement unit and the azimuth angle of the advancing direction calculated from GPS data. This information is the coordinate transformation matrix C_m^b between the b frame and the m frame. However, as illustrated in FIG. 8, GPS data is obtained at irregular timings. As a result, the difference between the azimuth angle of the inertial measurement unit 10 (indicated by the triangular mark) and the azimuth angle calculated from the GPS data (indicated by the square mark) is not constant across the timings at which GPS data is obtained. Consequently, performing error estimation with the extended Kalman filter whenever GPS data is obtained would require recalculating the coordinate transformation matrix C_m^b each time, which increases the processing load.

Therefore, the present embodiment exploits the fact that the azimuth angle of the inertial measurement unit 10 changes periodically every two steps as the user walks, and computes the coordinate transformation matrix C_m^b only once, at a predetermined timing in the walking cycle. Thereafter, whenever GPS data is obtained, a reference azimuth angle is calculated by using C_m^b at the next predetermined timing in the walking cycle (the same phase of the cycle as when C_m^b was computed), and the state vector X is estimated. In this way, C_m^b need not be recomputed each time GPS data is obtained, which considerably reduces the processing load.
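The scheduling idea can be sketched in Python. This is a deliberate simplification, not the embodiment's method: a single yaw offset stands in for the full matrix C_m^b, and the class and method names are hypothetical. The offset is computed once at the predetermined gait timing. Afterwards, each GPS azimuth is held until the next occurrence of that timing and converted into a reference azimuth there.

```python
import math

def _wrap(a):
    """Wrap an angle in radians into [-pi, pi)."""
    return (a + math.pi) % (2.0 * math.pi) - math.pi

class ReferenceAzimuthScheduler:
    """Hypothetical yaw-only stand-in for computing C_m^b once and reusing it."""

    def __init__(self):
        self.offset = None       # IMU azimuth minus advancing-direction azimuth at the gait timing
        self.pending_gps = None  # latest GPS azimuth waiting for the next gait timing

    def on_gps(self, psi_gps):
        """Record the advancing-direction azimuth from newly obtained GPS data."""
        self.pending_gps = psi_gps

    def on_gait_timing(self, psi_imu):
        """Call at the predetermined timing of each walking cycle.

        Returns the observation Z, or None when no estimation should run.
        """
        if self.pending_gps is None:
            return None
        if self.offset is None:
            # First occurrence: compute the offset once; it is reused from now on.
            self.offset = _wrap(psi_imu - self.pending_gps)
            self.pending_gps = None
            return None
        psi_ref = _wrap(self.pending_gps + self.offset)  # reference azimuth
        self.pending_gps = None
        return _wrap(psi_imu - psi_ref)
```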

If the user changes the advancing direction between the timing at which GPS data is obtained and the next predetermined timing in the walking cycle, the difference between the GPS azimuth angle and the azimuth angle of the inertial measurement unit is no longer the same as when the coordinate transformation matrix was calculated. In that case, using the reference azimuth angle calculated from the most recently obtained GPS data at the predetermined timing may considerably reduce the estimation accuracy of the state vector X. Therefore, in the present embodiment, it is determined whether or not the user is advancing straight. If the user changes the advancing direction between the timing at which GPS data was obtained and the next predetermined timing in the walking cycle, the state vector X is not estimated at that predetermined timing.
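The text does not say how straight walking is determined, so the following Python sketch uses a hypothetical test. It compares IMU azimuths sampled at the same gait phase in consecutive walking cycles, where the periodic sway of FIG. 8 largely cancels and a real turn does not. The 10° threshold is an illustrative assumption.

```python
import math

def is_advancing_straight(psi_prev_phase, psi_curr_phase, threshold_rad=math.radians(10.0)):
    """Hypothetical straightness check between two same-phase IMU azimuths (radians).

    Returns False when the heading changed by at least the threshold, in which
    case the state vector estimation at this timing would be skipped.
    """
    change = (psi_curr_phase - psi_prev_phase + math.pi) % (2.0 * math.pi) - math.pi
    return abs(change) < threshold_rad
```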

Referring to FIG. 6 again, the walking detection portion 240 detects a cycle of two steps (one left and one right step) as a walking cycle (walking timing) by using the detection result of the inertial measurement unit 10 (specifically, the sensing data corrected by the bias removing portion 210). FIG. 9 illustrates an example of the combined acceleration of the three-axis accelerations detected by the inertial measurement unit 10 while the user walks; the horizontal axis represents time and the vertical axis represents the value of the combined acceleration. As illustrated in FIG. 9, the combined acceleration changes periodically. The timing at which it passes the intermediate value between its minimum and maximum coincides with the timing at which the azimuth angle in FIG. 8 reaches its maximum or minimum. Therefore, for example, when the difference (amplitude) between the maximum and minimum values of the combined acceleration is equal to or greater than a threshold value, the walking detection portion 240 can detect a walking cycle at every other timing at which the combined acceleration reaches that intermediate value. For example, the walking detection portion 240 may store the intermediate value obtained when the combined acceleration most recently changed from its minimum to its maximum (or from its maximum to its minimum). It may then detect a walking cycle at every other time the combined acceleration exceeds that intermediate value (O in FIG. 9).
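The detection rule can be sketched in Python. Several details are assumptions rather than details given in the text. The combined acceleration is taken to be the vector magnitude. Local extrema are found by comparing neighbouring samples. Only upward crossings of the stored intermediate value are counted, and every other counted crossing is reported as one walking cycle (two steps). The function name is hypothetical.

```python
import math

def detect_walking_cycles(samples, amp_threshold):
    """Return sample indices of detected walking-cycle timings (one per two steps).

    samples: sequence of (ax, ay, az) bias-corrected accelerations.
    """
    mags = [math.sqrt(ax * ax + ay * ay + az * az) for ax, ay, az in samples]
    events = []
    crossings = 0
    last_min = last_max = None
    for i in range(1, len(mags) - 1):
        prev, cur, nxt = mags[i - 1], mags[i], mags[i + 1]
        if cur <= prev and cur < nxt:
            last_min = cur  # most recent local minimum
        elif cur >= prev and cur > nxt:
            last_max = cur  # most recent local maximum
        if last_min is None or last_max is None or last_max - last_min < amp_threshold:
            continue  # amplitude too small (or not yet known): not walking
        mid = 0.5 * (last_min + last_max)  # intermediate value
        if prev < mid <= cur:  # combined acceleration exceeds the intermediate value
            crossings += 1
            if crossings % 2 == 1:  # every other crossing marks one walking cycle
                events.append(i)
    return events
```

For a synthetic signal that oscillates once per step with a period of 20 samples, the detected timings come out 40 samples apart, one per two-step walking cycle.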