Systems and methods for providing integrated security management

Roundy, et al.

U.S. patent number 10,242,187 [Application Number 15/265,750] was granted by the patent office on 2019-03-26 for systems and methods for providing integrated security management. This patent grant is currently assigned to Symantec Corporation. The grantee listed for this patent is Symantec Corporation. Invention is credited to Matteo Dell'Amico, Chris Gates, Michael Hart, Stanislav Miskovic, Kevin Roundy.

| United States Patent | 10,242,187 |
|---|---|
| Roundy, et al. | March 26, 2019 |

Systems and methods for providing integrated security management

Abstract

The disclosed computer-implemented method for providing integrated security management may include (1) identifying a computing environment protected by security systems and monitored by a security management system that receives event signatures from the security systems, where a first security system uses a first event signature naming scheme that differs from a second event signature naming scheme used by a second security system, (2) observing a first event signature that originates from the first security system and uses the first event signature naming scheme, (3) determining that the first event signature is equivalent to a second event signature that uses the second event signature naming scheme, and (4) performing, in connection with observing the first event signature, a security action associated with the second event signature and directed to the computing environment. Various other methods, systems, and computer-readable media are also disclosed.

| Inventors: | Roundy; Kevin (El Segundo, CA), Dell'Amico; Matteo (Valbonne, FR), Gates; Chris (Venice, CA), Hart; Michael (Farmington, CT), Miskovic; Stanislav (San Jose, CA) |
|---|---|
| Applicant: | |
| Assignee: | Symantec Corporation (Mountain View, CA) |
| Family ID: | 65811643 |
| Appl. No.: | 15/265,750 |
| Filed: | September 14, 2016 |
| Current U.S. Class: | 1/1 |
| Current CPC Class: | G06F 21/564 (20130101); G06F 21/552 (20130101); G06F 2221/034 (20130101) |
| Current International Class: | G06F 21/55 (20130101); G06F 21/56 (20130101) |
| Field of Search: | ;726/1 |


Primary Examiner: Zand; Kambiz

Assistant Examiner: Ahmed; Mahabub S

Attorney, Agent or Firm: FisherBroyles, LLP

Claims

What is claimed is:

1. A computer-implemented method for providing integrated security management, at least a portion of the method being performed by a computing device comprising at least one processor, the method comprising: identifying a computing environment protected by a plurality of security systems and monitored by a security management system that receives event signatures from each of the plurality of security systems, wherein the plurality of security systems includes a first security system and a second security system that respectively use a first naming scheme and a second naming scheme to name event signatures, the first and second naming schemes differing from each other; observing a first event signature that originates from the first security system, the first event signature having been given a first event signature name by the first security system according to the first naming scheme; determining that the first event signature is equivalent to a second event signature that has been given a second event signature name by the second security system according to the second naming scheme, the second event signature name being different than the first event signature name; and performing, in connection with observing the first event signature, a security action associated with the second event signature and directed to the computing environment based on determining that the first event signature is equivalent to the second event signature even though the first and second event signature names are different.

2. The computer-implemented method of claim 1, wherein determining that the first event signature that uses the first event signature naming scheme is equivalent to the second event signature that uses the second event signature naming scheme comprises: identifying a first co-occurrence profile that describes how frequently the first event signature co-occurs with each of a set of event signatures; identifying a second co-occurrence profile that describes how frequently the second event signature co-occurs with each of the set of event signatures; and determining that a similarity between the first co-occurrence profile and the second co-occurrence profile exceeds a predetermined threshold.

3. The computer-implemented method of claim 2, wherein the first co-occurrence profile describes how frequently the first event signature co-occurs with each of the set of event signatures by describing how frequently each of the set of event signatures is observed within a common computing environment and within a predetermined time window when the first event signature occurs within the common computing environment.

4. The computer-implemented method of claim 1, wherein determining that the first event signature that uses the first event signature naming scheme is equivalent to the second event signature that uses the second event signature naming scheme comprises: comparing a name of the first event signature with a name of the second event signature according to a similarity metric; and determining, based on comparing the name of the first event signature with the name of the second event signature according to the similarity metric, that a similarity of the name of the first event signature and the name of the second event signature according to the similarity metric exceeds a predetermined threshold.

5. The computer-implemented method of claim 4, wherein comparing the name of the first event signature with the name of the second event signature comprises: identifying a first plurality of terms within the name of the first event signature; identifying a second plurality of terms within the name of the second event signature; weighting each term within the first plurality of terms and the second plurality of terms according to an inverse frequency with which each term appears within event signature names produced by the plurality of security systems; and comparing the first plurality of terms as weighted with the second plurality of terms as weighted.

6. The computer-implemented method of claim 4, wherein comparing the name of the first event signature with the name of the second event signature comprises: identifying a first term within the name of the first event signature; identifying a second term within the name of the second event signature; determining that the first term and the second term are synonymous; and treating the first term and the second term as equivalent when comparing the name of the first event signature with the name of the second event signature based on determining that the first term and the second term are synonymous.

7. The computer-implemented method of claim 6, wherein determining that the first term and the second term are synonymous comprises: providing a malware sample to each of a plurality of malware detection systems; and determining that a first malware detection system within the plurality of malware detection systems identifies the malware sample using the first term and a second malware detection system within the plurality of malware detection systems identifies the malware sample using the second term.

8. The computer-implemented method of claim 1, wherein determining that the first event signature that uses the first event signature naming scheme is equivalent to the second event signature that uses the second event signature naming scheme comprises: identifying a signature association statistic that describes how frequently the first event signature is observed in association with observing the second event signature and how frequently the second event signature is observed in association with observing the first event signature; and determining that the signature association statistic exceeds a predetermined threshold.

9. The computer-implemented method of claim 1, wherein performing, in connection with observing the first event signature, the security action associated with the second event signature and directed to the computing environment based on determining that the first event signature is equivalent to the second event signature comprises: identifying a security rule that specifies performing the security action at least in part in response to observing the second event signature; and enhancing the security rule to respond to the first event signature with the security action.

10. The computer-implemented method of claim 1, wherein performing, in connection with observing the first event signature, the security action associated with the second event signature and directed to the computing environment based on determining that the first event signature is equivalent to the second event signature comprises: identifying a first statistical description of how reliably the first event signature indicates a predetermined security condition; identifying a second statistical description of how reliably the second event signature indicates the predetermined security condition; and attributing a confidence level to an existence of the predetermined security condition within the computing environment at least in part by generating a merged statistical description of how reliably a class of event signatures that comprises the first event signature and the second event signature indicates the predetermined security condition and using the merged statistical description to generate the confidence level upon observing the first event signature within the computing environment.

11. The computer-implemented method of claim 1, wherein performing, in connection with observing the first event signature, the security action associated with the second event signature and directed to the computing environment based on determining that the first event signature is equivalent to the second event signature comprises: determining that the plurality of security systems within the computing environment collectively support reporting the second event signature to the security management system; determining, based on determining that the first event signature is equivalent to the second event signature and based on determining that the plurality of security systems collectively support reporting the second event signature, that the plurality of security systems effectively support reporting the first event signature to the security management system; and identifying a recommended security product for the computing environment in light of determining that the plurality of security systems effectively support reporting the first event signature to the security management system.

12. A system for providing integrated security management, the system comprising: an identification module, stored in memory, that identifies a computing environment protected by a plurality of security systems and monitored by a security management system that receives event signatures from each of the plurality of security systems, wherein the plurality of security systems includes a first security system and a second security system that respectively use a first naming scheme and a second naming scheme to name event signatures, the first and second naming schemes differing from each other; an observation module, stored in memory, that observes a first event signature that originates from the first security system, the first event signature having been given a first event signature name by the first security system according to the first naming scheme; a determination module, stored in memory, that determines that the first event signature is equivalent to a second event signature that has been given a second event signature name by the second security system according to the second naming scheme, the second event signature name being different than the first event signature name; a performing module, stored in memory, that performs, in connection with observing the first event signature, a security action associated with the second event signature and directed to the computing environment based on determining that the first event signature is equivalent to the second event signature even though the first and second event signature names are different; and at least one physical processor configured to execute the identification module, the observation module, the determination module, and the performing module.

13. The system of claim 12, wherein the determination module determines that the first event signature that uses the first event signature naming scheme is equivalent to the second event signature that uses the second event signature naming scheme by: identifying a first co-occurrence profile that describes how frequently the first event signature co-occurs with each of a set of event signatures; identifying a second co-occurrence profile that describes how frequently the second event signature co-occurs with each of the set of event signatures; and determining that a similarity between the first co-occurrence profile and the second co-occurrence profile exceeds a predetermined threshold.

14. The system of claim 13, wherein the first co-occurrence profile describes how frequently the first event signature co-occurs with each of the set of event signatures by describing how frequently each of the set of event signatures is observed within a common computing environment and within a predetermined time window when the first event signature occurs within the common computing environment.

15. The system of claim 12, wherein the determination module determines that the first event signature that uses the first event signature naming scheme is equivalent to the second event signature that uses the second event signature naming scheme by: comparing a name of the first event signature with a name of the second event signature according to a similarity metric; and determining, based on comparing the name of the first event signature with the name of the second event signature according to the similarity metric, that a similarity of the name of the first event signature and the name of the second event signature according to the similarity metric exceeds a predetermined threshold.

16. The system of claim 15, wherein the determination module compares the name of the first event signature with the name of the second event signature by: identifying a first plurality of terms within the name of the first event signature; identifying a second plurality of terms within the name of the second event signature; weighting each term within the first plurality of terms and the second plurality of terms according to an inverse frequency with which each term appears within event signature names produced by the plurality of security systems; and comparing the first plurality of terms as weighted with the second plurality of terms as weighted.

17. The system of claim 15, wherein the determination module compares the name of the first event signature with the name of the second event signature by: identifying a first term within the name of the first event signature; identifying a second term within the name of the second event signature; determining that the first term and the second term are synonymous; and treating the first term and the second term as equivalent when comparing the name of the first event signature with the name of the second event signature based on determining that the first term and the second term are synonymous.

18. The system of claim 17, wherein the determination module determines that the first term and the second term are synonymous by: providing a malware sample to each of a plurality of malware detection systems; and determining that a first malware detection system within the plurality of malware detection systems identifies the malware sample using the first term and a second malware detection system within the plurality of malware detection systems identifies the malware sample using the second term.

19. The system of claim 12, wherein the determination module determines that the first event signature that uses the first event signature naming scheme is equivalent to the second event signature that uses the second event signature naming scheme by: identifying a signature association statistic that describes how frequently the first event signature is observed in association with observing the second event signature and how frequently the second event signature is observed in association with observing the first event signature; and determining that the signature association statistic exceeds a predetermined threshold.

20. A non-transitory computer-readable medium comprising one or more computer-readable instructions that, when executed by at least one processor of a computing device, cause the computing device to: identify a computing environment protected by a plurality of security systems and monitored by a security management system that receives event signatures from each of the plurality of security systems, wherein the plurality of security systems includes a first security system and a second security system that respectively use a first naming scheme and a second naming scheme to name event signatures, the first and second naming schemes differing from each other; observe a first event signature that originates from the first security system, the first event signature having been given a first event signature name by the first security system according to the first naming scheme; determine that the first event signature is equivalent to a second event signature that has been given a second event signature name by the second security system according to the second naming scheme, the second event signature name being different than the first event signature name; and perform, in connection with observing the first event signature, a security action associated with the second event signature and directed to the computing environment based on determining that the first event signature is equivalent to the second event signature even though the first and second event signature names are different.

Description

BACKGROUND

Individuals and organizations often seek to protect their computing resources from security threats and corresponding attackers. Accordingly, enterprise organizations may employ a variety of security product solutions, such as endpoint antivirus products and network firewall products. In some examples, a security vendor, acting as a managed security service provider, may effectively manage a bundle of security services for a client. More specifically, in some examples, the managed security service provider may aggregate security incident signatures from a variety of endpoint security products and software security agents, thereby providing a more comprehensive and informative overview of computing resource security and relevant security incidents.

However, frequently an organization may deploy heterogeneous security products from different vendors and/or using differing classification and reporting schemes. The resulting heterogeneous signature sources to be consumed by the managed security service provider may include redundant information that appears distinct, thereby potentially reducing the effectiveness of the managed security service and/or adding confusion, expense, uncertainty, inconsistency, and human error to the administration and configuration of the managed security service.

The instant disclosure, therefore, identifies and addresses a need for systems and methods for providing integrated security management.

SUMMARY

As will be described in greater detail below, the instant disclosure describes various systems and methods for providing integrated security management.

In one example, a computer-implemented method for providing integrated security management may include (i) identifying a computing environment protected by a group of security systems and monitored by a security management system that receives event signatures from each of the security systems, where a first security system within the security systems uses a first event signature naming scheme that differs from a second event signature naming scheme used by a second security system within the security systems, (ii) observing a first event signature that originates from the first security system and uses the first event signature naming scheme, (iii) determining that the first event signature is equivalent to a second event signature that uses the second event signature naming scheme, and (iv) performing, in connection with observing the first event signature, a security action associated with the second event signature and directed to the computing environment based on determining that the first event signature is equivalent to the second event signature.

In some examples, determining that the first event signature that uses the first event signature naming scheme is equivalent to the second event signature that uses the second event signature naming scheme may include: (i) identifying a first co-occurrence profile that describes how frequently the first event signature co-occurs with each of a set of event signatures, (ii) identifying a second co-occurrence profile that describes how frequently the second event signature co-occurs with each of the set of event signatures, and (iii) determining that a similarity between the first co-occurrence profile and the second co-occurrence profile exceeds a predetermined threshold.

In one embodiment, the first co-occurrence profile describes how frequently the first event signature co-occurs with each of the set of event signatures by describing how frequently each of the set of event signatures is observed within a common computing environment and within a predetermined time window when the first event signature occurs within the common computing environment.
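By way of a non-limiting illustration, the co-occurrence comparison described above might be sketched as follows. The event tuple layout, the function names, the use of cosine similarity as the similarity measure, and the 0.9 threshold are all assumptions made for this sketch and are not specified by the disclosure; the signature names in the usage note are likewise hypothetical.

```python
from collections import Counter, defaultdict
from math import sqrt


def cooccurrence_profiles(events, window):
    """Build one co-occurrence profile per event signature.

    events: iterable of (environment_id, timestamp, signature) tuples.
    window: maximum time difference for two events to count as co-occurring.
    Returns {signature: {other: fraction of the signature's occurrences that
    had `other` in the same environment within the window}}.
    """
    by_env = defaultdict(list)
    for env, ts, sig in events:
        by_env[env].append((ts, sig))

    occurrences = Counter()
    co_counts = defaultdict(Counter)
    for env_events in by_env.values():
        for ts, sig in env_events:
            occurrences[sig] += 1
            # Distinct other signatures seen near this occurrence.
            nearby = {other for t2, other in env_events
                      if other != sig and abs(t2 - ts) <= window}
            for other in nearby:
                co_counts[sig][other] += 1

    return {sig: {o: c / occurrences[sig] for o, c in co_counts[sig].items()}
            for sig in occurrences}


def cosine_similarity(p, q):
    """Cosine similarity between two sparse profiles (assumed metric)."""
    dot = sum(v * q.get(k, 0.0) for k, v in p.items())
    norm_p = sqrt(sum(v * v for v in p.values()))
    norm_q = sqrt(sum(v * v for v in q.values()))
    return dot / (norm_p * norm_q) if norm_p and norm_q else 0.0


def profiles_match(profiles, sig_a, sig_b, threshold=0.9):
    """True when the two profiles are more similar than the threshold."""
    # Compare over the other signatures only, ignoring the pair itself.
    skip = {sig_a, sig_b}
    p = {k: v for k, v in profiles[sig_a].items() if k not in skip}
    q = {k: v for k, v in profiles[sig_b].items() if k not in skip}
    return cosine_similarity(p, q) > threshold
```

In this sketch, two signatures reported by different products (for example, hypothetical names such as "AV:Trojan.X" and "EDR:Troj/X") would be treated as equivalent when each tends to appear alongside the same other signatures within the time window.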

In some examples, determining that the first event signature that uses the first event signature naming scheme is equivalent to the second event signature that uses the second event signature naming scheme may include: comparing a name of the first event signature with a name of the second event signature according to a similarity metric and determining, based on comparing the name of the first event signature with the name of the second event signature according to the similarity metric, that a similarity of the name of the first event signature and the name of the second event signature according to the similarity metric exceeds a predetermined threshold.

In some examples, comparing the name of the first event signature with the name of the second event signature may include: (i) identifying a first plurality of terms within the name of the first event signature, (ii) identifying a second plurality of terms within the name of the second event signature, (iii) weighting each term within the first plurality of terms and the second plurality of terms according to an inverse frequency with which each term appears within event signature names produced by the security systems, and (iv) comparing the first plurality of terms as weighted with the second plurality of terms as weighted.
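As one hedged example of the inverse-frequency weighting described above, the following sketch tokenizes signature names, weights each term by a smoothed inverse document frequency, and compares two names with a weighted Jaccard overlap. The tokenizer, the particular smoothing formula, and the weighted Jaccard measure are assumptions chosen for illustration; the disclosure does not prescribe a specific formula.

```python
import math
import re
from collections import Counter


def tokenize(name):
    """Split a signature name into lowercase alphanumeric terms."""
    return [t for t in re.split(r"[^a-z0-9]+", name.lower()) if t]


def inverse_frequency_weights(all_names):
    """Weight each term by a smoothed inverse of how many names contain it."""
    doc_freq = Counter()
    for name in all_names:
        doc_freq.update(set(tokenize(name)))
    n = len(all_names)
    return {term: math.log((1 + n) / (1 + df)) + 1.0
            for term, df in doc_freq.items()}


def weighted_name_similarity(name_a, name_b, weights, default_weight=1.0):
    """Weighted Jaccard overlap: rare shared terms count for more."""
    terms_a, terms_b = set(tokenize(name_a)), set(tokenize(name_b))

    def weight(term):
        return weights.get(term, default_weight)

    shared = sum(weight(t) for t in terms_a & terms_b)
    total = sum(weight(t) for t in terms_a | terms_b)
    return shared / total if total else 0.0
```

Under this sketch, a term that appears in most signature names (such as a generic word like "trojan") contributes less to the similarity than a rarer, more distinctive term, so two names that share only a generic term score lower than two names that share a distinctive one.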

In some examples, comparing the name of the first event signature with the name of the second event signature may include: (i) identifying a first term within the name of the first event signature, (ii) identifying a second term within the name of the second event signature, (iii) determining that the first term and the second term are synonymous, and (iv) treating the first term and the second term as equivalent when comparing the name of the first event signature with the name of the second event signature based on determining that the first term and the second term are synonymous.

In some examples, determining that the first term and the second term are synonymous may include: providing a malware sample to each of a group of malware detection systems and determining that a first malware detection system within the malware detection systems identifies the malware sample using the first term and a second malware detection system within the malware detection systems identifies the malware sample using the second term.
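For illustration only, the synonym determination described above might be approximated as follows: each malware sample is labeled by several detection systems, and two terms applied to the same sample are recorded as candidate synonyms. The input layout, the `min_support` parameter (requiring agreement across more than one sample), and the union-find canonicalization are assumptions added for this sketch and do not appear in the disclosure.

```python
from collections import Counter
from itertools import combinations


def synonym_candidates(sample_labels, min_support=2):
    """Find term pairs that different engines assign to the same samples.

    sample_labels: {sample_id: {engine_name: term}}.
    Returns the set of (term_a, term_b) pairs, sorted within each pair, that
    were applied to the same sample at least `min_support` times.
    """
    pair_counts = Counter()
    for labels in sample_labels.values():
        terms = sorted(set(labels.values()))
        for a, b in combinations(terms, 2):
            pair_counts[(a, b)] += 1
    return {pair for pair, n in pair_counts.items() if n >= min_support}


def canonical_map(pairs):
    """Map every term to one representative of its synonym group."""
    parent = {}

    def find(x):
        parent.setdefault(x, x)
        while parent[x] != x:
            parent[x] = parent[parent[x]]  # path halving
            x = parent[x]
        return x

    for a, b in pairs:
        root_a, root_b = find(a), find(b)
        if root_a != root_b:
            # Keep the alphabetically smaller term as the representative.
            parent[max(root_a, root_b)] = min(root_a, root_b)
    return {term: find(term) for term in list(parent)}
```

The resulting map could then be applied to the terms of each signature name before the names are compared, so that synonymous terms are treated as equivalent.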

In some examples, determining that the first event signature that uses the first event signature naming scheme is equivalent to the second event signature that uses the second event signature naming scheme may include: identifying a signature association statistic that describes how frequently the first event signature is observed in association with observing the second event signature and how frequently the second event signature is observed in association with observing the first event signature and determining that the signature association statistic exceeds a predetermined threshold.
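One possible form of such a signature association statistic, offered as an assumption rather than as the statistic of the disclosure, is the smaller of the two conditional frequencies: how often the second signature accompanies the first, and how often the first accompanies the second. The sketch below assumes that observations have already been grouped into incidents, each represented as a set of signatures.

```python
def association_statistic(incidents, sig_a, sig_b):
    """Symmetric association between two signatures.

    incidents: iterable of sets of signatures observed together.
    Returns min(P(b | a), P(a | b)), or 0.0 if either signature never occurs.
    """
    incidents = list(incidents)
    with_a = sum(1 for s in incidents if sig_a in s)
    with_b = sum(1 for s in incidents if sig_b in s)
    both = sum(1 for s in incidents if sig_a in s and sig_b in s)
    if with_a == 0 or with_b == 0:
        return 0.0
    return min(both / with_a, both / with_b)


def associated(incidents, sig_a, sig_b, threshold=0.7):
    """True when the statistic exceeds the (assumed) threshold."""
    return association_statistic(incidents, sig_a, sig_b) > threshold
```

Taking the minimum requires the association to hold in both directions, which matches the two-way description above; a one-directional association (for example, a rare signature that always accompanies a very common one) would score low.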

In one embodiment, performing, in connection with observing the first event signature, the security action associated with the second event signature and directed to the computing environment based on determining that the first event signature is equivalent to the second event signature may include: identifying a security rule that specifies performing the security action at least in part in response to observing the second event signature and enhancing the security rule to respond to the first event signature with the security action.
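As a minimal sketch of enhancing a security rule in this manner, the example below represents a rule as an action plus a set of triggering signatures and widens each rule to cover every signature in the same equivalence class as one of its existing triggers. The `SecurityRule` class, its fields, and the signature and action names are hypothetical and are introduced only for illustration.

```python
from dataclasses import dataclass, field


@dataclass
class SecurityRule:
    """Hypothetical rule: perform `action` when any trigger is observed."""
    action: str
    triggers: set = field(default_factory=set)

    def matches(self, signature):
        return signature in self.triggers


def enhance_rules(rules, equivalence_classes):
    """Extend each rule to every signature equivalent to an existing trigger."""
    for rule in rules:
        for cls in equivalence_classes:
            if rule.triggers & cls:
                rule.triggers |= cls
    return rules
```

For example, a rule originally written for a second product's signature would, after enhancement, also fire on the first product's equivalent signature without an administrator having to author a duplicate rule.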

In one embodiment, performing, in connection with observing the first event signature, the security action associated with the second event signature and directed to the computing environment based on determining that the first event signature is equivalent to the second event signature may include: (i) identifying a first statistical description of how reliably the first event signature indicates a predetermined security condition, (ii) identifying a second statistical description of how reliably the second event signature indicates the predetermined security condition, and (iii) attributing a confidence level to an existence of the predetermined security condition within the computing environment at least in part by generating a merged statistical description of how reliably a class of event signatures that includes the first event signature and the second event signature indicates the predetermined security condition and using the merged statistical description to generate the confidence level upon observing the first event signature within the computing environment.
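The merging of statistical descriptions might, under simplifying assumptions, be sketched as pooling per-signature counts. Here each statistical description is assumed to be a pair of counts (observations in which the security condition was confirmed, and total observations), and the confidence level is assumed to be a smoothed ratio of the pooled counts. The `Reliability` class and the smoothing prior are hypothetical choices for this sketch.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Reliability:
    """Hypothetical statistical description of one signature (or class)."""
    confirmed: int  # observations where the security condition was confirmed
    observed: int   # total observations of the signature

    def merge(self, other):
        """Pool the counts of two equivalent signatures into one class."""
        return Reliability(self.confirmed + other.confirmed,
                           self.observed + other.observed)

    def confidence(self, prior_confirmed=1, prior_observed=2):
        """Smoothed fraction of observations that indicated the condition."""
        return ((self.confirmed + prior_confirmed)
                / (self.observed + prior_observed))
```

Pooling in this way lets a rarely observed signature borrow statistical strength from a frequently observed equivalent: the confidence attributed upon observing the first event signature is computed from the merged description rather than from the first signature's sparse history alone.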

In one embodiment, performing, in connection with observing the first event signature, the security action associated with the second event signature and directed to the computing environment based on determining that the first event signature is equivalent to the second event signature may include: (i) determining that the security systems within the computing environment collectively support reporting the second event signature to the security management system, (ii) determining, based on determining that the first event signature is equivalent to the second event signature and based on determining that the security systems collectively support reporting the second event signature, that the security systems effectively support reporting the first event signature to the security management system, and (iii) identifying a recommended security product for the computing environment in light of determining that the security systems effectively support reporting the first event signature to the security management system.
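To illustrate how equivalence might inform a product recommendation, the sketch below first expands the set of signatures that the deployed security systems report to include all equivalent signatures, and then ranks candidate products by how many signatures they would add beyond that effective coverage. The catalog layout, product names, and ranking criterion are assumptions for this example, and the equivalence classes are assumed to be disjoint.

```python
def effective_coverage(reported, equivalence_classes):
    """Signatures effectively supported, counting equivalents as covered.

    Assumes the equivalence classes are disjoint.
    """
    covered = set(reported)
    for cls in equivalence_classes:
        if covered & cls:
            covered |= cls
    return covered


def recommend_products(catalog, reported, equivalence_classes):
    """Rank products by how many not-yet-covered signatures they would add.

    catalog: {product_name: iterable of signatures the product reports}.
    Products that add nothing beyond the effective coverage are omitted.
    """
    covered = effective_coverage(reported, equivalence_classes)
    gains = {product: len(set(sigs) - covered)
             for product, sigs in catalog.items()}
    return sorted((p for p, g in gains.items() if g > 0),
                  key=lambda p: (-gains[p], p))
```

In this sketch, a product whose only contribution is a signature equivalent to one already reported would not be recommended, since the computing environment effectively supports that signature already.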

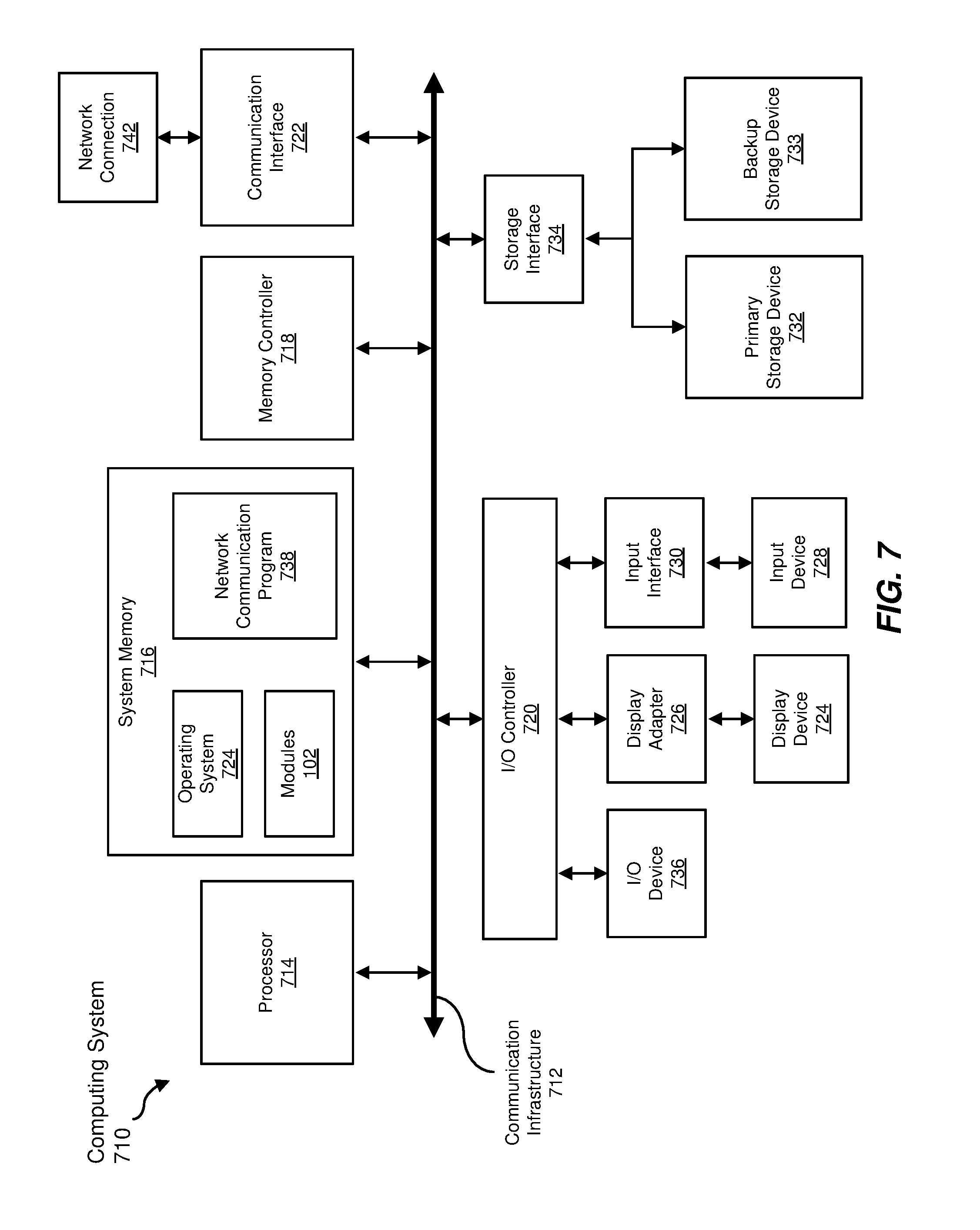

In one embodiment, a system for implementing the above-described method may include (i) an identification module, stored in memory, that identifies a computing environment protected by a group of security systems and monitored by a security management system that receives event signatures from each of the security systems, where a first security system within the security systems uses a first event signature naming scheme that differs from a second event signature naming scheme used by a second security system within the security systems, (ii) an observation module, stored in memory, that observes a first event signature that originates from the first security system and uses the first event signature naming scheme, (iii) a determination module, stored in memory, that determines that the first event signature is equivalent to a second event signature that uses the second event signature naming scheme, (iv) a performing module, stored in memory, that performs, in connection with observing the first event signature, a security action associated with the second event signature and directed to the computing environment based on determining that the first event signature is equivalent to the second event signature, and (v) at least one physical processor configured to execute the identification module, the observation module, the determination module, and the performing module.

In some examples, the above-described method may be encoded as computer-readable instructions on a non-transitory computer-readable medium. For example, a computer-readable medium may include one or more computer-executable instructions that, when executed by at least one processor of a computing device, may cause the computing device to (i) identify a computing environment protected by a group of security systems and monitored by a security management system that receives event signatures from each of the security systems, where a first security system within the security systems uses a first event signature naming scheme that differs from a second event signature naming scheme used by a second security system within the security systems, (ii) observe a first event signature that originates from the first security system and uses the first event signature naming scheme, (iii) determine that the first event signature is equivalent to a second event signature that uses the second event signature naming scheme, and (iv) perform, in connection with observing the first event signature, a security action associated with the second event signature and directed to the computing environment based on determining that the first event signature is equivalent to the second event signature.

Features from any of the above-mentioned embodiments may be used in combination with one another in accordance with the general principles described herein. These and other embodiments, features, and advantages will be more fully understood upon reading the following detailed description in conjunction with the accompanying drawings and claims.

BRIEF DESCRIPTION OF THE DRAWINGS

The accompanying drawings illustrate a number of example embodiments and are a part of the specification. Together with the following description, these drawings demonstrate and explain various principles of the instant disclosure.

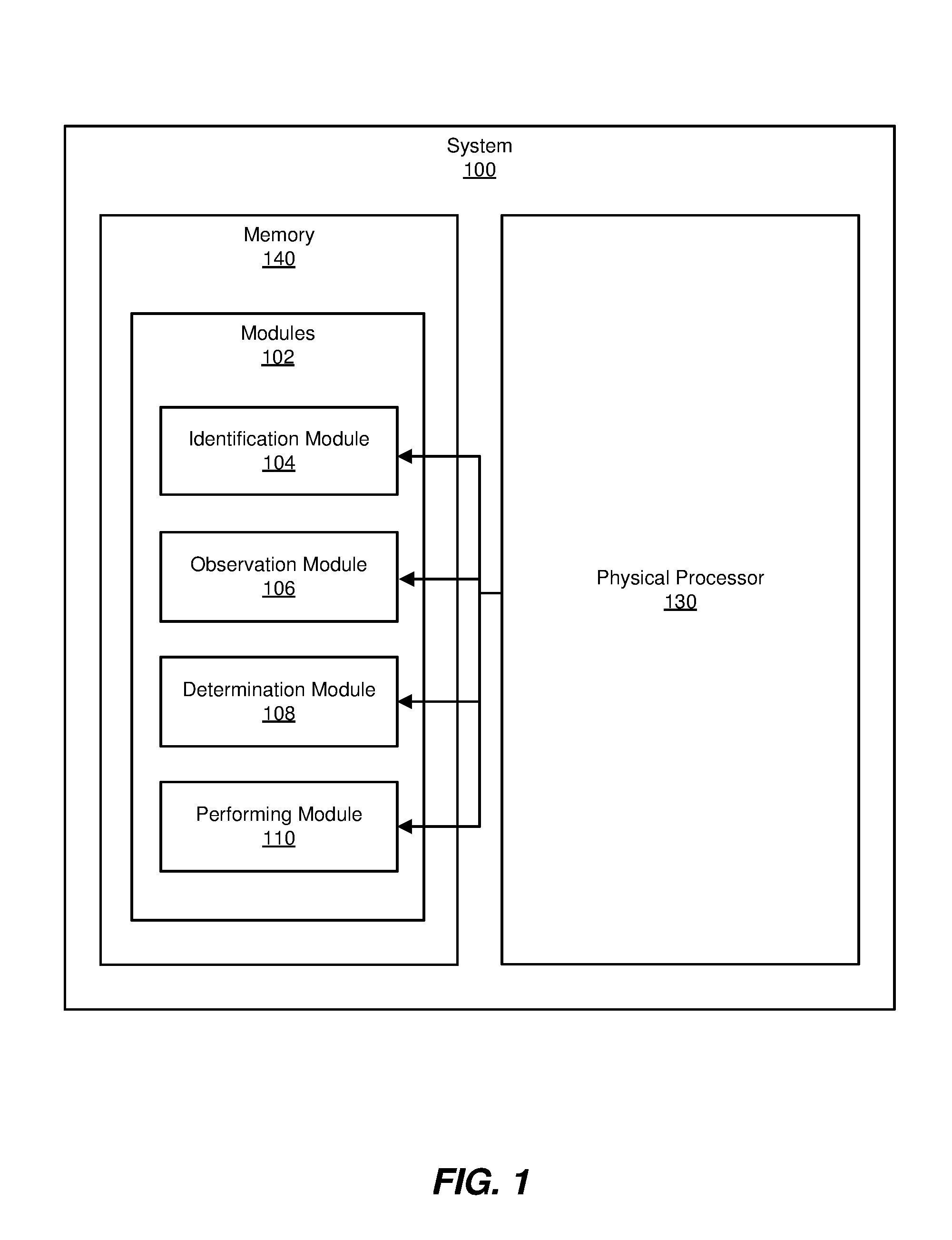

FIG. 1 is a block diagram of an example system for providing integrated security management.

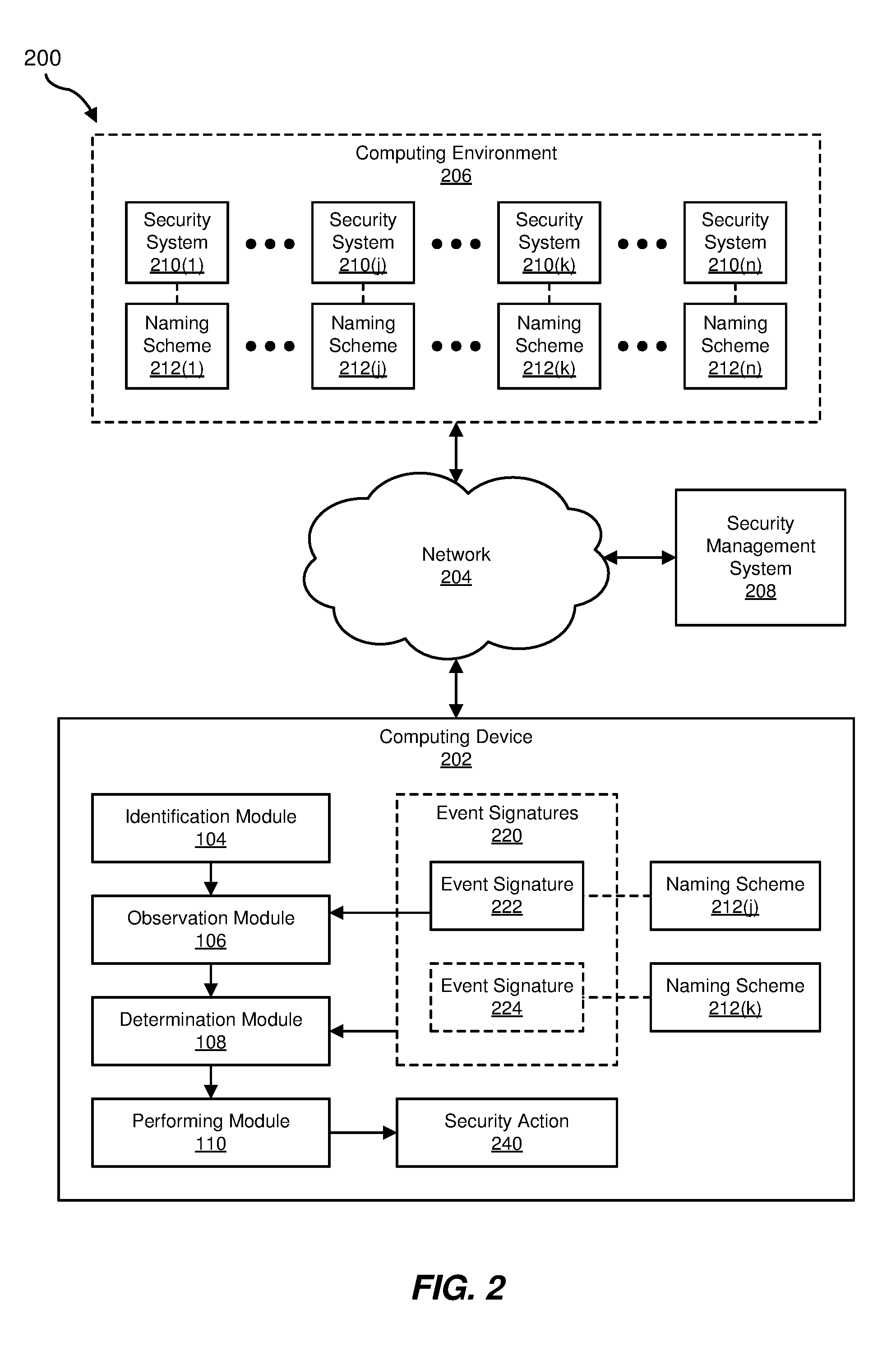

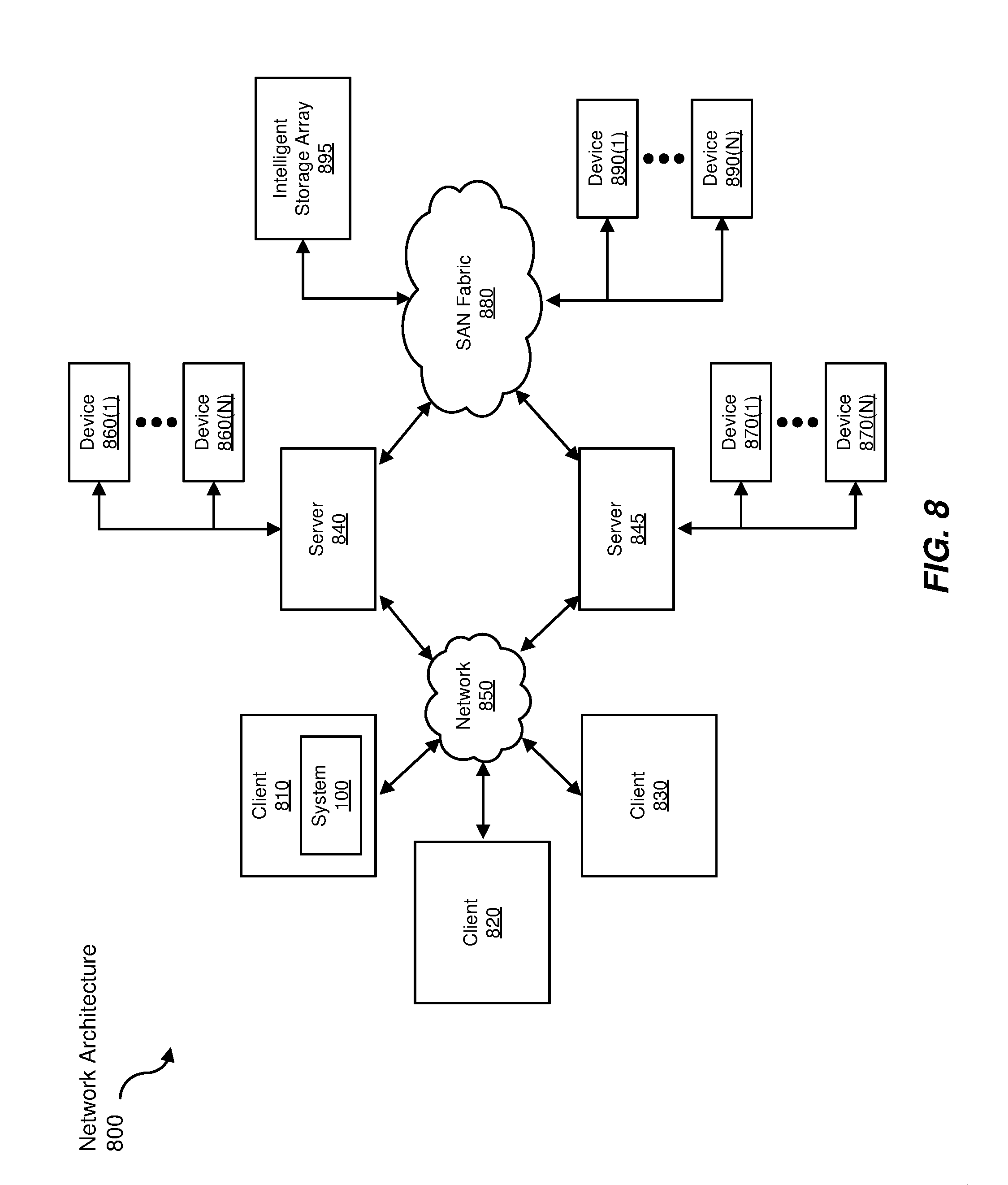

FIG. 2 is a block diagram of an additional example system for providing integrated security management.

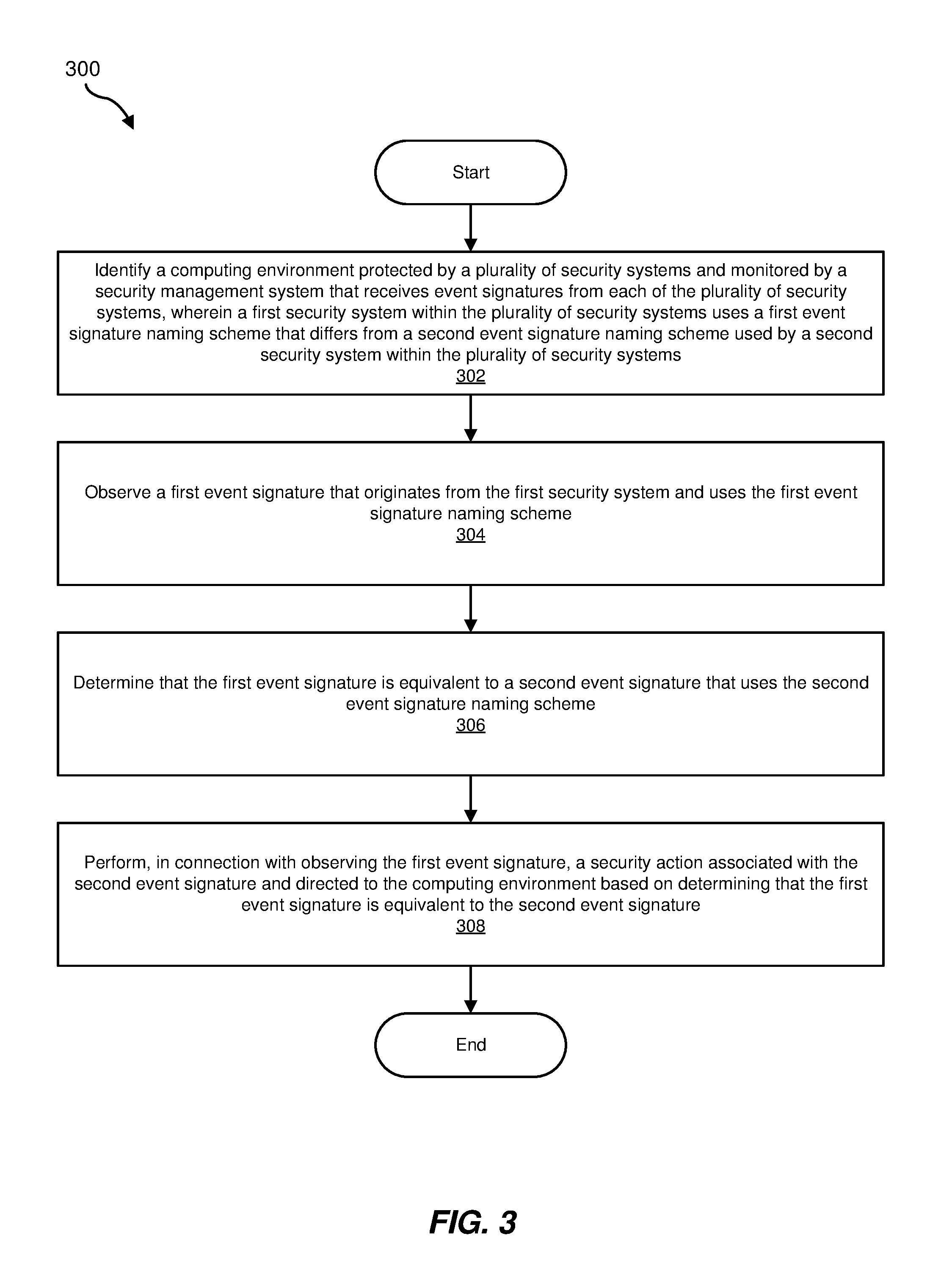

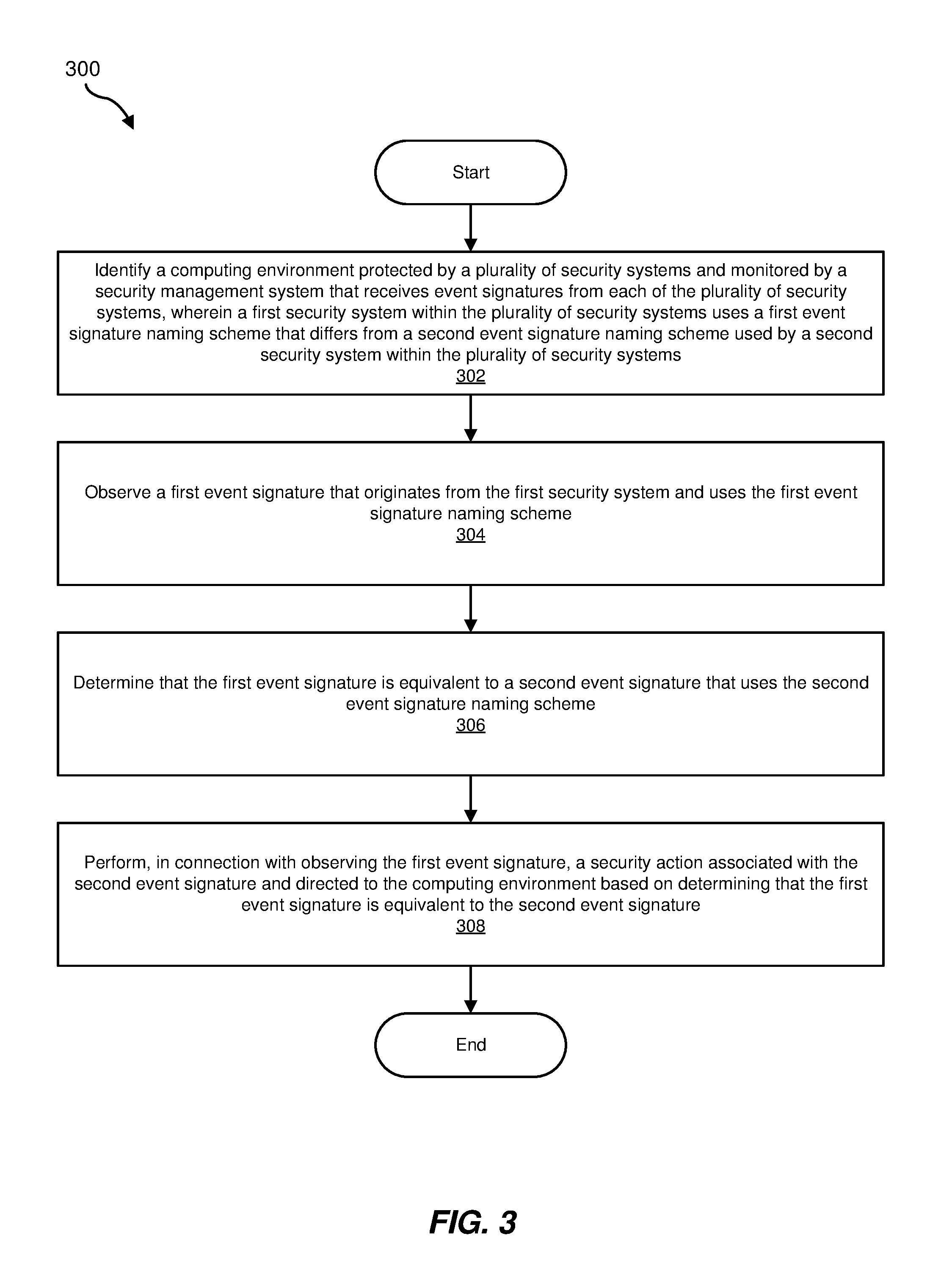

FIG. 3 is a flow diagram of an example method for providing integrated security management.

FIG. 4 is a diagram of example signature profiles for providing integrated security management.

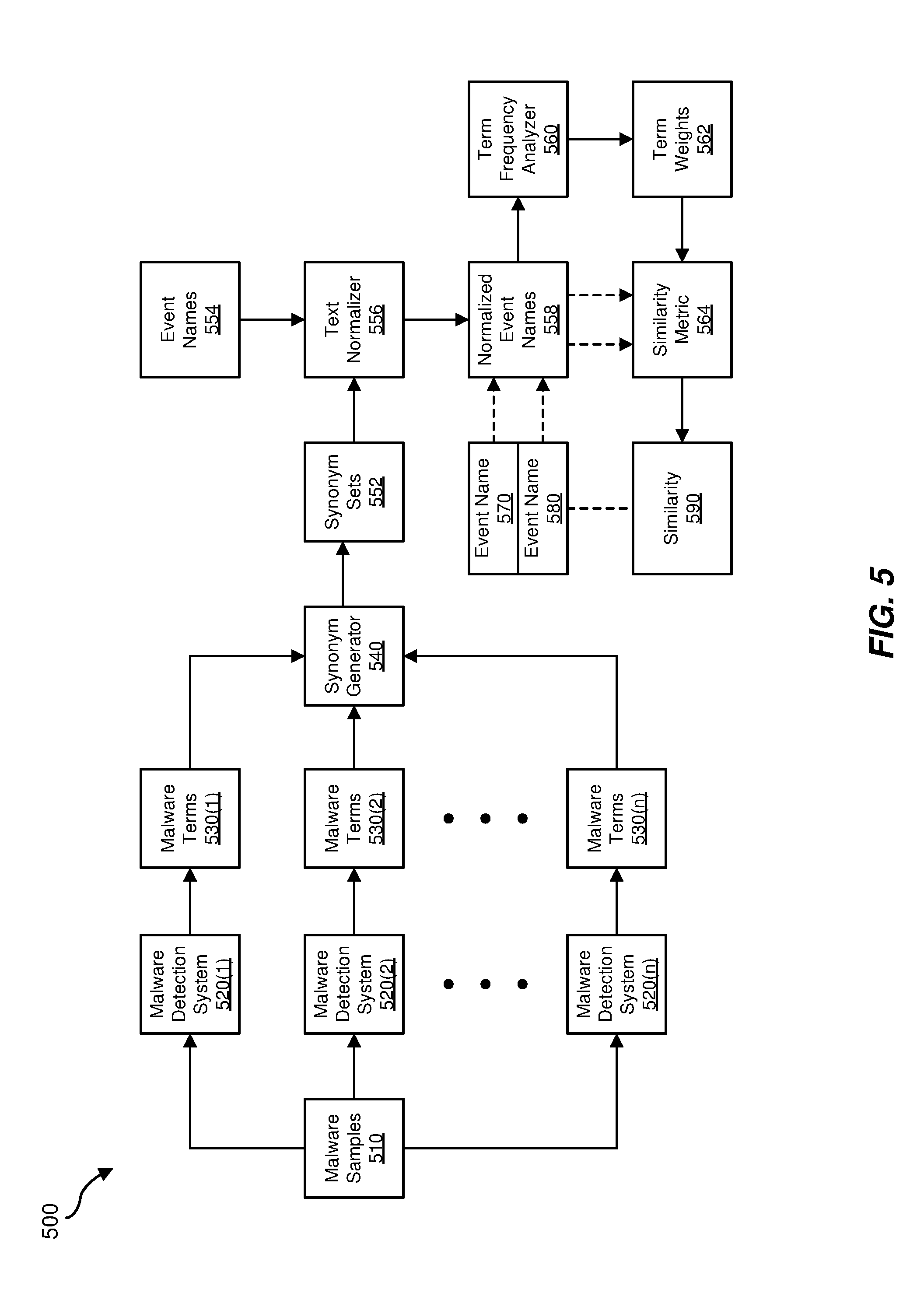

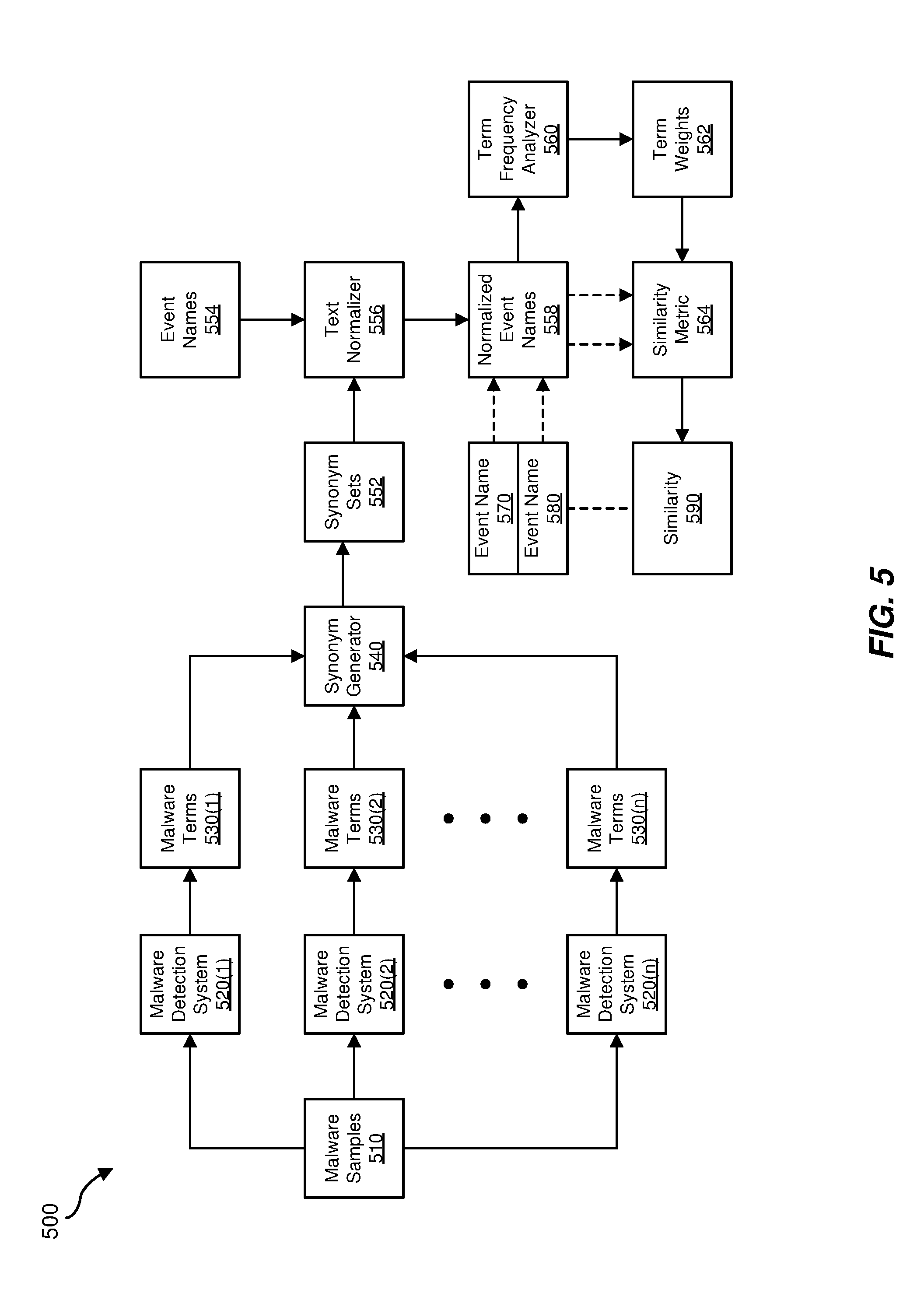

FIG. 5 is a block diagram of an example computing system for providing integrated security management.

FIG. 6 is a block diagram of an example computing system for providing integrated security management.

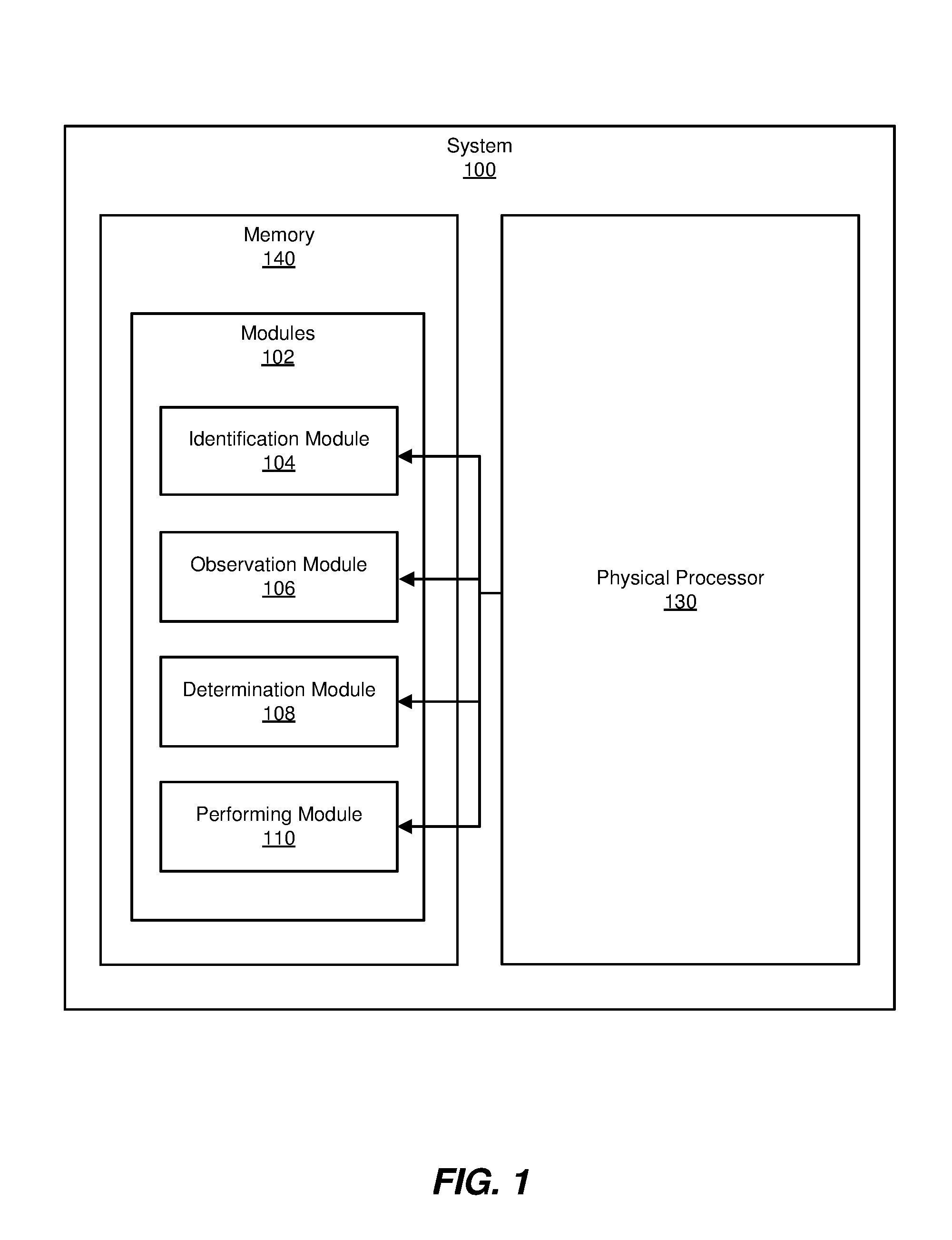

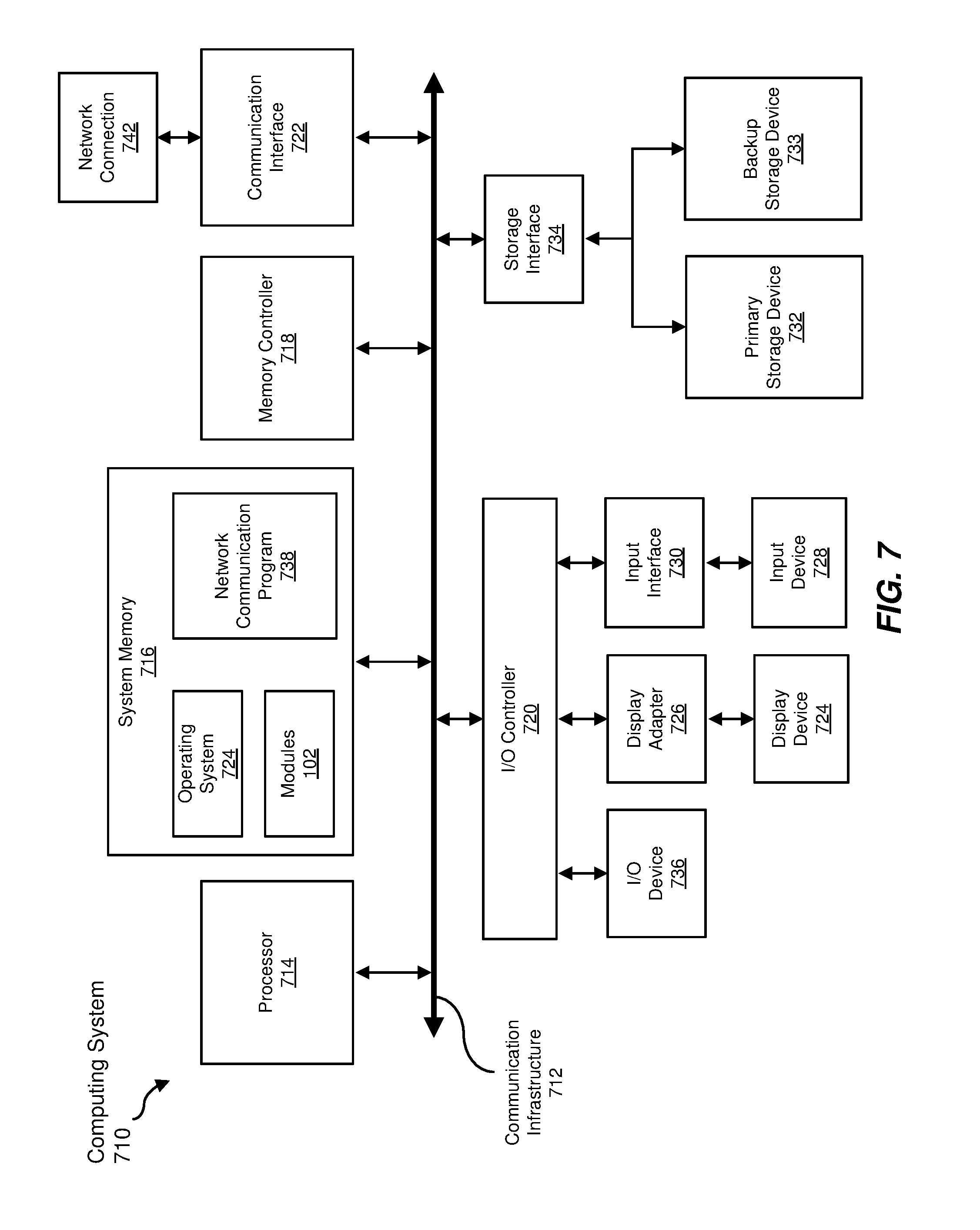

FIG. 7 is a block diagram of an example computing system capable of implementing one or more of the embodiments described and/or illustrated herein.

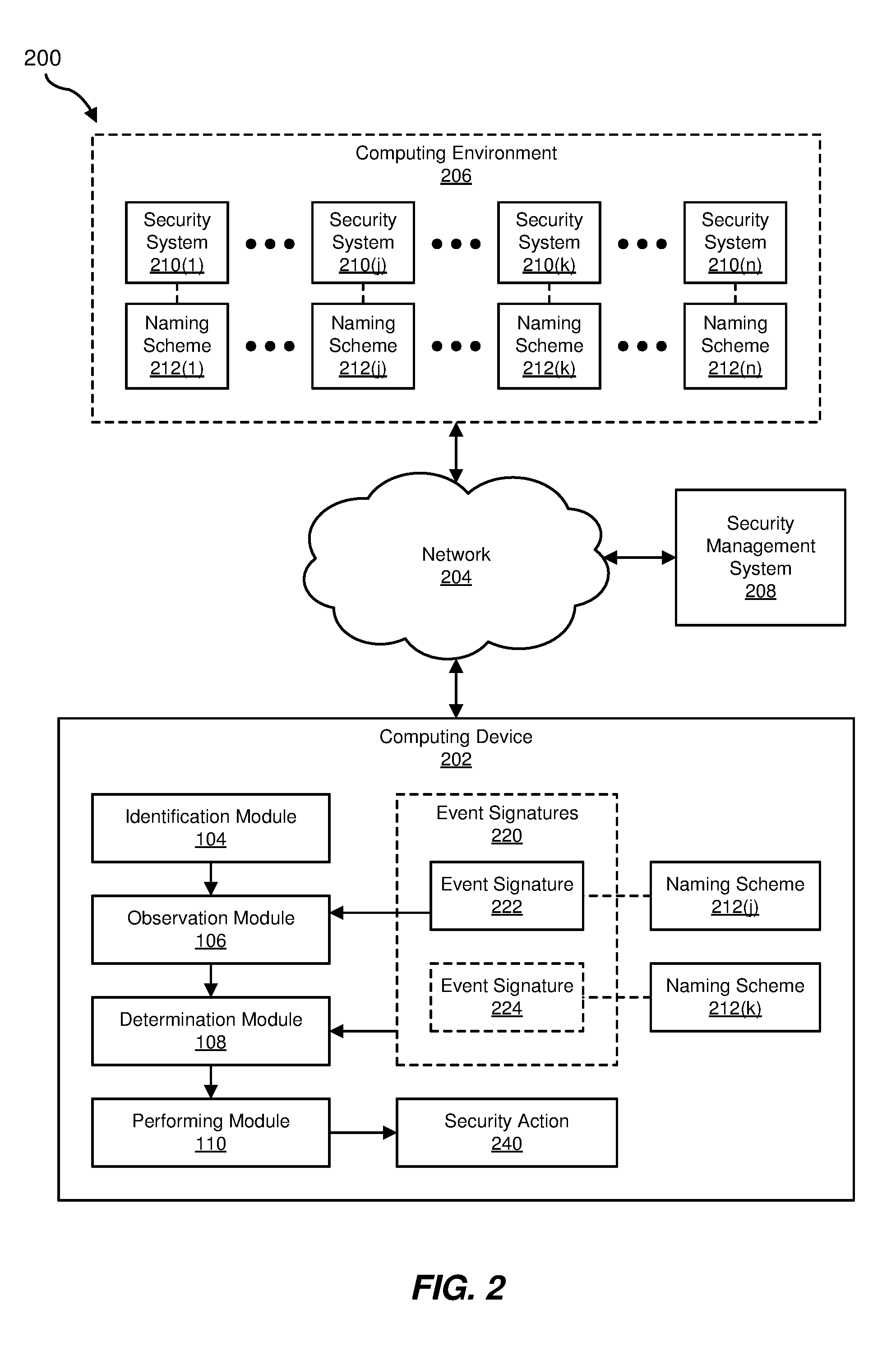

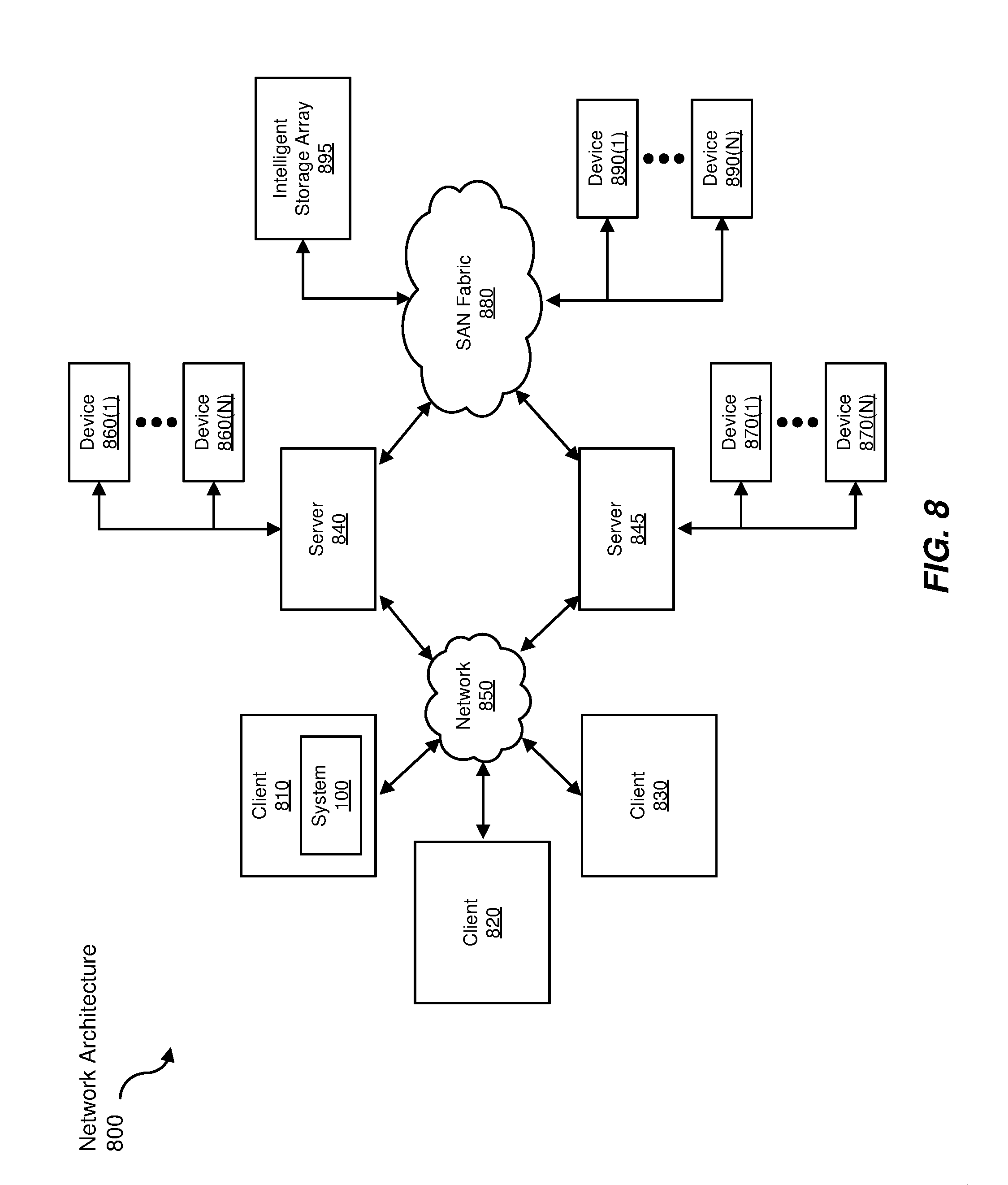

FIG. 8 is a block diagram of an example computing network capable of implementing one or more of the embodiments described and/or illustrated herein.

Throughout the drawings, identical reference characters and descriptions indicate similar, but not necessarily identical, elements. While the example embodiments described herein are susceptible to various modifications and alternative forms, specific embodiments have been shown by way of example in the drawings and will be described in detail herein. However, the example embodiments described herein are not intended to be limited to the particular forms disclosed. Rather, the instant disclosure covers all modifications, equivalents, and alternatives falling within the scope of the appended claims.

DETAILED DESCRIPTION OF EXAMPLE EMBODIMENTS

The present disclosure is generally directed to systems and methods for providing integrated security management. As will be explained in greater detail below, by identifying equivalent signatures generated by various (e.g., heterogeneous) security systems, the systems and methods described herein may improve the accuracy, consistency, administration, and/or performance of managed security services. For example, identifying equivalent signatures may improve the effectiveness and consistency of rules based on certain signatures by enhancing the rules to respond to equivalent signatures. In some examples, the systems and methods described herein may identify equivalent signatures by determining which signatures occur in the same contexts, by determining which signatures share similar names, and/or by determining which signatures are expected to occur in certain contexts based on a predictive analysis.

By improving the performance, accuracy, and/or consistency of managed security services (e.g., by correctly and/or automatically responding to a signature based on the signature's determined equivalence to another signature), the systems described herein may improve the functioning of a computing device that provides the managed security services. In addition, by improving the performance, accuracy, and/or consistency of managed security services, the systems described herein may improve the function of computing devices that are protected by the managed security services.

In some examples, an organization which deploys various computing security products within an enterprise environment (and/or a security vendor which does so on behalf of the organization) may realize more value from each deployed security product through the application of the systems and methods described herein. In addition, an organization may benefit more immediately from the deployment of a new security product (e.g., by automatically making effective use of signatures generated by the new security product which have not previously been leveraged within the enterprise environment).

The following will provide, with reference to FIGS. 1-2 and 5-6, detailed descriptions of example systems for providing integrated security management. Detailed descriptions of corresponding computer-implemented methods will also be provided in connection with FIG. 3. Detailed descriptions of example illustrations of signature profiles will be provided in connection with FIG. 4. In addition, detailed descriptions of an example computing system and network architecture capable of implementing one or more of the embodiments described herein will be provided in connection with FIGS. 7 and 8, respectively.

FIG. 1 is a block diagram of example system 100 for providing integrated security management. As illustrated in this figure, example system 100 may include one or more modules 102 for performing one or more tasks. For example, and as will be explained in greater detail below, example system 100 may include an identification module 104 that identifies a computing environment protected by a plurality of security systems and monitored by a security management system that receives event signatures from each of the plurality of security systems, where a first security system within the plurality of security systems uses a first event signature naming scheme that differs from a second event signature naming scheme used by a second security system within the plurality of security systems. Example system 100 may additionally include an observation module 106 that observes a first event signature that originates from the first security system and uses the first event signature naming scheme. Example system 100 may also include a determination module 108 that determines that the first event signature is equivalent to a second event signature that uses the second event signature naming scheme. Example system 100 may additionally include a performing module 110 that performs, in connection with observing the first event signature, a security action associated with the second event signature and directed to the computing environment based on determining that the first event signature is equivalent to the second event signature. Although illustrated as separate elements, one or more of modules 102 in FIG. 1 may represent portions of a single module or application.

In certain embodiments, one or more of modules 102 in FIG. 1 may represent one or more software applications or programs that, when executed by a computing device, may cause the computing device to perform one or more tasks. For example, and as will be described in greater detail below, one or more of modules 102 may represent modules stored and configured to run on one or more computing devices, such as the devices illustrated in FIG. 2 (e.g., computing device 202, one or more devices within computing environment 206, and/or security management system 208). One or more of modules 102 in FIG. 1 may also represent all or portions of one or more special-purpose computers configured to perform one or more tasks.

As illustrated in FIG. 1, example system 100 may also include one or more memory devices, such as memory 140. Memory 140 generally represents any type or form of volatile or non-volatile storage device or medium capable of storing data and/or computer-readable instructions. In one example, memory 140 may store, load, and/or maintain one or more of modules 102. Examples of memory 140 include, without limitation, Random Access Memory (RAM), Read Only Memory (ROM), flash memory, Hard Disk Drives (HDDs), Solid-State Drives (SSDs), optical disk drives, caches, variations or combinations of one or more of the same, and/or any other suitable storage memory.

As illustrated in FIG. 1, example system 100 may also include one or more physical processors, such as physical processor 130. Physical processor 130 generally represents any type or form of hardware-implemented processing unit capable of interpreting and/or executing computer-readable instructions. In one example, physical processor 130 may access and/or modify one or more of modules 102 stored in memory 140. Additionally or alternatively, physical processor 130 may execute one or more of modules 102 to facilitate providing integrated security management. Examples of physical processor 130 include, without limitation, microprocessors, microcontrollers, Central Processing Units (CPUs), Field-Programmable Gate Arrays (FPGAs) that implement softcore processors, Application-Specific Integrated Circuits (ASICs), portions of one or more of the same, variations or combinations of one or more of the same, and/or any other suitable physical processor.

Example system 100 in FIG. 1 may be implemented in a variety of ways. For example, all or a portion of example system 100 may represent portions of example system 200 in FIG. 2. As shown in FIG. 2, system 200 may include a computing device 202 in communication with a computing environment 206 and/or a security management system 208 via a network 204. In one example, all or a portion of the functionality of modules 102 may be performed by computing device 202, computing environment 206, security management system 208, and/or any other suitable computing system. As will be described in greater detail below, one or more of modules 102 from FIG. 1 may, when executed by at least one processor of computing device 202, computing environment 206, and/or security management system 208, enable computing device 202, computing environment 206, and/or security management system 208 to provide integrated security management. For example, one or more of modules 102 may cause computing device 202 to integrate event signatures 220 generated by security systems 210(1)-(n) to improve the functioning of security management system 208. In particular, identification module 104 may identify computing environment 206 protected by security systems 210(1)-(n) and monitored by security management system 208 that receives event signatures 220 from security systems 210(1)-(n), where a security system 210(j) within security systems 210 uses a naming scheme 212(j) that differs from a naming scheme 212(k) used by a security system 210(k) within the security systems 210. Observation module 106 may observe an event signature 222 that originates from security system 210(j) and uses naming scheme 212(j). Determination module 108 may determine that event signature 222 is equivalent to an event signature 224 that uses naming scheme 212(k). Performing module 110 may perform, in connection with observing event signature 222, a security action 240 associated with event signature 224 and directed to computing environment 206 based on determining that event signature 222 is equivalent to event signature 224.
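
By way of illustration only, the flow from observing event signature 222 to performing security action 240 may be pictured with the following minimal Python sketch. Every name in it (the `RULES` and `EQUIVALENTS` tables, the signature strings, the action name, and the `handle_signature` function) is a hypothetical assumption introduced for the example and does not come from the disclosure. In particular, the equivalence table is hard-coded here, whereas determination module 108 would derive such equivalences.

```python
from typing import Optional

# Hypothetical data: rules held by the security management system, keyed by
# the signature each rule was written for (a signature in naming scheme k).
RULES = {
    "ids_k:Trojan.Generic.Download": "quarantine_host",
}

# Hypothetical equivalences from signatures in naming scheme j to signatures
# in naming scheme k. Hard-coded for the sketch; normally derived.
EQUIVALENTS = {
    "av_j:Downloader-Generic!trojan": "ids_k:Trojan.Generic.Download",
}


def handle_signature(observed: str) -> Optional[str]:
    """Return the security action to perform for an observed signature."""
    # A rule written directly for the observed signature applies as-is.
    if observed in RULES:
        return RULES[observed]
    # Otherwise reuse the rule of an equivalent signature, if one is known.
    equivalent = EQUIVALENTS.get(observed)
    if equivalent is not None:
        return RULES.get(equivalent)
    return None
```

In this sketch, a signature that has no rule of its own still triggers the action defined for its equivalent, which mirrors the idea of enhancing existing rules to respond to equivalent signatures.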

Computing device 202 generally represents any type or form of computing device capable of reading computer-executable instructions. For example, computing device 202 may represent a device providing a security management service. In some examples, computing device 202 may represent a security-vendor-side computing device providing one or more security management services (e.g., for computing environment 206). Additionally or alternatively, computing device 202 may represent a portion of security management system 208. Additional examples of computing device 202 include, without limitation, servers, desktops, laptops, tablets, cellular phones, Personal Digital Assistants (PDAs), multimedia players, embedded systems, wearable devices (e.g., smart watches, smart glasses, etc.), gaming consoles, variations or combinations of one or more of the same, and/or any other suitable computing device.

Computing environment 206 generally represents any type or form of computing device, collection of computing devices, and/or computing environment provided by one or more computing devices capable of reading computer-executable instructions. For example, computing environment 206 may represent an enterprise network environment that is protected by one or more security systems (e.g., security systems 210(1)-(n)). Examples of computing environment 206 and/or devices that may operate within and/or underlie computing environment 206 include, without limitation, servers, desktops, laptops, tablets, cellular phones, Personal Digital Assistants (PDAs), multimedia players, embedded systems, wearable devices (e.g., smart watches, smart glasses, etc.), gaming consoles, combinations of one or more of the same, example computing system 710 in FIG. 7, or any other suitable computing device, and/or any suitable computing environment provided by such computing devices.

Security management system 208 generally represents any type or form of computing device capable of providing one or more security services and/or processing security-relevant information derived from one or more security systems. In some examples, security management system 208 may represent a device providing a security management service. In some examples, security management system 208 may represent a security-vendor-side computing device providing one or more security management services (e.g., for computing environment 206). Additionally or alternatively, security management system 208 may include computing device 202.

Network 204 generally represents any medium or architecture capable of facilitating communication or data transfer. In one example, network 204 may facilitate communication between computing device 202, computing environment 206, and/or security management system 208. In this example, network 204 may facilitate communication or data transfer using wireless and/or wired connections. Examples of network 204 include, without limitation, an intranet, a Wide Area Network (WAN), a Local Area Network (LAN), a Personal Area Network (PAN), the Internet, Power Line Communications (PLC), a cellular network (e.g., a Global System for Mobile Communications (GSM) network), portions of one or more of the same, variations or combinations of one or more of the same, and/or any other suitable network.

FIG. 3 is a flow diagram of an example computer-implemented method 300 for providing integrated security management. The steps shown in FIG. 3 may be performed by any suitable computer-executable code and/or computing system, including system 100 in FIG. 1, system 200 in FIG. 2, and/or variations or combinations of one or more of the same. In one example, each of the steps shown in FIG. 3 may represent an algorithm whose structure includes and/or is represented by multiple sub-steps, examples of which will be provided in greater detail below.

As illustrated in FIG. 3, at step 302, one or more of the systems described herein may identify a computing environment protected by a plurality of security systems and monitored by a security management system that receives event signatures from each of the plurality of security systems, where a first security system within the plurality of security systems uses a first event signature naming scheme that differs from a second event signature naming scheme used by a second security system within the plurality of security systems. For example, identification module 104 may, as part of computing device 202 in FIG. 2, identify computing environment 206 protected by security systems 210(1)-(n) and monitored by security management system 208 that receives event signatures 220 from security systems 210(1)-(n), where security system 210(j) within security systems 210 uses naming scheme 212(j) that differs from naming scheme 212(k) used by security system 210(k) within the security systems 210.

The term "computing environment," as used herein, generally refers to any environment hosted by, established by, and/or composed of any computing subsystem, computing system, and/or collection of interconnected and/or interoperating computing systems. For example, the term "computing environment" may refer to an enterprise network that is protected by one or more security systems.

The term "security system," as used herein, generally refers to any system that provides computing security and/or that generates computing security information (e.g., that monitors a computing environment for events that are relevant to computing security). Examples of security systems include, without limitation, malware scanners, intrusion detection systems, intrusion prevention systems, firewalls, and access control systems. Examples of security systems may also include, without limitation, systems that log and/or report events that are relevant to computing security determinations.

The term "security management system," as used herein, generally refers to any system that monitors, manages, provides, controls, configures, and/or consumes data from one or more security systems. In some examples, the term "security management system" may refer to a managed security service and/or one or more systems providing a managed security service. For example, the term "security management system" may refer to a system deployed and/or partly administrated by a third-party security vendor. In some examples, the managed security service may perform installations and/or upgrades of security systems within the computing environment, may modify configuration settings of security systems within the computing environment, may monitor the computing environment for threats, and/or may report observed threats and/or vulnerabilities to an administrator of the computing environment and/or to a security vendor. In some examples, the managed security service may perform security actions (e.g., one or more of the security actions discussed above) in response to an aggregation of information received from multiple security products within the computing environment. In some examples, the term "security management system" may refer to a security information and event management system. For example, the security management system may analyze security information generated by one or more security systems to monitor and/or audit the computing environment for threats and/or vulnerabilities by analyzing, in aggregate, information provided by multiple security systems.

As used herein, the term "event" may refer to any event, incident, and/or observation within a computing environment. Examples of events include, without limitation, network communication events (e.g., an attempted communication across a firewall), file system events (e.g., an attempt by a process to create, modify, and/or remove a file), file execution events, system configuration events (e.g., an attempt to modify a system configuration setting), access control events (e.g., a login attempt, an attempt to access a resource where the access requires a permission, etc.), etc. In general, the term "event," as used herein, may refer to any event that may be detected by a security system, reported by a security system, and/or responded to by a security system, and/or which may inform one or more actions taken by a security system and/or a security management system. Accordingly, the term "event signature," as used herein, generally refers to any identifier of an event that is generated by and/or used by a security system. Examples of event signatures include warnings, alerts, reports, and/or log entries generated by security systems. As will be explained in greater detail below, in some examples, an event signature may be associated with an event name. In some examples, the event signature may be represented by and/or include the event name.

Identification module 104 may identify the computing environment in any suitable manner. For example, identification module 104 may identify the computing environment by identifying configuration information that specifies the computing environment. In some examples, identification module 104 may identify the computing environment by communicating with the computing environment. Additionally or alternatively, identification module 104 may identify the computing environment by executing within the computing environment. In some examples, identification module 104 may identify the computing environment by identifying, communicating with, and/or retrieving information generated by one or more of the security systems within the computing environment.

Returning to FIG. 3, at step 304, one or more of the systems described herein may observe a first event signature that originates from the first security system and uses the first event signature naming scheme. For example, observation module 106 may, as part of computing device 202 in FIG. 2, observe an event signature 222 that originates from security system 210(j) and uses naming scheme 212(j).

The phrase "event signature naming scheme," as used herein, generally refers to any mapping between events and names for events and/or any mapping between event signatures and names for event signatures. For example, two different security products may observe the same event and report the same event using different event signatures and/or different names for event signatures. Accordingly, the two different security products may employ differing event signature naming schemes.

Observation module 106 may observe the first event signature in any of a variety of ways. In some examples, observation module 106 may observe the first event signature by observing the first event signature within a log and/or report generated by the first security system. Additionally or alternatively, observation module 106 may observe the first event signature by receiving a communication from the first security system. In some examples, observation module 106 may observe the first event signature within a signature database for the first security system. The signature database may include any data structure that stores event signatures used by the first security system. In some examples, the signature database may be derived from past observations of the first security system (e.g., the signature database may include a compilation of signatures previously observed from the first security system). Additionally or alternatively, the signature database may be derived from documentation that describes the first security system. In some examples, observation module 106 may observe the first event signature by observing a reference to the first event signature maintained by the security management system. For example, the security management system may include a record of the first event signature and/or one or more rules that reference the first event signature.

Returning to FIG. 3, at step 306, one or more of the systems described herein may determine that the first event signature is equivalent to a second event signature that uses the second event signature naming scheme. For example, determination module 108 may, as part of computing device 202 in FIG. 2, determine that event signature 222 is equivalent to event signature 224 that uses naming scheme 212(k).

Determination module 108 may determine that the first event signature is equivalent to the second event signature in any of a variety of ways. In some examples, determination module 108 may determine that the first event signature that uses the first event signature naming scheme is equivalent to the second event signature that uses the second event signature naming scheme by (i) identifying a first co-occurrence profile that describes how frequently the first event signature co-occurs with each of a set of event signatures, (ii) identifying a second co-occurrence profile that describes how frequently the second event signature co-occurs with each of the set of event signatures, and (iii) determining that a similarity between the first co-occurrence profile and the second co-occurrence profile exceeds a predetermined threshold. For example, some observable events within a computing environment may be interrelated, giving rise to observable patterns in events. Thus, the observation of one event may frequently be accompanied by the observation of another event, infrequently accompanied by the observation of yet another event, and almost never accompanied by the observation of yet another event. Accordingly, profiles that indicate the frequency with which each of a spectrum of event signatures occurs with a given event signature may act as a fingerprint for an event signature that indicates the underlying event.

To provide an example of co-occurrence profiles, FIG. 4 illustrates a set of profiles 400 (e.g., illustrated as histograms). In one example, a profile 410 may describe an event signature by describing the frequency (e.g., in terms of a percentage) with which other event signatures are observed to co-occur with the profiled event signature (e.g., each bar in the histogram representing a different event signature and the height of each bar representing the frequency with which that event signature is observed to co-occur with the profiled event signature). Profiles 420 and 430 may each represent profiles for different respective event signatures. Profiles 410, 420, and 430 may be built using the same set of event signatures (e.g., the first bar of each histogram may represent the same event signature, the second bar of each histogram may represent the same event signature, etc.). As may be appreciated, profiles 410 and 420 may be more similar to each other than either is to profile 430 (e.g., because they share similar event signature co-occurrence frequencies). Thus, determination module 108 may determine that the event signature profiled by profile 410 is equivalent to the event signature profiled by profile 420 (e.g., that the respective event signatures describe equivalent underlying events and/or the same class of event).

Systems described herein (e.g., observation module 106, determination module 108 and/or the security management system) may determine co-occurrence for the purpose of generating and/or defining the co-occurrence profiles in any suitable manner. For example, a co-occurrence profile may describe how frequently the profiled event signature co-occurs with each of the set of event signatures by describing how frequently each of the set of event signatures is observed within a common computing environment and within a predetermined time window when the profiled event signature occurs within the common computing environment. For example, systems described herein may determine that two event signatures "co-occur" by observing one event signature within an environment (e.g., at a computing device, within a network, etc.) and then observing the other event signature within the same environment and within a predefined period of time (e.g., within a minute, within an hour, within a day). In some examples, systems described herein may use different time and environment scopes to determine co-occurrence for different pairs of event signatures and/or types of event signatures. For example, systems described herein may determine co-occurrence between an event signature originating from an intrusion detection system and other event signatures using a time window of an hour and by observing event signatures that may occur throughout an enterprise network that is protected from intrusion. In another example, systems described herein may determine co-occurrence between an event signature relating to a behavioral malware scanner and other event signatures using a time window of ten minutes and by observing event signatures that may occur at the same computing device.
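
By way of illustration only, one way to compute such a co-occurrence profile from a stream of observed event signatures is sketched below in Python. The record layout (timestamp in seconds, environment identifier, signature), the function name `co_occurrence_profile`, and the use of a symmetric time window around each occurrence are assumptions made for the example; the disclosure leaves the time and environment scopes open.

```python
from collections import Counter, defaultdict


def co_occurrence_profile(events, profiled, window):
    """Build a co-occurrence profile for one event signature.

    events:   iterable of (timestamp_seconds, environment_id, signature)
    profiled: the signature being profiled
    window:   maximum time gap, in seconds, that counts as co-occurrence

    Returns {other_signature: fraction of occurrences of `profiled` that had
    `other_signature` in the same environment within `window` seconds}.
    """
    # Group events by environment so only same-environment pairs are compared.
    by_env = defaultdict(list)
    for ts, env, sig in events:
        by_env[env].append((ts, sig))

    occurrences = 0
    hits = Counter()
    for env_events in by_env.values():
        for ts, sig in env_events:
            if sig != profiled:
                continue
            occurrences += 1
            # Distinct other signatures seen close enough in time.
            nearby = {
                other
                for other_ts, other in env_events
                if other != profiled and abs(other_ts - ts) <= window
            }
            hits.update(nearby)

    if occurrences == 0:
        return {}
    return {sig: count / occurrences for sig, count in hits.items()}
```

Choosing a per-device identifier versus a network-wide identifier for `environment_id`, and a ten-minute versus one-hour `window`, corresponds to the different scopes described above for different types of event signatures.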

Determination module 108 may determine that the similarity between the first co-occurrence profile and the second co-occurrence profile exceeds the predetermined threshold using any suitable similarity metric. For example, determination module 108 may compare the co-occurrence profiles by comparing the sum of the differences between the respective frequencies of co-occurrence of each event signature within the set of event signatures. Additionally or alternatively, determination module 108 may generate curves describing the respective co-occurrence profiles and may calculate the similarity of the generated curves (e.g., by calculating the Frechet distance between the curves). In one example, the co-occurrence profiles may be represented as collections of empirical distribution functions. For example, the co-occurrence between a profiled event signature and each selected event signature within the set of event signatures may be represented as an empirical distribution function that describes the cumulative probability of observing the selected event signature as a function of time. Accordingly, determination module 108 may determine the similarity between two co-occurrence profiles by comparing the respective empirical distribution functions of the two co-occurrence profiles using two-sample Kolmogorov-Smirnov tests.
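
By way of illustration only, two of the comparisons mentioned above may be sketched in Python as follows. The function names, the dictionary representation of a profile, and the choice to express the threshold as a maximum distance (rather than a minimum similarity) are assumptions made for the example. The second function computes only the two-sample Kolmogorov-Smirnov statistic, that is, the largest gap between two empirical distribution functions; it does not compute a p-value.

```python
from bisect import bisect_right


def profile_distance(p, q):
    """Sum of absolute differences between co-occurrence frequencies.

    p, q: dicts mapping signature -> co-occurrence frequency. A signature
    missing from one profile is treated as frequency 0.0.
    """
    keys = set(p) | set(q)
    return sum(abs(p.get(k, 0.0) - q.get(k, 0.0)) for k in keys)


def profiles_equivalent(p, q, max_distance):
    """Treat two profiles as equivalent when their distance is small enough."""
    return profile_distance(p, q) <= max_distance


def ks_statistic(sample_a, sample_b):
    """Two-sample Kolmogorov-Smirnov statistic for two non-empty samples.

    Each sample could hold, for example, the time gaps between a profiled
    signature and one selected signature.
    """
    a = sorted(sample_a)
    b = sorted(sample_b)

    def ecdf(sorted_sample, x):
        # Fraction of the sample that is <= x.
        return bisect_right(sorted_sample, x) / len(sorted_sample)

    # The largest gap between step functions occurs at an observed point.
    points = sorted(set(a) | set(b))
    return max(abs(ecdf(a, x) - ecdf(b, x)) for x in points)
```

A smaller distance or statistic indicates more similar profiles, so a similarity threshold as described above corresponds to an upper bound on these values.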

In some examples, one or more of the systems described herein may build the co-occurrence profiles. For example, systems described herein may build the co-occurrence profiles based on observations within the computing environment. Additionally or alternatively, systems described herein may build the co-occurrence profiles based on observations collected from many computing environments. For example, a backend system owned by a security vendor that provides a managed security service to many customers may collect and aggregate co-occurrence information across customers. Systems described herein may then access co-occurrence information and/or co-occurrence profiles from the backend system. In some examples, co-occurrence information collected at the backend may include information that characterizes the computing environment within which the co-occurrences were observed. In these examples, systems described herein may construct co-occurrence profiles for a computing environment based only on co-occurrence information that came from matching computing environments (e.g., computing environments with a shared set of characteristics).

Determination module 108 may determine that the first and second event signatures are equivalent in any of a variety of additional ways. In some examples, determination module 108 may determine that the first event signature that uses the first event signature naming scheme is equivalent to the second event signature that uses the second event signature naming scheme by comparing a name of the first event signature with a name of the second event signature according to a similarity metric and determining, based on comparing the name of the first event signature with the name of the second event signature according to the similarity metric, that a similarity of the name of the first event signature and the name of the second event signature according to the similarity metric exceeds a predetermined threshold. As used herein, the term "name" may refer to any identifier and/or descriptor of an event and/or an event signature that includes descriptive content characterizing the event and/or the event signature. For example, the name of an event signature may include textual content that describes (e.g., in natural language) the event. In some examples, the event signature may include the event signature name (e.g., the name may itself stand as the signature). Additionally or alternatively, the signature name may be associated with the event signature (e.g., appear alongside the event signature in security system logs).

Determination module 108 may compare the names of the first and second event signatures using any suitable similarity metrics. For example, determination module 108 may calculate the edit distance between the names of the first and second event signatures. In some examples, determination module 108 may use edit distance operations that operate on whole terms within the names (instead of, e.g., only using edit distance operations that operate on single characters). Additionally or alternatively, as will be explained in greater detail below, determination module 108 may normalize the names of the first and/or second event signatures and/or may weight aspects of the names of the first and/or second event signatures before and/or as a part of comparing the names.
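As a minimal sketch of the term-level comparison described above, the following Python example computes an edit distance whose operations act on whole terms rather than single characters, converts it to a similarity, and compares the similarity with a threshold. The function names, the whitespace tokenization, the normalization by the longer name, and the threshold value of 0.7 are assumptions chosen for illustration and are not taken from the disclosure.

```python
def term_edit_distance(name_a: str, name_b: str) -> int:
    """Levenshtein distance where each edit inserts, deletes, or replaces a whole term."""
    a = name_a.lower().split()
    b = name_b.lower().split()
    # prev[j] is the distance between the terms of `a` consumed so far and the first j terms of `b`.
    prev = list(range(len(b) + 1))
    for i, term_a in enumerate(a, start=1):
        curr = [i]
        for j, term_b in enumerate(b, start=1):
            substitution = 0 if term_a == term_b else 1
            curr.append(min(prev[j] + 1,                  # delete term_a
                            curr[j - 1] + 1,              # insert term_b
                            prev[j - 1] + substitution))  # keep or replace
        prev = curr
    return prev[-1]


def name_similarity(name_a: str, name_b: str) -> float:
    """Map the term-level distance to a similarity in [0, 1] (assumed normalization)."""
    longest = max(len(name_a.split()), len(name_b.split()))
    if longest == 0:
        return 1.0
    return 1.0 - term_edit_distance(name_a, name_b) / longest


def names_match(name_a: str, name_b: str, threshold: float = 0.7) -> bool:
    """True when the similarity exceeds the (hypothetical) predetermined threshold."""
    return name_similarity(name_a, name_b) > threshold
```

For instance, "HTTP SQL Injection Attempt" and "SQL Injection Attempt Detected" differ by one term deletion and one term insertion, giving a term-level distance of 2 even though the names differ by many characters.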

In some examples, determination module 108 may compare the name of the first event signature with the name of the second event signature by (i) identifying a first plurality of terms within the name of the first event signature, (ii) identifying a second plurality of terms within the name of the second event signature, (iii) weighting each term within the first plurality of terms and the second plurality of terms according to an inverse frequency with which each term appears within event signature names produced by the plurality of security systems, and (iv) comparing the first plurality of terms as weighted with the second plurality of terms as weighted. As used herein, "inverse frequency" may refer to any value that tends to decrease as the stated frequency increases. For example, determination module 108 may calculate and/or otherwise identify a term frequency-inverse document frequency (TF-IDF) value for each term, where the corpus of documents corresponds to the collection of event signature names produced by the security systems within the computing environment. In this example, determination module 108 may attribute greater distances to operations that modify highly weighted terms when calculating the edit distance between the names of the first and second event signatures (e.g., the attributed cost of each edit operation may correlate to the weight of the term being edited). In this manner, rare term matches may receive greater weight than common term matches when determining the similarity of event signature names.
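The weighting described above can be sketched as follows. This example assumes a smoothed inverse document frequency (the term-frequency component is omitted because a term rarely repeats within a short signature name), treats each event signature name as one document, and charges each edit operation the weight of the term it touches. The helper names, the smoothing formula, and the handling of unseen terms are illustrative assumptions rather than the method of the disclosure.

```python
import math
from collections import Counter


def idf_weights(corpus):
    """Assign each term a weight that decreases as more names in the corpus contain it."""
    n = len(corpus)
    doc_freq = Counter()
    for name in corpus:
        doc_freq.update(set(name.lower().split()))
    # Smoothed IDF (an assumed formula); a term present in every name gets weight 1.0.
    return {term: math.log((1 + n) / (1 + df)) + 1.0 for term, df in doc_freq.items()}


def weighted_term_distance(name_a, name_b, weights):
    """Term-level edit distance in which editing a rare term costs more."""
    a = name_a.lower().split()
    b = name_b.lower().split()
    unseen = max(weights.values(), default=1.0)  # assumption: unseen terms count as rare

    def w(term):
        return weights.get(term, unseen)

    prev = [0.0]
    for term_b in b:
        prev.append(prev[-1] + w(term_b))  # cost of inserting the first j terms of b
    for term_a in a:
        curr = [prev[0] + w(term_a)]       # cost of deleting the terms of a seen so far
        for j, term_b in enumerate(b, start=1):
            substitution = 0.0 if term_a == term_b else max(w(term_a), w(term_b))
            curr.append(min(prev[j] + w(term_a),           # delete term_a
                            curr[j - 1] + w(term_b),       # insert term_b
                            prev[j - 1] + substitution))   # keep or replace
        prev = curr
    return prev[-1]
```

Under this sketch, dropping a term that appears in nearly every name (such as "detected") moves two names apart less than dropping a term that appears in only a few names, which mirrors the statement that rare term matches receive greater weight than common term matches.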

In some examples, determination module 108 may normalize event signature names before and/or when comparing the names of the first and second event signatures. For example, determination module 108 may compare the name of the first event signature with the name of the second event signature by (i) identifying a first term within the name of the first event signature, (ii) identifying a second term within the name of the second event signature, (iii) determining that the first term and the second term are synonymous, and (iv) treating the first term and the second term as equivalent when comparing the name of the first event signature with the name of the second event signature based on determining that the first term and the second term are synonymous.

Determination module 108 may determine that terms are synonymous in any suitable manner. In some examples, determination module 108 may identify synonymous malware variant names. Because different security products may use a variety of names for any given malware variant, determination module 108 may assess similarity between event signature names more accurately where the event signature names reflect consistent malware variant names. Accordingly, in some examples, one or more of the systems described herein (e.g., determination module 108 and/or the security management system) may determine that the first term and the second term are synonymous by providing a malware sample to each of a plurality of malware detection systems and determining that a first malware detection system within the plurality of malware detection systems identifies the malware sample using the first term and a second malware detection system within the plurality of malware detection systems identifies the malware sample using the second term. In this manner, systems described herein may generate malware variant synonyms with which to normalize event signature names.
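The synonym generation and normalization described above might be sketched as follows. The example assumes that the labels each malware detection system assigns to each sample are already available as a nested dictionary, and it merges labels transitively with a union-find structure. The input layout, the function names, the choice of the alphabetically first label as the canonical name, and the sample data are all hypothetical.

```python
def build_synonym_map(detections):
    """Map each malware label to a canonical label shared by all of its synonyms.

    `detections` has the assumed shape {sample_id: {detector_name: label}}.
    Labels that different detectors assign to the same sample are treated as synonyms.
    """
    parent = {}

    def find(label):
        parent.setdefault(label, label)
        while parent[label] != label:
            parent[label] = parent[parent[label]]  # path halving
            label = parent[label]
        return label

    def union(x, y):
        root_x, root_y = find(x), find(y)
        if root_x != root_y:
            # Keep the alphabetically first label as the canonical name (arbitrary choice).
            keep, drop = sorted((root_x, root_y))
            parent[drop] = keep

    for labels in detections.values():
        terms = [label.lower() for label in labels.values()]
        for term in terms:
            find(term)  # register every label, including unpaired ones
        for term in terms[1:]:
            union(terms[0], term)
    return {label: find(label) for label in list(parent)}


def normalize_name(name, synonyms):
    """Replace every term that has a known synonym with its canonical label."""
    return " ".join(synonyms.get(term, term) for term in name.lower().split())


# Hypothetical detection results, for illustration only.
detections = {
    "sample-1": {"detector_a": "Zbot", "detector_b": "Zeus"},
    "sample-2": {"detector_a": "Conficker", "detector_b": "Downadup"},
}
synonyms = build_synonym_map(detections)
normalized = normalize_name("Trojan Zeus Detected", synonyms)
```

Because the merge is transitive, a single mislabeled sample could join two unrelated families, so a practical system might require agreement across several samples before accepting a synonym.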

FIG. 5 illustrates an example system 500 for determining the equivalence of event signatures. As shown in FIG. 5, example system 500 may include malware samples 510 provided to malware detection systems 520(1)-(n). Malware detection systems 520(1)-(n) may produce, through detection of malware samples 510, respective malware terms 530(1)-(n). A synonym generator 540 may then cross-reference malware terms 530(1)-(n) to produce synonym sets 552, representing sets of synonyms used by malware detection systems 520(1)-(n) for each of malware samples 510. A text normalizer 556 may then normalize event names 554 based on synonym sets 552 (e.g., by choosing, for each malware variant within malware samples 510, a single consistent variant name to represent the malware variant and replacing, within each of event names 554, all synonymous names with the chosen single consistent variant name), thereby producing normalized event names 558. A term frequency analyzer 560 may then process normalized event names 558 to determine the inverse frequency with which terms appear in the normalized event names and, according to the inverse frequency, assign term weights 562 to the terms within the normalized event names. System 500 may then apply a similarity metric 564 (that, e.g., uses term weights 562 when determining similarity) to event names 570 and 580 (as normalized) to determine a similarity 590 of event names 570 and 580. In this manner, systems described herein (e.g., determination module 108) may determine the equivalence of event signatures corresponding to event names 570 and 580, respectively.

Determination module 108 may determine that the first and second event signatures are equivalent in any of a variety of additional ways. In some examples, determination module 108 may determine that the first event signature that uses the first event signature naming scheme is equivalent to the second event signature that uses the second event signature naming scheme by identifying a signature association statistic that describes how frequently the first event signature is observed in association with observing the second event signature and how frequently the second event signature is observed in association with observing the first event signature and determining that the signature association statistic exceeds a predetermined threshold. For example, determination module 108 may simply determine the frequency with which the first event signature and the second event signature co-occur (using, e.g., any of the systems and methods for determining co-occurrence described earlier).
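One possible form of such a statistic is sketched below. The example assumes that each observation is the set of event signatures seen together within one observation window, and it takes the smaller of the two conditional frequencies so that the statistic is high only when each signature is usually accompanied by the other. The function names, the choice of the minimum, and the threshold value are assumptions made for illustration.

```python
def association_statistic(observations, sig_a, sig_b):
    """Return min(P(sig_b | sig_a), P(sig_a | sig_b)) over the observation windows."""
    count_a = sum(1 for window in observations if sig_a in window)
    count_b = sum(1 for window in observations if sig_b in window)
    both = sum(1 for window in observations if sig_a in window and sig_b in window)
    if count_a == 0 or count_b == 0:
        return 0.0
    return min(both / count_a, both / count_b)


def equivalent_by_association(observations, sig_a, sig_b, threshold=0.6):
    """True when the statistic exceeds the (hypothetical) predetermined threshold."""
    return association_statistic(observations, sig_a, sig_b) > threshold


# Hypothetical observation windows, each listing the signatures seen together.
observations = [{"A", "B"}, {"A", "B"}, {"A"}, {"B", "C"}, {"C"}]
statistic = association_statistic(observations, "A", "B")  # both directions are 2 of 3
```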

In some examples, determination module 108 may determine that the first and second event signatures are equivalent by using a signature association statistic that includes inferences of event co-occurrences and/or event signature co-occurrences, even absent observed event signature co-occurrences. For example, various security products may be deployed within different computing environments in various combinations. When security products are deployed within a common computing environment, systems described herein (e.g., determination module 108 and/or the security management system) may associate co-occurring signatures within a signature association database. Thus, when a computing environment does not include a security system whose event signatures are included within a signature association database, systems described herein may query the signature association database with event signatures observed within the computing environment to identify associated event signatures that may have been generated by the missing security system had the missing security system been present in the computing environment. The systems described herein may thereby determine that the first and second event signatures are equivalent by using a signature association statistic that is predictive (e.g., that, given an observation of the first event signature, the second event signature would have been observed had the security system corresponding to the second event signature been deployed on the same computing device as the security system corresponding to the first event signature). In some examples, systems described herein may store and/or process event signature association information using locality-sensitive hashing techniques.
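The disclosure does not name a particular locality-sensitive hashing technique. As one illustrative possibility, the sketch below uses MinHash with banding: each event signature is represented by the set of device-day keys on which it was observed, and signatures whose sets are similar tend to share at least one bucket, yielding candidate pairs without comparing every pair of signatures. The key format, function names, and parameter values are assumptions.

```python
import hashlib
from collections import defaultdict
from itertools import combinations


def _seeded_hash(seed, item):
    """Deterministic 64-bit hash of an item under a given seed."""
    digest = hashlib.sha256(f"{seed}:{item}".encode("utf-8")).digest()
    return int.from_bytes(digest[:8], "big")


def minhash(items, num_hashes=32):
    """MinHash sketch of a non-empty set: the minimum hash value under each seed."""
    return tuple(min(_seeded_hash(seed, item) for item in items)
                 for seed in range(num_hashes))


def candidate_pairs(occurrences, num_hashes=32, bands=16):
    """Return pairs of signatures whose sketches agree on at least one band.

    `occurrences` maps a signature to the set of (assumed) device-day keys
    on which that signature was observed.
    """
    rows = num_hashes // bands
    buckets = defaultdict(set)
    for signature, items in occurrences.items():
        if not items:
            continue
        sketch = minhash(items, num_hashes)
        for band in range(bands):
            key = (band, sketch[band * rows:(band + 1) * rows])
            buckets[key].add(signature)
    pairs = set()
    for members in buckets.values():
        pairs.update(combinations(sorted(members), 2))
    return pairs
```

Candidate pairs produced this way would still need to be confirmed with an exact association statistic, since banding trades some false positives and false negatives for speed.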

For an example of predictive signature associations, FIG. 6 illustrates an example system 600. As shown in FIG. 6, example system 600 may include a computing environment 620, a computing environment 630, and a computing environment 640. In some examples, computing environments 620, 630, and 640 may be owned, controlled, and/or administered separately. Additionally or alternatively, computing environments 620, 630, and 640 may represent sub-environments of a larger overarching computing environment. As shown in FIG. 6, computing environment 620 may include security systems 610 and 614. Computing environment 630 may include security systems 610, 612, 614, and 616. Computing environment 640 may include security systems 612, 614, and 616. Security systems 610 and 614 of computing environment 620 may produce signatures 622. Security systems 610, 612, 614, and 616 of computing environment 630 may produce signatures 624. Security systems 612, 614, and 616 of computing environment 640 may produce signatures 626. Systems described herein may submit signatures 622, 624, and 626 (and information about the co-occurrence of the signatures) to event signature association database 650.

Once event signature association database 650 is populated, systems described herein may identify and/or produce an observation matrix 652 from one or more computing environments, such as computing environment 620, 630, and/or 640. Each row of observation matrix 652 may represent a particular computing device on a particular day, and each column of observation matrix 652 may represent a different observable event signature. Each cell of observation matrix 652 may therefore represent whether (or, e.g., how many times) a given event signature was observed on a given computing device on a given day. A matrix factorization system 660 may query event signature association database 650 to determine whether values of cells within observation matrix 652 may be inferred--e.g., whether, given a row, similar rows produced from computing environments that had a security system that provides a given event signature show that the given event signature was observed. The given row, if produced from a computing environment without the security system that provides the given event signature, may be modified to reflect the inference that the given event signature would have been observed if the security system had been deployed to the computing environment. The systems described herein may thereby generate a predictive matrix 670, reflecting observation matrix 652 enhanced with predictive associations. Thus, computing environment 630 may produce event signature information showing that when event signature A from security system 610 and event signature B from security system 614 co-occur, so does event signature C from security system 612. 
Accordingly, systems described herein may enhance an observation matrix produced by computing environment 620 with the information about signatures 624 submitted by computing environment 630 to event signature association database 650. The enhanced matrix may reflect that, when security system 610 in computing environment 620 produces event signature A and security system 614 in computing environment 620 produces event signature B, event signature C may be imputed to computing environment 620, even though computing environment 620 may lack security system 612 that produces event signature C.
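The inference over similar rows can be illustrated with a simplified sketch. Rather than a full matrix factorization, this example substitutes a plain nearest-row lookup: it compares a row from an environment that lacks a security system against reference rows from environments that have it, using only the columns the first environment can observe, and imputes signatures that most similar rows contain. The row representation (dictionaries of signature counts), the cosine similarity, the function names, and both thresholds are assumptions.

```python
import math


def cosine_on(columns, row_a, row_b):
    """Cosine similarity of two rows restricted to the given columns."""
    dot = sum(row_a.get(c, 0) * row_b.get(c, 0) for c in columns)
    norm_a = math.sqrt(sum(row_a.get(c, 0) ** 2 for c in columns))
    norm_b = math.sqrt(sum(row_b.get(c, 0) ** 2 for c in columns))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def impute_row(row, observable, reference_rows, min_similarity=0.9, min_support=0.5):
    """Infer signatures that the row's environment could not observe.

    `row` and each reference row map signature -> count for one device on one day.
    `observable` is the set of signatures the row's environment is able to produce.
    Returns {signature: fraction of similar reference rows in which it was observed}.
    """
    neighbors = [ref for ref in reference_rows
                 if cosine_on(observable, row, ref) >= min_similarity]
    if not neighbors:
        return {}
    hidden = {sig for ref in neighbors for sig in ref if sig not in observable}
    imputed = {}
    for sig in hidden:
        support = sum(1 for ref in neighbors if ref.get(sig, 0) > 0) / len(neighbors)
        if support >= min_support:
            imputed[sig] = support
    return imputed


# Hypothetical rows: the reference rows stand in for computing environment 630,
# and the target row stands in for computing environment 620.
reference_rows = [{"A": 1, "B": 1, "C": 1}, {"A": 1, "B": 1, "C": 1}, {"A": 1}, {"B": 1, "D": 1}]
imputed = impute_row({"A": 1, "B": 1}, observable={"A", "B"}, reference_rows=reference_rows)
```

In this illustration, event signature C is imputed to the target row because every reference row that shows both A and B also shows C, while D is not imputed because the only row containing it is not similar enough on the observable columns.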

In some examples, systems described herein (e.g., determination module 108) may also use the inferred signature associations described above to enhance the event signature profile comparisons described earlier (e.g., with reference to FIG. 4).

Returning to FIG. 3, at step 308, one or more of the systems described herein may perform, in connection with observing the first event signature, a security action associated with the second event signature and directed to the computing environment based on determining that the first event signature is equivalent to the second event signature. For example, performing module 110 may, as part of computing device 202 in FIG. 2, perform, in connection with observing event signature 222, security action 240 associated with event signature 224 and directed to computing environment 206 based on determining that event signature 222 is equivalent to event signature 224.

The term "security action," as used herein, generally refers to any suitable action that a computing system may perform to protect a computing system and/or environment from a computing security threat and/or vulnerability. Examples of security actions include, without limitation, generating and/or modifying a security report, alerting a user and/or administrator, and/or automatically performing (and/or prompting a user or administrator to perform) a remedial action such as updating an antivirus signature set, executing one or more cleaning and/or inoculation scripts and/or programs, enabling, heightening, and/or strengthening one or more security measures, settings, and/or features, reconfiguring a security system, adding and/or modifying a security rule used by a security system, and/or disabling, quarantining, sandboxing, and/or powering down one or more software, hardware, virtual, and/or network computing resources.
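As a rough sketch of step 308, the example below looks up security actions for an observed event signature and also for any signatures previously determined to be equivalent to it, so that an action configured only for the second event signature is still performed when the first is observed. The data layout, the function name, the signature identifiers, and the action names are hypothetical.

```python
def actions_for(observed_signature, equivalents, actions):
    """Collect actions tied to the observed signature and to its equivalent signatures.

    `equivalents` maps a signature to the set of signatures deemed equivalent to it.
    `actions` maps a signature to a list of action names configured for it.
    """
    candidates = [observed_signature, *sorted(equivalents.get(observed_signature, ()))]
    selected = []
    for signature in candidates:
        for action in actions.get(signature, ()):
            if action not in selected:  # avoid repeating an action
                selected.append(action)
    return selected


# Hypothetical configuration: an action exists only for the second naming scheme.
equivalents = {"vendor_a:SQLi_Attempt": {"vendor_b:sql-injection-detected"}}
actions = {"vendor_b:sql-injection-detected": ["alert_administrator", "quarantine_host"]}
to_perform = actions_for("vendor_a:SQLi_Attempt", equivalents, actions)
```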