Information reproduction/I/O method using dot pattern, information reproduction device, mobile information I/O device, and electronic toy using dot pattern

Yoshida Ja

U.S. patent number 10,192,154 [Application Number 15/187,403] was granted by the patent office on 2019-01-29 for Information reproduction/I/O method using dot pattern, information reproduction device, mobile information I/O device, and electronic toy using dot pattern. The grantee listed for this patent is Kenji Yoshida. Invention is credited to Kenji Yoshida.


| United States Patent | 10,192,154 |

|---|---|

| Yoshida | January 29, 2019 |


Abstract

The present invention proposes a dot pattern on which code information and x and y coordinate information can be defined even if the dot pattern is extremely small, and proposes an information reproducing method and an information reproducing device based on the dot pattern. More specifically, dots generated by a dot code generating algorithm are arranged in accordance with a given rule to form a dot pattern portion on a medium such as a printed material, so that various kinds of multimedia information can be recognized. The medium is scanned as image data by scanning means, the image data is converted into code data, and the multimedia information corresponding to the code data is read out of storing means and reproduced.
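The abstract describes a three-stage flow: scanned image data is converted into code data, the code selects an entry in the storing means, and that entry is reproduced. The following Python sketch shows only that control flow. The image-to-code conversion is passed in as a stub because the actual dot-pattern analysis is the subject of the claims, and every name here (`StoredItem`, `Reproducer`, `handle_scan`, `to_code`) is hypothetical, as is the use of an in-memory dictionary for the storing means.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List, Optional


@dataclass(frozen=True)
class StoredItem:
    """Hypothetical record held by the storing means."""
    kind: str     # e.g. "voice", "text", "video"
    content: str  # stand-in for the actual media data or a file path


class Reproducer:
    """Sketch of the abstract's flow: image data -> code data -> stored item -> output."""

    def __init__(
        self,
        to_code: Callable[[object], Optional[int]],  # hypothetical dot-pattern decoder
        storage: Dict[int, StoredItem],              # plays the role of the storing means
        output: Callable[[StoredItem], None],        # plays the role of the reproducing means
    ) -> None:
        self.to_code = to_code
        self.storage = storage
        self.output = output

    def handle_scan(self, image_data: object) -> Optional[int]:
        """Decode one scanned image and reproduce the matching item; return the code used."""
        code = self.to_code(image_data)
        if code is None:            # no readable dot pattern in this image
            return None
        item = self.storage.get(code)
        if item is None:            # pattern decoded, but nothing is registered for it
            return None
        self.output(item)
        return code


if __name__ == "__main__":
    played: List[StoredItem] = []
    reproducer = Reproducer(
        to_code=lambda image: image.get("code"),  # stub: pretend the image already carries its code
        storage={42: StoredItem("voice", "greeting.wav")},
        output=played.append,
    )
    reproducer.handle_scan({"code": 42})
    print(played)  # [StoredItem(kind='voice', content='greeting.wav')]
```

Keeping the decoder and the output device as injected callables mirrors the way the claims separate scanning, analyzing, storing, and outputting means, so any of them could be swapped for a camera, a speaker, or a display driver in a real device.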

| Inventors: | Yoshida; Kenji (Tokyo, JP) |

|---|---|

| Applicant: | |

| Family ID: | 32046108 |

| Appl. No.: | 15/187,403 |

| Filed: | June 20, 2016 |

Prior Publication Data

| Document Identifier | Publication Date |

|---|---|

| US 20170004339 A1 | Jan 5, 2017 |

Related U.S. Patent Documents

| Application Number | Filing Date | Patent Number | Issue Date |

|---|---|---|---|

| 13155785 | Jun 8, 2011 | 9372548 | |

| 10529440 | Mar 25, 2005 | 7967217 | Jun 28, 2011 |

| PCT/JP03/01264 | Sep 26, 2003 | | |

Foreign Application Priority Data

| Sep 26, 2002 [JP] | 2002-281815 |

| Oct 4, 2002 [JP] | 2002-292907 |

| Dec 27, 2002 [JP] | 2002-380503 |

| Dec 27, 2002 [JP] | 2002-380932 |

| Dec 27, 2002 [JP] | 2002-381743 |

| Current U.S. Class: | 1/1 |

| Current CPC Class: | G06F 3/03545 (20130101); A63H 33/26 (20130101); A63H 3/02 (20130101); G06F 40/58 (20200101); A63H 3/003 (20130101); A63H 33/22 (20130101); A63F 1/00 (20130101); A63F 3/00643 (20130101); G06F 3/0395 (20130101); G06K 19/06 (20130101); A63H 3/28 (20130101); G06K 19/06037 (20130101); G06F 3/167 (20130101); G06K 19/06028 (20130101); G06K 7/10732 (20130101); G06K 7/12 (20130101); G06K 7/10772 (20130101); G06F 3/0317 (20130101); G06K 7/10881 (20130101); G06K 7/10722 (20130101); G06F 3/03543 (20130101); A63H 33/38 (20130101); G06K 7/1413 (20130101); G06K 19/0614 (20130101); G06F 3/0321 (20130101); A63F 2003/00662 (20130101); G06K 2007/10524 (20130101) |

| Current International Class: | G06K 7/10 (20060101); A63F 3/00 (20060101); A63H 3/02 (20060101); G06F 3/16 (20060101); A63H 3/28 (20060101); G06K 7/12 (20060101); G06K 19/06 (20060101); G06F 3/03 (20060101); G06F 3/0354 (20130101); G06F 3/039 (20130101); A63H 3/00 (20060101); A63H 33/22 (20060101); A63H 33/26 (20060101); A63H 33/38 (20060101); G06K 7/14 (20060101); A63F 1/00 (20060101); G06F 17/28 (20060101); G06K 17/00 (20060101) |

| Field of Search: | 235/454, 487, 494 |

References Cited

U.S. Patent Documents

| 4263504 | April 1981 | Thomas |

| 4604065 | August 1986 | Frazer et al. |

| 4627819 | December 1986 | Burrows |

| 5051736 | September 1991 | Bennett et al. |

| 5124536 | June 1992 | Priddy et al. |

| 5128528 | July 1992 | Heninger |

| 5329107 | July 1994 | Priddy et al. |

| 5329108 | July 1994 | Lamoure |

| 5416312 | May 1995 | Lamoure |

| 5577774 | November 1996 | Morikawa et al. |

| 5591957 | January 1997 | Morikawa et al. |

| 5612524 | March 1997 | Sant'Anselmo et al. |

| 5661506 | August 1997 | Lazzouni et al. |

| 5852434 | December 1998 | Sekendur |

| 5860679 | January 1999 | Fukuda et al. |

| 5866895 | February 1999 | Fukuda et al. |

| 5874718 | February 1999 | Matsui |

| 5878023 | March 1999 | Matsui |

| 5892846 | April 1999 | Davis |

| 5896403 | April 1999 | Nagasaki et al. |

| 5897669 | April 1999 | Matsui |

| 5920661 | July 1999 | Mori et al. |

| 5971279 | October 1999 | Raistrick et al. |

| 5992752 | November 1999 | Wilz, Sr. et al. |

| 6012961 | January 2000 | Sharpe, III et al. |

| 6043899 | March 2000 | Morohashi et al. |

| 6050731 | April 2000 | Matsui |

| 6052813 | April 2000 | Nagasaki et al. |

| 6058498 | May 2000 | Nagasaki et al. |

| 6072917 | June 2000 | Mori et al. |

| 6116510 | September 2000 | Nishino |

| 6119937 | September 2000 | Wang et al. |

| 6133927 | October 2000 | Arai et al. |

| 6186405 | February 2001 | Yoshioka |

| 6244764 | June 2001 | Lei et al. |

| 6360948 | March 2002 | Yang et al. |

| 6438251 | August 2002 | Yamaguchi |

| 6446866 | September 2002 | Tatsuta |

| 6460155 | October 2002 | Nagasaki et al. |

| 6502756 | January 2003 | Fahraeus |

| 6548768 | April 2003 | Pettersson et al. |

| 6561423 | May 2003 | Yoshioka |

| 6570104 | May 2003 | Ericson et al. |

| 6586688 | July 2003 | Wiebe |

| 6627870 | September 2003 | Lapstun et al. |

| 6633008 | October 2003 | Johnson |

| 6633526 | October 2003 | Imade et al. |

| 6663008 | December 2003 | Pettersson et al. |

| 6666376 | December 2003 | Ericson |

| 6674427 | January 2004 | Pettersson et al. |

| 6732927 | May 2004 | Olsson et al. |

| 6964373 | November 2005 | Sasaki et al. |

| 7328845 | February 2008 | Tsai |

| 7475824 | January 2009 | Yoshida |

| 7664312 | February 2010 | Yoshida |

| 7967217 | June 2011 | Yoshida |

| 8031375 | October 2011 | Yoshida |

| 8237983 | August 2012 | Yoshida |

| 8253982 | August 2012 | Yoshida |

| 8430328 | April 2013 | Yoshida |

| 8553284 | October 2013 | Yoshida |

| 9372548 | June 2016 | Yoshida |

| 2002/0030104 | March 2002 | Matsui et al. |

| 2002/0033820 | March 2002 | Wiebe |

| 2002/0044138 | April 2002 | Edso et al. |

| 2002/0159089 | October 2002 | Wiebe et al. |

| 2003/0012455 | January 2003 | Olsson et al. |

| 2005/0052682 | March 2005 | Ishikawa et al. |

| 2005/0173533 | August 2005 | Pettersson |

| 2005/0173544 | August 2005 | Yoshida |

| 2006/0154559 | July 2006 | Yoshida |

| 2007/0023523 | February 2007 | Onishi |

| 2007/0164110 | July 2007 | Yoshida |

| 2008/0149714 | June 2008 | Cheung et al. |

| 2008/0149722 | June 2008 | Cheung et al. |

| 2008/0252916 | October 2008 | Huang |

| 2012/0325910 | December 2012 | Yoshida |

| 2013/0050724 | February 2013 | Yoshida |

| 2013/0334322 | December 2013 | Yoshida |

| 2014/0029062 | January 2014 | Yoshida |

Foreign Patent Documents

| 2 374 716 | Nov 2000 | CA |

| 2 374 808 | Dec 2000 | CA |

| 2 519 271 | Sep 2004 | CA |

| 0 407 734 | Jan 1991 | EP |

| 0 670 555 | Sep 1995 | EP |

| 1 159 991 | Dec 2001 | EP |

| 1 229 71 | Aug 2002 | EP |

| 1 548 635 | Jun 2005 | EP |

| 1 605 395 | Dec 2005 | EP |

| 1 703 434 | Sep 2006 | EP |

| 1 833 001 | Sep 2007 | EP |

| 1 876 555 | Jan 2008 | EP |

| 2 349 836 | Nov 2000 | GB |

| 61-065213 | Apr 1986 | JP |

| 7-141104 | Jun 1995 | JP |

| 07-178257 | Jul 1995 | JP |

| 07-296387 | Nov 1995 | JP |

| 8-171620 | Jul 1996 | JP |

| 09-185488 | Jul 1997 | JP |

| 10-187907 | Jul 1998 | JP |

| 10-257309 | Sep 1998 | JP |

| 2000-285225 | Oct 2000 | JP |

| 2000-325668 | Nov 2000 | JP |

| 2001-318926 | Nov 2001 | JP |

| 2001-346032 | Dec 2001 | JP |

| 2002-002024 | Jan 2002 | JP |

| 2002-041199 | Feb 2002 | JP |

| 2002-149331 | May 2002 | JP |

| 2002-205291 | Jul 2002 | JP |

| 2003-511761 | Mar 2003 | JP |

| 2002-380503 | Apr 2004 | JP |

| 2002-380932 | Apr 2004 | JP |

| 2002-381743 | Apr 2004 | JP |

| 2005-031932 | Feb 2005 | JP |

| 2004/029871 | Apr 2004 | WO |

| 2126598 | Feb 1999 | RU |

| 2 166 207 | Apr 2001 | RU |

| 98/14346 | Apr 1998 | WO |

| 99/50787 | Oct 1999 | WO |

| 00/72124 | Nov 2000 | WO |

| 00/73981 | Dec 2000 | WO |

| 01/26032 | Apr 2001 | WO |

| 01/48591 | Jul 2001 | WO |

| 01/61455 | Aug 2001 | WO |

| 02/23464 | Mar 2002 | WO |

| 03/049023 | Jun 2003 | WO |

| 2004/084125 | Sep 2004 | WO |

| 2005/064523 | Jul 2005 | WO |

Other References

Non-Final Office Action dated Feb. 3, 2017, issued in U.S. Appl. No. 15/187,356. (30 pages). cited by applicant . Non-Final Office Action dated Dec. 28, 2016, issued in U.S. Appl. No. 15/281,841. (33 pages). cited by applicant . U.S. Non-Final Office Action dated Feb. 14, 2012, issued in U.S. Appl. No. 11/993,527 (5 pages). cited by applicant . European Search Report dated Jun. 18, 2010, issued in corresponding European Patent Application No. 05737287.2 (6 pages). cited by applicant . International Search Report of PCT/JP2005/008210, dated Aug. 16, 2005 (1 page). cited by applicant . European Search Report dated Feb. 17, 2006 issued in corresponding European Patent Application No. 03798532.2 (4 pages). cited by applicant . Russian Office Action dated Jan. 19, 2009, issued in Russian Patent Application No. 2007128915/09(031489) (Cited in U.S. Appl. No. 11/794,174 (now U.S. Pat. No. 8,237,983); (11 pages). cited by applicant . Russian Office Action dated Nov. 17, 2008 (mailing date), issued in Russian Patent Application No. 2007128915/09(031489). (Cited in U.S. Appl. No. 11/794,174 (now U.S. Pat. No. 8,237,983); (5 pages). cited by applicant . International Preliminary Report on Patentability dated Oct. 30, 2007, issued in PCT Application No. PCT/JP2005/008210 (Form PCT/IB/373 and Form PCT/ISA/237). Cited in U.S. Appl. No. 11/794,174 (4 pages). cited by applicant . International Search Report of PCT/JP2004/019613, dated Apr. 12, 2005 (2 pages). cited by applicant . International Preliminary Report dated Jul. 3, 2007, issued in PCT Application No. PCT/JP2004/019613 (Form PCT/IB/373 and Form PCT/ISA/237) (4 pages). cited by applicant . U.S. Notice of Allowance dated Apr. 4, 2012, issued in U.S. Appl. No. 11/794,174 (now U.S. Pat. No. 8,237,983) (14 pages). cited by applicant . U.S. Non-Final Office Action dated Jul. 12, 2011, issued in U.S. Appl. No. 11/794,174 (now U.S. Pat. No. 8,237,983) (13 pages). cited by applicant . Extended European Search Report dated Aug. 14, 2013, issued in European Patent Application No. 12173995.7 (10 pages). cited by applicant . European Opposition dated Aug. 13, 2013, issued in European Patent Application No. 05737287.2 (40 pages). cited by applicant . German Cancellation Action dated Apr. 30, 2012, issued in German Patent Application DE 202005022002.7, w/English-language explanation of relevance (27 pages). cited by applicant . U.S. Notice of Allowance dated Nov. 28, 2011, issued in U.S. Appl. No. 11/794,174 (now U.S. Pat. No. 8,237,983) (9 pages). cited by applicant . U.S. Non-Final Office Action dated Jan. 13, 2012, issued in U.S. Appl. No. 13/251,903 (10 pages). cited by applicant . U.S. Office Action dated Jul. 12, 2011, issued in U.S. Appl. No. 11/794,174. cited by applicant . European Search Report dated Apr. 25, 2006 issued in corresponding European Patent Application No. 03798532.2 (5 pages). cited by applicant . European Search Report dated Aug. 5, 2008 issued in corresponding European Patent Application No. 08008386.8 (8 pages). cited by applicant . U.S. Final Office Action dated Jul. 23, 2012, issued in U.S. Appl. No. 11/993,527 (11 pages). cited by applicant . U.S. Notice of Allowance dated Apr. 25, 2012, issued in U.S. Appl. No. 13/251,903 (12 pages). cited by applicant . U.S. Non-Final Office Action dated Jun. 20, 2013, issued in U.S. Appl. No. 13/566,637 (071757A) (36 pages). cited by applicant . U.S. Notice of Allowance dated May 7, 2015, issued in U.S. Appl. No. 13/872,555 (5 pages). cited by applicant . Russian Office Action dated Apr. 28, 2005, issued in Russian Patent Application No. 2007-144103 (8 pages). cited by applicant . U.S. Office Action dated Jan. 14, 2015 issued in U.S. Appl. No. 13/872,555 (5 pages). cited by applicant . European Office Action dated Jun. 18, 2010 issued in European Patent Application No. 05737287.2 (6 pages). cited by applicant . U.S. Notice of Allowance dated Jan. 3, 2013, issued in U.S. Appl. No. 11/993,527 (8 pages). cited by applicant . U.S. Final Office Action dated Jun. 24, 2010, issued in U.S. Appl. No. 10/529,440 (16 pages). cited by applicant . U.S. Non-Final Office Action dated Nov. 12, 2009, issued in U.S. Appl. No. 10/529,440 (8 pages). cited by applicant . U.S. Non-Final Office Action dated Apr. 9, 2014, issued in U.S. Appl. No. 13/872,555 (8 pages). cited by applicant . U.S. Office Action dated Mar. 6, 2014, issued in U.S. Appl. No. 13/566,637 (44 pages). cited by applicant . U.S. Notice of Allowance dated Dec. 9, 2014, issued in U.S. Appl. No. 14/047,759 (12 pages). cited by applicant . U.S. Final Office Action dated Jan. 2, 2015, issued in U.S. Appl. No. 13/566,637 (52 pages). cited by applicant . U.S. Notice of Allowance dated Mar. 30, 2015, issued in U.S. Appl. No. 14/047,759 (9 pages). cited by applicant . U.S. Notice of Allowance dated Aug. 21, 2014, issued in U.S. Appl. No. 14/047,759 (7 pages). cited by applicant . Office Action dated Dec. 19, 2013, issued in U.S. Appl. No. 14/047,759 (14 pages). cited by applicant . Decision on Grant, issued in corresponding Russian Patent Application No. 2005112458/09(014383) having a filing date of Sep. 26, 2003 (37 pages). cited by applicant . "Data Matrix" article in Wikipedia captured Nov. 24, 2014 at http://en.wikipedia.org/wiki/Data_Matrix (13 pages). cited by applicant . Text (without images) of Pettersson (WO 2003049023 A1) published Jun. 12, 2003 and retrieved at http://www.google.com/patents/WO2003049023A1 (9 pages). cited by applicant . International Search Report, dated Oct. 19, 2006, issued in PCT/SG2006/000185 (3 pages). cited by applicant . U.S. Final Office Action dated Apr. 11, 2016, issued in U.S. Appl. No. 14/047,759 (12 pages). cited by applicant . Communication of Notice of Opposition dated Jun. 24, 2015, issued in counterpart EP application No. 06748126.7, (78 pages). cited by applicant . Taiwanese Office Action dated Jun. 26, 2015, issued in counterpart Taiwanese Patent Application No. 103119047 (with partial translation) (4 pages). cited by applicant . U.S. Non-Final Office Action dated Sep. 11, 2015, issued in U.S. Appl. No. 13/56,637 (55 pages). cited by applicant . U.S. Non-Final Office Action dated Aug. 10, 2015, issued in U.S. Appl. No. 14/546,705 (14 pages). cited by applicant . U.S. Notice of Allowance dated Aug. 24, 2015, issued in U.S. Appl. No. 13/872,555 (28 pages). cited by applicant . U.S. Non-Final Office Action dated Aug. 14, 2015, issued in U.S. Appl. No. 14/047,759 (13 pages). cited by applicant . Russian Office Action dated Feb. 19, 2008, issued in corresponding Russian Patent Application No. 2005112458/09(014383). cited by applicant . U.S. Notice of Allowance dated Oct. 20, 2016, issued in U.S. Appl. No. 14/047,759 (7 pages). cited by applicant . U.S. Non-Final Office Action dated Jul. 29, 2016, issued in U.S. Appl. No. 13/566,637 (75 pages). cited by applicant . Non-Final Office Action dated Jul. 20, 2017, issued in U.S. Appl. No. 15/187,264 (8 pages). cited by applicant . Non-Final Office Action dated May 4, 2017, issued in U.S. Appl. No. 15/187,046. (36 pages). cited by applicant . Notice of Allowance dated May 19, 2017, issued in U.S. Appl. No. 15/187,356. (11 pages). cited by applicant . Final Office Action dated Jan. 3, 2018, issued in U.S. Appl. No. 15/187,046 (17 pages). cited by applicant . Notice of Allowance dated Dec. 1, 2017, issued in U.S. Appl. No. 15/187,150 (44 pages). cited by applicant . Notice of Allowance dated Jan. 31, 2018, issued in U.S. Appl. No. 15/187,264 (8 pages). cited by applicant.

Primary Examiner: Hess; Daniel

Attorney, Agent or Firm: Westerman, Hattori, Daniels & Adrian, LLP

Parent Case Text

CROSS-REFERENCE TO RELATED APPLICATIONS

This application is a divisional of U.S. application Ser. No. 13/155,785, filed on Jun. 8, 2011, and wherein U.S. application Ser. No. 13/155,785 is a continuation of U.S. application Ser. No. 10/529,440, filed on Mar. 25, 2005, and wherein U.S. application Ser. No. 10/529,440 was filed under 35 U.S.C. § 371 of International Application No. PCT/JP2003/01264, filed on Sep. 26, 2003, and which is based upon and claims the benefit of priority of prior Japanese Patent Application No. 2002-281815, filed on Sep. 26, 2002, Japanese Patent Application No. 2002-292907, filed on Oct. 4, 2002, and Japanese Patent Applications Nos. 2002-380503, 2002-380932 and 2002-381743, each filed on Dec. 27, 2002, the entire contents of which are incorporated herein by reference.

Claims

The invention claimed is:

1. A dot pattern comprising: reference dots that define a first direction line being vertical or horizontal and a second direction line perpendicularly crossing with the first direction line, and that are equally spaced along the first direction line or the second direction line; at least one dot for recognition of at least one of a range and an orientation of the dot pattern, that is arranged by shifting at least one of the reference dots from the first direction line or the second direction line to a position in a range narrower than an equidistance between the reference dots, or, that is arranged at a position that is shifted from the first direction line or the second direction line in a range narrower than an equidistance between the reference dots in addition to at least one of the reference dots; and information dots that define information by either (A) or (B); (A) an arrangement of shifting the information dots from virtual points that are intersections of virtual lines that pass through the reference dots defining the second direction line and are parallel to the first direction line and virtual lines that pass through the reference dots defining the first direction line and are parallel to the second direction line; (B) an arrangement of shifting the information dots from virtual points that are center points of lattice areas each of which is surrounded by a lattice including the reference dots.

2. The dot pattern according to claim 1, wherein the at least one dot is used for recognition of an angle of scanning means with respect to the orientation of the dot pattern.

3. The dot pattern according to claim 1, wherein the information dots define information by at least one of a distance and a direction from the virtual points.

4. The dot pattern according to claim 1, wherein the reference dots are arranged in a lattice form.

5. The dot pattern according to claim 1, wherein the reference dots and the information dots are alternately arranged in a lattice form.

6. The dot pattern according to claim 1, wherein the reference dots are arranged in a first direction, and the reference dots are arranged in a second direction that is perpendicular to the first direction.

7. The dot pattern according to claim 6, wherein the reference dots arranged in the first direction configure a plurality of lines at a first predetermined interval, and the reference dots arranged in the second direction configure a plurality of lines at a second predetermined interval.

8. A medium wherein the medium is formed with the dot pattern according to claim 1 thereon.

9. The medium on which the dot pattern is formed according to claim 8, wherein the dot pattern is printed with an ink that absorbs infrared light or an ink that reacts to ultraviolet light.

10. The medium on which the dot pattern is formed according to claim 8 wherein the dot pattern is printed with a carbon ink.

11. The medium on which the dot pattern is formed according to claim 8, wherein the dot pattern is printed with a transparent ink.

12. The medium on which the dot pattern is formed according to claim 8, wherein the medium is a printed material or a photograph and formed with the dot pattern that is formed superposedly on an illustration or letters of the medium.

13. The medium on which the dot pattern is formed according to claim 8, wherein the dot pattern is printed over a plurality of areas in accordance with the illustration or letters of the medium.

14. The medium on which the dot pattern is formed according to claim 8 wherein the medium is an attachable seal member or card.

15. A dot pattern scanning method comprising: scanning the dot pattern according to claim 1; analyzing the dot pattern and acquiring a data content defined by the dot pattern.

16. An information processing method using a dot pattern, including the steps of: by scanning means, scanning the medium on which the dot pattern according to claim 1 is formed; by processing means, extracting dots from the dot pattern; analyzing a data content defined by the dot pattern in accordance with the arrangement of the dots; and outputting information corresponding to the data content stored in storing means via outputting means.

17. A dot pattern scanning device comprising: with respect to a medium on which the dot pattern according to claim 1 is formed, scanning means for scanning the dot pattern of the medium.

18. The dot pattern scanning device according to claim 17 further comprising: extracting means for extracting dots from the dot pattern.

19. The dot pattern scanning device according to claim 18 further comprising: analyzing means for analyzing a data content defined by the dot pattern in accordance with the arrangement of the dots.

20. The dot pattern scanning device according to claim 19, further comprising: transmission means for wiredly or wirelessly transmitting the information to an external device.

21. An information processing device using a dot pattern comprising: with respect to a medium on which the dot pattern according to claim 1 is formed, scanning means for scanning the dot pattern of the medium; extracting means for extracting dots from the dot pattern; analyzing means for analyzing a data content defined by the dot pattern in accordance with the arrangement of the dots; storing means for storing information corresponding to the data content; and outputting means for outputting the information corresponding to the data content.

22. A program that causes an information processing device to execute the steps of: extracting dots from the dot pattern according to claim 1; and analyzing a data content defined by the dot pattern in accordance with the arrangement of the dots.

23. An information processing device using a dot pattern, comprising: extracting means for extracting dots from the dot pattern according to claim 1; and analyzing means for analyzing a data content defined by the dot pattern in accordance with the arrangement of the dots.

24. An information processing device using a dot pattern, comprising: a transparent film that is a medium on which the dot pattern according to claim 1 is formed; a dot pattern scanning device that comprises: scanning means, when the transparent film is attached on display means of an electronic device, scanning and capturing the dot pattern of the transparent film in accordance with instruction information displayed on the display means; extracting means for extracting dots from the dot pattern; analyzing means for analyzing a data content defined by the dot pattern in accordance with the arrangement of the dots; and transmitting means for wiredly or wirelessly transmitting the data content to an external device; and the electronic device that comprises: storing means for storing information corresponding to the transmitted data content; and means for reading the information and outputting the information via outputting means.

25. The information processing device using the dot pattern according to claim 24, wherein an infrared cut-off filter is arranged between the transparent film and a screen of the display means.

26. The information processing device using the dot pattern according to claim 24, wherein the electronic device is a personal computer, a PDA, a portable phone, or a television.

27. An information processing device using a dot pattern comprising: a mouse pad that is a medium on which the dot pattern according to claim 1 is formed; a mouse that comprises: scanning means, that is housed in a mouse-shaped case, for scanning and capturing the dot pattern formed on the mouse pad; extracting means for extracting dots from the dot pattern; analyzing means for analyzing a data content defined by the dot pattern in accordance with the arrangement of the dots; and transmitting means for wiredly or wirelessly transmitting the data content to an external device.

28. An information processing device comprising: with respect to a printed material on which only the dot pattern according to claim 1 is printed, a camera unit for capturing the dot pattern in the printed material; an image processing portion that comprises: extracting means for extracting dots from the dot pattern; and analyzing means for analyzing a data content defined by the dot pattern in accordance with the arrangement of the dots; processing means for reading information corresponding to the data content from a storing portion; and outputting means for outputting the information.

29. The information processing device according to claim 28, wherein, with respect to a printed material on which the dot pattern is printed with an ink that reacts to predetermined wavelength light and on a same surface of which an information transfer portion that includes letters or an illustration is printed with an ink that does not react to the predetermined wavelength light, the camera unit captures only the dot pattern in the printed material by radiating the printed material with the predetermined wavelength light.

30. The information processing device according to claim 29, wherein the ink that reacts to the predetermined wavelength light is a carbon ink, an infrared ink, or a transparent ink, and the ink that does not react to the predetermined wavelength light is a non-carbon ink.

31. The information processing device according to claim 28, wherein a ring switch is provided around the camera unit, and pressing the ring switch causes the camera unit or the image processing portion to operate.

32. The information processing device according to claim 28, wherein a camera comprised in the camera unit is a C-MOS camera or a CCD camera.

33. The information processing device according to claim 28, wherein the camera unit is configured separately from the image processing portion, the storing portion, the processing means, and the outputting means to carry out transmission of image data of the dot pattern from the camera unit to the image processing portion via an interface portion.

34. The information processing device according to claim 28, wherein the camera unit and the image processing portion are configured separately from the storing portion, the processing means and the outputting means to carry out transmission of the data content from the image processing portion to the processing means via an interface portion.

35. The information processing device according to claim 28 comprising: a microphone as an inputting portion; and associating means for associating a voice input via the microphone with the dot pattern as the data content.

36. The information processing device according to claim 28, wherein dots are extracted from the dot pattern input by the camera unit, the data content defined by the dot pattern is analyzed in accordance with the arrangement of the dots, and the data content is transmitted to a computer via a communication card.

37. The information processing device according to claim 28, wherein dots are extracted from the dot pattern input by the camera unit, the data content defined by the dot pattern is analyzed in accordance with the arrangement of the dots, the data content is transmitted to a computer via a communication card, and information or a program corresponding to the data content is received from the computer via the communication card.

38. The information processing device according to claim 28 further comprising a GPS (global positioning system) unit for inputting position information to output information based on the position information as well as the data content from the outputting means.

39. The information processing device according to claim 28 wherein the information processing device is a portable phone.

40. The information processing device according to claim 39 wherein the portable phone includes the camera unit that is integrally configured therein, mounted thereon, or wiredly or wirelessly connected thereto.

41. An electronic toy comprising: a voice storing portion for storing a voice corresponding to the dot pattern according to claim 1; a camera unit for capturing and reading the dot pattern; and a processing portion for extracting dots from the dot pattern read by the camera unit, analyzing a data content defined by the dot pattern in accordance with the arrangement of the dots, and outputting via a speaker or an earphone a voice corresponding to the data content from the voice storing portion.

42. The electronic toy according to claim 41 further comprising a liquid crystal display for displaying an image or a video corresponding to the data content by an image reproducing LSI.

43. A figure unit having an information outputting function by a camera input comprising: a figure of a given shape; a camera unit that incorporates: scanning means for scanning the dot pattern according to claim 1; and an image processing portion that comprises: extracting means for extracting dots from the dot pattern; and analyzing means for analyzing a data content defined by the dot pattern in accordance with the arrangement of the dots; and an outputting unit that comprises: a processing portion for, according to the data content, executing a program that includes an instruction for outputting information stored in a storing portion; and an outputting portion for outputting the information.

44. The figure unit according to claim 43 wherein the camera unit is provided on a bottom of the figure and facing downward.

45. A mouse pad wherein the mouse pad is a medium on which the dot pattern according to claim 1 is formed.

46. A mouse comprising: scanning means for scanning a dot pattern formed on the mouse pad according to claim 45.

47. A tablet wherein the tablet is a medium on which the dot pattern according to claim 1 is formed.

48. An electronic toy comprising: a board medium on which the dot pattern according to claim 1 is printed superposedly on a text or an image; scanning means for scanning the dot pattern; and outputting means for, when a plurality of the dot patterns are sequentially read out, randomly outputting any one of comments among a plurality of comments corresponding to a combination of the read dot patterns.

49. A foreign language translator comprising: scanning means for scanning the dot pattern according to claim 1 that is printed superposedly on a text; extracting means for extracting dots from the dot pattern; analyzing means for analyzing a data content defined by the dot pattern in accordance with the arrangement of the dots, and outputting means for outputting a translated text corresponding to a content of the text as information corresponding to the data content.

50. An electronic toy comprising: a mini figure that is provided with the dot pattern according to claim 1 on a back side of a seat; a voice storing portion, that is housed in a case main body, for storing voice information corresponding to a character of the mini figure; a camera unit arranged upward on top of the case main body so as to scan the dot pattern by placing the mini figure thereon; extracting means for extracting dots from the read dot pattern; recognizing means for recognizing an orientation of the dot pattern; analyzing means for analyzing a data content defined by the dot pattern in accordance with the arrangement of the dots; and a processing portion for reading the voice information of the character of the mini figure corresponding to the data content from the voice storing portion and reproducing the voice information via a speaker by a voice reproducing LSI.

51. An electronic toy according to claim 50 wherein the electronic toy can connect to a network by connecting to a personal computer via connecting means provided on the case main body.

52. An information reproducing device comprising: a pen type scanner that includes scanning means for scanning the dot pattern according to claim 1; and a device main body for reading video data corresponding to the dot pattern from a memory card or a built-in memory and outputting the video data on a television monitor.

53. A personal digital assistant (PDA) comprising a scanner portion that includes scanning means for scanning the dot pattern according to claim 1.

54. A personal computer comprising a scanner portion that includes scanning means for scanning the dot pattern according to claim 1.

55. A pen type scanner comprising: a liquid crystal display on a side of a pen type member; a scanner portion that includes a writing material provided at an end of the pen type member and scanning means for scanning the dot pattern according to claim 1; and a central processing unit for, when the dot pattern corresponding to a content selected by the writing material is read out, reading text information corresponding to the dot pattern from a memory and displaying the text information on the liquid crystal display.

56. A pen type scanner comprising: a liquid crystal display on a side of a pen type member; a scanner portion that includes scanning means for scanning the dot pattern according to claim 1 at an end of the pen type member; and a central processing unit for, when the dot pattern is read out, reading text information corresponding to the dot pattern from a memory and displaying the text information on the liquid crystal display.
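
The comment selection recited in claim 48 can be pictured with a short Python sketch. It assumes, purely for illustration, that each scanned dot pattern has already been decoded to an integer code, that a "combination" is order-independent (so codes are sorted before lookup), and that the comment table is a plain dictionary. The names `pick_comment` and `CommentTable` are hypothetical.

```python
import random
from typing import Dict, List, Optional, Sequence, Tuple

# Hypothetical comment table: a sorted tuple of decoded dot-pattern codes
# maps to the candidate comments registered for that combination.
CommentTable = Dict[Tuple[int, ...], List[str]]


def pick_comment(read_codes: Sequence[int], table: CommentTable,
                 rng: random.Random) -> Optional[str]:
    """Randomly choose one comment for the combination of codes read in sequence.

    The combination is treated as order-independent (an assumption), so the
    codes are sorted before the lookup. Returns None if the combination
    is not registered.
    """
    key = tuple(sorted(read_codes))
    candidates = table.get(key)
    if not candidates:
        return None
    return rng.choice(candidates)


if __name__ == "__main__":
    demo: CommentTable = {(101, 202): ["Great pair!", "They are friends.", "Try another card."]}
    generator = random.Random()
    print(pick_comment([202, 101], demo, generator))  # one of the three comments
    print(pick_comment([999], demo, generator))       # None
```

Passing a `random.Random` instance rather than using the module-level functions keeps the choice reproducible in testing when the generator is seeded.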

Description

BACKGROUND OF THE INVENTION

Field of the Invention

The present invention relates to a technique of optically scanning dot pattern information formed on a printed material and reproducing various kinds of information corresponding to the dot pattern information.

Description of the Related Art

Heretofore, there has been proposed a voice emitting toy that reads a barcode printed on a picture book or a game card using an optical sensor and emits a particular voice. Such a toy reads, from a memory, the voice information corresponding to the scanned barcode and reproduces it.

However, a technique using a barcode requires a dedicated area on the paper to be reserved for printing the barcode. The barcode is meant only for an information processing system to read; its coded content cannot be understood visually by a reader of a book such as a picture book. Because the barcode occupies part of a limited page space, readers find it unattractive, and the barcode may reduce the product value of the book.

Further, as mentioned above, a barcode cannot be printed over letters, graphics or symbols printed on a paper sheet. When these letters, graphics, symbols and the like are used to reproduce voices, the barcode has to be printed next to them, which makes it difficult for a reader to intuitively know what voice information or the like is associated with them.

Regarding this point, a "dot code" technique disclosed in the Japanese Laid-Open patent publication No. 10-261059 proposes a method for scanning code information printed by a dot pattern to reproduce information.

In this related art, data is defined by the way a dot pattern is arranged in a block field, and a marker defined by a dot pattern different from the data dot pattern serves as a synchronization signal. According to this technique, a dot pattern created by printing dots two-dimensionally on a paper sheet in accordance with a predetermined rule is read by a pen type scanner, and the scanning speed and scanning direction of the scanner are analyzed by an information processing device to reproduce information, such as a voice, associated with the pattern in advance.

However, because such a dot code technique assumes that the dots are scanned dynamically by a moving scanner, it can reproduce voice information along letters printed on a paper sheet but is not suited to reproducing information merely by statically touching a scanning device to a picture book or the like on which characters and other elements are freely arranged and printed. In other words, since this technique requires scanning over more than a predetermined distance in the x and y directions to obtain meaningful code information, it is impossible to associate a dot code with a minimal area and print the code within that area.

SUMMARY OF THE INVENTION

The present invention proposes a dot pattern that can define code information or x and y coordinates even when the dot pattern occupies only a minimal area, together with an information reproducing method and an information reproducing device based on the dot pattern.

A first aspect of the invention is configured to include the steps of:

scanning as image data by scanning means (602) a medium such as a printed material (606) on which is formed a dot pattern portion (607), the dot pattern portion being formed by arranging in accordance with a given rule dots (605) generated by a dot code generating algorithm, in order to recognize various kinds of multimedia information; converting the image data of the dot pattern portion (607) into code data; and reading multimedia information corresponding to the code data out of storing means to reproduce the multimedia information.
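
The three steps of the first aspect (scan, convert to code data, look up and reproduce) can be sketched as follows. This is a minimal Python illustration under stated assumptions, not the patented decoder: the image is assumed to have already been binarized into a grid of dot cells, the hypothetical `image_bits_to_code` simply reads that grid row by row as binary digits, and the storing means is modelled as a dictionary from code to a hypothetical `Multimedia` record.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class Multimedia:
    kind: str     # e.g. "voice", "image", "text"
    payload: str  # stand-in for the stored data (a file name here)


def image_bits_to_code(bits: List[List[int]]) -> int:
    """Hypothetical conversion of a binarized dot grid into code data.

    Cells are read row by row, most significant bit first.
    """
    code = 0
    for row in bits:
        for cell in row:
            code = (code << 1) | (1 if cell else 0)
    return code


def reproduce(bits: List[List[int]], storing_means: Dict[int, Multimedia]) -> Multimedia:
    """Convert the scanned grid to code data and read the matching multimedia."""
    code = image_bits_to_code(bits)
    try:
        return storing_means[code]
    except KeyError:
        raise LookupError(f"no multimedia registered for code {code}") from None


if __name__ == "__main__":
    storing = {11: Multimedia("voice", "lion_roar.wav")}
    print(reproduce([[1, 0], [1, 1]], storing))  # grid 1011 -> code 11
```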

The multimedia information here may be any of the following: voice information, image information, video information, and visible, audible or readable information such as letters and symbols. Further, the multimedia information may be digital data that another personal computer, a television system or a radio terminal uses to reproduce video/image information, text information and the like.

Here, the dot pattern portion (607) may define code information corresponding to voice data registered in the storing means, x and y coordinates, or both. A flag may be registered in a header of the dot pattern portion (607) to indicate whether the dot pattern portion defines code information or x and y coordinates.
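
How a header flag might switch between the two interpretations is sketched below. The 32-bit layout is entirely hypothetical (flag in the top bit; otherwise a 31-bit code, or 15-bit x and y fields with bit 30 unused); the text above does not specify field widths, and `decode_dot_data` is an illustrative name.

```python
from typing import Tuple, Union

FLAG_SHIFT = 31      # hypothetical: header flag is the top bit of a 32-bit word
COORD_BITS = 15      # hypothetical: 15 bits each for x and y
COORD_MASK = (1 << COORD_BITS) - 1


def decode_dot_data(word: int) -> Union[Tuple[str, int], Tuple[str, int, int]]:
    """Interpret a decoded 32-bit value as code information or x/y coordinates."""
    if not 0 <= word < (1 << 32):
        raise ValueError("expected a 32-bit unsigned value")
    flag = word >> FLAG_SHIFT
    if flag == 0:
        # Flag clear: the remaining 31 bits carry code information.
        return ("code", word & ((1 << FLAG_SHIFT) - 1))
    # Flag set: bits 15-29 hold x, bits 0-14 hold y (bit 30 unused).
    x = (word >> COORD_BITS) & COORD_MASK
    y = word & COORD_MASK
    return ("xy", x, y)


if __name__ == "__main__":
    print(decode_dot_data(12345))
    print(decode_dot_data((1 << 31) | (100 << 15) | 200))
```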

The medium may be a picture book or a photograph. The dot pattern portion (607) for recognizing voice information corresponding to an image (606b) of the picture book or the like may be printed over the image (606b).

The dot pattern portion (607) may be printed on a seal member.

The dot pattern portion (607) may be formed on a transparent film (611). In this case, the transparent film (611) may be laid over a paper sheet, or may be attached to display means (613) of an electronic device as a touch panel (612). The display means (613) then displays instruction information that prompts the user to operate the scanning means.
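
When the film is used as a touch panel over display means, a decoded x and y coordinate has to be related to what the display is showing. The sketch below assumes that the film and the display share an origin and that a fixed scale factor (`units_per_pixel`) converts pattern coordinates to pixels; `Region`, `film_to_screen` and `hit_test` are hypothetical names, and this particular mapping is not prescribed by the text.

```python
from dataclasses import dataclass
from typing import List, Optional, Tuple


@dataclass
class Region:
    label: str   # instruction shown on the display, e.g. "Start"
    left: int
    top: int
    right: int   # exclusive
    bottom: int  # exclusive


def film_to_screen(x: int, y: int, units_per_pixel: float) -> Tuple[int, int]:
    """Assumed linear mapping from film coordinates to display pixels."""
    return int(x / units_per_pixel), int(y / units_per_pixel)


def hit_test(x: int, y: int, units_per_pixel: float, regions: List[Region]) -> Optional[str]:
    """Return the label of the displayed region under the scanned point, if any."""
    sx, sy = film_to_screen(x, y, units_per_pixel)
    for r in regions:
        if r.left <= sx < r.right and r.top <= sy < r.bottom:
            return r.label
    return None


if __name__ == "__main__":
    buttons = [Region("Start", 0, 0, 100, 50), Region("Quit", 0, 50, 100, 100)]
    print(hit_test(40, 120, 2.0, buttons))  # pixel (20, 60) lies in "Quit"
```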

Between the touch panel (612) and the medium such as the paper sheet or the display means (613), an infrared cutoff filter (614) may be arranged.

In addition to the case that the touch panel is attached to the aforementioned display means (613), the touch panel may be attached on a book such as a picture book, a figure or the like.

Here, the scanning means (602) may be configured separately from an electronic device such as a personal computer (608), a PDA or a portable phone, with data communication between them established by wired, radio or optical communication. Alternatively, the scanning means (602) may be housed integrally in the electronic device. In this case, the device may also take the form of a pen type case or a mouse type case, in addition to the electronic devices listed above.

A second aspect of the invention is an information inputting/outputting method by camera inputting comprising the steps of: printing on one surface of a printed material (5) a dot pattern portion (6) formed by arranging in accordance with a given rule dots generated by a dot code generating algorithm in order to recognize various kinds of multimedia information and an information transfer portion (7) which includes a text, an illustration or the like to be recognized as information content; capturing by a camera unit (2) only image data of the dot pattern portion (6) in the printed material (5) and digitalizing the image data into numeric values; and based on the numeric values, outputting information and a program corresponding to the dot pattern portion (6) from a storing portion (10) and executing the information and the program.
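
The distinction between outputting stored information and executing a stored program can be sketched in Python. The storing portion is modelled, as an assumption, as a dictionary whose entries are either plain text (information to output) or a zero-argument callable (a program to execute); `handle` is a hypothetical name and the numeric values are illustrative.

```python
from typing import Callable, Dict, List, Union

# An entry is either information to output or a program to execute.
Entry = Union[str, Callable[[], str]]


def handle(value: int, storing_portion: Dict[int, Entry], output: List[str]) -> None:
    """Output or execute whatever is registered for a digitalized dot value."""
    entry = storing_portion.get(value)
    if entry is None:
        output.append(f"unregistered value {value}")
    elif callable(entry):
        output.append(entry())  # execute the stored program and output its result
    else:
        output.append(entry)    # output the stored information directly


if __name__ == "__main__":
    store: Dict[int, Entry] = {1: "Once upon a time...", 2: lambda: "quiz started"}
    log: List[str] = []
    for v in (1, 2, 3):
        handle(v, store, log)
    print(log)
```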

The dot pattern portion (6) and the information transfer portion (7) comprising the text, the illustration or the like may be printed superimposed on the one surface.

The dot pattern portion (6) may be defined by x and y coordinate information and the x and y coordinate information may be associated with content of the information transfer portion (7).

The dot pattern portion (6) may be defined by code numeric information and the code numeric information may be associated with content of the information transfer portion (7).

The dot pattern portion (6) of the x and y coordinate information and the dot pattern portion (6) of the code numeric information are printed on a flat surface of the printed material (5).

The dot pattern portion (6) may be printed with an ink that absorbs infrared light, a carbon ink or a transparent ink.

When the camera unit (2) is used to capture image data of the dot pattern portion (6), the dot pattern portion (6) may be irradiated with infrared light.

Information to be output may be digital data including a voice, image, video and text code.

The configuration may be made to output a program in addition to the information of digital data.

The information transfer portion (7) to be printed on one surface together with the dot pattern portion (6) may be a text or an illustration.

The camera unit (2) may be an image pickup device such as a C-MOS camera or a CCD camera. Hereinafter, what is called "camera unit" may include any imaging means having such a configuration.

In addition, the camera unit (2) may be configured separately from the image processing portion (12), the storing portion (10), the processing portion (9) and the outputting portion (15), with data transmitted via an interface portion. In abstract terms, the interface portion may include both functional means such as an integrally formed CPU and sound source memory, and functional means such as a connector for exchanging data.

Alternatively, the camera unit (2) and the image processing portion (12) may be configured separately from the storing portion (10), the processing portion (9) and the outputting portion (15), with transmission and reception carried out via an interface portion. Communication with the interface portion may be realized by wired communication, radio communication including wireless LAN and Bluetooth, or optical communication such as infrared communication.

The printed material (5) on which the dot pattern portion (6) is printed may be attached to various mediums via an adhesive agent.

The storing portion (10) may store, in addition to information including a text, image and video, a program.

Such information and programs may be stored in the storing portion (10) via an inputting portion (17). Accordingly, a user can store any voice information in advance in association with a given dot pattern portion (6).

This inputting portion (17) may be a microphone or a line-in interface.

Further, the configuration may be made to mount a communication card (16). Then, the numeric data obtained by digitalizing a dot pattern (1) scanned by the camera unit (2) may be transmitted to a computer (23) such as a server via the communication card (16).

This configuration allows a huge amount of multimedia information to be stored on a server and various types of multimedia information to be reproduced via communication. More specifically, a network address (URL: Uniform Resource Locator) is defined on the dot pattern (1), the communication card (16) is used to establish communication with a TCP/IP communication network (the so-called Internet), and voice data stored at the network address may then be downloaded to the storing portion (10) and reproduced.
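
The download-then-reproduce flow can be sketched as below. The text says the URL itself is defined on the dot pattern; for brevity, this sketch simplifies that by assuming the decoded value selects a URL from a local table. The fetch function is injectable so that a stand-in can replace the network; `load_content`, `fetch_url` and the table layout are hypothetical, while `urllib.request.urlopen` is the standard-library call.

```python
import urllib.request
from typing import Callable, Dict


def fetch_url(url: str) -> bytes:
    """Default fetcher using the Python standard library."""
    with urllib.request.urlopen(url) as response:
        return response.read()


def load_content(code: int, url_table: Dict[int, str], storing_portion: Dict[str, bytes],
                 fetch: Callable[[str], bytes] = fetch_url) -> bytes:
    """Resolve a decoded value to a URL, download once, then reuse the local copy."""
    url = url_table[code]  # hypothetical: decoded value indexes a URL table
    if url not in storing_portion:
        storing_portion[url] = fetch(url)  # store the downloaded data locally
    return storing_portion[url]
```

Caching by URL means a picture book page that is scanned repeatedly triggers only one download.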

Here, other than the communication card (16), a GPS (Global Positioning System) receiver (24) may be further provided. This makes it possible to reproduce multimedia information based on position information together with content scanned from the dot pattern (1).

A third aspect of the invention is an information inputting/outputting device using a portable-phone camera, comprising: a camera unit (102) for scanning only image data of the dot pattern portion (6) printed on the printed material (5), the dot pattern portion (6) formed by arranging in accordance with a given rule dots generated by a dot code generating algorithm in order to recognize various kinds of information and an information transfer portion (7) which includes a text, an illustration or the like to be recognized as information content being printed on one surface of the printed material; an image processing portion (112) for digitalizing the image data into numeric values; an interface portion (119) for transferring the digitalized numeric information so as to output from a portable phone (110) and execute information and a program corresponding to the dot pattern portion (6).

Since such a camera equipped portable phone is used, the information reproducing device can be configured simply.

Such a camera equipped portable phone may be an information-processing-device-integrated portable phone such as an i-mode phone provided by NTT Docomo Inc. This information processing device includes a central processing unit, a storing device (memory), a liquid crystal display screen and the like. A program, voice data, video data and text data can be stored in the storing device (memory). The operating system (OS) mounted on the portable phone may be TRON, Symbian, Windows CE available from Microsoft Corporation, Linux, Palm OS or the like.

Such a camera equipped portable phone may be configured so that a memory card such as an SD card, a memory stick, a SIMM card can be mounted on the portable phone and further, content data is recorded in the memory card to be reproduced.

A fourth aspect of the invention is a portable electronic toy comprising: a voice storing portion (804) for storing voices corresponding to a dot pattern portion (803) formed on a medium (802) such as a book, a game card, a small article or a toy, the dot pattern portion (803) having numeric data or code information recorded thereon in order to recognize various voices; a camera (810) for capturing image data of the dot pattern portion (803); a processing portion (806) for processing the image data captured by the camera (810), reading voice data corresponding to the numeric data out of the voice storing portion (804) and outputting the voice data through a speaker (805); and a case main body (808) for housing the voice storing portion (804), the speaker (805) and the processing portion (806).

This case main body (808) may be configured to be of organizer size. Besides, the case main body (808) may be provided with an LC display (812).

Further, the dot pattern portion (803) can be printed on a versus game card. Or, the dot pattern portion (803) may be formed on a miniature figure (hereinafter referred to as "mini figure") of an animation character on sale in convenience stores and the like as a candy toy or a seal on which the dot pattern portion (803) is printed may be attached to such a mini figure.

Furthermore, to allow intercommunication between plural portable electronic toys (821), a connector for a connection cable may be provided on the case main body (823). The connector may be a USB connector or any connector conforming to IEEE 1394. Communication may also be performed by Bluetooth, wireless LAN or infrared data communication.

A fifth aspect of the invention provides a configuration with an information outputting function by camera inputting, in a figure (218) of a given shape, the configuration comprising: a camera (202) for scanning only image data of a dot pattern portion (6) printed on a printed material (5), the dot pattern portion (6) formed by arranging in accordance with a given rule dots generated by a dot code generating algorithm in order to recognize information and an information transfer portion (7) which includes a text, an illustration or the like to be recognized as information content being printed on one surface of the printed material; an image processing portion (212) for digitalizing the image data into numeric values; and a processing portion (209) and an outputting portion (215) for outputting and executing information and a program of a storing portion (210) corresponding to the dot pattern portion (6) based on the numeric values processed by the image processing portion (212).

Besides, a speaker (214) may be provided as an outputting portion (215) to output a voice.

Further, the storing portion (210) may be configured to store information and a program from the outside by use of a microphone (217).

Further, the figure (218) may be configured to be a stuffed toy (231) made by stuffing an elastic material in an outer skin of a predetermined shape.

Furthermore, the configuration with an information outputting function by camera inputting may include, in a figure (218): a camera unit (A) including a camera (202) for capturing only image data of a dot pattern portion (6) printed on a printed material (5), the dot pattern portion (6) being formed by arranging, in accordance with a given rule, dots generated by a dot code generating algorithm in order to recognize information, and an information transfer portion (7), which includes a text, an illustration or the like to be recognized as information content, being printed on one surface of the printed material, and a main processor (209) for digitalizing the image data into numeric values; an outputting unit (B) including a processing portion (9) and an outputting portion (15) for outputting and executing information and a program of a storing portion (10) corresponding to the dot pattern portion (6) based on the numeric values processed by the image processing portion (12) in the camera unit (A); and an interface portion for mediating communication between the camera unit (A) and the outputting unit (B).

BRIEF DESCRIPTION OF THE DRAWINGS

FIGS. 1(a) and 1(b) are block diagrams each for illustrating a configuration of an information reproducing method using a dot pattern according to the present invention, and more specifically, FIG. 1(a) is an explanatory view of dot code generation and FIG. 1(b) of dot pattern recognition.

FIG. 2 is an elevation view illustrating an example of the dot pattern.

FIG. 3 is a functional block diagram for explaining a state of a picture-book and the information reproducing method.

FIGS. 4(a) and 4(b) are block diagrams each illustrating another configuration of the information reproducing method using a dot pattern, and specifically, FIG. 4(a) is an explanatory view of dot code generation while FIG. 4(b) is of dot pattern recognition.

FIG. 5 is an elevation view illustrating another example of dot pattern.

FIG. 6 is an elevation view illustrating another example of dot pattern.

FIG. 7 is an elevation view illustrating another example of dot pattern.

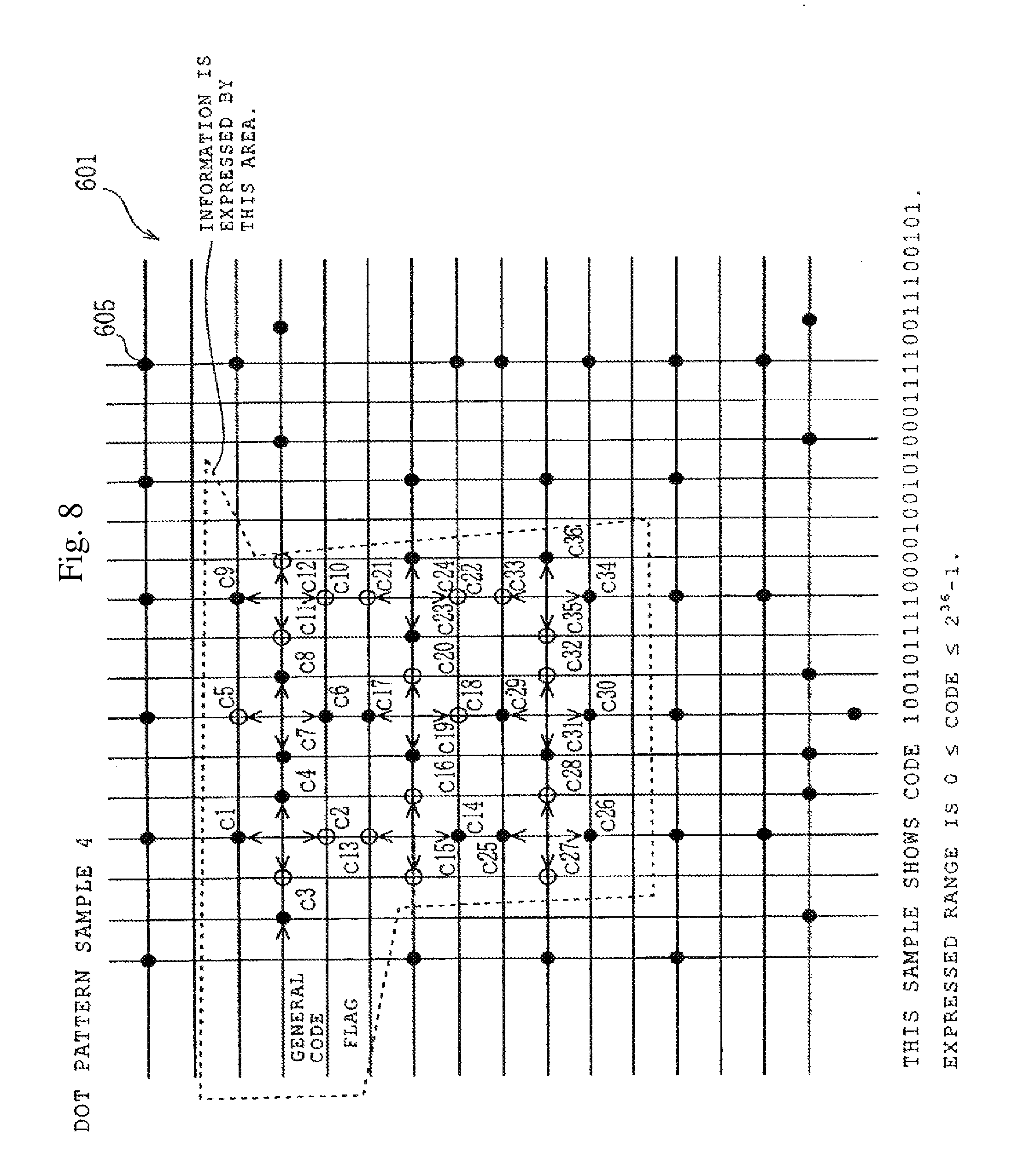

FIG. 8 is an elevation view illustrating another example of dot pattern.

FIG. 9 is an elevation view illustrating an example of a picture book on which a picture and the text of a story are printed.

FIG. 10 is an elevation view illustrating another example of a picture book on which pictures and story texts are printed.

FIG. 11 is an elevation view illustrating still another example of a picture book on which pictures and story texts are printed.

FIG. 12 is a perspective view for explaining a touch panel on which a dot pattern portion is formed.

FIG. 13 is an exploded side view for explaining a touch panel on which a dot pattern portion is formed.

FIG. 14 is a cross sectional view for explaining another embodiment including a mouse pad on which a dot pattern portion is formed and a mouse type camera.

FIG. 15 is a plan view illustrating a mouse type camera.

FIGS. 16(a) and 16(b) are views each illustrating another embodiment of the mouse type camera, and more specifically, FIG. 16(a) is a plan view and FIG. 16(b) is a side view.

FIGS. 17(a) and 17(b) are views each illustrating yet another embodiment of the mouse type camera, and more specifically, FIG. 17(a) is a plan view and FIG. 17(b) is a side view.

FIG. 18 is a cross sectional view illustrating another embodiment of using as a tablet a printed surface on which a dot pattern portion is formed.

FIG. 19 is a cross sectional view illustrating another embodiment in which a camera is mounted at an end of a pen member.

FIG. 20 is a cross sectional view illustrating yet another embodiment in which a camera is mounted at an end of a pen member.

FIGS. 21(a) and 21(b) are block diagrams each illustrating a configuration of an information inputting/outputting method by camera inputting according to the invention, and specifically, FIG. 21(a) is an explanatory view of dot code generation and FIG. 21(b) of dot pattern recognition.

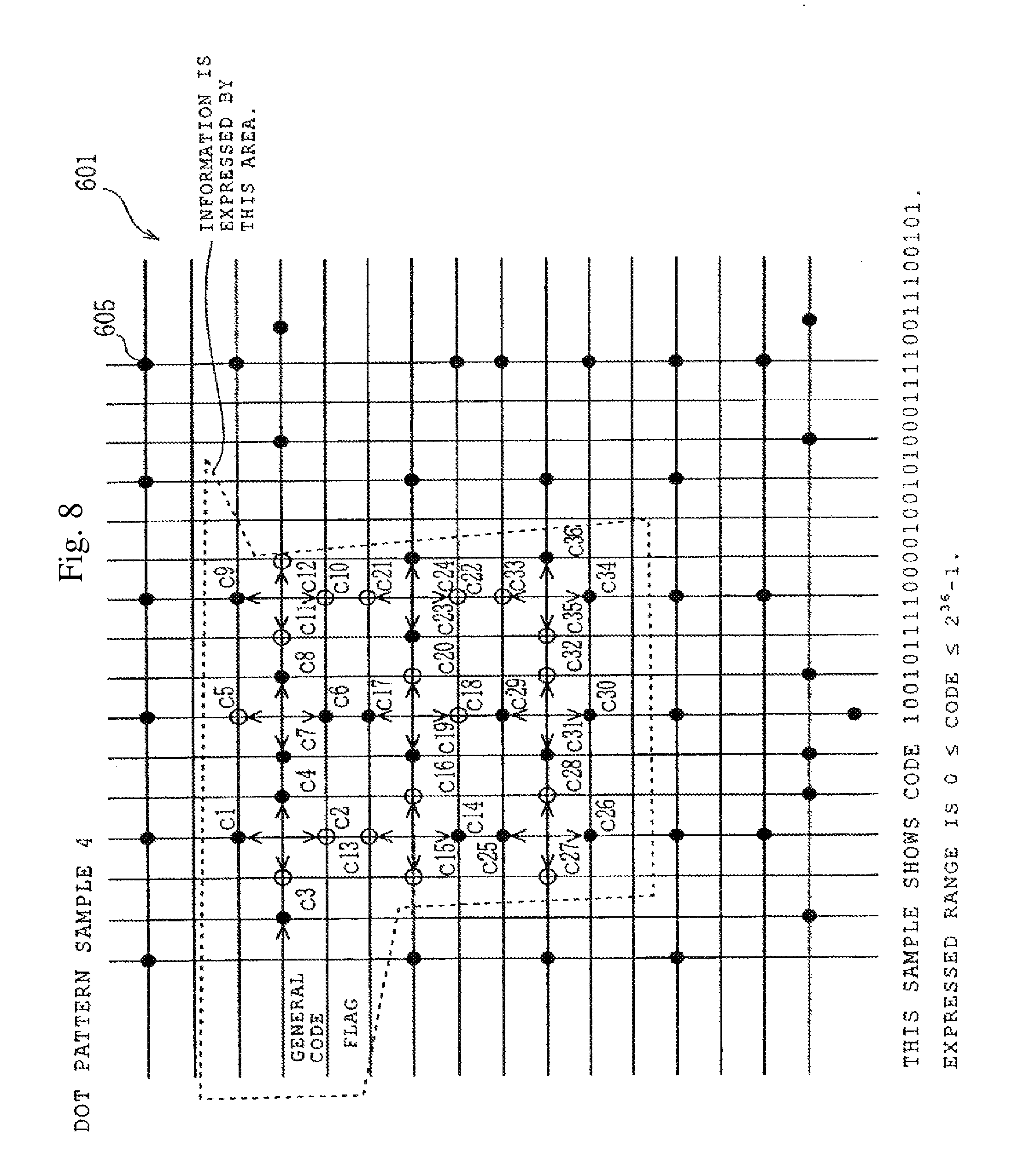

FIG. 22 is an elevation view illustrating an example of a dot pattern.

FIG. 23 is an explanatory view for showing a dot pattern formed of x and y coordinates information.

FIG. 24 is a view for explaining a method of recognizing and processing a dot pattern formed of x and y coordinates information.

FIG. 25 is an explanatory view for showing a dot pattern formed of code numeric information.

FIG. 26 is a view for explaining a method of recognizing and processing a dot pattern formed of code numeric information.

FIG. 27 is an explanatory view explaining how, by irradiating a printed material with infrared light, a camera scans only the image data of a dot pattern portion printed with a carbon ink, separately from an information transfer portion, which includes text and figures, printed with a non-carbon color ink.

FIG. 28 is a functional block diagram for explaining an embodiment of a portable information inputting/outputting device using an information inputting/outputting method by camera inputting.

FIG. 29 is a functional block diagram for explaining an embodiment of a portable information inputting/outputting device using an information inputting/outputting method by camera inputting.

FIG. 30 is a perspective view showing a portable information inputting/outputting device which is housed in a compact case.

FIGS. 31(a) to 31(d) are views each showing a portable information inputting/outputting device which is housed in a compact case of another shape, and more specifically, FIG. 31(a) is a perspective view showing the whole case, FIG. 31(b) is a plan view, FIG. 31(c) is a side view and FIG. 31(d) is an elevation view.

FIG. 32 is a functional block diagram for explaining an embodiment in which a camera unit and an outputting portion are configured separately.

FIG. 33 is a functional block diagram for explaining an embodiment in which a camera unit and an outputting portion are configured separately.

FIG. 34 is a perspective view showing a device in which a camera unit portion and an outputting-side main body are separated.

FIGS. 35(a) to 35(d) are perspective views each showing another form of a camera unit portion, and more specifically, FIG. 35(a) shows a pen type camera unit, FIG. 35(b) shows a pen type camera unit, FIG. 35(c) shows a mouse type camera unit and FIG. 35(d) shows a stethoscope type camera unit.

FIG. 36 is a functional block diagram for explaining an embodiment of an information inputting device using a camera for portable phone.

FIG. 37 is a functional block diagram for explaining an embodiment of an information inputting device using a camera.

FIG. 38 is an explanatory view showing an information inputting device using a camera for portable phone.

FIG. 39 is an explanatory view showing an information inputting device using a camera for portable phone.

FIG. 40 is an explanatory view for showing a portable phone in which an information inputting device is integrated.

FIG. 41 is a functional block diagram of a portable electronic device according to the embodiment of using a dot pattern portion.

FIG. 42 is an elevation view for showing an embodiment of a portable electronic device using a dot pattern portion.

FIG. 43 is a right side view showing a portable electronic toy.

FIG. 44 is a left side view showing a portable electronic toy.

FIG. 45 is a bottom view showing a portable electronic toy.

FIG. 46 is a perspective view for showing an embodiment of a portable electronic toy which emits a voice mainly corresponding to a mini figure.

FIG. 47 is a functional block diagram of a portable electronic toy according to the embodiment.

FIG. 48 is a perspective view showing a plurality of voice emitting toys being connected to a controller unit.

FIG. 49 is an elevation view showing an embodiment of a portable electronic toy which utilizes optical character recognition (OCR).

FIG. 50 is a functional block diagram of a portable electronic toy showing an embodiment which utilizes a magnetic member.

FIG. 51 is a functional block diagram of a portable electronic toy showing an embodiment which utilizes a shooting pen of a camera or the like.

FIG. 52 is a functional block diagram for showing a figure unit having an information outputting function by camera inputting, in which a camera unit and an outputting unit are configured separately.

FIG. 53 is a functional block diagram for explaining a modified example of the embodiment.

FIG. 54 is a functional block diagram for showing a figure unit having an information outputting function by camera inputting, in which a camera unit and an outputting unit are configured separately.

FIG. 55 is a functional block diagram for explaining a modified example of the embodiment.

FIGS. 56(a) to 56(d) are perspective views each showing a figure with a camera unit and more specifically, FIG. 56(a) is a view of a doll, FIG. 56(b) is of a soccer ball, FIG. 56(c) is of a bicycle and FIG. 56(d) is of an animal.

FIG. 57 is a perspective view showing figure units put on the center battle stage of a new simulation board game.

FIG. 58 is a cross sectional view for explaining another embodiment of the invention in which a camera unit and an outputting unit are housed in a stuffed toy that is one form of the figure.

FIG. 59 is a cross sectional view for explaining another embodiment in which a camera unit and an outputting unit are housed in a stuffed toy that is one form of the figure.

FIG. 60 is a cross sectional view for explaining another embodiment of the invention in which a camera unit and an outputting unit are housed in a stuffed toy that is one form of the figure.

FIG. 61 is a cross sectional view of a camera.

FIG. 62 is a view for explaining an image pickup area of a camera.

FIG. 63 is a perspective view for showing information dots of four blocks.

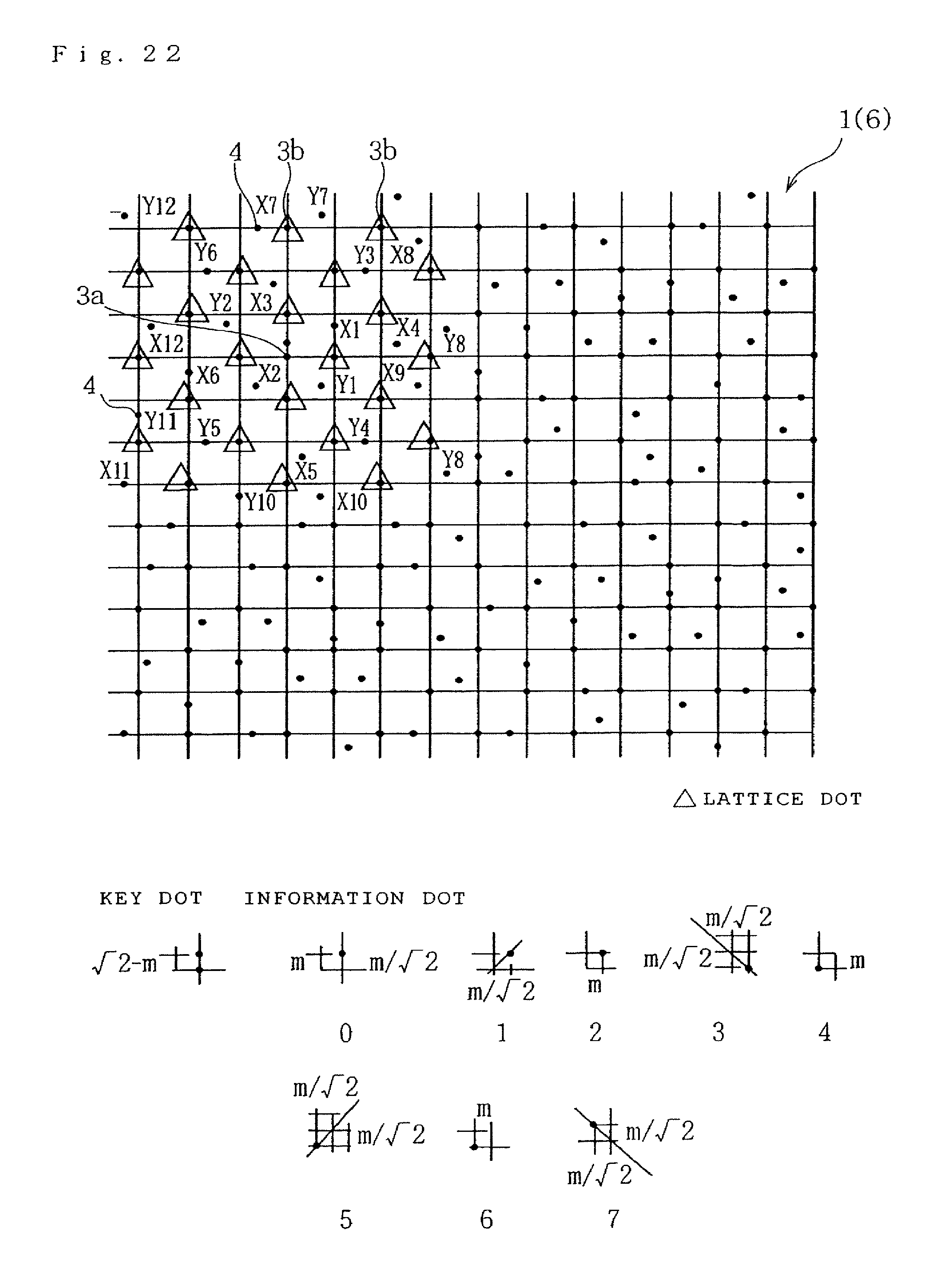

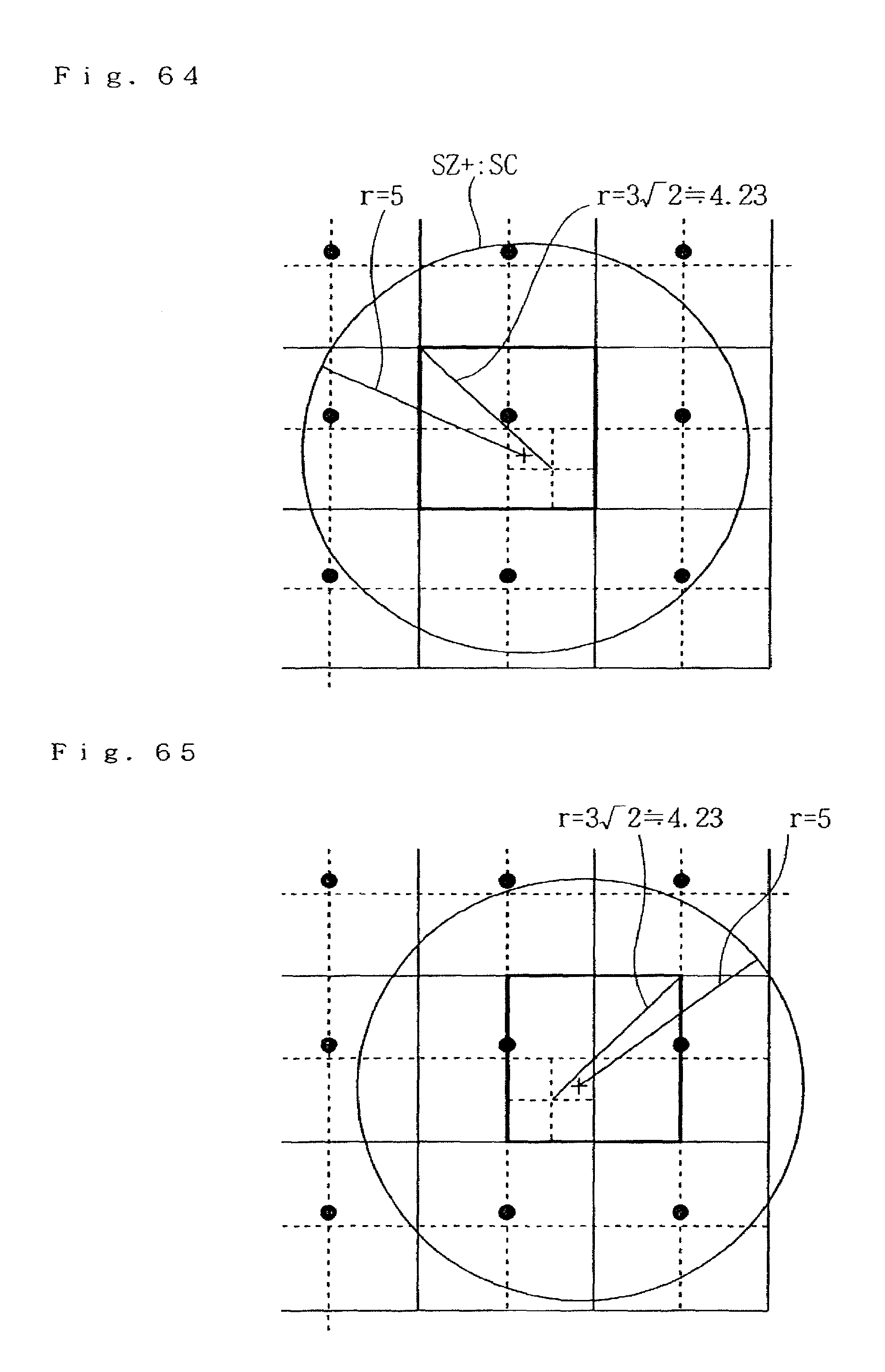

FIG. 64 is a view for explaining the image-pickup center position of a camera and the input procedure of a sub block.

FIG. 65 is a view for explaining the image-pickup center position of a camera and the input procedure of a sub block.

FIG. 66 is a view for explaining the image-pickup center position of a camera and the input procedure of a sub block.

FIG. 67 is a view for explaining the image-pickup center position of a camera and the input procedure of a sub block.

FIG. 68 is a view for explaining a configuration of a pen type scanner.

FIG. 69 is a view illustrating an example of use of the embodiment.

FIG. 70 is a view illustrating an example of use of the embodiment.

FIG. 71 is a view illustrating an example of use of the embodiment.

FIG. 72 is a view illustrating an example of use of the embodiment.

FIG. 73 is a view illustrating an example of use of the embodiment.

FIG. 74 is a view illustrating an example of use of the embodiment.

FIG. 75 is a view illustrating an example of use of the embodiment.

FIG. 76 is a view illustrating an example of use of the embodiment.

FIG. 77 is a view illustrating an example of use of the embodiment.

FIG. 78 is a view illustrating an example of use of the embodiment.

FIG. 79 is a view illustrating an example of use of the embodiment.

FIG. 80 is a view illustrating an example of use of the embodiment.

FIG. 81 is a view illustrating an example of use of the embodiment.

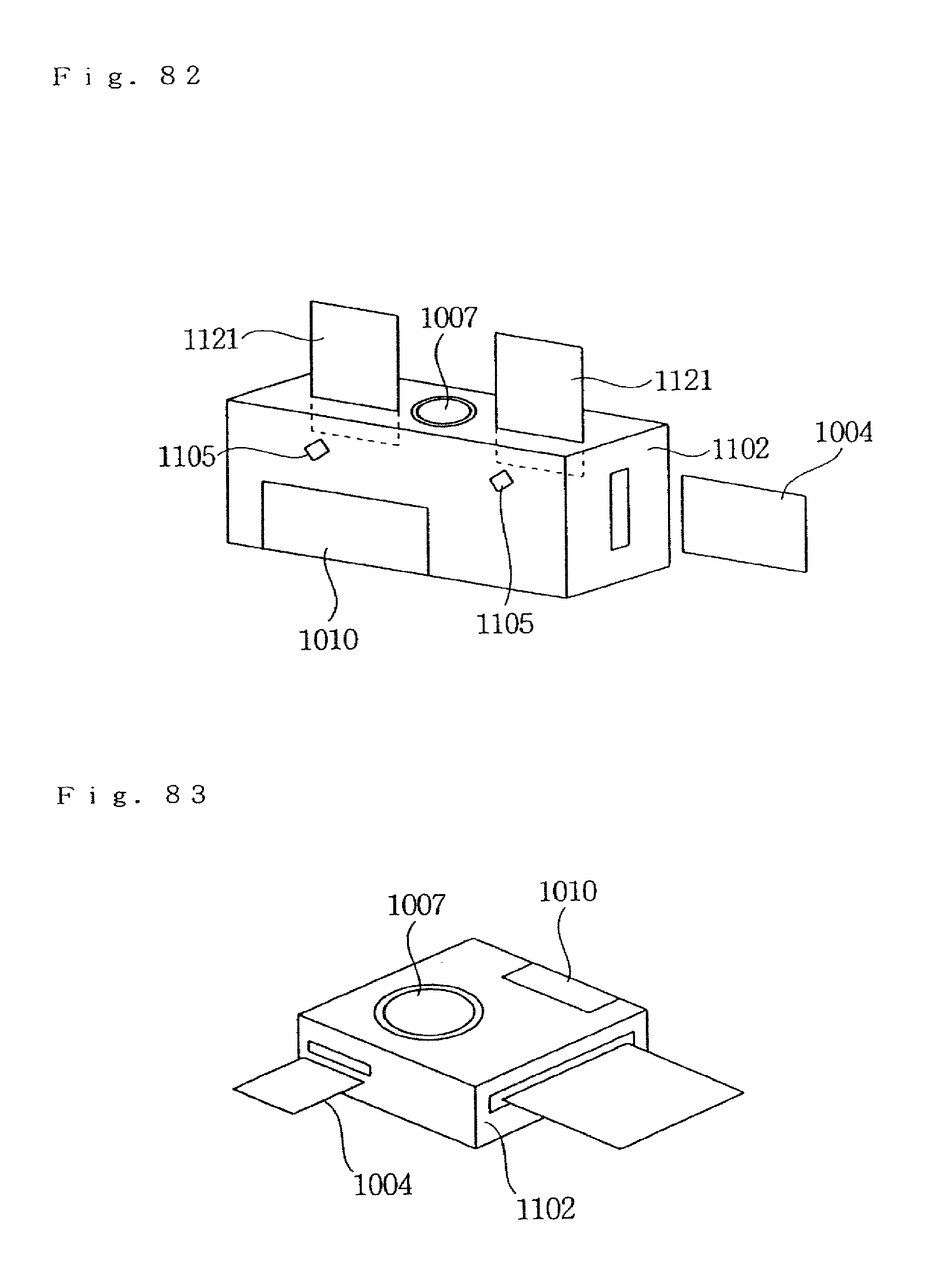

FIG. 82 is a view illustrating an example of use of the embodiment.

FIG. 83 is a view illustrating an example of use of the embodiment.

FIG. 84 is a view illustrating an example of use of the embodiment.

FIG. 85 is a view illustrating an example of use of the embodiment.

FIG. 86 is a view illustrating an example of use of the embodiment.

FIG. 87 is a view illustrating an example of use of the embodiment.

FIG. 88 is a view illustrating an example of use of the embodiment.

FIG. 89 is a view illustrating an example of use of the embodiment.

FIG. 90 is a view illustrating an example of use of the embodiment.

FIG. 91 is a view illustrating an example of use of the embodiment.

FIG. 92 is a view illustrating an example of use of the embodiment.

FIG. 93 is a view illustrating an example of use of the embodiment.

FIG. 94 is a view illustrating an example of use of the embodiment.

FIG. 95 is a view illustrating an example of use of the embodiment.

FIG. 96 is a view illustrating an example of use of the embodiment.

FIG. 97 is a view illustrating an example of use of the embodiment.

FIG. 98 is a view illustrating an example of use of the embodiment.

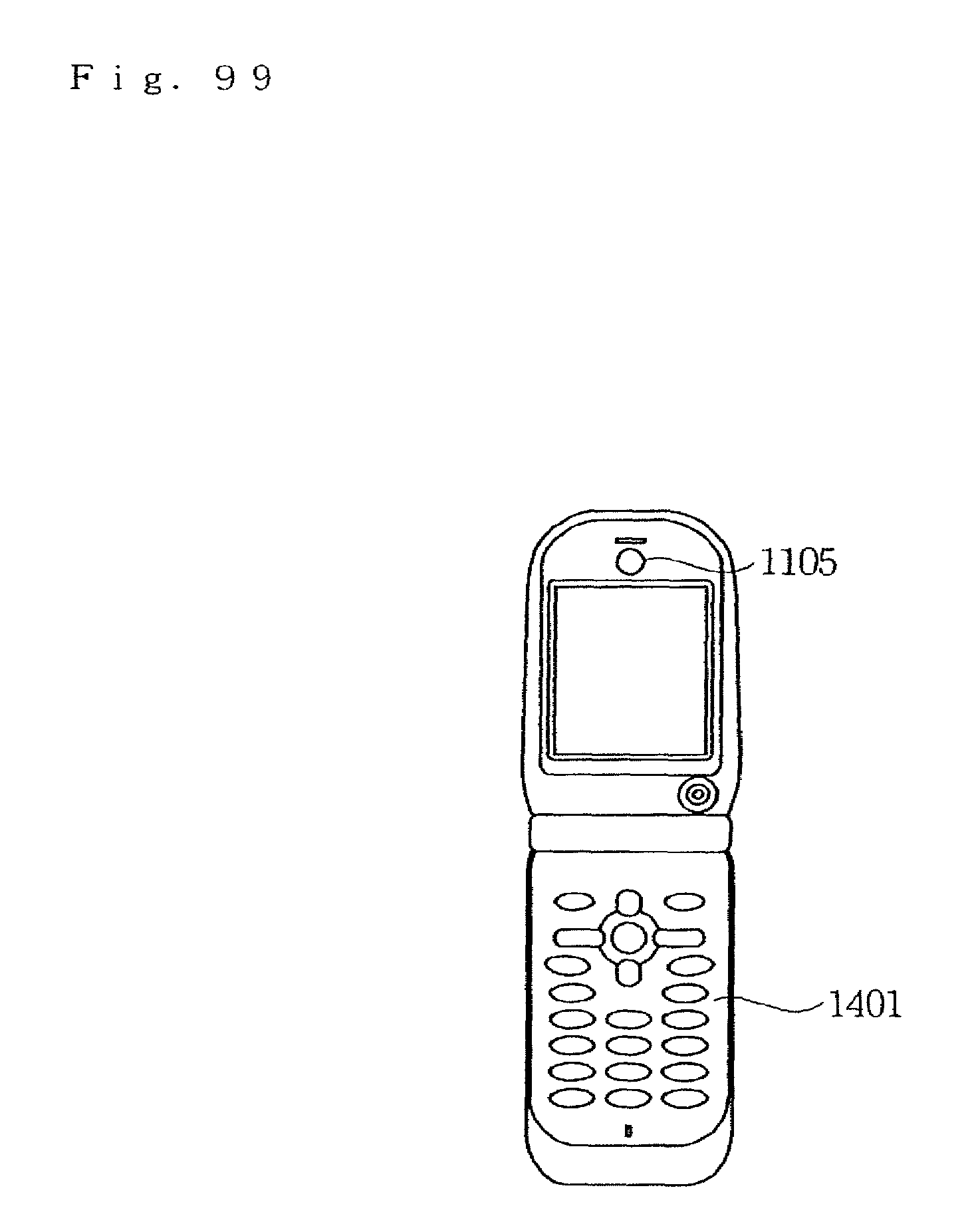

FIG. 99 is a view illustrating an example of use of the embodiment.

FIG. 100 is a view illustrating an example of use of the embodiment.

FIG. 101 is a view illustrating an example of use of the embodiment.

FIG. 102 is a view illustrating an example of use of the embodiment.

FIG. 103 is an explanatory view (1) of specifications of dot pattern according to an embodiment.

FIG. 104 is an explanatory view (2) of specifications of dot pattern according to the embodiment.

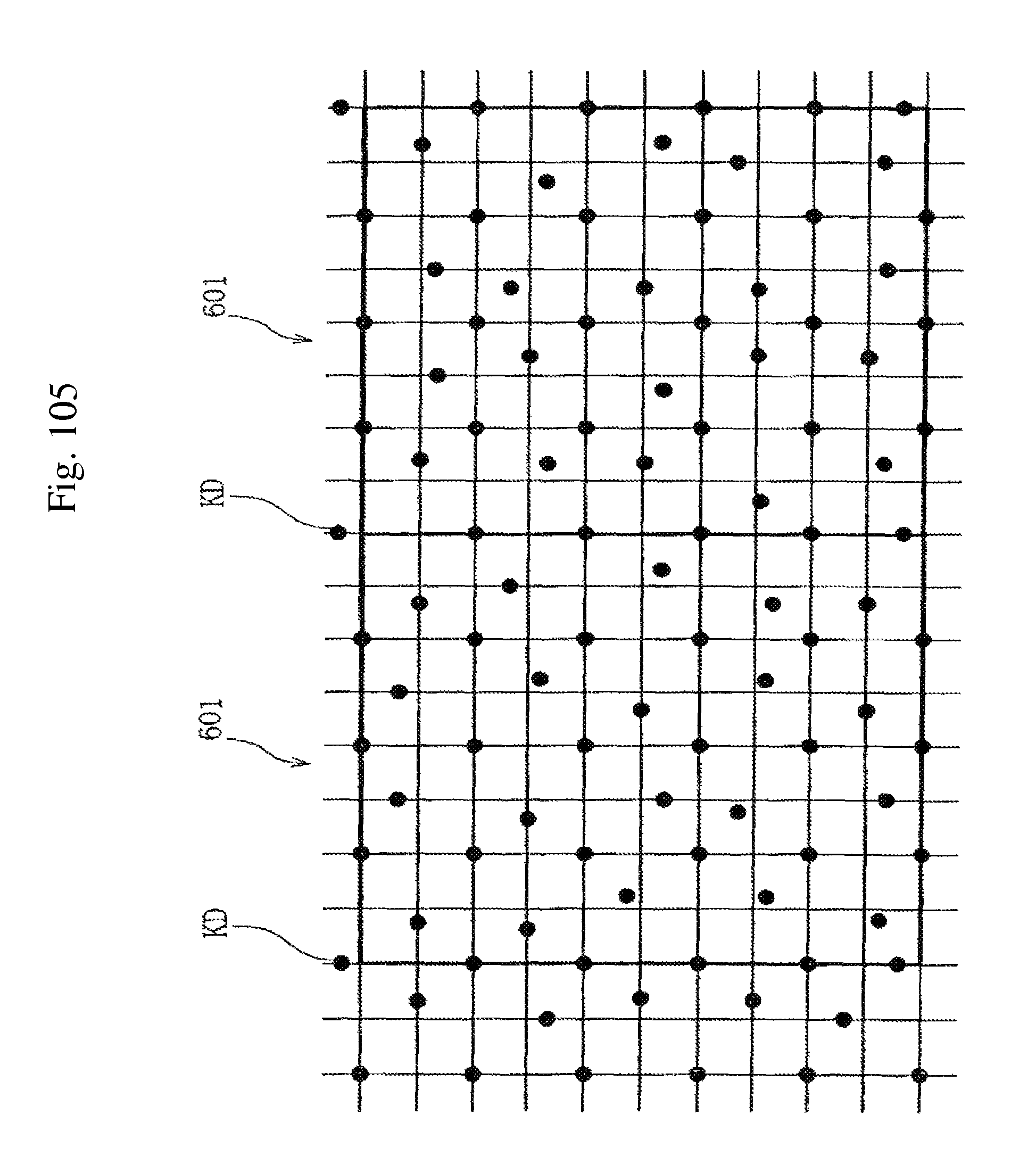

FIG. 105 is an explanatory view (3) of specifications of dot pattern according to the embodiment.

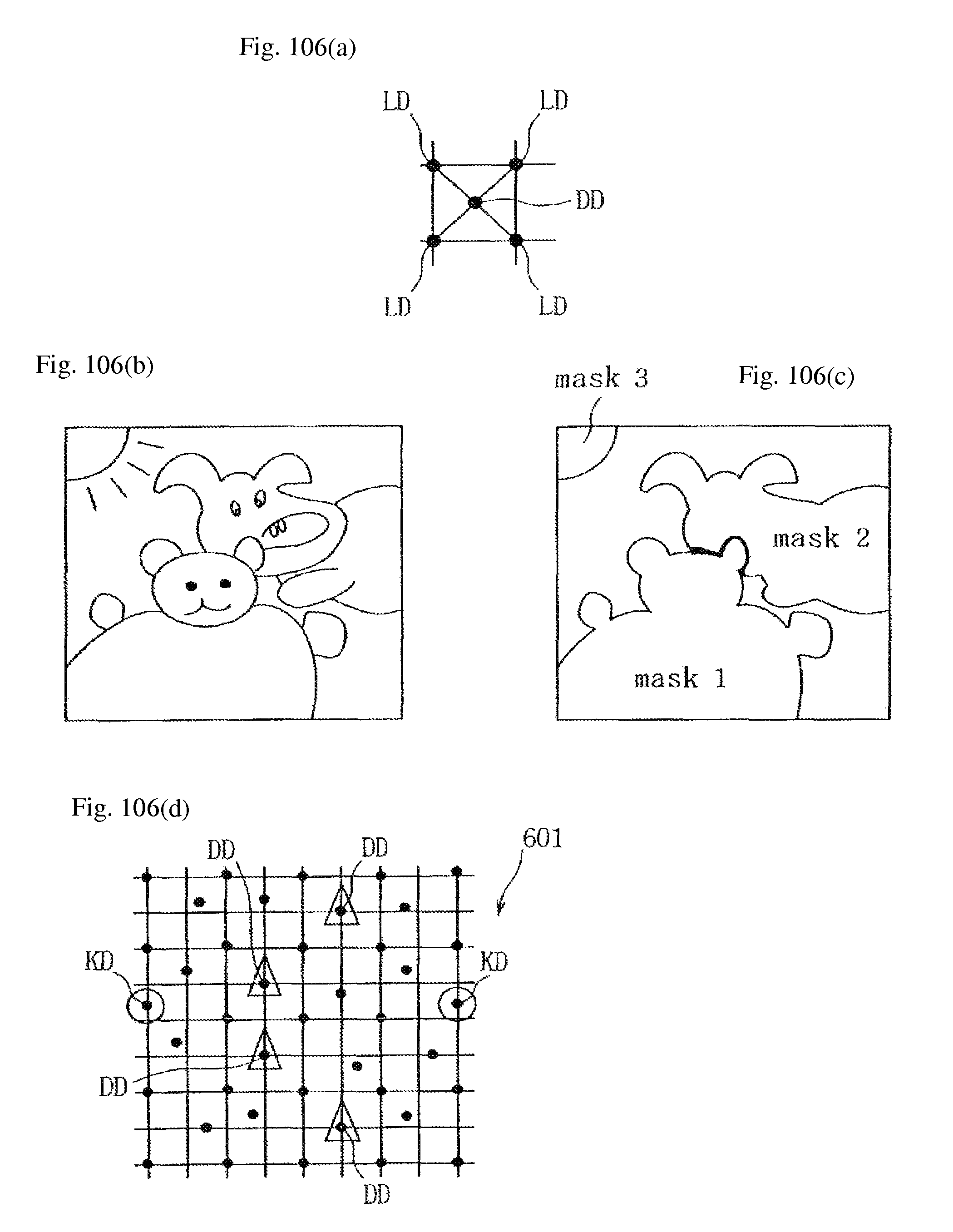

FIGS. 106(a) to 106(d) are explanatory views (4) of specifications of dot pattern according to the embodiment.

FIG. 107 is a view (1) for explaining a device configuration of means for scanning a dot pattern portion according to the embodiment.

FIG. 108 is a view (2) for explaining a device configuration of means for scanning a dot pattern portion according to the embodiment.

FIG. 109 is a view (3) for explaining a device configuration of means for scanning a dot pattern portion according to the embodiment.

FIG. 110 is a view (4) for explaining a device configuration of means for scanning a dot pattern portion according to the embodiment.

FIG. 111 is a view (5) for explaining a device configuration of means for scanning a dot pattern portion according to the embodiment.

FIG. 112 is a view (6) for explaining a device configuration of means for scanning a dot pattern portion according to the embodiment.

FIG. 113 is a view (7) for explaining a device configuration of means for scanning a dot pattern portion according to the embodiment.

BEST MODE FOR CARRYING OUT THE INVENTION

FIGS. 1(a) and 1(b) are block diagrams each showing a configuration of an information reproducing method using a dot pattern of the present invention and specifically, FIG. 1(a) explains generation of a dot code and FIG. 1(b) explains recognition of a dot pattern. FIG. 2 is an elevation view illustrating an example of a dot pattern and FIG. 3 is a functional block diagram for explaining a state of a picture book and the information reproducing method.

The information reproducing method using a dot pattern of the invention includes generation of a dot pattern 601, recognition of the dot pattern 601 and reproduction of voice information corresponding to the dot pattern 601. Specifically, image data of the dot pattern 601 is captured by a camera 602 serving as scanning means, distortion in the image is corrected, the image is converted into numeric values (digitized), the digitized values are separated into those of a first direction 603 and those of a second direction 604, the position is read, and voice information corresponding to the dot pattern 601 is then reproduced on a personal computer (hereinafter referred to as "PC") 608, a PDA, a portable phone or the like.

The camera 602 of FIG. 3 is a pen-type scanner in which an image pickup device such as a CCD or C-MOS sensor is integrated. However, the camera can also be implemented as a digital camera or as a camera mounted on a mobile terminal such as a portable phone, as described later.

The dot pattern 601 according to the invention is generated as follows: in order to recognize voice information, small dots 605 are arranged by a dot code generating algorithm along a first direction line 603 in accordance with a predetermined rule, and along a second direction line 604 perpendicular to the first direction line 603 in accordance with a predetermined rule. A mapping table is also generated in a memory in the PC 608 or in a memory provided in the camera 602. The first direction line 603 and the second direction line 604 need not be perpendicular to each other; they may instead cross at an angle of, for example, 60 degrees.
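The generation step and its mapping table can be illustrated with a minimal Python sketch. The description does not spell out the dot code generating algorithm at this point, so the rule below is a hypothetical stand-in: each of `n_slots` candidate positions between two direction lines either carries a dot (1) or not (0), following the binary digits of the value. `generate_pattern` and `build_mapping_table` are illustrative names, not part of the disclosure.

```python
from typing import Dict, Tuple

# A digitized band of dot information: 1 = dot present at that slot, 0 = absent.
Pattern = Tuple[int, ...]


def generate_pattern(value: int, n_slots: int) -> Pattern:
    """Hypothetical rule: slot i carries a dot if and only if bit i of value is set."""
    if not 0 <= value < 2 ** n_slots:
        raise ValueError("value does not fit in n_slots")
    return tuple((value >> i) & 1 for i in range(n_slots))


def build_mapping_table(n_slots: int) -> Dict[Pattern, int]:
    """Mapping table from dot pattern back to numeric value, kept in PC or camera memory."""
    return {generate_pattern(v, n_slots): v for v in range(2 ** n_slots)}


table = build_mapping_table(4)
print(generate_pattern(5, 4))         # (1, 0, 1, 0)
print(table[generate_pattern(5, 4)])  # 5
```

A practical rule would probably avoid patterns that are easily confused under noise; a plain binary rule is used here only so the table is easy to check by hand.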

Recognition of the dot pattern 601 includes correction of distortion caused by the lens of the camera 602, correction of distortion caused by tilt of the camera 602, reproduction of numeric information in the first direction 603 and reproduction of numeric information in the second direction 604. The image data of the dot pattern 601 is captured by the camera 602, which has an image pickup device such as a C-MOS or CCD sensor. The pen-type scanner may be replaced by a portable phone equipped with a camera or by a camera connected to a portable phone. In that case, a JAVA program or the like downloaded into the memory of the portable phone controls the phone so that the voice is reproduced directly. The image data captured by the camera 602 is processed by an image processing algorithm to extract the dots 605, which are then corrected by a distortion correcting algorithm for the distortion caused by the lens of the camera 602. Distortion caused by tilt of the camera 602 with respect to the dot pattern 601 is also corrected.
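A single-coefficient radial model is one common way to describe lens distortion. The description does not say which model its distortion correcting algorithm uses, so the sketch below is an assumption: it shows how extracted dot centres could be mapped back to undistorted positions. The coefficient `k1`, the optical centre and the function names are hypothetical.

```python
from typing import Tuple

Point = Tuple[float, float]


def distort(p: Point, center: Point, k1: float) -> Point:
    """Assumed forward lens model: offset from center is scaled by (1 + k1 * r_u^2)."""
    dx, dy = p[0] - center[0], p[1] - center[1]
    s = 1.0 + k1 * (dx * dx + dy * dy)
    return (center[0] + dx * s, center[1] + dy * s)


def undistort(p: Point, center: Point, k1: float, iterations: int = 20) -> Point:
    """Invert the model by fixed-point iteration (adequate when k1 * r^2 is small)."""
    xd, yd = p[0] - center[0], p[1] - center[1]
    xu, yu = xd, yd
    for _ in range(iterations):
        s = 1.0 + k1 * (xu * xu + yu * yu)
        xu, yu = xd / s, yd / s
    return (center[0] + xu, center[1] + yu)


# Correct every extracted dot centre before the pattern is decoded.
center, k1 = (320.0, 240.0), -1e-7  # hypothetical calibration values
observed = [distort((600.0, 400.0), center, k1), distort((100.0, 50.0), center, k1)]
corrected = [undistort(p, center, k1) for p in observed]
```

Here `observed` is synthesized from known points only so that `corrected` can be checked against them; in use, the input would be the dot centres found in the captured image.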

The image data captured by the camera 602 is processed by a CPU (central processing unit) of the PC 608 using a predetermined image processing algorithm to extract the dots 605. Because distortion caused by the camera 602 itself is corrected by the distortion correcting algorithm, accurate recognition is possible even when image data of the dot pattern 601 is captured by an ordinary camera 602 whose lens has a high distortion factor. Likewise, even when the dot pattern 601 is tilted with respect to the screen while being scanned by the camera 602, it can be captured accurately.
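Tilt of the camera relative to the printed surface turns the regular dot grid into a general quadrilateral in the image. One standard way to undo this, assumed here rather than taken from the description, is a planar homography estimated from four reference dots (for example, dots located on the extracted lines). The sketch solves for the eight unknowns with plain Gaussian elimination; all names and coordinates are hypothetical.

```python
from typing import List, Sequence, Tuple

Point = Tuple[float, float]
Matrix = List[List[float]]


def _solve(a: Matrix, b: List[float]) -> List[float]:
    """Solve a x = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    m = [row[:] + [b[i]] for i, row in enumerate(a)]
    for col in range(n):
        piv = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[piv] = m[piv], m[col]
        if abs(m[col][col]) < 1e-12:
            raise ValueError("degenerate reference points")
        for r in range(col + 1, n):
            f = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= f * m[col][c]
    x = [0.0] * n
    for i in range(n - 1, -1, -1):
        s = sum(m[i][j] * x[j] for j in range(i + 1, n))
        x[i] = (m[i][n] - s) / m[i][i]
    return x


def homography(src: Sequence[Point], dst: Sequence[Point]) -> Matrix:
    """3x3 matrix H (with h33 = 1) mapping four src points onto four dst points."""
    a: Matrix = []
    b: List[float] = []
    for (x, y), (u, v) in zip(src, dst):
        a.append([x, y, 1.0, 0.0, 0.0, 0.0, -u * x, -u * y])
        b.append(u)
        a.append([0.0, 0.0, 0.0, x, y, 1.0, -v * x, -v * y])
        b.append(v)
    h = _solve(a, b) + [1.0]
    return [h[0:3], h[3:6], h[6:9]]


def apply(h: Matrix, p: Point) -> Point:
    """Map a point through H, including the projective division by w."""
    x, y = p
    w = h[2][0] * x + h[2][1] * y + h[2][2]
    return ((h[0][0] * x + h[0][1] * y + h[0][2]) / w,
            (h[1][0] * x + h[1][1] * y + h[1][2]) / w)


# Four reference dots as seen by a tilted camera, and where they belong on the page.
seen = [(10.0, 5.0), (90.0, 12.0), (95.0, 98.0), (3.0, 90.0)]
ideal = [(0.0, 0.0), (100.0, 0.0), (100.0, 100.0), (0.0, 100.0)]
rectify = homography(seen, ideal)
```

Every other extracted dot can then be passed through `apply(rectify, dot)` before the lines are read.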

Numeric information in the first direction 603 is reproduced as follows: two lines in the first direction 603 are extracted, the dot information between the two lines is digitized, its pattern is recognized by a pattern recognizing algorithm, and the numeric information in the first direction 603 is reproduced using a mapping table. If a line cannot be read accurately because of smudges or noise, the next line is extracted and the same processing is performed. This is recorded as numeric correction information, which is used for correction when the numeric information is reproduced.

Numeric information in the second direction 604 is reproduced as follows: two lines in the second direction 604 are extracted, the dot information between the two lines is digitized, its pattern is recognized by a pattern recognizing algorithm, and the numeric information in the second direction 604 is reproduced using a mapping table. If a line cannot be scanned accurately because of smudges or noise, the next line is extracted and the same processing is performed. This is recorded as numeric correction information, which is used for correction when the numeric information is reproduced.
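The fallback described for both directions can be sketched as follows, assuming that the same value is repeated in successive bands between lines so that a later band can stand in for an unreadable one. `decode_direction` is a hypothetical name, and the list of skipped bands is only one possible form of the "numeric correction information".

```python
from typing import Dict, List, Optional, Sequence, Tuple

Pattern = Tuple[int, ...]


def decode_direction(
    bands: Sequence[Optional[Pattern]], table: Dict[Pattern, int]
) -> Tuple[Optional[int], List[int]]:
    """Decode one direction from the dot bands between successive lines.

    bands[i] is the digitized dot information between line i and line i + 1,
    or None when smudges or noise made that band unreadable. A band whose
    pattern is missing from the mapping table is treated as unreadable too.
    Returns the decoded value (or None) and the indices of skipped bands.
    """
    skipped: List[int] = []
    for i, band in enumerate(bands):
        if band is not None and band in table:
            return table[band], skipped
        skipped.append(i)  # record for later correction, then try the next line
    return None, skipped


table = {(1, 0, 1): 5, (0, 1, 1): 6}
x_value, x_skipped = decode_direction([None, (1, 1, 1), (1, 0, 1)], table)
y_value, y_skipped = decode_direction([(0, 1, 1)], table)
```

Running the same function on the first-direction and second-direction bands yields the pair of values from which the position is read.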

The aforementioned dot pattern 601 is configured as a dot pattern portion 607 printed on a printed material 606 such as a picture book or a textbook. An image of this dot pattern portion 607 is captured by the camera 602, the corresponding voice information is read from a memory based on numeric data extracted from the image data, and the corresponding voice or music is reproduced by output means such as a speaker 9 of the PC 608, a PDA or a portable phone.

FIGS. 4(a) and 4(b) are block diagrams each illustrating another configuration of an information reproducing method using a dot pattern; more specifically, FIG. 4(a) is a view for explaining generation of a dot code and FIG. 4(b) is a view for explaining recognition of a dot pattern. FIGS. 5 through 8 are elevation views each showing another example of a dot pattern.

As mentioned above, image data captured by the camera 602 is processed by an image processing algorithm to extract dots 5. Since distortion caused by the camera 602 and distortion due to tilt of the camera 602 are corrected by the distortion correcting algorithm, the dot pattern 601 can be recognized accurately when it is captured.

In recognition of the dot pattern, a line composed of successive, equally spaced dots 5 is first extracted, and it is determined whether or not the extracted line is correct. If it is not, another line is extracted.

Next, the extracted line is taken as a horizontal line. This horizontal line is used as a basis to extract a line extending vertically from it. A vertical line starts from a dot that lies on the horizontal line, and the vertical direction is recognized from the fact that the next dot or the third dot is not on the line.

Finally, an information area is extracted and information thereof is converted into numeric values to reproduce this numeric information.
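The line search in these steps can be sketched in brute-force Python under stated assumptions: dot centres have already been extracted, lines are straight with constant spacing, a line is accepted as "correct" only if it has at least `min_len` dots, and the vertical line is sought at right angles with the same spacing (the description notes elsewhere that the directions may instead cross at, for example, 60 degrees). It does not implement the "next or third dot is off the line" test; all names are hypothetical.

```python
from math import hypot
from typing import List, Optional, Sequence, Tuple

Point = Tuple[float, float]


def _near(dots: Sequence[Point], p: Point, tol: float) -> Optional[Point]:
    """Return a dot within tol of p, or None."""
    for d in dots:
        if hypot(d[0] - p[0], d[1] - p[1]) <= tol:
            return d
    return None


def _walk(dots: Sequence[Point], start: Point, step: Point, tol: float) -> List[Point]:
    """Follow start, start + step, start + 2*step, ... while dots keep appearing."""
    line = [start]
    cur = start
    while True:
        nxt = _near(dots, (cur[0] + step[0], cur[1] + step[1]), tol)
        if nxt is None or nxt in line:
            return line
        line.append(nxt)
        cur = nxt


def extract_line(dots: Sequence[Point], tol: float = 1.0, min_len: int = 4) -> List[Point]:
    """Longest run of equally spaced collinear dots; empty if shorter than min_len."""
    best: List[Point] = []
    for a in dots:
        for b in dots:
            if a != b:
                line = _walk(dots, a, (b[0] - a[0], b[1] - a[1]), tol)
                if len(line) > len(best):
                    best = line
    return best if len(best) >= min_len else []


def extract_perpendicular(dots: Sequence[Point], horizontal: List[Point], tol: float = 1.0) -> List[Point]:
    """Longest line leaving a dot of the horizontal line at right angles, same spacing."""
    if len(horizontal) < 2:
        return []
    (x0, y0), (x1, y1) = horizontal[0], horizontal[1]
    perp = (y0 - y1, x1 - x0)  # horizontal step rotated by 90 degrees
    best: List[Point] = []
    for start in horizontal:
        for sign in (1, -1):
            line = _walk(dots, start, (sign * perp[0], sign * perp[1]), tol)
            if len(line) > len(best):
                best = line
    return best


grid = [(10.0 * x, 10.0 * y) for y in range(5) for x in range(5)]
horizontal = extract_line(grid)
vertical = extract_perpendicular(grid, horizontal)
```

The search is cubic in the number of dots, which is acceptable for the small patch seen by a pen-type camera but would need spatial indexing for larger images.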

FIG. 9 is an elevation view illustrating an example of printing of pictures of a picture book and story texts.

On such a page, an icon 606a at the upper left of the page is scanned by the camera 602 to turn a switch on. Next, the text portion 606c, on which the story corresponding to the picture 6b is printed, is scanned by the camera 602. Since dot pattern portions 607 are printed on the icon 606a and the text portion 606c, these dot pattern portions 607 are used to recognize which area on which page of the picture book has been scanned and to make the PC 608 reproduce the correspondingly stored voice of the story. For example, the dots 5 of the dot pattern portion 607 are printed with a carbon ink while the other portions are printed with non-carbon color inks, so that the dots can be scanned under infrared irradiation.
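The two-step interaction on this page (icon first, then text) could be organized as a small session object, sketched below. Two assumptions are made that the description does not state: the codes printed on icons such as 606a simply turn reproduction on, and a code read from a text portion packs the page number and area number as `page * 100 + area`. The class name and the voice file name are hypothetical.

```python
from typing import Dict, Optional, Set, Tuple


class PictureBookReader:
    """Hypothetical session: an icon code turns the switch on, then text codes select voices."""

    def __init__(self, switch_codes: Set[int], voices: Dict[Tuple[int, int], str]):
        self.switch_codes = switch_codes  # codes printed on icons such as 606a
        self.voices = voices              # (page, area) -> stored voice of the story
        self.enabled = False

    def scan(self, code: int) -> Optional[str]:
        """Return the voice to reproduce for a scanned code, or None."""
        if code in self.switch_codes:
            self.enabled = True
            return None
        if not self.enabled:
            return None                   # switch not yet turned on
        page, area = divmod(code, 100)    # assumed packing: page * 100 + area
        return self.voices.get((page, area))


reader = PictureBookReader({9000}, {(3, 2): "page3_story.wav"})
```

Scanning code 302 before the icon returns nothing; after the icon code 9000 has been scanned, the same code selects the stored voice for area 2 of page 3.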

FIG. 10 is an elevation view illustrating another example of a picture book on which pictures and story texts are printed.

FIG. 11 is an elevation view illustrating still another example of a picture book on which pictures and story texts are printed.

The information reproducing method using a dot pattern according to the invention is not limited to a story based picture book, and can be applied to an educational material for teaching mathematics in an easily understood manner as shown in FIG. 10. Also as illustrated in FIG. 11, the information reproducing method according to the invention can be applied to an educational material for teaching music in an easily understood manner.

A memory of the PC 608, a PDA or a portable phone can store content that, together with the pictures of a picture book, lets the book play music and the dialogue of its main characters. It can also store content usable as an educational material that outputs voice in combination with a toy such as assembly blocks, and content usable as dictionary software that translates words and text in a foreign language when they are traced.

The information reproducing method using a dot pattern according to the invention can be further utilized in the following way.

"Pop Picture Book which Generates Voice"

Taking advantage of the fact that the camera 602 only needs to scan or touch the dot pattern portion 607, the information reproducing method according to the invention can be combined with a "pop picture book", in which a three-dimensional structure rises up when a page is opened. The dot pattern portion 607 is attached to or printed on the inside of the three-dimensional structure. When the user opens the page, finds this dot pattern portion 607 and touches it with the tip of the camera 602, various voices are output, producing a "pop picture book which outputs a voice". For example, when the page is opened, a "horror house" pops up. When a dot pattern portion 607 at a window is traced with the camera 602, a woman's scream such as "yipe" is reproduced. When a dot pattern portion 607 in a hall is traced with the camera 602, the sound of ominous footsteps such as "tap tap" is reproduced.

"Creative Picture Book (Creative Book)"

A dot pattern portion 607 can be attached to any desired portion of a picture book, which is a printed material 6. Because users can attach dot pattern portions 607 themselves to create content, the book can be used as a "creative picture book (creative book)" on which a switch can be set anywhere. For example, given a set consisting of a picture book, a speech collection, a sound list, sound source data and the like, a user can create an original story by attaching to the picture book 6 the dot pattern portions 607 of whichever voices or music the user likes.

Alternatively, seals of a sound source list or icon seals with dot pattern portions 607 formed on them are prepared; a user draws pictures in a blank picture book and then attaches the seals to it to create an original story. With this configuration, users can create their own picture book that outputs a voice.

"Educational Material which Outputs Voice"

The invention can be used as an "educational material which outputs a voice" for people of every generation, including children, adults and the elderly. For example, the tip of the camera 602 is placed on a dot pattern portion 607 of a printed material 606 and the dot pattern portion 607 is scanned to reproduce a voice. With such a configuration, the invention can be used for language education such as English conversation, early childhood education such as intellectual training and music, and teaching aids such as drills.

Since the invention can be used as an input interface printed on a printed material 606 or the like, an interface suited to each content can be manufactured. Further, dot pattern data can be downloaded to the PC 608 via a general-purpose network such as the Internet; by freely combining the dot pattern data and printing a dot pattern on a paper sheet with a general-purpose printer, users can themselves create a "picture book which outputs a voice" as mentioned above.

Further, URL information can be defined in a dot pattern portion 607 of the printed material 606 or of another medium. When the URL information is extracted from image data obtained by photographing the dot pattern portion 607 with the camera 602, a browser program installed in the PC 608 accesses that URL to carry out a predetermined operation.
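Handling a URL read from a dot pattern might look like the sketch below. How the URL is encoded in the dots is not described here, so the sketch assumes that the decoded numeric code indexes a URL table; the standard-library `webbrowser.open` call stands in for the browser program installed in the PC 608. `handle_code` and the table contents are hypothetical.

```python
import webbrowser
from typing import Callable, Dict, Optional


def handle_code(
    code: int,
    url_table: Dict[int, str],
    opener: Callable[[str], object] = webbrowser.open,
) -> Optional[str]:
    """Open the URL associated with a decoded dot code; return it, or None if unknown."""
    url = url_table.get(code)
    if url is None:
        return None
    opener(url)  # by default, hand the URL to the system's web browser
    return url
```

Passing a different `opener` (for example, a function that only records the URL) makes the dispatch testable without launching a browser.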

FIG. 12 is a perspective view for explaining a touch panel with a dot pattern portion 607 formed thereon. FIG. 13 is an exploded lateral view for explaining a touch panel with a dot pattern portion 607 formed thereon.